The first program ranks sessions in the search log by their number of queries.

SougouQA

package week2

/**

* Created by root on 15-8-21.

*/

import org.apache.spark.{SparkContext, SparkConf}

import org.apache.spark.SparkContext._

object SougouQA{

def main(args: Array[String]) {

if (args.length < 2) {

System.err.println("Usage: SougouQA <input> <output>")

System.exit(1)

}

val conf = new SparkConf().setAppName("SougouQA")

val sc = new SparkContext(conf)

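// Count records per key (field 1 of each 6-field, tab-separated line),
// sort by count in descending order, and write (key, count) pairs.
sc.textFile(args(0))
  .map(_.split("\t"))
  .filter(_.length == 6)
  .map(x => (x(1), 1))
  .reduceByKey(_ + _)
  .map(x => (x._2, x._1))   // swap to (count, key) so sortByKey orders by count
  .sortByKey(false)
  .map(x => (x._2, x._1))   // swap back to (key, count)
  .saveAsTextFile(args(1))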

sc.stop()

}

}
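To make the chained transformations easier to follow, here is a minimal sketch of the same steps on plain Scala collections instead of an RDD. The sample lines and the `rank` helper are hypothetical; the sketch only assumes, as the program does, that a valid record has six tab-separated fields and that field 1 is the key being counted.

```scala
object RankSketch {
  // Hypothetical helper: mirrors the Spark pipeline on an in-memory Seq.
  def rank(lines: Seq[String]): Seq[(String, Int)] =
    lines
      .map(_.split("\t"))                    // split each record into fields
      .filter(_.length == 6)                 // keep only well-formed records
      .map(fields => (fields(1), 1))         // key by the second field
      .groupBy(_._1)                         // stands in for reduceByKey(_ + _)
      .map { case (key, hits) => (key, hits.size) }
      .toSeq
      .sortBy { case (_, count) => -count }  // descending, like sortByKey(false)

  def main(args: Array[String]): Unit = {
    // Made-up sample records; not taken from the real log.
    val sample = Seq(
      "t1\tu1\tq\t1\t1\turl",
      "t2\tu2\tq\t1\t1\turl",
      "t3\tu1\tq\t1\t1\turl",
      "malformed line"
    )
    rank(sample).foreach(println)
  }
}
```

Sorting directly on the count avoids the swap, sort, swap-back dance that `sortByKey` requires in the Spark version, where the count has to be moved into the key position first.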

join

package week2

/**

* Created by root on 15-8-21.

*/

import org.apache.spark.{SparkContext, SparkConf}

import org.apache.spark.SparkContext._

object join {

def main(args: Array[String]) {

if (args.length < 2) {

System.err.println("Usage: join <file1> <file2>")

System.exit(1)

}

val conf = new SparkConf().setAppName("join")

val sc = new SparkContext(conf)

val format = new java.text.SimpleDateFormat("yyyy-MM-dd")

case class Register (d: java.util.Date, uuid: String, cust_id: String, lat: Float,lng: Float)

case class Click (d: java.util.Date, uuid: String, landing_page: Int)

val reg = sc.textFile(args(0)).map(_.split("\t")).map(r => (r(1), Register(format.parse(r(0)), r(1), r(2), r(3).toFloat, r(4).toFloat)))

val clk = sc.textFile(args(1)).map(_.split("\t")).map(c => (c(1), Click(format.parse(c(0)), c(1), c(2).trim.toInt)))

reg.join(clk).take(2).foreach(println)

sc.stop()

}

}

Running SougouQA

bin/spark-submit --master spark://moon:7077 --class week2.SougouQA week2.jar hdfs://localhost:9000/user/SogouQ1.txt hdfs://localhost:9000/user/week2output

Merge the output files

hdfs dfs -getmerge hdfs://localhost:9000/user/week2output result1

The merged file result1 is written to the current directory. Use head to see the top 10 entries:

head result1

(b3c94c37fb154d46c30a360c7941ff7e,676)

(cc7063efc64510c20bcdd604e12a3b26,613)

(955c6390c02797b3558ba223b8201915,391)

(b1e371de5729cdda9270b7ad09484c4f,337)

(6056710d9eafa569ddc800fe24643051,277)

(637b29b47fed3853e117aa7009a4b621,266)

(c9f4ff7790d0615f6f66b410673e3124,231)

(dca9034de17f6c34cfd56db13ce39f1c,226)

(82e53ddb484e632437039048c5901608,221)

(c72ce1164bcd263ba1f69292abdfdf7c,214)

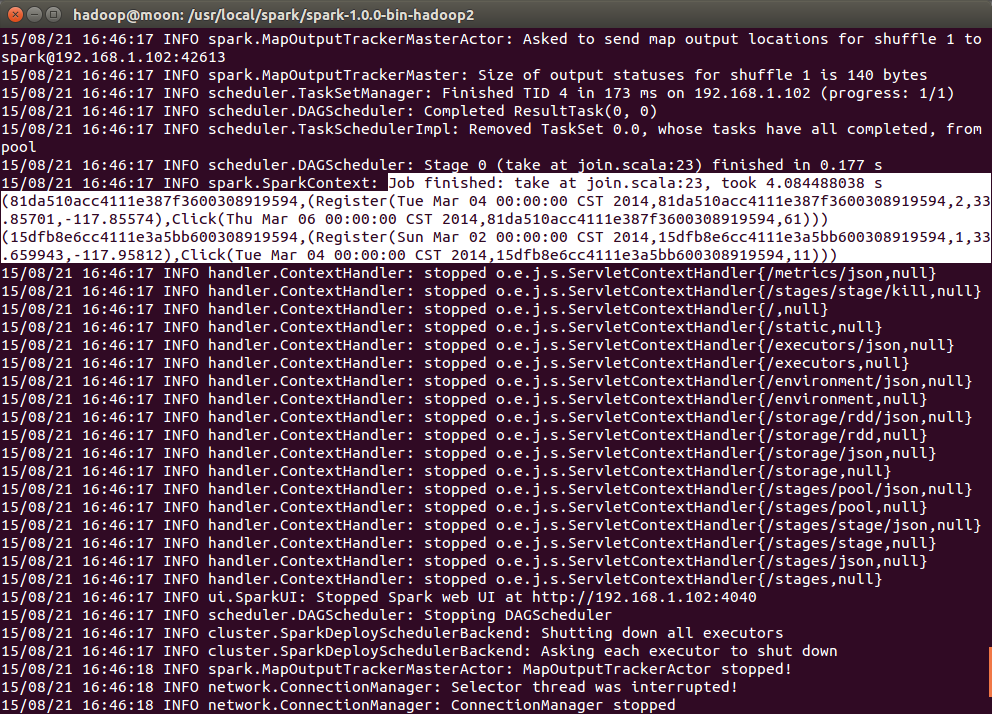

Running join

bin/spark-submit --master spark://moon:7077 --class week2.join week2.jar hdfs://localhost:9000/user/join/reg.tsv hdfs://localhost:9000/user/join/clk.tsv
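As a rough illustration of what `reg.join(clk)` produces, the sketch below reproduces inner-join semantics on plain Scala collections. The records are invented and `innerJoin` is a hypothetical helper, not part of Spark; it only mirrors the behavior that every pair of values sharing a key yields one output element.

```scala
object JoinSketch {
  // Hypothetical helper: inner join of two keyed sequences,
  // emitting one (key, (left, right)) element per matching pair.
  def innerJoin[K, A, B](left: Seq[(K, A)], right: Seq[(K, B)]): Seq[(K, (A, B))] =
    for {
      (lk, a) <- left
      (rk, b) <- right
      if lk == rk
    } yield (lk, (a, b))

  def main(args: Array[String]): Unit = {
    // Made-up records keyed by uuid, standing in for reg.tsv and clk.tsv.
    val reg = Seq(("uuid-1", "cust-A"), ("uuid-2", "cust-B"))
    val clk = Seq(("uuid-1", 1), ("uuid-1", 7), ("uuid-3", 5))
    innerJoin(reg, clk).foreach(println)
  }
}
```

Keys that appear on only one side, such as `uuid-2` and `uuid-3` in this sample, are dropped, so the program's `take(2)` can only ever show matched register/click pairs. The nested loop here is only for readability on small inputs; it says nothing about how Spark executes the join.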
