Hadoop-2.4.0 Installation and WordCount Verification

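This post describes how to install the 32-bit hadoop-2.4.0 build on a 64-bit CentOS 6.5 machine, and how to verify that the services work by running the bundled WordCount example.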

Create the directory /home/QiumingLu/hadoop-2.4.0. From here on, this is the Hadoop installation directory.

To install hadoop-2.4.0, extract hadoop-2.4.0.tar.gz into /home/QiumingLu/hadoop-2.4.0.

[root@localhost hadoop-2.4.0]# ls

bin etc lib LICENSE.txt NOTICE.txt sbin synthetic_control.data

dfs include libexec logs README.txt share
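
The create-and-extract step can be sketched as a small shell helper. This is a minimal sketch, not the author's exact commands: the function name install_hadoop is hypothetical, and it assumes GNU tar (as on CentOS) and that the tarball has a single top-level hadoop-2.4.0/ directory, which --strip-components=1 removes so that bin/, etc/ and sbin/ land directly in the install directory.

```shell
#!/bin/sh
# Sketch: unpack a Hadoop tarball so its contents land directly in the
# install directory. install_hadoop is a hypothetical helper name.
install_hadoop() {
    tarball=$1      # e.g. hadoop-2.4.0.tar.gz
    dest=$2         # e.g. /home/QiumingLu/hadoop-2.4.0
    mkdir -p "$dest" || return 1
    # Assumption: the archive has one top-level directory. Dropping it with
    # --strip-components=1 puts bin/, etc/, sbin/ ... directly under $dest.
    tar -xzf "$tarball" -C "$dest" --strip-components=1
}

# Usage (not executed here):
#   install_hadoop hadoop-2.4.0.tar.gz /home/QiumingLu/hadoop-2.4.0
```

Running ls in the install directory afterwards should show a listing like the one above (dfs, logs and synthetic_control.data appear only after later use).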

Configure etc/hadoop/hadoop-env.sh

[root@localhost hadoop-2.4.0]# cat etc/hadoop/hadoop-env.sh

# The java implementation to use.

export JAVA_HOME=/home/QiumingLu/mycloud/jdk/jdk1.7.0_51

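Because Hadoop ships as a 32-bit build by default, the following two lines are also needed:

export HADOOP_COMMON_LIB_NATIVE_DIR=${HADOOP_PREFIX}/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_PREFIX/lib"

Otherwise, errors like the following may appear: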

Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting namenodes on [Java HotSpot(TM) 64-Bit Server VM warning: You have loaded library /home/hadoop/2.2.0/lib/native/libhadoop.so.1.0.0 which might have disabled stack guard. The VM will try to fix the stack guard now.

It's highly recommended that you fix the library with 'execstack -c <libfile>', or link it with '-z noexecstack'.

localhost]

sed: -e expression #1, char 6: unknown option to `s'

HotSpot(TM): ssh: Could not resolve hostname HotSpot(TM): Name or service not known

64-Bit: ssh: Could not resolve hostname 64-Bit: Name or service not known

Java: ssh: Could not resolve hostname Java: Name or service not known

Server: ssh: Could not resolve hostname Server: Name or service not known

VM: ssh: Could not resolve hostname VM: Name or service not known
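
To confirm that the problem really is a 32-bit native library on a 64-bit system, the library's ELF header can be inspected directly. The sketch below is an illustration under stated assumptions, not part of the original setup: elf_class is a hypothetical helper, and it relies only on the ELF format itself, where the first four bytes are 0x7f 'E' 'L' 'F' and the fifth byte (EI_CLASS) is 1 for 32-bit and 2 for 64-bit.

```shell
#!/bin/sh
# Sketch: report whether a file is a 32-bit or 64-bit ELF object.
# elf_class is a hypothetical helper name.
elf_class() {
    # Read the first five bytes as unsigned decimals: 127 69 76 70 <class>
    set -- $(od -An -t u1 -N 5 "$1" 2>/dev/null)
    if [ "$1 $2 $3 $4" != "127 69 76 70" ]; then
        echo "not-elf"
        return 1
    fi
    case $5 in
        1) echo "32-bit" ;;
        2) echo "64-bit" ;;
        *) echo "unknown"; return 1 ;;
    esac
}

# Usage (not executed here), from the Hadoop install directory:
#   elf_class lib/native/libhadoop.so.1.0.0
#   uname -m        # x86_64 indicates a 64-bit kernel
```

If the library reports 32-bit while uname -m reports x86_64, the mismatch matches the situation described above.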

Configure etc/hadoop/hdfs-site.xml

[root@localhost hadoop-2.4.0]# cat etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/home/QiumingLu/hadoop-2.4.0/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/home/QiumingLu/hadoop-2.4.0/dfs/data</value>

</property>

</configuration>

Configure etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

Configure etc/hadoop/yarn-site.xml

<configuration>

<!--Site specific YARN configuration properties -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

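Configure etc/hadoop/mapred-site.xml.template. Note that Hadoop reads mapred-site.xml, not the .template file, so the template has to be copied to etc/hadoop/mapred-site.xml for this setting to be loaded.

[root@localhost hadoop-2.4.0]# cat etc/hadoop/mapred-site.xml.template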

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>
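
Since Hadoop loads mapred-site.xml rather than the .template file, one way to put the settings in place is to copy the template once. A minimal sketch, assuming the configuration directory is etc/hadoop as above; ensure_mapred_site is a hypothetical helper name, and it deliberately leaves an existing mapred-site.xml untouched.

```shell
#!/bin/sh
# Sketch: create mapred-site.xml from the template if it does not exist yet.
# ensure_mapred_site is a hypothetical helper name.
ensure_mapred_site() {
    conf_dir=$1     # e.g. etc/hadoop
    if [ ! -f "$conf_dir/mapred-site.xml" ]; then
        cp "$conf_dir/mapred-site.xml.template" "$conf_dir/mapred-site.xml"
    fi
}

# Usage (not executed here), from the Hadoop install directory:
#   ensure_mapred_site etc/hadoop
```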

Format the file system

[root@localhost hadoop-2.4.0]# ./bin/hadoop namenode -format

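Start the services. The root user is used here; when prompted for a password, enter the root password. If you use a non-root user and are setting up a distributed service, you first need to sort out SSH login, which is not covered in detail here.

[root@localhost hadoop-2.4.0]# sbin/start-all.sh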

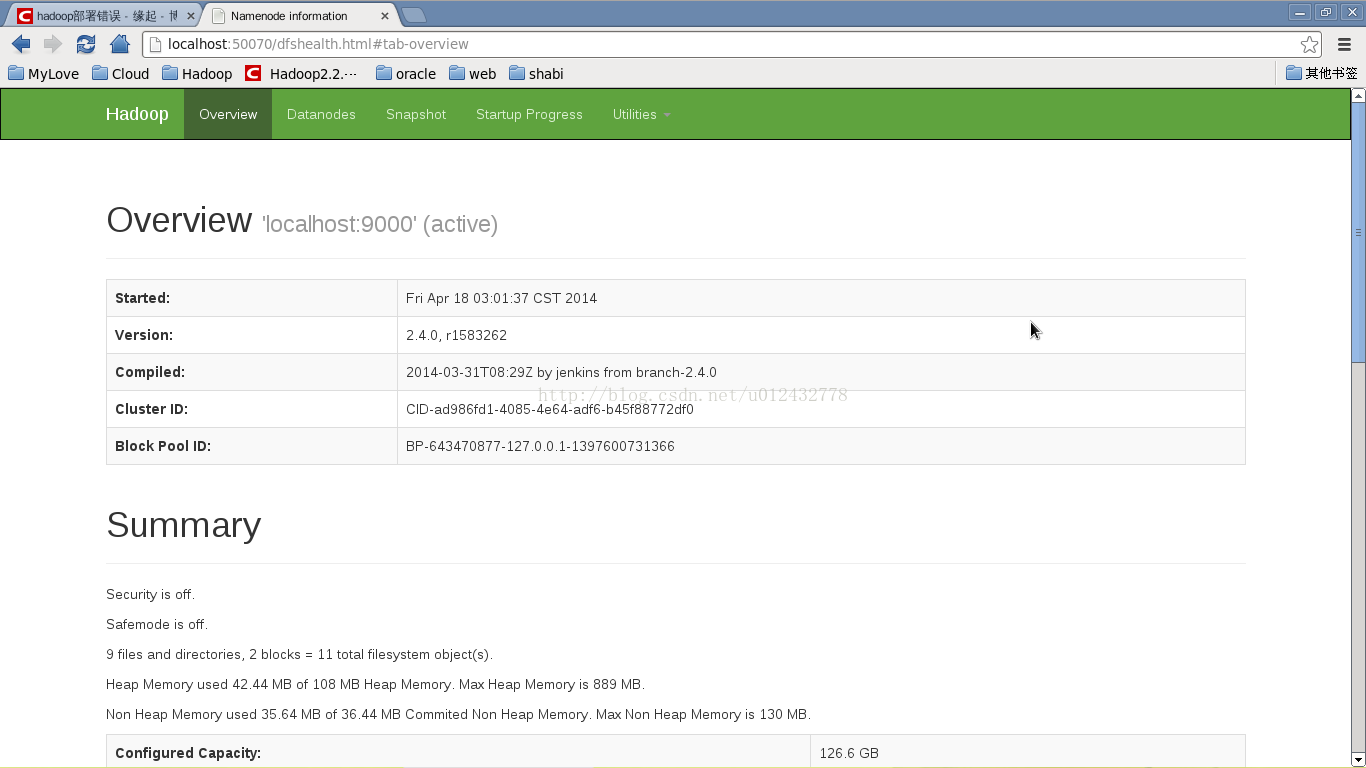

Check the startup status:

[root@localhost hadoop-2.4.0]# ./bin/hadoop dfsadmin -report

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

14/04/18 05:15:30 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Configured Capacity: 135938813952 (126.60 GB)

Present Capacity: 126122217472 (117.46 GB)

DFS Remaining: 126121320448 (117.46 GB)

DFS Used: 897024 (876 KB)

DFS Used%: 0.00%

Under replicated blocks: 0

Blocks with corrupt replicas: 0

Missing blocks: 0

-------------------------------------------------

Datanodes available: 1 (1 total, 0 dead)

Live datanodes:

Name: 127.0.0.1:50010 (localhost)

Hostname: localhost

Decommission Status : Normal

Configured Capacity: 135938813952 (126.60 GB)

DFS Used: 897024 (876 KB)

Non DFS Used: 9816596480 (9.14 GB)

DFS Remaining: 126121320448 (117.46 GB)

DFS Used%: 0.00%

DFS Remaining%: 92.78%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Last contact: Fri Apr 18 05:15:29 CST 2014

[root@localhost hadoop-2.4.0]# jps

3614 DataNode

3922 ResourceManager

3514 NameNode

9418 Jps

4026 NodeManager
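
Checking the jps listing by eye works for a single node, but the same check can be scripted. The following is a sketch only: check_daemons is a hypothetical helper that reads jps output on standard input and looks for the four daemons shown in the listing above (NameNode, DataNode, ResourceManager, NodeManager). It compares the process name in the second column exactly, so SecondaryNameNode is not mistaken for NameNode.

```shell
#!/bin/sh
# Sketch: verify that the expected Hadoop daemons appear in jps output.
# check_daemons is a hypothetical helper name; it reads jps output on stdin.
check_daemons() {
    jps_output=$(cat)
    missing=""
    for daemon in NameNode DataNode ResourceManager NodeManager; do
        # Exact match on column 2 (the process name).
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'
        then
            missing="$missing $daemon"
        fi
    done
    if [ -n "$missing" ]; then
        echo "missing:$missing"
        return 1
    fi
    echo "all daemons running"
}

# Usage (not executed here):
#   jps | check_daemons
```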

Create the data files (file1.txt, file2.txt)

[root@localhost hadoop-2.4.0]# cat example/file1.txt

hello world

hello markhuang

hello hadoop

[root@localhost hadoop-2.4.0]# cat example/file2.txt

hadoop ok

hadoop fail

hadoop 2.4

[root@localhost hadoop-2.4.0]# ./bin/hadoop fs -mkdir /data

Add the data files to the Hadoop file system.

[root@localhost hadoop-2.4.0]# ./bin/hadoop fs -put -f example/file1.txt example/file2.txt /data

Run the Java version of WordCount.

[root@localhost hadoop-2.4.0]# ./bin/hadoop jar ./share/hadoop/mapreduce/sources/hadoop-mapreduce-examples-2.4.0-sources.jar org.apache.hadoop.examples.WordCount /data /output

View the results.

[root@localhost hadoop-2.4.0]# ./bin/hadoop fs -cat /output/part-r-00000

2.4 1

fail 1

hadoop 4

hello 3

markhuang 1

ok 1

world 1
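
As a cross-check, the same counts can be computed locally with standard Unix tools and compared against the job output. This is a sketch rather than part of the Hadoop job: local_wordcount is a hypothetical helper that splits each line on whitespace, counts tokens across all input files, and sorts the result byte-wise so the order matches the listing above. It prints word and count separated by a tab.

```shell
#!/bin/sh
# Sketch: local word count over one or more text files, for comparison
# with the MapReduce output. local_wordcount is a hypothetical helper name.
local_wordcount() {
    # Count whitespace-separated tokens, then sort byte-wise (LC_ALL=C).
    awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
         END { for (w in count) printf "%s\t%d\n", w, count[w] }' "$@" |
        LC_ALL=C sort
}

# Usage (not executed here), from the Hadoop install directory:
#   local_wordcount example/file1.txt example/file2.txt
```

For the two sample files this gives hadoop 4 and hello 3, with every other word counted once, which agrees with the result printed by the job.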
