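Introduction to Mahout

Apache Mahout is an open-source project of the Apache Software Foundation (ASF). It provides implementations of classic machine learning algorithms so that developers can build intelligent applications more quickly and easily.

Mahout's main goal is to provide machine learning algorithms that scale to large data sets. It covers five main areas: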

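1) Frequent pattern mining: finds itemsets that occur frequently in the data;

2) Clustering: divides data such as text and documents into groups of locally related items;

3) Classification: trains a classifier on documents that are already labeled, then uses it to label unclassified documents;

4) Recommendation engine (Taste collaborative filtering): collects user behavior and uses it to find items the user is likely to want;

5) Frequent itemset mining: takes a set of items (for example, search queries or shopping records) and identifies the items that often appear together.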

1. Prepare the environment

| IP | Notes |
|---|---|
| 192.168.2.4 | CentOS Linux release 7.4.1708 (Core) |

Install Maven by following this guide:

https://blog.csdn.net/u012637358/article/details/106436819
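Before moving on, it can help to confirm that the tools the later steps rely on are actually on the PATH. The following is a minimal sketch; `check_tool` is a hypothetical helper name introduced here for illustration, not part of Maven or Mahout.

```shell
# Report whether a command is available on PATH.
# Prints a one-line status; returns 0 if found, 1 otherwise.
# NOTE: check_tool is a hypothetical helper, not part of Maven or Mahout.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "found: $1"
    else
        echo "missing: $1"
        return 1
    fi
}

# Usage, before building:
#   check_tool java && check_tool mvn && check_tool unzip
#   mvn -version   # also reports which JDK Maven will use
```

If any tool is reported missing, install it before starting the build.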

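2. Download and build

Download the source archive from the official Mahout website, which describes the project as a tool for creating scalable, high-performance machine learning applications.

At the time of writing, the latest source archive is mahout-0.14.0-source-release.zip.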

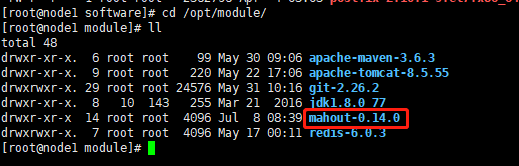

mkdir -p /opt/software

mkdir -p /opt/module

Upload the archive to /opt/software.

#Change to the software directory

cd /opt/software

#Unzip mahout-xxx.zip into the module directory

unzip mahout-0.14.0-source-release.zip -d /opt/module

Change to the extracted mahout-0.14.0 directory to build:

cd /opt/module/mahout-0.14.0

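#Build, skipping Javadoc generation (tests still run)

mvn clean install -X -Dmaven.javadoc.skip=true

Flag reference:

-Dmaven.test.skip=true : skips compiling and running tests (not used in the command above)

-Dmaven.javadoc.skip=true : skips Javadoc generation

-X : debug mode, prints detailed output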
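With -X the output is long, so it can be easier to capture it in a file and check the result afterwards. The sketch below assumes the log is saved as build.log; `build_succeeded` is a hypothetical helper that relies only on the "BUILD SUCCESS" line Maven prints at the end of a successful run.

```shell
# Return 0 if a captured Maven log reports a successful build.
# Maven prints "BUILD SUCCESS" or "BUILD FAILURE" near the end of its output.
# NOTE: build_succeeded and the build.log file name are illustrative choices.
build_succeeded() {
    grep -q "BUILD SUCCESS" "$1"
}

# Usage, from the source root:
#   mvn clean install -X -Dmaven.javadoc.skip=true 2>&1 | tee build.log
#   if build_succeeded build.log; then
#       echo "build ok"
#   else
#       grep -n "ERROR" build.log | head
#   fi
```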

The build succeeded!

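3. Troubleshooting

1. The build fails while generating Javadoc

Fix: add the flag that skips Javadoc to the mvn command.

-Dmaven.javadoc.skip=true : skips Javadoc generation

2. When building Mahout core, the tests must be built; do not skip them

mvn clean install -X -Dmaven.javadoc.skip=true

3. When building the Mahout Spark engine module, the tests must be skipped

mvn clean install -X -Dmaven.javadoc.skip=true -Dmaven.test.skip=true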
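Items 2 and 3 ask for different test handling, so it helps to be explicit about which Maven property does what. The sketch below maps a policy name to the matching flag; `test_flag` and the policy names are hypothetical. The `-DskipTests` property, which compiles tests without running them, is standard Maven behavior, but whether it is enough for either Mahout module has not been verified here.

```shell
# Map a test policy to the Maven property that implements it.
#   run      -> (no flag)               compile and run tests
#   compile  -> -DskipTests             compile tests, do not run them
#   skip     -> -Dmaven.test.skip=true  do not compile or run tests
# NOTE: test_flag and the policy names are illustrative, not Maven features.
test_flag() {
    case "$1" in
        run)     echo "" ;;
        compile) echo "-DskipTests" ;;
        skip)    echo "-Dmaven.test.skip=true" ;;
        *)       echo "unknown policy: $1" >&2; return 1 ;;
    esac
}

# Usage, run from inside the module directory being built:
#   mvn clean install -X -Dmaven.javadoc.skip=true $(test_flag skip)
```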

With a stable network connection and the steps above, other problems are unlikely.

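4. Strong recommendation

Unless you have a specific reason to build from source, download the prebuilt apache-mahout-distribution-xxxx.tar.gz archive from the official site instead:

https://downloads.apache.org/mahout/0.13.0/

Extract it and it is ready to use.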
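The download and extraction can be scripted roughly as follows. This is a sketch under assumptions: the tarball name apache-mahout-distribution-<version>.tar.gz is inferred from the directory name used in this post, and `mahout_dist_url` and `install_mahout` are hypothetical helper names. MAHOUT_LOCAL is exported because the launcher output shown in this post reports "MAHOUT_LOCAL is set, running locally".

```shell
# Build the download URL for a binary release.
# ASSUMPTION: the tarball is named apache-mahout-distribution-<version>.tar.gz.
mahout_dist_url() {
    echo "https://downloads.apache.org/mahout/$1/apache-mahout-distribution-$1.tar.gz"
}

# Download a release into /opt/software and unpack it into /opt/module.
# NOTE: install_mahout is an illustrative helper, not part of Mahout.
install_mahout() {
    mkdir -p /opt/software /opt/module &&
    curl -fL -o "/opt/software/apache-mahout-distribution-$1.tar.gz" \
        "$(mahout_dist_url "$1")" &&
    tar -xzf "/opt/software/apache-mahout-distribution-$1.tar.gz" -C /opt/module &&
    # Any non-empty value makes the launcher run locally instead of on Hadoop.
    export MAHOUT_LOCAL=true
}

# Usage:
#   install_mahout 0.13.0
```

If the download URL no longer resolves, fetch the archive manually and run only the tar step.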

cd /opt/module/apache-mahout-distribution-0.13.0

./bin/mahout

[root@node1 apache-mahout-distribution-0.13.0]# ./bin/mahout

MAHOUT_LOCAL is set, so we don't add HADOOP_CONF_DIR to classpath.

MAHOUT_LOCAL is set, running locally

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/apache-mahout-distribution-0.13.0/mahout-examples-0.13.0-job.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/apache-mahout-distribution-0.13.0/mahout-mr-0.13.0-job.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/apache-mahout-distribution-0.13.0/lib/slf4j-log4j12-1.7.22.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

An example program must be given as the first argument.

Valid program names are:

arff.vector: : Generate Vectors from an ARFF file or directory

baumwelch: : Baum-Welch algorithm for unsupervised HMM training

canopy: : Canopy clustering

cat: : Print a file or resource as the logistic regression models would see it

cleansvd: : Cleanup and verification of SVD output

clusterdump: : Dump cluster output to text

clusterpp: : Groups Clustering Output In Clusters

cmdump: : Dump confusion matrix in HTML or text formats

cvb: : LDA via Collapsed Variation Bayes (0th deriv. approx)

cvb0_local: : LDA via Collapsed Variation Bayes, in memory locally.

describe: : Describe the fields and target variable in a data set

evaluateFactorization: : compute RMSE and MAE of a rating matrix factorization against probes

fkmeans: : Fuzzy K-means clustering

hmmpredict: : Generate random sequence of observations by given HMM

itemsimilarity: : Compute the item-item-similarities for item-based collaborative filtering

kmeans: : K-means clustering

lucene.vector: : Generate Vectors from a Lucene index

matrixdump: : Dump matrix in CSV format

matrixmult: : Take the product of two matrices

parallelALS: : ALS-WR factorization of a rating matrix

qualcluster: : Runs clustering experiments and summarizes results in a CSV

recommendfactorized: : Compute recommendations using the factorization of a rating matrix

recommenditembased: : Compute recommendations using item-based collaborative filtering

regexconverter: : Convert text files on a per line basis based on regular expressions

resplit: : Splits a set of SequenceFiles into a number of equal splits

rowid: : Map SequenceFile<Text,VectorWritable> to {SequenceFile<IntWritable,VectorWritable>, SequenceFile<IntWritable,Text>}

rowsimilarity: : Compute the pairwise similarities of the rows of a matrix

runAdaptiveLogistic: : Score new production data using a probably trained and validated AdaptivelogisticRegression model

runlogistic: : Run a logistic regression model against CSV data

seq2encoded: : Encoded Sparse Vector generation from Text sequence files

seq2sparse: : Sparse Vector generation from Text sequence files

seqdirectory: : Generate sequence files (of Text) from a directory

seqdumper: : Generic Sequence File dumper

seqmailarchives: : Creates SequenceFile from a directory containing gzipped mail archives

seqwiki: : Wikipedia xml dump to sequence file

spectralkmeans: : Spectral k-means clustering

split: : Split Input data into test and train sets

splitDataset: : split a rating dataset into training and probe parts

ssvd: : Stochastic SVD

streamingkmeans: : Streaming k-means clustering

svd: : Lanczos Singular Value Decomposition

testnb: : Test the Vector-based Bayes classifier

trainAdaptiveLogistic: : Train an AdaptivelogisticRegression model

trainlogistic: : Train a logistic regression using stochastic gradient descent

trainnb: : Train the Vector-based Bayes classifier

transpose: : Take the transpose of a matrix

validateAdaptiveLogistic: : Validate an AdaptivelogisticRegression model against hold-out data set

vecdist: : Compute the distances between a set of Vectors (or Cluster or Canopy, they must fit in memory) and a list of Vectors

vectordump: : Dump vectors from a sequence file to text

viterbi: : Viterbi decoding of hidden states from given output states sequence
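The listing above mixes SLF4J warnings with the program names. To get the names alone, the output can be filtered on the "name: : description" pattern visible in the listing. This is a sketch; `list_programs` is a hypothetical helper.

```shell
# Read launcher output on stdin and print only the program names.
# Lines of interest look like "kmeans: : K-means clustering".
# NOTE: list_programs is an illustrative helper, not part of Mahout.
list_programs() {
    sed -n 's/^ *\([A-Za-z0-9_.]*\): : .*/\1/p'
}

# Usage:
#   ./bin/mahout 2>&1 | list_programs
```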

That completes the setup in just a few steps.
