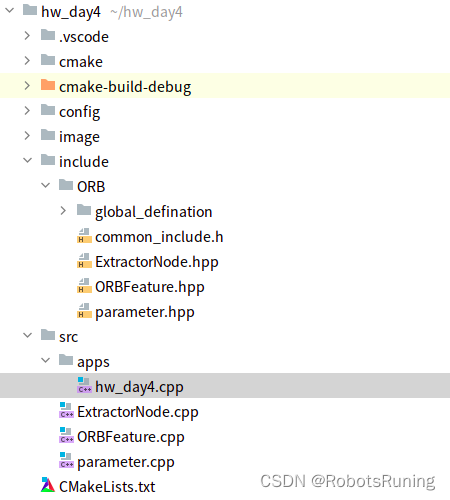

代码架构

src/apps/hw_day4.cpp

#include "ORB/global_defination/global_defination.h" // 导入ORB的全局定义

#include "ORB/ORBFeature.hpp" // 导入ORB特征相关的头文件

using namespace ORB; // 使用ORB命名空间

int main(int argc, char** argv)

{

google::InitGoogleLogging(argv[0]); // 初始化Google日志系统,参数是程序名

FLAGS_alsologtostderr = true; // 将日志同时输出到标准错误输出

std::string config_path = WORK_SPACE_PATH + "/config/camera_para.yaml"; // 相机参数配置文件的路径

std::string image_path = WORK_SPACE_PATH + "/image/distorted.png"; // 图像文件的路径

// 创建一个ORB特征的对象,使用智能指针管理其生命周期

std::shared_ptr<ORBFeature> orb_feature_ptr = std::make_shared<ORBFeature>(image_path, config_path);

std::vector<cv::KeyPoint> vKeypoints; // 用于存储提取出的关键点

cv::Mat descriptor; // 用于存储关键点对应的描述子

// 提取ORB特征

orb_feature_ptr->ExtractORB(vKeypoints, descriptor);

// 读入图像文件

cv::Mat image = cv::imread(image_path, cv::IMREAD_GRAYSCALE);

// 将关键点的数量和描述子的数量输出到日志

LOG(INFO) << "vKeypoints size " << vKeypoints.size();

LOG(INFO) << "descriptor size " << descriptor.rows;

cv::Mat outImage;

// 在图像中绘制关键点

cv::drawKeypoints(image, vKeypoints, outImage, cv::Scalar::all(-1), cv::DrawMatchesFlags::DEFAULT);

// 显示包含关键点的图像

cv::imshow("这是ORB-SLAM2提取的特征点", outImage);

// 提取ORB特征(这行代码可能是重复了,或者是预留的,下面没有给出输入参数)

orb_feature_ptr->ExtractORB();

// 等待用户输入

cv::waitKey();

return 0;

}

std::shared_ptr<ORBFeature> orb_feature_ptr =

std::make_shared<ORBFeature>(image_path, config_path);

ORBFeature(image_path, config_path):这部分代码调用了ORBFeature的构造函数,并且传入image_path和config_path这两个参数。也就是说,它创建了一个ORBFeature的对象。调用

ORBFeature类的构造函数,这个构造函数接收两个参数:image_path和config_path。这个调用会创建一个ORBFeature对象。std::make_shared<ORBFeature>:std::make_shared是一个模板函数,它的作用是创建一个对象并返回一个指向该对象的shared_ptr智能指针。在这个例子中,std::make_shared<ORBFeature>(image_path, config_path)就是创建了一个ORBFeature对象,并返回一个std::shared_ptr<ORBFeature>类型的智能指针,这个智能指针指向新创建的ORBFeature对象。所以,

std::make_shared<ORBFeature>(image_path, config_path)就是创建了一个ORBFeature对象,并用一个std::shared_ptr<ORBFeature>智能指针来管理它。这样做的好处是,你不需要手动管理这个ORBFeature对象的内存,当这个ORBFeature对象不再被使用时,shared_ptr会自动释放这个对象的内存。

std::make_shared是一个模板函数,它用于创建一个std::shared_ptr实例。这个函数需要一个类型参数(在尖括号< >中指定),以及一个用于构造该类型对象的参数列表(在圆括号( )中指定)。

std::make_shared会创建一个新的对象,并返回一个std::shared_ptr,该指针指向新创建的对象。例如,std::make_shared<int>(42)会创建一个新的int对象,其值为42,并返回一个指向该对象的std::shared_ptr<int>。

例子中,

std::make_shared<ORBFeature>(image_path, config_path)会创建一个新的ORBFeature对象,使用image_path和config_path作为构造函数的参数,并返回一个指向该对象的std::shared_ptr<ORBFeature>。

最后,将这个智能指针赋值给

orb_feature_ptr。以后你可以通过orb_feature_ptr来使用这个ORBFeature对象。

ExtractorNode.cpp

#include "ORB/ExtractorNode.hpp"

namespace ORB {

void ExtractorNode::DivideNode(ExtractorNode &n1, ExtractorNode &n2, ExtractorNode &n3, ExtractorNode &n4)

{

//得到当前提取器节点所在图像区域的一半长宽,当然结果需要取整

const int halfX = std::ceil(static_cast<float>(UR.x-UL.x)/2);

const int halfY = std::ceil(static_cast<float>(BR.y-UL.y)/2);

//Define boundaries of childs

//下面的操作大同小异,将一个图像区域再细分成为四个小图像区块

//n1 存储左上区域的边界

n1.UL = UL;

n1.UR = cv::Point2i(UL.x+halfX,UL.y);

n1.BL = cv::Point2i(UL.x,UL.y+halfY);

n1.BR = cv::Point2i(UL.x+halfX,UL.y+halfY);

//用来存储在该节点对应的图像网格中提取出来的特征点的vector

n1.vKeys.reserve(vKeys.size());

//n2 存储右上区域的边界

n2.UL = n1.UR;

n2.UR = UR;

n2.BL = n1.BR;

n2.BR = cv::Point2i(UR.x,UL.y+halfY);

n2.vKeys.reserve(vKeys.size());

//n3 存储左下区域的边界

n3.UL = n1.BL;

n3.UR = n1.BR;

n3.BL = BL;

n3.BR = cv::Point2i(n1.BR.x,BL.y);

n3.vKeys.reserve(vKeys.size());

//n4 存储右下区域的边界

n4.UL = n3.UR;

n4.UR = n2.BR;

n4.BL = n3.BR;

n4.BR = BR;

n4.vKeys.reserve(vKeys.size());

//Associate points to childs

//遍历当前提取器节点的vkeys中存储的特征点

for(size_t i=0;i<vKeys.size();i++)

{

//获取这个特征点对象

const cv::KeyPoint &kp = vKeys[i];

//判断这个特征点在当前特征点提取器节点图像的哪个区域,更严格地说是属于那个子图像区块

//然后就将这个特征点追加到那个特征点提取器节点的vkeys中

//NOTICE BUG REVIEW 这里也是直接进行比较的,但是特征点的坐标是在“半径扩充图像”坐标系下的,而节点区域的坐标则是在“边缘扩充图像”坐标系下的

if(kp.pt.x<n1.UR.x)

{

if(kp.pt.y<n1.BR.y)

n1.vKeys.push_back(kp);

else

n3.vKeys.push_back(kp);

}

else if(kp.pt.y<n1.BR.y)

n2.vKeys.push_back(kp);

else

n4.vKeys.push_back(kp);

}//遍历当前提取器节点的vkeys中存储的特征点

//判断每个子特征点提取器节点所在的图像中特征点的数目(就是分配给子节点的特征点数目),然后做标记

//这里判断是否数目等于1的目的是确定这个节点还能不能再向下进行分裂

if(n1.vKeys.size()==1)

n1.bNoMore = true;

if(n2.vKeys.size()==1)

n2.bNoMore = true;

if(n3.vKeys.size()==1)

n3.bNoMore = true;

if(n4.vKeys.size()==1)

n4.bNoMore = true;

}

}ORBFeature.cpp

#include "ORB/ORBFeature.hpp"

namespace ORB {

ORBFeature::ORBFeature(const std::string& image_path, const std::string& config_path)

{

image = cv::imread(image_path, cv::IMREAD_GRAYSCALE);

camera_ptr = std::make_shared<Parameter>(config_path);

setPara();

}

// 写的这么优秀,就不给点个赞吗??

void ORBFeature::ExtractORB()

{

// TODO 请在这里利用OpenCV函数进行ORB特征点的提取,并在图像上进行显示

// 创建ORB对象

cv::Ptr<cv::ORB> orb = cv::ORB::create();

// 用于存储关键点的向量

std::vector<cv::KeyPoint> keypoints;

// 用于存储描述符的Mat对象

cv::Mat descriptors;

// 使用ORB对象从图像中提取关键点和描述符

orb->detectAndCompute(this->image, cv::Mat(), keypoints, descriptors);

// 在图像上绘制关键点

cv::Mat keypointImage;

cv::drawKeypoints(this->image, keypoints, keypointImage, cv::Scalar::all(-1), cv::DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

// 显示绘制了关键点的图像

cv::imshow("这是Open CV提取的特征点", keypointImage);

cv::waitKey(0);

}

// 提取ORB特征

// 写的这么优秀,就不给点个赞吗??

void ORB::ORBFeature::ExtractORB(std::vector<cv::KeyPoint>& _keypoints, cv::Mat& _descriptors)

{

// 对图像进行畸变校正

this->UndistortImage();

// 构建图像金字塔

this->ComputePyramid();

int nlevels = camera_ptr->nLevels; // 获取图像金字塔的层数

std::vector<std::vector<cv::KeyPoint>> allKeypoints; // 存储每层图像金字塔的关键点

// 在每层图像金字塔中计算关键点

ComputeKeyPointsQuadtree(allKeypoints);

cv::Mat descriptors; // 描述子

int nkeypoints = 0; // 关键点数量

// 统计所有层的关键点数量

for(int level = 0; level < nlevels; ++level)

nkeypoints += (int)allKeypoints[level].size();

if(nkeypoints == 0)

_descriptors.release(); // 如果没有关键点,则清空描述子

else

{

_descriptors.create(nkeypoints, 32, CV_8U); // 根据关键点数量创建描述子

descriptors = _descriptors;

}

_keypoints.clear(); // 清空关键点

_keypoints.reserve(nkeypoints); // 预分配空间

int offset = 0; // 偏移量,用于分割描述子

for (int level = 0; level < nlevels; ++level)

{

std::vector<cv::KeyPoint>& keypoints = allKeypoints[level];

int nkeypointsLevel = (int)keypoints.size();

if(nkeypointsLevel==0)

continue; // 如果这一层没有关键点,跳过

// 预处理图像

cv::Mat workingMat = mvImagePyramid[level].clone();

// 计算描述子

cv::Mat desc = descriptors.rowRange(offset, offset + nkeypointsLevel);

computeDescriptors(workingMat, keypoints, desc, pattern);

offset += nkeypointsLevel; // 更新偏移量

// 调整关键点坐标

if (level != 0)

{

float scale = mvScaleFactor[level];

for (std::vector<cv::KeyPoint>::iterator keypoint = keypoints.begin(), keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)

keypoint->pt *= scale;

}

// 将关键点添加到输出

_keypoints.insert(_keypoints.end(), keypoints.begin(), keypoints.end());

}

}

void ORBFeature::UndistortImage()

{

int rows = image.rows;

int cols = image.cols;

cv::Mat image_undistort = cv::Mat(rows, cols, CV_8UC1);

for (int v = 0; v < rows; v++)

{

for (int u = 0; u < cols; u++)

{

double x = (u - camera_ptr->cx)/camera_ptr->fx;

double y = (v - camera_ptr->cy)/camera_ptr->fy;

double r = sqrt(x * x + y * y);

double r2 = r * r;

double r4 = r2 * r2;

double x_dis = x * (1 + camera_ptr->k1 * r2 + camera_ptr->k2 * r4) + 2 * camera_ptr->p1 * x * y + camera_ptr->p2 * (r2 + 2 * x * x);

double y_dis = y * (1 + camera_ptr->k1 * r2 + camera_ptr->k2 * r4) + camera_ptr->p1 * (r2 + 2 * y * y) + 2 * camera_ptr->p2 * x * y;

double u_dis = camera_ptr->fx * x_dis + camera_ptr->cx;

double v_dis = camera_ptr->fy * y_dis + camera_ptr->cy;

if (u_dis >= 0 && v_dis >= 0 && u_dis < cols && v_dis < rows)

{

image_undistort.at<uchar>(v, u) = image.at<uchar>((int)v_dis, (int)u_dis);

}

else {

image_undistort.at<uchar>(v, u) = 0;

}

}

}

cv::imshow("distored", image);

cv::imshow("undistorted", image_undistort);

cv::waitKey();

}

int ORBFeature::HALF_PATCH_SIZE = 15;

int ORBFeature::EDGE_THRESHOLD = 19;

int ORBFeature::PATCH_SIZE = 31;

static int bit_pattern_31_[256*4] =

{

8,-3, 9,5/*mean (0), correlation (0)*/, //后面的均值和相关性没有看懂是什么意思

4,2, 7,-12/*mean (1.12461e-05), correlation (0.0437584)*/,

-11,9, -8,2/*mean (3.37382e-05), correlation (0.0617409)*/,

7,-12, 12,-13/*mean (5.62303e-05), correlation (0.0636977)*/,

2,-13, 2,12/*mean (0.000134953), correlation (0.085099)*/,

1,-7, 1,6/*mean (0.000528565), correlation (0.0857175)*/,

-2,-10, -2,-4/*mean (0.0188821), correlation (0.0985774)*/,

-13,-13, -11,-8/*mean (0.0363135), correlation (0.0899616)*/,

-13,-3, -12,-9/*mean (0.121806), correlation (0.099849)*/,

10,4, 11,9/*mean (0.122065), correlation (0.093285)*/,

-13,-8, -8,-9/*mean (0.162787), correlation (0.0942748)*/,

-11,7, -9,12/*mean (0.21561), correlation (0.0974438)*/,

7,7, 12,6/*mean (0.160583), correlation (0.130064)*/,

-4,-5, -3,0/*mean (0.228171), correlation (0.132998)*/,

-13,2, -12,-3/*mean (0.00997526), correlation (0.145926)*/,

-9,0, -7,5/*mean (0.198234), correlation (0.143636)*/,

12,-6, 12,-1/*mean (0.0676226), correlation (0.16689)*/,

-3,6, -2,12/*mean (0.166847), correlation (0.171682)*/,

-6,-13, -4,-8/*mean (0.101215), correlation (0.179716)*/,

11,-13, 12,-8/*mean (0.200641), correlation (0.192279)*/,

4,7, 5,1/*mean (0.205106), correlation (0.186848)*/,

5,-3, 10,-3/*mean (0.234908), correlation (0.192319)*/,

3,-7, 6,12/*mean (0.0709964), correlation (0.210872)*/,

-8,-7, -6,-2/*mean (0.0939834), correlation (0.212589)*/,

-2,11, -1,-10/*mean (0.127778), correlation (0.20866)*/,

-13,12, -8,10/*mean (0.14783), correlation (0.206356)*/,

-7,3, -5,-3/*mean (0.182141), correlation (0.198942)*/,

-4,2, -3,7/*mean (0.188237), correlation (0.21384)*/,

-10,-12, -6,11/*mean (0.14865), correlation (0.23571)*/,

5,-12, 6,-7/*mean (0.222312), correlation (0.23324)*/,

5,-6, 7,-1/*mean (0.229082), correlation (0.23389)*/,

1,0, 4,-5/*mean (0.241577), correlation (0.215286)*/,

9,11, 11,-13/*mean (0.00338507), correlation (0.251373)*/,

4,7, 4,12/*mean (0.131005), correlation (0.257622)*/,

2,-1, 4,4/*mean (0.152755), correlation (0.255205)*/,

-4,-12, -2,7/*mean (0.182771), correlation (0.244867)*/,

-8,-5, -7,-10/*mean (0.186898), correlation (0.23901)*/,

4,11, 9,12/*mean (0.226226), correlation (0.258255)*/,

0,-8, 1,-13/*mean (0.0897886), correlation (0.274827)*/,

-13,-2, -8,2/*mean (0.148774), correlation (0.28065)*/,

-3,-2, -2,3/*mean (0.153048), correlation (0.283063)*/,

-6,9, -4,-9/*mean (0.169523), correlation (0.278248)*/,

8,12, 10,7/*mean (0.225337), correlation (0.282851)*/,

0,9, 1,3/*mean (0.226687), correlation (0.278734)*/,

7,-5, 11,-10/*mean (0.00693882), correlation (0.305161)*/,

-13,-6, -11,0/*mean (0.0227283), correlation (0.300181)*/,

10,7, 12,1/*mean (0.125517), correlation (0.31089)*/,

-6,-3, -6,12/*mean (0.131748), correlation (0.312779)*/,

10,-9, 12,-4/*mean (0.144827), correlation (0.292797)*/,

-13,8, -8,-12/*mean (0.149202), correlation (0.308918)*/,

-13,0, -8,-4/*mean (0.160909), correlation (0.310013)*/,

3,3, 7,8/*mean (0.177755), correlation (0.309394)*/,

5,7, 10,-7/*mean (0.212337), correlation (0.310315)*/,

-1,7, 1,-12/*mean (0.214429), correlation (0.311933)*/,

3,-10, 5,6/*mean (0.235807), correlation (0.313104)*/,

2,-4, 3,-10/*mean (0.00494827), correlation (0.344948)*/,

-13,0, -13,5/*mean (0.0549145), correlation (0.344675)*/,

-13,-7, -12,12/*mean (0.103385), correlation (0.342715)*/,

-13,3, -11,8/*mean (0.134222), correlation (0.322922)*/,

-7,12, -4,7/*mean (0.153284), correlation (0.337061)*/,

6,-10, 12,8/*mean (0.154881), correlation (0.329257)*/,

-9,-1, -7,-6/*mean (0.200967), correlation (0.33312)*/,

-2,-5, 0,12/*mean (0.201518), correlation (0.340635)*/,

-12,5, -7,5/*mean (0.207805), correlation (0.335631)*/,

3,-10, 8,-13/*mean (0.224438), correlation (0.34504)*/,

-7,-7, -4,5/*mean (0.239361), correlation (0.338053)*/,

-3,-2, -1,-7/*mean (0.240744), correlation (0.344322)*/,

2,9, 5,-11/*mean (0.242949), correlation (0.34145)*/,

-11,-13, -5,-13/*mean (0.244028), correlation (0.336861)*/,

-1,6, 0,-1/*mean (0.247571), correlation (0.343684)*/,

5,-3, 5,2/*mean (0.000697256), correlation (0.357265)*/,

-4,-13, -4,12/*mean (0.00213675), correlation (0.373827)*/,

-9,-6, -9,6/*mean (0.0126856), correlation (0.373938)*/,

-12,-10, -8,-4/*mean (0.0152497), correlation (0.364237)*/,

10,2, 12,-3/*mean (0.0299933), correlation (0.345292)*/,

7,12, 12,12/*mean (0.0307242), correlation (0.366299)*/,

-7,-13, -6,5/*mean (0.0534975), correlation (0.368357)*/,

-4,9, -3,4/*mean (0.099865), correlation (0.372276)*/,

7,-1, 12,2/*mean (0.117083), correlation (0.364529)*/,

-7,6, -5,1/*mean (0.126125), correlation (0.369606)*/,

-13,11, -12,5/*mean (0.130364), correlation (0.358502)*/,

-3,7, -2,-6/*mean (0.131691), correlation (0.375531)*/,

7,-8, 12,-7/*mean (0.160166), correlation (0.379508)*/,

-13,-7, -11,-12/*mean (0.167848), correlation (0.353343)*/,

1,-3, 12,12/*mean (0.183378), correlation (0.371916)*/,

2,-6, 3,0/*mean (0.228711), correlation (0.371761)*/,

-4,3, -2,-13/*mean (0.247211), correlation (0.364063)*/,

-1,-13, 1,9/*mean (0.249325), correlation (0.378139)*/,

7,1, 8,-6/*mean (0.000652272), correlation (0.411682)*/,

1,-1, 3,12/*mean (0.00248538), correlation (0.392988)*/,

9,1, 12,6/*mean (0.0206815), correlation (0.386106)*/,

-1,-9, -1,3/*mean (0.0364485), correlation (0.410752)*/,

-13,-13, -10,5/*mean (0.0376068), correlation (0.398374)*/,

7,7, 10,12/*mean (0.0424202), correlation (0.405663)*/,

12,-5, 12,9/*mean (0.0942645), correlation (0.410422)*/,

6,3, 7,11/*mean (0.1074), correlation (0.413224)*/,

5,-13, 6,10/*mean (0.109256), correlation (0.408646)*/,

2,-12, 2,3/*mean (0.131691), correlation (0.416076)*/,

3,8, 4,-6/*mean (0.165081), correlation (0.417569)*/,

2,6, 12,-13/*mean (0.171874), correlation (0.408471)*/,

9,-12, 10,3/*mean (0.175146), correlation (0.41296)*/,

-8,4, -7,9/*mean (0.183682), correlation (0.402956)*/,

-11,12, -4,-6/*mean (0.184672), correlation (0.416125)*/,

1,12, 2,-8/*mean (0.191487), correlation (0.386696)*/,

6,-9, 7,-4/*mean (0.192668), correlation (0.394771)*/,

2,3, 3,-2/*mean (0.200157), correlation (0.408303)*/,

6,3, 11,0/*mean (0.204588), correlation (0.411762)*/,

3,-3, 8,-8/*mean (0.205904), correlation (0.416294)*/,

7,8, 9,3/*mean (0.213237), correlation (0.409306)*/,

-11,-5, -6,-4/*mean (0.243444), correlation (0.395069)*/,

-10,11, -5,10/*mean (0.247672), correlation (0.413392)*/,

-5,-8, -3,12/*mean (0.24774), correlation (0.411416)*/,

-10,5, -9,0/*mean (0.00213675), correlation (0.454003)*/,

8,-1, 12,-6/*mean (0.0293635), correlation (0.455368)*/,

4,-6, 6,-11/*mean (0.0404971), correlation (0.457393)*/,

-10,12, -8,7/*mean (0.0481107), correlation (0.448364)*/,

4,-2, 6,7/*mean (0.050641), correlation (0.455019)*/,

-2,0, -2,12/*mean (0.0525978), correlation (0.44338)*/,

-5,-8, -5,2/*mean (0.0629667), correlation (0.457096)*/,

7,-6, 10,12/*mean (0.0653846), correlation (0.445623)*/,

-9,-13, -8,-8/*mean (0.0858749), correlation (0.449789)*/,

-5,-13, -5,-2/*mean (0.122402), correlation (0.450201)*/,

8,-8, 9,-13/*mean (0.125416), correlation (0.453224)*/,

-9,-11, -9,0/*mean (0.130128), correlation (0.458724)*/,

1,-8, 1,-2/*mean (0.132467), correlation (0.440133)*/,

7,-4, 9,1/*mean (0.132692), correlation (0.454)*/,

-2,1, -1,-4/*mean (0.135695), correlation (0.455739)*/,

11,-6, 12,-11/*mean (0.142904), correlation (0.446114)*/,

-12,-9, -6,4/*mean (0.146165), correlation (0.451473)*/,

3,7, 7,12/*mean (0.147627), correlation (0.456643)*/,

5,5, 10,8/*mean (0.152901), correlation (0.455036)*/,

0,-4, 2,8/*mean (0.167083), correlation (0.459315)*/,

-9,12, -5,-13/*mean (0.173234), correlation (0.454706)*/,

0,7, 2,12/*mean (0.18312), correlation (0.433855)*/,

-1,2, 1,7/*mean (0.185504), correlation (0.443838)*/,

5,11, 7,-9/*mean (0.185706), correlation (0.451123)*/,

3,5, 6,-8/*mean (0.188968), correlation (0.455808)*/,

-13,-4, -8,9/*mean (0.191667), correlation (0.459128)*/,

-5,9, -3,-3/*mean (0.193196), correlation (0.458364)*/,

-4,-7, -3,-12/*mean (0.196536), correlation (0.455782)*/,

6,5, 8,0/*mean (0.1972), correlation (0.450481)*/,

-7,6, -6,12/*mean (0.199438), correlation (0.458156)*/,

-13,6, -5,-2/*mean (0.211224), correlation (0.449548)*/,

1,-10, 3,10/*mean (0.211718), correlation (0.440606)*/,

4,1, 8,-4/*mean (0.213034), correlation (0.443177)*/,

-2,-2, 2,-13/*mean (0.234334), correlation (0.455304)*/,

2,-12, 12,12/*mean (0.235684), correlation (0.443436)*/,

-2,-13, 0,-6/*mean (0.237674), correlation (0.452525)*/,

4,1, 9,3/*mean (0.23962), correlation (0.444824)*/,

-6,-10, -3,-5/*mean (0.248459), correlation (0.439621)*/,

-3,-13, -1,1/*mean (0.249505), correlation (0.456666)*/,

7,5, 12,-11/*mean (0.00119208), correlation (0.495466)*/,

4,-2, 5,-7/*mean (0.00372245), correlation (0.484214)*/,

-13,9, -9,-5/*mean (0.00741116), correlation (0.499854)*/,

7,1, 8,6/*mean (0.0208952), correlation (0.499773)*/,

7,-8, 7,6/*mean (0.0220085), correlation (0.501609)*/,

-7,-4, -7,1/*mean (0.0233806), correlation (0.496568)*/,

-8,11, -7,-8/*mean (0.0236505), correlation (0.489719)*/,

-13,6, -12,-8/*mean (0.0268781), correlation (0.503487)*/,

2,4, 3,9/*mean (0.0323324), correlation (0.501938)*/,

10,-5, 12,3/*mean (0.0399235), correlation (0.494029)*/,

-6,-5, -6,7/*mean (0.0420153), correlation (0.486579)*/,

8,-3, 9,-8/*mean (0.0548021), correlation (0.484237)*/,

2,-12, 2,8/*mean (0.0616622), correlation (0.496642)*/,

-11,-2, -10,3/*mean (0.0627755), correlation (0.498563)*/,

-12,-13, -7,-9/*mean (0.0829622), correlation (0.495491)*/,

-11,0, -10,-5/*mean (0.0843342), correlation (0.487146)*/,

5,-3, 11,8/*mean (0.0929937), correlation (0.502315)*/,

-2,-13, -1,12/*mean (0.113327), correlation (0.48941)*/,

-1,-8, 0,9/*mean (0.132119), correlation (0.467268)*/,

-13,-11, -12,-5/*mean (0.136269), correlation (0.498771)*/,

-10,-2, -10,11/*mean (0.142173), correlation (0.498714)*/,

-3,9, -2,-13/*mean (0.144141), correlation (0.491973)*/,

2,-3, 3,2/*mean (0.14892), correlation (0.500782)*/,

-9,-13, -4,0/*mean (0.150371), correlation (0.498211)*/,

-4,6, -3,-10/*mean (0.152159), correlation (0.495547)*/,

-4,12, -2,-7/*mean (0.156152), correlation (0.496925)*/,

-6,-11, -4,9/*mean (0.15749), correlation (0.499222)*/,

6,-3, 6,11/*mean (0.159211), correlation (0.503821)*/,

-13,11, -5,5/*mean (0.162427), correlation (0.501907)*/,

11,11, 12,6/*mean (0.16652), correlation (0.497632)*/,

7,-5, 12,-2/*mean (0.169141), correlation (0.484474)*/,

-1,12, 0,7/*mean (0.169456), correlation (0.495339)*/,

-4,-8, -3,-2/*mean (0.171457), correlation (0.487251)*/,

-7,1, -6,7/*mean (0.175), correlation (0.500024)*/,

-13,-12, -8,-13/*mean (0.175866), correlation (0.497523)*/,

-7,-2, -6,-8/*mean (0.178273), correlation (0.501854)*/,

-8,5, -6,-9/*mean (0.181107), correlation (0.494888)*/,

-5,-1, -4,5/*mean (0.190227), correlation (0.482557)*/,

-13,7, -8,10/*mean (0.196739), correlation (0.496503)*/,

1,5, 5,-13/*mean (0.19973), correlation (0.499759)*/,

1,0, 10,-13/*mean (0.204465), correlation (0.49873)*/,

9,12, 10,-1/*mean (0.209334), correlation (0.49063)*/,

5,-8, 10,-9/*mean (0.211134), correlation (0.503011)*/,

-1,11, 1,-13/*mean (0.212), correlation (0.499414)*/,

-9,-3, -6,2/*mean (0.212168), correlation (0.480739)*/,

-1,-10, 1,12/*mean (0.212731), correlation (0.502523)*/,

-13,1, -8,-10/*mean (0.21327), correlation (0.489786)*/,

8,-11, 10,-6/*mean (0.214159), correlation (0.488246)*/,

2,-13, 3,-6/*mean (0.216993), correlation (0.50287)*/,

7,-13, 12,-9/*mean (0.223639), correlation (0.470502)*/,

-10,-10, -5,-7/*mean (0.224089), correlation (0.500852)*/,

-10,-8, -8,-13/*mean (0.228666), correlation (0.502629)*/,

4,-6, 8,5/*mean (0.22906), correlation (0.498305)*/,

3,12, 8,-13/*mean (0.233378), correlation (0.503825)*/,

-4,2, -3,-3/*mean (0.234323), correlation (0.476692)*/,

5,-13, 10,-12/*mean (0.236392), correlation (0.475462)*/,

4,-13, 5,-1/*mean (0.236842), correlation (0.504132)*/,

-9,9, -4,3/*mean (0.236977), correlation (0.497739)*/,

0,3, 3,-9/*mean (0.24314), correlation (0.499398)*/,

-12,1, -6,1/*mean (0.243297), correlation (0.489447)*/,

3,2, 4,-8/*mean (0.00155196), correlation (0.553496)*/,

-10,-10, -10,9/*mean (0.00239541), correlation (0.54297)*/,

8,-13, 12,12/*mean (0.0034413), correlation (0.544361)*/,

-8,-12, -6,-5/*mean (0.003565), correlation (0.551225)*/,

2,2, 3,7/*mean (0.00835583), correlation (0.55285)*/,

10,6, 11,-8/*mean (0.00885065), correlation (0.540913)*/,

6,8, 8,-12/*mean (0.0101552), correlation (0.551085)*/,

-7,10, -6,5/*mean (0.0102227), correlation (0.533635)*/,

-3,-9, -3,9/*mean (0.0110211), correlation (0.543121)*/,

-1,-13, -1,5/*mean (0.0113473), correlation (0.550173)*/,

-3,-7, -3,4/*mean (0.0140913), correlation (0.554774)*/,

-8,-2, -8,3/*mean (0.017049), correlation (0.55461)*/,

4,2, 12,12/*mean (0.01778), correlation (0.546921)*/,

2,-5, 3,11/*mean (0.0224022), correlation (0.549667)*/,

6,-9, 11,-13/*mean (0.029161), correlation (0.546295)*/,

3,-1, 7,12/*mean (0.0303081), correlation (0.548599)*/,

11,-1, 12,4/*mean (0.0355151), correlation (0.523943)*/,

-3,0, -3,6/*mean (0.0417904), correlation (0.543395)*/,

4,-11, 4,12/*mean (0.0487292), correlation (0.542818)*/,

2,-4, 2,1/*mean (0.0575124), correlation (0.554888)*/,

-10,-6, -8,1/*mean (0.0594242), correlation (0.544026)*/,

-13,7, -11,1/*mean (0.0597391), correlation (0.550524)*/,

-13,12, -11,-13/*mean (0.0608974), correlation (0.55383)*/,

6,0, 11,-13/*mean (0.065126), correlation (0.552006)*/,

0,-1, 1,4/*mean (0.074224), correlation (0.546372)*/,

-13,3, -9,-2/*mean (0.0808592), correlation (0.554875)*/,

-9,8, -6,-3/*mean (0.0883378), correlation (0.551178)*/,

-13,-6, -8,-2/*mean (0.0901035), correlation (0.548446)*/,

5,-9, 8,10/*mean (0.0949843), correlation (0.554694)*/,

2,7, 3,-9/*mean (0.0994152), correlation (0.550979)*/,

-1,-6, -1,-1/*mean (0.10045), correlation (0.552714)*/,

9,5, 11,-2/*mean (0.100686), correlation (0.552594)*/,

11,-3, 12,-8/*mean (0.101091), correlation (0.532394)*/,

3,0, 3,5/*mean (0.101147), correlation (0.525576)*/,

-1,4, 0,10/*mean (0.105263), correlation (0.531498)*/,

3,-6, 4,5/*mean (0.110785), correlation (0.540491)*/,

-13,0, -10,5/*mean (0.112798), correlation (0.536582)*/,

5,8, 12,11/*mean (0.114181), correlation (0.555793)*/,

8,9, 9,-6/*mean (0.117431), correlation (0.553763)*/,

7,-4, 8,-12/*mean (0.118522), correlation (0.553452)*/,

-10,4, -10,9/*mean (0.12094), correlation (0.554785)*/,

7,3, 12,4/*mean (0.122582), correlation (0.555825)*/,

9,-7, 10,-2/*mean (0.124978), correlation (0.549846)*/,

7,0, 12,-2/*mean (0.127002), correlation (0.537452)*/,

-1,-6, 0,-11/*mean (0.127148), correlation (0.547401)*/

};

void ORBFeature::setPara()

{

int nlevels = camera_ptr->nLevels;

double scaleFactor = camera_ptr->scalseFactor;

int nfeatures = camera_ptr->nFeatures;

mvScaleFactor.resize(nlevels);

mvLevelSigma2.resize(nlevels);

mvScaleFactor[0] = 1.0f;

mvLevelSigma2[0]=1.0f;

for(int i=1; i<nlevels; i++)

{

mvScaleFactor[i]=mvScaleFactor[i-1]*scaleFactor;

mvLevelSigma2[i]=mvScaleFactor[i]*mvScaleFactor[i];

}

mvInvScaleFactor.resize(nlevels);

mvInvLevelSigma2.resize(nlevels);

for(int i=0; i<nlevels; i++)

{

mvInvScaleFactor[i]=1.0f/mvScaleFactor[i];

mvInvLevelSigma2[i]=1.0f/mvLevelSigma2[i];

}

mvImagePyramid.resize(nlevels);

mnFeaturesPerLevel.resize(nlevels);

float factor = 1.0f / scaleFactor;

float nDesiredFeaturesPerScale = nfeatures*(1 - factor)/(1 - (float)pow((double)factor, (double)nlevels));

int sumFeatures = 0;

for( int level = 0; level < nlevels-1; level++ )

{

mnFeaturesPerLevel[level] = cvRound(nDesiredFeaturesPerScale);

//累计

sumFeatures += mnFeaturesPerLevel[level];

//乘系数

nDesiredFeaturesPerScale *= factor;

}

mnFeaturesPerLevel[nlevels-1] = std::max(nfeatures - sumFeatures, 0);

const int npoints = 512;

const cv::Point* pattern0 = (const cv::Point*)bit_pattern_31_;

std::copy(pattern0, pattern0 + npoints, std::back_inserter(pattern));

umax.resize(HALF_PATCH_SIZE + 1);

int v, //循环辅助变量

v0, //辅助变量

vmax = cvFloor(HALF_PATCH_SIZE * sqrt(2.f) / 2 + 1);

int vmin = cvCeil(HALF_PATCH_SIZE * sqrt(2.f) / 2);

const double hp2 = HALF_PATCH_SIZE*HALF_PATCH_SIZE;

for (v = 0; v <= vmax; ++v)

umax[v] = cvRound(sqrt(hp2 - v * v));

for (v = HALF_PATCH_SIZE, v0 = 0; v >= vmin; --v)

{

while (umax[v0] == umax[v0 + 1])

++v0;

umax[v] = v0;

++v0;

}

}

void ORBFeature::ComputePyramid()

{

int nlevels = camera_ptr->nLevels;

for (int level = 0; level < nlevels; ++level)

{

float scale = mvInvScaleFactor[level];

cv::Size sz(cvRound((float)image.cols*scale), cvRound((float)image.rows*scale));

cv::Size wholeSize(sz.width + EDGE_THRESHOLD*2, sz.height + EDGE_THRESHOLD*2);

cv::Mat temp(wholeSize, image.type()), masktemp;

mvImagePyramid[level] = temp(cv::Rect(EDGE_THRESHOLD, EDGE_THRESHOLD, sz.width, sz.height));

if( level != 0 )

{

//将上一层金字塔图像根据设定sz缩放到当前层级

// resize(mvImagePyramid[level-1], //输入图像

// mvImagePyramid[level], //输出图像

// sz, //输出图像的尺寸

// 0, //水平方向上的缩放系数,留0表示自动计算

// 0, //垂直方向上的缩放系数,留0表示自动计算

// cv::INTER_LINEAR); //图像缩放的差值算法类型,这里的是线性插值算法

//! 原代码mvImagePyramid 并未扩充,上面resize应该改为如下

cv::resize(image, //输入图像

mvImagePyramid[level], //输出图像

sz, //输出图像的尺寸

0, //水平方向上的缩放系数,留0表示自动计算

0, //垂直方向上的缩放系数,留0表示自动计算

cv::INTER_LINEAR); //图像缩放的差值算法类型,这里的是线性插值算法

cv::copyMakeBorder(mvImagePyramid[level], //源图像

temp, //目标图像(此时其实就已经有大了一圈的尺寸了)

EDGE_THRESHOLD, EDGE_THRESHOLD, //top & bottom 需要扩展的border大小

EDGE_THRESHOLD, EDGE_THRESHOLD, //left & right 需要扩展的border大小

cv::BORDER_REFLECT_101+cv::BORDER_ISOLATED); //扩充方式,opencv给出的解释:

}

else

{

cv::copyMakeBorder(image, //这里是原图像

temp, EDGE_THRESHOLD, EDGE_THRESHOLD, EDGE_THRESHOLD, EDGE_THRESHOLD,

cv::BORDER_REFLECT_101);

}

mvImagePyramid[level] = temp;

}

}

void ORBFeature::ComputeKeyPointsQuadtree(std::vector<std::vector<cv::KeyPoint>>& allKeypoints)

{

int nlevels = camera_ptr->nLevels;

int nfeatures = camera_ptr->nFeatures;

int iniThFAST = camera_ptr->iniThFAST;

int minThFAST = camera_ptr->minThFAST;

allKeypoints.resize(nlevels);

//图像cell的尺寸,是个正方形,可以理解为边长in像素坐标

const float W = 30;

for (int level = 0; level < nlevels; ++level)

{

//计算这层图像的坐标边界, NOTICE 注意这里是坐标边界,EDGE_THRESHOLD指的应该是可以提取特征点的有效图像边界,后面会一直使用“有效图像边界“这个自创名词

const int minBorderX = EDGE_THRESHOLD-3; //这里的3是因为在计算FAST特征点的时候,需要建立一个半径为3的圆

const int minBorderY = minBorderX; //minY的计算就可以直接拷贝上面的计算结果了

const int maxBorderX = mvImagePyramid[level].cols-EDGE_THRESHOLD+3;

const int maxBorderY = mvImagePyramid[level].rows-EDGE_THRESHOLD+3;

//存储需要进行平均分配的特征点

std::vector<cv::KeyPoint> vToDistributeKeys;

//一般地都是过量采集,所以这里预分配的空间大小是nfeatures*10

vToDistributeKeys.reserve(nfeatures*10);

//计算进行特征点提取的图像区域尺寸

const float width = (maxBorderX-minBorderX);

const float height = (maxBorderY-minBorderY);

//计算网格在当前层的图像有的行数和列数

const int nCols = width/W;

const int nRows = height/W;

//计算每个图像网格所占的像素行数和列数

const int wCell = ceil(width/nCols);

const int hCell = ceil(height/nRows);

//开始遍历图像网格,还是以行开始遍历的

for(int i=0; i<nRows; i++)

{

//计算当前网格初始行坐标

const float iniY =minBorderY+i*hCell;

//计算当前网格最大的行坐标,这里的+6=+3+3,即考虑到了多出来3是为了cell边界像素进行FAST特征点提取用

//前面的EDGE_THRESHOLD指的应该是提取后的特征点所在的边界,所以minBorderY是考虑了计算半径时候的图像边界

//目测一个图像网格的大小是25*25啊

float maxY = iniY+hCell+6;

//如果初始的行坐标就已经超过了有效的图像边界了,这里的“有效图像”是指原始的、可以提取FAST特征点的图像区域

if(iniY>=maxBorderY-3)

//那么就跳过这一行

continue;

//如果图像的大小导致不能够正好划分出来整齐的图像网格,那么就要委屈最后一行了

if(maxY>maxBorderY)

maxY = maxBorderY;

//开始列的遍历

for(int j=0; j<nCols; j++)

{

//计算初始的列坐标

const float iniX =minBorderX+j*wCell;

//计算这列网格的最大列坐标,+6的含义和前面相同

float maxX = iniX+wCell+6;

//判断坐标是否在图像中

//如果初始的列坐标就已经超过了有效的图像边界了,这里的“有效图像”是指原始的、可以提取FAST特征点的图像区域。

//并且应该同前面行坐标的边界对应,都为-3

//!BUG 正确应该是maxBorderX-3

if(iniX>=maxBorderX-6)

continue;

//如果最大坐标越界那么委屈一下

if(maxX>maxBorderX)

maxX = maxBorderX;

// FAST提取兴趣点, 自适应阈值

//这个向量存储这个cell中的特征点

std::vector<cv::KeyPoint> vKeysCell;

//调用opencv的库函数来检测FAST角点

cv::FAST(mvImagePyramid[level].rowRange(iniY,maxY).colRange(iniX,maxX), //待检测的图像,这里就是当前遍历到的图像块

vKeysCell, //存储角点位置的容器

iniThFAST, //检测阈值

true); //使能非极大值抑制

//如果这个图像块中使用默认的FAST检测阈值没有能够检测到角点

if(vKeysCell.empty())

{

//那么就使用更低的阈值来进行重新检测

FAST(mvImagePyramid[level].rowRange(iniY,maxY).colRange(iniX,maxX), //待检测的图像

vKeysCell, //存储角点位置的容器

minThFAST, //更低的检测阈值

true); //使能非极大值抑制

}

//当图像cell中检测到FAST角点的时候执行下面的语句

if(!vKeysCell.empty())

{

//遍历其中的所有FAST角点

for(std::vector<cv::KeyPoint>::iterator vit=vKeysCell.begin(); vit!=vKeysCell.end();vit++)

{

//NOTICE 到目前为止,这些角点的坐标都是基于图像cell的,现在我们要先将其恢复到当前的【坐标边界】下的坐标

//这样做是因为在下面使用八叉树法整理特征点的时候将会使用得到这个坐标

//在后面将会被继续转换成为在当前图层的扩充图像坐标系下的坐标

(*vit).pt.x+=j*wCell;

(*vit).pt.y+=i*hCell;

//然后将其加入到”等待被分配“的特征点容器中

vToDistributeKeys.push_back(*vit);

}//遍历图像cell中的所有的提取出来的FAST角点,并且恢复其在整个金字塔当前层图像下的坐标

}//当图像cell中检测到FAST角点的时候执行下面的语句

}//开始遍历图像cell的列

}//开始遍历图像cell的行

//声明一个对当前图层的特征点的容器的引用

std::vector<cv::KeyPoint> & keypoints = allKeypoints[level];

//并且调整其大小为欲提取出来的特征点个数(当然这里也是扩大了的,因为不可能所有的特征点都是在这一个图层中提取出来的)

keypoints.reserve(nfeatures);

// 根据mnFeatuvector<KeyPoint> & keypoints = allKeypoints[level];resPerLevel,即该层的兴趣点数,对特征点进行剔除

//返回值是一个保存有特征点的vector容器,含有剔除后的保留下来的特征点

//得到的特征点的坐标,依旧是在当前图层下来讲的

keypoints = DistributeQuadtree(vToDistributeKeys, //当前图层提取出来的特征点,也即是等待剔除的特征点

//NOTICE 注意此时特征点所使用的坐标都是在“半径扩充图像”下的

minBorderX, maxBorderX, //当前图层图像的边界,而这里的坐标却都是在“边缘扩充图像”下的

minBorderY, maxBorderY,

mnFeaturesPerLevel[level], //希望保留下来的当前层图像的特征点个数

level); //当前层图像所在的图层

//PATCH_SIZE是对于底层的初始图像来说的,现在要根据当前图层的尺度缩放倍数进行缩放得到缩放后的PATCH大小 和特征点的方向计算有关

const int scaledPatchSize = PATCH_SIZE*mvScaleFactor[level];

// Add border to coordinates and scale information

//获取剔除过程后保留下来的特征点数目

const int nkps = keypoints.size();

//然后开始遍历这些特征点,恢复其在当前图层图像坐标系下的坐标

for(int i=0; i<nkps ; i++)

{

//对每一个保留下来的特征点,恢复到相对于当前图层“边缘扩充图像下”的坐标系的坐标

keypoints[i].pt.x+=minBorderX;

keypoints[i].pt.y+=minBorderY;

//记录特征点来源的图像金字塔图层

keypoints[i].octave=level;

//记录计算方向的patch,缩放后对应的大小, 又被称作为特征点半径

keypoints[i].size = scaledPatchSize;

}

}

for (int level = 0; level < nlevels; ++level)

computeOrientation(mvImagePyramid[level], //对应的图层的图像

allKeypoints[level], //这个图层中提取并保留下来的特征点容器

umax); //以及PATCH的横坐标边界

}

std::vector<cv::KeyPoint> ORBFeature::DistributeQuadtree(const std::vector<cv::KeyPoint>& vToDistributeKeys, const int &minX,

const int &maxX, const int &minY, const int &maxY, const int &N, const int &level)

{

// Compute how many initial nodes

// Step 1 根据宽高比确定初始节点数目

//计算应该生成的初始节点个数,根节点的数量nIni是根据边界的宽高比值确定的,一般是1或者2

// ! bug: 如果宽高比小于0.5,nIni=0, 后面hx会报错

const int nIni = round(static_cast<float>(maxX-minX)/(maxY-minY));

//一个初始的节点的x方向有多少个像素

const float hX = static_cast<float>(maxX-minX)/nIni;

//存储有提取器节点的链表

std::list<ExtractorNode> lNodes;

//存储初始提取器节点指针的vector

std::vector<ExtractorNode*> vpIniNodes;

//重新设置其大小

vpIniNodes.resize(nIni);

// Step 2 生成初始提取器节点

for(int i=0; i<nIni; i++)

{

//生成一个提取器节点

ExtractorNode ni;

//设置提取器节点的图像边界

//注意这里和提取FAST角点区域相同,都是“半径扩充图像”,特征点坐标从0 开始

ni.UL = cv::Point2i(hX*static_cast<float>(i),0); //UpLeft

ni.UR = cv::Point2i(hX*static_cast<float>(i+1),0); //UpRight

ni.BL = cv::Point2i(ni.UL.x,maxY-minY); //BottomLeft

ni.BR = cv::Point2i(ni.UR.x,maxY-minY); //BottomRight

//重设vkeys大小

ni.vKeys.reserve(vToDistributeKeys.size());

//将刚才生成的提取节点添加到链表中

//虽然这里的ni是局部变量,但是由于这里的push_back()是拷贝参数的内容到一个新的对象中然后再添加到列表中

//所以当本函数退出之后这里的内存不会成为“野指针”

lNodes.push_back(ni);

//存储这个初始的提取器节点句柄

vpIniNodes[i] = &lNodes.back();

}

//Associate points to childs

// Step 3 将特征点分配到子提取器节点中

for(size_t i=0;i<vToDistributeKeys.size();i++)

{

//获取这个特征点对象

const cv::KeyPoint &kp = vToDistributeKeys[i];

//按特征点的横轴位置,分配给属于那个图像区域的提取器节点(最初的提取器节点)

vpIniNodes[kp.pt.x/hX]->vKeys.push_back(kp);

}

// Step 4 遍历此提取器节点列表,标记那些不可再分裂的节点,删除那些没有分配到特征点的节点

// ? 这个步骤是必要的吗?感觉可以省略,通过判断nIni个数和vKeys.size() 就可以吧

std::list<ExtractorNode>::iterator lit = lNodes.begin();

while(lit!=lNodes.end())

{

//如果初始的提取器节点所分配到的特征点个数为1

if(lit->vKeys.size()==1)

{

//那么就标志位置位,表示此节点不可再分

lit->bNoMore=true;

//更新迭代器

lit++;

}

///如果一个提取器节点没有被分配到特征点,那么就从列表中直接删除它

else if(lit->vKeys.empty())

//注意,由于是直接删除了它,所以这里的迭代器没有必要更新;否则反而会造成跳过元素的情况

lit = lNodes.erase(lit);

else

//如果上面的这些情况和当前的特征点提取器节点无关,那么就只是更新迭代器

lit++;

}

//结束标志位清空

bool bFinish = false;

//记录迭代次数,只是记录,并未起到作用

int iteration = 0;

//声明一个vector用于存储节点的vSize和句柄对

//这个变量记录了在一次分裂循环中,那些可以再继续进行分裂的节点中包含的特征点数目和其句柄

std::vector<std::pair<int,ExtractorNode*> > vSizeAndPointerToNode;

//调整大小,这里的意思是一个初始化节点将“分裂”成为四个

vSizeAndPointerToNode.reserve(lNodes.size()*4);

// Step 5 利用四叉树方法对图像进行划分区域,均匀分配特征点

while(!bFinish)

{

//更新迭代次数计数器,只是记录,并未起到作用

iteration++;

//保存当前节点个数,prev在这里理解为“保留”比较好

int prevSize = lNodes.size();

//重新定位迭代器指向列表头部

lit = lNodes.begin();

//需要展开的节点计数,这个一直保持累计,不清零

int nToExpand = 0;

//因为是在循环中,前面的循环体中可能污染了这个变量,所以清空

//这个变量也只是统计了某一个循环中的点

//这个变量记录了在一次分裂循环中,那些可以再继续进行分裂的节点中包含的特征点数目和其句柄

vSizeAndPointerToNode.clear();

// 将目前的子区域进行划分

//开始遍历列表中所有的提取器节点,并进行分解或者保留

while(lit!=lNodes.end())

{

//如果提取器节点只有一个特征点,

if(lit->bNoMore)

{

// If node only contains one point do not subdivide and continue

//那么就没有必要再进行细分了

lit++;

//跳过当前节点,继续下一个

continue;

}

else

{

// If more than one point, subdivide

//如果当前的提取器节点具有超过一个的特征点,那么就要进行继续分裂

ExtractorNode n1,n2,n3,n4;

//再细分成四个子区域

lit->DivideNode(n1,n2,n3,n4);

// Add childs if they contain points

//如果这里分出来的子区域中有特征点,那么就将这个子区域的节点添加到提取器节点的列表中

//注意这里的条件是,有特征点即可

if(n1.vKeys.size()>0)

{

//注意这里也是添加到列表前面的

lNodes.push_front(n1);

//再判断其中子提取器节点中的特征点数目是否大于1

if(n1.vKeys.size()>1)

{

//如果有超过一个的特征点,那么待展开的节点计数加1

nToExpand++;

//保存这个特征点数目和节点指针的信息

vSizeAndPointerToNode.push_back(std::make_pair(n1.vKeys.size(),&lNodes.front()));

//?这个访问用的句柄貌似并没有用到?

// lNodes.front().lit 和前面的迭代的lit 不同,只是名字相同而已

// lNodes.front().lit是node结构体里的一个指针用来记录节点的位置

// 迭代的lit 是while循环里作者命名的遍历的指针名称

lNodes.front().lit = lNodes.begin();

}

}

//后面的操作都是相同的,这里不再赘述

if(n2.vKeys.size()>0)

{

lNodes.push_front(n2);

if(n2.vKeys.size()>1)

{

nToExpand++;

vSizeAndPointerToNode.push_back(std::make_pair(n2.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

if(n3.vKeys.size()>0)

{

lNodes.push_front(n3);

if(n3.vKeys.size()>1)

{

nToExpand++;

vSizeAndPointerToNode.push_back(std::make_pair(n3.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

if(n4.vKeys.size()>0)

{

lNodes.push_front(n4);

if(n4.vKeys.size()>1)

{

nToExpand++;

vSizeAndPointerToNode.push_back(std::make_pair(n4.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

//当这个母节点expand之后就从列表中删除它了,能够进行分裂操作说明至少有一个子节点的区域中特征点的数量是>1的

// 分裂方式是后加的节点先分裂,先加的后分裂

lit=lNodes.erase(lit);

//继续下一次循环,其实这里加不加这句话的作用都是一样的

continue;

}//判断当前遍历到的节点中是否有超过一个的特征点

}//遍历列表中的所有提取器节点

// Finish if there are more nodes than required features or all nodes contain just one point

//停止这个过程的条件有两个,满足其中一个即可:

//1、当前的节点数已经超过了要求的特征点数

//2、当前所有的节点中都只包含一个特征点

if((int)lNodes.size()>=N //判断是否超过了要求的特征点数

|| (int)lNodes.size()==prevSize) //prevSize中保存的是分裂之前的节点个数,如果分裂之前和分裂之后的总节点个数一样,说明当前所有的

//节点区域中只有一个特征点,已经不能够再细分了

{

//停止标志置位

bFinish = true;

}

// Step 6 当再划分之后所有的Node数大于要求数目时,就慢慢划分直到使其刚刚达到或者超过要求的特征点个数

//可以展开的子节点个数nToExpand x3,是因为一分四之后,会删除原来的主节点,所以乘以3

/**

* //?BUG 但是我觉得这里有BUG,虽然最终作者也给误打误撞、稀里糊涂地修复了

* 注意到,这里的nToExpand变量在前面的执行过程中是一直处于累计状态的,如果因为特征点个数太少,跳过了下面的else-if,又进行了一次上面的遍历

* list的操作之后,lNodes.size()增加了,但是nToExpand也增加了,尤其是在很多次操作之后,下面的表达式:

* ((int)lNodes.size()+nToExpand*3)>N

* 会很快就被满足,但是此时只进行一次对vSizeAndPointerToNode中点进行分裂的操作是肯定不够的;

* 理想中,作者下面的for理论上只要执行一次就能满足,不过作者所考虑的“不理想情况”应该是分裂后出现的节点所在区域可能没有特征点,因此将for

* 循环放在了一个while循环里面,通过再次进行for循环、再分裂一次解决这个问题。而我所考虑的“不理想情况”则是因为前面的一次对vSizeAndPointerToNode

* 中的特征点进行for循环不够,需要将其放在另外一个循环(也就是作者所写的while循环)中不断尝试直到达到退出条件。

* */

else if(((int)lNodes.size()+nToExpand*3)>N)

{

//如果再分裂一次那么数目就要超了,这里想办法尽可能使其刚刚达到或者超过要求的特征点个数时就退出

//这里的nToExpand和vSizeAndPointerToNode不是一次循环对一次循环的关系,而是前者是累计计数,后者只保存某一个循环的

//一直循环,直到结束标志位被置位

while(!bFinish)

{

//获取当前的list中的节点个数

prevSize = lNodes.size();

//保留那些还可以分裂的节点的信息, 这里是深拷贝

std::vector<std::pair<int,ExtractorNode*> > vPrevSizeAndPointerToNode = vSizeAndPointerToNode;

//清空

vSizeAndPointerToNode.clear();

// 对需要划分的节点进行排序,对pair对的第一个元素进行排序,默认是从小到大排序

// 优先分裂特征点多的节点,使得特征点密集的区域保留更少的特征点

//! 注意这里的排序规则非常重要!会导致每次最后产生的特征点都不一样。建议使用 stable_sort

std::sort(vPrevSizeAndPointerToNode.begin(),vPrevSizeAndPointerToNode.end());

//遍历这个存储了pair对的vector,注意是从后往前遍历

for(int j=vPrevSizeAndPointerToNode.size()-1;j>=0;j--)

{

ExtractorNode n1,n2,n3,n4;

//对每个需要进行分裂的节点进行分裂

vPrevSizeAndPointerToNode[j].second->DivideNode(n1,n2,n3,n4);

// Add childs if they contain points

//其实这里的节点可以说是二级子节点了,执行和前面一样的操作

if(n1.vKeys.size()>0)

{

lNodes.push_front(n1);

if(n1.vKeys.size()>1)

{

//因为这里还有对于vSizeAndPointerToNode的操作,所以前面才会备份vSizeAndPointerToNode中的数据

//为可能的、后续的又一次for循环做准备

vSizeAndPointerToNode.push_back(std::make_pair(n1.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

if(n2.vKeys.size()>0)

{

lNodes.push_front(n2);

if(n2.vKeys.size()>1)

{

vSizeAndPointerToNode.push_back(std::make_pair(n2.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

if(n3.vKeys.size()>0)

{

lNodes.push_front(n3);

if(n3.vKeys.size()>1)

{

vSizeAndPointerToNode.push_back(std::make_pair(n3.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

if(n4.vKeys.size()>0)

{

lNodes.push_front(n4);

if(n4.vKeys.size()>1)

{

vSizeAndPointerToNode.push_back(std::make_pair(n4.vKeys.size(),&lNodes.front()));

lNodes.front().lit = lNodes.begin();

}

}

//删除母节点,在这里其实应该是一级子节点

lNodes.erase(vPrevSizeAndPointerToNode[j].second->lit);

//判断是是否超过了需要的特征点数?是的话就退出,不是的话就继续这个分裂过程,直到刚刚达到或者超过要求的特征点个数

//作者的思想其实就是这样的,再分裂了一次之后判断下一次分裂是否会超过N,如果不是那么就放心大胆地全部进行分裂(因为少了一个判断因此

//其运算速度会稍微快一些),如果会那么就引导到这里进行最后一次分裂

if((int)lNodes.size()>=N)

break;

}//遍历vPrevSizeAndPointerToNode并对其中指定的node进行分裂,直到刚刚达到或者超过要求的特征点个数

//这里理想中应该是一个for循环就能够达成结束条件了,但是作者想的可能是,有些子节点所在的区域会没有特征点,因此很有可能一次for循环之后

//的数目还是不能够满足要求,所以还是需要判断结束条件并且再来一次

//判断是否达到了停止条件

if((int)lNodes.size()>=N || (int)lNodes.size()==prevSize)

bFinish = true;

}//一直进行nToExpand累加的节点分裂过程,直到分裂后的nodes数目刚刚达到或者超过要求的特征点数目

}//当本次分裂后达不到结束条件但是再进行一次完整的分裂之后就可以达到结束条件时

}// 根据兴趣点分布,利用4叉树方法对图像进行划分区域

// Retain the best point in each node

// Step 7 保留每个区域响应值最大的一个兴趣点

//使用这个vector来存储我们感兴趣的特征点的过滤结果

std::vector<cv::KeyPoint> vResultKeys;

//调整容器大小为要提取的特征点数目

vResultKeys.reserve(camera_ptr->nFeatures);

//遍历这个节点链表

for(std::list<ExtractorNode>::iterator lit=lNodes.begin(); lit!=lNodes.end(); lit++)

{

//得到这个节点区域中的特征点容器句柄

std::vector<cv::KeyPoint> &vNodeKeys = lit->vKeys;

//得到指向第一个特征点的指针,后面作为最大响应值对应的关键点

cv::KeyPoint* pKP = &vNodeKeys[0];

//用第1个关键点响应值初始化最大响应值

float maxResponse = pKP->response;

//开始遍历这个节点区域中的特征点容器中的特征点,注意是从1开始哟,0已经用过了

for(size_t k=1;k<vNodeKeys.size();k++)

{

//更新最大响应值

if(vNodeKeys[k].response>maxResponse)

{

//更新pKP指向具有最大响应值的keypoints

pKP = &vNodeKeys[k];

maxResponse = vNodeKeys[k].response;

}

}

//将这个节点区域中的响应值最大的特征点加入最终结果容器

vResultKeys.push_back(*pKP);

}

//返回最终结果容器,其中保存有分裂出来的区域中,我们最感兴趣、响应值最大的特征点

return vResultKeys;

}

void ORBFeature::computeOrientation(const cv::Mat& image, std::vector<cv::KeyPoint>& keypoints, const std::vector<int>& umax)

{

// 遍历所有的特征点

for (std::vector<cv::KeyPoint>::iterator keypoint = keypoints.begin(),

keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)

{

// 调用IC_Angle 函数计算这个特征点的方向

keypoint->angle = IC_Angle(image, //特征点所在的图层的图像

keypoint->pt, //特征点在这张图像中的坐标

umax); //每个特征点所在图像区块的每行的边界 u_max 组成的vector

}

}

float ORBFeature::IC_Angle(const cv::Mat& image, cv::Point2f pt, const std::vector<int> & u_max)

{

//图像的矩,前者是按照图像块的y坐标加权,后者是按照图像块的x坐标加权

int m_01 = 0, m_10 = 0;

//获得这个特征点所在的图像块的中心点坐标灰度值的指针center

const uchar* center = &image.at<uchar> (cvRound(pt.y), cvRound(pt.x));

// Treat the center line differently, v=0

//这条v=0中心线的计算需要特殊对待

//后面是以中心行为对称轴,成对遍历行数,所以PATCH_SIZE必须是奇数

for (int u = -HALF_PATCH_SIZE; u <= HALF_PATCH_SIZE; ++u)

//注意这里的center下标u可以是负的!中心水平线上的像素按x坐标(也就是u坐标)加权

m_10 += u * center[u];

// Go line by line in the circular patch

//这里的step1表示这个图像一行包含的字节总数。参考[https://blog.csdn.net/qianqing13579/article/details/45318279]

int step = (int)image.step1();

//注意这里是以v=0中心线为对称轴,然后对称地每成对的两行之间进行遍历,这样处理加快了计算速度

for (int v = 1; v <= HALF_PATCH_SIZE; ++v)

{

// Proceed over the two lines

//本来m_01应该是一列一列地计算的,但是由于对称以及坐标x,y正负的原因,可以一次计算两行

int v_sum = 0;

// 获取某行像素横坐标的最大范围,注意这里的图像块是圆形的!

int d = u_max[v];

//在坐标范围内挨个像素遍历,实际是一次遍历2个

// 假设每次处理的两个点坐标,中心线下方为(x,y),中心线上方为(x,-y)

// 对于某次待处理的两个点:m_10 = Σ x*I(x,y) = x*I(x,y) + x*I(x,-y) = x*(I(x,y) + I(x,-y))

// 对于某次待处理的两个点:m_01 = Σ y*I(x,y) = y*I(x,y) - y*I(x,-y) = y*(I(x,y) - I(x,-y))

for (int u = -d; u <= d; ++u)

{

//得到需要进行加运算和减运算的像素灰度值

//val_plus:在中心线下方x=u时的的像素灰度值

//val_minus:在中心线上方x=u时的像素灰度值

int val_plus = center[u + v*step], val_minus = center[u - v*step];

//在v(y轴)上,2行所有像素灰度值之差

v_sum += (val_plus - val_minus);

//u轴(也就是x轴)方向上用u坐标加权和(u坐标也有正负符号),相当于同时计算两行

m_10 += u * (val_plus + val_minus);

}

//将这一行上的和按照y坐标加权

m_01 += v * v_sum;

}

//为了加快速度还使用了fastAtan2()函数,输出为[0,360)角度,精度为0.3°

return cv::fastAtan2((float)m_01, (float)m_10);

}

void ORBFeature::computeDescriptors(const cv::Mat& image, std::vector<cv::KeyPoint>& keypoints, cv::Mat& descriptors, const std::vector<cv::Point>& pattern)

{

//清空保存描述子信息的容器

descriptors = cv::Mat::zeros((int)keypoints.size(), 32, CV_8UC1);

//开始遍历特征点

for (size_t i = 0; i < keypoints.size(); i++)

//计算这个特征点的描述子

computeOrbDescriptor(keypoints[i], //要计算描述子的特征点

image, //以及其图像

&pattern[0], //随机点集的首地址

descriptors.ptr((int)i)); //提取出来的描述子的保存位置

}

void ORBFeature::computeOrbDescriptor(const cv::KeyPoint& kpt, const cv::Mat& img, const cv::Point* pattern, uchar* desc)

{

//得到特征点的角度,用弧度制表示。其中kpt.angle是角度制,范围为[0,360)度

float angle = (float)kpt.angle*M_PI/180;

//计算这个角度的余弦值和正弦值

float a = (float)cos(angle), b = (float)sin(angle);

//获得图像中心指针

const uchar* center = &img.at<uchar>(cvRound(kpt.pt.y), cvRound(kpt.pt.x));

//获得图像的每行的字节数

const int step = (int)img.step;

//原始的BRIEF描述子没有方向不变性,通过加入关键点的方向来计算描述子,称之为Steer BRIEF,具有较好旋转不变特性

//具体地,在计算的时候需要将这里选取的采样模板中点的x轴方向旋转到特征点的方向。

//获得采样点中某个idx所对应的点的灰度值,这里旋转前坐标为(x,y), 旋转后坐标(x',y'),他们的变换关系:

// x'= xcos(θ) - ysin(θ), y'= xsin(θ) + ycos(θ)

// 下面表示 y'* step + x'

#define GET_VALUE(idx) center[cvRound(pattern[idx].x*b + pattern[idx].y*a)*step + cvRound(pattern[idx].x*a - pattern[idx].y*b)]

//brief描述子由32*8位组成

//其中每一位是来自于两个像素点灰度的直接比较,所以每比较出8bit结果,需要16个随机点,这也就是为什么pattern需要+=16的原因

for (int i = 0; i < 32; ++i, pattern += 16)

{

int t0, //参与比较的第1个特征点的灰度值

t1, //参与比较的第2个特征点的灰度值

val; //描述子这个字节的比较结果,0或1

t0 = GET_VALUE(0); t1 = GET_VALUE(1);

val = t0 < t1; //描述子本字节的bit0

t0 = GET_VALUE(2); t1 = GET_VALUE(3);

val |= (t0 < t1) << 1; //描述子本字节的bit1

t0 = GET_VALUE(4); t1 = GET_VALUE(5);

val |= (t0 < t1) << 2; //描述子本字节的bit2

t0 = GET_VALUE(6); t1 = GET_VALUE(7);

val |= (t0 < t1) << 3; //描述子本字节的bit3

t0 = GET_VALUE(8); t1 = GET_VALUE(9);

val |= (t0 < t1) << 4; //描述子本字节的bit4

t0 = GET_VALUE(10); t1 = GET_VALUE(11);

val |= (t0 < t1) << 5; //描述子本字节的bit5

t0 = GET_VALUE(12); t1 = GET_VALUE(13);

val |= (t0 < t1) << 6; //描述子本字节的bit6

t0 = GET_VALUE(14); t1 = GET_VALUE(15);

val |= (t0 < t1) << 7; //描述子本字节的bit7

//保存当前比较的出来的描述子的这个字节

desc[i] = (uchar)val;

}

//为了避免和程序中的其他部分冲突在,在使用完成之后就取消这个宏定义

#undef GET_VALUE

}

}parameter.cpp

#include "ORB/parameter.hpp"

namespace ORB {

BaseParameter::BaseParameter(const std::string config_file)

{

file_path = config_file;

ReadPara();

}

void BaseParameter::ReadPara()

{

if (1)

{

// 使用OpenCV函数读取配置文件

cv::FileStorage file(file_path, cv::FileStorage::READ);

if (!file.isOpened())

{

LOG(ERROR) << "文件打开失败,请检查文件路径";

exit(-1);

}

fx = file["Camera.fx"];

fy = file["Camera.fy"];

cx = file["Camera.cx"];

cy = file["Camera.cy"];

k1 = file["Camera.k1"];

k2 = file["Camera.k2"];

p1 = file["Camera.p1"];

p2 = file["Camera.p2"];

file.release();

}

else {

// 使用yaml文件读取配置文件

YAML::Node camera_node = YAML::LoadFile(file_path);

fx = camera_node["Camera.fx"].as<double>();

fy = camera_node["Camera.fy"].as<double>();

cx = camera_node["Camera.cx"].as<double>();

cy = camera_node["Camera.cy"].as<double>();

k1 = camera_node["Camera.k1"].as<double>();

k2 = camera_node["Camera.k2"].as<double>();

p1 = camera_node["Camera.p1"].as<double>();

p2 = camera_node["Camera.p2"].as<double>();

}

}

Parameter::Parameter(const std::string config_file):BaseParameter(config_file)

{

ReadPara();

ReadOtherPara();

}

void Parameter::ReadOtherPara()

{

if (1)

{

cv::FileStorage file(file_path, cv::FileStorage::READ);

if (!file.isOpened())

{

LOG(ERROR) << "文件打开失败,请检查文件路径";

exit(-1);

}

nFeatures = file["Features"];

scalseFactor = file["scaleFactor"];

nLevels = file["nLevels"];

iniThFAST = file["iniThFAST"];

minThFAST = file["minThFAST"];

}

else {

YAML::Node node = YAML::LoadFile(file_path);

nFeatures = node["Features"].as<int>();

scalseFactor = node["scaleFactor"].as<double>();

nLevels = node["nLevels"].as<int>();

iniThFAST = node["iniThFAST"].as<int>();

minThFAST = node["minThFAST"].as<int>();

}

}

}

global_defination.h.in

#ifndef ORB_GLOBAL_DEFINATION_H_IN_

#define ORB_GLOBAL_DEFINATION_H_IN_

#include <string>

namespace ORB {

const std::string WORK_SPACE_PATH = "@WORK_SPACE_PATH@";

}

#endifcommon_include.h

#pragma once

#ifndef ORB_COMMON_INCLUDE_H

#define ORB_COMMON_INCLUDE_H

// std

#include <atomic>

#include <condition_variable>

#include <iostream>

#include <list>

#include <map>

#include <memory>

#include <mutex>

#include <set>

#include <string>

#include <thread>

#include <typeinfo>

#include <unordered_map>

#include <vector>

#include "yaml-cpp/yaml.h"

// define the commonly included file to avoid a long include list

#include <Eigen/Core>

#include <Eigen/Geometry>

// typedefs for eigen

// double matricies

typedef Eigen::Matrix<double, Eigen::Dynamic, Eigen::Dynamic> MatXX;

typedef Eigen::Matrix<double, 10, 10> Mat1010;

typedef Eigen::Matrix<double, 13, 13> Mat1313;

typedef Eigen::Matrix<double, 8, 10> Mat810;

typedef Eigen::Matrix<double, 8, 3> Mat83;

typedef Eigen::Matrix<double, 6, 6> Mat66;

typedef Eigen::Matrix<double, 5, 3> Mat53;

typedef Eigen::Matrix<double, 4, 3> Mat43;

typedef Eigen::Matrix<double, 4, 2> Mat42;

typedef Eigen::Matrix<double, 3, 3> Mat33;

typedef Eigen::Matrix<double, 2, 2> Mat22;

typedef Eigen::Matrix<double, 8, 8> Mat88;

typedef Eigen::Matrix<double, 7, 7> Mat77;

typedef Eigen::Matrix<double, 4, 9> Mat49;

typedef Eigen::Matrix<double, 8, 9> Mat89;

typedef Eigen::Matrix<double, 9, 4> Mat94;

typedef Eigen::Matrix<double, 9, 8> Mat98;

typedef Eigen::Matrix<double, 8, 1> Mat81;

typedef Eigen::Matrix<double, 1, 8> Mat18;

typedef Eigen::Matrix<double, 9, 1> Mat91;

typedef Eigen::Matrix<double, 1, 9> Mat19;

typedef Eigen::Matrix<double, 8, 4> Mat84;

typedef Eigen::Matrix<double, 4, 8> Mat48;

typedef Eigen::Matrix<double, 4, 4> Mat44;

typedef Eigen::Matrix<double, 3, 4> Mat34;

typedef Eigen::Matrix<double, 14, 14> Mat1414;

// float matricies

typedef Eigen::Matrix<float, 3, 3> Mat33f;

typedef Eigen::Matrix<float, 10, 3> Mat103f;

typedef Eigen::Matrix<float, 2, 2> Mat22f;

typedef Eigen::Matrix<float, 3, 1> Vec3f;

typedef Eigen::Matrix<float, 2, 1> Vec2f;

typedef Eigen::Matrix<float, 6, 1> Vec6f;

typedef Eigen::Matrix<float, 1, 8> Mat18f;

typedef Eigen::Matrix<float, 6, 6> Mat66f;

typedef Eigen::Matrix<float, 8, 8> Mat88f;

typedef Eigen::Matrix<float, 8, 4> Mat84f;

typedef Eigen::Matrix<float, 6, 6> Mat66f;

typedef Eigen::Matrix<float, 4, 4> Mat44f;

typedef Eigen::Matrix<float, 12, 12> Mat1212f;

typedef Eigen::Matrix<float, 13, 13> Mat1313f;

typedef Eigen::Matrix<float, 10, 10> Mat1010f;

typedef Eigen::Matrix<float, 9, 9> Mat99f;

typedef Eigen::Matrix<float, 4, 2> Mat42f;

typedef Eigen::Matrix<float, 6, 2> Mat62f;

typedef Eigen::Matrix<float, 1, 2> Mat12f;

typedef Eigen::Matrix<float, Eigen::Dynamic, Eigen::Dynamic> MatXXf;

typedef Eigen::Matrix<float, 14, 14> Mat1414f;

// double vectors

typedef Eigen::Matrix<double, 14, 1> Vec14;

typedef Eigen::Matrix<double, 13, 1> Vec13;

typedef Eigen::Matrix<double, 10, 1> Vec10;

typedef Eigen::Matrix<double, 9, 1> Vec9;

typedef Eigen::Matrix<double, 8, 1> Vec8;

typedef Eigen::Matrix<double, 7, 1> Vec7;

typedef Eigen::Matrix<double, 6, 1> Vec6;

typedef Eigen::Matrix<double, 5, 1> Vec5;

typedef Eigen::Matrix<double, 4, 1> Vec4;

typedef Eigen::Matrix<double, 3, 1> Vec3;

typedef Eigen::Matrix<double, 2, 1> Vec2;

typedef Eigen::Matrix<double, Eigen::Dynamic, 1> VecX;

// float vectors

typedef Eigen::Matrix<float, 12, 1> Vec12f;

typedef Eigen::Matrix<float, 8, 1> Vec8f;

typedef Eigen::Matrix<float, 10, 1> Vec10f;

typedef Eigen::Matrix<float, 4, 1> Vec4f;

typedef Eigen::Matrix<float, 12, 1> Vec12f;

typedef Eigen::Matrix<float, 13, 1> Vec13f;

typedef Eigen::Matrix<float, 9, 1> Vec9f;

typedef Eigen::Matrix<float, Eigen::Dynamic, 1> VecXf;

typedef Eigen::Matrix<float, 14, 1> Vec14f;

// for cv

#include <opencv2/core/core.hpp>

using cv::Mat;

// glog

#include <glog/logging.h>

#endif // ORB_COMMON_INCLUDE_HExtractorNode.hpp

#ifndef ORB_EXTRACTORNODE_HPP_

#define ORB_EXTRACTORNODE_HPP_

#include "ORB/common_include.h"

namespace ORB {

class ExtractorNode

{

public:

/** @brief 构造函数 */

ExtractorNode():bNoMore(false){}

/**

* @brief 在八叉树分配特征点的过程中,实现一个节点分裂为4个节点的操作

*

* @param[out] n1 分裂的节点1

* @param[out] n2 分裂的节点2

* @param[out] n3 分裂的节点3

* @param[out] n4 分裂的节点4

*/

void DivideNode(ExtractorNode &n1, ExtractorNode &n2, ExtractorNode &n3, ExtractorNode &n4);

///保存有当前节点的特征点

std::vector<cv::KeyPoint> vKeys;

///当前节点所对应的图像坐标边界

cv::Point2i UL, UR, BL, BR;

//存储提取器节点的列表(其实就是双向链表)的一个迭代器,可以参考[http://www.runoob.com/cplusplus/cpp-overloading.html]

//这个迭代器提供了访问总节点列表的方式,需要结合cpp文件进行分析

std::list<ExtractorNode>::iterator lit;

///如果节点中只有一个特征点的话,说明这个节点不能够再进行分裂了,这个标志置位

//这个节点中如果没有特征点的话,这个节点就直接被删除了

bool bNoMore;

};

}

#endifORBFeature.hpp

#ifndef ORB_ORBFEATURE_HPP_

#define ORB_ORBFEATURE_HPP_

#include "ORB/parameter.hpp"

#include <opencv2/opencv.hpp>

#include "ORB/ExtractorNode.hpp"

namespace ORB { // 定义了一个名为 ORB 的命名空间

class ORBFeature{ // 定义了一个名为 ORBFeature 的类

public:

// 构造函数,接受图像路径和配置文件路径作为参数

ORBFeature(const std::string& image_path, const std::string& config_path);

//1、 提取ORB特征点,使用opencv函数实现

void ExtractORB();

//2、 图像去畸变

void UndistortImage();

//3、 ORB-SLAM2源码 ExtractORB()

void ExtractORB(std::vector<cv::KeyPoint>& _keypoints, cv::Mat& _descriptors);

private:

// 设置参数

void setPara();

// 构建图像金字塔

void ComputePyramid();

// 使用四叉树的方式计算每层图像的特征点并进行分配

void ComputeKeyPointsQuadtree(std::vector<std::vector<cv::KeyPoint>>& allKeypoints);

// 计算描述子

void computeDescriptors(const cv::Mat& image, std::vector<cv::KeyPoint>& keypoints, cv::Mat& descriptors, const std::vector<cv::Point>& pattern);

private:

// 计算单个关键点的ORB描述子

void computeOrbDescriptor(const cv::KeyPoint& kpt, const cv::Mat& img, const cv::Point* pattern, uchar* desc);

// 利用四叉树进行特征点的分配

std::vector<cv::KeyPoint> DistributeQuadtree(const std::vector<cv::KeyPoint>& vToDistributeKeys, const int &minX,

const int &maxX, const int &minY, const int &maxY, const int &N, const int &level);

// 计算关键点的方向

void computeOrientation(const cv::Mat& image, std::vector<cv::KeyPoint>& keypoints, const std::vector<int>& umax);

private:

// 计算关键点的角度

float IC_Angle(const cv::Mat& image, cv::Point2f pt, const std::vector<int> & u_max);

private:

// 相机参数

std::shared_ptr<Parameter> camera_ptr;

// 输入的图像

cv::Mat image;

// 尺度因子和逆尺度因子

std::vector<double> mvScaleFactor, mvInvScaleFactor;

// 尺度因子的平方和逆平方

std::vector<double> mvLevelSigma2, mvInvLevelSigma2;

// 图像金字塔

std::vector<cv::Mat> mvImagePyramid;

// 每层的特征点数

std::vector<int> mnFeaturesPerLevel;

// 最大的角度值

std::vector<int> umax;

// 半径大小

static int HALF_PATCH_SIZE;

// 描述子模式

std::vector<cv::Point> pattern;

// 边缘阈值

static int EDGE_THRESHOLD;

// PATCH的大小

static int PATCH_SIZE;

};

}

#endifparameter.hpp

#ifndef ORB_PARAMETER_HPP_

#define ORB_PARAMETER_HPP_

#include "common_include.h"

namespace ORB {

class BaseParameter{

public:

BaseParameter(const std::string config_file);

void ReadPara();

public:

std::string file_path;

double fx, fy, cx, cy; // 相机内参

double k1, k2, p1, p2; // 相机畸变参数

};

class Parameter: public BaseParameter {

public:

Parameter(const std::string config_file);

void ReadOtherPara();

public:

int nFeatures;

double scalseFactor;

int nLevels;

int iniThFAST;

int minThFAST;

};

}

#endif

849

849

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?