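Apache Beam 2.0.0, the first stable release, changed a lot of the API compared with earlier versions. Reading files from HDFS is one example: the classes previously used for this no longer apply, and a plain `TextIO` read now works for HDFS paths, provided the HDFS connection has been configured in the pipeline options. The example below was written against Apache Beam 2.1.0. If you spot a problem or have an improvement, leave me a comment.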
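One way to supply that HDFS connection is the `--hdfsConfiguration` pipeline option, which takes a JSON array of Hadoop property maps. The sketch below only shows the shape of that argument. `HdfsConfigurationArg` and its methods are hypothetical helpers written for this illustration, not part of Beam, and `fs.defaultFS` is the current Hadoop name for the property that the example sets as `fs.default.name`.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical helper, not part of Beam: formats Hadoop properties as the
// JSON array expected by the --hdfsConfiguration pipeline option.
class HdfsConfigurationArg {

    // Escape backslashes and double quotes so the result stays valid JSON.
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    // Wraps a single property map in a one-element JSON array.
    static String build(Map<String, String> properties) {
        StringJoiner entries = new StringJoiner(",", "{", "}");
        for (Map.Entry<String, String> e : properties.entrySet()) {
            entries.add(quote(e.getKey()) + ":" + quote(e.getValue()));
        }
        return "--hdfsConfiguration=[" + entries + "]";
    }

    public static void main(String[] args) {
        Map<String, String> props = new LinkedHashMap<>();
        props.put("fs.defaultFS", "hdfs://172.17.168.96:9000");
        // prints --hdfsConfiguration=[{"fs.defaultFS":"hdfs://172.17.168.96:9000"}]
        System.out.println(build(props));
    }
}
```

The resulting string can be handed to `PipelineOptionsFactory.fromArgs(...)` and converted with `.as(HadoopFileSystemOptions.class)`, which is the variant the full example keeps commented out in its `main` method.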

```java
package com.fzu.test.kafka_beam_test;

import java.util.ArrayList;
import java.util.List;

import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.io.TextIO;
import org.apache.beam.sdk.io.hdfs.HadoopFileSystemOptions;
import org.apache.beam.sdk.metrics.Counter;
import org.apache.beam.sdk.metrics.Metrics;
import org.apache.beam.sdk.options.PipelineOptionsFactory;
import org.apache.beam.sdk.transforms.Count;
import org.apache.beam.sdk.transforms.DoFn;
import org.apache.beam.sdk.transforms.MapElements;
import org.apache.beam.sdk.transforms.PTransform;
import org.apache.beam.sdk.transforms.ParDo;
import org.apache.beam.sdk.transforms.SimpleFunction;
import org.apache.beam.sdk.values.KV;
import org.apache.beam.sdk.values.PCollection;
import org.apache.hadoop.conf.Configuration;

import com.fzu.test.beam.examples.ExampleUtils;

public class HdfsReaderTest {

    /** Prints every element to the console; emits nothing downstream. */
    private static class PrintFn<T> extends DoFn<T, T> {
        @ProcessElement
        public void processElement(ProcessContext c) throws Exception {
            System.out.println(c.element().toString());
        }
    }

    /** Splits each line into words and counts the empty lines it sees. */
    static class ExtractWordsFn extends DoFn<String, String> {
        private final Counter emptyLines = Metrics.counter(ExtractWordsFn.class, "emptyLines");

        @ProcessElement
        public void processElement(ProcessContext c) {
            if (c.element().trim().isEmpty()) {
                emptyLines.inc();
            }

            // Split the line into words.
            String[] words = c.element().split(ExampleUtils.TOKENIZER_PATTERN);

            // Output each word encountered into the output PCollection.
            for (String word : words) {
                if (!word.isEmpty()) {
                    c.output(word);
                }
            }
        }
    }

    /**
     * A SimpleFunction that converts a Word and Count into a printable string.
     */
    public static class FormatAsTextFn extends SimpleFunction<KV<String, Long>, String> {
        @Override
        public String apply(KV<String, Long> input) {
            return input.getKey() + ": " + input.getValue();
        }
    }

    /** Converts lines of text into per-word counts. */
    public static class CountWords extends PTransform<PCollection<String>, PCollection<KV<String, Long>>> {
        @Override
        public PCollection<KV<String, Long>> expand(PCollection<String> lines) {
            // Convert lines of text into individual words.
            PCollection<String> words = lines.apply(ParDo.of(new ExtractWordsFn()));

            // Count the number of times each word occurs.
            return words.apply(Count.<String>perElement());
        }
    }

    public static void main(String[] args) {
        // Option 1: pass the HDFS configuration as a pipeline argument.
        // HadoopFileSystemOptions options = PipelineOptionsFactory
        //     .fromArgs("--hdfsConfiguration=[{\"fs.default.name\": \"hdfs://172.17.168.96:9000\"}]")
        //     .as(HadoopFileSystemOptions.class);

        // Option 2: build the Hadoop Configuration in code.
        HadoopFileSystemOptions options = PipelineOptionsFactory.create().as(HadoopFileSystemOptions.class);
        Configuration conf = new Configuration();
        // fs.default.name is the deprecated alias of fs.defaultFS in Hadoop 2.x.
        conf.set("fs.default.name", "hdfs://172.17.168.96:9000");
        conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");
        List<Configuration> list = new ArrayList<Configuration>();
        list.add(conf);
        options.setHdfsConfiguration(list);

        Pipeline p = Pipeline.create(options);
        System.out.println(options);

        // With the HDFS configuration in place, TextIO accepts an hdfs:// path directly.
        p.apply("ReadLines", TextIO.read().from("hdfs://172.17.168.96:9000/user/hadoop/beamtest1/test.txt"))
            .apply(new CountWords())
            .apply(MapElements.via(new FormatAsTextFn()))
            .apply(ParDo.of(new PrintFn<>()));

        p.run().waitUntilFinish();
    }
}
```
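To make the transform chain easier to follow, here is a rough plain-Java sketch of what `ExtractWordsFn`, `Count.perElement()` and `FormatAsTextFn` compute together, with no Beam involved. The class `WordCountSketch`, the sample lines, and the tokenizer regex are assumptions for illustration: the regex is assumed to match the `TOKENIZER_PATTERN` of the Beam examples' `ExampleUtils`, and the copy used in this project may differ.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java stand-in for the pipeline's transform chain; no Beam involved.
class WordCountSketch {

    // Assumption: split on runs of non-letter characters.
    static final String TOKENIZER_PATTERN = "[^\\p{L}]+";

    // Mirrors ExtractWordsFn + Count.perElement(): tokenize, drop empties, count.
    static Map<String, Long> countWords(List<String> lines) {
        // TreeMap keeps keys sorted so the sketch prints deterministically.
        Map<String, Long> counts = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.split(TOKENIZER_PATTERN)) {
                if (!word.isEmpty()) {
                    counts.merge(word, 1L, Long::sum);
                }
            }
        }
        return counts;
    }

    // Mirrors FormatAsTextFn: one "word: count" string per entry.
    static List<String> format(Map<String, Long> counts) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Long> e : counts.entrySet()) {
            out.add(e.getKey() + ": " + e.getValue());
        }
        return out;
    }

    public static void main(String[] args) {
        // Hypothetical sample lines, not the contents of test.txt.
        List<String> lines = Arrays.asList("hello beam", "", "hello hdfs, hello");
        // prints "beam: 1", "hdfs: 1", "hello: 3", one per line
        format(countWords(lines)).forEach(System.out::println);
    }
}
```

Unlike this sketch, a Beam runner gives no ordering guarantee, so the lines printed by `PrintFn` can appear in any order.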

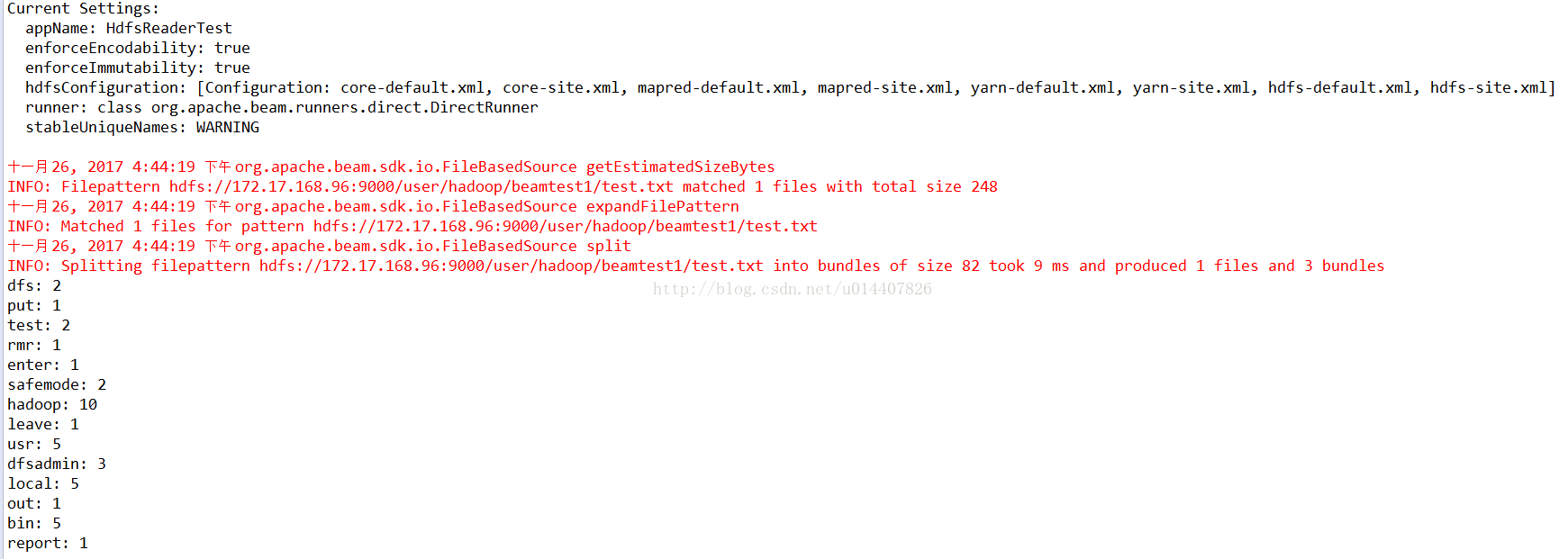

Console output:

