Setting up the Elasticsearch environment

The environment is set up with docker-compose. The configuration is as follows:

version: '3'

# Bridge network "es" so the containers can reach each other
networks:
  es:

services:
  elasticsearch:
    image: registry.cn-hangzhou.aliyuncs.com/zhengqing/elasticsearch:7.14.1 # original image: `elasticsearch:7.14.1`
    container_name: elasticsearch # the container is named 'elasticsearch'
    restart: unless-stopped # always restart the container when it exits, except for containers that were already stopped when the Docker daemon started
    volumes: # bind mounts: map host directories to container directories
      - "./elasticsearch/data:/usr/share/elasticsearch/data"
      - "./elasticsearch/logs:/usr/share/elasticsearch/logs"
      - "./elasticsearch/config/elasticsearch.yml:/usr/share/elasticsearch/config/elasticsearch.yml"
      # - "./elasticsearch/config/jvm.options:/usr/share/elasticsearch/config/jvm.options"
      - "./elasticsearch/plugins/ik:/usr/share/elasticsearch/plugins/ik" # IK Chinese analysis plugin
    environment: # environment variables, equivalent to -e in docker run
      TZ: Asia/Shanghai
      LANG: en_US.UTF-8
      TAKE_FILE_OWNERSHIP: "true" # file ownership/permissions for the mounted directories
      discovery.type: single-node
      ES_JAVA_OPTS: "-Xmx512m -Xms512m"
      #ELASTIC_PASSWORD: "123456" # password of the elastic account
    ports:
      - "9200:9200"
      - "9300:9300"
    networks:
      - es

  kibana:
    image: registry.cn-hangzhou.aliyuncs.com/zhengqing/kibana:7.14.1 # original image: `kibana:7.14.1`
    container_name: kibana
    restart: unless-stopped
    volumes:
      - ./elasticsearch/kibana/config/kibana.yml:/usr/share/kibana/config/kibana.yml
    ports:
      - "5601:5601"
    depends_on:
      - elasticsearch
    links:
      - elasticsearch
    networks:
      - es

Deployment

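# Start the stack
docker-compose -f docker-compose-elasticsearch.yml -p elasticsearch up -d

# After startup, grant read/write/execute permissions on everything under ./elasticsearch (only if needed)
#chmod -R 777 ./elasticsearch

Elasticsearch address: ip:9200 (default account/password: elastic/123456)

Kibana address: ip:5601/app/dev_tools#/console (default account/password: elastic/123456)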

Setting the Elasticsearch password

# Enter the container
docker exec -it elasticsearch /bin/bash

# Set passwords: generate random ones
# elasticsearch-setup-passwords auto

# Set passwords: enter them manually
elasticsearch-setup-passwords interactive

# Verify access
curl 127.0.0.1:9200 -u elastic:123456
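The `-u elastic:123456` flag makes curl send HTTP Basic authentication. As a minimal sketch of what that header looks like, assuming the example credentials from this guide (the class and method names are illustrative and not part of the original project):

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper: builds the Authorization header value that `curl -u user:password` sends.
class BasicAuthHeader {

    static String of(String user, String password) {
        String credentials = user + ":" + password;
        String encoded = Base64.getEncoder()
                .encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
        return "Basic " + encoded;
    }

    public static void main(String[] args) {
        // Credentials taken from the example in this guide; replace them in a real deployment.
        System.out.println("Authorization: " + of("elastic", "123456"));
    }
}
```

Any HTTP client that sets this header on its requests authenticates the same way curl does here.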

1. Dependencies

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<parent>

<artifactId>springboot-demo</artifactId>

<groupId>com.et</groupId>

<version>1.0-SNAPSHOT</version>

</parent>

<modelVersion>4.0.0</modelVersion>

<artifactId>elasticsearch</artifactId>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-elasticsearch</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-webflux</artifactId>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>${fastjson.version}</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>io.projectreactor</groupId>

<artifactId>reactor-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

</project>

2. Configuration file and application class

# cluster.name from elasticsearch.yml
spring.data.elasticsearch.cluster-name=docker-cluster

# Elasticsearch node addresses (transport port 9300); separate multiple nodes with ","
spring.data.elasticsearch.cluster-nodes=127.0.0.1:9300

#spring.data.elasticsearch.repositories.enabled=true

#spring.data.elasticsearch.username=elastic

#spring.data.elasticsearch.password=123456

#spring.data.elasticsearch.network.host=0.0.0.0
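The `cluster-nodes` value is a comma-separated list of `host:port` entries. A rough sketch of how such a value breaks down is shown here; the helper is purely illustrative and is not how Spring parses the property internally:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: splits a "host:port,host:port" value into host/port pairs.
class ClusterNodes {

    static List<String[]> parse(String value) {
        List<String[]> nodes = new ArrayList<>();
        for (String entry : value.split(",")) {
            String trimmed = entry.trim();
            if (trimmed.isEmpty()) {
                continue; // tolerate empty entries such as a trailing comma
            }
            int colon = trimmed.lastIndexOf(':');
            if (colon < 0) {
                throw new IllegalArgumentException("Expected host:port but got: " + trimmed);
            }
            nodes.add(new String[] {trimmed.substring(0, colon), trimmed.substring(colon + 1)});
        }
        return nodes;
    }

    public static void main(String[] args) {
        // The second address is made up for the demonstration.
        for (String[] node : parse("127.0.0.1:9300, 127.0.0.2:9300")) {
            System.out.println("host=" + node[0] + " port=" + node[1]);
        }
    }
}
```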

@SpringBootApplication

public class EssearchApplication {

public static void main(String[] args) {

SpringApplication.run(EssearchApplication.class, args);

}

}

3. Document entity class

@Document(indexName = "orders", type = "product")
@Mapping(mappingPath = "productIndex.json") // load the mapping from a file; works around the IK analyzer not taking effect

public class ProductDocument implements Serializable{

@Id

private String id;

//@Field(analyzer = "ik_max_word",searchAnalyzer = "ik_max_word")

private String productName;

//@Field(analyzer = "ik_max_word",searchAnalyzer = "ik_max_word")

private String productDesc;

private Date createTime;

private Date updateTime;

public String getId() {

return id;

}

public void setId(String id) {

this.id = id;

}

public String getProductName() {

return productName;

}

public void setProductName(String productName) {

this.productName = productName;

}

public String getProductDesc() {

return productDesc;

}

public void setProductDesc(String productDesc) {

this.productDesc = productDesc;

}

public Date getCreateTime() {

return createTime;

}

public void setCreateTime(Date createTime) {

this.createTime = createTime;

}

public Date getUpdateTime() {

return updateTime;

}

public void setUpdateTime(Date updateTime) {

this.updateTime = updateTime;

}

}

4. Service interface and implementation

public interface EsSearchService extends BaseSearchService<ProductDocument> {

    // Save documents
    void save(ProductDocument... productDocuments);

    // Delete a document by id
    void delete(String id);

    // Clear the index
    void deleteAll();

    // Look up a document by id
    ProductDocument getById(String id);

    // Fetch all documents
    List<ProductDocument> getAll();
}

@Service

public class EsSearchServiceImpl extends BaseSearchServiceImpl<ProductDocument> implements EsSearchService {

private Logger log = LoggerFactory.getLogger(getClass());

@Resource

private ElasticsearchTemplate elasticsearchTemplate;

@Resource

private ProductDocumentRepository productDocumentRepository;

@Override

public void save(ProductDocument ... productDocuments) {

elasticsearchTemplate.putMapping(ProductDocument.class);

if(productDocuments.length > 0){

/*Arrays.asList(productDocuments).parallelStream()

.map(productDocumentRepository::save)

.forEach(productDocument -> log.info("【保存数据】:{}", JSON.toJSONString(productDocument)));*/

log.info("【保存索引】:{}",JSON.toJSONString(productDocumentRepository.saveAll(Arrays.asList(productDocuments))));

}

}

@Override

public void delete(String id) {

productDocumentRepository.deleteById(id);

}

@Override

public void deleteAll() {

productDocumentRepository.deleteAll();

}

@Override

public ProductDocument getById(String id) {

return productDocumentRepository.findById(id).orElse(null); // null when no document has this id, instead of a NoSuchElementException

}

@Override

public List<ProductDocument> getAll() {

List<ProductDocument> list = new ArrayList<>();

productDocumentRepository.findAll().forEach(list::add);

return list;

}

}

5. Repository

@Component

public interface ProductDocumentRepository extends ElasticsearchRepository<ProductDocument,String> {

}

productIndex.json

{

"properties": {

"createTime": {

"type": "long"

},

"productDesc": {

"type": "text",

"analyzer": "ik_max_word",

"search_analyzer": "ik_max_word"

},

"productName": {

"type": "text",

"analyzer": "ik_max_word",

"search_analyzer": "ik_max_word"

},

"updateTime": {

"type": "long"

}

}

}

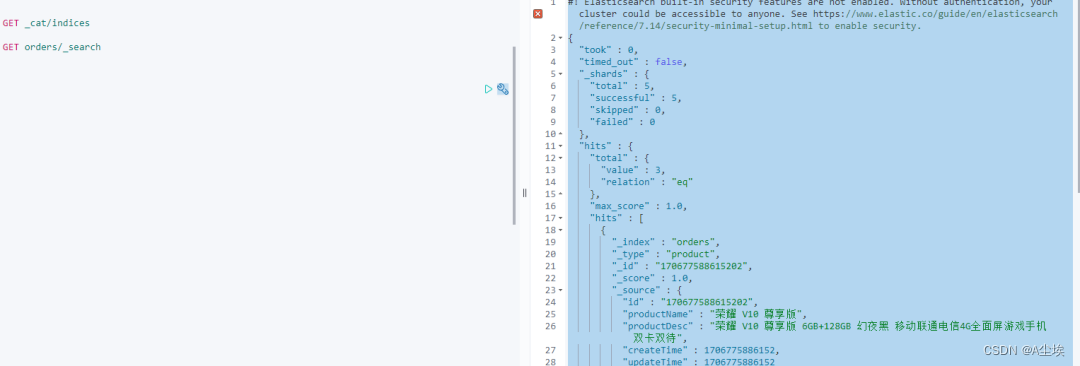

6. Tests

@RunWith(SpringRunner.class)

@SpringBootTest

public class EssearchApplicationTests {

private Logger log = LoggerFactory.getLogger(getClass());

@Autowired

private EsSearchService esSearchService;

@Test

public void save() {

log.info("【创建索引前的数据条数】:{}",esSearchService.getAll().size());

ProductDocument productDocument = ProductDocumentBuilder.create()

.addId(System.currentTimeMillis() + "01")

.addProductName("无印良品 MUJI 基础润肤化妆水")

.addProductDesc("无印良品 MUJI 基础润肤化妆水 高保湿型 200ml")

.addCreateTime(new Date()).addUpdateTime(new Date())

.builder();

ProductDocument productDocument1 = ProductDocumentBuilder.create()

.addId(System.currentTimeMillis() + "02")

.addProductName("荣耀 V10 尊享版")

.addProductDesc("荣耀 V10 尊享版 6GB+128GB 幻夜黑 移动联通电信4G全面屏游戏手机 双卡双待")

.addCreateTime(new Date()).addUpdateTime(new Date())

.builder();

ProductDocument productDocument2 = ProductDocumentBuilder.create()

.addId(System.currentTimeMillis() + "03")

.addProductName("资生堂(SHISEIDO) 尿素红罐护手霜")

.addProductDesc("日本进口 资生堂(SHISEIDO) 尿素红罐护手霜 100g/罐 男女通用 深层滋养 改善粗糙")

.addCreateTime(new Date()).addUpdateTime(new Date())

.builder();

esSearchService.save(productDocument,productDocument1,productDocument2);

log.info("【创建索引ID】:{},{},{}",productDocument.getId(),productDocument1.getId(),productDocument2.getId());

log.info("【创建索引后的数据条数】:{}",esSearchService.getAll().size());

}

@Test

public void getAll(){

esSearchService.getAll().parallelStream()

.map(JSON::toJSONString)

.forEach(System.out::println);

}

@Test

public void deleteAll() {

esSearchService.deleteAll();

}

@Test

public void getById() {

log.info("【根据ID查询内容】:{}", JSON.toJSONString(esSearchService.getById("154470178213401")));

}

@Test

public void query() {

log.info("【根据关键字搜索内容】:{}", JSON.toJSONString(esSearchService.query("无印良品荣耀",ProductDocument.class)));

}

@Test

public void queryHit() {

String keyword = "联通尿素";

String indexName = "orders";

List<Map<String,Object>> searchHits = esSearchService.queryHit(keyword,indexName,"productName","productDesc");

log.info("【根据关键字搜索内容,命中部分高亮,返回内容】:{}", JSON.toJSONString(searchHits));

//[{"highlight":{"productDesc":"<span style='color:red'>无印良品</span> MUJI 基础润肤化妆水 高保湿型 200ml","productName":"<span style='color:red'>无印良品</span> MUJI 基础润肤化妆水"},"source":{"productDesc":"无印良品 MUJI 基础润肤化妆水 高保湿型 200ml","createTime":1544755966204,"updateTime":1544755966204,"id":"154475596620401","productName":"无印良品 MUJI 基础润肤化妆水"}},{"highlight":{"productDesc":"<span style='color:red'>荣耀</span> V10 尊享版 6GB+128GB 幻夜黑 移动联通电信4G全面屏游戏手机 双卡双待","productName":"<span style='color:red'>荣耀</span> V10 尊享版"},"source":{"productDesc":"荣耀 V10 尊享版 6GB+128GB 幻夜黑 移动联通电信4G全面屏游戏手机 双卡双待","createTime":1544755966204,"updateTime":1544755966204,"id":"154475596620402","productName":"荣耀 V10 尊享版"}}]

}

@Test

public void queryHitByPage() {

String keyword = "联通尿素";

String indexName = "orders";

Page<Map<String,Object>> searchHits = esSearchService.queryHitByPage(1,1,keyword,indexName,"productName","productDesc");

log.info("【分页查询,根据关键字搜索内容,命中部分高亮,返回内容】:{}", JSON.toJSONString(searchHits));

//[{"highlight":{"productDesc":"<span style='color:red'>无印良品</span> MUJI 基础润肤化妆水 高保湿型 200ml","productName":"<span style='color:red'>无印良品</span> MUJI 基础润肤化妆水"},"source":{"productDesc":"无印良品 MUJI 基础润肤化妆水 高保湿型 200ml","createTime":1544755966204,"updateTime":1544755966204,"id":"154475596620401","productName":"无印良品 MUJI 基础润肤化妆水"}},{"highlight":{"productDesc":"<span style='color:red'>荣耀</span> V10 尊享版 6GB+128GB 幻夜黑 移动联通电信4G全面屏游戏手机 双卡双待","productName":"<span style='color:red'>荣耀</span> V10 尊享版"},"source":{"productDesc":"荣耀 V10 尊享版 6GB+128GB 幻夜黑 移动联通电信4G全面屏游戏手机 双卡双待","createTime":1544755966204,"updateTime":1544755966204,"id":"154475596620402","productName":"荣耀 V10 尊享版"}}]

}

@Test

public void deleteIndex() {

log.info("【删除索引库】");

esSearchService.deleteIndex("orders");

}

}

Spring Boot + Elasticsearch: document content extraction, highlighted tokenized search, and full-text retrieval

File content recognition

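Step 1: to have Elasticsearch recognize the text inside attachments, first install a plugin: the Ingest Attachment Processor Plugin.

This plugin extracts text content from files. It builds on Elasticsearch's ingest node feature and provides the key preprocessor, `attachment`. Other plugins, such as OCR plugins, exist for related tasks.

① Run the plugin installation from the `bin` directory of the Elasticsearch installation, typically `elasticsearch-plugin install ingest-attachment`, and restart Elasticsearch afterwards. (Elasticsearch was installed with Docker here, so enter the Elasticsearch container and install the plugin from its `bin` directory.)

② Create a text-extraction pipeline. It is typically created with `PUT _ingest/pipeline/attachment`; the Java code later in this article refers to the pipeline by the name `attachment`.

The pipeline converts uploaded attachments into text content. Word, PDF, and txt files are supported; Excel was not tried but should work as well.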

{

"description": "Extract attachment information",

"processors": [

{

"attachment": {

"field": "content",

"ignore_missing": true

}

},

{

"remove": {

"field": "content"

}

}

]

}
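The pipeline definition above can also be registered from Java over the REST API. The following is a minimal sketch that only builds the HTTP request, assuming Java 11+ (`java.net.http`), an Elasticsearch instance at `http://127.0.0.1:9200`, and the pipeline name `attachment` used by the indexing code later in this article. The class name is hypothetical, and authentication is left out:

```java
import java.net.URI;
import java.net.http.HttpRequest;

// Sketch: builds (but does not send) the request that would create the ingest pipeline.
class AttachmentPipelineRequest {

    // Same pipeline body as the JSON shown above.
    static final String PIPELINE_JSON =
            "{"
            + "\"description\":\"Extract attachment information\","
            + "\"processors\":["
            + "{\"attachment\":{\"field\":\"content\",\"ignore_missing\":true}},"
            + "{\"remove\":{\"field\":\"content\"}}"
            + "]}";

    // baseUrl is an assumption, e.g. http://127.0.0.1:9200.
    static HttpRequest build(String baseUrl, String pipelineName) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/_ingest/pipeline/" + pipelineName))
                .header("Content-Type", "application/json")
                .PUT(HttpRequest.BodyPublishers.ofString(PIPELINE_JSON))
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = build("http://127.0.0.1:9200", "attachment");
        System.out.println(request.method() + " " + request.uri());
        // To send it: HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString());
    }
}
```

The `remove` processor drops the Base64 `content` field after extraction, so only the extracted text under `attachment` is stored.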

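③ Define the index that stores the content

- mappings: the field formats of the stored documents

- settings: the index configuration; here it defines a custom analyzer built on the jieba tokenizer

[Note: content search runs against the attachment.content field, which must be analyzed with a tokenizer. Without one, searches will not find the content.]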

{

"mappings": {

"properties": {

"id":{

"type": "keyword"

},

"fileName":{

"type": "text",

"analyzer": "my_ana"

},

"contentType":{

"type": "text",

"analyzer": "my_ana"

},

"fileUrl":{

"type": "text"

},

"attachment": {

"properties": {

"content":{

"type": "text",

"analyzer": "my_ana"

}

}

}

}

},

"settings": {

"analysis": {

"filter": {

"jieba_stop": {

"type": "stop",

"stopwords_path": "stopword/stopwords.txt"

},

"jieba_synonym": {

"type": "synonym",

"synonyms_path": "synonym/synonyms.txt"

}

},

"analyzer": {

"my_ana": {

"tokenizer": "jieba_index",

"filter": [

"lowercase",

"jieba_stop",

"jieba_synonym"

]

}

}

}

}

}

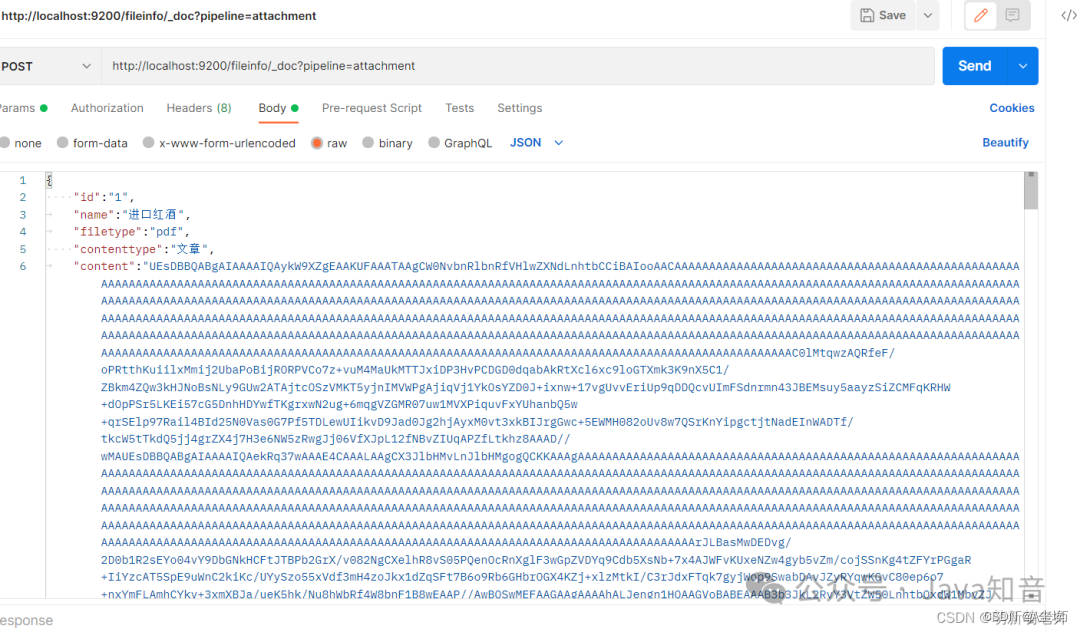

④ Test

The attachment must be converted to Base64 before it is sent for testing.

Online file-to-Base64 converter: https://www.zhangxinxu.com/sp/base64.html

{

"id":"1",

"name":"进口红酒",

"filetype":"pdf",

"contenttype":"文章",

"content":"文章内容"

}
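Instead of an online converter, the Base64 value for the `content` field can be produced locally. A minimal sketch using only the JDK follows; the class name is illustrative, and the temporary file stands in for a real document:

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Base64;

// Sketch: Base64-encodes a file so it can be placed in the "content" field read by the attachment processor.
class AttachmentEncoder {

    static String encode(byte[] fileBytes) {
        return Base64.getEncoder().encodeToString(fileBytes);
    }

    static String encodeFile(Path path) throws IOException {
        return encode(Files.readAllBytes(path));
    }

    public static void main(String[] args) throws IOException {
        // A temporary file keeps the example runnable anywhere; pass a real document path in practice.
        Path sample = Files.createTempFile("attachment-demo", ".txt");
        Files.write(sample, "hello".getBytes(StandardCharsets.UTF_8));
        System.out.println(encodeFile(sample)); // prints aGVsbG8=
        Files.delete(sample);
    }
}
```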

Query the files that were just uploaded:

{

"took": 861,

"timed_out": false,

"_shards": {

"total": 1,

"successful": 1,

"skipped": 0,

"failed": 0

},

"hits": {

"total": {

"value": 5,

"relation": "eq"

},

"max_score": 1.0,

"hits": [

{

"_index": "fileinfo",

"_type": "_doc",

"_id": "lkPEgYIBz3NlBKQzXYX9",

"_score": 1.0,

"_source": {

"fileName": "测试_20220809164145A002.docx",

"updateTime": 1660034506000,

"attachment": {

"date": "2022-08-09T01:38:00Z",

"content_type": "application/vnd.openxmlformats-officedocument.wordprocessingml.document",

"author": "DELL",

"language": "lt",

"content": "内容",

"content_length": 2572

},

"createTime": 1660034506000,

"fileUrl": "http://localhost:8092/fileInfo/profile/upload/fileInfo/2022/08/09/测试_20220809164145A002.docx",

"id": 1306333192,

"contentType": "文章",

"fileType": "docx"

}

},

{

"_index": "fileinfo",

"_type": "_doc",

"_id": "mUPHgYIBz3NlBKQzwIVW",

"_score": 1.0,

"_source": {

"fileName": "测试_20220809164527A001.docx",

"updateTime": 1660034728000,

"attachment": {

"date": "2022-08-09T01:38:00Z",

"content_type": "application/vnd.openxmlformats-officedocument.wordprocessingml.document",

"author": "DELL",

"language": "lt",

"content": "内容",

"content_length": 2572

},

"createTime": 1660034728000,

"fileUrl": "http://localhost:8092/fileInfo/profile/upload/fileInfo/2022/08/09/测试_20220809164527A001.docx",

"id": 1306333193,

"contentType": "文章",

"fileType": "docx"

}

},

{

"_index": "fileinfo",

"_type": "_doc",

"_id": "JDqshoIBbkTNu1UgkzFK",

"_score": 1.0,

"_source": {

"fileName": "txt测试_20220810153351A001.txt",

"updateTime": 1660116831000,

"attachment": {

"content_type": "text/plain; charset=UTF-8",

"language": "lt",

"content": "内容",

"content_length": 804

},

"createTime": 1660116831000,

"fileUrl": "http://localhost:8092/fileInfo/profile/upload/fileInfo/2022/08/10/txt测试_20220810153351A001.txt",

"id": 1306333194,

"contentType": "告示",

"fileType": "txt"

}

}

]

}

}

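After calling the upload API, the text content has been extracted into Elasticsearch, so it can now be searched with tokenization and shown with highlighting. The overall flow is:

- Upload the file and store the attachment information and upload address in the database;

- Call Elasticsearch to extract the text content and store it in the corresponding index;

- Provide full-text search APIs for the mini program: keyword suggestions based on file names, fuzzy full-text matching on file names and content, and highlighting of the matched tokens.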

# Data source configuration
spring:
  # Service module
  devtools:
    restart:
      # Hot-reload switch
      enabled: true
  # Search engine
  elasticsearch:
    rest:
      url: 127.0.0.1
      uris: 127.0.0.1:9200
      connection-timeout: 1000
      read-timeout: 3000
      username: elastic
      password: 123456

ElasticsearchConfig (connection configuration)

@Configuration

public class ElasticsearchConfig {

@Value("${spring.elasticsearch.rest.url}")

private String edUrl;

@Value("${spring.elasticsearch.rest.username}")

private String userName;

@Value("${spring.elasticsearch.rest.password}")

private String password;

@Bean

public RestHighLevelClient restHighLevelClient() {

// Set the username and password for the connection

final BasicCredentialsProvider credentialsProvider = new BasicCredentialsProvider();

credentialsProvider.setCredentials(AuthScope.ANY, new UsernamePasswordCredentials(userName, password));

RestHighLevelClient client = new RestHighLevelClient(RestClient.builder(

new HttpHost(edUrl, 9200,"http"))

.setHttpClientConfigCallback(httpClientBuilder -> {

httpClientBuilder.disableAuthCaching();

// Keep pooled connections alive; without this, the first request after Elasticsearch had been idle for a while used to time out

httpClientBuilder.setKeepAliveStrategy(((response, context) -> Duration.ofMinutes(5).toMillis()));

return httpClientBuilder.setDefaultCredentialsProvider(credentialsProvider);

})

);

return client;

}

}

Uploading a file, saving its information, and extracting its content into Elasticsearch

Entity class FileInfo

@Setter
@Getter
@Document(indexName = "fileinfo", createIndex = false)
public class FileInfo {

    /**
     * Primary key
     */
    @Field(name = "id", type = FieldType.Integer)
    private Integer id;

    /**
     * File name
     */
    @Field(name = "fileName", type = FieldType.Text, analyzer = "jieba_index", searchAnalyzer = "jieba_index")
    private String fileName;

    /**
     * File type
     */
    @Field(name = "fileType", type = FieldType.Keyword)
    private String fileType;

    /**
     * Content type
     */
    @Field(name = "contentType", type = FieldType.Text)
    private String contentType;

    /**
     * Attachment content
     */
    @Field(name = "attachment.content", type = FieldType.Text, analyzer = "jieba_index", searchAnalyzer = "jieba_index")
    @TableField(exist = false)
    private String content;

    /**
     * File URL
     */
    @Field(name = "fileUrl", type = FieldType.Text)
    private String fileUrl;

    /**
     * Creation time
     */
    private Date createTime;

    /**
     * Update time
     */
    private Date updateTime;
}

Controller

@RestController

@RequestMapping("/fileInfo")

public class FileInfoController extends BaseController {

/**
 * Service object
 */

@Resource

private FileInfoService fileInfoService;

@PutMapping("uploadFile")

public AjaxResult uploadFile(String contentType, MultipartFile file) {

return fileInfoService.uploadFileInfo(contentType,file);

}

}

Service implementation class (serviceImpl)

@Service

@Slf4j

public class FileInfoServiceImpl implements FileInfoService{

@Resource

private ServerConfig serverConfig;

@Autowired

@Qualifier("restHighLevelClient")

private RestHighLevelClient client;

@Resource

private FileInfoMapper fileInfoMapper;

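/**
 * Upload a file, extract its content, and index it in Elasticsearch
 * @param contentType content type label of the document
 * @param file the uploaded file
 * @return result wrapping the saved FileInfo
 */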

@Override

public AjaxResult uploadFileInfo(String contentType, MultipartFile file) {

if (FastUtils.checkNullOrEmpty(contentType,file)){

return AjaxResult.error("请求参数不能为空");

}

try {

// Upload directory

String filePath = RuoYiConfig.getUploadPath() + "/fileInfo";

FileInfo fileInfo = new FileInfo();

// Upload the file and get back the new file name

String fileName = FileUploadUtils.upload(filePath, file);

String prefix = fileName.substring(fileName.lastIndexOf(".")+1);

File files = File.createTempFile(fileName, prefix);

file.transferTo(files);

String url = serverConfig.getUrl() + "/fileInfo" + fileName;

fileInfo.setFileName(FileUtils.getName(fileName));

fileInfo.setFileType(prefix);

fileInfo.setFileUrl(url);

fileInfo.setContentType(contentType);

int result = fileInfoMapper.insertSelective(fileInfo);

if (result > 0) {

fileInfo = fileInfoMapper.selectOne(new LambdaQueryWrapper<FileInfo>().eq(FileInfo::getFileUrl,fileInfo.getFileUrl()));

byte[] bytes = getContent(files);

String base64 = Base64.getEncoder().encodeToString(bytes);

fileInfo.setContent(base64);

IndexRequest indexRequest = new IndexRequest("fileinfo");

// While indexing, run the attachment pipeline to extract the file content

indexRequest.source(JSON.toJSONString(fileInfo), XContentType.JSON);

indexRequest.setPipeline("attachment");

IndexResponse indexResponse = client.index(indexRequest, RequestOptions.DEFAULT);

log.info("indexResponse:" + indexResponse);

}

AjaxResult ajax = AjaxResult.success(fileInfo);

return ajax;

} catch (Exception e) {

return AjaxResult.error(e.getMessage());

}

}

/**
 * Read the whole file into a byte array (the caller Base64-encodes it)
 *
 * @param file file to read
 * @return file content as bytes
 * @throws IOException if the file cannot be read
 */
private byte[] getContent(File file) throws IOException {
    long fileSize = file.length();
    if (fileSize > Integer.MAX_VALUE) {
        // Fail explicitly: returning null would cause a NullPointerException in the Base64 encoder
        throw new ServiceException("File too big: " + file.getName());
    }
    byte[] buffer = new byte[(int) fileSize];
    // try-with-resources closes the stream even if reading fails
    try (FileInputStream fi = new FileInputStream(file)) {
        int offset = 0;
        int numRead;
        while (offset < buffer.length
                && (numRead = fi.read(buffer, offset, buffer.length - offset)) >= 0) {
            offset += numRead;
        }
        // Make sure all data was read
        if (offset != buffer.length) {
            throw new ServiceException("Could not completely read file "
                    + file.getName());
        }
    }
    return buffer;
}

}
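The upload method derives the file extension from the stored file name and creates a temporary file from it. `File.createTempFile(prefix, suffix)` expects a prefix of at least three characters and a suffix that includes the dot. A small sketch of that step in isolation, using only the JDK (the class and method names are illustrative and not part of the original project):

```java
import java.io.File;
import java.io.IOException;

// Sketch: derives the extension from a file name and creates a temp file with a valid prefix and suffix.
class UploadNames {

    // Returns the text after the last dot of the last path segment, or "" if there is none.
    static String extensionOf(String fileName) {
        String name = fileName.substring(fileName.lastIndexOf('/') + 1);
        int dot = name.lastIndexOf('.');
        return dot < 0 ? "" : name.substring(dot + 1);
    }

    // A null suffix makes createTempFile fall back to ".tmp".
    static File tempFileFor(String fileName) throws IOException {
        String ext = extensionOf(fileName);
        return File.createTempFile("upload-", ext.isEmpty() ? null : "." + ext);
    }

    public static void main(String[] args) throws IOException {
        // The path below is a made-up example of a stored file name.
        File tmp = tempFileFor("/2022/08/09/report.docx");
        System.out.println(extensionOf("/2022/08/09/report.docx") + " -> " + tmp.getName());
        tmp.deleteOnExit();
    }
}
```

Using a fixed prefix avoids passing path separators from the stored file name into `createTempFile`.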

Highlighted tokenized search

@Data
@ApiModel(value = "WarningInfoDto", description = "告警信息")
public class WarningInfoDto {

    /**
     * Page number
     */
    @ApiModelProperty("页数")
    private Integer pageIndex;

    /**
     * Items per page
     */
    @ApiModelProperty("每页数量")
    private Integer pageSize;

    /**
     * Search keyword
     */
    @ApiModelProperty("查询关键词")
    private String keyword;

    /**
     * Content types
     */
    private List<String> contentType;

    /**
     * User's phone number
     */
    private String phone;
}

Controller

@Api("搜索引擎")

@RestController

@RequestMapping("es")

public class ElasticsearchController extends BaseController {

@Resource

private ElasticsearchService elasticsearchService;

/**
 * Keyword suggestions for warning information
 *
 * @param warningInfoDto search parameters
 * @return suggested file names
 */

@ApiOperation("关键词联想")

@ApiImplicitParams({

@ApiImplicitParam(name = "contenttype", value = "文档类型", required = true, dataType = "String", dataTypeClass = String.class),

@ApiImplicitParam(name = "keyword", value = "关键词", required = true, dataType = "String", dataTypeClass = String.class)

})

@PostMapping("getAssociationalWordDoc")

public AjaxResult getAssociationalWordDoc(@RequestBody WarningInfoDto warningInfoDto, HttpServletRequest request) {

List<String> words = elasticsearchService.getAssociationalWordOther(warningInfoDto,request);

return AjaxResult.success(words);

}

/**
 * Paged, highlighted, tokenized search for warning information
 *
 * @param warningInfoDto search parameters
 * @return one page of matching documents
 */

@ApiOperation("高亮分词分页查询")

@ApiImplicitParams({

@ApiImplicitParam(name = "keyword", value = "关键词", required = true, dataType = "String", dataTypeClass = String.class),

@ApiImplicitParam(name = "pageIndex", value = "页码", required = true, dataType = "Integer", dataTypeClass = Integer.class),

@ApiImplicitParam(name = "pageSize", value = "页数", required = true, dataType = "Integer", dataTypeClass = Integer.class),

@ApiImplicitParam(name = "contenttype", value = "文档类型", required = true, dataType = "String", dataTypeClass = String.class)

})

@PostMapping("queryHighLightWordDoc")

public AjaxResult queryHighLightWordDoc(@RequestBody WarningInfoDto warningInfoDto,HttpServletRequest request) {

IPage<FileInfo> warningInfoListPage = elasticsearchService.queryHighLightWordOther(warningInfoDto,request);

return AjaxResult.success(warningInfoListPage);

}

}

Service implementation class (serviceImpl)

@Service

@Slf4j

public class ElasticsearchServiceImpl implements ElasticsearchService {

@Resource

private WhiteListService whiteListService;

@Autowired

@Qualifier("restHighLevelClient")

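private RestHighLevelClient client;

// Used by the search methods below, which call elasticsearchRestTemplate.search(...)
@Autowired
private ElasticsearchRestTemplate elasticsearchRestTemplate;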

@Autowired

private RedisCache redisCache;

@Resource

private TokenService tokenService;

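/**
 * Keyword suggestions for documents (suggests file names from the text typed in the search box)
 *
 * @param warningInfoDto search parameters
 * @return suggested file names
 */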

@Override

public List<String> getAssociationalWordOther(WarningInfoDto warningInfoDto, HttpServletRequest request) {

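// Field to search
BoolQueryBuilder boolQueryBuilder = QueryBuilders.boolQuery()
        .should(QueryBuilders.matchBoolPrefixQuery("fileName", warningInfoDto.getKeyword()))
        // With a must clause present, should clauses are optional by default; require at least one to match
        .minimumShouldMatch(1);

// Filter by the contentType tag
boolQueryBuilder.must(QueryBuilders.termsQuery("contentType", warningInfoDto.getContentType()));

// Build the highlight query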

NativeSearchQuery searchQuery = new NativeSearchQueryBuilder()

.withQuery(boolQueryBuilder)

.withHighlightFields(

new HighlightBuilder.Field("fileName")

)

.withHighlightBuilder(new HighlightBuilder().preTags("<span style='color:red'>").postTags("</span>"))

.build();

// Run the search

SearchHits<FileInfo> search = null;

try {

search = elasticsearchRestTemplate.search(searchQuery, FileInfo.class);

} catch (Exception ex) {

ex.printStackTrace();

throw new ServiceException(String.format("操作错误,请联系管理员!%s", ex.getMessage()));

}

// Collection that will be returned at the end
List<String> resultList = new LinkedList<>();

// Process each returned hit
for (org.springframework.data.elasticsearch.core.SearchHit<FileInfo> searchHit : search.getSearchHits()) {

    // Highlighted fragments
    Map<String, List<String>> highlightFields = searchHit.getHighlightFields();

    // Copy the highlighted fragment into the returned object

searchHit.getContent().setFileName(highlightFields.get("fileName") == null ? searchHit.getContent().getFileName() : highlightFields.get("fileName").get(0));

if (highlightFields.get("fileName") != null) {

resultList.add(searchHit.getContent().getFileName());

}

}

    // De-duplicate and keep at most 9 suggestions. limit() is safe when fewer than 9 distinct
    // names remain, where subList(0, 9) would throw IndexOutOfBoundsException.
    // An empty list is returned when nothing matches.
    List<String> newResult = resultList.stream().distinct().limit(9).collect(Collectors.toList());

    return newResult;
}

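/**
 * Highlighted, tokenized search over other document types
 *
 * @param warningInfoDto search parameters
 * @param request current HTTP request
 * @return one page of matching documents
 */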

@Override

public IPage<FileInfo> queryHighLightWordOther(WarningInfoDto warningInfoDto, HttpServletRequest request) {

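// Paging (Spring Data page indexes are zero-based)
Pageable pageable = PageRequest.of(warningInfoDto.getPageIndex() - 1, warningInfoDto.getPageSize());

// Fields to search: tokenize the input and run a full-text search on fileName and attachment.content
BoolQueryBuilder boolQueryBuilder = QueryBuilders.boolQuery()
        .should(QueryBuilders.matchBoolPrefixQuery("fileName", warningInfoDto.getKeyword()))
        .should(QueryBuilders.matchBoolPrefixQuery("attachment.content", warningInfoDto.getKeyword()))
        // With a must clause present, should clauses are optional by default; require at least one to match
        .minimumShouldMatch(1);

// Filter by the contentType tag
boolQueryBuilder.must(QueryBuilders.termsQuery("contentType", warningInfoDto.getContentType()));

// Build the highlight query
NativeSearchQuery searchQuery = new NativeSearchQueryBuilder()
        .withQuery(boolQueryBuilder)
        // Apply the requested page to the query
        .withPageable(pageable)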

.withHighlightFields(

new HighlightBuilder.Field("fileName"), new HighlightBuilder.Field("attachment.content")

)

.withHighlightBuilder(new HighlightBuilder().preTags("<span style='color:red'>").postTags("</span>"))

.build();

// Run the search

SearchHits<FileInfo> search = null;

try {

search = elasticsearchRestTemplate.search(searchQuery, FileInfo.class);

} catch (Exception ex) {

ex.printStackTrace();

throw new ServiceException(String.format("操作错误,请联系管理员!%s", ex.getMessage()));

}

// Collection that will be returned at the end
List<FileInfo> resultList = new LinkedList<>();

// Process each returned hit
for (org.springframework.data.elasticsearch.core.SearchHit<FileInfo> searchHit : search.getSearchHits()) {

    // Highlighted fragments
    Map<String, List<String>> highlightFields = searchHit.getHighlightFields();

    // Copy the highlighted fragments into the returned object

searchHit.getContent().setFileName(highlightFields.get("fileName") == null ? searchHit.getContent().getFileName() : highlightFields.get("fileName").get(0));

searchHit.getContent().setContent(highlightFields.get("content") == null ? searchHit.getContent().getContent() : highlightFields.get("content").get(0));

resultList.add(searchHit.getContent());

}

// Assemble the page information manually
IPage<FileInfo> warningInfoIPage = new Page<>();
warningInfoIPage.setTotal(search.getTotalHits());
warningInfoIPage.setRecords(resultList);
warningInfoIPage.setCurrent(warningInfoDto.getPageIndex());
warningInfoIPage.setSize(warningInfoDto.getPageSize());
// Page count: total hits divided by page size, rounded up
long pageSize = warningInfoDto.getPageSize();
warningInfoIPage.setPages((warningInfoIPage.getTotal() + pageSize - 1) / pageSize);

return warningInfoIPage;

}

}
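Two small calculations in this service are easy to get wrong: the page count is the hit total divided by the page size, rounded up, and the suggestion list is de-duplicated and capped at nine entries. A minimal, self-contained sketch of both follows (the class and method names are illustrative and not part of the original project):

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

// Sketch: helpers for the page count and for capping de-duplicated suggestions.
class SearchResultHelpers {

    // Ceiling division: number of pages needed for `total` hits at `pageSize` hits per page.
    static long totalPages(long total, long pageSize) {
        if (pageSize <= 0) {
            throw new IllegalArgumentException("pageSize must be positive");
        }
        return (total + pageSize - 1) / pageSize;
    }

    // Removes duplicates (keeping first-seen order) and keeps at most `max` entries.
    static List<String> topDistinct(List<String> values, int max) {
        return values.stream().distinct().limit(max).collect(Collectors.toList());
    }

    public static void main(String[] args) {
        System.out.println(totalPages(21, 10)); // prints 3
        System.out.println(topDistinct(Arrays.asList("a.txt", "b.txt", "a.txt"), 9)); // prints [a.txt, b.txt]
    }
}
```

Taking the remainder (`total % pageSize`) instead of the rounded-up quotient gives 1 for 21 hits at 10 per page, where 3 pages are needed.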

Test

-- Request JSON

{

"keyword":"全库备份",

"contentType":["告示"],

"pageIndex":1,

"pageSize":10

}

-- Response

{

"msg": "操作成功",

"code": 200,

"data": {

"records": [

{

"id": 1306333194,

"fileName": "txt测试_20220810153351A001.txt",

"fileType": "txt",

"contentType": "告示",

"content": "•\t秒级快速<span style='color:red'>备份</span>\r\n不论多大的数据量,<span style='color:red'>全库</span><span style='color:red'>备份</span>只需30秒,而且<span style='color:red'>备份过程</span>不会对数据库加锁,对应用程序几乎无影响,全天24小时均可进行<span style='color:red'>备份</span>。",

"fileUrl": "http://localhost:8092/fileInfo/profile/upload/fileInfo/2022/08/10/txt测试_20220810153351A001.txt",

"createTime": "2022-08-10T15:33:51.000+08:00",

"updateTime": "2022-08-10T15:33:51.000+08:00"

}

],

"total": 1,

"size": 10,

"current": 1,

"orders": [],

"optimizeCountSql": true,

"searchCount": true,

"countId": null,

"maxLimit": null,

"pages": 1

}

}

The response contains the content matched by the tokenized search, with each matched term highlighted.
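To make the shape of the highlighted output concrete, here is a plain-Java illustration that wraps known terms in the same `<span>` tags. This is a stand-in for demonstration only: Elasticsearch performs the real highlighting on the server using the field's analyzer, and the class below is hypothetical.

```java
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

// Illustration only: mimics the shape of highlighted output; it is not how Elasticsearch highlights.
class HighlightDemo {

    static final String PRE = "<span style='color:red'>";
    static final String POST = "</span>";

    static String highlight(String text, List<String> terms) {
        List<String> usable = terms.stream()
                .filter(t -> !t.isEmpty())
                // Longer terms first so the regex alternation prefers the longest match.
                .sorted((a, b) -> Integer.compare(b.length(), a.length()))
                .collect(Collectors.toList());
        if (usable.isEmpty()) {
            return text;
        }
        String regex = usable.stream().map(Pattern::quote).collect(Collectors.joining("|"));
        // $0 is the matched term; a single pass avoids re-matching inside the inserted tags.
        return Pattern.compile(regex).matcher(text).replaceAll(PRE + "$0" + POST);
    }

    public static void main(String[] args) {
        System.out.println(highlight("full backup takes 30s", List.of("backup", "full")));
    }
}
```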
