7.3. Process Termination

A process can terminate in eight ways. Normal termination occurs in five ways:

- Return from main
- Calling exit
- Calling _exit or _Exit
- Return of the last thread from its start routine (Section 11.5)
- Calling pthread_exit (Section 11.5) from the last thread

Abnormal termination occurs in three ways:

- Calling abort (Section 10.17)
- Receipt of a signal (Section 10.2)
- Response of the last thread to a cancellation request

Exit Functions

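    #include <stdlib.h>
    void exit(int status);    /* performs certain cleanup processing, then returns to the kernel */
    void _Exit(int status);   /* returns to the kernel immediately */

    #include <unistd.h>
    void _exit(int status);   /* returns to the kernel immediately */

Under ISO C 1999, falling off the end of main is equivalent to return 0, that is, exit(0). With gcc versions that default to C89 mode, compile with -std=c99 to get this behavior.

    #include <stdlib.h>
    int atexit(void (*func)(void));

Exit handlers registered with atexit are called in the reverse order of their registration.

On Linux, if main reaches its end without calling exit(0) or returning a value (and the program is not compiled as C99), the exit status is formally undefined; in practice it is whatever the last called function left behind as its return value.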
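The reverse-order rule for exit handlers can be sketched as follows. This is a minimal illustration, not code from the book; the handler names and the helper register_exit_handlers are hypothetical, chosen only for this example.

```c
#include <stdio.h>
#include <stdlib.h>

/* Hypothetical handler names, invented for this sketch. */
static void first_registered(void)
{
    puts("first_registered runs last");
}

static void second_registered(void)
{
    puts("second_registered runs first");
}

/* Registers both handlers in order.
 * Returns 0 on success, -1 if atexit reports a failure. */
int register_exit_handlers(void)
{
    if (atexit(first_registered) != 0)
        return -1;
    if (atexit(second_registered) != 0)
        return -1;
    return 0;
}
```

If main calls register_exit_handlers() and then returns or calls exit, second_registered runs before first_registered. Terminating with _exit or _Exit skips both handlers, since those functions return to the kernel immediately.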

7.5. Environment List

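    extern char **environ;

Both the array of pointers and each string are null-terminated: the array ends with a NULL pointer, and each name=value string ends with a null byte.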

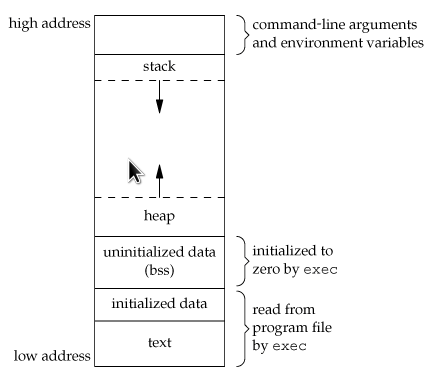
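As a rough sketch of how the two levels of termination are used, the function below walks the pointer array until it reaches the NULL pointer. The function name count_environment_entries is an assumption made for this example.

```c
#include <stddef.h>

extern char **environ;

/* Counts the name=value strings by walking the pointer array
 * until the terminating NULL pointer is reached. */
size_t count_environment_entries(void)
{
    size_t n = 0;

    for (char **p = environ; p != NULL && *p != NULL; p++)
        n++;
    return n;
}
```

Each entry visited by the loop is itself an ordinary C string, so it can be passed directly to functions such as puts or strchr.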

7.6. Memory Layout of a C Program

- Text segment (text): the machine instructions that the CPU executes.
- Initialized data segment (data).
- Uninitialized data segment (bss): for example, global arrays declared without an explicit initializer, which the kernel sets to 0 before the program starts running.
- Stack
- Heap

The only portions of the program that need to be saved in the program file are the text segment and the initialized data.

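Only text and data occupy space in the program file; everything else is allocated at run time, and bss is initialized (zeroed) at run time. The file size is generally not equal to text + data, however, because the file also holds other sections, such as symbol table information that can be helpful in debugging a core file.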
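A small sketch of the difference between the data and bss segments is given below. The variable and function names are hypothetical; the point is only that the array without an initializer takes no space in the file, yet reads as zero at run time.

```c
#include <stddef.h>

int initialized_counter = 42;   /* stored in the initialized data segment */
long zeroed_table[1024];        /* no initializer: placed in bss, zeroed at startup */

/* Returns 1 if every element of the bss array is zero, 0 otherwise. */
int bss_is_zeroed(void)
{
    size_t count = sizeof zeroed_table / sizeof zeroed_table[0];

    for (size_t i = 0; i < count; i++)
        if (zeroed_table[i] != 0)
            return 0;
    return 1;
}
```

The size(1) command reports the text, data, and bss sizes of an executable, which is one way to see that enlarging zeroed_table grows bss without growing the file by the same amount.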

7.7. Shared Libraries

By default, gcc links against shared libraries. Passing -static prevents this.

7.8. Memory Allocation

malloc, which allocates a specified number of bytes of memory. The initial value of the memory is indeterminate.

calloc, which allocates space for a specified number of objects of a specified size. The space is initialized to all 0 bits.

realloc, which increases or decreases the size of a previously allocated area. When the size increases, it may involve moving the previously allocated area somewhere else, to provide the additional room at the end. Also, when the size increases, the initial value of the space between the old contents and the end of the new area is indeterminate.

    #include <stdlib.h>
    void *malloc(size_t size);
    void *calloc(size_t nobj, size_t size);
    void *realloc(void *ptr, size_t newsize);
    void free(void *ptr);
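The following sketch combines calloc and realloc and shows one way to deal with the indeterminate tail after growing a block. The helper make_and_grow and the fill value -1 are assumptions for illustration; the sketch also assumes new_count is nonzero and small enough that the size multiplication does not overflow.

```c
#include <stdlib.h>

/* Hypothetical helper: allocates `count` zeroed ints, resizes the
 * array to `new_count` elements, and fills any added tail with -1.
 * Returns NULL on allocation failure; the caller frees the result. */
int *make_and_grow(size_t count, size_t new_count)
{
    int *values = calloc(count, sizeof *values);   /* all bits zero */
    if (values == NULL)
        return NULL;

    /* Keep the old pointer until realloc succeeds, so the original
     * block is not leaked if the resize fails. */
    int *grown = realloc(values, new_count * sizeof *grown);
    if (grown == NULL) {
        free(values);
        return NULL;
    }

    /* The space added by realloc is indeterminate: set it explicitly. */
    for (size_t i = count; i < new_count; i++)
        grown[i] = -1;
    return grown;
}
```

Because realloc may move the area, any pointer into the old block must be treated as invalid once the call succeeds; only the returned pointer should be used afterwards.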

alloca Function

One additional function is also worth mentioning. The function alloca has the same calling sequence as malloc; however, instead of allocating memory from the heap, the memory is allocated from the stack frame of the current function. The advantage is that we don't have to free the space; it goes away automatically when the function returns.
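A minimal sketch of alloca used for a scratch buffer follows. It assumes a glibc-style system where alloca is declared in <alloca.h> (some systems declare it in <stdlib.h> instead), and the function name stack_copy_length is made up for this example.

```c
#include <alloca.h>   /* assumption: glibc-style header for alloca */
#include <string.h>

/* Copies `s` into a scratch buffer in the current stack frame and
 * returns the length of the copy. No free is needed: the buffer
 * disappears when this function returns. */
size_t stack_copy_length(const char *s)
{
    size_t n = strlen(s) + 1;
    char *scratch = alloca(n);

    memcpy(scratch, s, n);
    return strlen(scratch);
}
```

The flip side of automatic release is that the pointer must never be returned or stored beyond the function's lifetime, and a large request can overflow the stack without any error return.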

7.9. Environment Variables

    #include <stdlib.h>
    char *getenv(const char *name);

Note that this function returns a pointer to the value of a name=value string. We should always use getenv to fetch a specific value from the environment, instead of accessing environ directly.

    int putenv(char *str);      /* str has the form name=value; replaces the old definition if name already exists */
    int setenv(const char *name, const char *value, int rewrite);   /* if name already exists: kept when rewrite is 0, replaced otherwise */
    int unsetenv(const char *name);

Figure 7.7. Environment variables defined in the Single UNIX Specification

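Adding a new environment variable or modifying an existing one is more complicated, because memory has to be allocated for the new strings.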
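The behavior of the rewrite argument can be sketched as a short round trip through setenv, getenv, and unsetenv. The helper names env_equals and demo_env_roundtrip are hypothetical, and the feature-test macro is included on the assumption that the code may be compiled in a strict ISO C mode such as -std=c99.

```c
#define _POSIX_C_SOURCE 200809L   /* exposes setenv/unsetenv in strict ISO C mode */
#include <stdlib.h>
#include <string.h>

/* Returns 1 if variable `name` exists and its value equals `expected`. */
static int env_equals(const char *name, const char *expected)
{
    const char *value = getenv(name);

    return value != NULL && strcmp(value, expected) == 0;
}

/* Exercises setenv with rewrite 0 and nonzero, then unsetenv.
 * Returns 0 if every step behaved as described, -1 otherwise. */
int demo_env_roundtrip(const char *name)
{
    if (setenv(name, "first", 1) != 0 || !env_equals(name, "first"))
        return -1;

    /* rewrite == 0: the existing definition is left alone */
    if (setenv(name, "second", 0) != 0 || !env_equals(name, "first"))
        return -1;

    /* rewrite != 0: the existing definition is replaced */
    if (setenv(name, "third", 1) != 0 || !env_equals(name, "third"))
        return -1;

    if (unsetenv(name) != 0 || getenv(name) != NULL)
        return -1;
    return 0;
}
```

Unlike setenv, putenv places the caller's string itself into the environment, so a string passed to putenv should not live on the stack of a function that returns.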

7.10. setjmp and longjmp Functions(?)

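    #include <setjmp.h>
    int setjmp(jmp_buf env);
    void longjmp(jmp_buf env, int val);

val becomes the return value of setjmp when control comes back through longjmp. Declaring an automatic variable volatile prevents the compiler from keeping it in a register. Variables held in registers may be rolled back to the values they had when setjmp was called, once longjmp unwinds across several call levels.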
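The interaction between longjmp and volatile can be sketched as below. The names jump_buffer, fail_deep, and run_with_recovery are assumptions for this example; setjmp is placed directly in a switch because the C standard only guarantees its behavior in a limited set of contexts, such as the controlling expression of a selection statement.

```c
#include <setjmp.h>

static jmp_buf jump_buffer;

/* Hypothetical callee that abandons the normal return path. */
static void fail_deep(int code)
{
    longjmp(jump_buffer, code);   /* setjmp returns `code` this time */
}

/* Returns the value of the local counter as seen after longjmp,
 * or -1 if control flow did not go as expected. */
int run_with_recovery(void)
{
    volatile int counter = 0;   /* volatile: kept in memory, not rolled back */

    switch (setjmp(jump_buffer)) {
    case 0:                     /* direct return from setjmp */
        counter = 7;            /* modified after setjmp was called */
        fail_deep(3);
        return -1;              /* not reached */
    case 3:                     /* came back via longjmp(jump_buffer, 3) */
        return counter;
    default:
        return -1;
    }
}
```

Without the volatile qualifier, the value of counter after the jump would be indeterminate, and an optimizing compiler could plausibly hand back the value it had when setjmp was first called.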

7.11. getrlimit and setrlimit Functions(?)

    #include <sys/resource.h>
    int getrlimit(int resource, struct rlimit *rlptr);
    int setrlimit(int resource, const struct rlimit *rlptr);

    struct rlimit {
        rlim_t rlim_cur;   /* soft limit: current limit */
        rlim_t rlim_max;   /* hard limit: maximum value for rlim_cur */
    };

Three rules govern the changing of the resource limits.

- A process can change its soft limit to a value less than or equal to its hard limit.
- A process can lower its hard limit to a value greater than or equal to its soft limit. This lowering of the hard limit is irreversible for normal users.
- Only a superuser process can raise a hard limit.
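The first rule can be sketched as a helper that adjusts only the soft limit and clamps the request to the hard limit. The function name set_soft_limit is hypothetical, and clamping (rather than failing) is a design choice made for this example.

```c
#include <sys/resource.h>

/* Sets the soft limit of `resource` to `wanted`, clamped to the
 * current hard limit. The hard limit itself is left unchanged.
 * Returns 0 on success, -1 on error. */
int set_soft_limit(int resource, rlim_t wanted)
{
    struct rlimit lim;

    if (getrlimit(resource, &lim) != 0)
        return -1;

    /* Rule 1: the soft limit may not exceed the hard limit. */
    if (lim.rlim_max != RLIM_INFINITY && wanted > lim.rlim_max)
        wanted = lim.rlim_max;

    lim.rlim_cur = wanted;
    return setrlimit(resource, &lim);
}
```

A call such as set_soft_limit(RLIMIT_CORE, 0) would disable core files for the calling process and, since resource limits are inherited, for any children it creates afterwards.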
