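Prerequisites:

Ubuntu 18.04

Spark 2.x installed, with its environment variables (such as SPARK_HOME) configured

Python 3 installed

Problem:

Running the pyspark script fails with the following error: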

$ pyspark

pyspark: line 45: python: command not found

env: ‘python’: No such file or directory

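Cause:

This machine has python3 but no python command, and the Python environment variables for Spark have not been configured, so pyspark falls back to python and cannot find it.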
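To confirm this diagnosis before changing anything, a quick lookup of both command names on PATH can help. The following is only a minimal sketch; the function name find_interpreters is made up for illustration and is not part of Spark.

```python
import shutil


def find_interpreters(names=("python", "python3")):
    """Map each command name to its full path on PATH, or None if it is missing."""
    return {name: shutil.which(name) for name in names}


if __name__ == "__main__":
    for name, path in find_interpreters().items():
        print("{}: {}".format(name, path or "not found on PATH"))
```

If python is reported as not found while python3 has a path, the machine is in the state described above.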

Solution:

Add the Python-related environment variables:

$ vi ~/.bashrc

Append the following lines to the end of the file:

export PYTHONPATH=$SPARK_HOME/python:$SPARK_HOME/python/lib/py4j-0.10.7-src.zip:$PYTHONPATH

export PYSPARK_PYTHON=python3

Note: check the $SPARK_HOME/python/lib directory to confirm that the file is really named py4j-0.10.7-src.zip. The py4j file name differs between versions.
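Rather than reading the directory by hand, the zip name can be looked up and the export line generated from it. This is a rough sketch that assumes the file matches the pattern py4j-*-src.zip; the helper names find_py4j_zip and pythonpath_export are hypothetical.

```python
import glob
import os


def find_py4j_zip(spark_home):
    """Return the py4j source zip under spark_home/python/lib, or None if absent."""
    pattern = os.path.join(spark_home, "python", "lib", "py4j-*-src.zip")
    matches = sorted(glob.glob(pattern))
    return matches[0] if matches else None


def pythonpath_export(spark_home):
    """Build the export line for ~/.bashrc using the zip name found on disk."""
    zip_path = find_py4j_zip(spark_home)
    if zip_path is None:
        raise FileNotFoundError("no py4j-*-src.zip under " + spark_home)
    zip_name = os.path.basename(zip_path)
    return ("export PYTHONPATH=$SPARK_HOME/python:"
            "$SPARK_HOME/python/lib/" + zip_name + ":$PYTHONPATH")


if __name__ == "__main__":
    home = os.environ.get("SPARK_HOME")
    if not home:
        print("SPARK_HOME is not set")
    else:
        try:
            print(pythonpath_export(home))
        except FileNotFoundError as exc:
            print(exc)
```

The printed line can be pasted into ~/.bashrc in place of the hard-coded py4j-0.10.7-src.zip entry.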

Save the file, then reload it so that the environment variables take effect:

$ source ~/.bashrc
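After reloading, it may be worth checking that the two variables actually resolve to something on disk. The sketch below is one possible check, not an official Spark tool; check_spark_python_env is a made-up name, and it only inspects PYSPARK_PYTHON and PYTHONPATH.

```python
import os
import shutil


def check_spark_python_env(env):
    """Return a list of human-readable problems found in the given environment mapping."""
    problems = []
    interpreter = env.get("PYSPARK_PYTHON")
    if not interpreter:
        problems.append("PYSPARK_PYTHON is not set")
    elif shutil.which(interpreter, path=env.get("PATH")) is None:
        problems.append("PYSPARK_PYTHON points to a missing command: " + interpreter)
    # Split PYTHONPATH on the platform separator and ignore empty entries.
    entries = [p for p in env.get("PYTHONPATH", "").split(os.pathsep) if p]
    if not entries:
        problems.append("PYTHONPATH is empty")
    for entry in entries:
        if not os.path.exists(entry):
            problems.append("PYTHONPATH entry does not exist: " + entry)
    return problems


if __name__ == "__main__":
    found = check_spark_python_env(os.environ)
    print("\n".join(found) if found else "environment looks consistent")
```

A wrong py4j file name shows up here as a PYTHONPATH entry that does not exist.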

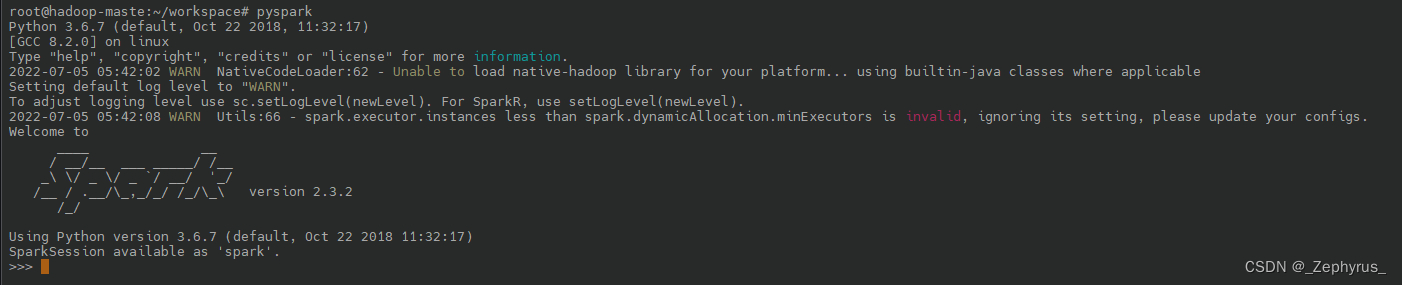

Run pyspark again. The shell now starts, although the line 45 message is still printed first:

$ pyspark

/home/hadoop/soft/spark/bin/pyspark: line 45: python: command not found

Python 3.5.2 (default, Nov 12 2018, 13:43:14)

[GCC 5.4.0 20160609] on linux

Type "help", "copyright", "credits" or "license" for more information.

19/01/23 00:27:46 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /__ / .__/\_,_/_/ /_/\_\   version 2.3.2
      /_/

Using Python version 3.5.2 (default, Nov 12 2018 13:43:14)

SparkSession available as 'spark'.

To view the web monitoring page:

Enter ip:4040 in a browser, where ip is the address of the machine running pyspark.
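If the page does not load, a plain TCP check can tell whether anything is listening on that port at all. This is a minimal sketch; ui_reachable is a hypothetical helper, and localhost is only a placeholder for the real address. If port 4040 is already in use, Spark normally moves on to the next free port (4041, 4042, and so on), so a different port may need to be tried.

```python
import socket


def ui_reachable(host, port=4040, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # "localhost" is a placeholder; replace it with the machine's address.
    print("Spark UI reachable:", ui_reachable("localhost"))
```

The check only succeeds while a pyspark session is running, because the UI belongs to the active application.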

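The message /home/hadoop/soft/spark/bin/pyspark: line 45: python: command not found is still printed, which indicates that the launcher script invokes python directly regardless of PYSPARK_PYTHON. To fix it, make python point to python3: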

sudo rm -rf /usr/bin/python

sudo ln -s /usr/bin/python3 /usr/bin/python
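Because the first command deletes whatever is currently at /usr/bin/python, it can be useful to look at that path before running the two commands. The sketch below only inspects the path and changes nothing; describe_command is a made-up name.

```python
import os


def describe_command(path="/usr/bin/python"):
    """Report whether path is missing, a symlink (with its target), or something else."""
    if os.path.islink(path):
        return "symlink -> " + os.path.realpath(path)
    if os.path.exists(path):
        return "exists, not a symlink"
    return "missing"


if __name__ == "__main__":
    print("/usr/bin/python:", describe_command())
```

On the machine described here the expected result is "missing", in which case only the ln -s command has any effect.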

Then run pyspark again to confirm that the message is gone.
