摘要

1.本地连接的mysql,数据是随便生成的,这点找gpt就能做

2.100w条数据写入速度有显著提升:90s-11s(本机)

参考的这篇文章:

https://medium.com/@benmorel/high-speed-inserts-with-mysql-9d3dcd76f723

代码部分

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.PreparedStatement;

import java.sql.SQLException;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

import java.util.concurrent.TimeUnit;

public class java {

/**

* 快速添加一千万条测试数据

* @param args

*/

public static void main(String[] args) {

String url = "jdbc:mysql://localhost:3306/million_test";

String username = "root";

String password = "9606";

String tableName = "new_table";

long startTime = System.currentTimeMillis();

try (Connection connection = DriverManager.getConnection(url, username, password)) {

// 关闭自动提交

connection.setAutoCommit(false);

// 执行多线程并行插入

insertDataParallel(connection, tableName, 10_000_00);

// 手动提交事务

connection.commit();

} catch (SQLException e) {

e.printStackTrace();

}

long endTime = System.currentTimeMillis();

long totalTime = endTime -startTime;

System.err.println("该段代码执行耗时:" + totalTime + " ms");

}

private static void insertDataParallel(Connection connection, String tableName, int rowCount) throws SQLException {

int batchSize = 80_00;

int threadCount = 15; // 根据系统资源设置线程数量 目前看到的最好情况

int extend_size =800; //批处理条数

ExecutorService executorService = Executors.newFixedThreadPool(threadCount);

try {

for (int i = 0; i < rowCount; i += batchSize) {

// System.out.println(i);

int startId = i + 1;

int endId = Math.min(i + batchSize, rowCount);

executorService.submit(() -> {

try {

insertBatchData(connection, tableName, startId, endId,extend_size);

} catch (SQLException e) {

// e.printStackTrace();

}

});

}

// 等待所有线程执行完成

executorService.shutdown();

executorService.awaitTermination(1, TimeUnit.HOURS);

} catch (InterruptedException e) {

e.printStackTrace();

}

}

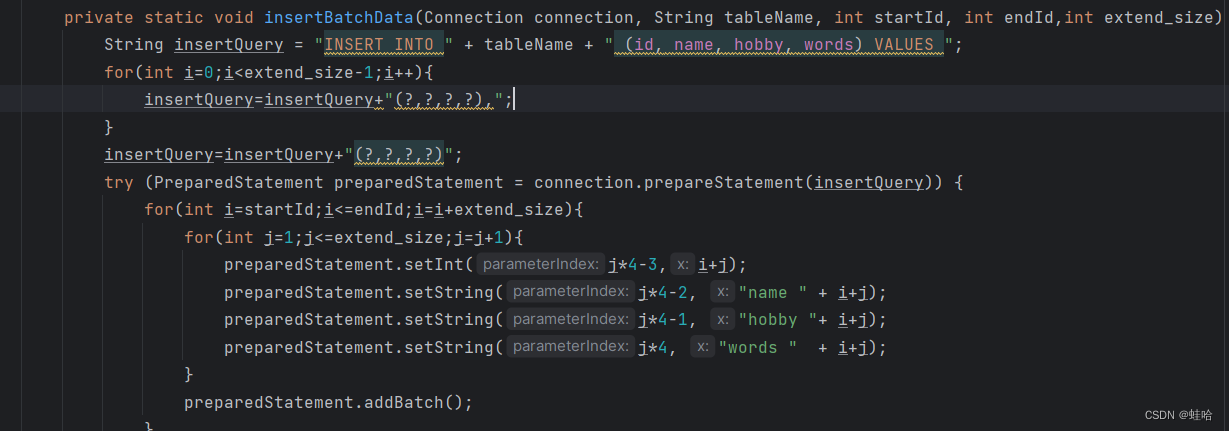

private static void insertBatchData(Connection connection, String tableName, int startId, int endId,int extend_size) throws SQLException {

String insertQuery = "INSERT INTO " + tableName + " (id, name, hobby, words) VALUES ";

for(int i=0;i<extend_size-1;i++){

insertQuery=insertQuery+"(?,?,?,?),";

}

insertQuery=insertQuery+"(?,?,?,?)";

try (PreparedStatement preparedStatement = connection.prepareStatement(insertQuery)) {

for(int i=startId;i<=endId;i=i+extend_size){

for(int j=1;j<=extend_size;j=j+1){

preparedStatement.setInt(j*4-3,i+j);

preparedStatement.setString(j*4-2, "name " + i+j);

preparedStatement.setString(j*4-1, "hobby "+ i+j);

preparedStatement.setString(j*4, "words " + i+j);

}

preparedStatement.addBatch();

}

// 执行批处理

preparedStatement.executeBatch();

// 提交事务

connection.commit();

}

}

}

优化思路:

1. java多线程(不知道有用没有,gpt写的代码)

2.优化语句(提升明显,核心提升就在这)

其实就是一次插入多条,比一条一条插入快多了,代码在这

后续优化思路

后面我就没在做了,mysql还是太慢了,11s根本不够,我转redis了,用个pipeline就不到2s 100w条了,太无敌了

但对mysql后续还是有点想法

1.可以看看mysql的sql操作文档,看看有没有能优化的地方

2.改改mysql的引擎innodb的参数,或许有用把

167

167

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?