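Overview

As a WebRTC server, mediasoup supports not only WebRTC endpoints but also non-WebRTC endpoints such as ffmpeg and gstreamer. Following the approach described on the mediasoup website, this article builds on mediasoup-demo and experiments with two things: using ffmpeg to consume audio and video from mediasoup, and using ffmpeg to send audio and video to mediasoup.

The experiment automatically creates an ffmpegPeer in the room. This ffmpegPeer represents an external ffmpeg client, and the other members of the room can see it.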

1 Consuming mediasoup audio and video streams with ffmpeg

1.1 Creating the ffmpegPeer

async createFFmpegPeer(protooWebSocketTransport) {
    try {
        // ffmpegPeer is not declared in this method; it is assumed to be a
        // variable declared at module scope and shared with the later snippets.
        ffmpegPeer = this._protooRoom.createPeer('ffmpeg-peerid', protooWebSocketTransport);
    }
    catch (error) {
        logger.error('protooRoom.createPeer() failed:%o', error);

        // Without a peer there is nothing left to initialize.
        return;
    }

    // Use the peer.data object to store mediasoup related objects.

    // Not joined until a custom protoo 'join' request is later received.
    //ffmpegPeer.data.consume = false;
    ffmpegPeer.data.joined = false;
    ffmpegPeer.data.displayName = undefined;
    ffmpegPeer.data.device = undefined;
    ffmpegPeer.data.rtpCapabilities = undefined;
    ffmpegPeer.data.sctpCapabilities = undefined;

    // Have mediasoup related maps ready even before the Peer joins since we
    // allow creating Transports before joining.
    ffmpegPeer.data.transports = new Map();
    ffmpegPeer.data.producers = new Map();
    ffmpegPeer.data.consumers = new Map();
    ffmpegPeer.data.dataProducers = new Map();
    ffmpegPeer.data.dataConsumers = new Map();
}

The ffmpegPeer is created in Room.js, and the code is simple. Because ffmpeg cannot exchange signaling with mediasoup, the ffmpegPeer has to be created automatically inside the room.

1.2 Creating the consumers

Create the audio consumer and send the audio RTP packets to port 8098 on 192.168.1.105:

ffmpegPeer.data.displayName = 'ffmpeg';

// Plain (non-WebRTC) transport. With comedia disabled, the remote address
// has to be supplied explicitly through connect() below.
const audioTransport = await router.createPlainTransport(
    {
        listenIp: '0.0.0.0',
        rtcpMux: false,
        comedia: false
    }
);

// RTP capabilities declared on behalf of ffmpeg: Opus audio and VP8 video.
var audioCodec = {
    channels: 2,
    clockRate: 48000,
    kind: 'audio',
    mimeType: 'audio/opus',
    parameters: { minptime: 10, useinbandfec: 0 },
    preferredPayloadType: 100,
    rtcpFeedback: []
};

var videoCodec = {
    clockRate: 90000,
    kind: 'video',
    mimeType: 'video/VP8',
    parameters: {},
    preferredPayloadType: 101,
    rtcpFeedback: []
};

ffmpegPeer.data.rtpCapabilities = { codecs: [], headerExtensions: [] };
ffmpegPeer.data.rtpCapabilities.codecs.push(audioCodec);
ffmpegPeer.data.rtpCapabilities.codecs.push(videoCodec);

var audioHeaderExtensions = {
    direction: 'sendrecv',
    kind: 'audio',
    preferredEncrypt: false,
    preferredId: 1,
    uri: 'urn:ietf:params:rtp-hdrext:sdes:mid'
};

var videoHeaderExtensions = {
    direction: 'sendrecv',
    kind: 'video',
    preferredEncrypt: false,
    preferredId: 1,
    uri: 'urn:ietf:params:rtp-hdrext:sdes:mid'
};

ffmpegPeer.data.rtpCapabilities.headerExtensions.push(audioHeaderExtensions);
ffmpegPeer.data.rtpCapabilities.headerExtensions.push(videoHeaderExtensions);

//ffmpegPeer.data.rtpCapabilities = peer.data.rtpCapabilities;

// Mark this transport as the consuming one while the consumer is created,
// then clear the flag again.
audioTransport.appData.consuming = true;
ffmpegPeer.data.transports.set(audioTransport.id, audioTransport);

this._createConsumer({
    consumerPeer: ffmpegPeer,
    producerPeer: peer,
    producer: producer
});

audioTransport.appData.consuming = false;

// Tell mediasoup where to send the audio RTP and RTCP packets.
await audioTransport.connect({
    ip: '192.168.1.105',
    port: 8098,
    rtcpPort: 8099
});
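The consuming flag toggled above matters because the demo's _createConsumer() is assumed to pick the consumer peer's transport by looking for the first one whose appData.consuming is true. The sketch below reproduces that lookup with plain objects instead of real mediasoup transports; makeTransport and findConsumingTransport are hypothetical helpers written for this illustration, not part of mediasoup or mediasoup-demo.

```javascript
// Minimal stand-ins for mediasoup transports; only the fields used here.
function makeTransport(id) {
  return { id, appData: { consuming: false } };
}

// Assumed lookup: the first transport of the peer flagged as consuming.
function findConsumingTransport(peerData) {
  return Array.from(peerData.transports.values())
    .find((t) => t.appData.consuming);
}

const peerData = { transports: new Map() };
const audioTransport = makeTransport('audio');
const videoTransport = makeTransport('video');

// Audio: flag the transport, look it up, then clear the flag.
audioTransport.appData.consuming = true;
peerData.transports.set(audioTransport.id, audioTransport);
const pickedForAudio = findConsumingTransport(peerData);
audioTransport.appData.consuming = false;

// Video: only the video transport is flagged now, so it is the one found.
videoTransport.appData.consuming = true;
peerData.transports.set(videoTransport.id, videoTransport);
const pickedForVideo = findConsumingTransport(peerData);

console.log(pickedForAudio.id, pickedForVideo.id);
```

If the audio flag were left set, the second lookup would return the audio transport again, and the video consumer would end up on the wrong transport.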

Create the video consumer and send the video RTP packets to port 8088 on 192.168.1.105:

const videoTransport = await router.createPlainTransport(
    {
        listenIp: '0.0.0.0',
        rtcpMux: false,
        comedia: false
    }
);

videoTransport.appData.consuming = true;
ffmpegPeer.data.transports.set(videoTransport.id, videoTransport);

this._createConsumer({
    consumerPeer: ffmpegPeer,
    producerPeer: peer,
    producer: producer
});

// Tell mediasoup where to send the video RTP and RTCP packets.
await videoTransport.connect({
    ip: '192.168.1.105',
    port: 8088,
    rtcpPort: 8089
});

1.3 Playing the RTP video stream with ffplay

根据上面创建consumer的参数来构造一个sdp:

v=0

o=- 0 0 IN IP4 127.0.0.1

s=mediasoup

c=IN IP4 192.168.1.105

t=0 0

a=tool:libavformat 58.42.100

a=group:BUNDLE audio video

m=video 8088 RTP/AVP 101

a=rtpmap:101 VP8/90000

a=fmtp:101 packetization-mode=1

m=audio 8098 RTP/AVP 100

a=rtpmap:100 OPUS/48000

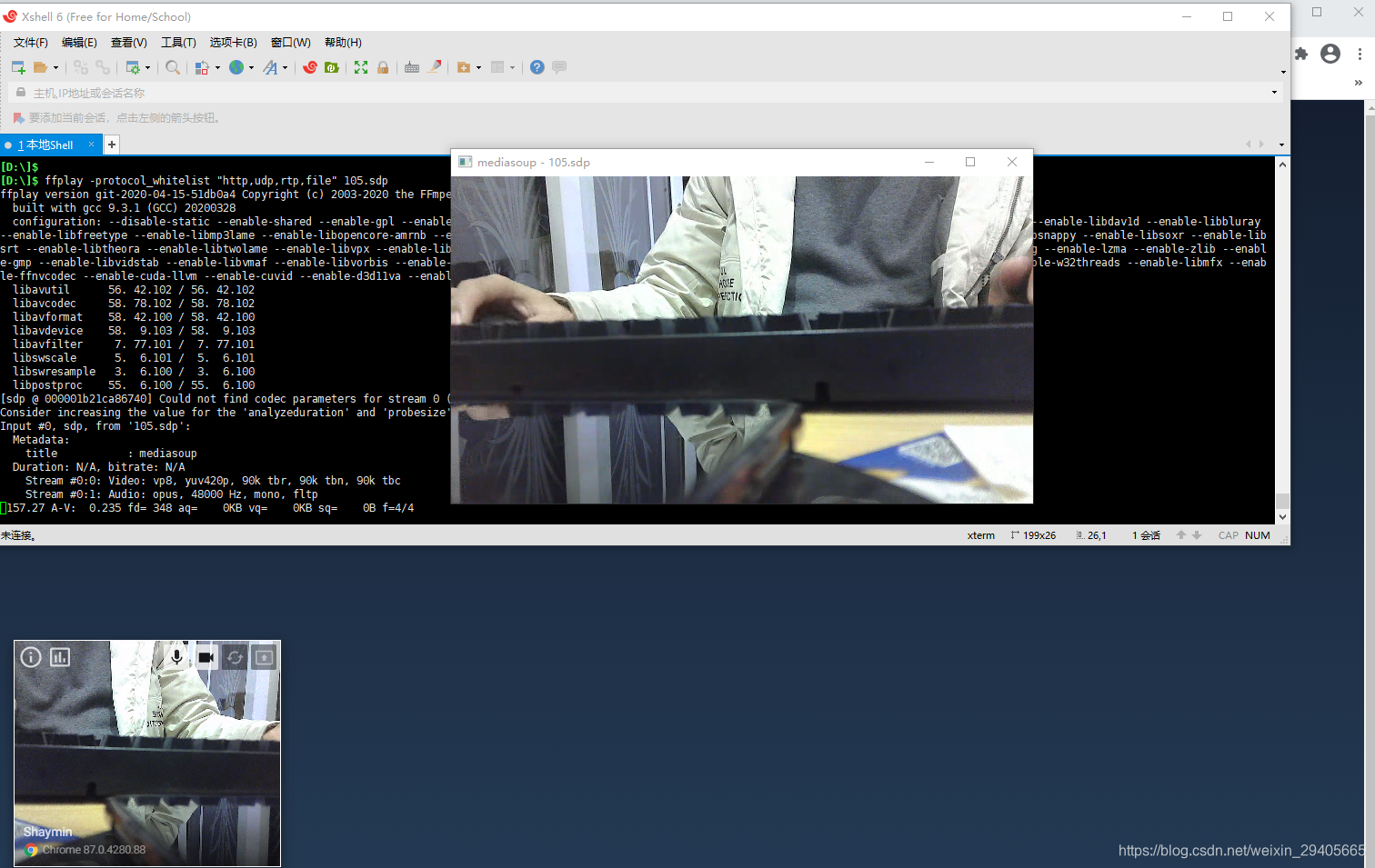

a=fmpt:100使用ffplay来播放视频: ffplay -protocol_whitelist "http,udp,rtp,file" 105.sdp。当然也可以使用ffmpeg来进行录像。
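The SDP for ffplay can also be generated from the same values that are passed to connect(), which keeps the ports and payload types from drifting apart. The following is a minimal sketch: buildSdp and its parameter names are hypothetical and not part of mediasoup. It writes the Opus rtpmap in the opus/48000/2 form that RFC 7587 requires, and it relies on the receiver assuming RTCP on the RTP port plus one, which matches the 8089 and 8099 values used above.

```javascript
// Build a minimal SDP describing one video and one audio RTP stream.
// The argument shape is illustrative only.
function buildSdp({ ip, video, audio }) {
  const lines = [
    'v=0',
    'o=- 0 0 IN IP4 127.0.0.1',
    's=mediasoup',
    `c=IN IP4 ${ip}`,
    't=0 0',
    `m=video ${video.port} RTP/AVP ${video.payloadType}`,
    `a=rtpmap:${video.payloadType} ${video.codec}/${video.clockRate}`,
    `m=audio ${audio.port} RTP/AVP ${audio.payloadType}`,
    `a=rtpmap:${audio.payloadType} ${audio.codec}/${audio.clockRate}/${audio.channels}`
  ];

  return lines.join('\n') + '\n';
}

// Values taken from the consumer setup in this article.
const sdp = buildSdp({
  ip: '192.168.1.105',
  video: { port: 8088, payloadType: 101, codec: 'VP8', clockRate: 90000 },
  audio: { port: 8098, payloadType: 100, codec: 'opus', clockRate: 48000, channels: 2 }
});

console.log(sdp);
```

The resulting string can be written to a file such as 105.sdp and passed to ffplay as shown above.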

2 Pushing audio and video streams to mediasoup with ffmpeg

2.1 Creating the producers

async createFFmpegProducer()

{

if(initFFmpegTransport)

return;

initFFmpegTransport = true;

const audioTransport = await router.createPlainTransport(

{

listenIp : '0.0.0.0',

rtcpMux : false,

comedia :true

}

);

const videoTransport = await router.createPlainTransport(

{

listenIp : '0.0.0.0',

rtcpMux : false,

comedia : true

}

);

const audioRtpPort = audioTransport.tuple.localPort;

const audioRtcpPort = audioTransport.rtcpTuple.localPort;

const videoRtpPort = videoTransport.tuple.localPort;

const videoRtcpPort = videoTransport.rtcpTuple.localPort;

logger.info("audioRtpPort = %d, audioRtcpPort = %d, videoRtpPort = %d, videoRtcpPort = %d",

audioRtpPort, audioRtcpPort, videoRtpPort, videoRtcpPort);

var pp = {};

pp['sprop-stereo'] = 1;

pp['minptime'] = 10;

pp['usedtx'] = 1;

pp['useinbandfec'] = 1;

ffmpegAudioProducer = await audioTransport.produce(

{

kind : 'audio',

rtpParameters :

{

codecs :

[

{

mimeType : 'audio/opus',

clockRate : 48000,

payloadType : 101,

channels : 2,

rtcpFeedback : [ ],

parameters : pp//{ 'sprop-stereo': 1}

}

],

encodings : [ {ssrc: 11111111}]

}

}

);

ffmpegVideoProducer = await videoTransport.produce(

{

kind : 'video',

rtpParameters :

{

codecs :

[

{

mimeType : 'video/vp8',

clockRate : 90000,

payloadType : 102,

rtcpFeedback : [],

}

],

encodings : [{ssrc: 22222222}]

}

});

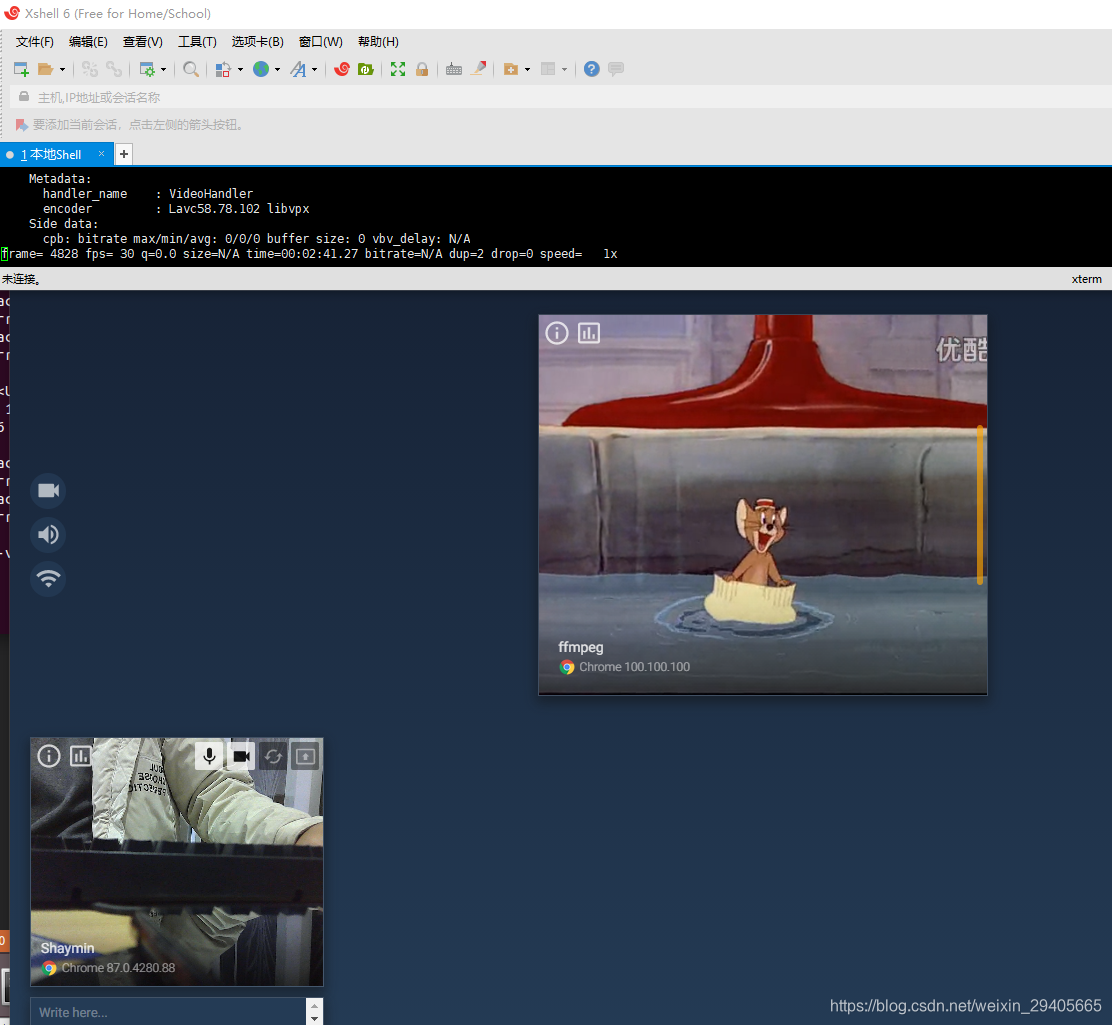

}2.2为其它peer创建consumer

// In this test the joining peer itself is reused as the ffmpeg peer.
var ffmpegPeer = peer;

ffmpegPeer.data.displayName = 'ffmpeg';
ffmpegPeer.data.device = { flag : 'chrome', name : 'Chrome', version : '100.100.100' };

peer.notify(
    'newPeer',
    {
        id          : ffmpegPeer.id,
        displayName : ffmpegPeer.data.displayName,
        device      : ffmpegPeer.data.device
    })
    .catch(() => {});

// test
this._createConsumer({
    consumerPeer : peer,
    producerPeer : ffmpegPeer,
    producer     : ffmpegVideoProducer
});

this._createConsumer({
    consumerPeer : peer,
    producerPeer : ffmpegPeer,
    producer     : ffmpegAudioProducer
});

2.3 Pushing audio and video to mediasoup with ffmpeg

Using the audioRtpPort, audioRtcpPort, videoRtpPort and videoRtcpPort values logged above, push the stream to mediasoup with ffmpeg:

ffmpeg -re -v info -stream_loop -1 -i D:/001.mp4 -map 0:a:0 -acodec libopus -ab 128k -ac 2 -ar 48000 -map 0:v:0 -pix_fmt yuv420p -c:v libvpx -b:v 1000k -f tee "[select=a:f=rtp:ssrc=11111111:payload_type=101]rtp://192.168.1.205:45107?rtcpport=41318|[select=v:f=rtp:ssrc=22222222:payload_type=102]rtp://192.168.1.205:49628?rtcpport=40025"

The ssrc and payload_type values in the command must match the ones passed to produce(). The other peers can then see the audio and video pushed by ffmpeg.
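Because the ports are allocated by mediasoup at runtime, the tee output string for ffmpeg is easier to generate than to type by hand. The sketch below assembles it from the port, SSRC and payload type values; rtpLeg and buildTeeOutput are hypothetical helper names, and the values in the usage example are the ones from the command in this article.

```javascript
// Format one leg of ffmpeg's tee muxer output for a single RTP stream.
function rtpLeg({ select, ssrc, payloadType, ip, rtpPort, rtcpPort }) {
  return `[select=${select}:f=rtp:ssrc=${ssrc}:payload_type=${payloadType}]` +
    `rtp://${ip}:${rtpPort}?rtcpport=${rtcpPort}`;
}

// Join the audio and video legs with '|', as the tee muxer expects.
function buildTeeOutput({ ip, audio, video }) {
  return [
    rtpLeg({ select: 'a', ip, ...audio }),
    rtpLeg({ select: 'v', ip, ...video })
  ].join('|');
}

const teeOutput = buildTeeOutput({
  ip: '192.168.1.205',
  audio: { ssrc: 11111111, payloadType: 101, rtpPort: 45107, rtcpPort: 41318 },
  video: { ssrc: 22222222, payloadType: 102, rtpPort: 49628, rtcpPort: 40025 }
});

console.log(teeOutput);
```

In a real setup the rtpPort and rtcpPort values would come from tuple.localPort and rtcpTuple.localPort of each transport, and the SSRC and payload type from the arguments given to produce().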

End of article.
