http://www.2cto.com/kf/201307/226576.html

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

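This is the Sigmoid function. It plays a central role in this regression procedure, and the core idea of the algorithm is closely tied to it. It maps any real input to a value strictly between 0 and 1 and equals 0.5 at z = 0. Its other properties are left for you to explore; I won't go into detail here.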
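To see these properties numerically, here is a minimal sketch of the sigmoid using NumPy. It uses the same formula as the `sigmoid` function in the code at the end of this post. It evaluates a few inputs and checks the symmetry σ(-z) = 1 − σ(z).

```python
import numpy as np

def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z)), applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))

z = np.array([-10.0, -1.0, 0.0, 1.0, 10.0])
s = sigmoid(z)
print(s)             # every value lies strictly between 0 and 1, increasing with z
print(sigmoid(0.0))  # 0.5: the threshold used for classification
# Symmetry: sigmoid(-z) == 1 - sigmoid(z), up to floating-point rounding
print(np.allclose(sigmoid(-z), 1 - sigmoid(z)))
```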

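Next, the procedure. First, multiply each feature by a regression coefficient and add up the products. Then plug this sum into the function above to obtain a value between 0 and 1. Samples whose value is greater than 0.5 are assigned to class 1, and those below 0.5 to class 0. This gives us a binary classification model that decides whether an item belongs to a given class. The question we care most about, then, is how to choose the coefficients when there are many feature dimensions.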
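The decision rule takes only a few lines. The weights and samples below are made-up values for illustration only. As in the code at the end of this post, each sample starts with a constant feature x0 = 1.0 so that w0 acts as the intercept. `classify` is a hypothetical helper name, not part of the original code.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def classify(x, weights):
    """Hypothetical helper: return 1 if sigmoid(w . x) > 0.5, else 0."""
    z = np.dot(weights, x)  # weighted sum of the features
    prob = sigmoid(z)       # squash into (0, 1)
    return 1 if prob > 0.5 else 0

# Made-up weights [w0, w1, w2] and samples [x0=1.0, x1, x2], for illustration only
weights = np.array([-1.0, 2.0, 0.5])
print(classify(np.array([1.0, 1.0, 0.0]), weights))  # z = -1 + 2 = 1 > 0     -> 1
print(classify(np.array([1.0, 0.0, 1.0]), weights))  # z = -1 + 0.5 = -0.5 < 0 -> 0
```

Because σ is increasing and σ(0) = 0.5, comparing the probability with 0.5 is equivalent to checking the sign of z. The decision boundary is therefore the hyperplane w·x = 0.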

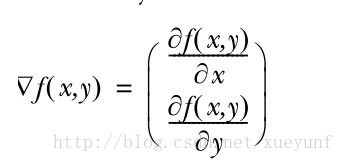

The gradient (derivative) formula used in each iteration:

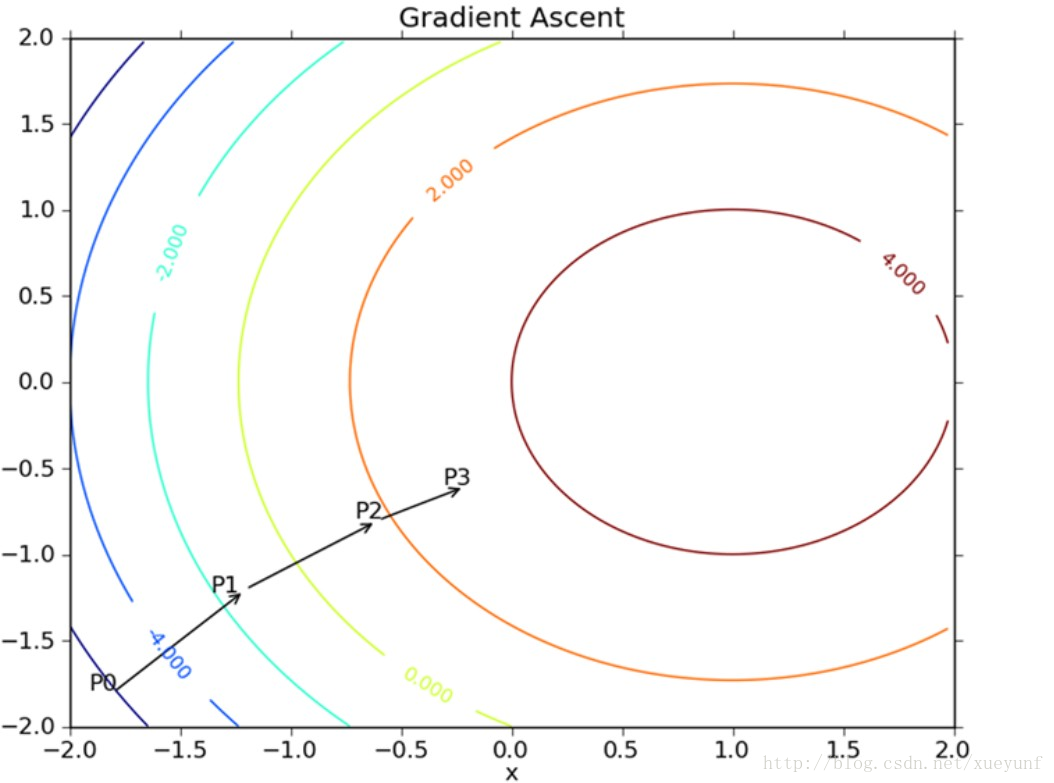
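Written out, the gradient-ascent iteration and its specific form for logistic regression are shown below. Here X is the m×n data matrix (one sample per row, with x0 = 1), y is the m×1 vector of 0/1 labels, and α is the step size. The objective f(w) is taken to be the log-likelihood of the training labels; that choice is an assumption, since the post does not name the objective. The resulting update is the one the `gradAscent` code at the end of this post performs with `alpha` and `error = labelMat - h`.

$$
\mathbf{w} \leftarrow \mathbf{w} + \alpha \, \nabla_{\mathbf{w}} f(\mathbf{w}),
\qquad
f(\mathbf{w}) = \sum_{i=1}^{m} \Big[ y_i \ln \sigma(\mathbf{w}^{T}\mathbf{x}_i) + (1 - y_i) \ln\big(1 - \sigma(\mathbf{w}^{T}\mathbf{x}_i)\big) \Big]
$$

$$
\nabla_{\mathbf{w}} f(\mathbf{w}) = X^{T}\big(\mathbf{y} - \sigma(X\mathbf{w})\big)
\quad\Longrightarrow\quad
\mathbf{w} \leftarrow \mathbf{w} + \alpha \, X^{T}\big(\mathbf{y} - \sigma(X\mathbf{w})\big)
$$

The gradient follows from σ'(z) = σ(z)(1 − σ(z)). For a single sample, the derivative of y ln σ(z) + (1 − y) ln(1 − σ(z)) with respect to w is (y − σ(z)) x. Summing over the samples gives X^T(y − σ(Xw)).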

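We iterate along this gradient direction to search for the optimal weights. When the iteration ends, the weights it has reached are the regression coefficients we want. For the two-dimensional case, refer to the following schematic illustration: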

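Of course, the step size must not be set too large, or the update may jump past the optimum. O(∩_∩)O~ Keep in mind that this is only a toy illustration of how the underlying mathematical process works.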
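A one-dimensional toy problem shows the effect of the step size. The sketch below assumes the made-up objective f(w) = -(w - 3)^2, whose maximum is at w = 3 and whose derivative is f'(w) = -2(w - 3). `gradient_ascent_1d` is a hypothetical helper, not part of the original code.

```python
def gradient_ascent_1d(alpha, w0=0.0, steps=50):
    """Maximize the toy objective f(w) = -(w - 3)**2 by gradient ascent."""
    w = w0
    for _ in range(steps):
        grad = -2.0 * (w - 3.0)  # derivative f'(w)
        w = w + alpha * grad     # step uphill along the gradient
    return w

print(gradient_ascent_1d(alpha=0.1))  # small step: approaches 3 from one side
print(gradient_ascent_1d(alpha=0.9))  # large step: lands on alternating sides of 3, still converges
print(gradient_ascent_1d(alpha=1.1))  # too large: overshoots further each step and diverges
```

For this particular function, each step multiplies the distance to the optimum by (1 − 2α). With α = 0.9 the iterate keeps jumping over w = 3, which is the overshooting described above. With any α > 1, each jump is larger than the last and the iteration diverges.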

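Finally, here is the Python code. It reads the training data from `testSet.txt`, where each line holds two feature values followed by an integer class label: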


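```python
import numpy as np


def loadDataSet(path='testSet.txt'):
    """Load the training data.

    Each non-empty line of the file holds two feature values and a class label.
    A constant 1.0 is prepended to every sample so that weight w0 acts as the
    intercept term.
    """
    dataMat = []
    labelMat = []
    with open(path) as fr:  # the with-block closes the file when done
        for line in fr:
            lineArr = line.strip().split()
            if not lineArr:  # skip blank lines
                continue
            dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])
            labelMat.append(int(lineArr[2]))
    return dataMat, labelMat


def sigmoid(inX):
    """Logistic function 1 / (1 + e^(-x)), applied element-wise."""
    return 1.0 / (1 + np.exp(-inX))


def gradAscent(dataMatIn, classLabels, alpha=0.001, maxCycles=500):
    """Fit logistic-regression weights by batch gradient ascent.

    Returns an (n, 1) column vector of weights, where n is the number of
    features including the constant 1.0 column.
    """
    dataMatrix = np.asarray(dataMatIn, dtype=float)                 # shape (m, n)
    labelMat = np.asarray(classLabels, dtype=float).reshape(-1, 1)  # shape (m, 1)
    n = dataMatrix.shape[1]
    weights = np.ones((n, 1))  # start with every weight equal to 1
    for _ in range(maxCycles):
        h = sigmoid(dataMatrix @ weights)  # predicted probabilities, shape (m, 1)
        error = labelMat - h               # difference between labels and predictions
        # Step uphill along the gradient X^T (y - h), scaled by the step size alpha
        weights = weights + alpha * dataMatrix.T @ error
    return weights


if __name__ == '__main__':
    dataArr, labelMat = loadDataSet()
    print(gradAscent(dataArr, labelMat))
```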
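The contents of `testSet.txt` are not shown here. The self-contained sketch below therefore runs the same batch update on synthetic data instead. The two Gaussian clusters, the random seed, and the helper names `grad_ascent` and `predict` are assumptions for illustration. The update rule and defaults mirror `gradAscent` above: alpha = 0.001, 500 cycles, and weights initialized to ones.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def grad_ascent(X, y, alpha=0.001, max_cycles=500):
    """Batch gradient ascent for logistic regression (same update as gradAscent)."""
    y = y.reshape(-1, 1)
    weights = np.ones((X.shape[1], 1))
    for _ in range(max_cycles):
        error = y - sigmoid(X @ weights)  # labels minus predicted probabilities
        weights = weights + alpha * X.T @ error
    return weights

def predict(X, weights):
    """Hypothetical helper: class 1 where sigmoid(Xw) > 0.5, else 0."""
    return (sigmoid(X @ weights) > 0.5).astype(int).ravel()

# Synthetic data (assumption): two well-separated 2-D Gaussian clusters
rng = np.random.default_rng(0)
class0 = rng.normal(loc=[-2.0, -2.0], scale=1.0, size=(50, 2))
class1 = rng.normal(loc=[2.0, 2.0], scale=1.0, size=(50, 2))
features = np.vstack([class0, class1])
X = np.hstack([np.ones((100, 1)), features])  # prepend x0 = 1.0 for the intercept
y = np.array([0] * 50 + [1] * 50)

weights = grad_ascent(X, y)
accuracy = np.mean(predict(X, weights) == y)
print(weights.ravel())
print(f"training accuracy: {accuracy:.2f}")
```

The sketch uses plain ndarrays with the `@` operator rather than `np.matrix`, which the NumPy documentation no longer recommends; the arithmetic is the same.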
