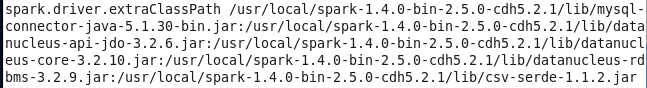

The MySQL data source connection was clearly configured in the `spark-defaults.conf` file.

I then started spark-shell and ran the following test code:

    import org.apache.spark.{SparkContext, SparkConf}
    import org.apache.spark.sql.{SaveMode, DataFrame}
    import org.apache.spark.sql.hive.HiveContext

    // JDBC URL of the local MySQL database yangsy; user and password are passed as URL parameters
    val mySQLUrl = "jdbc:mysql://localhost:3306/yangsy?user=root&password=yangsiyi"

    // Register the MySQL table person as the temporary table PEOPLE through Spark's JDBC data source
    val people_DDL = s"""
    CREATE TEMPORARY TABLE PEOPLE
    USING org.apache.spark.sql.jdbc
    OPTIONS (
    url '${mySQLUrl}',
    dbtable 'person'
    )""".stripMargin

    sqlContext.sql(people_DDL)

    // Query the temporary table and cache the result
    val person = sql("SELECT * FROM PEOPLE").cache()
    val name = "name"
    val targets = person.filter(&
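
The DDL in the test code hands the connection URL and the source table name to Spark's JDBC data source through its OPTIONS clause. As a minimal sketch, the hypothetical helpers below (`JdbcDdl`, `mysqlUrl` and `tempTableDdl` are illustrative names, not Spark APIs) assemble the same URL and statement in plain Scala with no Spark dependency. They also illustrate a detail of the original snippet: `stripMargin` only removes leading whitespace up to a `|` at the start of each line, so calling it on a string without `|` markers leaves the text unchanged.

```scala
// Hypothetical helpers, not part of Spark: they only build strings in the
// same shape as mySQLUrl and people_DDL from the spark-shell session.
object JdbcDdl {

  // MySQL JDBC URL with credentials passed as query parameters,
  // mirroring the format of mySQLUrl.
  def mysqlUrl(host: String, port: Int, db: String, user: String, password: String): String =
    s"jdbc:mysql://$host:$port/$db?user=$user&password=$password"

  // CREATE TEMPORARY TABLE ... USING org.apache.spark.sql.jdbc statement.
  // Every continuation line starts with '|', so stripMargin removes the
  // source indentation; without '|' it would leave the string as-is.
  def tempTableDdl(table: String, url: String, dbtable: String): String =
    s"""CREATE TEMPORARY TABLE $table
       |USING org.apache.spark.sql.jdbc
       |OPTIONS (
       |  url '$url',
       |  dbtable '$dbtable'
       |)""".stripMargin

  def main(args: Array[String]): Unit = {
    val url = mysqlUrl("localhost", 3306, "yangsy", "root", "yangsiyi")
    // Prints the DDL string; in spark-shell it could be passed to sqlContext.sql(...)
    println(tempTableDdl("PEOPLE", url, "person"))
  }
}
```

The generated string is an ordinary SQL statement, so it can be passed to `sqlContext.sql(...)` in the same way as `people_DDL`.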
