Define Network Architecture

Create a classification LSTM network that classifies sequences of 28-by-28 grayscale images into 10 classes.

Define the following network architecture:

- A sequence input layer with an input size of [28 28 1].
- A convolution, batch normalization, and ReLU layer block with 20 5-by-5 filters.
- An LSTM layer with 200 hidden units that outputs the last time step only.
- A fully connected layer of size 10 (the number of classes) followed by a softmax layer and a classification layer.

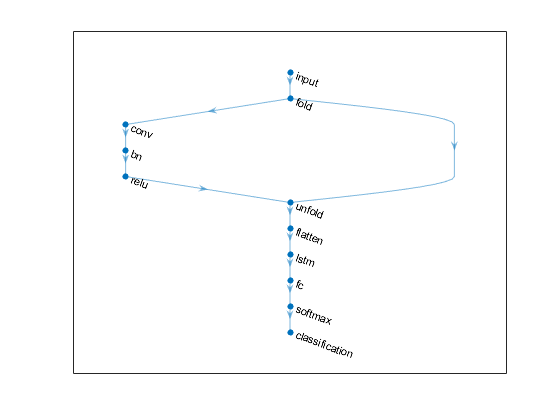

To perform the convolutional operations on each time step independently, include a sequence folding layer before the convolutional layers. LSTM layers expect vector sequence input. To restore the sequence structure and reshape the output of the convolutional layers to sequences of feature vectors, insert a sequence unfolding layer and a flatten layer between the convolutional layers and the LSTM layer.
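To see why the flatten layer is needed, it can help to trace the sizes by hand. The following is a minimal sketch, not part of the original example, and it assumes the convolution uses stride 1 and no padding (the `convolution2dLayer` defaults, which apply here because neither option is set). The variable names `convSpatial`, `convOutputSize`, and `numFeatures` are introduced only for illustration.

```matlab
% Hand-computed sizes, assuming stride 1 and no padding
% (the convolution2dLayer defaults).
inputSize = [28 28 1];
filterSize = 5;
numFilters = 20;

% Spatial size after an unpadded convolution: input - filter + 1.
convSpatial = inputSize(1:2) - filterSize + 1;   % [24 24]
convOutputSize = [convSpatial numFilters];       % [24 24 20]

% The flatten layer collapses each time step into a feature vector
% of this length, which is what the LSTM layer receives.
numFeatures = prod(convOutputSize);              % 11520
```

Under these assumptions, each 28-by-28 image in a sequence becomes a 24-by-24-by-20 activation, and the LSTM layer sees a sequence of 11520-element vectors.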

```matlab
inputSize = [28 28 1];
filterSize = 5;
numFilters = 20;
numHiddenUnits = 200;
numClasses = 10;

layers = [ ...
    sequenceInputLayer(inputSize,'Name','input')
    sequenceFoldingLayer('Name','fold')
    convolution2dLayer(filterSize,numFilters,'Name','conv')
    batchNormalizationLayer('Name','bn')
    reluLayer('Name','relu')
    sequenceUnfoldingLayer('Name','unfold')
    flattenLayer('Name','flatten')
    lstmLayer(numHiddenUnits,'OutputMode','last','Name','lstm')
    fullyConnectedLayer(numClasses,'Name','fc')
    softmaxLayer('Name','softmax')
    classificationLayer('Name','classification')];
```

Convert the layers to a layer graph and connect the miniBatchSize output of the sequence folding layer to the corresponding input of the sequence unfolding layer.

```matlab
lgraph = layerGraph(layers);
lgraph = connectLayers(lgraph,'fold/miniBatchSize','unfold/miniBatchSize');
```
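One way to confirm that the extra connection was added is to inspect the `Connections` property of the layer graph. This is a small sketch that assumes `lgraph` from the previous step is still in the workspace; the variable `isConnected` is a hypothetical name used only for this check.

```matlab
% Assumes lgraph was created and connected as in the previous step.
% List every source/destination pair in the layer graph.
disp(lgraph.Connections)

% Check that the miniBatchSize output of the folding layer feeds
% the miniBatchSize input of the unfolding layer.
isConnected = any( ...
    strcmp(lgraph.Connections.Source,'fold/miniBatchSize') & ...
    strcmp(lgraph.Connections.Destination,'unfold/miniBatchSize'));
```

If `isConnected` is false, the unfolding layer has no mini-batch size to restore the sequence structure with, so the `connectLayers` call is worth rechecking.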

View the final network architecture using the plot function.

```matlab
figure
plot(lgraph)
```
