Configuration file

cd /usr/app/flume1.6/conf

vi flume-dirTohdfs.properties

#agent1 name
agent1.sources=source1
agent1.sinks=sink1
agent1.channels=channel1

#Spooling Directory
#set source1
agent1.sources.source1.type=spooldir
agent1.sources.source1.spoolDir=/usr/app/flumelog/dir/logdfs
agent1.sources.source1.channels=channel1
agent1.sources.source1.fileHeader = false
agent1.sources.source1.interceptors = i1
agent1.sources.source1.interceptors.i1.type = timestamp

#set sink1
agent1.sinks.sink1.type=hdfs
agent1.sinks.sink1.hdfs.path=/user/yuhui/flume
agent1.sinks.sink1.hdfs.fileType=DataStream
agent1.sinks.sink1.hdfs.writeFormat=TEXT
agent1.sinks.sink1.hdfs.rollInterval=1
agent1.sinks.sink1.channel=channel1
agent1.sinks.sink1.hdfs.filePrefix=%Y-%m-%d

#set channel1
agent1.channels.channel1.type=file
agent1.channels.channel1.checkpointDir=/usr/app/flumelog/dir/logdfstmp/point
agent1.channels.channel1.dataDirs=/usr/app/flumelog/dir/logdfstmp
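The `timestamp` interceptor adds a `timestamp` header (milliseconds since the epoch) to every event, and the HDFS sink uses that header to expand escape sequences such as `%Y-%m-%d` in `hdfs.filePrefix`; without the header the escapes cannot be resolved, which is why the configuration declares the interceptor. The following is a simplified, hypothetical model of that substitution, not Flume's code: it only handles `%Y`, `%m`, `%d`, `%H`, `%M` and `%S`, and it uses UTC for reproducibility, whereas the real sink uses the agent's local time zone unless `hdfs.timeZone` is set.

```python
import re
from datetime import datetime, timezone

# Hypothetical subset of the escapes the HDFS sink understands.
SUPPORTED = set("YmdHMS")


def resolve_prefix(pattern: str, headers: dict) -> str:
    """Expand %Y, %m, %d, %H, %M, %S in pattern from the event's timestamp header."""
    if "timestamp" not in headers:
        # This is the situation the timestamp interceptor prevents.
        raise ValueError("event has no 'timestamp' header")
    # The header holds milliseconds; this sketch interprets them in UTC.
    ts = datetime.fromtimestamp(int(headers["timestamp"]) / 1000, tz=timezone.utc)

    def expand(match):
        code = match.group(1)
        if code not in SUPPORTED:
            raise ValueError(f"unsupported escape %{code}")
        return ts.strftime("%" + code)

    return re.sub(r"%(\w)", expand, pattern)
```

Under this sketch's UTC assumption, an event stamped `1467331200000` would get the prefix `2016-07-01`.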

Create the local (Linux) directories

[root@hadoop11 app]# mkdir -p /usr/app/flumelog/dir

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfs

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfstmp

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfstmp/point
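The directory setup above can also be done idempotently, like `mkdir -p`, so it does not fail when a parent is missing or the script is run twice. This is a hypothetical helper; the default root `/usr/app` is taken from the commands above and can be overridden, for example to test in a scratch directory.

```python
import os

# Directory layout used by the configuration (relative to the root).
LAYOUT = [
    "flumelog/dir/logdfs",           # spoolDir watched by source1
    "flumelog/dir/logdfstmp",        # dataDirs of channel1 (file channel)
    "flumelog/dir/logdfstmp/point",  # checkpointDir of channel1
]


def create_layout(root: str = "/usr/app") -> list:
    """Create every directory in LAYOUT under root; already existing ones are left alone."""
    created = []
    for rel in LAYOUT:
        path = os.path.join(root, rel)
        os.makedirs(path, exist_ok=True)  # like mkdir -p: parents too, no error if present
        created.append(path)
    return created
```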

Create the HDFS directory

[root@hadoop11 app]# hadoop fs -mkdir -p /user/yuhui/flume

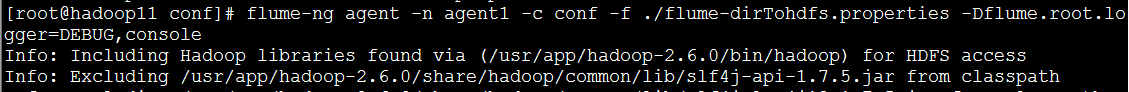

Start the agent with this configuration

flume-ng agent -n agent1 -c conf -f ./flume-dirTohdfs.properties -Dflume.root.logger=DEBUG,console >./flume1.log 2>&1 &
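The name passed with `-n agent1` must match the prefix used in the properties file, and each source and sink must point to a declared channel; if the names do not line up, the agent will not find the components it is supposed to run. The hypothetical checker below parses the simple `key=value` lines used in this configuration (it skips `#` comments and does not implement full Java properties syntax such as line continuations) and reports wiring problems before the agent is started.

```python
def parse_properties(text: str) -> dict:
    """Parse simple key=value lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        props[key.strip()] = value.strip()
    return props


def check_agent(props: dict, agent: str) -> list:
    """Return wiring problems for the agent named with `flume-ng -n` (empty list if none)."""
    problems = []
    names = {kind: props.get(f"{agent}.{kind}", "").split()
             for kind in ("sources", "sinks", "channels")}
    for kind, declared in names.items():
        if not declared:
            problems.append(f"{agent}.{kind} is not declared")
    channels = set(names["channels"])
    for src in names["sources"]:
        # A source may feed several channels, separated by spaces.
        used = props.get(f"{agent}.sources.{src}.channels", "").split()
        if not used:
            problems.append(f"source {src} has no channels")
        for ch in used:
            if ch not in channels:
                problems.append(f"source {src} uses undeclared channel {ch!r}")
    for sink in names["sinks"]:
        # A sink drains exactly one channel.
        ch = props.get(f"{agent}.sinks.{sink}.channel", "")
        if not ch:
            problems.append(f"sink {sink} has no channel")
        elif ch not in channels:
            problems.append(f"sink {sink} uses undeclared channel {ch!r}")
    return problems
```

Run against `flume-dirTohdfs.properties` with the agent name `agent1`, the checker should return an empty list; with a different name it reports that nothing is declared.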

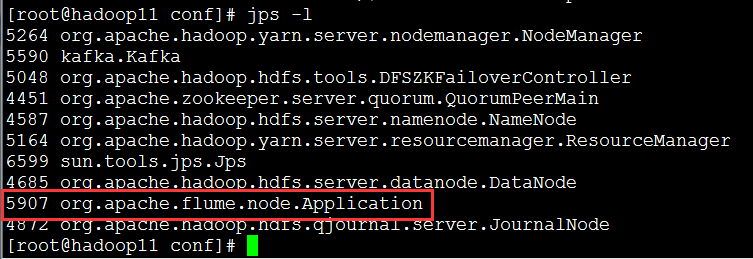

Check that the agent process is running:

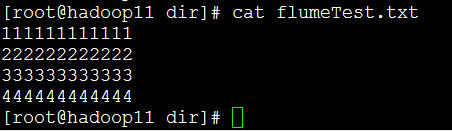

Create test data

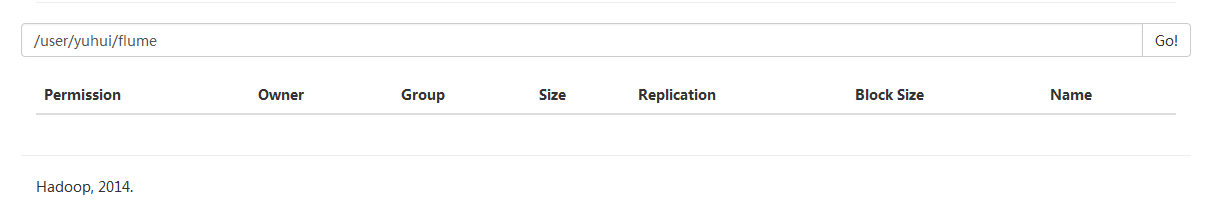

Check the HDFS test path: it does not contain any data yet

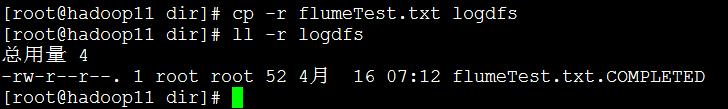

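Put the test data into the monitored directory. Once Flume has finished reading a file, it renames the file automatically (by default it appends the suffix `.COMPLETED`).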
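The spooling directory source expects a file to be complete and unchanged once it appears in `spoolDir`, and file names must not be reused; otherwise Flume logs an error and stops processing. A common way to satisfy this is to write the file somewhere else on the same file system and then rename it into the spool directory, because a rename within one file system is atomic on POSIX systems. The sketch below does that and then polls for the renamed file using the default `.COMPLETED` suffix (`fileSuffix`); the function names and the staging directory are hypothetical.

```python
import os
import time


def drop_into_spool(lines, name, staging_dir, spool_dir):
    """Write lines to staging_dir, then move the finished file into spool_dir in one rename."""
    target = os.path.join(spool_dir, name)
    if os.path.exists(target) or os.path.exists(target + ".COMPLETED"):
        # Flume rejects reused file names, so refuse instead of overwriting.
        raise FileExistsError(target)
    tmp = os.path.join(staging_dir, name)
    with open(tmp, "w", encoding="utf-8") as f:
        f.writelines(line + "\n" for line in lines)
    os.rename(tmp, target)  # atomic only if both directories are on the same file system
    return target


def wait_completed(path, timeout=30.0, poll=0.5, suffix=".COMPLETED"):
    """Return True once path has been renamed to path + suffix, False if timeout expires first."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if os.path.exists(path + suffix):
            return True
        time.sleep(poll)
    return os.path.exists(path + suffix)
```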

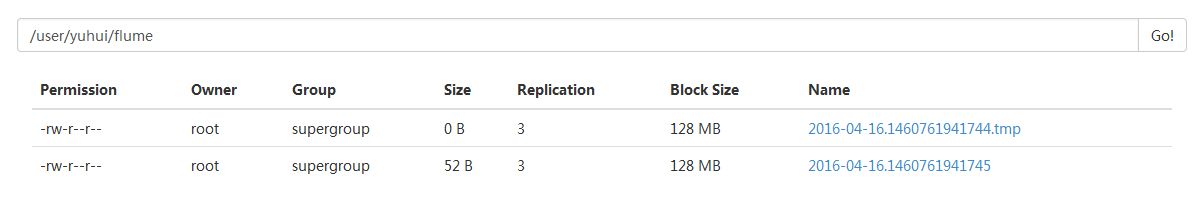

Check the HDFS test path again: it now contains the data written by Flume.
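While the HDFS sink is still writing a file, the file name carries the in-use suffix (`hdfs.inUseSuffix`, `.tmp` by default), which is removed when the file is rolled and closed; with `hdfs.rollInterval=1` the sink rolls on a one-second timer. The hypothetical helper below filters the listing printed by `hadoop fs -ls /user/yuhui/flume` down to finished files for one date prefix. It assumes the usual eight-column listing with the path in the last column, and paths without spaces.

```python
def finished_files(ls_output: str, date_prefix: str, in_use_suffix: str = ".tmp") -> list:
    """Return listed HDFS paths whose file name starts with date_prefix and is not in use."""
    paths = []
    for line in ls_output.splitlines():
        fields = line.split()
        if len(fields) < 8:
            continue  # skips the "Found N items" header and blank or malformed lines
        path = fields[-1]
        name = path.rsplit("/", 1)[-1]
        if name.startswith(date_prefix) and not name.endswith(in_use_suffix):
            paths.append(path)
    return paths
```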

Notes

Log monitoring directory (`spoolDir`): any log file placed here is picked up by Flume.

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfs

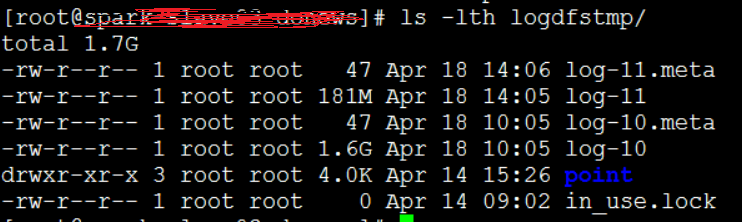

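File channel data directory (`dataDirs`): after Flume has read a log from the spool directory, the channel persists its events here. (The author saw at most two `log-<number>` files at a time: when the current file fills up, the channel starts a new one and deletes an older one once it is no longer needed. The author gives the threshold as about 1.6 GB; the documented default of `maxFileSize` is 2146435071 bytes, about 2 GB.)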
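To see how many data files the file channel is keeping, you can list `dataDirs`. The file channel stores events in files named `log-<N>`, each with a companion `log-<N>.meta` file; the hypothetical helper below lists only the numbered data files with their sizes, oldest first. The regular expression also keeps the nested `point` checkpoint directory and other bookkeeping files out of the result.

```python
import os
import re

# Matches data files such as log-1, log-2; excludes log-1.meta and other files.
_DATA_FILE = re.compile(r"^log-(\d+)$")


def channel_data_files(data_dir: str) -> list:
    """Return (name, size_in_bytes) for each log-N file in data_dir, sorted by N."""
    found = []
    for name in os.listdir(data_dir):
        m = _DATA_FILE.match(name)
        path = os.path.join(data_dir, name)
        if m and os.path.isfile(path):
            found.append((int(m.group(1)), name, os.path.getsize(path)))
    found.sort()  # numeric order, so log-2 comes before log-10
    return [(name, size) for _, name, size in found]
```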

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfstmp

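File channel checkpoint directory (`checkpointDir`): where the channel stores its checkpoint, that is, its record of which events in the data files are still waiting to be delivered.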

[root@hadoop11 app]# mkdir /usr/app/flumelog/dir/logdfstmp/point
