Environment: CentOS + Hadoop 2.5.2 + Scala 2.10.5 + Spark 1.3.1

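1. Download a pre-built Spark package from http://spark.apache.org/downloads.html (this guide uses spark-1.3.1-bin-hadoop2.4.tar.gz, the build for Hadoop 2.4, together with Hadoop 2.5.2).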

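2. Prepare Scala

Download scala-2.10.5.rpm from http://www.scala-lang.org/. Do not use Scala 2.11: the pre-built Spark 1.3.1 packages are compiled against Scala 2.10, so using 2.11 would require recompiling Spark.

Install Scala:

rpm -ivh scala-2.10.5.rpm

3. Unpack Spark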

tar -zxvf spark-1.3.1-bin-hadoop2.4.tar.gz -C /usr/local

4. Configure environment variables
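The profile lines in this step are meant to be added once; re-running the setup by hand tends to leave duplicate exports in /etc/profile. A minimal sketch of a guard, assuming bash; `append_once` is a hypothetical helper name, not part of CentOS or Spark:

```bash
# Append a line to a file only if that exact line is not already present.
# append_once is a hypothetical helper introduced for this guide.
append_once() {
  local file="$1" line="$2"
  # grep -qxF: quiet, whole-line match, pattern treated as a fixed string.
  # If the file does not exist yet, grep fails and the line is appended.
  if ! grep -qxF -- "$line" "$file" 2>/dev/null; then
    printf '%s\n' "$line" >> "$file"
  fi
}

# Example (as root). Single quotes keep $PATH and $SPARK_HOME unexpanded,
# so they are written to the file literally, as in the manual step:
# append_once /etc/profile 'export SPARK_HOME=/usr/local/spark-1.3.1-bin-hadoop2.4'
# append_once /etc/profile 'export PATH=$PATH:$SPARK_HOME/bin'
# source /etc/profile
```

Matching whole lines as fixed strings avoids treating characters such as `$` or `.` in the export lines as regular-expression syntax.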

Append the following to the end of /etc/profile:

export SPARK_HOME=/usr/local/spark-1.3.1-bin-hadoop2.4

export PATH=$PATH:$SPARK_HOME/bin

# Apply the changes to the current shell

source /etc/profile

5. Configure Spark

vi /usr/local/spark-1.3.1-bin-hadoop2.4/conf/spark-env.sh

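Append the following at the end (if spark-env.sh does not exist yet, create it first by copying spark-env.sh.template from the same directory):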

export JAVA_HOME=/usr/java/jdk1.7.0_76

export SPARK_MASTER_IP=192.168.1.21

export SPARK_WORKER_MEMORY=2g

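export HADOOP_CONF_DIR=/usr/local/hadoop-2.5.2/etc/hadoop

6. Configure the slave nodes

vi /usr/local/spark-1.3.1-bin-hadoop2.4/conf/slaves

List one worker hostname per line. Because master is listed, the master node also runs a Worker: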

master

slaver1

7. Copy the configured Spark directory to the slave nodes
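For a single worker, the scp command in this step is enough. With more workers, the copy can be driven by the slaves file from step 6. A sketch, assuming bash, root SSH access to every worker, and the same install path on all nodes; `sync_spark_to_workers` and the `DRY_RUN` switch are hypothetical names introduced here:

```bash
# Copy the Spark directory to every host listed in conf/slaves.
# sync_spark_to_workers and DRY_RUN are hypothetical, not Spark features.
#   $1 = Spark directory, $2 = slaves file, $3 = host to skip (the master)
sync_spark_to_workers() {
  local spark_home="$1" slaves_file="$2" skip_host="$3" host dest
  dest="$(dirname "$spark_home")/"   # e.g. /usr/local/
  # awk strips "#" comments and prints the first field of non-empty lines;
  # plain word splitting is fine because hostnames contain no spaces.
  for host in $(awk '{ sub(/#.*/, ""); if (NF) print $1 }' "$slaves_file"); do
    [ "$host" = "$skip_host" ] && continue   # master already has the files
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo scp -r "$spark_home" "root@$host:$dest"
    else
      # </dev/null keeps scp/ssh from reading the caller's stdin
      scp -r "$spark_home" "root@$host:$dest" < /dev/null || return 1
    fi
  done
}
```

Usage on the master, previewing first: `DRY_RUN=1 sync_spark_to_workers /usr/local/spark-1.3.1-bin-hadoop2.4 /usr/local/spark-1.3.1-bin-hadoop2.4/conf/slaves master`, then run it again without `DRY_RUN=1`.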

scp -r /usr/local/spark-1.3.1-bin-hadoop2.4/ root@slaver1:/usr/local/

8. Start the cluster
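A mistyped JAVA_HOME or HADOOP_CONF_DIR in spark-env.sh tends to show up only when the daemons fail to start. A minimal pre-flight check, assuming bash; `check_spark_env` is a hypothetical helper, and treating a missing core-site.xml as a bad HADOOP_CONF_DIR is an assumption about what a usable Hadoop config directory contains:

```bash
# Pre-flight check of the values set in spark-env.sh.
# check_spark_env is a hypothetical helper, not a Spark script.
check_spark_env() {
  (
    # Subshell: the sourced exports do not leak into the caller.
    # Unset first so only the values from the file are checked.
    unset JAVA_HOME HADOOP_CONF_DIR SPARK_MASTER_IP
    . "$1" || exit 1
    status=0
    if [ ! -x "$JAVA_HOME/bin/java" ]; then
      echo "JAVA_HOME=$JAVA_HOME: bin/java not found" >&2; status=1
    fi
    if [ ! -f "$HADOOP_CONF_DIR/core-site.xml" ]; then
      echo "HADOOP_CONF_DIR=$HADOOP_CONF_DIR: core-site.xml not found" >&2; status=1
    fi
    if [ -z "$SPARK_MASTER_IP" ]; then
      echo "SPARK_MASTER_IP is not set" >&2; status=1
    fi
    exit "$status"
  )
}

# Example, run on each node before start-all.sh:
# check_spark_env /usr/local/spark-1.3.1-bin-hadoop2.4/conf/spark-env.sh
```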

cd /usr/local/spark-1.3.1-bin-hadoop2.4/sbin

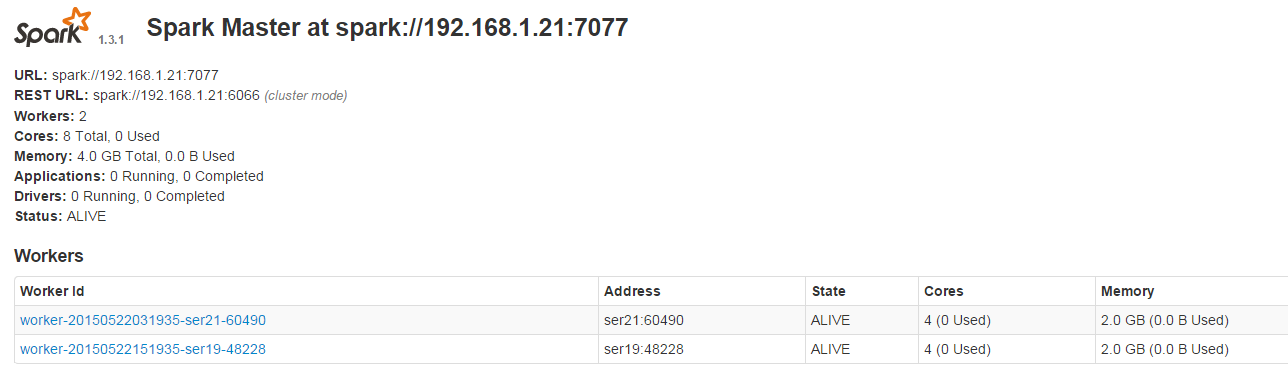

./start-all.sh

9. Check whether the cluster started successfully

jps

# On master, check that both Master and Worker are listed

# On slaver1, check that Worker is listed
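Reading the jps output by eye works for two nodes. A scripted version of the same check could look like this, assuming bash; `check_daemons` is a hypothetical name, and the sketch relies on jps printing one `<pid> <main class>` pair per line:

```bash
# Read jps output on stdin and check that every expected daemon is running.
# check_daemons is a hypothetical helper; missing names go to stderr.
check_daemons() {
  local running missing=0 name
  running=$(awk '{ print $2 }')   # keep only the class-name column
  for name in "$@"; do
    if ! printf '%s\n' "$running" | grep -qx -- "$name"; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# On master:  jps | check_daemons Master Worker
# On slaver1: jps | check_daemons Worker
```

The exact-line match (`grep -x`) keeps a name such as `Worker` from matching some other class that merely contains it.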

Reference: http://stark-summer.iteye.com/blog/2173219
