A quick roundup, so I can re-download these later when I can't find them.

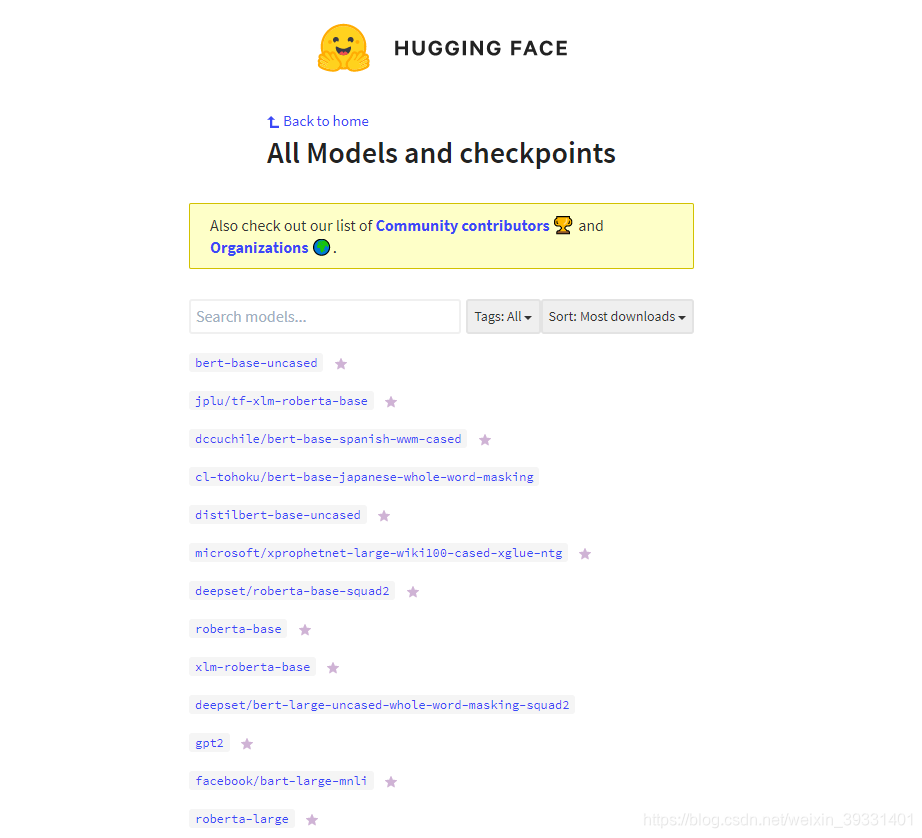

Official Hugging Face model collection site:

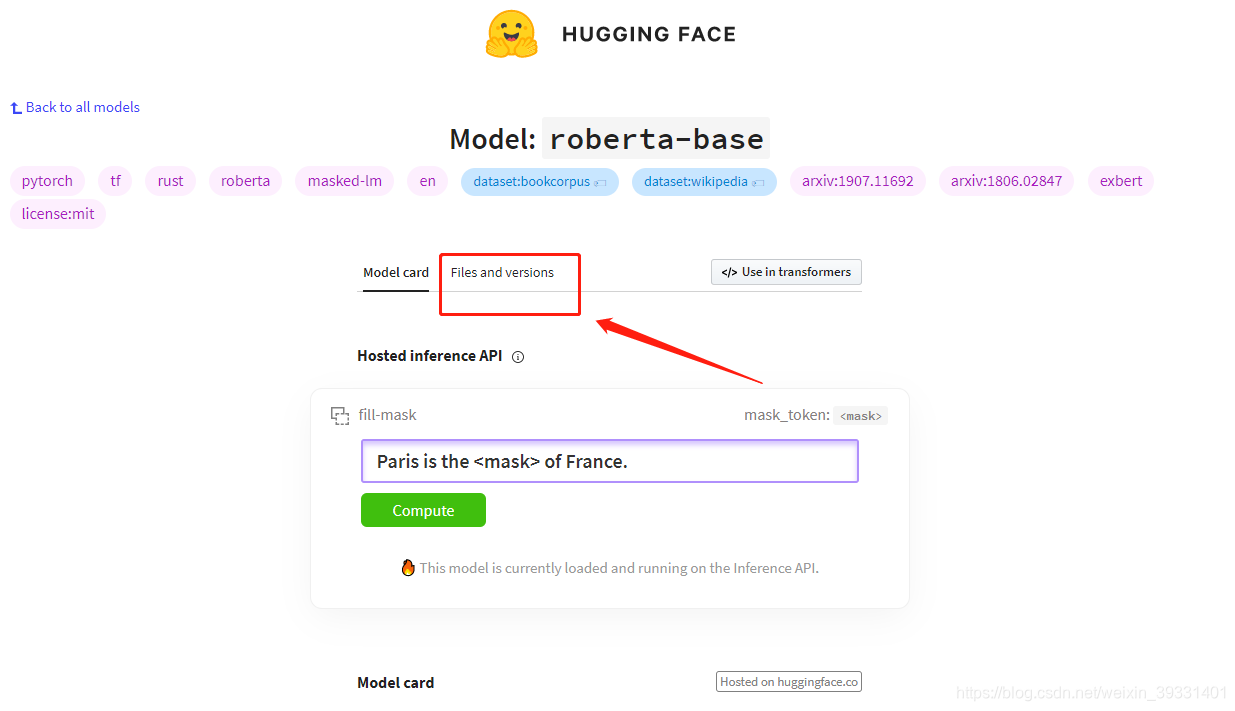

Find the model you need, open its page, and select the "Files and versions" tab.

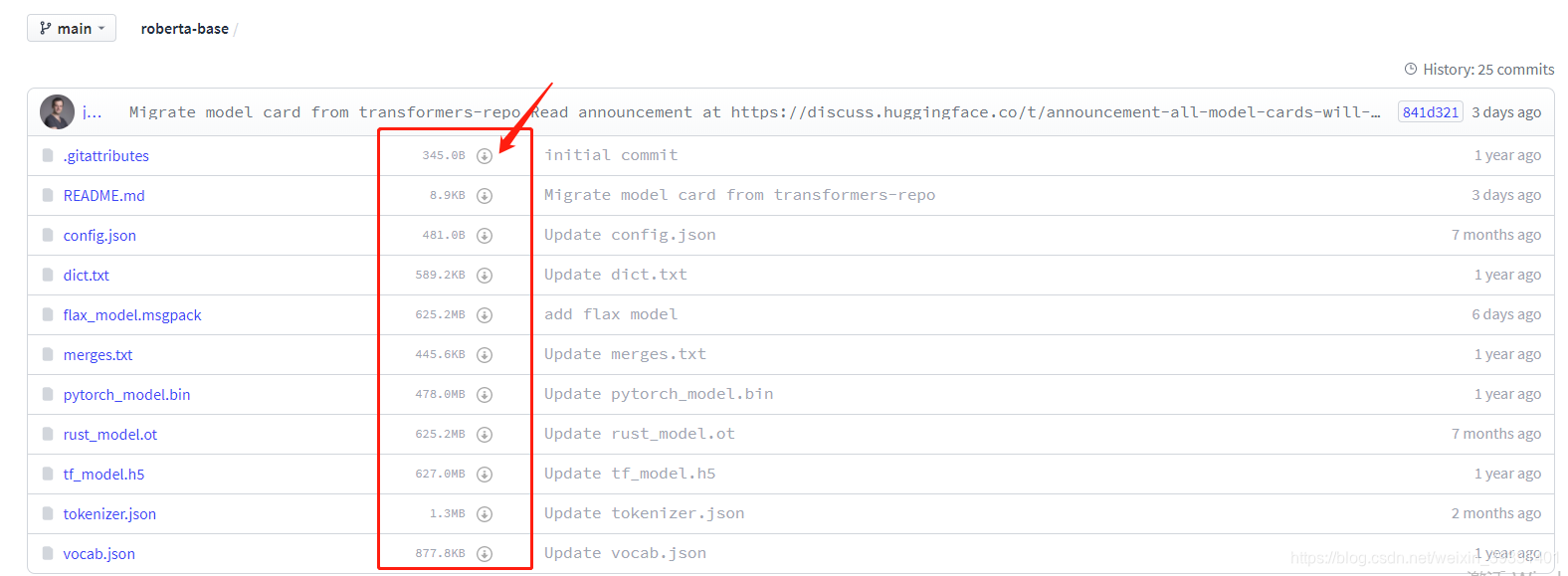

Click the small download arrow next to a file to download it.
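The download arrow is simply a link to a file URL. Below is a minimal sketch, assuming the Hub's `https://huggingface.co/<repo_id>/resolve/<revision>/<filename>` URL pattern and a `main` branch. It builds such a link, downloads it with the standard library, and guesses a Hub repo id from the older S3-style config links in the list that follows. The helper names (`resolve_url`, `legacy_config_to_repo_id`, `download`) are hypothetical. The repo-id guess is also an assumption, because some models may have been renamed or moved on the Hub since those links were published.

```python
from __future__ import annotations

import urllib.request
from pathlib import Path

HUB = "https://huggingface.co"
# Prefix shared by the old S3 config links listed in this post.
LEGACY_PREFIX = "https://s3.amazonaws.com/models.huggingface.co/bert/"


def resolve_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Direct-download URL for one file in a Hub repo (what the arrow links to)."""
    return f"{HUB}/{repo_id}/resolve/{revision}/{filename}"


def legacy_config_to_repo_id(url: str) -> str:
    """Guess a Hub repo id from an old S3 config link (assumption, may be outdated).

    Handles the three layouts seen in the list:
      .../bert/<name>-config.json
      .../bert/<org>/<name>-config.json
      .../bert/<org>/<name>/config.json
    """
    if not url.startswith(LEGACY_PREFIX):
        raise ValueError(f"not a legacy S3 BERT link: {url}")
    path = url[len(LEGACY_PREFIX):]
    # Check the "/config.json" form first so "<org>/<name>/config.json" keeps <name>.
    for suffix in ("/config.json", "-config.json"):
        if path.endswith(suffix):
            return path[: -len(suffix)]
    raise ValueError(f"unexpected file name in: {url}")


def download(repo_id: str, filename: str, out_dir: str = "models") -> Path:
    """Save one file under out_dir/<repo_id>/ (requires network access)."""
    target = Path(out_dir, *repo_id.split("/"), filename)
    target.parent.mkdir(parents=True, exist_ok=True)
    urllib.request.urlretrieve(resolve_url(repo_id, filename), str(target))
    return target


if __name__ == "__main__":
    legacy = LEGACY_PREFIX + "bert-base-chinese-config.json"
    repo = legacy_config_to_repo_id(legacy)
    print(repo)                              # bert-base-chinese
    print(resolve_url(repo, "config.json"))  # https://huggingface.co/bert-base-chinese/resolve/main/config.json
    # download(repo, "config.json")          # uncomment to actually fetch the file
```

If the `huggingface_hub` package is installed, `huggingface_hub.hf_hub_download(repo_id=..., filename=...)` performs the same kind of download and also caches the file locally.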

Some basic download URLs (each one points to a model's config file):

"bert-base-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-uncased-config.json",

"bert-large-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-config.json",

"bert-base-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-cased-config.json",

"bert-large-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-config.json",

"bert-base-multilingual-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-multilingual-uncased-config.json",

"bert-base-multilingual-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-multilingual-cased-config.json",

"bert-base-chinese": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-chinese-config.json",

"bert-base-german-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-cased-config.json",

"bert-large-uncased-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-whole-word-masking-config.json",

"bert-large-cased-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-whole-word-masking-config.json",

"bert-large-uncased-whole-word-masking-finetuned-squad": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-whole-word-masking-finetuned-squad-config.json",

"bert-large-cased-whole-word-masking-finetuned-squad": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-whole-word-masking-finetuned-squad-config.json",

"bert-base-cased-finetuned-mrpc": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-cased-finetuned-mrpc-config.json",

"bert-base-german-dbmdz-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-dbmdz-cased-config.json",

"bert-base-german-dbmdz-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-dbmdz-uncased-config.json",

"bert-base-japanese": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-config.json",

"bert-base-japanese-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-whole-word-masking-config.json",

"bert-base-japanese-char": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-char-config.json",

"bert-base-japanese-char-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-char-whole-word-masking-config.json",

"bert-base-finnish-cased-v1": "https://s3.amazonaws.com/models.huggingface.co/bert/TurkuNLP/bert-base-finnish-cased-v1/config.json",

"bert-base-finnish-uncased-v1": "https://s3.amazonaws.com/models.huggingface.co/bert/TurkuNLP/bert-base-finnish-uncased-v1/config.json",

"bert-base-dutch-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/wietsedv/bert-base-dutch-cased/config.json"处理中文的版本

- Google's original BERT: https://github.com/google-research/bert

- brightmart's RoBERTa: https://github.com/brightmart/roberta_zh

- HIT (Harbin Institute of Technology) RoBERTa: https://github.com/ymcui/Chinese-BERT-wwm

- Google's original ALBERT [example]: https://github.com/google-research/ALBERT

- brightmart's ALBERT: https://github.com/brightmart/albert_zh

- Converted ALBERT: https://github.com/bojone/albert_zh

- Huawei's NEZHA: https://github.com/huawei-noah/Pretrained-Language-Model/tree/master/NEZHA

- In-house language models: https://github.com/ZhuiyiTechnology/pretrained-models

- T5 model: https://github.com/google-research/text-to-text-transfer-transformer

- GPT2_ML: https://github.com/imcaspar/gpt2-ml

- Google's original ELECTRA: https://github.com/google-research/electra

- HIT ELECTRA: https://github.com/ymcui/Chinese-ELECTRA

- CLUE ELECTRA: https://github.com/CLUEbenchmark/ELECTRA


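| Model | Corpus | Google download | iFLYTEK Cloud download |
|---|---|---|---|
| RBT6, Chinese | EXT data[1] | - | TensorFlow (password XNMA) |
| RBT4, Chinese | EXT data[1] | - | TensorFlow (password e8dN) |
| RBTL3, Chinese | EXT data[1] | TensorFlow / PyTorch | TensorFlow (password vySW) |
| RBT3, Chinese | EXT data[1] | TensorFlow / PyTorch | TensorFlow (password b9nx) |
| RoBERTa-wwm-ext-large, Chinese | EXT data[1] | TensorFlow / PyTorch | TensorFlow (password u6gC) |
| RoBERTa-wwm-ext, Chinese | EXT data[1] | TensorFlow / PyTorch | TensorFlow (password Xe1p) |
| BERT-wwm-ext, Chinese | EXT data[1] | TensorFlow / PyTorch | TensorFlow (password 4cMG) |
| BERT-wwm, Chinese | Chinese Wikipedia | TensorFlow / PyTorch | TensorFlow (password 07Xj) |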
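After downloading and unpacking one of these archives, it helps to confirm that the folder is complete before you try to load it. Below is a minimal sketch. The expected file names are an assumption based on typical BERT checkpoint layouts and are not stated in the table. For PyTorch, the sketch expects a config file, `pytorch_model.bin`, and `vocab.txt`. For TensorFlow, it expects `bert_config.json`, `bert_model.ckpt.*`, and `vocab.txt`. Adjust `EXPECTED` to match what the archive actually contains. `missing_files` is a hypothetical helper.

```python
from __future__ import annotations

import sys
from pathlib import Path

# Assumed file layouts (not taken from the table). Each inner tuple lists
# alternative names; any one of them being present satisfies that entry.
EXPECTED = {
    "pytorch": [("config.json", "bert_config.json"), ("pytorch_model.bin",), ("vocab.txt",)],
    "tensorflow": [("bert_config.json", "config.json"), ("bert_model.ckpt.index",), ("vocab.txt",)],
}


def missing_files(model_dir: str, framework: str = "pytorch") -> list[str]:
    """Return the expected entries that are absent from model_dir."""
    root = Path(model_dir)
    missing = []
    for alternatives in EXPECTED[framework]:
        if not any((root / name).is_file() for name in alternatives):
            missing.append(" or ".join(alternatives))
    return missing


if __name__ == "__main__":
    folder = sys.argv[1] if len(sys.argv) > 1 else "."
    gaps = missing_files(folder)
    print("looks complete" if not gaps else "missing: " + ", ".join(gaps))
```

Once a PyTorch folder looks complete, `transformers` can load it with `BertTokenizer.from_pretrained(folder)` and `BertModel.from_pretrained(folder)`. Because `from_pretrained` looks for a file named `config.json`, you may need to rename a `bert_config.json` first. The Chinese-BERT-wwm README recommends the BERT classes, not the RoBERTa ones, for its RoBERTa-wwm models.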

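This post collects the official Hugging Face model collection site, how to download files from a model page, and a set of download links for pretrained BERT config files. It also lists resources for Chinese models, including Google's BERT, Chinese RoBERTa and ALBERT variants, NEZHA, T5, GPT2_ML, and ELECTRA. For the Chinese-BERT-wwm model family, it gives the available download formats and passwords for each version.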
