d:

Switch to the D: drive.

scrapy startproject douban

Create the douban project.

cd douban

Enter the project directory.

scrapy genspider douban_spider movie.douban.com

Generate the spider.

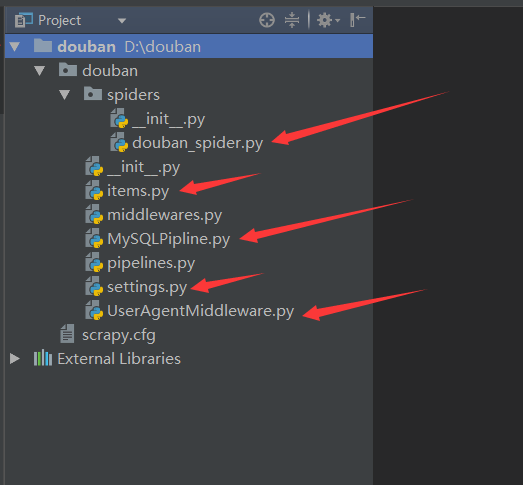

Edit items.py:

# -*- coding: utf-8 -*-

# Define here the models for your scraped items
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class DoubanItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    serial_number = scrapy.Field()  # Ranking number
    movie_name = scrapy.Field()  # Movie title
    introduce = scrapy.Field()  # Movie introduction
    star = scrapy.Field()  # Star rating
    evaluate = scrapy.Field()  # Number of reviews
    depict = scrapy.Field()  # Movie description

Edit douban_spider.py:

# -*- coding: utf-8 -*-

import scrapy

from douban.items import DoubanItem


class DoubanSpiderSpider(scrapy.Spider):
    name = 'douban_spider'  # Spider name
    allowed_domains = ['movie.douban.com']  # Allowed domains
    start_urls = ['https://movie.douban.com/top250']  # Entry URL; the engine hands it to the scheduler

    def parse(self, response):
        # Default parsing callback

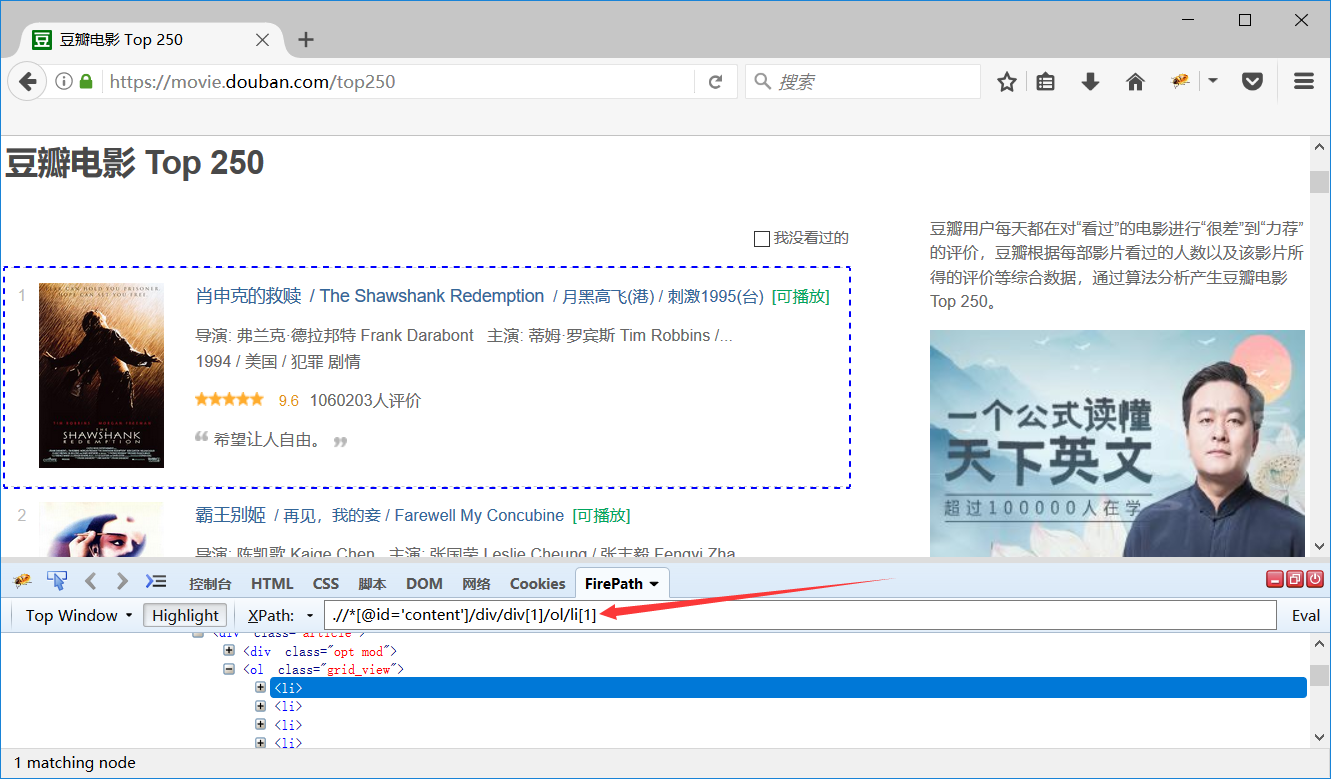

        # Movie entries listed on the current page
        movie_list = response.xpath(".//*[@id='content']/div/div[1]/ol/li")
        for i_item in movie_list:
            # Build one DoubanItem per movie entry
            douban_item = DoubanItem()
            douban_item["serial_number"] = i_item.xpath(".//div/div[1]/em/text()").extract_first()
            douban_item["movie_name"] = i_item.xpath(".//div/div[2]/div[1]/a/span[1]/text()").extract_first()
            content = i_item.xpath(".//div/div[2]/div[2]/p[1]/text()").extract()
            # The introduction is split over several text nodes that contain line breaks.
            # Strip the whitespace from each node and join them, so that earlier nodes
            # are not overwritten by the last one.
            parts = ["".join(i_content.split()) for i_content in content]
            douban_item["introduce"] = " ".join(part for part in parts if part)
            douban_item["star"] = i_item.xpath(".//div/div[2]/div[2]/div/span[2]/text()").extract_first()
            douban_item["evaluate"] = i_item.xpath(".//div/div[2]/div[2]/div/span[4]/text()").extract_first()
            douban_item["depict"] = i_item.xpath(".//div/div[2]/div[2]/p[2]/span/text()").extract_first()
            # Yield the item so that it is handed to the pipelines
            yield douban_item
        # Next page: take the href of the "next page" link
        next_link = response.xpath(".//*[@id='content']/div/div[1]/div[2]/span[3]/a/@href").extract()
        if next_link:
            next_link = next_link[0]
            yield scrapy.Request("https://movie.douban.com/top250" + next_link, callback=self.parse)
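The whitespace cleanup and the next-page URL construction used in parse can be tried in isolation, without running a crawl. The following is a minimal sketch using only the standard library. The helper names `clean_intro` and `next_page_url` are hypothetical, the sample fragments are made up, and the shape of the next-page href (`?start=25&filter=`) is an assumption about the page. This variant keeps every text fragment of the introduction rather than only the last one.

```python
from urllib.parse import urljoin


def clean_intro(fragments):
    """Strip all whitespace inside each text fragment and join the non-empty ones."""
    cleaned = ("".join(fragment.split()) for fragment in fragments)
    return " ".join(part for part in cleaned if part)


def next_page_url(base_url, href):
    """Resolve a relative next-page href against the listing URL."""
    return urljoin(base_url, href)


# Hypothetical fragments, shaped like the text nodes of the introduction paragraph.
fragments = ["\n    Director: A  Cast: B\n", "\n    1994 / US / Drama\n  "]
print(clean_intro(fragments))

# Assumed href format; the real value comes from the "next page" link on the site.
print(next_page_url("https://movie.douban.com/top250", "?start=25&filter="))
```

Inside a spider, `response.urljoin(href)` performs the same resolution against the URL of the current response.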

Create MySQLPipline.py:

from pymysql import connect


class MySQLPipeline(object):
    def __init__(self):
        # Connect to the database
        self.connect = connect(
            host='192.168.1.23',
            port=3306,
            db='scrapy',
            user='root',
            passwd='Abcdef@123456',
            charset='utf8',
            use_unicode=True)
        # Get a cursor with cursor()
        self.cursor = self.connect.cursor()

    def process_item(self, item, spider):
        # Execute the SQL statement; the item fields map one-to-one to the table columns
        self.cursor.execute(
            """insert into douban(serial_number, movie_name, introduce, star, evaluate, depict)
            values (%s, %s, %s, %s, %s, %s)""",
            (item['serial_number'],
             item['movie_name'],
             item['introduce'],
             item['star'],
             item['evaluate'],
             item['depict']))
        # Commit the transaction
        self.connect.commit()
        # Return the item so that any later pipeline can process it
        return item

    def close_spider(self, spider):
        self.cursor.close()  # Close the cursor
        self.connect.close()  # Close the database connection
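To see the connect, insert, commit and close flow of the pipeline without a MySQL server, the sketch below swaps in the standard library's `sqlite3` with an in-memory database. This is a stand-in, not the pipeline used in this project: the class name `SQLitePipeline` and the sample item values are hypothetical, and `sqlite3` uses `?` placeholders where pymysql uses `%s`. It opens the connection in `open_spider`, a hook Scrapy calls on pipelines when the spider starts.

```python
import sqlite3

COLUMNS = ("serial_number", "movie_name", "introduce", "star", "evaluate", "depict")


class SQLitePipeline:
    """Same flow as the MySQL pipeline, with sqlite3 as a stand-in database."""

    def open_spider(self, spider):
        # In-memory database, so nothing is written to disk
        self.connection = sqlite3.connect(":memory:")
        self.cursor = self.connection.cursor()
        self.cursor.execute(
            "CREATE TABLE douban (serial_number INTEGER, movie_name TEXT, "
            "introduce TEXT, star TEXT, evaluate TEXT, depict TEXT)"
        )

    def process_item(self, item, spider):
        # Values are passed separately from the SQL text (parameterized query)
        self.cursor.execute(
            "INSERT INTO douban (serial_number, movie_name, introduce, star, evaluate, depict) "
            "VALUES (?, ?, ?, ?, ?, ?)",
            tuple(item[column] for column in COLUMNS),
        )
        self.connection.commit()
        return item

    def close_spider(self, spider):
        self.cursor.close()
        self.connection.close()


# Hypothetical item values, for illustration only
sample = {
    "serial_number": "1",
    "movie_name": "Example Movie",
    "introduce": "Director:A 1994/US/Drama",
    "star": "9.0",
    "evaluate": "1000 reviews",
    "depict": "An example tagline",
}

pipeline = SQLitePipeline()
pipeline.open_spider(spider=None)
returned = pipeline.process_item(sample, spider=None)
row_count = pipeline.cursor.execute("SELECT COUNT(*) FROM douban").fetchone()[0]
print(row_count)
pipeline.close_spider(spider=None)
```

Passing the values as a separate tuple, as both this sketch and the pymysql version do, lets the driver handle quoting of titles that contain quotes or other special characters.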

Create UserAgentMiddleware.py:

import random


class UserAgentMiddleware(object):
    def process_request(self, request, spider):
        # Pool of User-Agent strings to choose from
        user_agent_list = [

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",

"Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",

"Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",

"Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",

"Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",

"Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",

"Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",

"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",

"Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/2.0 Safari/536.11",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.71 Safari/537.1 LBBROWSER",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.84 Safari/535.11 LBBROWSER",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; QQBrowser/7.0.3698.400)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SV1; QQDownload 732; .NET4.0C; .NET4.0E; 360SE)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (iPad; U; CPU OS 4_2_1 like Mac OS X; zh-cn) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8C148 Safari/6533.18.5",

"Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:2.0b13pre) Gecko/20110307 Firefox/4.0b13pre",

"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.11 (KHTML, like Gecko) Chrome/23.0.1271.64 Safari/537.11",

"Mozilla/5.0 (X11; U; Linux x86_64; zh-CN; rv:1.9.2.10) Gecko/20100922 Ubuntu/10.10 (maverick) Firefox/3.6.10",

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"

        ]
        # Pick a random User-Agent for this request
        agent = random.choice(user_agent_list)
        request.headers["User-Agent"] = agent
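The effect of the middleware can be checked without Scrapy by calling `process_request` on a stand-in request object. The sketch below is an illustration under assumptions: `FakeRequest` and `RandomUserAgentMiddleware` are hypothetical names, the pool is shortened to two entries taken from the list above, and the list is moved to module level so it is not rebuilt on every request.

```python
import random

# Shortened pool for illustration; the full list above can be used unchanged
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
    "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",
]


class FakeRequest:
    """Hypothetical stand-in for a Scrapy request: it only carries a headers mapping."""

    def __init__(self):
        self.headers = {}


class RandomUserAgentMiddleware:
    def process_request(self, request, spider):
        # Overwrite the User-Agent header with a randomly chosen entry
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        # Returning None lets Scrapy continue handling the request normally
        return None


request = FakeRequest()
result = RandomUserAgentMiddleware().process_request(request, spider=None)
print(request.headers["User-Agent"])
```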

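Edit the settings.py configuration file:

Change the DOWNLOADER_MIDDLEWARES block (around line 57 of the generated file) to:

DOWNLOADER_MIDDLEWARES = {
    'douban.UserAgentMiddleware.UserAgentMiddleware': 543,
}

# Enable the middleware

Change the ITEM_PIPELINES block (around line 69 of the generated file) to:

ITEM_PIPELINES = {
    'douban.MySQLPipline.MySQLPipeline': 300,
}

# Enable the pipeline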

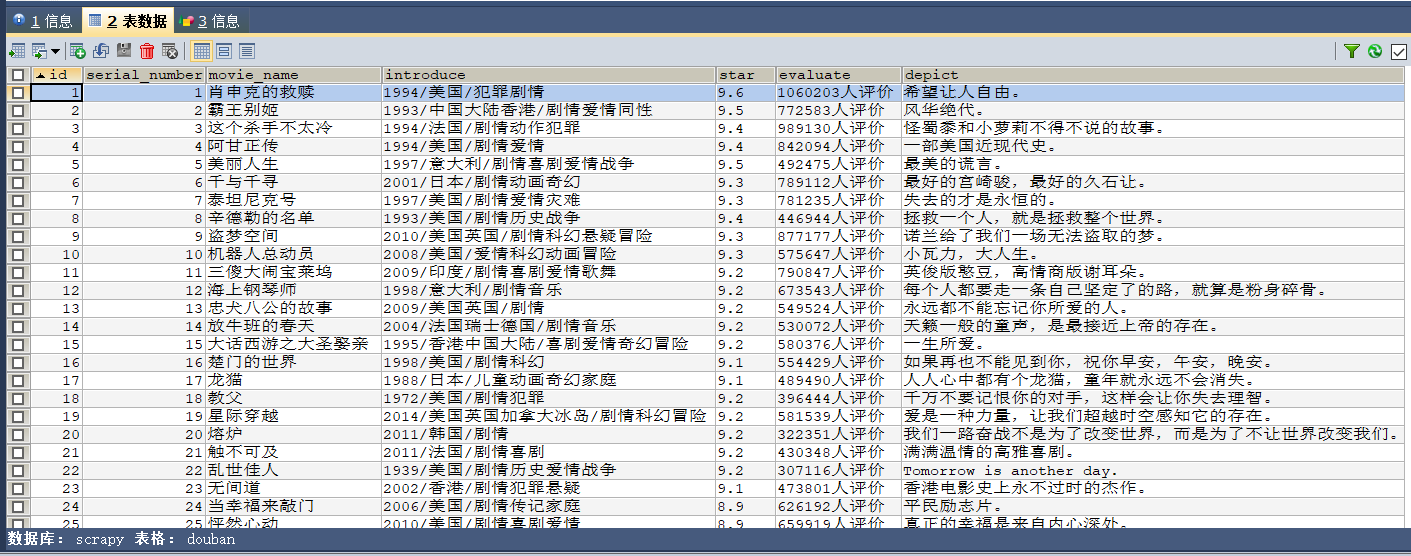

CREATE DATABASE scrapy;

Create the database.

CREATE TABLE `douban` (
  `id` INT(11) NOT NULL AUTO_INCREMENT,
  `serial_number` INT(11) DEFAULT NULL COMMENT 'Ranking number',
  `movie_name` VARCHAR(255) DEFAULT NULL COMMENT 'Movie title',
  `introduce` VARCHAR(255) DEFAULT NULL COMMENT 'Movie introduction',
  `star` VARCHAR(255) DEFAULT NULL COMMENT 'Star rating',
  `evaluate` VARCHAR(255) DEFAULT NULL COMMENT 'Number of reviews',
  `depict` VARCHAR(255) DEFAULT NULL COMMENT 'Movie description',
  PRIMARY KEY (`id`)
) ENGINE=INNODB DEFAULT CHARSET=utf8 COMMENT 'Douban table';

Create the table (inside the scrapy database).

scrapy crawl douban_spider --nolog

Run the spider (without printing logs).
