This article is reposted from sheldon_blogs' blog. The original is at https://www.cnblogs.com/blogs-of-lxl/p/5152578.html

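This article is for study and research only. If you repost it, please credit the original author and include the link.

Part 5: The Camera.takePicture() flow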

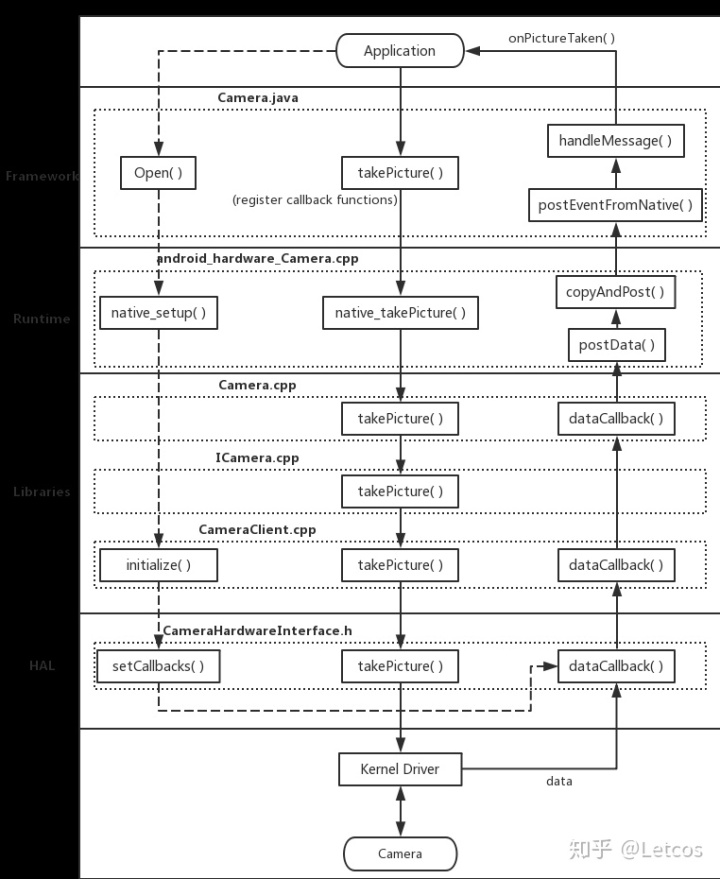

In Camera API 1, data travels through function callbacks, returning layer by layer from the bottom up until it reaches Applications.

1. Setting the callbacks at open time

frameworks/av/services/camera/libcameraservice/device1/CameraHardwareInterface.h

/** Set the notification and data callbacks */

void setCallbacks(notify_callback notify_cb,

data_callback data_cb,

data_callback_timestamp data_cb_timestamp,

void* user)

{

mNotifyCb = notify_cb; // Set the notify callback, which signals that data has been updated.

mDataCb = data_cb; // Set the data and dataTimestamp callbacks, stored in the function pointers mDataCb and mDataCbTimestamp.

mDataCbTimestamp = data_cb_timestamp;

mCbUser = user;

ALOGV("%s(%s)", __FUNCTION__, mName.string());

if (mDevice->ops->set_callbacks) { // Note: the functions handed to mDevice->ops are not the pointers stored above but wrappers such as __data_cb. This file implements __data_cb as a thin layer around the stored callback.

mDevice->ops->set_callbacks(mDevice,

__notify_cb,

__data_cb,

__data_cb_timestamp,

__get_memory,

this);

}

}

__data_cb(): a thin wrapper around the original callback that adds a bounds check on the buffer index.

static void __data_cb(int32_t msg_type,

const camera_memory_t *data, unsigned int index,

camera_frame_metadata_t *metadata,

void *user)

{

ALOGV("%s", __FUNCTION__);

CameraHardwareInterface *__this =

static_cast<CameraHardwareInterface *>(user);

sp<CameraHeapMemory> mem(static_cast<CameraHeapMemory *>(data->handle));

if (index >= mem->mNumBufs) {

ALOGE("%s: invalid buffer index %d, max allowed is %d", __FUNCTION__,

index, mem->mNumBufs);

return;

}

__this->mDataCb(msg_type, mem->mBuffers[index], metadata, __this->mCbUser);

}

2. Control flow

(1) frameworks/base/core/java/android/hardware/Camera.java

takePicture():

public final void takePicture(ShutterCallback shutter, PictureCallback raw,

PictureCallback postview, PictureCallback jpeg) {

mShutterCallback = shutter; // Set the shutter callback.

mRawImageCallback = raw; // Set the callbacks for each kind of picture data.

mPostviewCallback = postview;

mJpegCallback = jpeg;

// If callback is not set, do not send me callbacks.

int msgType = 0;

if (mShutterCallback != null) {

msgType |= CAMERA_MSG_SHUTTER;

}

if (mRawImageCallback != null) {

msgType |= CAMERA_MSG_RAW_IMAGE;

}

if (mPostviewCallback != null) {

msgType |= CAMERA_MSG_POSTVIEW_FRAME;

}

if (mJpegCallback != null) {

msgType |= CAMERA_MSG_COMPRESSED_IMAGE;

}

native_takePicture(msgType); // Call the JNI takePicture method. msgType is built from which callbacks are non-null; each callback type maps to one bit (like the position of the 1 in binary 1, 10, 100).

mFaceDetectionRunning = false;

}

3. Android Runtime

frameworks/base/core/jni/android_hardware_Camera.cpp

static void android_hardware_Camera_takePicture(JNIEnv *env, jobject thiz, jint msgType)

{

ALOGV("takePicture");

JNICameraContext* context;

sp<Camera> camera = get_native_camera(env, thiz, &context); // Get the camera instance that is already open; its takePicture() is called below.

if (camera == 0) return;

/*

* When CAMERA_MSG_RAW_IMAGE is requested, if the raw image callback

* buffer is available, CAMERA_MSG_RAW_IMAGE is enabled to get the

* notification _and_ the data; otherwise, CAMERA_MSG_RAW_IMAGE_NOTIFY

* is enabled to receive the callback notification but no data.

*

* Note that CAMERA_MSG_RAW_IMAGE_NOTIFY is not exposed to the

* Java application.

*

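Note that this function does some extra work for RAW_IMAGE:

If a RAW callback was requested, it checks whether the context holds a matching callback buffer.

If no buffer is found, CAMERA_MSG_RAW_IMAGE is cleared and CAMERA_MSG_RAW_IMAGE_NOTIFY is set in its place.

After the substitution, only the notification arrives, without the image data.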

*/

if (msgType & CAMERA_MSG_RAW_IMAGE) {

ALOGV("Enable raw image callback buffer");

if (!context->isRawImageCallbackBufferAvailable()) {

ALOGV("Enable raw image notification, since no callback buffer exists");

msgType &= ~CAMERA_MSG_RAW_IMAGE;

msgType |= CAMERA_MSG_RAW_IMAGE_NOTIFY;

}

}

if (camera->takePicture(msgType) != NO_ERROR) {

jniThrowRuntimeException(env, "takePicture failed");

return;

}

}
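The bit handling in the two functions above can be sketched in isolation. This is a minimal illustration, not the framework code: `buildMsgType` and `adjustForRawBuffer` are hypothetical helper names, and the constant values follow system/camera.h as recalled here, so treat the exact numbers as illustrative.

```cpp
#include <cstdint>

// Message-type bits. Values follow system/camera.h as recalled here;
// treat the exact numbers as illustrative.
enum : int32_t {
    CAMERA_MSG_SHUTTER          = 0x0002,
    CAMERA_MSG_POSTVIEW_FRAME   = 0x0040,
    CAMERA_MSG_RAW_IMAGE        = 0x0080,
    CAMERA_MSG_COMPRESSED_IMAGE = 0x0100,
    CAMERA_MSG_RAW_IMAGE_NOTIFY = 0x0200,
};

// Hypothetical helper mirroring Camera.java: one bit per non-null callback.
int32_t buildMsgType(bool shutter, bool raw, bool postview, bool jpeg) {
    int32_t msgType = 0;
    if (shutter)  msgType |= CAMERA_MSG_SHUTTER;
    if (raw)      msgType |= CAMERA_MSG_RAW_IMAGE;
    if (postview) msgType |= CAMERA_MSG_POSTVIEW_FRAME;
    if (jpeg)     msgType |= CAMERA_MSG_COMPRESSED_IMAGE;
    return msgType;
}

// Hypothetical helper mirroring the JNI layer: without a raw callback
// buffer, request only the notification instead of the raw data.
int32_t adjustForRawBuffer(int32_t msgType, bool rawBufferAvailable) {
    if ((msgType & CAMERA_MSG_RAW_IMAGE) && !rawBufferAvailable) {
        msgType &= ~CAMERA_MSG_RAW_IMAGE;
        msgType |= CAMERA_MSG_RAW_IMAGE_NOTIFY;
    }
    return msgType;
}
```

With shutter, raw and jpeg callbacks set, `buildMsgType` yields 0x182; if no raw buffer is queued, `adjustForRawBuffer` turns that into 0x302.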

4. C/C++ Libraries

(1) frameworks/av/camera/Camera.cpp

// take a picture

status_t Camera::takePicture(int msgType)

{

ALOGV("takePicture: 0x%x", msgType);

sp <::android::hardware::ICamera> c = mCamera;

if (c == 0) return NO_INIT;

return c->takePicture(msgType); // Take the ICamera held in mCamera and call its takePicture().

}

(2) frameworks/av/camera/ICamera.cpp

// take a picture - returns an IMemory (ref-counted mmap)

status_t takePicture(int msgType)

{

ALOGV("takePicture: 0x%x", msgType);

Parcel data, reply;

data.writeInterfaceToken(ICamera::getInterfaceDescriptor());

data.writeInt32(msgType);

remote()->transact(TAKE_PICTURE, data, &reply); // Send the command to the service side over Binder; the function that actually runs is CameraClient::takePicture().

status_t ret = reply.readInt32();

return ret;

}
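The proxy above marshals its argument into a Parcel, sends a transaction code, and reads the status back from the reply. The following toy model shows only that shape. It is not Binder: `ToyParcel`, `ToyService`, `ToyProxy` and the constants are hypothetical stand-ins, and everything runs in one process.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy stand-in for a Parcel: a FIFO of 32-bit values.
struct ToyParcel {
    std::vector<int32_t> values;
    std::size_t readPos = 0;
    void writeInt32(int32_t v) { values.push_back(v); }
    int32_t readInt32() { return readPos < values.size() ? values[readPos++] : 0; }
};

constexpr uint32_t kTakePicture = 1;          // hypothetical transaction code
constexpr int32_t kOk = 0;                    // hypothetical status values
constexpr int32_t kUnknownTransaction = -1;

// Service side: unpack the arguments, run the real method, write the status.
struct ToyService {
    int32_t lastMsgType = 0;
    int32_t takePicture(int32_t msgType) { lastMsgType = msgType; return kOk; }
    int32_t onTransact(uint32_t code, ToyParcel& data, ToyParcel* reply) {
        switch (code) {
        case kTakePicture:
            reply->writeInt32(takePicture(data.readInt32()));
            return kOk;
        default:
            return kUnknownTransaction;
        }
    }
};

// Client side: same method signature, but it only marshals and forwards.
struct ToyProxy {
    ToyService* remote;
    int32_t takePicture(int32_t msgType) {
        ToyParcel data, reply;
        data.writeInt32(msgType);
        int32_t status = remote->onTransact(kTakePicture, data, &reply);
        if (status != kOk) return status;
        return reply.readInt32();
    }
};
```

The caller sees an ordinary method call on the proxy, while the work happens in the service object, which is the relationship between the ICamera proxy and CameraClient described above.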

(3) frameworks/av/services/camera/libcameraservice/api1/CameraClient.cpp

// take a picture - image is returned in callback

status_t CameraClient::takePicture(int msgType) {

LOG1("takePicture (pid %d): 0x%x", getCallingPid(), msgType);

Mutex::Autolock lock(mLock);

status_t result = checkPidAndHardware();

if (result != NO_ERROR) return result;

if ((msgType & CAMERA_MSG_RAW_IMAGE) && // Note: CAMERA_MSG_RAW_IMAGE and CAMERA_MSG_RAW_IMAGE_NOTIFY must not both be set, so that is checked here.

(msgType & CAMERA_MSG_RAW_IMAGE_NOTIFY)) {

ALOGE("CAMERA_MSG_RAW_IMAGE and CAMERA_MSG_RAW_IMAGE_NOTIFY"

" cannot be both enabled");

return BAD_VALUE;

}

// We only accept picture related message types

// and ignore other types of messages for takePicture().

int picMsgType = msgType // Filter the incoming message types, keeping only those related to takePicture().

& (CAMERA_MSG_SHUTTER |

CAMERA_MSG_POSTVIEW_FRAME |

CAMERA_MSG_RAW_IMAGE |

CAMERA_MSG_RAW_IMAGE_NOTIFY |

CAMERA_MSG_COMPRESSED_IMAGE);

enableMsgType(picMsgType);

return mHardware->takePicture(); // Call takePicture() in CameraHardwareInterface.

}
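The validation and masking step in CameraClient::takePicture() can be tried out on its own. This is a sketch under assumptions: `filterPictureMsgType` is a hypothetical name, and the constant values follow system/camera.h as recalled here.

```cpp
#include <cstdint>

// Values follow system/camera.h as recalled here; illustrative only.
enum : int32_t {
    CAMERA_MSG_SHUTTER          = 0x0002,
    CAMERA_MSG_PREVIEW_FRAME    = 0x0010,
    CAMERA_MSG_POSTVIEW_FRAME   = 0x0040,
    CAMERA_MSG_RAW_IMAGE        = 0x0080,
    CAMERA_MSG_COMPRESSED_IMAGE = 0x0100,
    CAMERA_MSG_RAW_IMAGE_NOTIFY = 0x0200,
};

// Hypothetical helper. Returns false when RAW_IMAGE and RAW_IMAGE_NOTIFY are
// both requested; otherwise stores only the picture-related bits in *picMsgType.
bool filterPictureMsgType(int32_t msgType, int32_t* picMsgType) {
    if ((msgType & CAMERA_MSG_RAW_IMAGE) &&
        (msgType & CAMERA_MSG_RAW_IMAGE_NOTIFY)) {
        return false;  // the real code returns BAD_VALUE here
    }
    *picMsgType = msgType & (CAMERA_MSG_SHUTTER |
                             CAMERA_MSG_POSTVIEW_FRAME |
                             CAMERA_MSG_RAW_IMAGE |
                             CAMERA_MSG_RAW_IMAGE_NOTIFY |
                             CAMERA_MSG_COMPRESSED_IMAGE);
    return true;
}
```

A request that also carries CAMERA_MSG_PREVIEW_FRAME passes validation, but the preview bit is dropped from the mask that gets enabled.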

5. Data flow

Because the data flow is implemented with callback functions, I traced it from the bottom layer upward.

(1) HAL: frameworks/av/services/camera/libcameraservice/device1/CameraHardwareInterface.h

/**

* Take a picture.

*/

status_t takePicture()

{

ALOGV("%s(%s)", __FUNCTION__, mName.string());

if (mDevice->ops->take_picture)

return mDevice->ops->take_picture(mDevice); // Through the function pointer set in mDevice, call the platform-specific takePicture implementation in the HAL.

return INVALID_OPERATION;

}

__data_cb(): this callback is registered by setCallbacks() in the same file. Once the camera device has data, the data is passed upward and the HAL layer invokes this callback.

static void __data_cb(int32_t msg_type,

const camera_memory_t *data, unsigned int index,

camera_frame_metadata_t *metadata,

void *user)

{

ALOGV("%s", __FUNCTION__);

CameraHardwareInterface *__this =

static_cast<CameraHardwareInterface *>(user);

sp<CameraHeapMemory> mem(static_cast<CameraHeapMemory *>(data->handle));

if (index >= mem->mNumBufs) {

ALOGE("%s: invalid buffer index %d, max allowed is %d", __FUNCTION__,

index, mem->mNumBufs);

return;

}

__this->mDataCb(msg_type, mem->mBuffers[index], metadata, __this->mCbUser); // mDataCb points to dataCallback(), implemented in the CameraClient class.

}
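The pattern behind __data_cb is a static trampoline: a C-style function pointer plus an opaque `user` pointer that is cast back to the owning object, with an index check before the stored callback runs. A minimal sketch follows; `ToyInterface`, `raw_data_cb` and the integer "buffers" are hypothetical simplifications, not the HAL types.

```cpp
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// C-style callback signature that a C HAL could call (hypothetical).
using raw_data_cb = void (*)(int32_t msgType, unsigned int index, void* user);

class ToyInterface {
public:
    using DataCb = std::function<void(int32_t msgType, int buffer)>;

    explicit ToyInterface(std::vector<int> buffers) : mBuffers(std::move(buffers)) {}

    void setCallbacks(DataCb cb) { mDataCb = std::move(cb); }

    // What would be handed to the C layer: the wrapper and the cookie.
    raw_data_cb trampoline() const { return &ToyInterface::dataTrampoline; }
    void* cookie() { return this; }

private:
    // Static wrapper: recover the object from `user`, validate the index,
    // then forward to the callback stored by setCallbacks().
    static void dataTrampoline(int32_t msgType, unsigned int index, void* user) {
        auto* self = static_cast<ToyInterface*>(user);
        if (index >= self->mBuffers.size()) return;  // out-of-range index: drop
        if (self->mDataCb) self->mDataCb(msgType, self->mBuffers[index]);
    }

    std::vector<int> mBuffers;
    DataCb mDataCb;
};
```

The C layer never sees the C++ object type. It only stores the function pointer and the cookie, which is why the wrapper has to be static and has to do the cast itself.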

(2) C/C++ Libraries

frameworks/av/services/camera/libcameraservice/api1/CameraClient.cpp

void CameraClient::dataCallback(int32_t msgType, // This callback is registered with CameraHardwareInterface in initialize().

const sp<IMemory>& dataPtr, camera_frame_metadata_t *metadata, void* user) {

LOG2("dataCallback(%d)", msgType);

sp<CameraClient> client = static_cast<CameraClient*>(getClientFromCookie(user).get()); // Once invoked, the callback looks up the connected client from the cookie.

if (client.get() == nullptr) return;

if (!client->lockIfMessageWanted(msgType)) return;

if (dataPtr == 0 && metadata == NULL) {

ALOGE("Null data returned in data callback");

client->handleGenericNotify(CAMERA_MSG_ERROR, UNKNOWN_ERROR, 0);

return;

}

switch (msgType & ~CAMERA_MSG_PREVIEW_METADATA) { // Dispatch to the handler that matches msgType.

case CAMERA_MSG_PREVIEW_FRAME:

client->handlePreviewData(msgType, dataPtr, metadata);

break;

case CAMERA_MSG_POSTVIEW_FRAME:

client->handlePostview(dataPtr);

break;

case CAMERA_MSG_RAW_IMAGE:

client->handleRawPicture(dataPtr);

break;

case CAMERA_MSG_COMPRESSED_IMAGE:

client->handleCompressedPicture(dataPtr);

break;

default:

client->handleGenericData(msgType, dataPtr, metadata);

break;

}

}
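The dispatch in dataCallback() masks out the metadata bit before switching, so a preview frame that carries metadata still reaches the preview handler. The sketch below returns the handler name instead of calling it; `routeDataMessage` is a hypothetical helper, and the constant values follow system/camera.h as recalled here.

```cpp
#include <cstdint>
#include <string>

// Values follow system/camera.h as recalled here; illustrative only.
enum : int32_t {
    CAMERA_MSG_PREVIEW_FRAME    = 0x0010,
    CAMERA_MSG_POSTVIEW_FRAME   = 0x0040,
    CAMERA_MSG_RAW_IMAGE        = 0x0080,
    CAMERA_MSG_COMPRESSED_IMAGE = 0x0100,
    CAMERA_MSG_PREVIEW_METADATA = 0x0400,
};

// Hypothetical helper: name of the handler that dataCallback() would pick.
std::string routeDataMessage(int32_t msgType) {
    switch (msgType & ~CAMERA_MSG_PREVIEW_METADATA) {
    case CAMERA_MSG_PREVIEW_FRAME:    return "handlePreviewData";
    case CAMERA_MSG_POSTVIEW_FRAME:   return "handlePostview";
    case CAMERA_MSG_RAW_IMAGE:        return "handleRawPicture";
    case CAMERA_MSG_COMPRESSED_IMAGE: return "handleCompressedPicture";
    default:                          return "handleGenericData";
    }
}
```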

handleRawPicture():

// picture callback - raw image ready

void CameraClient::handleRawPicture(const sp<IMemory>& mem) {

disableMsgType(CAMERA_MSG_RAW_IMAGE);

ssize_t offset;

size_t size;

sp<IMemoryHeap> heap = mem->getMemory(&offset, &size);

sp<hardware::ICameraClient> c = mRemoteCallback; // During the open flow, connect() set mRemoteCallback to a client instance; it is a strong pointer to ICameraClient.

mLock.unlock();

if (c != 0) {

c->dataCallback(CAMERA_MSG_RAW_IMAGE, mem, NULL); // Invoke the client's dataCallback over Binder. The client-side dataCallback is implemented in the Camera class.

}

}

frameworks/av/camera/Camera.cpp

// callback from camera service when frame or image is ready

void Camera::dataCallback(int32_t msgType, const sp<IMemory>& dataPtr,

camera_frame_metadata_t *metadata)

{

sp<CameraListener> listener;

{

Mutex::Autolock _l(mLock);

listener = mListener;

}

if (listener != NULL) {

listener->postData(msgType, dataPtr, metadata); // Call CameraListener's postData (implemented in android_hardware_Camera.cpp) to keep passing the data upward.

}

}
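Both handleRawPicture() and Camera::dataCallback() copy a strong pointer while holding the lock and make the outgoing call only after the lock is released. A sketch of that pattern with standard C++ types follows; `Listener`, `CountingListener` and `ToyCamera` are hypothetical names, and `std::shared_ptr` stands in for `sp<>`.

```cpp
#include <memory>
#include <mutex>
#include <utility>

struct Listener {
    virtual ~Listener() = default;
    virtual void postData(int msgType) = 0;
};

// Hypothetical listener that records what it receives.
struct CountingListener : Listener {
    int count = 0;
    int lastMsgType = 0;
    void postData(int msgType) override { ++count; lastMsgType = msgType; }
};

class ToyCamera {
public:
    void setListener(std::shared_ptr<Listener> l) {
        std::lock_guard<std::mutex> guard(mLock);
        mListener = std::move(l);
    }

    void dataCallback(int msgType) {
        std::shared_ptr<Listener> listener;
        {
            // Hold the lock only long enough to copy the pointer.
            std::lock_guard<std::mutex> guard(mLock);
            listener = mListener;
        }
        // Called without the lock, so the listener may call back into
        // ToyCamera (for example setListener) without deadlocking.
        if (listener) listener->postData(msgType);
    }

private:
    std::mutex mLock;
    std::shared_ptr<Listener> mListener;
};
```

The local copy also keeps the listener alive for the duration of the call, even if another thread replaces it in the meantime.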

(3) Android Runtime: frameworks/base/core/jni/android_hardware_Camera.cpp

void JNICameraContext::postData(int32_t msgType, const sp<IMemory>& dataPtr,

camera_frame_metadata_t *metadata) // postData is a member function of JNICameraContext, which inherits from CameraListener.

{

// VM pointer will be NULL if object is released

Mutex::Autolock _l(mLock);

JNIEnv *env = AndroidRuntime::getJNIEnv(); // First get the JNIEnv pointer for the VM.

if (mCameraJObjectWeak == NULL) {

ALOGW("callback on dead camera object");

return;

}

int32_t dataMsgType = msgType & ~CAMERA_MSG_PREVIEW_METADATA; // Then mask out CAMERA_MSG_PREVIEW_METADATA.

// return data based on callback type

switch (dataMsgType) {

case CAMERA_MSG_VIDEO_FRAME:

// should never happen

break;

// For backward-compatibility purpose, if there is no callback

// buffer for raw image, the callback returns null.

case CAMERA_MSG_RAW_IMAGE:

ALOGV("rawCallback");

if (mRawImageCallbackBuffers.isEmpty()) {

env->CallStaticVoidMethod(mCameraJClass, fields.post_event,

mCameraJObjectWeak, dataMsgType, 0, 0, NULL);

} else {

copyAndPost(env, dataPtr, dataMsgType); // The key step is copyAndPost().

}

break;

// There is no data.

case 0:

break;

default:

ALOGV("dataCallback(%d, %p)", dataMsgType, dataPtr.get());

copyAndPost(env, dataPtr, dataMsgType);

break;

}

// post frame metadata to Java

if (metadata && (msgType & CAMERA_MSG_PREVIEW_METADATA)) {

postMetadata(env, CAMERA_MSG_PREVIEW_METADATA, metadata);

}

}

copyAndPost():

void JNICameraContext::copyAndPost(JNIEnv* env, const sp<IMemory>& dataPtr, int msgType)

{

jbyteArray obj = NULL;

// allocate Java byte array and copy data

if (dataPtr != NULL) { // First check that the memory object actually exists.

ssize_t offset;

size_t size;

sp<IMemoryHeap> heap = dataPtr->getMemory(&offset, &size);

ALOGV("copyAndPost: off=%zd, size=%zu", offset, size);

uint8_t *heapBase = (uint8_t*)heap->base();

if (heapBase != NULL) {

const jbyte* data = reinterpret_cast<const jbyte*>(heapBase + offset);

if (msgType == CAMERA_MSG_RAW_IMAGE) {

obj = getCallbackBuffer(env, &mRawImageCallbackBuffers, size);

} else if (msgType == CAMERA_MSG_PREVIEW_FRAME && mManualBufferMode) {

obj = getCallbackBuffer(env, &mCallbackBuffers, size);

if (mCallbackBuffers.isEmpty()) {

ALOGV("Out of buffers, clearing callback!");

mCamera->setPreviewCallbackFlags(CAMERA_FRAME_CALLBACK_FLAG_NOOP);

mManualCameraCallbackSet = false;

if (obj == NULL) {

return;

}

}

} else {

ALOGV("Allocating callback buffer");

obj = env->NewByteArray(size);

}

if (obj == NULL) {

ALOGE("Couldn't allocate byte array for JPEG data");

env->ExceptionClear();

} else {

env->SetByteArrayRegion(obj, 0, size, data);

}

} else {

ALOGE("image heap is NULL");

}

}

// post image data to Java

env->CallStaticVoidMethod(mCameraJClass, fields.post_event, // Pass the image to the Java side.

mCameraJObjectWeak, msgType,0, 0, obj);

if (obj) {

env->DeleteLocalRef(obj);

}

}
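The core of copyAndPost() is copying `size` bytes from `heapBase + offset` into a destination array that is either taken from a queue of application-supplied buffers or freshly allocated. The sketch below models that with standard containers. `copyFrame` and `ByteArray` are hypothetical, and the rule that a too-small queued buffer is discarded is an assumption about getCallbackBuffer(), which is not shown in this article.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <deque>
#include <utility>
#include <vector>

using ByteArray = std::vector<int8_t>;  // stands in for a Java byte[]

// Returns true and fills *out when a frame was copied; false corresponds to
// posting a null array. Pass pool == nullptr to model the NewByteArray path.
bool copyFrame(const uint8_t* heapBase, std::ptrdiff_t offset, std::size_t size,
               std::deque<ByteArray>* pool, ByteArray* out) {
    if (heapBase == nullptr) return false;       // "image heap is NULL"
    if (pool != nullptr) {
        if (pool->empty()) return false;         // no callback buffer queued
        ByteArray buf = std::move(pool->front());
        pool->pop_front();
        if (buf.size() < size) return false;     // assumption: too small, dropped
        *out = std::move(buf);
    } else {
        out->assign(size, 0);                    // fresh array of exactly `size`
    }
    if (size > 0) std::memcpy(out->data(), heapBase + offset, size);
    return true;
}
```

A queued buffer may be larger than the frame, in which case only the first `size` bytes are overwritten, matching the `SetByteArrayRegion(obj, 0, size, data)` call above.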

(4) frameworks/base/core/java/android/hardware/Camera.java

private static void postEventFromNative(Object camera_ref, // Static method invoked from native code through JNI (fields.post_event above).

int what, int arg1, int arg2, Object obj)

{

Camera c = (Camera)((WeakReference)camera_ref).get(); // First check that the Camera instance still exists.

if (c == null)

return;

if (c.mEventHandler != null) {

Message m = c.mEventHandler.obtainMessage(what, arg1, arg2, obj); // Use obtainMessage() on the Camera's mEventHandler to wrap the data from the native side in a Message instance,

c.mEventHandler.sendMessage(m); // then call sendMessage() to hand it off.

}

}
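The method above resolves a weak reference first and enqueues a message only if the Camera object is still alive. The same idea can be sketched in C++, with `std::weak_ptr` standing in for Java's WeakReference and a plain queue standing in for the Handler's message queue. `ToyCamera` and `Message` are hypothetical types, and this function is an analogue, not the Java method.

```cpp
#include <memory>
#include <queue>

struct Message {
    int what;
    int arg1;
    int arg2;
};

// Hypothetical camera object; `inbox` stands in for the Handler's queue.
struct ToyCamera {
    std::queue<Message> inbox;
};

// Returns false when the camera object is already gone, in which case the
// event is dropped, as in the early return of the Java method.
bool postEventFromNative(const std::weak_ptr<ToyCamera>& ref,
                         int what, int arg1, int arg2) {
    std::shared_ptr<ToyCamera> c = ref.lock();
    if (!c) return false;
    c->inbox.push(Message{what, arg1, arg2});
    return true;
}
```

Holding only a weak reference on the native side means a pending callback cannot keep a released camera object alive.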

....

@Override

public void handleMessage(Message msg) { // Belongs to the EventHandler class, which extends Handler.

switch(msg.what) {

...

mPostviewCallback.onPictureTaken((byte[])msg.obj, mCamera); // This call finally delivers the data from the lower layers to the Java application at the top, which reads the image data out of the Message.


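Summary:

Both the control flow and the data flow pass through the five layers one step at a time. The control flow carries commands from the top layer down to the bottom, while the data flow carries data from the bottom up to the top. To add a custom C++ library that processes image data to the camera, you can modify the HAL layer so that RAW images are routed to your library and the processed RAW images are handed back to the HAL (the HAL needs some handling of the RAW format before the image can be passed up). The normal callback path then delivers the image to the top-level application.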
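The customization idea in the summary amounts to inserting a processing stage in front of the HAL's data callback. The sketch below shows only that wiring. `ToyHal`, `RawProcessor`, `subtractBlackLevel` and the 16-bit sample type are hypothetical; a real HAL would operate on its own buffer types and RAW layout.

```cpp
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

using RawFrame = std::vector<uint16_t>;
using RawProcessor = std::function<RawFrame(const RawFrame&)>;
using DataCallback = std::function<void(const RawFrame&)>;

// Example processing step (hypothetical): subtract a black level, clamped at 0.
RawFrame subtractBlackLevel(const RawFrame& in, uint16_t level) {
    RawFrame out(in.size());
    for (std::size_t i = 0; i < in.size(); ++i) {
        out[i] = static_cast<uint16_t>(in[i] > level ? in[i] - level : 0);
    }
    return out;
}

class ToyHal {
public:
    void setDataCallback(DataCallback cb) { mDataCb = std::move(cb); }
    void setRawProcessor(RawProcessor p) { mProcessor = std::move(p); }

    // Called when the sensor delivers a frame: run the optional custom
    // stage, then continue along the usual upward callback path.
    void onSensorFrame(const RawFrame& frame) {
        RawFrame out = mProcessor ? mProcessor(frame) : frame;
        if (mDataCb) mDataCb(out);
    }

private:
    RawProcessor mProcessor;
    DataCallback mDataCb;
};
```

Because the hook sits before the data callback, the layers above the HAL need no changes and receive the processed frame through the same path as before.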

Personal blog: https://www.letcos.top/
