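Introduction

In the camera stack, the other key player besides the image buffer is metadata.

So what is metadata? When we need information about a device on the current platform, we read it from metadata; when we want details about an individual frame, such as its exposure time, we also read them from metadata. Metadata is therefore a packet of data that describes something, and that something can be a camera device, a frame captured while servicing a request, and so on.

Metadata falls into three categories: static, controls, and dynamic metadata.

Static metadata

Holds information about the camera itself: it describes the specifications of the camera device and the capabilities it supports.

Controls metadata

Metadata that controls camera behavior. Every request carries this control information, which tells the lower layers how to interpret the request.

Dynamic metadata

The results produced after the HAL receives the controls metadata and applies it to the ISP.

Metadata data structure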

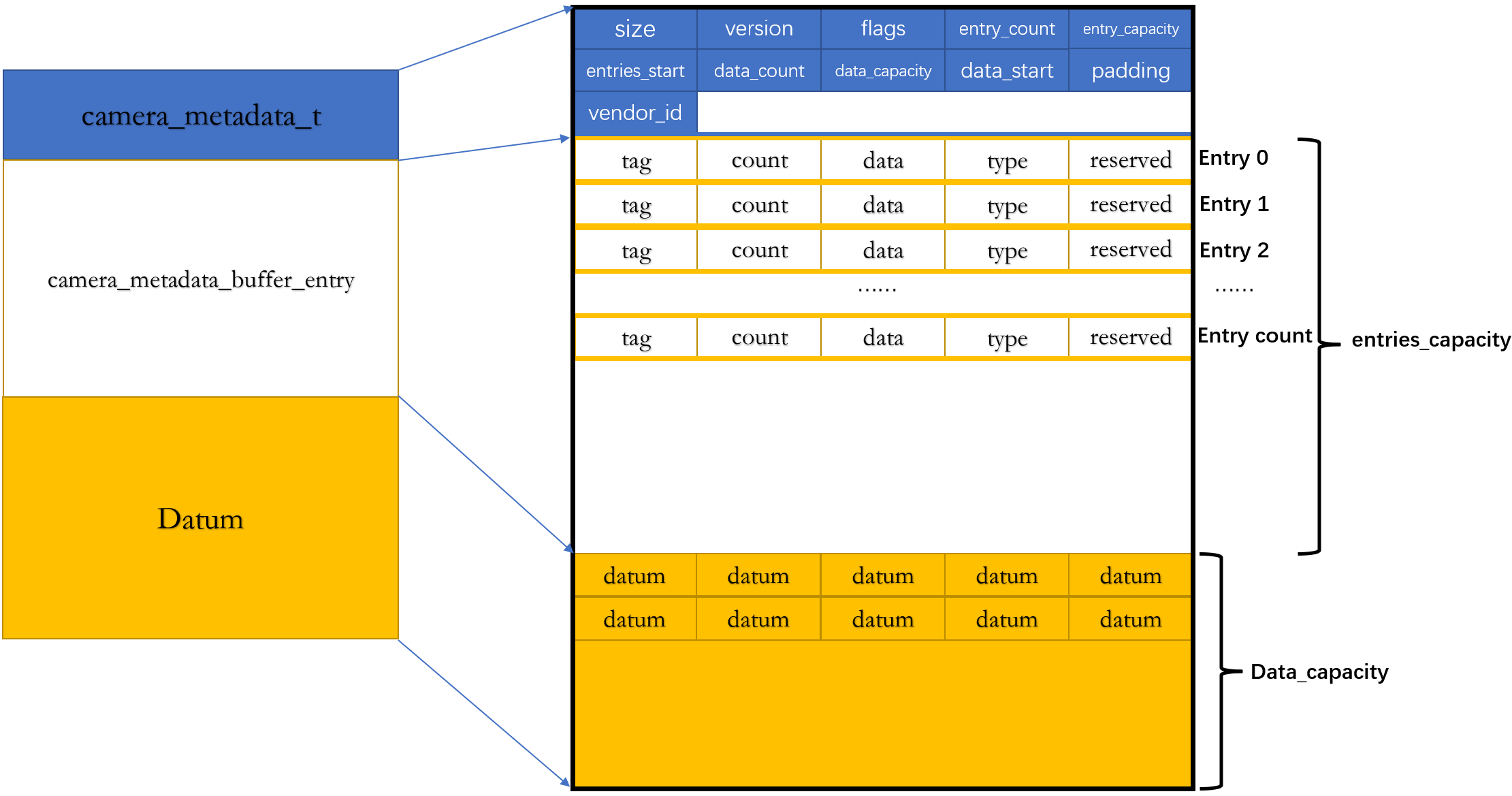

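Earlier we said that metadata is a packet of data that describes something. What does that packet actually look like, that is, what is its data structure? A very common operation is to look up the value for a TAG in a metadata packet, or to set the value for a TAG. It is therefore easy to guess that metadata stores its data in something close to a <Key, Value> layout.

Metadata layout in memory

The most direct approach is to look at how metadata is laid out in memory. The definitions and operations for camera metadata live under system/media/camera. system/media/camera/src/camera_metadata.c describes the camera metadata data structure, which turns out to be a combination of several structs.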

    /**
     * A packet of metadata. This is a list of entries, each of which may point to
     * its values stored at an offset in data.
     *
     * It is assumed by the utility functions that the memory layout of the packet
     * is as follows:
     *
     *   |-----------------------------------------------|
     *   | camera_metadata_t                             |
     *   |                                               |
     *   |-----------------------------------------------|
     *   | reserved for future expansion                 |
     *   |-----------------------------------------------|
     *   | camera_metadata_buffer_entry_t #0             |
     *   |-----------------------------------------------|
     *   | ....                                          |
     *   |-----------------------------------------------|
     *   | camera_metadata_buffer_entry_t #entry_count-1 |
     *   |-----------------------------------------------|
     *   | free space for                                |
     *   | (entry_capacity-entry_count) entries          |
     *   |-----------------------------------------------|
     *   | start of camera_metadata.data                 |
     *   |                                               |
     *   |-----------------------------------------------|
     *   | free space for                                |
     *   | (data_capacity-data_count) bytes              |
     *   |-----------------------------------------------|
     *
     * With the total length of the whole packet being camera_metadata.size bytes.
     *
     * In short, the entries and data are contiguous in memory after the metadata
     * header.
     */

camera_metadata_t

It is declared in system/media/camera/include/system/camera_metadata.h, but camera_metadata_t is simply struct camera_metadata from system/media/camera/src/camera_metadata.c. It records the basic information about a metadata packet.

    typedef uint32_t metadata_uptrdiff_t;
    typedef uint32_t metadata_size_t;
    typedef uint64_t metadata_vendor_id_t;

    #define METADATA_ALIGNMENT ((size_t) 4)
    struct camera_metadata {
        metadata_size_t          size;
        uint32_t                 version;
        uint32_t                 flags;
        metadata_size_t          entry_count;
        metadata_size_t          entry_capacity;
        metadata_uptrdiff_t      entries_start; // Offset from camera_metadata
        metadata_size_t          data_count;
        metadata_size_t          data_capacity;
        metadata_uptrdiff_t      data_start; // Offset from camera_metadata
        uint32_t                 padding;    // padding to 8 bytes boundary
        metadata_vendor_id_t     vendor_id;
    };

    typedef struct camera_metadata camera_metadata_t;

size: the total number of bytes this metadata packet occupies

flags: marks whether the entries are currently sorted

entry_count: the current number of entries; starts at 0

entry_capacity: the maximum number of entries this metadata packet can hold

entries_start: the offset of the entries from the start address of camera_metadata_t; adding entries_start to the start address of camera_metadata_t gives the position where the entries are stored

data_count: the number of data bytes currently in use; starts at 0

data_capacity: the number of data bytes this metadata packet can hold

data_start: the offset of the data from the start address of camera_metadata_t; adding data_start to the start address of camera_metadata_t gives the position where the data is stored

vendor_id: tied to the CameraDeviceProvider on the device; each CameraDeviceProvider has its own vendor_id
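Because entries_start and data_start are byte offsets from the start of the header rather than pointers, reaching the entries or the data is plain pointer arithmetic. The following is a minimal sketch of that idea, not the AOSP code: sketch_header_t, sketch_entries() and sketch_data() are hypothetical names, and the header is cut down to the fields the sketch needs.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, trimmed-down header: only the fields this sketch needs. */
typedef struct sketch_header {
    uint32_t size;           /* total packet size in bytes */
    uint32_t entries_start;  /* byte offset of the entry array from the header */
    uint32_t data_start;     /* byte offset of the data region from the header */
} sketch_header_t;

/* Address of the entry array: header address plus entries_start bytes. */
static inline uint8_t *sketch_entries(sketch_header_t *m) {
    assert(m != NULL);
    return (uint8_t *)m + m->entries_start;
}

/* Address of the data region: header address plus data_start bytes. */
static inline uint8_t *sketch_data(sketch_header_t *m) {
    assert(m != NULL);
    return (uint8_t *)m + m->data_start;
}
```

Storing offsets instead of pointers means the whole packet can be copied to another address and the offsets remain valid.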

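camera_metadata_buffer_entry

We guessed earlier that metadata uses a <Key, Value> layout. camera_metadata_buffer_entry is what stores the relationship between a key (which metadata calls a TAG) and its value. Each member is explained in the comments.

The data member of this struct holds the data for the TAG. If the data occupies at most 4 bytes, it is stored directly in value, so no extra jump through offset is needed to reach it; offset itself would take up a uint32 anyway.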
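The inline-or-offset rule can be sketched as a small size check. This is an illustration under assumptions, not the AOSP implementation: the sketch_type codes, the sketch_type_size table and sketch_fits_inline() are hypothetical names, and the element sizes follow the members of the camera_metadata_data union (a rational is taken to be two int32_t values).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Illustrative type codes; the real ones are defined in camera_metadata.h. */
enum sketch_type {
    SK_BYTE, SK_INT32, SK_FLOAT, SK_INT64, SK_DOUBLE, SK_RATIONAL, SK_NUM_TYPES
};

/* Size in bytes of one element of each type. */
static const size_t sketch_type_size[SK_NUM_TYPES] = {
    [SK_BYTE]     = sizeof(uint8_t),
    [SK_INT32]    = sizeof(int32_t),
    [SK_FLOAT]    = sizeof(float),
    [SK_INT64]    = sizeof(int64_t),
    [SK_DOUBLE]   = sizeof(double),
    [SK_RATIONAL] = 2 * sizeof(int32_t), /* numerator + denominator */
};

/* Returns 1 when `count` values of `type` fit in the 4-byte value[] field,
 * 0 when they must live in the data region and be reached through offset. */
static int sketch_fits_inline(enum sketch_type type, size_t count) {
    assert(type < SK_NUM_TYPES);
    return sketch_type_size[type] * count <= 4;
}
```

Under these assumptions a single int32 or up to four bytes stays inline, while a face rectangle (four int32 values) goes to the data region.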

    /**
     * A single metadata entry, storing an array of values of a given type. If the
     * array is no larger than 4 bytes in size, it is stored in the data.value[]
     * array; otherwise, it can found in the parent's data array at index
     * data.offset.
     */
    #define ENTRY_ALIGNMENT ((size_t) 4)
    typedef struct camera_metadata_buffer_entry {
        uint32_t tag;          // key of the tag
        uint32_t count;        // number of values stored for this tag
        union {
            uint32_t offset;   // position of this tag's value, relative to data_start
            uint8_t  value[4]; // values of at most 4 bytes are stored here directly (saves space)
        } data;
        uint8_t  type;         // e.g. TYPE_BYTE, TYPE_INT32, TYPE_FLOAT, TYPE_INT64, TYPE_DOUBLE, TYPE_RATIONAL
        uint8_t  reserved[3];
    } camera_metadata_buffer_entry_t;

camera_metadata_data

camera_metadata_data is the value that offset in camera_metadata_buffer_entry points to. Values come in more than one type (some TAGs carry float data, others carry int data), so camera metadata stores them in a union.

    /**
     * A datum of metadata. This corresponds to camera_metadata_entry_t::data
     * with the difference that each element is not a pointer. We need to have a
     * non-pointer type description in order to figure out the largest alignment
     * requirement for data (DATA_ALIGNMENT).
     */
    #define DATA_ALIGNMENT ((size_t) 8)
    typedef union camera_metadata_data {
        uint8_t u8;
        int32_t i32;
        float   f;
        int64_t i64;
        double  d;
        camera_metadata_rational_t r;
    } camera_metadata_data_t;

Camera metadata memory layout

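Putting the pieces above together, camera metadata in memory consists of the camera_metadata_t header, followed by the array of camera_metadata_buffer_entry_t entries, followed by the data region that the entries' offsets point into.

About Tag and Value

TAG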

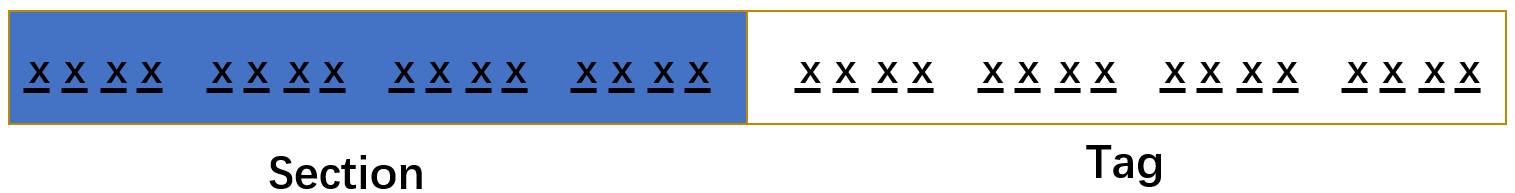

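A tag corresponds to the camera_metadata_buffer_entry described above. For every TAG, the uint32_t tag member is its key, the identifier that distinguishes it from every other TAG, so it must be unique. Where is it defined? The tag values are defined through enums.

system/media/camera/include/system/camera_metadata_tags.h enumerates the AOSP TAGs. The key of a TAG is derived in these steps:

First, enumerate the metadata sections. TAGs are grouped by purpose and module, and each group is called a section, for example control metadata, sensor metadata, flash-related metadata, and so on.

Then, assign each section a value range and enumerate every TAG that belongs to the section within that range.

Following these steps enumerates every tag in AOSP.

1.1 Enumerating metadata sections

The metadata sections are defined as follows. AOSP enumerates many sections, such as Control, Statistics, Sensor, and Flash.

Note the VENDOR_SECTION at the end, whose enum value is 0x8000. It exists because, besides the TAGs that Android itself defines, platform vendors and OEMs (Qualcomm, MTK, Samsung, and the various handset makers) define their own TAGs for other purposes.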

    /**
     * Top level hierarchy definitions for camera metadata. *_INFO sections are for
     * the static metadata that can be retrived without opening the camera device.
     * New sections must be added right before ANDROID_SECTION_COUNT to maintain
     * existing enumerations.
     */
    typedef enum camera_metadata_section {
        ANDROID_COLOR_CORRECTION,
        ANDROID_CONTROL,
        ANDROID_DEMOSAIC,
        ANDROID_EDGE,
        ANDROID_FLASH,
        ANDROID_FLASH_INFO,
        ANDROID_HOT_PIXEL,
        ANDROID_JPEG,
        ANDROID_LENS,
        ANDROID_LENS_INFO,
        ANDROID_NOISE_REDUCTION,
        ANDROID_QUIRKS,
        ANDROID_REQUEST,
        ANDROID_SCALER,
        ANDROID_SENSOR,
        ANDROID_SENSOR_INFO,
        ANDROID_SHADING,
        ANDROID_STATISTICS,
        ANDROID_STATISTICS_INFO,
        ANDROID_TONEMAP,
        ANDROID_LED,
        ANDROID_INFO,
        ANDROID_BLACK_LEVEL,
        ANDROID_SYNC,
        ANDROID_REPROCESS,
        ANDROID_DEPTH,
        ANDROID_LOGICAL_MULTI_CAMERA,
        ANDROID_DISTORTION_CORRECTION,
        ANDROID_HEIC,
        ANDROID_HEIC_INFO,
        ANDROID_AUTOMOTIVE,
        ANDROID_AUTOMOTIVE_LENS,
        ANDROID_SECTION_COUNT,

        VENDOR_SECTION = 0x8000
    } camera_metadata_section_t;

1.2 Enumerating TAGs

Each section is assigned a value range by shifting the section's enum value left by 16 bits. The result is the start of the section, named <SECTION>_START.

    /**
     * Hierarchy positions in enum space. All vendor extension tags must be
     * defined with tag >= VENDOR_SECTION_START
     */
    typedef enum camera_metadata_section_start {
        ANDROID_COLOR_CORRECTION_START      = ANDROID_COLOR_CORRECTION      << 16,
        ANDROID_CONTROL_START               = ANDROID_CONTROL               << 16,
        ANDROID_DEMOSAIC_START              = ANDROID_DEMOSAIC              << 16,
        ANDROID_EDGE_START                  = ANDROID_EDGE                  << 16,
        ANDROID_FLASH_START                 = ANDROID_FLASH                 << 16,
        ANDROID_FLASH_INFO_START            = ANDROID_FLASH_INFO            << 16,
        ANDROID_HOT_PIXEL_START             = ANDROID_HOT_PIXEL             << 16,
        ANDROID_JPEG_START                  = ANDROID_JPEG                  << 16,
        ANDROID_LENS_START                  = ANDROID_LENS                  << 16,
        ANDROID_LENS_INFO_START             = ANDROID_LENS_INFO             << 16,
        ANDROID_NOISE_REDUCTION_START       = ANDROID_NOISE_REDUCTION       << 16,
        ANDROID_QUIRKS_START                = ANDROID_QUIRKS                << 16,
        ANDROID_REQUEST_START               = ANDROID_REQUEST               << 16,
        ANDROID_SCALER_START                = ANDROID_SCALER                << 16,
        ANDROID_SENSOR_START                = ANDROID_SENSOR                << 16,
        ANDROID_SENSOR_INFO_START           = ANDROID_SENSOR_INFO           << 16,
        ANDROID_SHADING_START               = ANDROID_SHADING               << 16,
        ANDROID_STATISTICS_START            = ANDROID_STATISTICS            << 16,
        ANDROID_STATISTICS_INFO_START       = ANDROID_STATISTICS_INFO       << 16,
        ANDROID_TONEMAP_START               = ANDROID_TONEMAP               << 16,
        ANDROID_LED_START                   = ANDROID_LED                   << 16,
        ANDROID_INFO_START                  = ANDROID_INFO                  << 16,
        ANDROID_BLACK_LEVEL_START           = ANDROID_BLACK_LEVEL           << 16,
        ANDROID_SYNC_START                  = ANDROID_SYNC                  << 16,
        ANDROID_REPROCESS_START             = ANDROID_REPROCESS             << 16,
        ANDROID_DEPTH_START                 = ANDROID_DEPTH                 << 16,
        ANDROID_LOGICAL_MULTI_CAMERA_START  = ANDROID_LOGICAL_MULTI_CAMERA  << 16,
        ANDROID_DISTORTION_CORRECTION_START = ANDROID_DISTORTION_CORRECTION << 16,
        ANDROID_HEIC_START                  = ANDROID_HEIC                  << 16,
        ANDROID_HEIC_INFO_START             = ANDROID_HEIC_INFO             << 16,
        ANDROID_AUTOMOTIVE_START            = ANDROID_AUTOMOTIVE            << 16,
        ANDROID_AUTOMOTIVE_LENS_START       = ANDROID_AUTOMOTIVE_LENS       << 16,
        VENDOR_SECTION_START                = VENDOR_SECTION                << 16
    } camera_metadata_section_start_t;

Starting from each section's _START value, the tags under that section are enumerated, and a <SECTION>_END value marks the point where the section's TAGs have all been listed.

    /**
     * Main enum for defining camera metadata tags. New entries must always go
     * before the section _END tag to preserve existing enumeration values. In
     * addition, the name and type of the tag needs to be added to
     * system/media/camera/src/camera_metadata_tag_info.c
     */
    typedef enum camera_metadata_tag {
        ANDROID_COLOR_CORRECTION_MODE =                   // enum         | public       | HIDL v3.2
                ANDROID_COLOR_CORRECTION_START,
        ANDROID_COLOR_CORRECTION_TRANSFORM,               // rational[]   | public       | HIDL v3.2
        ANDROID_COLOR_CORRECTION_GAINS,                   // float[]      | public       | HIDL v3.2
        ANDROID_COLOR_CORRECTION_ABERRATION_MODE,         // enum         | public       | HIDL v3.2
        ANDROID_COLOR_CORRECTION_AVAILABLE_ABERRATION_MODES,
                                                          // byte[]       | public       | HIDL v3.2
        ANDROID_COLOR_CORRECTION_END,
        ..............
        ANDROID_STATISTICS_FACE_DETECT_MODE =             // enum         | public       | HIDL v3.2
                ANDROID_STATISTICS_START,
        ANDROID_STATISTICS_HISTOGRAM_MODE,                // enum         | system       | HIDL v3.2
        ANDROID_STATISTICS_SHARPNESS_MAP_MODE,            // enum         | system       | HIDL v3.2
        ANDROID_STATISTICS_HOT_PIXEL_MAP_MODE,            // enum         | public       | HIDL v3.2
        ANDROID_STATISTICS_FACE_IDS,                      // int32[]      | ndk_public   | HIDL v3.2
        ANDROID_STATISTICS_FACE_LANDMARKS,                // int32[]      | ndk_public   | HIDL v3.2
        ANDROID_STATISTICS_FACE_RECTANGLES,               // int32[]      | ndk_public   | HIDL v3.2
        ANDROID_STATISTICS_FACE_SCORES,                   // byte[]       | ndk_public   | HIDL v3.2
        ANDROID_STATISTICS_HISTOGRAM,                     // int32[]      | system       | HIDL v3.2
        ANDROID_STATISTICS_SHARPNESS_MAP,                 // int32[]      | system       | HIDL v3.2
        ANDROID_STATISTICS_LENS_SHADING_CORRECTION_MAP,   // byte         | java_pub
        ANDROID_STATISTICS_LENS_SHADING_MAP,              // float[]      | ndk_public   | HIDL v3.2
        ANDROID_STATISTICS_PREDICTED_COLOR_GAINS,         // float[]      | hidden       | HIDL v3.2
        ANDROID_STATISTICS_PREDICTED_COLOR_TRANSFORM,     // rational[]   | hidden       | HIDL v3.2
        ANDROID_STATISTICS_SCENE_FLICKER,                 // enum         | public       | HIDL v3.2
        ANDROID_STATISTICS_HOT_PIXEL_MAP,                 // int32[]      | public       | HIDL v3.2
        ANDROID_STATISTICS_LENS_SHADING_MAP_MODE,         // enum         | public       | HIDL v3.2
        ANDROID_STATISTICS_OIS_DATA_MODE,                 // enum         | public       | HIDL v3.3
        ANDROID_STATISTICS_OIS_TIMESTAMPS,                // int64[]      | ndk_public   | HIDL v3.3
        ANDROID_STATISTICS_OIS_X_SHIFTS,                  // float[]      | ndk_public   | HIDL v3.3
        ANDROID_STATISTICS_OIS_Y_SHIFTS,                  // float[]      | ndk_public   | HIDL v3.3
        ANDROID_STATISTICS_END,
        ....................
    } camera_metadata_tag_t;

In summary, the key of a TAG (a uint32_t, so 4 bytes or 32 bits) can be read as two halves: the upper 16 bits hold the section, and the lower 16 bits hold the tag's position inside that section.

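So the first four hexadecimal digits of a key identify the section the TAG belongs to, and the last four identify the tag within that section.

For example, every TAG in the ANDROID_STATISTICS section lies between ANDROID_STATISTICS_START (0x00110000) and ANDROID_STATISTICS_END (0x00110015). ANDROID_STATISTICS_FACE_RECTANGLES, the TAG that describes face rectangles, belongs to this section and has the key 0x00110006. Conversely, given a TAG whose key is 0x00110006, the upper half 0x0011 shows that it belongs to the ANDROID_STATISTICS section, and the lower 16 bits, 0x0006, say to count 0x0006 entries down from the section start, which lands on ANDROID_STATISTICS_FACE_RECTANGLES.

Likewise, a key at or above VENDOR_SECTION_START (0x80000000), that is, one whose upper 16 bits are 0x8000 or higher, must belong to a vendor tag.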
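The decoding described above is two bit operations. The helpers below are a minimal sketch with hypothetical names (they are not functions from camera_metadata.c); the constant 0x8000 is the VENDOR_SECTION value from the section enum.

```c
#include <assert.h>
#include <stdint.h>

#define SKETCH_VENDOR_SECTION 0x8000u  /* VENDOR_SECTION from the section enum */

/* Upper 16 bits: which section the tag belongs to. */
static uint32_t sketch_tag_section(uint32_t tag) { return tag >> 16; }

/* Lower 16 bits: position of the tag inside its section. */
static uint32_t sketch_tag_index(uint32_t tag) { return tag & 0xFFFFu; }

/* A tag is a vendor tag when its section is VENDOR_SECTION or above. */
static int sketch_is_vendor_tag(uint32_t tag) {
    return sketch_tag_section(tag) >= SKETCH_VENDOR_SECTION;
}

/* Inverse operation: build a key from a section and an index. */
static uint32_t sketch_make_tag(uint32_t section, uint32_t index) {
    assert(section <= 0xFFFFu && index <= 0xFFFFu);
    return (section << 16) | index;
}
```

With the face-rectangle key 0x00110006, sketch_tag_section() gives 0x11 (ANDROID_STATISTICS) and sketch_tag_index() gives 6.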

Value

With TAGs covered, what type is the value that a TAG maps to, and where is that type defined? Take the face-rectangle tag ANDROID_STATISTICS_FACE_RECTANGLES from above as the example.

The data type of each TAG is defined in system/media/camera/src/camera_metadata_tag_info.c. Start with a short struct: tag_info holds a tag's name and its type, that is, its data type.

    /** Tag information */
    typedef struct tag_info {
        const char *tag_name;
        uint8_t     tag_type;
    } tag_info_t;

Each section gets an array of tag_info with END - START elements, and that array lists the name and data type of every tag in the section.

Take the tags of the android_statistics section as an example. The tag_info array has length ANDROID_STATISTICS_END - ANDROID_STATISTICS_START, and its elements follow the order of camera_metadata_tag, giving each tag a name and a data type. For the face-rectangle tag, the name is "faceRectangles" and the data type is TYPE_INT32.

    static tag_info_t android_statistics[ANDROID_STATISTICS_END -
            ANDROID_STATISTICS_START] = {
        [ ANDROID_STATISTICS_FACE_DETECT_MODE - ANDROID_STATISTICS_START ] =
        { "faceDetectMode",                TYPE_BYTE   },
        [ ANDROID_STATISTICS_HISTOGRAM_MODE - ANDROID_STATISTICS_START ] =
        { "histogramMode",                 TYPE_BYTE   },
        [ ANDROID_STATISTICS_SHARPNESS_MAP_MODE - ANDROID_STATISTICS_START ] =
        { "sharpnessMapMode",              TYPE_BYTE   },
        [ ANDROID_STATISTICS_HOT_PIXEL_MAP_MODE - ANDROID_STATISTICS_START ] =
        { "hotPixelMapMode",               TYPE_BYTE   },
        [ ANDROID_STATISTICS_FACE_IDS - ANDROID_STATISTICS_START ] =
        { "faceIds",                       TYPE_INT32  },
        [ ANDROID_STATISTICS_FACE_LANDMARKS - ANDROID_STATISTICS_START ] =
        { "faceLandmarks",                 TYPE_INT32  },
        [ ANDROID_STATISTICS_FACE_RECTANGLES - ANDROID_STATISTICS_START ] =
        { "faceRectangles",                TYPE_INT32  },
        [ ANDROID_STATISTICS_FACE_SCORES - ANDROID_STATISTICS_START ] =
        { "faceScores",                    TYPE_BYTE   },
        [ ANDROID_STATISTICS_HISTOGRAM - ANDROID_STATISTICS_START ] =
        { "histogram",                     TYPE_INT32  },
        [ ANDROID_STATISTICS_SHARPNESS_MAP - ANDROID_STATISTICS_START ] =
        { "sharpnessMap",                  TYPE_INT32  },
        [ ANDROID_STATISTICS_LENS_SHADING_CORRECTION_MAP - ANDROID_STATISTICS_START ] =
        { "lensShadingCorrectionMap",      TYPE_BYTE   },
        [ ANDROID_STATISTICS_LENS_SHADING_MAP - ANDROID_STATISTICS_START ] =
        { "lensShadingMap",                TYPE_FLOAT  },
        [ ANDROID_STATISTICS_PREDICTED_COLOR_GAINS - ANDROID_STATISTICS_START ] =
        { "predictedColorGains",           TYPE_FLOAT  },
        [ ANDROID_STATISTICS_PREDICTED_COLOR_TRANSFORM - ANDROID_STATISTICS_START ] =
        { "predictedColorTransform",       TYPE_RATIONAL },
        [ ANDROID_STATISTICS_SCENE_FLICKER - ANDROID_STATISTICS_START ] =
        { "sceneFlicker",                  TYPE_BYTE   },
        [ ANDROID_STATISTICS_HOT_PIXEL_MAP - ANDROID_STATISTICS_START ] =
        { "hotPixelMap",                   TYPE_INT32  },
        [ ANDROID_STATISTICS_LENS_SHADING_MAP_MODE - ANDROID_STATISTICS_START ] =
        { "lensShadingMapMode",            TYPE_BYTE   },
        [ ANDROID_STATISTICS_OIS_DATA_MODE - ANDROID_STATISTICS_START ] =
        { "oisDataMode",                   TYPE_BYTE   },
        [ ANDROID_STATISTICS_OIS_TIMESTAMPS - ANDROID_STATISTICS_START ] =
        { "oisTimestamps",                 TYPE_INT64  },
        [ ANDROID_STATISTICS_OIS_X_SHIFTS - ANDROID_STATISTICS_START ] =
        { "oisXShifts",                    TYPE_FLOAT  },
        [ ANDROID_STATISTICS_OIS_Y_SHIFTS - ANDROID_STATISTICS_START ] =
        { "oisYShifts",                    TYPE_FLOAT  },
    };

TAG Name

One last point, about tag names. Every TAG has its own key, so a key uniquely identifies a TAG. For convenience, Android also gives every tag a char* name, and a tag can be addressed through that name as well.

system/media/camera/docs/docs.html lists the name of every native Android camera tag, says whether it is a controls, static, or dynamic tag, and describes what it is for.

Staying with the face-rectangle example, the name of ANDROID_STATISTICS_FACE_RECTANGLES is android.statistics.faceRectangles.
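How a key turns into such a dotted name can be sketched with a per-section name table indexed by the lower 16 bits of the key. This is an illustration only: sketch_full_tag_name() and the sk_ identifiers are hypothetical, the table reproduces just the first seven statistics tags from the listing above, and the prefix "android.statistics." is taken from the example name.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>

enum { SK_STATISTICS = 0x11 };  /* value of ANDROID_STATISTICS in the section enum */
#define SK_STATISTICS_START ((uint32_t)SK_STATISTICS << 16)

/* First seven tag names of the statistics section, in enum order. */
static const char *const sk_statistics_names[] = {
    "faceDetectMode", "histogramMode", "sharpnessMapMode", "hotPixelMapMode",
    "faceIds", "faceLandmarks", "faceRectangles",
};

/* Writes "android.statistics.<tagName>" into out.
 * Returns 0 on success, -1 if the tag is not in the table or out is too small. */
static int sketch_full_tag_name(uint32_t tag, char *out, size_t out_len) {
    static const char prefix[] = "android.statistics.";
    const size_t n = sizeof(sk_statistics_names) / sizeof(sk_statistics_names[0]);
    const uint32_t index = tag - SK_STATISTICS_START;

    assert(out != NULL);
    if ((tag >> 16) != SK_STATISTICS || index >= n) return -1;
    if (strlen(prefix) + strlen(sk_statistics_names[index]) + 1 > out_len) return -1;
    snprintf(out, out_len, "%s%s", prefix, sk_statistics_names[index]);
    return 0;
}
```

Called with the key 0x00110006 and a large enough buffer, the sketch fills in android.statistics.faceRectangles.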

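Camera metadata API

system/media/camera/src/camera_metadata.c implements the operations on camera metadata, including creating and destroying a metadata packet and adding, deleting, updating, and querying its entries. There are too many functions to analyze one by one.

Take creating a metadata packet as the example. To construct one, call allocate_camera_metadata() with entry_capacity and data_capacity. The function first works out how much memory the packet needs from those two values, allocates that memory, and then initializes the metadata.

allocate_camera_metadata()

The function takes entry_capacity and data_capacity and passes them to calculate_camera_metadata_size(), which, as the name suggests, computes how much memory the packet will occupy.

Once the size is known, it calls calloc() to allocate the memory.

With the memory allocated, it calls place_camera_metadata() to initialize the metadata.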

    camera_metadata_t *allocate_camera_metadata(size_t entry_capacity,
                                                size_t data_capacity) {

        size_t memory_needed = calculate_camera_metadata_size(entry_capacity,
                                                              data_capacity);
        void *buffer = calloc(1, memory_needed);
        camera_metadata_t *metadata = place_camera_metadata(
            buffer, memory_needed, entry_capacity, data_capacity);
        if (!metadata) {
            /* This should not happen when memory_needed is the same
             * calculated in this function and in place_camera_metadata.
             */
            free(buffer);
        }
        return metadata;
    }

calculate_camera_metadata_size()

This function computes the required memory size. The size is derived from the metadata header, entry_capacity, data_capacity, and the alignment requirement of each part.
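Before reading the AOSP implementation, it can help to run the arithmetic on concrete numbers. The sketch below mirrors the same steps (header, aligned entry array, aligned data region, final packet alignment) with hypothetical sk_ names. Two things are assumptions: the structs copy the field layout of the listings earlier in this article, and SK_PACKET_ALIGNMENT is taken to be 8, the largest of the alignments involved.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Round val up to the next multiple of alignment (alignment is a power of two). */
#define SK_ALIGN_TO(val, alignment) \
    (((size_t)(val) + ((size_t)(alignment) - 1)) & ~((size_t)(alignment) - 1))

#define SK_ENTRY_ALIGNMENT  ((size_t)4)
#define SK_DATA_ALIGNMENT   ((size_t)8)
#define SK_PACKET_ALIGNMENT ((size_t)8)  /* assumption: largest of the alignments */

/* Same field layout as struct camera_metadata shown earlier. */
typedef struct sk_metadata {
    uint32_t size, version, flags;
    uint32_t entry_count, entry_capacity, entries_start;
    uint32_t data_count, data_capacity, data_start;
    uint32_t padding;
    uint64_t vendor_id;
} sk_metadata_t;

/* Same field layout as camera_metadata_buffer_entry shown earlier. */
typedef struct sk_entry {
    uint32_t tag;
    uint32_t count;
    union { uint32_t offset; uint8_t value[4]; } data;
    uint8_t type;
    uint8_t reserved[3];
} sk_entry_t;

/* Bytes needed for a packet with room for the given entries and data bytes. */
static size_t sketch_metadata_size(size_t entry_capacity, size_t data_capacity) {
    /* Guard the multiplication below against overflow. */
    assert(entry_capacity <= (SIZE_MAX - sizeof(sk_metadata_t)) / sizeof(sk_entry_t));

    size_t needed = sizeof(sk_metadata_t);                 /* header */
    needed = SK_ALIGN_TO(needed, SK_ENTRY_ALIGNMENT);      /* entries start aligned */
    needed += sizeof(sk_entry_t) * entry_capacity;         /* entry array */
    needed = SK_ALIGN_TO(needed, SK_DATA_ALIGNMENT);       /* data starts aligned */
    needed += data_capacity;                               /* data bytes */
    return SK_ALIGN_TO(needed, SK_PACKET_ALIGNMENT);       /* packets can be stacked */
}
```

On common ABIs the header is 48 bytes and an entry is 16 bytes, so a packet with room for 2 entries and 10 data bytes needs 48 + 32 = 80 bytes up to the data region, 90 bytes with the data, and 96 bytes after the final rounding.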

    size_t calculate_camera_metadata_size(size_t entry_count,
                                          size_t data_count) {
        size_t memory_needed = sizeof(camera_metadata_t);
        // Start entry list at aligned boundary
        memory_needed = ALIGN_TO(memory_needed, ENTRY_ALIGNMENT);
        memory_needed += sizeof(camera_metadata_buffer_entry_t[entry_count]);
        // Start buffer list at aligned boundary
        memory_needed = ALIGN_TO(memory_needed, DATA_ALIGNMENT);
        memory_needed += sizeof(uint8_t[data_count]);
        // Make sure camera metadata can be stacked in continuous memory
        memory_needed = ALIGN_TO(memory_needed, METADATA_PACKET_ALIGNMENT);
        return memory_needed;
    }

place_camera_metadata()

After calloc() has allocated a buffer of the size computed by calculate_camera_metadata_size(), place_camera_metadata() is called to initialize that memory. The function first runs some sanity checks and, if they pass, initializes the members of camera_metadata_t described earlier: size, flags, version, entry_count, entry_capacity, entries_start, data_count, data_capacity, data_start, and vendor_id.

    camera_metadata_t *place_camera_metadata(void *dst,
                                             size_t dst_size,
                                             size_t entry_capacity,
                                             size_t data_capacity) {
        if (dst == NULL) return NULL;

        size_t memory_needed = calculate_camera_metadata_size(entry_capacity,
                                                              data_capacity);
        if (memory_needed > dst_size) {
            ALOGE("%s: Memory needed to place camera metadata (%zu) > dst size (%zu)", __FUNCTION__,
                    memory_needed, dst_size);
            return NULL;
        }

        camera_metadata_t *metadata = (camera_metadata_t*)dst;
        metadata->version = CURRENT_METADATA_VERSION;
        metadata->flags = 0;
        metadata->entry_count = 0;
        metadata->entry_capacity = entry_capacity;
        metadata->entries_start =
                ALIGN_TO(sizeof(camera_metadata_t), ENTRY_ALIGNMENT);
        metadata->data_count = 0;
        metadata->data_capacity = data_capacity;
        metadata->size = memory_needed;
        size_t data_unaligned = (uint8_t*)(get_entries(metadata) +
                metadata->entry_capacity) - (uint8_t*)metadata;
        metadata->data_start = ALIGN_TO(data_unaligned, DATA_ALIGNMENT);
        metadata->vendor_id = CAMERA_METADATA_INVALID_VENDOR_ID;

        assert(validate_camera_metadata_structure(metadata, NULL) == OK);
        return metadata;
    }

    void free_camera_metadata(camera_metadata_t *metadata) {
        free(metadata);
    }

A note on vendor tags: the framework cannot know in advance which TAGs each vendor defines in its lower layers, nor their names, keys, and types, let alone what they are used for. That is why vendor_tag_ops and vendor_tag_cache_ops are defined: they let vendors define their own metadata and implement the corresponding operations.

    /**
     * This structure contains basic functions for enumerating an immutable set of
     * vendor-defined camera metadata tags, and querying static information about
     * their structure/type. The intended use of this information is to validate
     * the structure of metadata returned by the camera HAL, and to allow vendor-
     * defined metadata tags to be visible in application facing camera API.
     */
    typedef struct vendor_tag_ops vendor_tag_ops_t;
    struct vendor_tag_ops {
        /**
         * Get the number of vendor tags supported on this platform. Used to
         * calculate the size of buffer needed for holding the array of all tags
         * returned by get_all_tags(). This must return -1 on error.
         */
        int (*get_tag_count)(const vendor_tag_ops_t *v);

        /**
         * Fill an array with all of the supported vendor tags on this platform.
         * get_tag_count() must return the number of tags supported, and
         * tag_array will be allocated with enough space to hold the number of tags
         * returned by get_tag_count().
         */
        void (*get_all_tags)(const vendor_tag_ops_t *v, uint32_t *tag_array);

        /**
         * Get the vendor section name for a vendor-specified entry tag. This will
         * only be called for vendor-defined tags.
         *
         * The naming convention for the vendor-specific section names should
         * follow a style similar to the Java package style. For example,
         * CameraZoom Inc. must prefix their sections with "com.camerazoom."
         * This must return NULL if the tag is outside the bounds of
         * vendor-defined sections.
         *
         * There may be different vendor-defined tag sections, for example the
         * phone maker, the chipset maker, and the camera module maker may each
         * have their own "com.vendor."-prefixed section.
         *
         * The memory pointed to by the return value must remain valid for the
         * lifetime of the module, and is owned by the module.
         */
        const char *(*get_section_name)(const vendor_tag_ops_t *v, uint32_t tag);

        /**
         * Get the tag name for a vendor-specified entry tag. This is only called
         * for vendor-defined tags, and must return NULL if it is not a
         * vendor-defined tag.
         *
         * The memory pointed to by the return value must remain valid for the
         * lifetime of the module, and is owned by the module.
         */
        const char *(*get_tag_name)(const vendor_tag_ops_t *v, uint32_t tag);

        /**
         * Get tag type for a vendor-specified entry tag. The type returned must be
         * a valid type defined in camera_metadata.h. This method is only called
         * for tags >= CAMERA_METADATA_VENDOR_TAG_BOUNDARY, and must return
         * -1 if the tag is outside the bounds of the vendor-defined sections.
         */
        int (*get_tag_type)(const vendor_tag_ops_t *v, uint32_t tag);

        /* Reserved for future use. These must be initialized to NULL. */
        void* reserved[8];
    };

    struct vendor_tag_cache_ops {
        /**
         * Get the number of vendor tags supported on this platform. Used to
         * calculate the size of buffer needed for holding the array of all tags
         * returned by get_all_tags(). This must return -1 on error.
         */
        int (*get_tag_count)(metadata_vendor_id_t id);

        /**
         * Fill an array with all of the supported vendor tags on this platform.
         * get_tag_count() must return the number of tags supported, and
         * tag_array will be allocated with enough space to hold the number of tags
         * returned by get_tag_count().
         */
        void (*get_all_tags)(uint32_t *tag_array, metadata_vendor_id_t id);

        /**
         * Get the vendor section name for a vendor-specified entry tag. This will
         * only be called for vendor-defined tags.
         *
         * The naming convention for the vendor-specific section names should
         * follow a style similar to the Java package style. For example,
         * CameraZoom Inc. must prefix their sections with "com.camerazoom."
         * This must return NULL if the tag is outside the bounds of
         * vendor-defined sections.
         *
         * There may be different vendor-defined tag sections, for example the
         * phone maker, the chipset maker, and the camera module maker may each
         * have their own "com.vendor."-prefixed section.
         *
         * The memory pointed to by the return value must remain valid for the
         * lifetime of the module, and is owned by the module.
         */
        const char *(*get_section_name)(uint32_t tag, metadata_vendor_id_t id);

        /**
         * Get the tag name for a vendor-specified entry tag. This is only called
         * for vendor-defined tags, and must return NULL if it is not a
         * vendor-defined tag.
         *
         * The memory pointed to by the return value must remain valid for the
         * lifetime of the module, and is owned by the module.
         */
        const char *(*get_tag_name)(uint32_t tag, metadata_vendor_id_t id);

        /**
         * Get tag type for a vendor-specified entry tag. The type returned must be
         * a valid type defined in camera_metadata.h. This method is only called
         * for tags >= CAMERA_METADATA_VENDOR_TAG_BOUNDARY, and must return
         * -1 if the tag is outside the bounds of the vendor-defined sections.
         */
        int (*get_tag_type)(uint32_t tag, metadata_vendor_id_t id);

        /* Reserved for future use. These must be initialized to NULL. */
        void* reserved[8];
    };

The article "Android Camera: the CameraServer startup process" mentions in passing, in one of its flow charts, that CameraService calls CameraProviderManager::setUpVendorTags() from enumProvider(). What that call does is use set_camera_metadata_vendor_cache_ops() to hand the vendor_cache_ops implemented in frameworks/av/camera/vendorTagDescriptor.cpp to the global variable vendor_cache_ops in system/media/camera/src/camera_metadata.c. This may look odd: why does the framework implement the functions in vendor_tag_cache_ops, when the vendor should be the one to implement them? Tracing a little further shows that the functions implemented in the framework's vendorTagDescriptor.cpp use vendor_id to forward each call to the matching function in the vendorTagDescriptor of the corresponding CameraDeviceProvider.

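In the APIs in system/media/camera/src/camera_metadata.c, an operation on a vendor tag jumps to the vendor's own implementation. For example, when the section name, tag name, or tag type is looked up from a TAG key, a native AOSP tag is simply found in the corresponding built-in table, whereas a vendor tag is resolved by using the packet's vendor_id to reach the vendor-implemented functions behind vendor_tag_cache_ops for the corresponding CameraDeviceProvider.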
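The dispatch described here can be sketched as a range check followed by a call through a registered function table. Everything named sk_ or sketch_ below is hypothetical: the ops struct is cut down to a single callback, the built-in lookup knows only the face-rectangle tag, and the type codes (0 for a byte, 1 for an int32) are illustrative values rather than the real constants.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SK_VENDOR_SECTION_START 0x80000000u  /* VENDOR_SECTION << 16 */
#define SK_TYPE_BYTE  0                      /* illustrative type codes */
#define SK_TYPE_INT32 1

/* Hypothetical, cut-down stand-in for vendor_tag_cache_ops. */
typedef struct sk_vendor_cache_ops {
    int (*get_tag_type)(uint32_t tag, uint64_t vendor_id);
} sk_vendor_cache_ops_t;

/* Global table, registered once at startup (NULL until then). */
static const sk_vendor_cache_ops_t *sk_vendor_cache_ops = NULL;

static void sketch_set_vendor_cache_ops(const sk_vendor_cache_ops_t *ops) {
    assert(ops == NULL || ops->get_tag_type != NULL);
    sk_vendor_cache_ops = ops;
}

/* Stand-in for the built-in tag_info tables: knows one tag only. */
static int sk_builtin_tag_type(uint32_t tag) {
    return tag == 0x00110006u ? SK_TYPE_INT32 : -1;
}

/* Vendor tags go through the registered ops; everything else is built in. */
static int sketch_get_tag_type(uint32_t tag, uint64_t vendor_id) {
    if (tag >= SK_VENDOR_SECTION_START) {
        if (sk_vendor_cache_ops == NULL) return -1;  /* no vendor ops registered */
        return sk_vendor_cache_ops->get_tag_type(tag, vendor_id);
    }
    return sk_builtin_tag_type(tag);
}

/* Example vendor side: vendor 1 defines a single byte-typed tag. */
static int sk_example_vendor_tag_type(uint32_t tag, uint64_t vendor_id) {
    return (vendor_id == 1 && tag == 0x80000000u) ? SK_TYPE_BYTE : -1;
}

static const sk_vendor_cache_ops_t sk_example_ops = { sk_example_vendor_tag_type };
```

Passing vendor_id through to the callback is what lets one registered table serve several providers: the same vendor tag key can resolve differently, or not at all, depending on which provider produced the packet.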
