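With cloud computing now widely adopted, cloud load balancers (LBs) are used more and more, but some environments still cannot use one. This article shows how to build a load balancer with keepalived + haproxy and deploy a highly available Kubernetes cluster behind it.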

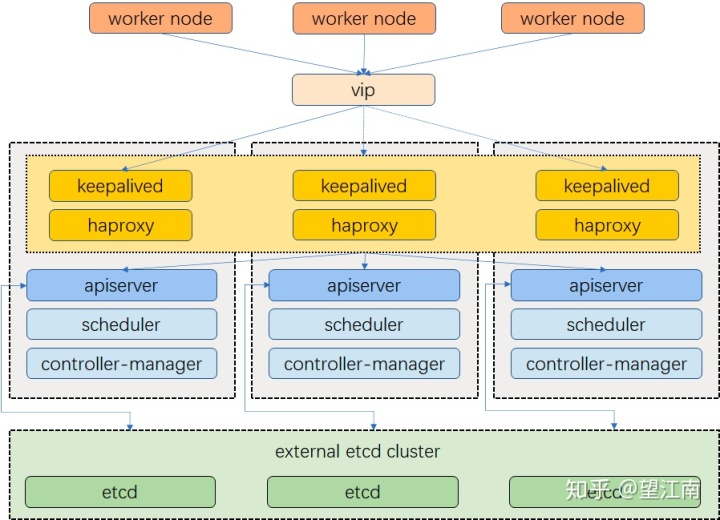

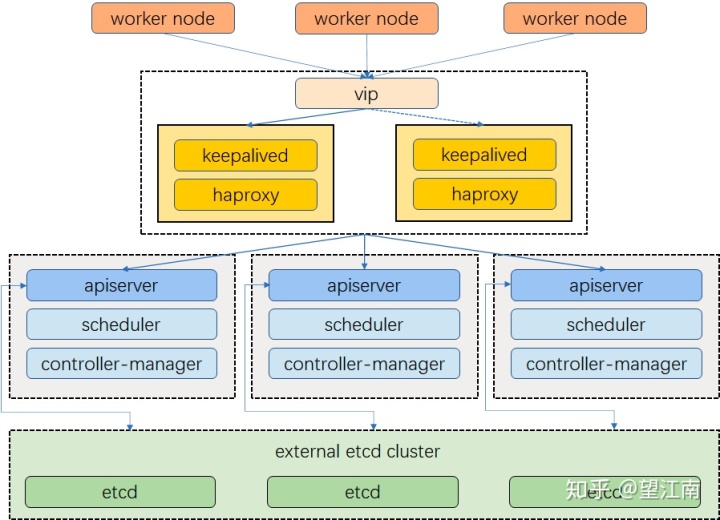

基于keepalived + haproxy部署高可用kubernetes集群一般有两种模式:融合部署,分离部署。但他们的实现原理是一样的。本文主要以融合部署模式介绍keepalived + haproxy的配置与启动,及高可用kubernetes的部署。

- 部署架构

2. Cluster planning

| IP | Role |
|---|---|
| 192.168.6.100 | vip |
| 192.168.6.2 | master, lb |
| 192.168.6.3 | master, lb |
| 192.168.6.4 | master, lb |
| 192.168.6.5 | worker |
| 192.168.6.6 | worker |
| 192.168.6.7 | worker |

3. Configure haproxy

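The configuration is identical on every lb machine; fill in the backend addresses to match your environment.

Create the configuration file /etc/haproxy/haproxy.cfg (any directory that is convenient for you to manage will do).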
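Since the backend addresses are the only part of this file that depends on the environment, they can be generated rather than typed by hand. The following is a minimal sketch, not part of the original setup: the function name render_backend_servers is hypothetical, and it assumes the kube-apiserver instances listen on port 6443 as in the configuration that follows.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one haproxy "server" line per control-plane address.
# Usage: render_backend_servers PORT IP [IP...]
render_backend_servers() {
  local port="$1"
  shift
  local i=1
  local ip
  for ip in "$@"; do
    # Same naming scheme as the hand-written lines: kube-apiserver-1, -2, ...
    printf '    server kube-apiserver-%d %s:%s check\n' "$i" "$ip" "$port"
    i=$((i + 1))
  done
}

# Example call with the three master addresses from the cluster plan.
render_backend_servers 6443 192.168.6.2 192.168.6.3 192.168.6.4
```

The printed lines can be pasted at the end of the backend section; adding or removing a master then only means changing the argument list.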

    global
        log /dev/log local0 warning
        chroot /var/lib/haproxy
        pidfile /var/run/haproxy.pid
        maxconn 4000
        user haproxy
        group haproxy
        daemon
        stats socket /var/lib/haproxy/stats

    defaults
        log global
        option httplog
        option dontlognull
        timeout connect 5000
        timeout client 50000
        timeout server 50000

    frontend kube-apiserver
        # 8443 matches the port checked by keepalived and the controlPlaneEndpoint port used later;
        # in the converged mode, 6443 on these hosts is already taken by kube-apiserver itself
        bind *:8443
        mode tcp
        option tcplog
        default_backend kube-apiserver

    backend kube-apiserver
        mode tcp
        option tcplog
        option tcp-check
        balance roundrobin
        default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
        server kube-apiserver-1 192.168.6.2:6443 check
        server kube-apiserver-2 192.168.6.3:6443 check
        server kube-apiserver-3 192.168.6.4:6443 check

4. Configure keepalived

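Fill in the commented values with the actual information for each lb machine.

Create the configuration file /etc/keepalived/keepalived.conf (any directory that is convenient for you to manage will do).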
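In the configuration that follows, the values that change from machine to machine are unicast_src_ip (the local address) and unicast_peer (all other lb addresses). As a rough sketch, assuming the full list of lb addresses is known, a small helper can derive both for a given node. The function name render_unicast is hypothetical and only illustrates the rule "peers = all lb nodes except myself".

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the unicast_src_ip line and the unicast_peer block
# for one lb node.
# Usage: render_unicast SELF_IP LB_IP [LB_IP...]
render_unicast() {
  local self="$1"
  shift
  local ip
  printf '    unicast_src_ip %s\n' "$self"
  printf '    unicast_peer {\n'
  for ip in "$@"; do
    # Every lb address except the local one is a peer.
    if [ "$ip" != "$self" ]; then
      printf '        %s\n' "$ip"
    fi
  done
  printf '    }\n'
}

# Example: the fragment for 192.168.6.3 in the three-node plan above.
render_unicast 192.168.6.3 192.168.6.2 192.168.6.3 192.168.6.4
```

Generating this fragment per node avoids the most common copy-and-paste mistake, which is leaving a node's own address in its peer list.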

    global_defs {
        notification_email {
        }
        router_id LVS_DEVEL
        vrrp_skip_check_adv_addr
        vrrp_garp_interval 0
        vrrp_gna_interval 0
    }

    vrrp_script haproxy_check {
        script "/bin/bash -c 'if [[ $(netstat -nlp | grep 8443) ]]; then exit 0; else exit 1; fi'"
        interval 2
        weight 2
    }

    vrrp_instance haproxy-vip {
        state BACKUP
        priority 100
        interface eth0                 # NIC the VIP is bound to
        virtual_router_id 60
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        unicast_src_ip 192.168.6.2     # address of the current machine
        unicast_peer {
            192.168.6.3                # address of another peer
            192.168.6.4                # address of another peer
        }
        virtual_ipaddress {
            192.168.6.100/24           # VIP address
        }
        track_script {
            haproxy_check
        }
    }

5. Start keepalived + haproxy

    # lb01
    docker run -d --name

6. Verification

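Use the ip a s command on each node to see where the VIP is bound, then manually stop haproxy on the node that holds the VIP and verify that the VIP fails over to another node.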
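The manual check above can be scripted. The sketch below is an illustration under assumptions, not part of the original procedure: holds_vip and listens_on are hypothetical helper names, and both read command output from standard input so they can be fed by ip, ss, or netstat. listens_on compares the whole port suffix of the local address, so a listener on 18443 is not mistaken for 8443.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the given VIP appears in `ip a s`
# (or `ip -o -4 addr show`) output read from stdin.
holds_vip() {
  local vip="$1"
  # Fixed-string match on "inet <vip>/" so dots are not treated as wildcards.
  grep -qF "inet ${vip}/"
}

# Hypothetical helper: succeed if some LISTEN line in `ss -lnt` or
# `netstat -nlt` output (read from stdin) has an address ending in :PORT.
listens_on() {
  local port="$1"
  awk -v p=":${port}" '
    /LISTEN/ {
      for (i = 1; i <= NF; i++) {
        n = length($i); m = length(p)
        # Compare the trailing ":PORT" of each field exactly.
        if (n >= m && substr($i, n - m + 1) == p) { found = 1 }
      }
    }
    END { exit (found ? 0 : 1) }
  '
}
```

On each lb node, something like `ip -o -4 addr show | holds_vip 192.168.6.100 && echo "VIP is bound here"` shows which node currently holds the VIP, and `ss -lnt | listens_on 8443 || echo "haproxy is not listening"` reproduces the health check by hand. Running the first command on all three nodes before and after stopping haproxy makes the failover visible.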

7. Deploy Kubernetes

There are many ways to deploy Kubernetes, such as from binaries or with kubeadm. This article uses KubeKey as an example.

    # Create the configuration file
    ./kk create config

    # Edit config-example.yaml according to the cluster information
    apiVersion: kubekey.kubesphere.io/v1alpha1
    kind: Cluster
    metadata:
      name: config-sample
    spec:
      hosts:
      - {name: node1, address: 192.168.6.2, internalAddress: 192.168.6.2, password: test}
      - {name: node2, address: 192.168.6.3, internalAddress: 192.168.6.3, password: test}
      - {name: node3, address: 192.168.6.4, internalAddress: 192.168.6.4, password: test}
      - {name: node4, address: 192.168.6.5, internalAddress: 192.168.6.5, password: test}
      - {name: node5, address: 192.168.6.6, internalAddress: 192.168.6.6, password: test}
      - {name: node6, address: 192.168.6.7, internalAddress: 192.168.6.7, password: test}
      roleGroups:
        etcd:
        - node[1:3]
        master:
        - node[1:3]
        worker:
        - node[4:6]
      controlPlaneEndpoint:
        domain: lb.kubesphere.local
        address: 192.168.6.100  # vip
        port: 8443
      kubernetes:
        version: v1.17.6
        imageRepo: kubesphere
        clusterName: cluster.local
      network:
        plugin: calico
        kube_pods_cidr: 10.233.64.0/18
        kube_service_cidr: 10.233.0.0/18
      registry:
        registryMirrors: []
        insecureRegistries: []

    # Deploy the cluster
    ./kk create cluster -f config-example.yaml

8. Check the cluster status

    kubectl get node

    NAME    STATUS   ROLES    AGE   VERSION
    node1   Ready    master   21h   v1.17.6
    node2   Ready    master   21h   v1.17.6
    node3   Ready    master   21h   v1.17.6
    node4   Ready    worker   21h   v1.17.6
    node5   Ready    worker   21h   v1.17.6
    node6   Ready    worker   21h   v1.17.6
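For anything larger than a handful of nodes, reading the table by eye becomes error-prone. A minimal sketch of an automated check follows. The function name not_ready_nodes is hypothetical, and it assumes the default kubectl get node column layout shown above, with NAME first and STATUS second.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get node` output from stdin and print the
# name of every node whose STATUS column is not exactly "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is reported as well.
not_ready_nodes() {
  # NR > 1 skips the header line; $1 is NAME, $2 is STATUS.
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Used as `kubectl get node | not_ready_nodes`, it prints nothing when every node is Ready, so an empty result can serve as the success condition in a deployment script.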
