I have just started graduate school with a focus on big data, and I am writing this blog series to record what I learn.

Environment: Ubuntu 18.04, Apache Hadoop

1. Create the hadoop user

sudo useradd -m hadoop -s /bin/bash  # create the hadoop user

sudo passwd hadoop  # set its password

sudo adduser hadoop sudo  # grant administrator (sudo) privileges

sudo su hadoop  # switch to the hadoop user
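Rerunning the commands above on a machine where the account already exists makes `useradd` fail. The following is a minimal sketch of a guard, assuming the standard `id` utility is available; `user_exists` and `ensure_user` are hypothetical helper names introduced only for illustration.

```shell
# Return 0 if the named account exists, non-zero otherwise.
user_exists() {
    id -u "$1" >/dev/null 2>&1
}

# Create the account with a home directory and bash as its login shell,
# but only when it is missing (hypothetical helper; needs sudo rights).
ensure_user() {
    if user_exists "$1"; then
        echo "user $1 already exists"
    else
        sudo useradd -m "$1" -s /bin/bash
    fi
}
```

With these defined, `ensure_user hadoop` could stand in for the first command; setting the password and adding the user to the sudo group remain separate steps.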

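2. Set up passwordless SSH login

sudo apt-get install openssh-server  # install the SSH server; answer Y when prompted

ssh localhost  # log in over SSH (this also creates ~/.ssh)

exit  # leave the SSH session

cd ~/.ssh/  # enter the SSH directory

ssh-keygen -t rsa  # press Enter three times to accept the default file and an empty passphrase

cat ./id_rsa.pub >> ./authorized_keys  # authorize the new passphrase-less key

ssh localhost  # login no longer asks for a password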
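Running the `cat ... >> authorized_keys` step more than once leaves duplicate entries, and OpenSSH with its default StrictModes setting can refuse a key file that other users may write to. The sketch below appends a key only when it is not already listed and then tightens the file mode; `authorize_key` is a hypothetical helper name, and both arguments are plain file paths.

```shell
# Append a public key to an authorized_keys file only if it is not
# already listed, then restrict the file to its owner.
authorize_key() {
    pub_file=$1
    auth_file=$2
    touch "$auth_file"
    # -x: match whole lines, -F: fixed strings, -f: read patterns from file
    if ! grep -qxF -f "$pub_file" "$auth_file"; then
        cat "$pub_file" >> "$auth_file"
    fi
    chmod 600 "$auth_file"
}
```

For the setup in this post the call would be `authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys`. If the interactive prompts are unwanted, `ssh-keygen -t rsa -N "" -f ~/.ssh/id_rsa` generates the same kind of key without them.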

3. Install the Java environment

A Linux JDK 8 download shared by another user:

https://pan.baidu.com/s/18IicPYf7W0j-sHBXvfKyyg

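1. cd /usr/local  2. sudo mkdir java  # switch to /usr/local/ and create the java directory

3. sudo cp jdk-8u60-linux-x64.tar.gz /usr/local/java/  # copy the archive into the java directory

4. cd /usr/local/java && sudo tar -zxvf jdk-8u60-linux-x64.tar.gz  # extract it

Note: the jdk-8u60 archive unpacks to jdk1.8.0_60, while the settings below use jdk1.8.0_161. Use the directory name that matches the JDK you actually extracted.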
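Because JAVA_HOME has to name the directory that extraction creates, it can help to read that name from the archive instead of typing it by hand. This is a small sketch, assuming the archive keeps everything under one top-level directory (as Oracle's JDK tarballs do) and that entries do not start with `./`; `archive_top_dir` is a hypothetical helper name.

```shell
# Print the top-level directory stored in a .tar.gz archive, which is
# the directory name that extraction will create.
archive_top_dir() {
    tar -tzf "$1" | head -n 1 | cut -d/ -f1
}
```

For example, `echo "/usr/local/java/$(archive_top_dir jdk-8u60-linux-x64.tar.gz)"` would print the path to use as JAVA_HOME for that archive.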

5. Configure the environment variables

sudo gedit /etc/environment

If your paths match the ones used here, replace the file's contents with:

export JAVA_HOME=/usr/local/java/jdk1.8.0_161

export CLASSPATH=.:$JAVA_HOME/lib:$JAVA_HOME/jre/lib

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:$JAVA_HOME/bin"

Apply the changes:

source /etc/environment

sudo gedit /etc/profile

Append the following at the end:

#set Java environment

export JAVA_HOME=/usr/local/java/jdk1.8.0_161

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=.:$JAVA_HOME/lib:$JRE_HOME/lib:$CLASSPATH

export PATH=$JAVA_HOME/bin:$JRE_HOME/bin:$PATH

Apply the changes:

source /etc/profile

Reboot:

sudo shutdown -r now

java -version

java version "1.8.0_161"
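If `java -version` reports "command not found" or a different version, the usual cause is a JAVA_HOME that does not point at the extracted JDK. The sketch below only checks that a directory contains an executable `bin/java`; `check_java_home` is a hypothetical helper name, not part of the JDK.

```shell
# Succeed only if the given directory looks like a JDK install,
# i.e. it contains an executable bin/java.
check_java_home() {
    if [ -x "$1/bin/java" ]; then
        echo "OK: $1/bin/java"
    else
        echo "missing or not executable: $1/bin/java" >&2
        return 1
    fi
}
```

A call such as `check_java_home "$JAVA_HOME"` can be run as either user to confirm that the path in the profile files is the right one.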

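At this point the root user can run Java. For the hadoop user to run it too, do the following:

sudo chmod -R 755 /usr/local/java/jdk1.8.0_161  # set permissions (owner: read/write/execute; others: read/execute)

sudo chown -R hadoop /usr/local/java/jdk1.8.0_161  # make hadoop the owner

Finally, switch to the hadoop user and edit its shell startup file:

gedit ~/.bashrc

Append the following at the end: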

#set Java environment

export JAVA_HOME=/usr/local/java/jdk1.8.0_161

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=.:$JAVA_HOME/lib:$JRE_HOME/lib:$CLASSPATH

export PATH=$JAVA_HOME/bin:$JRE_HOME/bin:$PATH

Apply the changes:

source ~/.bashrc

After a reboot, both users can run Java.
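Since both /etc/profile and ~/.bashrc prepend the same JDK directories, PATH picks up duplicate entries, and it grows again every time a file is sourced. This does no harm, but one possible way to avoid it is a guard like the sketch below; `path_prepend` is a hypothetical helper name.

```shell
# Prepend a directory to PATH unless it is already present, so that
# sourcing the same file repeatedly does not keep growing PATH.
path_prepend() {
    case ":$PATH:" in
        *":$1:"*) ;;              # already on PATH, do nothing
        *) PATH="$1:$PATH" ;;
    esac
}
```

In a startup file, `path_prepend "$JAVA_HOME/bin"` followed by `export PATH` would then replace the plain `export PATH=$JAVA_HOME/bin:...` line.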

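4. Install Hadoop

Hadoop download location: http://mirrors.hust.edu.cn/apache/hadoop/common/

sudo tar -zxvf hadoop-2.9.2.tar.gz -C /usr/local  # extract into /usr/local

cd /usr/local

sudo mv hadoop-2.9.2 hadoop  # rename the directory to hadoop

sudo chown -R hadoop ./hadoop  # make the hadoop user the owner

sudo gedit /etc/profile  # configure the Hadoop environment variables

Append the following at the bottom, then save: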

export HADOOP_HOME=/usr/local/hadoop

export CLASSPATH=$($HADOOP_HOME/bin/hadoop classpath):$CLASSPATH

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

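source /etc/profile  # apply the changes

hadoop version  # verify that the settings took effect

Do the same for the hadoop user's shell:

gedit ~/.bashrc  # configure the Hadoop environment variables

Append the following at the bottom, then save: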

export HADOOP_HOME=/usr/local/hadoop

export CLASSPATH=$($HADOOP_HOME/bin/hadoop classpath):$CLASSPATH

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

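source ~/.bashrc  # apply the changes

hadoop version  # verify that the settings took effect

5. Pseudo-distributed (single-node) configuration

Hadoop's four main configuration files need little introduction. The paths below are relative to /usr/local/hadoop:

etc/hadoop/core-site.xml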

etc/hadoop/mapred-site.xml

etc/hadoop/hdfs-site.xml

etc/hadoop/yarn-site.xml

gedit etc/hadoop/core-site.xml

Add:

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop/tmp</value>

<description>Abase for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>
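After editing, it is worth confirming that the file really contains the intended value. The sketch below pulls a property out of a `*-site.xml` file with awk; it assumes each `<name>` and `<value>` element sits on its own line, as in the listing above, and it is not a real XML parser. `get_prop` is a hypothetical helper name.

```shell
# Print the <value> that follows a given <name> in a Hadoop-style
# *-site.xml file. $1 is the file, $2 is the property name.
get_prop() {
    awk -v key="$2" '
        index($0, "<name>" key "</name>") { found = 1; next }
        found && /<value>/ {
            sub(/.*<value>/, "")      # drop everything up to the value
            sub(/<\/value>.*/, "")    # drop the closing tag and the rest
            print
            exit
        }
    ' "$1"
}
```

Run from /usr/local/hadoop, `get_prop etc/hadoop/core-site.xml fs.defaultFS` should print the URI entered above. Once Hadoop is on PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves.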

gedit etc/hadoop/hdfs-site.xml

Add:

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/data</value>

</property>

</configuration>
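Hadoop normally creates the name and data directories itself when the NameNode is formatted and the DataNode starts. Creating them beforehand as the hadoop user is optional, but it surfaces permission problems under /usr/local/hadoop early. This is a minimal sketch; `make_dfs_dirs` is a hypothetical helper name.

```shell
# Create the NameNode and DataNode storage directories under a base
# directory (this post uses /usr/local/hadoop/tmp as the base).
make_dfs_dirs() {
    mkdir -p "$1/dfs/name" "$1/dfs/data"
}
```

For the paths configured above the call would be `make_dfs_dirs /usr/local/hadoop/tmp`.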

gedit etc/hadoop/mapred-site.xml

Add:

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>
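Hadoop 2.x releases ship this file as `mapred-site.xml.template`, so `mapred-site.xml` may not exist until it is created. The sketch below copies the template only when the real file is missing, so an existing configuration is never overwritten; `ensure_mapred_site` is a hypothetical helper name.

```shell
# Create mapred-site.xml from the bundled template if it is missing.
# $1 is the configuration directory, e.g. /usr/local/hadoop/etc/hadoop.
ensure_mapred_site() {
    if [ ! -f "$1/mapred-site.xml" ] && [ -f "$1/mapred-site.xml.template" ]; then
        cp "$1/mapred-site.xml.template" "$1/mapred-site.xml"
    fi
}
```

Calling `ensure_mapred_site /usr/local/hadoop/etc/hadoop` before opening the file in the editor gives a starting point that already has the XML header.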

gedit etc/hadoop/yarn-site.xml

Add:

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

gedit etc/hadoop/hadoop-env.sh  # configure the JDK

Add: export JAVA_HOME=/usr/local/java/jdk1.8.0_161
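The same change can be made without an editor. The sketch below replaces an existing `export JAVA_HOME=` line or appends one if none is present; it assumes GNU sed (as shipped with Ubuntu) for the `-i` option, and `set_java_home` is a hypothetical helper name.

```shell
# Replace the existing "export JAVA_HOME=..." line in a hadoop-env.sh
# file, or append one if the file has no such line.
# $1 is the file, $2 is the JDK directory.
set_java_home() {
    env_file=$1
    jdk_dir=$2
    if grep -q '^export JAVA_HOME=' "$env_file"; then
        # "|" is the sed delimiter, so jdk_dir must not contain "|" or "&"
        sed -i "s|^export JAVA_HOME=.*|export JAVA_HOME=$jdk_dir|" "$env_file"
    else
        echo "export JAVA_HOME=$jdk_dir" >> "$env_file"
    fi
}
```

For this post the call would be `set_java_home etc/hadoop/hadoop-env.sh /usr/local/java/jdk1.8.0_161`, run from /usr/local/hadoop.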

Format the NameNode:

./bin/hdfs namenode -format

Start the NameNode and DataNode:

./sbin/start-dfs.sh

To stop everything: ./sbin/stop-all.sh

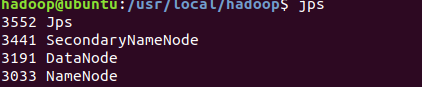

Check the result:

jps
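Reading the `jps` listing by eye works, but a missing daemon is easy to overlook. The sketch below reads `jps` output on standard input and prints every expected process name that is absent; `missing_daemons` is a hypothetical helper name. Names are compared as whole words, so `NameNode` is not satisfied by `SecondaryNameNode`.

```shell
# Read `jps` output on stdin and print each expected daemon name
# (given as arguments) that does not appear in it.
# Returns non-zero if anything is missing.
missing_daemons() {
    jps_output=$(cat)
    status=0
    for name in "$@"; do
        # jps prints "<pid> <main class>", one process per line
        if ! printf '%s\n' "$jps_output" | awk '{print $2}' | grep -qx -- "$name"; then
            echo "$name"
            status=1
        fi
    done
    return $status
}
```

After `start-dfs.sh`, `jps | missing_daemons NameNode DataNode SecondaryNameNode` should print nothing. Once YARN and the history server are running, `ResourceManager`, `NodeManager`, and `JobHistoryServer` can be added to the list.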

Grant permissions on sbin:

sudo chmod 777 sbin

Start the ResourceManager and the job history server:

./sbin/start-yarn.sh

./sbin/mr-jobhistory-daemon.sh start historyserver

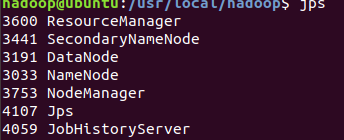

Check the result:

jps

Stop the ResourceManager and the job history server:

./sbin/stop-yarn.sh

./sbin/mr-jobhistory-daemon.sh stop historyserver

Done.
