1. Introduction

2. Authentication

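- There are currently eight authentication methods. One or more of them can be enabled at the same time; as soon as any one method authenticates the request, the remaining methods are skipped. A typical setup enables two: X509 Client Certs and Service Account Tokens.

- A Kubernetes cluster has two kinds of users: Service Accounts, which Kubernetes manages, and normal users (User Accounts). A user account in k8s is not an account in the usual sense: no User object is stored in the cluster, and the identity exists only in the credential presented with each request.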
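The "first success wins" behaviour described above can be sketched in a few lines of Python. This is only an illustrative model, not the API server's implementation: the two authenticator functions, the request dictionary keys, and the token table are all hypothetical stand-ins.

```python
from typing import Callable, List, Optional, Tuple

# An authenticator returns a username, or None if it cannot identify the caller.
Authenticator = Callable[[dict], Optional[str]]


def x509_client_cert(request: dict) -> Optional[str]:
    # Hypothetical stand-in: use the client certificate's Common Name as the username.
    return request.get("client_cert_cn")


def service_account_token(request: dict) -> Optional[str]:
    # Hypothetical stand-in: look the bearer token up in a fixed table.
    known_tokens = {"token-abc": "system:serviceaccount:default:admin"}
    return known_tokens.get(request.get("bearer_token"))


def authenticate(request: dict, chain: List[Authenticator]) -> Tuple[Optional[str], List[str]]:
    """Try each enabled authenticator in order and stop at the first success."""
    tried = []
    for authenticator in chain:
        tried.append(authenticator.__name__)
        user = authenticator(request)
        if user is not None:
            return user, tried  # later authenticators are never consulted
    return None, tried  # nobody recognised the caller


CHAIN = [x509_client_cert, service_account_token]
```

A request carrying both a certificate and a token is identified by the certificate alone, because the token authenticator is never reached.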

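UserAccount vs. ServiceAccount:

- User accounts are for people. Service accounts are for processes running in pods.

- User accounts are global: their names are unique across all namespaces of the cluster, and future user resources will not be namespaced. Service accounts are namespaced.

- Cluster user accounts are often synced from a corporate database; creating one requires special privileges and involves complex business processes. Service accounts are meant to be lightweight, so that cluster users can create one for a specific task (the principle of least privilege).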

2.1 ServiceAccount (sa)

1. Create a ServiceAccount (sa)

$ kubectl create serviceaccount admin

serviceaccount/admin created

$ kubectl describe sa admin # k8s has automatically generated credentials for the account, but it has no permissions yet

Name: admin

Namespace: default

Labels: <none>

Annotations: <none>

Image pull secrets: <none>

Mountable secrets: admin-token-6xfpp

Tokens: admin-token-6xfpp

Events: <none>

2. Add secrets to the ServiceAccount

$ kubectl patch serviceaccount admin -p '{"imagePullSecrets": [{"name": "myregistrykey"}]}'

3. Bind the ServiceAccount to a pod:

[root@server2 ~]# cat pod3.yaml

apiVersion: v1
kind: Pod
metadata:
  name: mypod
spec:
  containers:
  - name: game2048
    image: reg.westos.org/westos/game2048
  #imagePullSecrets:
  # - name: myregistrykey
  serviceAccountName: admin

[root@server2 ~]# kubectl apply -f pod3.yaml

Attaching the registry credentials to the ServiceAccount is much safer than specifying imagePullSecrets directly in the Pod.

2.2 UserAccount

4. Create a UserAccount:

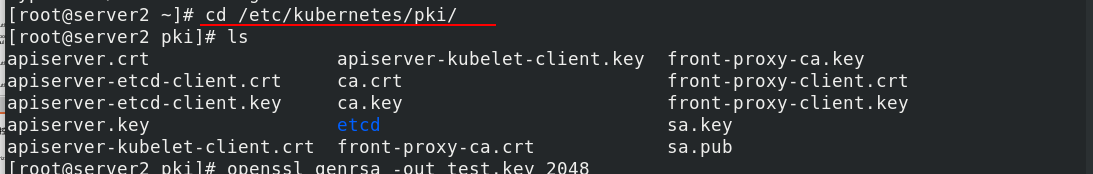

[root@server2 ~]# cd /etc/kubernetes/pki/

[root@server2 ~]# openssl genrsa -out test.key 2048

[root@server2 ~]# openssl req -new -key test.key -out test.csr -subj "/CN=test"

[root@server2 ~]# openssl x509 -req -in test.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out test.crt -days 365

[root@server2 ~]# openssl x509 -in test.crt -text -noout # inspect the certificate

[root@server2 ~]# kubectl config set-credentials test --client-certificate=/etc/kubernetes/pki/test.crt --client-key=/etc/kubernetes/pki/test.key --embed-certs=true

[root@server2 ~]# kubectl config view

[root@server2 ~]# kubectl config set-context test@kubernetes --cluster=kubernetes --user=test

[root@server2 ~]# kubectl config use-context test@kubernetes

[root@server2 ~]# kubectl get pod

Error from server (Forbidden): pods is forbidden: User "test" cannot list resource "pods" in API group "" in the namespace "default"

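At this point the user is authenticated but has no permission to operate on cluster resources, so authorization must be granted next.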
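The error message names the user "test" because, with X509 client certificates, Kubernetes takes the username from the certificate subject's Common Name (CN) and group memberships from any Organization (O) fields. The sketch below mimics that mapping for an openssl-style `-subj` string; the function name is made up for illustration, and real certificates are parsed from their encoded form rather than from a string like this.

```python
from typing import List, Tuple


def identity_from_subject(subject: str) -> Tuple[str, List[str]]:
    """Split a subject such as "/CN=test/O=dev" into (username, groups)."""
    username = ""
    groups = []
    for part in subject.strip("/").split("/"):
        key, _, value = part.partition("=")
        if key == "CN":
            username = value      # CN becomes the username
        elif key == "O":
            groups.append(value)  # each O becomes a group
    return username, groups


# The subject used in the openssl req command above carries a CN only.
print(identity_from_subject("/CN=test"))
# A hypothetical subject with two organizations, for comparison.
print(identity_from_subject("/CN=test/O=dev/O=ops"))
```

Since the certificate created above has no O field, the user belongs to no custom group, and permissions have to be bound to the username itself.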

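3. Authorization

Role-based access control (RBAC) authorization

- RBAC lets administrators configure authorization policies dynamically through the Kubernetes API. In RBAC, users are associated with permissions through roles.

- RBAC rules only grant permissions; there are no deny rules. You therefore only need to define what a user is allowed to do.

- RBAC defines four resource types: Role, ClusterRole, RoleBinding, and ClusterRoleBinding.

3.0 RBAC (Role-Based Access Control) (the most important mode, used as the example here)

The three basic concepts of RBAC:

- Subject: the entity a rule applies to. k8s has three kinds of subjects: user, group, and serviceAccount.

- Role: a set of rules that defines which operations are permitted on Kubernetes API objects.

- RoleBinding: defines the binding between a subject and a role.

Role and ClusterRole

- A Role is a collection of permissions and can only grant access to resources within a single namespace.

- A ClusterRole is similar to a Role but applies across the whole cluster.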
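Because RBAC is allow-only, evaluating a role amounts to asking whether any one of its rules matches the request. The following Python sketch models that check under simplifying assumptions: it ignores resourceNames, subresources, and non-resource URLs, and the `pod_reader` rule set is a hypothetical example rather than a role from this article.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PolicyRule:
    api_groups: List[str]
    resources: List[str]
    verbs: List[str]


def _matches(allowed: List[str], value: str) -> bool:
    # "*" is the wildcard that matches everything.
    return "*" in allowed or value in allowed


def rule_allows(rule: PolicyRule, api_group: str, resource: str, verb: str) -> bool:
    return (_matches(rule.api_groups, api_group)
            and _matches(rule.resources, resource)
            and _matches(rule.verbs, verb))


def role_allows(rules: List[PolicyRule], api_group: str, resource: str, verb: str) -> bool:
    # Additive semantics: one matching rule is enough, and no rule can deny.
    # A request that matches nothing is simply not allowed.
    return any(rule_allows(rule, api_group, resource, verb) for rule in rules)


# Hypothetical read-only role on pods in the core ("") API group.
pod_reader = [PolicyRule(api_groups=[""], resources=["pods"], verbs=["get", "watch", "list"])]

print(role_allows(pod_reader, "", "pods", "list"))
print(role_allows(pod_reader, "", "pods", "delete"))
```

The first call matches the single rule; the second does not, so the delete is refused without any explicit deny having been written.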

3.1 Role and RoleBinding

1. Create a Role

kubectl config view ## list the configured users and contexts

kubectl config use-context kubernetes-admin@kubernetes ## switch back to the admin context

[root@server2 ~]# mkdir rbac

[root@server2 ~]# cd rbac/

[root@server2 rbac]# vim role.yaml

[root@server2 rbac]# kubectl apply -f role.yaml

[root@server2 rbac]# cat role.yaml

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: default
  name: myrole
rules:
- apiGroups: [""]
  resources: ["pods"]
  verbs: ["get", "watch", "list", "create", "update", "patch", "delete"]

[root@server2 rbac]# kubectl describe role myrole

Name: myrole

Labels: <none>

Annotations: <none>

PolicyRule:
  Resources  Non-Resource URLs  Resource Names  Verbs
  ---------  -----------------  --------------  -----
  pods       []                 []              [get watch list create update patch delete]

2. Create a RoleBinding

[root@server2 rbac]# vim role.yaml

[root@server2 rbac]# kubectl apply -f role.yaml

[root@server2 rbac]# kubectl get rolebindings.rbac.authorization.k8s.io

NAME             ROLE          AGE
test-read-pods   Role/myrole   34s

[root@server2 rbac]# kubectl describe rolebindings.rbac.authorization.k8s.io

[root@server2 rbac]# kubectl config use-context test@kubernetes

[root@server2 rbac]# kubectl get pod ## the user is now allowed to access pods

NAME    READY   STATUS    RESTARTS   AGE
demo    1/1     Running   1          4h49m
mypod   1/1     Running   0          58m

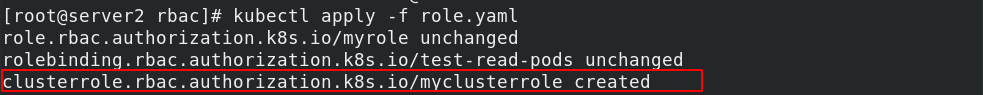

3.2 ClusterRole and RoleBinding

ClusterRole example

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: myclusterrole
rules:
- apiGroups: [""]
  resources: ["pods"]
  verbs: ["get", "watch", "list", "delete", "create", "update"]
- apiGroups: ["extensions", "apps"]
  resources: ["deployments"]
  verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]

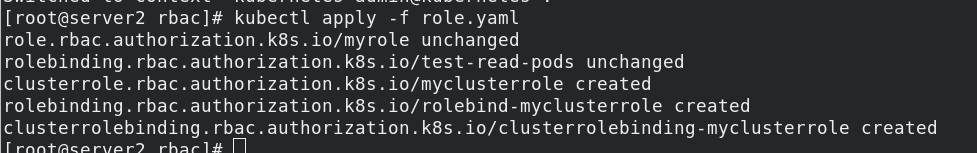

Bind the ClusterRole with a RoleBinding:

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: rolebind-myclusterrole
  namespace: default
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: myclusterrole
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: test

3.3 ClusterRole and ClusterRoleBinding

Create a ClusterRoleBinding:

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: clusterrolebinding-myclusterrole
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: myclusterrole
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: test
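Sections 3.2 and 3.3 bind the same ClusterRole in two different ways, and the difference is purely one of scope: a RoleBinding grants the role's permissions only inside the binding's own namespace, while a ClusterRoleBinding grants them in every namespace. The sketch below models just that scoping decision; the `Binding` class and `binding_applies` function are hypothetical simplifications that handle a single user subject and do not check the role's rules.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Binding:
    subject: str              # username the binding refers to
    role: str                 # name of the referenced ClusterRole
    namespace: Optional[str]  # namespace of a RoleBinding; None models a ClusterRoleBinding


def binding_applies(binding: Binding, user: str, request_namespace: Optional[str]) -> bool:
    """Return True if the binding's role is in effect for this user and namespace."""
    if binding.subject != user:
        return False
    if binding.namespace is None:
        return True  # ClusterRoleBinding: valid in every namespace
    return binding.namespace == request_namespace  # RoleBinding: own namespace only


# Mirrors of the two manifests above.
role_binding = Binding(subject="test", role="myclusterrole", namespace="default")
cluster_binding = Binding(subject="test", role="myclusterrole", namespace=None)

print(binding_applies(role_binding, "test", "kube-system"))
print(binding_applies(cluster_binding, "test", "kube-system"))
```

With only the RoleBinding in place, user test can work with pods and deployments in default but not in kube-system; the ClusterRoleBinding lifts that restriction.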

3.4 Additional notes

Kubernetes also has the concept of a user group (Group).

The built-in username of a ServiceAccount is:

system:serviceaccount:<Namespace name>:<ServiceAccount name>

The built-in group name for the ServiceAccounts of one namespace is:

system:serviceaccounts:<Namespace name>

Example 1: all ServiceAccounts in mynamespace

subjects:
- kind: Group
  name: system:serviceaccounts:mynamespace
  apiGroup: rbac.authorization.k8s.io

Example 2: all ServiceAccounts in the entire cluster

subjects:
- kind: Group
  name: system:serviceaccounts
  apiGroup: rbac.authorization.k8s.io
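To make the naming scheme concrete, here is a small Python helper that derives the username and the two ServiceAccount groups from a namespace and an account name. The username includes the namespace (`system:serviceaccount:<namespace>:<name>`), since ServiceAccounts are namespaced. The function name is hypothetical, and the sketch leaves out any other groups the API server may attach to authenticated requests.

```python
from typing import List, Tuple


def service_account_identity(namespace: str, name: str) -> Tuple[str, List[str]]:
    """Return the (username, groups) under which a ServiceAccount is seen by RBAC."""
    username = f"system:serviceaccount:{namespace}:{name}"
    groups = [
        "system:serviceaccounts",              # every ServiceAccount in the cluster
        f"system:serviceaccounts:{namespace}", # every ServiceAccount in this namespace
    ]
    return username, groups


# The "admin" ServiceAccount created in the default namespace in section 2.1.
print(service_account_identity("default", "admin"))
```

A binding whose subject is either of the two groups therefore covers this account without naming it individually.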

Kubernetes also ships four predefined ClusterRoles that can be used directly:

- cluster-admin

- admin

- edit

- view

Example (best practice):

kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: readonly-default
subjects:
- kind: ServiceAccount
  name: default
  namespace: default
roleRef:
  kind: ClusterRole
  name: view
  apiGroup: rbac.authorization.k8s.io

4. Try to understand this manifest

[root@server2 nfs-client]# cat nfs-client-provisioner.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: nfs-client-provisioner
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: nfs-client-provisioner
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: nfs-client-provisioner
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: nfs-subdir-external-provisioner:v4.0.0
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 172.25.200.1
            - name: NFS_PATH
              value: /nfsdata
      volumes:
        - name: nfs-client-root
          nfs:
            server: 172.25.200.1
            path: /nfsdata
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  archiveOnDelete: "true"
