While working with ES + Spark recently, I ran into the following problem:

Multiple ES-Hadoop versions detected in the classpath; please use only one

19/08/14 05:03:53 WARN scheduler.TaskSetManager: Lost task 0.0 in stage 12.0 (TID 632, datanode003.dev): java.lang.Error: Multiple ES-Hadoop versions detected in the classpath; please use only one

jar:file:/data/hadoop/local/usercache/hadoop/appcache/application_1564565609416_0128/container_e03_1564565609416_0128_01_000002/***-jar-with-dependencies.jar

jar:file:/data/hadoop-2.7.2/local/usercache/hadoop/appcache/application_1564565609416_0128/container_e03_1564565609416_0128_01_000002/***-jar-with-dependencies.jar

at org.elasticsearch.hadoop.util.Version.<clinit>(Version.java:73)

at org.elasticsearch.hadoop.rest.RestService.createWriter(RestService.java:573)

at org.elasticsearch.spark.rdd.EsRDDWriter.write(EsRDDWriter.scala:58)

at org.elasticsearch.spark.sql.EsSparkSQL$$anonfun$saveToEs$1.apply(EsSparkSQL.scala:101)

at org.elasticsearch.spark.sql.EsSparkSQL$$anonfun$saveToEs$1.apply(EsSparkSQL.scala:101)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:70)

at org.apache.spark.scheduler.Task.run(Task.scala:85)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:274)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

19/08/14 05:03:53 WARN scheduler.TaskSetManager: Lost task 1.0 in stage 12.0 (TID 633, datanode003.dev): java.lang.NoClassDefFoundError: Could not initialize class org.elasticsearch.hadoop.util.Version

at org.elasticsearch.hadoop.rest.RestService.createWriter(RestService.java:573)

at org.elasticsearch.spark.rdd.EsRDDWriter.write(EsRDDWriter.scala:58)

at org.elasticsearch.spark.sql.EsSparkSQL$$anonfun$saveToEs$1.apply(EsSparkSQL.scala:101)

at org.elasticsearch.spark.sql.EsSparkSQL$$anonfun$saveToEs$1.apply(EsSparkSQL.scala:101)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:70)

at org.apache.spark.scheduler.Task.run(Task.scala:85)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:274)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Here is the key part:

java.lang.Error: Multiple ES-Hadoop versions detected in the classpath; please use only one

jar:file:/data/hadoop/local/usercache/hadoop/appcache/application_1564565609416_0128/container_e03_1564565609416_0128_01_000002/***-jar-with-dependencies.jar

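jar:file:/data/hadoop-2.7.2/local/usercache/hadoop/appcache/application_1564565609416_0128/container_e03_1564565609416_0128_01_000002/***-jar-with-dependencies.jar

Taken literally, the message says the conflict comes from the same jar appearing under two different paths (the *** stands in for the package name). The real problem, though, is a misconfigured symlink that makes the same jar show up twice. The two paths differ only in /data/hadoop versus /data/hadoop-2.7.2, one of which is a symlink to the other. When the classpath is checked (the stack trace shows the error being thrown from org.elasticsearch.hadoop.util.Version), the jar is found under both the symlink path and the real path, so it is treated as two jars and the error is raised.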
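To confirm that two classpath entries really are the same physical file, one option is to resolve each entry to its real path and group the entries that collide. The following is a minimal sketch, not part of ES-Hadoop or YARN. The function names `group_by_real_path` and `symlink_duplicates` are made up for illustration, and the script assumes it runs on the node where the paths exist.

```python
import os
from collections import defaultdict
from urllib.parse import unquote, urlparse


def group_by_real_path(jar_urls):
    """Group classpath URLs by the physical file they resolve to (hypothetical helper)."""
    groups = defaultdict(list)
    for url in jar_urls:
        # Strip the leading "jar:" so the remainder parses as a file: URL.
        file_url = url[len("jar:"):] if url.startswith("jar:") else url
        # Drop any "!/entry" suffix that jar URLs may carry.
        path = unquote(urlparse(file_url).path).split("!", 1)[0]
        real = os.path.realpath(path)  # follows symlinks in every path component
        if url not in groups[real]:
            groups[real].append(url)
    return dict(groups)


def symlink_duplicates(jar_urls):
    """Keep only the groups where several distinct URLs point at one physical file."""
    return {real: urls
            for real, urls in group_by_real_path(jar_urls).items()
            if len(urls) > 1}
```

Passing the two `jar:file:` lines from the error message to `symlink_duplicates` on the affected DataNode should return a single group if the diagnosis above is right: one real path with both URLs attached.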

Solution:

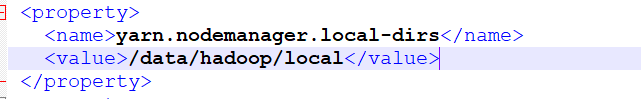

Open Hadoop's yarn-site.xml and check whether the NodeManager setting yarn.nodemanager.local-dirs is configured with a symlinked path.

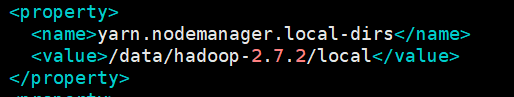

If it is, change the value to the real path and restart the NodeManager so the change takes effect.
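The check described above can also be scripted. This is a rough sketch under a few assumptions: yarn-site.xml uses the standard Hadoop `<configuration>/<property>/<name>/<value>` layout, the value is a comma-separated list of plain local paths (no `file://` URIs or `${...}` variables), and the helper names `read_local_dirs` and `find_symlinked_dirs` are invented for this example.

```python
import os
import xml.etree.ElementTree as ET

LOCAL_DIRS_KEY = "yarn.nodemanager.local-dirs"


def read_local_dirs(yarn_site_path):
    """Return the directories listed under yarn.nodemanager.local-dirs."""
    root = ET.parse(yarn_site_path).getroot()
    for prop in root.iter("property"):
        if prop.findtext("name", "").strip() == LOCAL_DIRS_KEY:
            value = prop.findtext("value", "")
            return [d.strip() for d in value.split(",") if d.strip()]
    return []  # the property is not set in this file


def find_symlinked_dirs(local_dirs):
    """Map every directory that passes through a symlink to its real path."""
    symlinked = {}
    for d in local_dirs:
        real = os.path.realpath(d)
        # abspath only normalizes; realpath also resolves symlinks.
        if real != os.path.abspath(d):
            symlinked[d] = real
    return symlinked
```

Running `find_symlinked_dirs(read_local_dirs(path_to_yarn_site))` on a NodeManager host returns an empty dict when every entry is already a real path. Any entry it does return is a candidate to replace with the real path shown next to it.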


Hope this helps.

An answer from an international site is attached as well:
