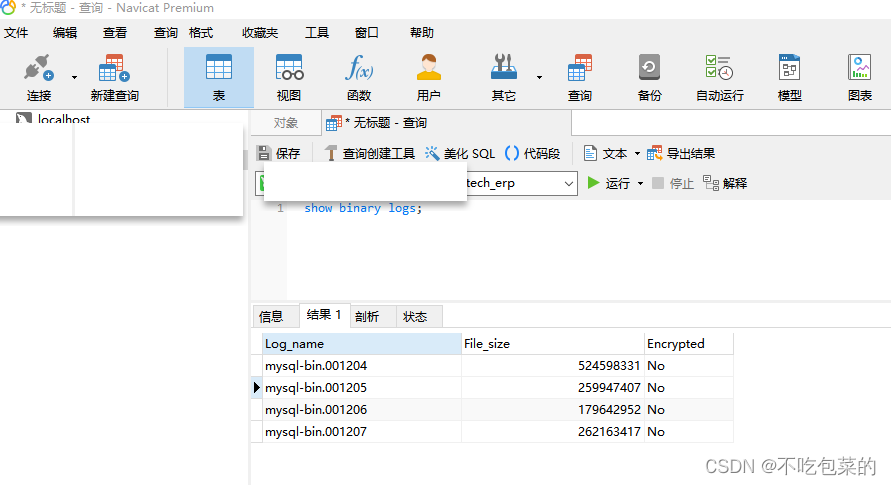

1. Run the following SQL to list the binlog file names:

```sql
show binary logs;
```

For example:

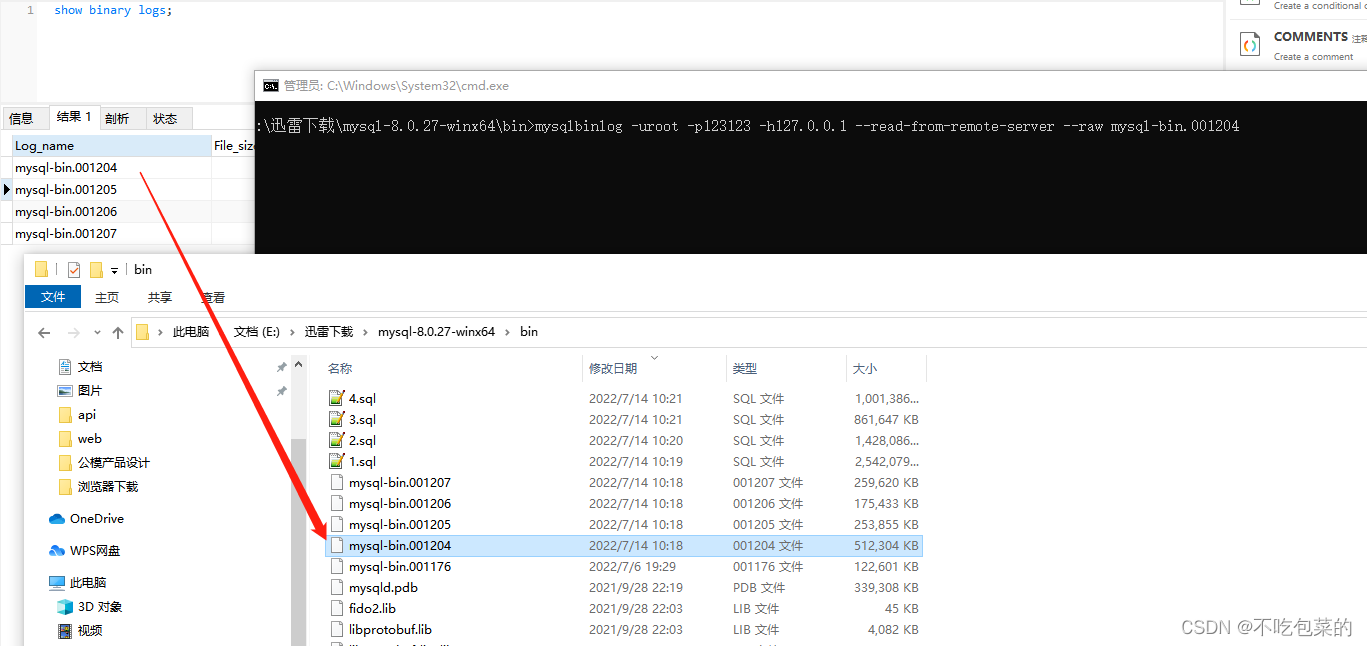

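2. Download a MySQL client from the official site (https://dev.mysql.com/downloads/installer/); it provides the `mysqlbinlog` utility.

Export the binlog in raw format. Go to the `mysql/bin` directory and run:

```
mysqlbinlog -u{username} -p{password} -h{host} --read-from-remote-server --raw mysql-bin.XXX
```

For example:

```
mysqlbinlog -u{username} -p{password} -h{host} --read-from-remote-server --raw mysql-bin.001204
```

This downloads the raw binlog file into the current directory: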

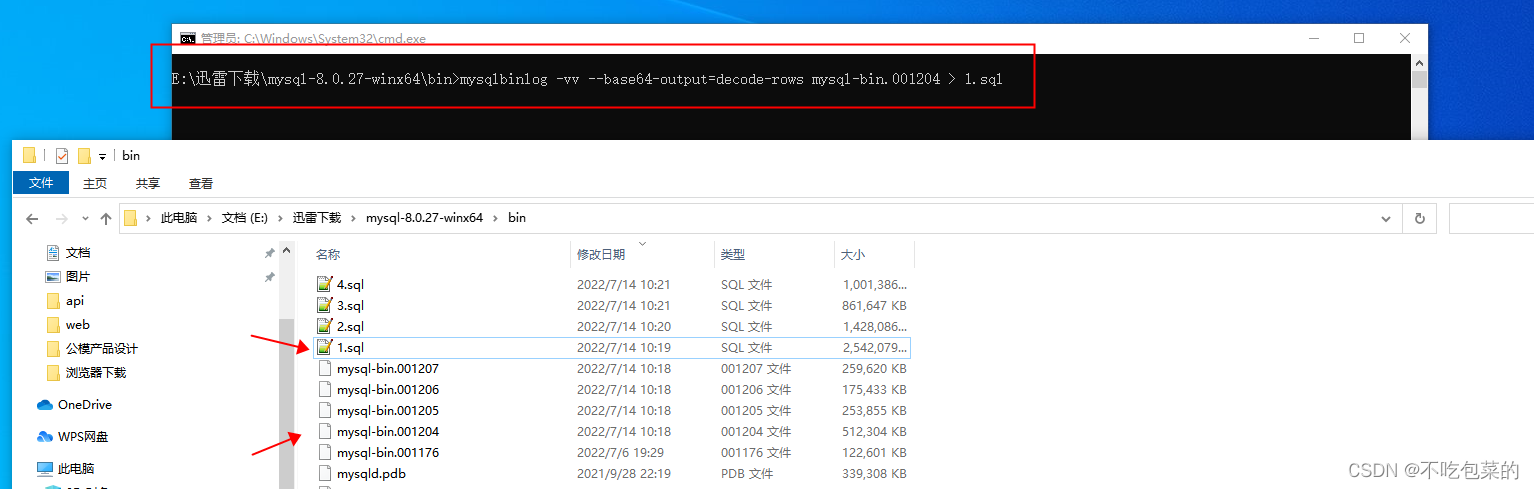
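If several binlog files are needed, the commands can be generated in a loop. The sketch below is a hypothetical helper, not part of the original workflow: `buildBinlogCommands` builds the raw-download command above and the decode command from the next step for each file, naming the decoded output after the numeric suffix (`XXX.sql`). It only builds and prints the strings; note that `escapeshellarg` quotes with single quotes on Linux and double quotes on Windows.

```php
<?php
// Hypothetical helper: builds the step-2 download command and the step-3
// decode command for each binlog file name. Nothing is executed here.
function buildBinlogCommands(string $user, string $password, string $host, array $binlogs): array
{
    $commands = [];
    foreach ($binlogs as $binlog) {
        // "mysql-bin.001204" -> "001204", used as the XXX in XXX.sql
        $suffix = pathinfo($binlog, PATHINFO_EXTENSION);
        // Step 2: download the raw binlog from the remote server.
        $commands[] = sprintf(
            'mysqlbinlog -u%s -p%s -h%s --read-from-remote-server --raw %s',
            escapeshellarg($user),
            escapeshellarg($password),
            escapeshellarg($host),
            escapeshellarg($binlog)
        );
        // Step 3: decode row events into readable pseudo-SQL.
        $commands[] = sprintf(
            'mysqlbinlog -vv --base64-output=decode-rows %s > %s',
            escapeshellarg($binlog),
            escapeshellarg($suffix . '.sql')
        );
    }
    return $commands;
}

// Example: print the commands, then run them from the mysql/bin directory.
foreach (buildBinlogCommands('user', 'password', 'db.example.com', ['mysql-bin.001204', 'mysql-bin.001205']) as $cmd) {
    echo $cmd, PHP_EOL;
}
```

If the decoded files are named this way, adjust `$files` in step 4 to match.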

3.格式化binlog

mysqlbinlog -vv --base64-output=decode-rows mysql-bin.XXX > XXX.sql

举个例子

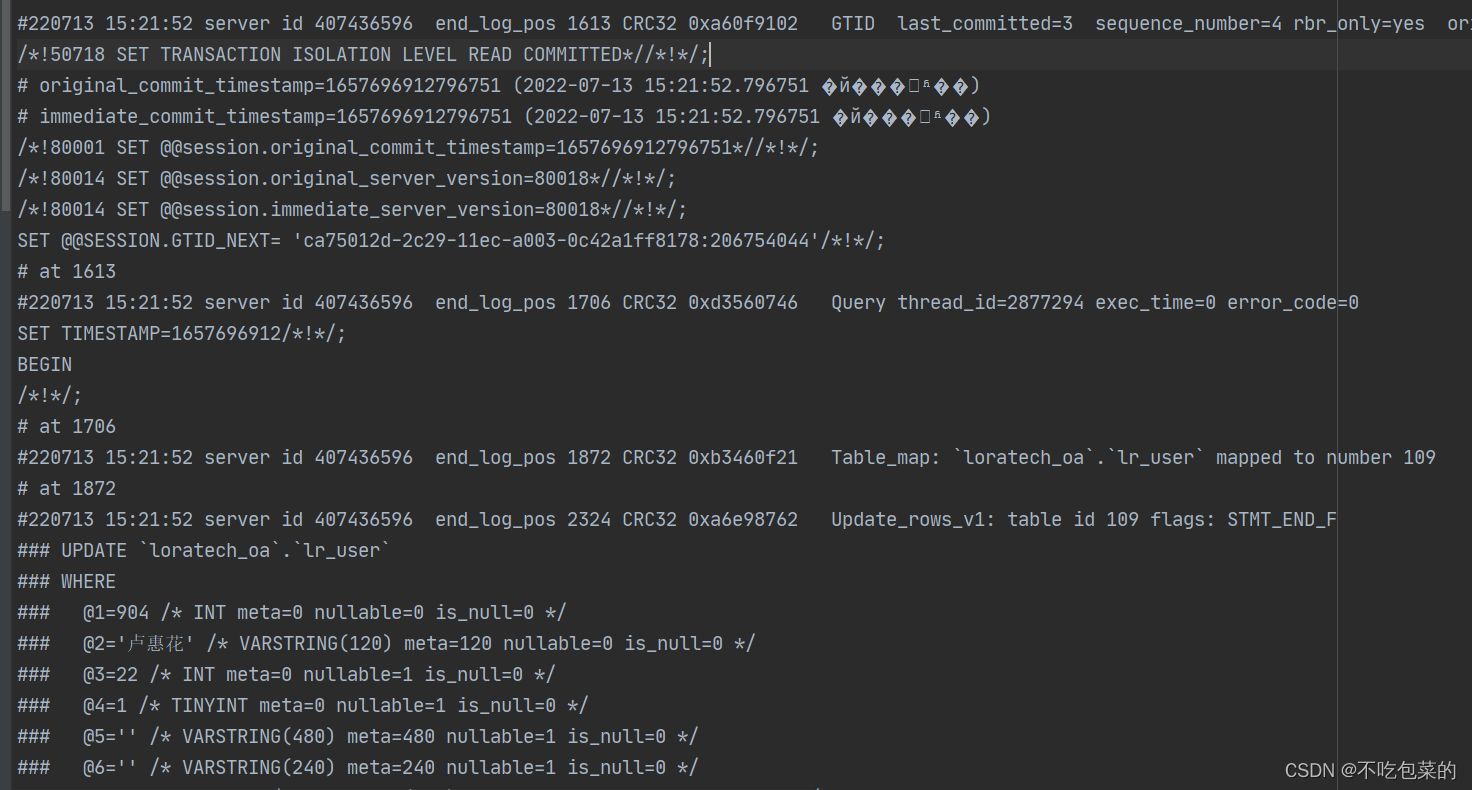

得到的数据大概长这样子

4. Split the binlog into transactions and keep only the transactions that match the keywords:

```php
<?php
ini_set('max_execution_time', 0);
ini_set('memory_limit', -1);

// Decoded binlog files produced in step 3.
$files = [
    '1.sql',
    '2.sql',
    '3.sql',
    '4.sql',
];

$minWeight = 1; // minimum total weight for a transaction to be kept

// Each entry: [substring to look for, weight added per matching line]
$weightList = [
    [
        "`loratech_erp`.`lr_custom_translation`", // content to match
        1, // weight
    ],
];

foreach ($files as $file) {
    $handle = fopen($file, 'r');
    $i = 0;
    // Matching transactions are written to ./<file>_dir/<n>.sql
    $dir = './' . $file . '_dir';
    if (!file_exists($dir)) {
        mkdir($dir);
    }
    while (!feof($handle) && $row = fgets($handle)) {
        $rows = [];
        $rowWeight = 0;
        if (strpos($row, 'BEGIN') !== false) {
            $row = trim($row);
            // Collect lines until the transaction's COMMIT.
            do {
                $rows[] = $row;
                $row = trim(fgets($handle));
                foreach ($weightList as $weightItem) {
                    list($wStr, $w) = $weightItem;
                    if (strpos($row, $wStr) !== false) {
                        $rowWeight += $w;
                    }
                }
                // Customize as needed, e.g. drop transactions whose @1 (id)
                // is not in an $ids array you define:
                // if ($rowWeight && preg_match("/### @1=(.*) \/\* INT meta=0 nullable=0 is_null=0 /", $row, $matches)) {
                //     if (!in_array($matches[1], $ids)) {
                //         $rowWeight = -99;
                //     }
                // }
            } while (!feof($handle) && strpos($row, 'COMMIT/*!*/;') === false);
            $rows[] = $row; // the COMMIT line
            if ($rowWeight >= $minWeight) {
                file_put_contents("{$dir}/{$i}.sql", implode("\r\n", $rows));
                $i++;
            }
        }
    }
    fclose($handle);
}
```
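The same filtering can be written as a pure function over an array of lines, which makes it easy to try the weight rules on a small hand-made sample before running them on large files. A sketch, assuming (as in the script above) that a transaction starts at a line containing `BEGIN` and ends at `COMMIT/*!*/;`; the function name `filterTransactions` is hypothetical.

```php
<?php
// Hypothetical pure-function version of the step-4 filter: groups lines
// from BEGIN to COMMIT/*!*/; and keeps groups whose total weight reaches
// $minWeight. Weights are counted on every line after BEGIN, as above.
function filterTransactions(array $lines, array $weightList, int $minWeight): array
{
    $kept = [];
    $current = null; // lines of the open transaction, or null between transactions
    $weight = 0;
    foreach ($lines as $line) {
        $line = trim($line);
        if ($current === null) {
            if (strpos($line, 'BEGIN') !== false) {
                $current = [$line];
                $weight = 0;
            }
            continue;
        }
        $current[] = $line;
        foreach ($weightList as [$needle, $w]) {
            if (strpos($line, $needle) !== false) {
                $weight += $w;
            }
        }
        if (strpos($line, 'COMMIT/*!*/;') !== false) {
            if ($weight >= $minWeight) {
                $kept[] = $current;
            }
            $current = null;
        }
    }
    return $kept;
}

// Example with a tiny sample; real input could come from file('1.sql').
$sample = [
    "BEGIN",
    "### UPDATE `loratech_erp`.`lr_custom_translation`",
    "COMMIT/*!*/;",
    "BEGIN",
    "### UPDATE `other_db`.`other_table`",
    "COMMIT/*!*/;",
];
$kept = filterTransactions($sample, [["`loratech_erp`.`lr_custom_translation`", 1]], 1);
// $kept holds only the first transaction.
```

`file()` loads a whole file into memory, so for very large binlogs the streaming `fgets` loop of the original script is the better fit; the function form is mainly useful for checking the rules.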

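5. Process the data as needed and map the values to column names.

For example, I want to deduplicate both the original rows (the values before the update) and the updated rows, and write them to two separate text files (`first_where.json` and `first_update.json`):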

```php
<?php
ini_set('max_execution_time', 0);
ini_set('memory_limit', -1);

// [0] marks the UPDATE event, [1] marks the before-image ("### WHERE"),
// [2..11] extract columns @1..@10. mysqlbinlog indents column lines
// (e.g. "###   @1=..."), so \s+ matches any amount of spacing.
$patterns = [
    "### UPDATE `loratech_erp`.`lr_custom_translation`",
    "### WHERE",
    "/###\s+@1=(.*) \/\* INT/",
    "/###\s+@2=(.*) \/\* INT/",
    "/###\s+@3=(.*) \/\* INT/",
    "/###\s+@4=(.*) \/\* TINYINT/",
    "/###\s+@5=(.*) \/\* VARSTRING/",
    "/###\s+@6=(.*) \/\* VARSTRING/",
    "/###\s+@7=(.*) \/\* VARSTRING/",
    "/###\s+@8=(.*) \/\* TINYINT/",
    "/###\s+@9=(.*) \/\* INT/",
    "/###\s+@10=(.*) \/\* DATETIME/",
];

// Output directories from step 4.
$dirs = ["1.sql_dir", "2.sql_dir", "3.sql_dir", "4.sql_dir"];

$resRows = [];
foreach ($dirs as $dir) {
    foreach (scandir("./{$dir}") as $fileName) {
        if ($fileName === '.' || $fileName === '..') {
            continue;
        }
        $resRows = exFile("./{$dir}/{$fileName}", $patterns, $resRows);
    }
}
// All unique rows (before and after images) in one JSON document.
file_put_contents('last.json', json_encode($resRows), FILE_APPEND);

// Reads one row image (one line per column) right after "### WHERE" or "### SET".
function readImage($handle, array $patterns, array $head): array
{
    $resRow = [];
    for ($pi = 2; $pi < count($patterns); $pi++) {
        $row = fgets($handle);
        if ($row !== false && preg_match($patterns[$pi], $row, $matches)) {
            $resRow[$head[$pi]] = $matches[1];
        }
    }
    return $resRow;
}

// Appends the row to all.json, and to $uniqueFile the first time a row with
// the same content (ignoring id) is seen. $resRows is the shared dedup map.
function saveRow(array $resRow, array &$resRows, string $uniqueFile): void
{
    file_put_contents('all.json', json_encode($resRow) . "\r\n", FILE_APPEND);
    $tmp = $resRow;
    unset($tmp['id']);
    $key = md5(json_encode($tmp));
    if (!isset($resRows[$key])) {
        file_put_contents($uniqueFile, json_encode($resRow) . "\r\n", FILE_APPEND);
        $resRows[$key] = $resRow;
    }
}

function exFile($fileName, $patterns, $resRows)
{
    // Column names aligned with $patterns; the first two slots are unused.
    $head = ['', '', 'id', 'shop_id', 'platform_id', 'type', 'name',
             'translate', 'filter', 'no_translation', 'user_id', 'create_time'];
    $handle = fopen($fileName, 'r');
    while (!feof($handle) && $row = fgets($handle)) {
        if (strpos($row, $patterns[0]) !== false) {
            $row = fgets($handle);
            if (strpos($row, $patterns[1]) !== false) {
                // Before image (WHERE): the original values.
                saveRow(readImage($handle, $patterns, $head), $resRows, 'first_where.json');
                fgets($handle); // skip the "### SET" line
                // After image (SET): the updated values.
                saveRow(readImage($handle, $patterns, $head), $resRows, 'first_update.json');
            }
        }
    }
    fclose($handle);
    return $resRows;
}
```
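Instead of one regex per column, a single pattern can capture the column number, so `$patterns` does not need editing when the table changes. A sketch under that assumption: `parseRowImage` is a hypothetical name, and the sample lines are made up in the `-vv` format shown above.

```php
<?php
// Hypothetical generic parser for one row image printed by
// `mysqlbinlog -vv`: maps "@N=value /* TYPE ... */" lines to $columns[N-1].
// Values are returned raw (strings keep their quotes, NULL stays "NULL").
function parseRowImage(array $lines, array $columns): array
{
    $row = [];
    foreach ($lines as $line) {
        // Greedy (.*) followed by " /* " takes everything up to the type comment.
        if (preg_match('/^###\s+@(\d+)=(.*) \/\* /', $line, $m)) {
            $index = (int) $m[1] - 1; // @1 is the first column
            if (isset($columns[$index])) {
                $row[$columns[$index]] = $m[2];
            }
        }
    }
    return $row;
}

$columns = ['id', 'shop_id', 'platform_id', 'type', 'name', 'translate',
            'filter', 'no_translation', 'user_id', 'create_time'];
// Illustrative sample lines (not taken from a real binlog).
$image = [
    "###   @1=8 /* INT meta=0 nullable=0 is_null=0 */",
    "###   @2=NULL /* INT meta=0 nullable=1 is_null=1 */",
    "###   @5='日期' /* VARSTRING(255) meta=255 nullable=0 is_null=0 */",
];
$row = parseRowImage($image, $columns);
```

Like the per-column patterns, this assumes a string value never contains ` /* ` itself.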

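6. The final data looks like this. Note that string values still carry the single quotes from the binlog output, so remember to remove them: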

```json
{
    "id": "11863",
    "shop_id": "NULL",
    "platform_id": "5",
    "type": "2",
    "name": "'字体:always in my heart'",
    "translate": "'handwriting'",
    "filter": "''",
    "no_translation": "0",
    "user_id": "915",
    "create_time": "'2022-03-24 11:14:32'"
}
{
    "id": "8",
    "shop_id": "0",
    "platform_id": "4",
    "type": "1",
    "name": "'日期'",
    "translate": "'Date'",
    "filter": "''",
    "no_translation": "0",
    "user_id": "3",
    "create_time": "'2021-08-05 14:25:19'"
}
```
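Removing the quotes can be done per value. A minimal sketch, assuming the values look like the output above: quoted strings and DATETIMEs have one pair of surrounding single quotes, and an unquoted `NULL` is an SQL NULL (mysqlbinlog would print a string containing NULL as `'NULL'`). The function name `cleanValue` is hypothetical.

```php
<?php
// Hypothetical post-processing for step 6: strips the surrounding single
// quotes from string and DATETIME values, and turns an unquoted NULL into
// a PHP null. Characters inside the value are left untouched.
function cleanValue(string $raw): ?string
{
    if ($raw === 'NULL') {
        return null;
    }
    if (strlen($raw) >= 2 && $raw[0] === "'" && substr($raw, -1) === "'") {
        return substr($raw, 1, -1);
    }
    return $raw;
}

$row = [
    'id' => '8',
    'shop_id' => 'NULL',
    'name' => "'日期'",
    'filter' => "''",
    'create_time' => "'2021-08-05 14:25:19'",
];
$clean = array_map('cleanValue', $row); // array_map keeps the string keys
```

Only the outer pair of quotes is removed, so a value whose content itself starts or ends with a quote is not damaged further.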
