Spark submit script:

nohup /opt/soft/spark3/bin/spark-submit \
  --master yarn \
  --deploy-mode cluster \
  --driver-memory 1g \
  --num-executors 3 \
  --executor-cores 2 \
  --executor-memory 2g \
  --queue spark \
  --conf spark.eventLog.enabled=false \
  --conf spark.driver.extraJavaOptions=-Dlog4j.configuration=file:driver-log4j.properties \
  --conf spark.executor.extraJavaOptions=-Dlog4j.configuration=file:executor-log4j.properties \
  --files ./driver-log4j.properties,./executor-log4j.properties \
  --class streaming.SSSHudiETL \
  --jars /opt/soft/hudi/hudi-0.9.0/packaging/hudi-spark-bundle/target/hudi-spark3-bundle_2.12-0.9.0.jar \
  --packages org.apache.spark:spark-avro_2.12:3.0.2 \
  streaming-1.0-SNAPSHOT-jar-with-dependencies.jar &
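The `--files` list above uses relative paths, so the submit only works when run from the directory that holds both properties files. A minimal pre-flight sketch follows; `check_files` is a hypothetical helper, not part of the original script, and it assumes the list is comma-separated exactly as passed to `--files`.

```shell
# Hypothetical helper: verify that every entry of a comma-separated
# --files list exists as a regular file before calling spark-submit.
check_files() {
  missing=0
  old_ifs=$IFS
  IFS=','
  for f in $1; do
    if [ ! -f "$f" ]; then
      echo "missing: $f" >&2
      missing=1
    fi
  done
  IFS=$old_ifs
  return "$missing"
}

# Usage (commented out so the block has no side effects when sourced):
# check_files "./driver-log4j.properties,./executor-log4j.properties" || exit 1
```

Calling it right before `spark-submit` fails fast on the local machine instead of letting the YARN application start with a missing log4j configuration.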

driver-log4j.properties:

log4j.rootLogger =warn,stdout

log4j.appender.stdout = org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Target = System.out

log4j.appender.stdout.layout = org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern = %-d{yyyy-MM-dd HH:mm} %5p %t %c{2}:%L - %m%n

executor-log4j.properties:

log4j.rootLogger =warn,stdout,rolling

log4j.appender.stdout = org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Target = System.out

log4j.appender.stdout.layout = org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern = %-d{yyyy-MM-dd HH:mm} %5p %t %c{2}:%L - %m%n

log4j.appender.rolling=org.apache.log4j.RollingFileAppender

log4j.appender.rolling.layout=org.apache.log4j.PatternLayout

log4j.appender.rolling.layout.conversionPattern=%-d{yyyy-MM-dd HH:mm:ss} %5p %t %c{2}:%L - %m%n

log4j.appender.rolling.maxFileSize=100MB

log4j.appender.rolling.maxBackupIndex=5

log4j.appender.rolling.file=${spark.yarn.app.container.log.dir}/stdout

log4j.appender.rolling.encoding=UTF-8
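Each appender named in `log4j.rootLogger` (here `stdout` and `rolling`) needs a matching `log4j.appender.<name>` definition in the same file. The sketch below checks that with standard tools; `undefined_appenders` is a hypothetical name, and it assumes a log4j 1.x properties file with a single `log4j.rootLogger` line.

```shell
# Hypothetical check: print every appender listed in log4j.rootLogger
# that has no "log4j.appender.<name> = ..." line in the given file.
undefined_appenders() {
  file="$1"
  # Keep the value after '=', split on commas, drop the first field
  # (the log level), then strip whitespace and empty entries.
  grep -E '^[[:space:]]*log4j\.rootLogger[[:space:]]*=' "$file" \
    | head -n 1 \
    | sed -e 's/^[^=]*=//' \
    | tr ',' '\n' \
    | sed -e '1d' -e 's/[[:space:]]//g' -e '/^$/d' \
    | while read -r name; do
        grep -Eq "^[[:space:]]*log4j\.appender\.${name}[[:space:]]*=" "$file" \
          || echo "$name"
      done
}

# Usage (prints nothing when every appender is defined):
# undefined_appenders executor-log4j.properties
```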

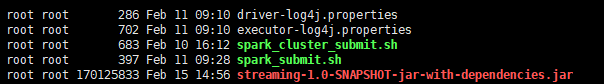

Directory structure:

Viewing the logs in the container's log directory:

-rw-r--r-- 1 yarn hadoop 34840 Feb 9 13:00 directory.info

-rw-r----- 1 yarn hadoop 5709 Feb 9 13:00 launch_container.sh

-rw-r--r-- 1 yarn hadoop 0 Feb 9 13:00 prelaunch.err

-rw-r--r-- 1 yarn hadoop 100 Feb 9 13:00 prelaunch.out

-rw-r--r-- 1 yarn hadoop 4073 Feb 9 13:00 stderr

-rw-r--r-- 1 yarn hadoop 0 Feb 9 13:00 stdout
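The same files can usually be read without logging in to the NodeManager host, via the `yarn logs` command. The wrapper below is only a sketch: it assumes a Hadoop release whose `yarn logs` accepts `-log_files` and, for finished applications, that log aggregation is enabled. `fetch_app_log` and the `YARN_BIN` override are hypothetical names introduced here to allow a dry run.

```shell
# Sketch: fetch one log file (stderr by default) for a YARN application.
# YARN_BIN is a hypothetical override; set it to "echo" for a dry run.
fetch_app_log() {
  app_id="$1"
  log_file="${2:-stderr}"
  "${YARN_BIN:-yarn}" logs -applicationId "$app_id" -log_files "$log_file"
}

# Usage (the application id is a placeholder, not a real one):
# fetch_app_log application_0000000000000_0001 stdout
```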
