Some pitfalls I ran into today while learning to crawl the web with Scrapy

Normal case: DEBUG: Crawled (200) <GET http://www.techbrood.com/> (referer: None)

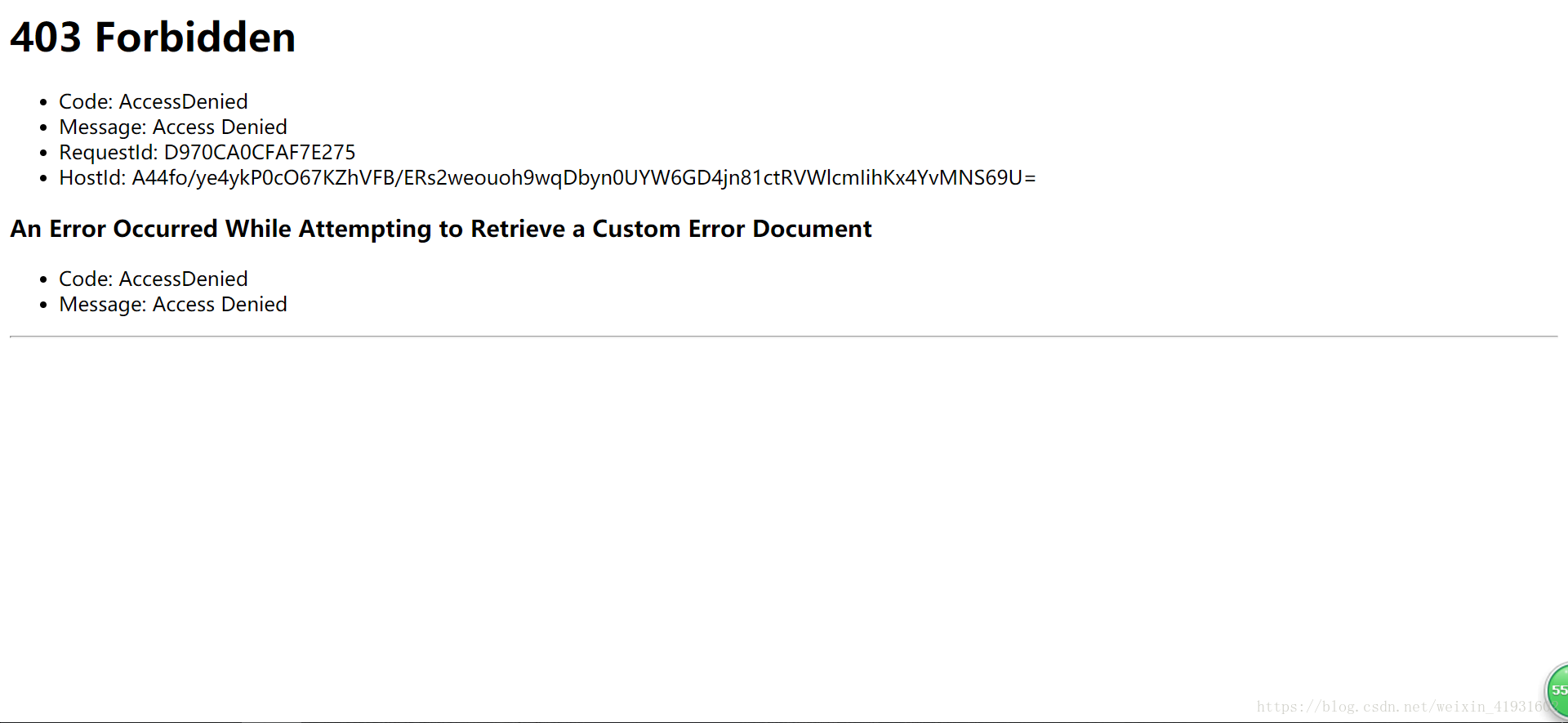

Error case: DEBUG: Crawled (403) <GET http://www.techbrood.com/> (referer: None)

I. Wrong URL

I started with the Scrapy documentation and typed in the following code from it:

    import scrapy

    class DmozSpider(scrapy.spiders.Spider):
        name = "dmoz"
        allowed_domains = ["dmoz.org"]
        start_urls = [
            "http://www.dmoz.org/Computers/Programming/Languages/Python/Books/",
            "http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/"
        ]

        def parse(self, response):
            # Name the output file after the last path segment of the URL
            filename = response.url.split("/")[-2]
            with open(filename, 'wb') as f:
                f.write(response.body)

This returned a 403 error. I spent quite a while looking for the cause. Nothing else in the code looked wrong, so I worked by elimination and eventually turned my attention to the URLs. Pasting them into a browser confirmed it: both URLs were bad.

Solution: first check that your URL is correct; this is a crucial step. Once I fixed the URL, the page came through:

    # -*- coding:utf-8 -*-
    import scrapy

    class DmozSpider(scrapy.spiders.Spider):
        name = "dmoz"
        # Must match the domain of the start URL
        allowed_domains = ["csdn.net"]
        start_urls = [
            "https://blog.csdn.net/weixin_41931602/article/details/80199750",
        ]

        def parse(self, response):
            # response.body is bytes, so open the file in binary mode
            with open("TY.html", "wb") as f:
                f.write(response.body)
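Before blaming the spider, it can help to check the status of each start URL outside Scrapy. The following is a minimal sketch using only the standard library; `fetch_status` is a hypothetical helper name introduced here for illustration, not part of Scrapy.

```python
import urllib.error
import urllib.request


def fetch_status(url, user_agent=None, timeout=10):
    """Return the HTTP status code for url (hypothetical helper, not a Scrapy API)."""
    headers = {"User-Agent": user_agent} if user_agent else {}
    request = urllib.request.Request(url, headers=headers)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx/5xx responses raise HTTPError; the status code is on the exception.
        return error.code
```

Calling `fetch_status(url)` for every entry in `start_urls` shows whether a 403 comes from the URL itself. If the same URL returns 200 once a browser-like `user_agent` is passed, the cause is more likely the anti-crawler check described in section III.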

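II. Indentation problem

While following the public Scrapy video course from 黑马程序员, I typed in the code exactly as shown and still got an error; the HTML source of the crawled page never appeared.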

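    # Generated with: scrapy genspider itcast "itcast.cn"
    # -*- coding: utf-8 -*-
    import scrapy
    from mySpider.items import ItcastItem

    class ItcastSpider(scrapy.Spider):
        name = "itcast"
        allowed_domains = ["itcast.cn"]
        # The trailing comma makes this a one-element tuple rather than a plain string
        start_urls = ("http://www.itcast.cn/channel/teacher.shtml#ajavaee",)

        # Be sure to indent parse so that it sits inside the class
        def parse(self, response):
            with open("teacher.html", "w", encoding="utf-8") as f:
                f.write(response.text)
            print(response.text)

I read it over and over and nothing looked wrong, and this time the URL was correct too. After a long check I found that I had written the parse function outside the class. No wonder no page source was written out: when the spider ran, that function was never called.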

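By the way, parse(self, response) is the parsing method. It is called after each start URL has been downloaded, and it receives the Response object returned for that URL as its only argument. Its main jobs are:

- Parse the returned page data (response.body) and extract structured data (generate items).

- Generate the requests for the next URLs to crawl.

Solution: be sure to indent the parse function so that it is a method of the spider class.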
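Why a misplaced `parse` is silently ignored can be shown in plain Python. The sketch below uses `BaseSpider`, a simplified stand-in written for this example (it is not the real `scrapy.Spider`), whose default `parse` raises `NotImplementedError`, much like the real base class does when no callback is defined.

```python
class BaseSpider:
    """Simplified stand-in for scrapy.Spider, for illustration only."""

    def parse(self, response):
        raise NotImplementedError(
            f"{type(self).__name__}.parse callback is not defined"
        )


class BrokenSpider(BaseSpider):
    name = "broken"


def parse(self, response):
    # Module-level function: NOT a method of BrokenSpider, so it is never used.
    return "parsed " + response


class FixedSpider(BaseSpider):
    name = "fixed"

    def parse(self, response):
        # Indented inside the class: overrides BaseSpider.parse.
        return "parsed " + response


def run_callback(spider, response):
    """Call spider.parse the way a framework would, reporting failures as text."""
    try:
        return spider.parse(response)
    except NotImplementedError as exc:
        return "error: " + str(exc)
```

`run_callback(FixedSpider(), "page")` reaches the overriding method, while `run_callback(BrokenSpider(), "page")` falls back to the base class and reports that the callback is not defined.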

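III. The site has anti-crawler measures

Whenever you crawl data, think ahead about whether the target site has anti-crawler measures. Your code should at least send a browser-like user agent, right?

Solution: add the following to the settings file (settings.py):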

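    USER_AGENT = 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36'

Appendix: common User-Agent strings. USER_AGENTS is a custom list, not a built-in Scrapy setting, so your own code has to pick a value from it: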

    USER_AGENTS = [
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",
        "Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
        "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
        "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
        "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
        "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
        "Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",
        "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
    ]

If that still does not solve the problem, also configure the following:

1. Add IP proxies (PROXIES is a custom setting; a downloader middleware has to read it and apply a proxy to each request):

    PROXIES = [
        {'ip_port': '111.11.228.75:80', 'user_pass': ''},
        {'ip_port': '120.198.243.22:80', 'user_pass': ''},
        {'ip_port': '111.8.60.9:8123', 'user_pass': ''},
        {'ip_port': '101.71.27.120:80', 'user_pass': ''},
        {'ip_port': '122.96.59.104:80', 'user_pass': ''},
        {'ip_port': '122.224.249.122:8088', 'user_pass': ''},
    ]

2. Change the robots.txt setting and disable cookies:

    ROBOTSTXT_OBEY = False
    COOKIES_ENABLED = False

3. Set a download delay (in seconds):

    DOWNLOAD_DELAY = 3

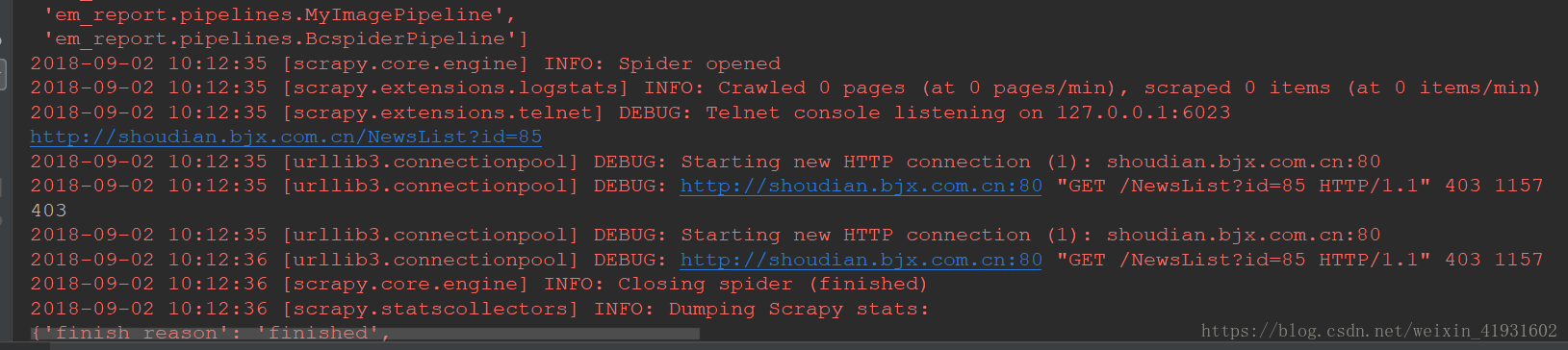
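The USER_AGENTS and PROXIES lists in this section only take effect if something reads them. One common approach is a small downloader middleware; the sketch below is an illustration under stated assumptions, not the code the original project used. The class name `RandomIdentityMiddleware`, the module path `mySpider.middlewares` and the priority `400` are all assumptions made for this example.

```python
import random


class RandomIdentityMiddleware:
    """Hypothetical downloader middleware: random User-Agent and proxy per request."""

    def __init__(self, user_agents, proxies):
        self.user_agents = list(user_agents)
        self.proxies = list(proxies)

    @classmethod
    def from_crawler(cls, crawler):
        # Scrapy calls this hook with the running crawler; both lists come
        # from the custom USER_AGENTS / PROXIES entries in settings.py.
        return cls(
            crawler.settings.getlist("USER_AGENTS"),
            crawler.settings.getlist("PROXIES"),
        )

    def process_request(self, request, spider):
        if self.user_agents:
            request.headers["User-Agent"] = random.choice(self.user_agents)
        if self.proxies:
            proxy = random.choice(self.proxies)
            # Optional "user:password" credentials go in front of the host.
            credentials = proxy["user_pass"] + "@" if proxy["user_pass"] else ""
            request.meta["proxy"] = "http://" + credentials + proxy["ip_port"]
        return None  # returning None lets Scrapy continue with this request


# Assumed registration in settings.py (path and priority are illustrative):
# DOWNLOADER_MIDDLEWARES = {"mySpider.middlewares.RandomIdentityMiddleware": 400}
```

Each request then carries a different identity, which is what the appendix list is meant for. Free proxies such as the ones listed tend to go offline quickly, so an empty PROXIES list is handled by simply leaving the request unproxied.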

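IV. The URLs requested inside start_requests are blocked by anti-crawler measures; in the code below, printing the status code is what revealed it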

    import requests
    from scrapy import Request

    # Method of the spider class
    def start_requests(self):
        bash_url = 'http://shoudian.bjx.com.cn/NewsList?id=85'
        last_url = '&page='
        for i in range(1, 10):  # pages 1 to 9
            if i == 1:
                url = bash_url
            else:
                url = bash_url + last_url + str(i)
            print(url)
            # No headers are sent here, so the site answers 403
            print(requests.get(url).status_code)
            if requests.get(url).status_code != 403:
                yield Request(url, self.parse)
                print(url)
            else:
                break

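Solution: send request headers along with the URL, as shown below. The User-Agent value can be picked at random from the appendix in section III.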

    import requests
    from scrapy import Request

    # Method of the spider class
    def start_requests(self):
        bash_url = 'http://shoudian.bjx.com.cn/NewsList?id=85'
        last_url = '&page='
        # Same browser-like User-Agent for the status check and the Scrapy request
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36'}
        for i in range(1, 10):  # pages 1 to 9
            if i == 1:
                url = bash_url
            else:
                url = bash_url + last_url + str(i)
            url_code = requests.get(url, headers=headers).status_code
            if url_code != 403:
                yield Request(url, self.parse, headers=headers)
            else:
                break
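The paging logic and the random User-Agent choice mentioned above can be pulled out of the network code so that they are easy to check on their own. This is a minimal sketch; `page_urls` and `random_headers` are hypothetical helper names introduced for this example.

```python
import random


def page_urls(base_url, first_page, last_page, page_param="&page="):
    """Yield the list URL for each page; page 1 is the bare base URL."""
    for page in range(first_page, last_page + 1):
        if page == 1:
            yield base_url
        else:
            yield base_url + page_param + str(page)


def random_headers(user_agents, rng=random):
    """Build a headers dict with a User-Agent picked at random from user_agents."""
    return {"User-Agent": rng.choice(user_agents)}
```

Inside `start_requests`, the loop could then become `for url in page_urls(bash_url, 1, 9): yield Request(url, self.parse, headers=random_headers(USER_AGENTS))`. This variant drops the extra `requests.get` check, so each page is fetched once instead of twice; by default Scrapy does not pass non-2xx responses such as 403 to the callback.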
