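It has been a long time since I last wrote a technical article. I spent the past year in management and my technical skills got rusty. A recent shift in focus has me picking them up again, and I hope to catch up quickly.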

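This article came out of a recent project: classifying complaint texts for one of the company's departments, which is a fairly common NLP scenario. There are many possible approaches, such as BERT or LSTM, but I have no hands-on experience with them, so I am starting with the simplest option, TF-IDF.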

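Note: the data samples are confidential and cannot be shared. If you want to follow along, you can scrape reviews from a site such as Douban; the results should be similar.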

1. Load the data and drop small classes and invalid rows

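import pandas as pd
import numpy as np
import jieba
import tqdm
import re

data = pd.read_excel('投诉清单202101-02.xlsx', usecols=['客户性质', '处理内容', '原因代码'])

# Drop rows without a reason code first; otherwise str(NaN)[1:] would yield the bogus label 'an'
data = data.dropna(subset=['原因代码'])
# Strip the first character of the reason code to get the label
data['reason'] = data['原因代码'].astype(str).str[1:]
data = data[data['reason'] != '']

# Keep only the classes that have at least 50 samples
data_cause_count = data['reason'].value_counts()
label_list = list(data_cause_count[data_cause_count >= 50].index)
# .copy() so that adding a column later does not trigger SettingWithCopyWarning
total_data = data[data['reason'].isin(label_list)].copy()
total_data.head()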

Result:
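Since the real data cannot be shared, the cleanup and filtering logic of this step can be tried on a few made-up reason codes. The following is a minimal sketch using only the standard library: the codes, the `to_label` helper, and the lowered threshold `MIN_SAMPLES` are all assumptions for illustration, not part of the original pipeline.

```python
from collections import Counter

# Hypothetical reason codes; None stands in for an empty spreadsheet cell.
raw_codes = ["A101", "A101", "A101", "B202", "B202", "C303", None, ""]

def to_label(code):
    """Strip the leading character; return '' for missing or empty codes."""
    if code is None:
        return ""
    return str(code)[1:]

labels = [to_label(c) for c in raw_codes]
labels = [lab for lab in labels if lab != ""]

MIN_SAMPLES = 2  # the article uses 50; lowered for this toy example
counts = Counter(labels)
kept = sorted(lab for lab, n in counts.items() if n >= MIN_SAMPLES)
filtered = [lab for lab in labels if lab in kept]

print(counts)    # per-class sample counts
print(kept)      # classes that pass the threshold
print(filtered)  # labels that remain after filtering

# Why missing values need explicit handling: the string form of NaN is 'nan',
# so slicing off the first character leaves a label that looks valid.
print(str(float("nan"))[1:])
```

The last line shows why a missing code should be dropped before the slice is applied rather than filtered out afterwards.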

2. Segment the text and remove stop words

%%time

# Compiled once: matches tokens made of letters followed by digits, e.g. "abc123"
ALNUM_PATTERN = re.compile(r'^[a-zA-Z]+[0-9]+$')

def isAllnum(input_str):
    '''Return True if the token is letters followed by digits.'''
    return bool(ALNUM_PATTERN.match(input_str))

def drop_stopwords(content):
    '''Segment the text with jieba, then drop stop words and noise tokens.'''
    contents_clean = []
    for word in jieba.lcut(content.strip()):
        if word.strip() == '' or word.isdigit() or isAllnum(word) or word in stopwords:
            continue
        contents_clean.append(word)
    return ' '.join(contents_clean)

# One stop word per line; a set makes the `word in stopwords` test a hash lookup
with open('stopwords.txt', encoding='utf-8') as f:
    stopwords = {line.strip() for line in f if line.strip()}

total_data['content'] = total_data['处理内容'].astype(str).apply(drop_stopwords)
# Build the list used in the next section from the column, so it stays aligned with total_data
word_seg_list = total_data['content'].tolist()
total_data.head()

The dataset is small, only 5,000 rows, yet this step still took about 1 minute.
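How the stop words are stored may account for a good part of that time: testing `word in stopwords` against a list scans the list, while the same test against a set is a hash lookup. The sketch below compares the two on synthetic data. The word lists and the `count_kept` helper are made up for the measurement, and the absolute timings will differ from machine to machine.

```python
import timeit

# Synthetic stop-word list; the real stopwords.txt is not reproduced here.
stopword_list = [f"stop{i}" for i in range(2000)]
stopword_set = set(stopword_list)

# Tokens that are not stop words: the worst case for a list scan,
# because every lookup has to walk the whole list.
tokens = [f"word{i}" for i in range(1000)]

def count_kept(tokens, stopwords):
    """Count the tokens that survive the stop-word filter."""
    return sum(1 for t in tokens if t not in stopwords)

list_time = timeit.timeit(lambda: count_kept(tokens, stopword_list), number=5)
set_time = timeit.timeit(lambda: count_kept(tokens, stopword_set), number=5)
print(f"list: {list_time:.4f}s  set: {set_time:.4f}s")
```

Both containers give the same filtering result; only the lookup cost differs.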

Result:
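To see which tokens the filter in `drop_stopwords` actually removes, it can be applied to a hand-written token list without running jieba. This is a sketch under assumptions: the tokens and the one-word stop list are invented, and `keep_token` is a hypothetical helper that mirrors the condition used in the article.

```python
import re

ALNUM = re.compile(r"^[a-zA-Z]+[0-9]+$")

def keep_token(token, stopwords):
    """Mirror the drop_stopwords condition for one already segmented token."""
    if token.strip() == "" or token.isdigit() or ALNUM.match(token):
        return False
    return token not in stopwords

# Hypothetical tokens, roughly as a segmenter might emit them.
tokens = ["宽带", " ", "2021", "abc123", "123abc", "a1b2", "3.5", "的", "故障"]
stopwords = {"的"}

kept = [t for t in tokens if keep_token(t, stopwords)]
print(kept)
```

Note that the regular expression only matches letters followed by digits, so tokens such as `123abc`, `a1b2`, and `3.5` pass through. If those should be dropped as well, the pattern would need to be widened.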

3. Split the data and convert the text to TF-IDF vectors

# Split the data
from sklearn.model_selection import train_test_split
from sklearn.feature_extraction.text import TfidfVectorizer

label = total_data['reason']
train_x, test_x, y_train, y_test = train_test_split(word_seg_list, label, test_size=0.3, random_state=1)

# TF-IDF: fit the vocabulary on the training texts only, then reuse it for the test texts
vectorizer = TfidfVectorizer(min_df=1)
X_train = vectorizer.fit_transform(train_x)
# get_feature_names() was removed in scikit-learn 1.2; get_feature_names_out() replaces it
terms = vectorizer.get_feature_names_out()
X_test = vectorizer.transform(test_x)

# Print the number of samples in the original set, the training set, and the test set

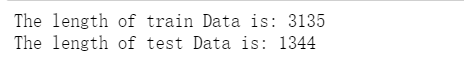

print("The length of train Data is:", X_train.shape[0])

print("The length of test Data is:", X_test.shape[0])
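One detail worth checking with Chinese text: by default, `TfidfVectorizer` tokenizes with the pattern `(?u)\b\w\w+\b`, which only keeps tokens of two or more characters. Single-character words produced by the segmenter are therefore dropped silently. The sketch below applies that pattern with plain `re` to a made-up, space-joined sentence; `SINGLE_CHAR_PATTERN` is a looser alternative shown for comparison, not something the article uses.

```python
import re

# Default token_pattern of scikit-learn's TfidfVectorizer and CountVectorizer.
DEFAULT_TOKEN_PATTERN = r"(?u)\b\w\w+\b"
# A looser pattern that also keeps one-character tokens.
SINGLE_CHAR_PATTERN = r"(?u)\b\w+\b"

segmented = "网络 慢 投诉 不 满意"  # hypothetical space-joined segmenter output

default_tokens = re.findall(DEFAULT_TOKEN_PATTERN, segmented)
all_tokens = re.findall(SINGLE_CHAR_PATTERN, segmented)
print(default_tokens)
print(all_tokens)
```

If the single-character words matter for the classification, the looser pattern can be passed through the vectorizer's `token_pattern` parameter.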

Result:
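For readers who want to see what the vectorizer computes, here is a small pure-Python sketch of TF-IDF with the same defaults scikit-learn uses: raw term counts, smoothed IDF `ln((1 + n) / (1 + df)) + 1`, and L2 normalization. The functions `fit_idf` and `transform` and the three toy documents are assumptions for illustration. It is not the library's implementation, which works on sparse matrices.

```python
import math
from collections import Counter

def fit_idf(train_docs):
    """Learn smoothed IDF weights from tokenized training documents."""
    n_docs = len(train_docs)
    doc_freq = Counter(term for doc in train_docs for term in set(doc))
    return {t: math.log((1 + n_docs) / (1 + df)) + 1 for t, df in doc_freq.items()}

def transform(doc, idf):
    """Return an L2-normalized TF-IDF vector as a dict; unseen terms are ignored."""
    tf = Counter(t for t in doc if t in idf)
    weights = {t: count * idf[t] for t, count in tf.items()}
    norm = math.sqrt(sum(w * w for w in weights.values()))
    return {t: w / norm for t, w in weights.items()} if norm else {}

# Hypothetical, already segmented complaints.
train_docs = [["网络", "故障"], ["网络", "费用"], ["费用", "争议"]]
idf = fit_idf(train_docs)

# "退款" never appeared in training, so it has no column in the vector.
vec = transform(["网络", "故障", "退款"], idf)
print(vec)
```

The rarer term gets the larger weight, and a word that only appears in the test text contributes nothing. That is why the vocabulary is fitted on the training split and only applied to the test split.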

4. Train and predict with XGBoost

%%time

from xgboost import XGBClassifier

from sklearn.metrics import precision_score, recall_score, f1_score

from sklearn.metrics import classification_report

model_xgb = XGBClassifier().fit(X_train, y_train)

y_pred = model_xgb.predict(X_test)

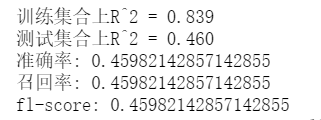

print("训练集合上R^2 = {:.3f}".format(model_xgb.score(X_train, y_train)))

print("测试集合上R^2 = {:.3f}".format(model_xgb.score(X_test, y_test)))

print("准确率:",precision_score(y_test, y_pred, average='micro'))

print("召回率:", recall_score(y_test, y_pred, average='micro'))

print("fl-score:", f1_score(y_test, y_pred, average='micro'))

print(classification_report(y_test, y_pred))

Result:
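A note on reading the printed metrics: when every sample has exactly one label, micro-averaged precision, recall, and F1 are all equal to plain accuracy, so the three numbers add no information beyond the accuracy itself. Macro averaging, which weights every class equally, can differ. The sketch below checks this on made-up labels; `micro_and_macro` is a hypothetical helper written for this illustration, not a scikit-learn function.

```python
from collections import Counter

def micro_and_macro(y_true, y_pred):
    """Return accuracy, micro P/R/F1, and macro F1 for single-label predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    total_tp, total_fp, total_fn = sum(tp.values()), sum(fp.values()), sum(fn.values())
    micro_p = total_tp / (total_tp + total_fp)
    micro_r = total_tp / (total_tp + total_fn)
    micro_f1 = 2 * micro_p * micro_r / (micro_p + micro_r)

    def f1(label):
        denom = 2 * tp[label] + fp[label] + fn[label]
        return 2 * tp[label] / denom if denom else 0.0

    macro_f1 = sum(f1(lab) for lab in labels) / len(labels)
    accuracy = total_tp / len(y_true)
    return accuracy, micro_p, micro_r, micro_f1, macro_f1

# Toy labels, not the article's data.
y_true = ["a", "a", "a", "a", "b", "b", "c", "c"]
y_pred = ["a", "a", "a", "b", "b", "a", "a", "c"]

acc, p, r, f, macro = micro_and_macro(y_true, y_pred)
print(acc, p, r, f, macro)
```

For a dataset with uneven class sizes, the per-class rows of `classification_report` and the macro average are usually more informative than the micro figures.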

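Judging from the results, the model does not perform well: accuracy is only 46%. That does not mean TF-IDF and XGBoost are bad choices. I attribute the result to the low quality of the underlying data and to the small sample size.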

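There is still a lot of follow-up work to do, but all of it depends on improving those two things first: data quality and sample size.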
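One way to put an accuracy figure in context before investing in that work is to compare it with a trivial baseline that always predicts the most frequent class. The sketch below is an assumption-laden illustration: the label distributions are invented and `majority_baseline_accuracy` is a hypothetical helper. With the real data, `y_train` and `y_test` would take the place of the toy lists.

```python
from collections import Counter

def majority_baseline_accuracy(train_labels, test_labels):
    """Accuracy obtained by always predicting the most frequent training label."""
    majority_label, _ = Counter(train_labels).most_common(1)[0]
    hits = sum(1 for lab in test_labels if lab == majority_label)
    return majority_label, hits / len(test_labels)

# Hypothetical label distributions, not the article's data.
train_labels = ["101"] * 60 + ["202"] * 30 + ["303"] * 10
test_labels = ["101"] * 25 + ["202"] * 15 + ["303"] * 10

majority_label, baseline_acc = majority_baseline_accuracy(train_labels, test_labels)
print(majority_label, baseline_acc)
```

If the model's accuracy is well above this baseline, the features carry real signal even when the absolute number looks low. If it is close to the baseline, more or cleaner data is likely to matter more than a different model.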
