Hadoop 之 Hbase 配置与使用

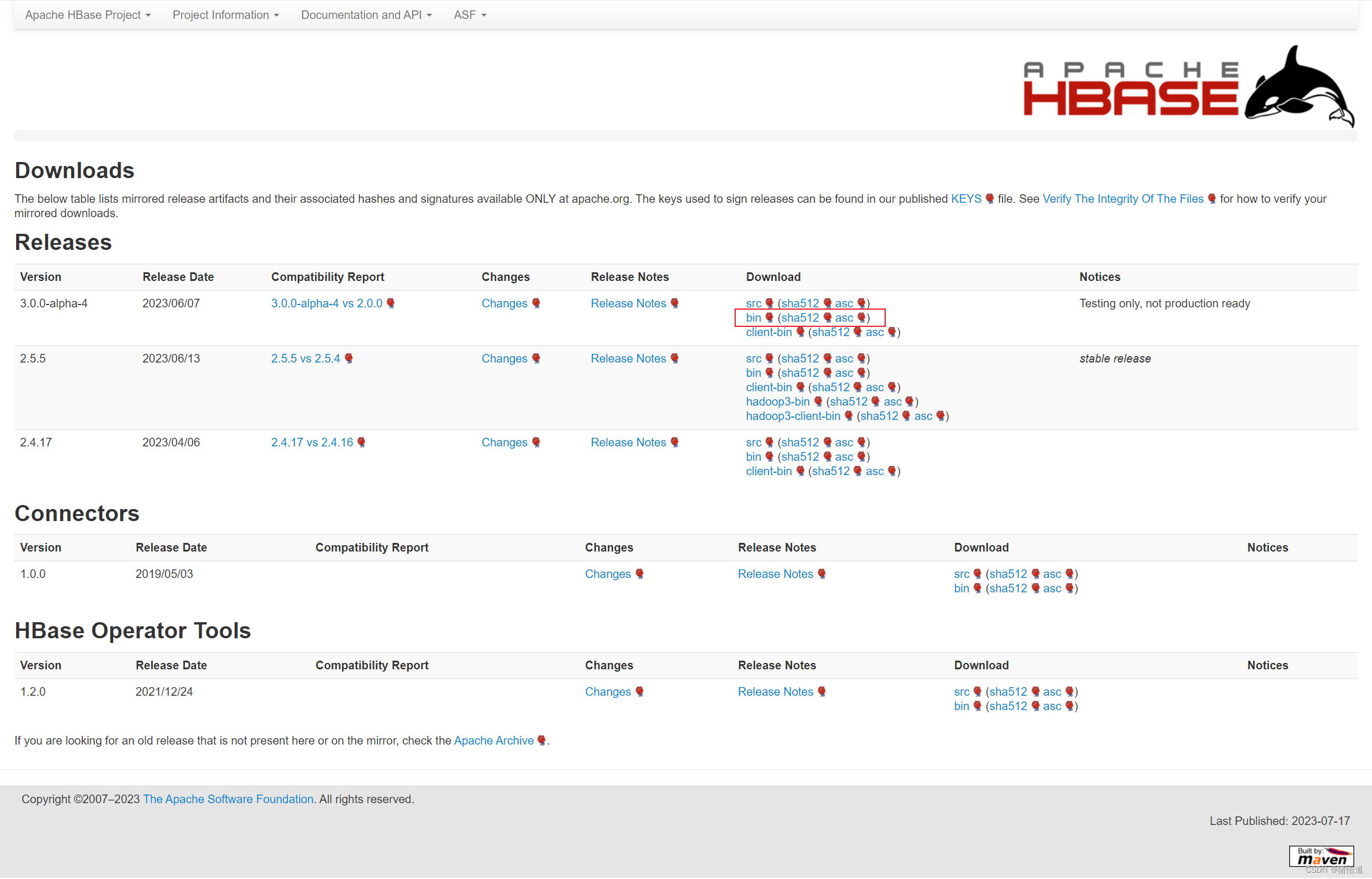

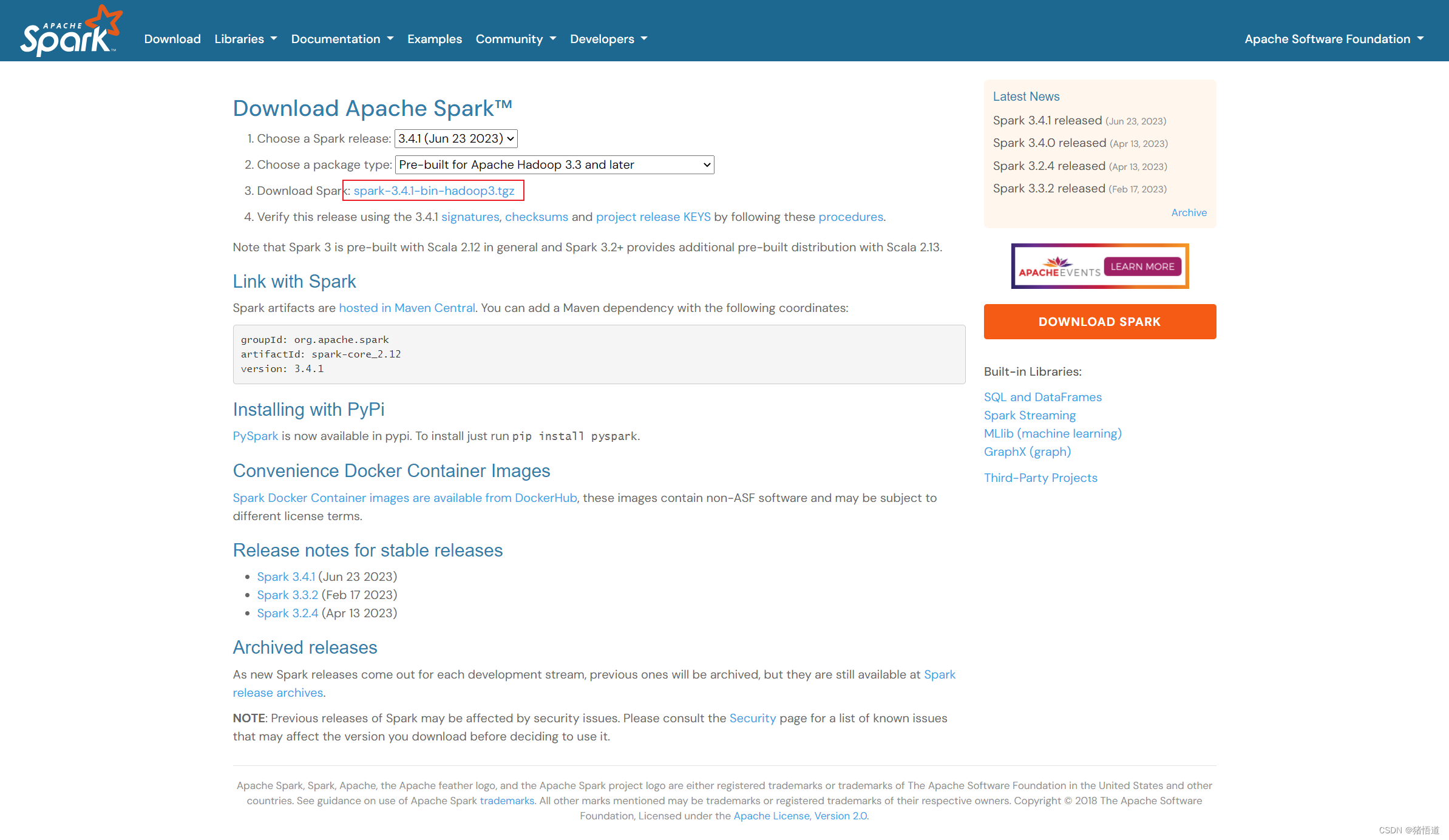

一.Hbase 下载

HBase 是一个分布式的、面向列的开源数据库:Hbase API

1.Hbase 下载

跳转到下载链接

二.Hbase 配置

1.单机部署

## 1.创建安装目录

mkdir -p /usr/local/hbase

## 2.将压缩包拷贝到虚拟机并解压缩

tar zxvf hbase-3.0.0-alpha-4-bin.tar.gz -C /usr/local/hbase/

## 3.添加环境变量

echo 'export HBASE_HOME=/usr/local/hbase/hbase-3.0.0-alpha-4' >> /etc/profile

echo 'export PATH=${HBASE_HOME}/bin:${PATH}' >> /etc/profile

source /etc/profile

## 4.指定 JDK 版本

echo 'export JAVA_HOME=/usr/local/java/jdk-11.0.19' >> $HBASE_HOME/conf/hbase-env.sh

## 5.创建 hbase 存储目录

mkdir -p /home/hbase/data

## 6.修改配置

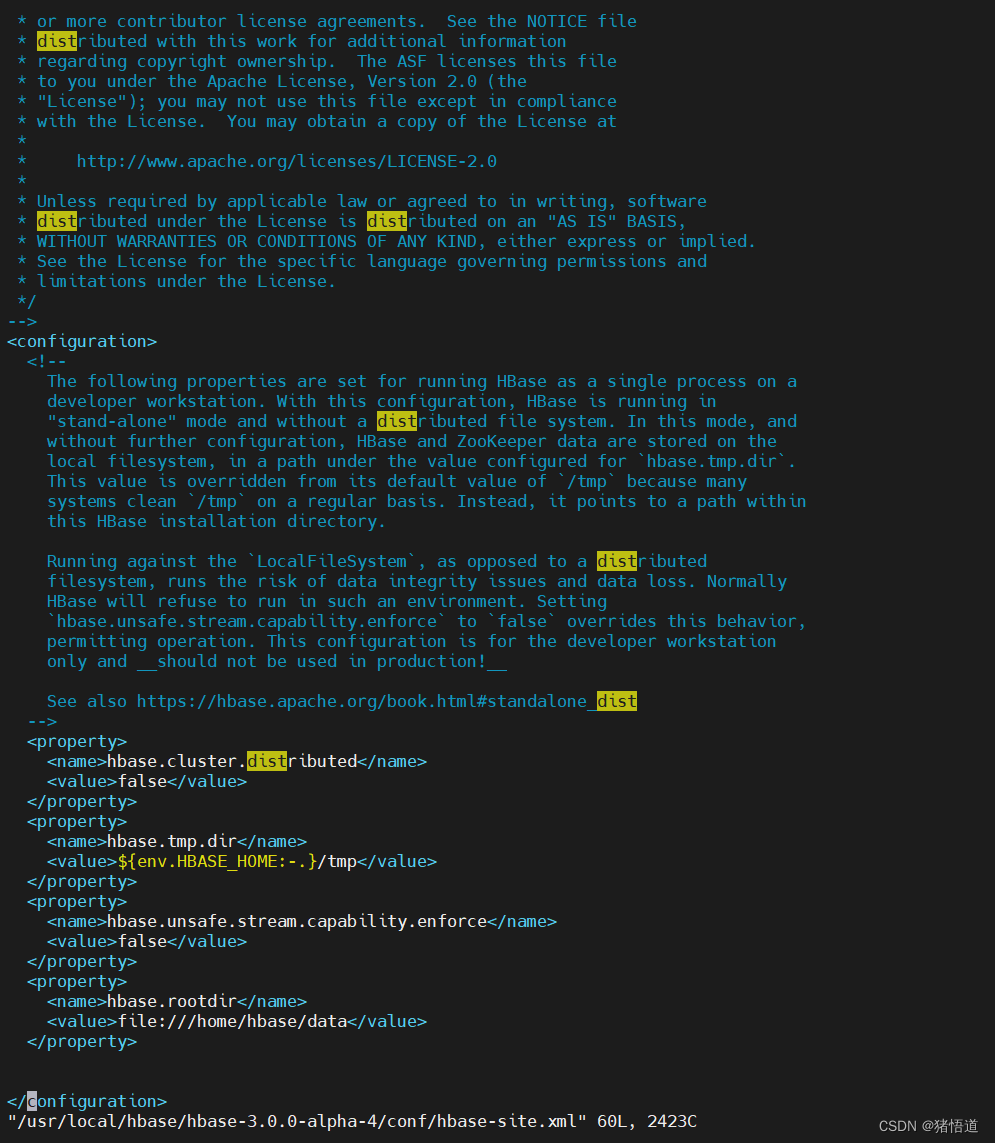

vim $HBASE_HOME/conf/hbase-site.xml

添加如下信息

<property>

<name>hbase.rootdir</name>

<value>file:///home/hbase/data</value>

</property>

## 1.进入安装目录

cd $HBASE_HOME

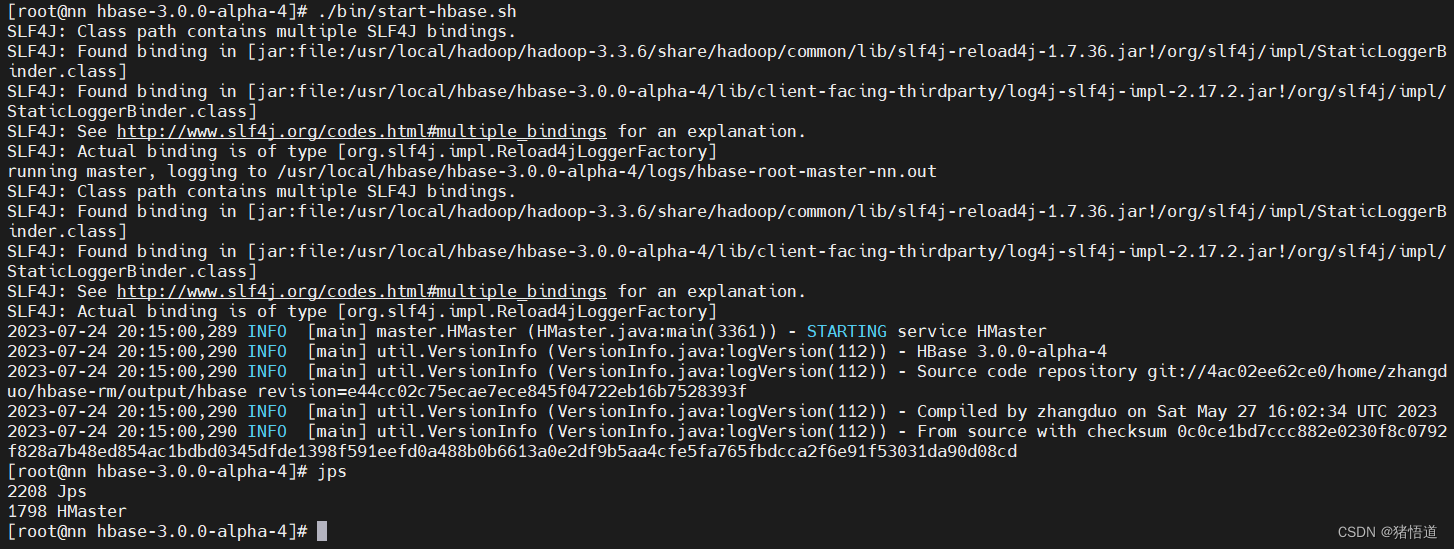

## 2.启动服务

./bin/start-hbase.sh

## 1.进入安装目录

cd $HBASE_HOME

## 2.关闭服务

./bin/stop-hbase.sh

2.伪集群部署(基于单机配置)

## 1.修改 hbase-env.sh

echo 'export JAVA_HOME=/usr/local/java/jdk-11.0.19' >> $HBASE_HOME/conf/hbase-env.sh

echo 'export HBASE_MANAGES_ZK=true' >> $HBASE_HOME/conf/hbase-env.sh

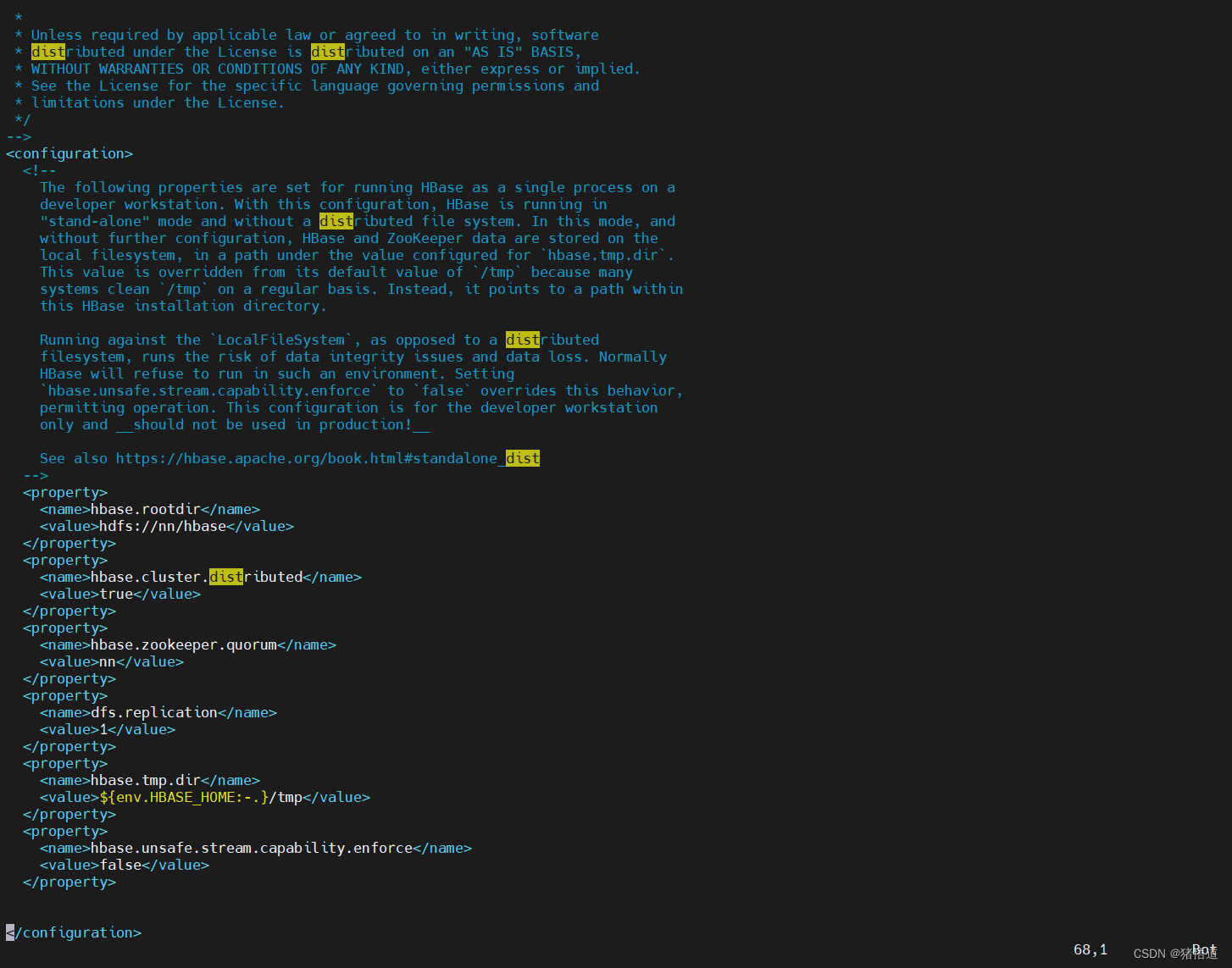

## 2.修改 hbase_site.xml

vim $HBASE_HOME/conf/hbase-site.xml

<!-- 将 hbase 数据保存到 hdfs -->

<property>

<name>hbase.rootdir</name>

<value>hdfs://nn/hbase</value>

</property>

<!-- 分布式配置 -->

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<!-- 配置 ZK 地址 -->

<property>

<name>hbase.zookeeper.quorum</name>

<value>nn</value>

</property>

<!-- 配置 JK 地址 -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

## 3.修改 regionservers 的 localhost 为 nn

echo nn > $HBASE_HOME/conf/regionservers

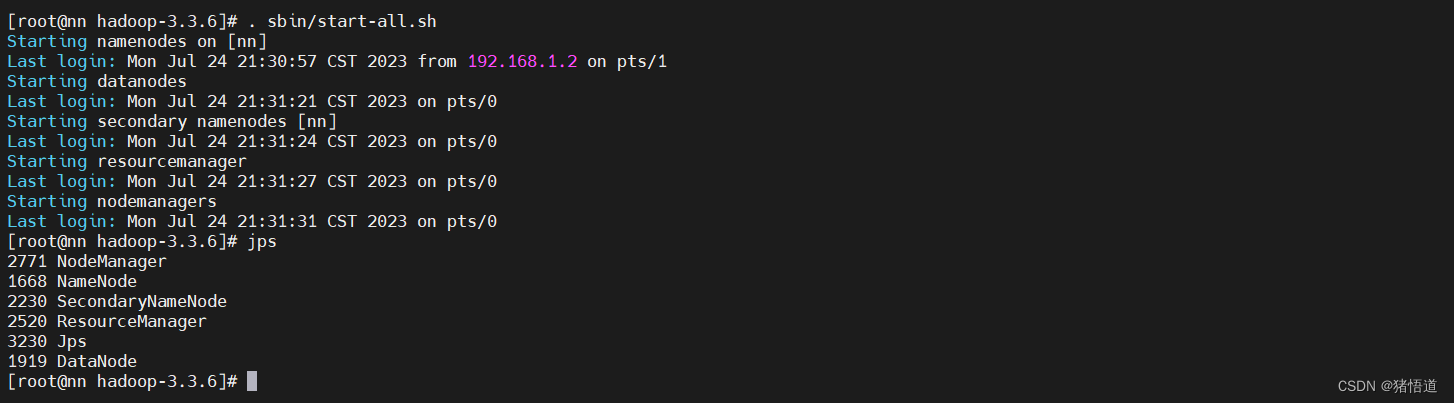

## 1.进入安装目录

cd $HADOOP_HOME

## 2.启动 hadoop 服务

./sbin/start-all.sh

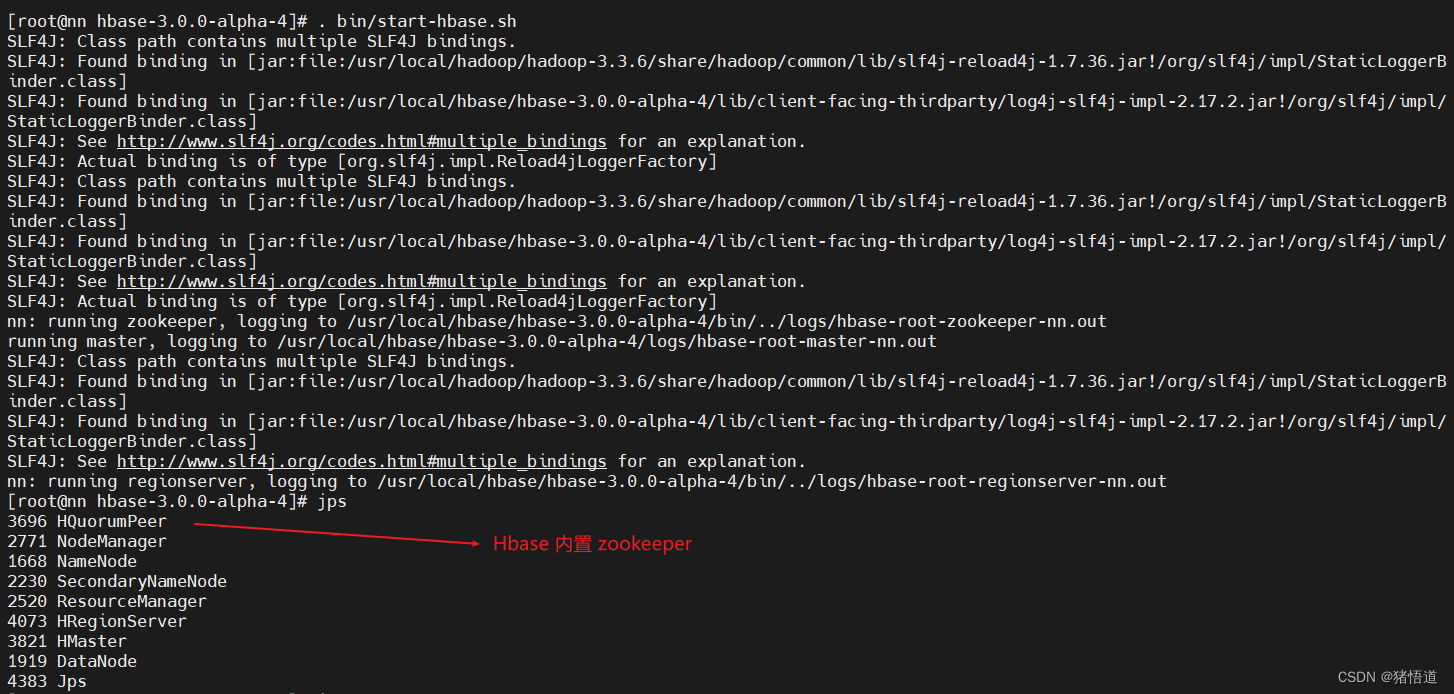

## 1.进入安装目录

cd $HBASE_HOME

## 2.启动服务

./bin/start-hbase.sh

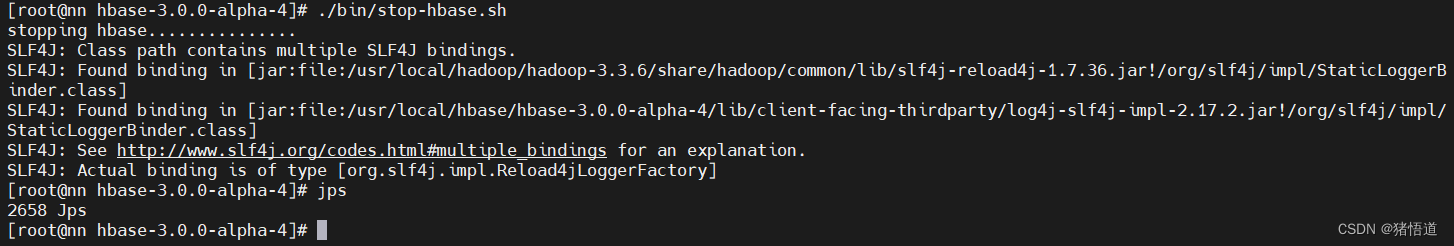

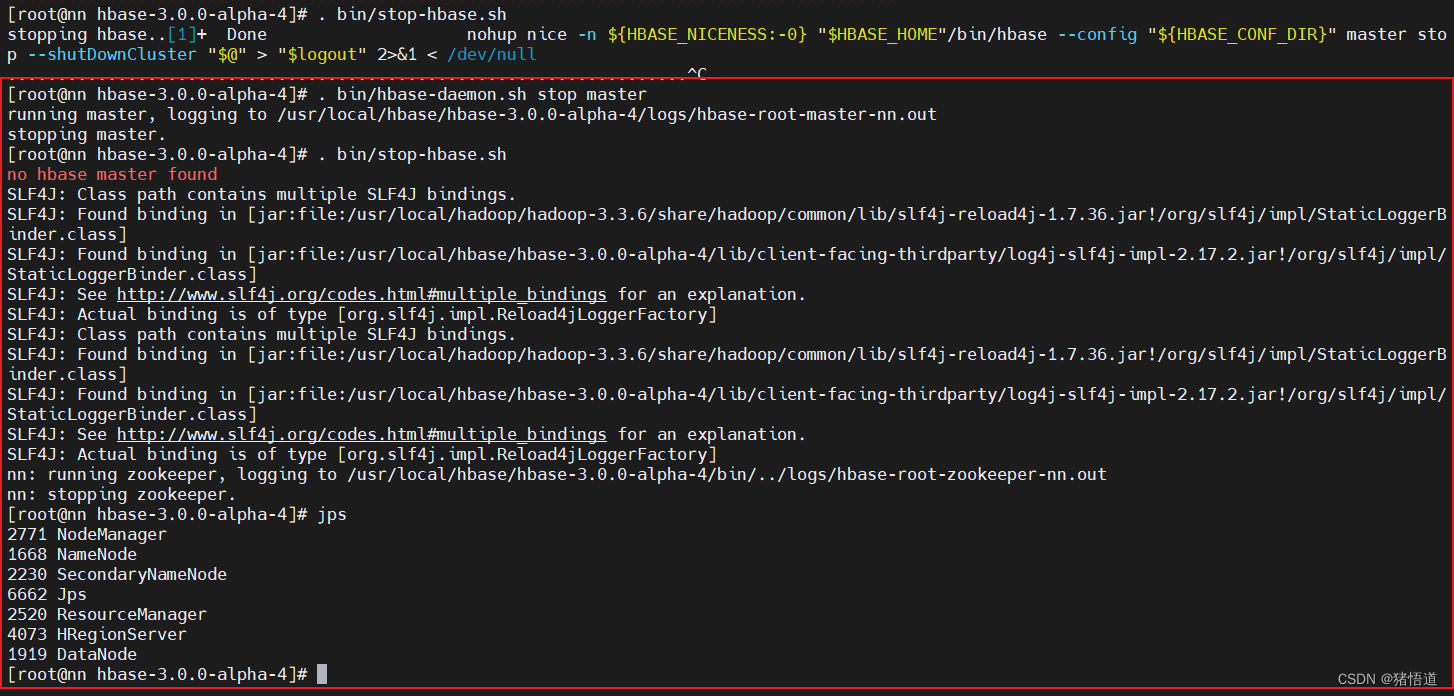

## 1.进入安装目录

cd $HBASE_HOME

## 2.关闭主节点服务(直接关服务是关不掉的,如图)

. bin/hbase-daemon.sh stop master

## 3.关闭服务

./bin/stop-hbase.sh

3.集群部署

## 1.创建 zookeeper 数据目录

mkdir -p $HBASE_HOME/zookeeper/data

## 2.进入安装目录

cd $HBASE_HOME/conf

## 3.修改环境配置

vim hbase-env.sh

## 添加 JDK / 启动外置 Zookeeper

# JDK

export JAVA_HOME=/usr/local/java/jdk-11.0.19

# Disable Zookeeper

export HBASE_MANAGES_ZK=false

## 4.修改 hbase-site.xml

vim hbase-site.xml

## 配置如下信息

<!--允许的最大同步时钟偏移-->

<property>

<name>hbase.master.maxclockskew</name>`

<value>6000</value>

</property>

<!--配置 HDFS 存储实例-->

<property>

<name>hbase.rootdir</name>

<value>hdfs://nn:9000/hbase</value>

</property>

<!--启用分布式配置-->

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<!--配置 zookeeper 集群节点-->

<property>

<name>hbase.zookeeper.quorum</name>

<value>zk1,zk2,zk3</value>

</property>

<!--配置 zookeeper 数据目录-->

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/usr/local/hbase/hbase-3.0.0-alpha-4/zookeeper/data</value>

</property>

<!-- Server is not running yet -->

<property>

<name>hbase.wal.provider</name>

<value>filesystem</value>

</property>

## 5.清空 regionservers 并添加集群节点域名

echo '' > regionservers

echo 'nn' >> regionservers

echo 'nd1' >> regionservers

echo 'nd2' >> regionservers

## 6.分别为 nd1 / nd2 创建 hbase 目录

mkdir -p /usr/local/hbase

## 7.分发 hbase 配置到另外两台虚拟机 nd1 / nd2

scp -r /usr/local/hbase/hbase-3.0.0-alpha-4 root@nd1:/usr/local/hbase

scp -r /usr/local/hbase/hbase-3.0.0-alpha-4 root@nd2:/usr/local/hbase

## 8.分发环境变量配置

scp /etc/profile root@nd1:/etc/profile

scp /etc/profile root@nd2:/etc/profile

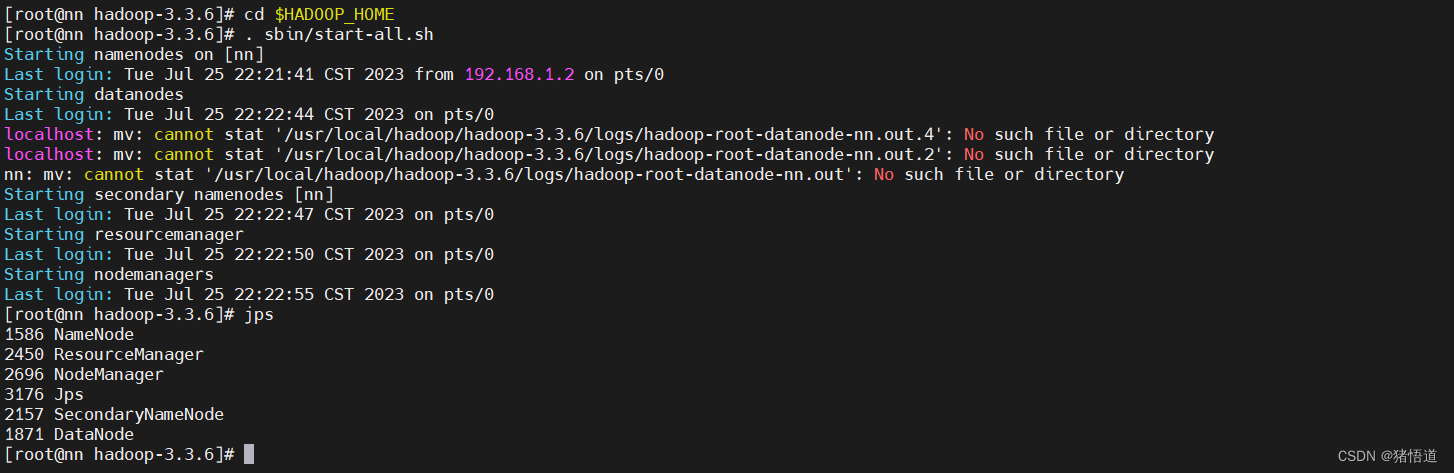

1.启动 hadoop 集群

Hadoop 集群搭建参考:Hadoop 搭建

## 1.启动 hadoop

cd $HADOOP_HOME

. sbin/start-all.sh

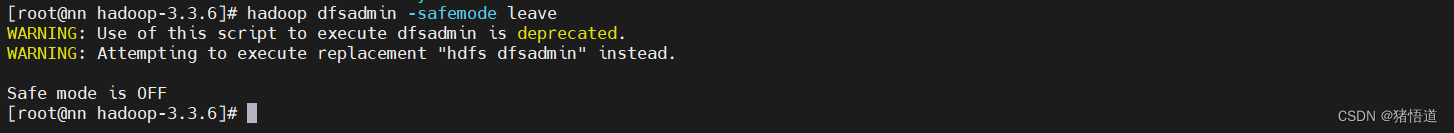

## 1.关闭 hadoop 安全模式

hadoop dfsadmin -safemode leave

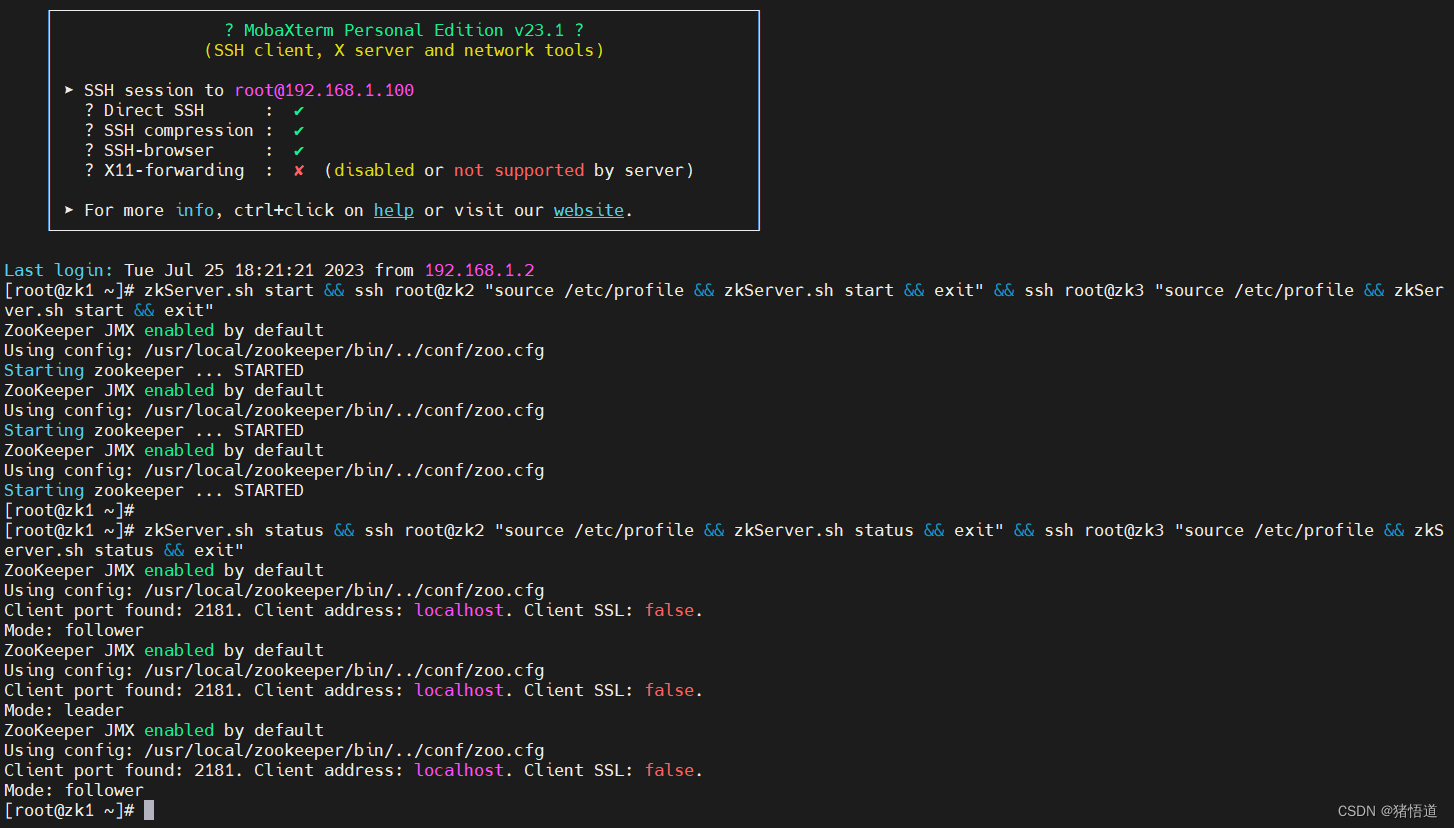

2.启动 zookeeper 集群

## 1.启动 zookeeper 集群

zkServer.sh start && ssh root@zk2 "source /etc/profile && zkServer.sh start && exit" && ssh root@zk3 "source /etc/profile && zkServer.sh start && exit"

## 2.查看状态

zkServer.sh status && ssh root@zk2 "source /etc/profile && zkServer.sh status && exit" && ssh root@zk3 "source /etc/profile && zkServer.sh status && exit"

3.启动 hbase 集群

## 1.分别为 nn /nd1 / nd2 配置 zookeeper 域名解析

echo '192.168.1.100 zk1' >> /etc/hosts

echo '192.168.1.101 zk2' >> /etc/hosts

echo '192.168.1.102 zk3' >> /etc/hosts

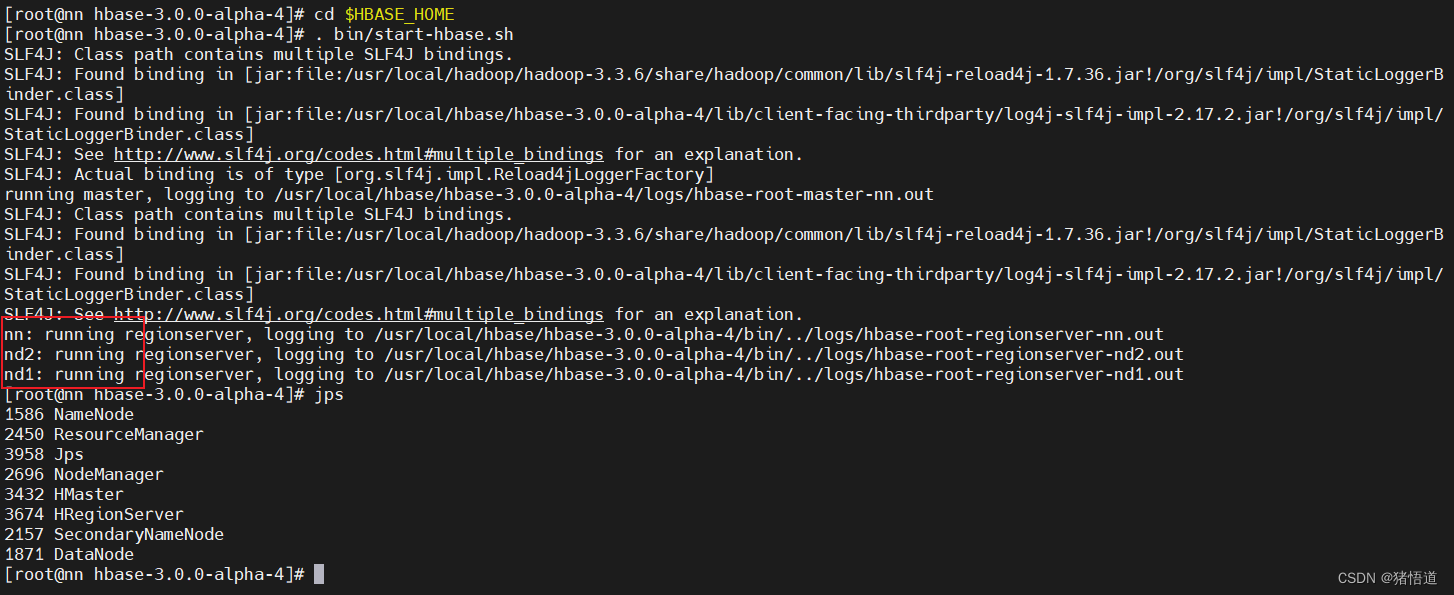

## 2.启动 habase

cd $HBASE_HOME

. bin/start-hbase.sh

## 3.停止服务

. bin/hbase-daemon.sh stop master

. bin/hbase-daemon.sh stop regionserver

. bin/stop-hbase.sh

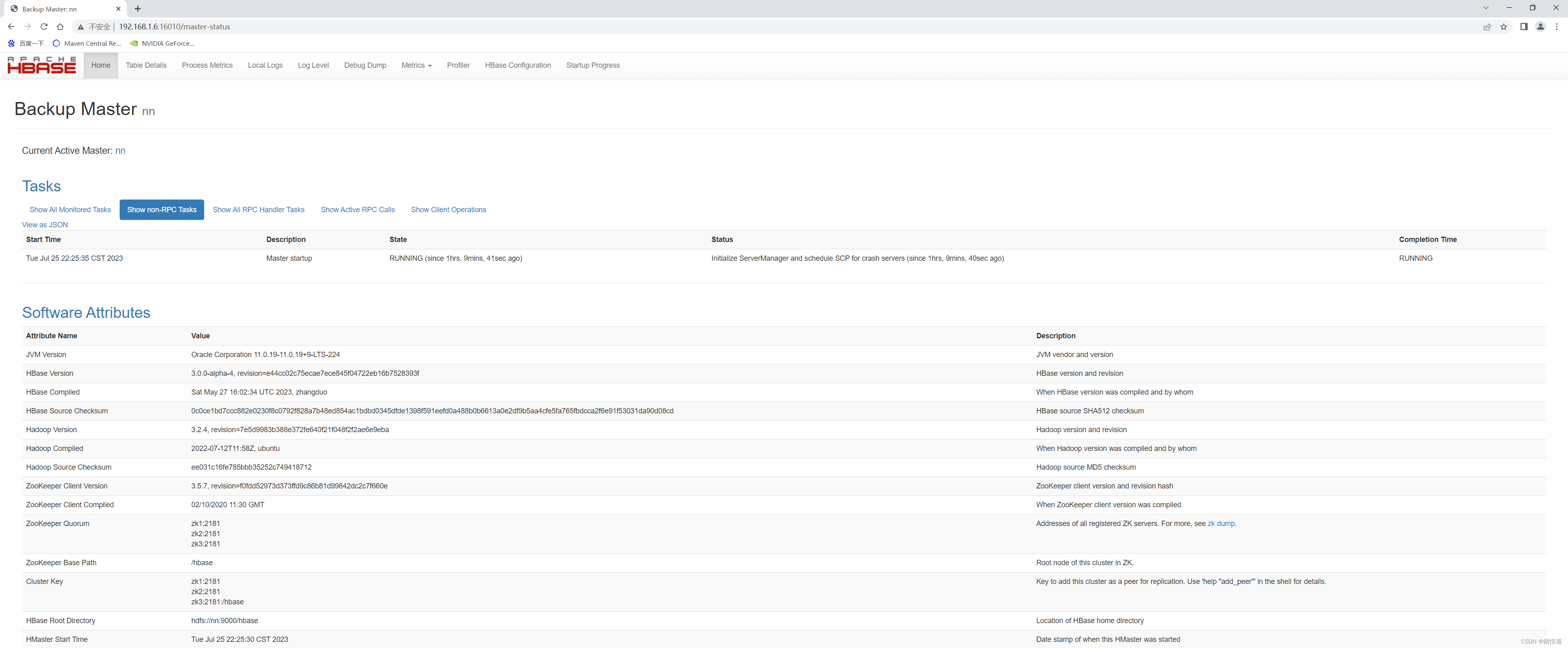

查看 UI 监控:http://192.168.1.6:16010/master-status

4.集群启停脚本

#!/bin/bash

case $1 in

"start")

## start hadoop

start-all.sh

## start zookeeper (先配置免密登录)

zkServer.sh start && ssh root@zk2 "source /etc/profile && zkServer.sh start && exit" && ssh root@zk3 "source /etc/profile && zkServer.sh start && exit"

## start hbase

start-hbase.sh

;;

"stop")

## stop hbase

ssh root@nd1 "source /etc/profile && hbase-daemon.sh stop regionserver && stop-hbase.sh && exit"

ssh root@nd2 "source /etc/profile && hbase-daemon.sh stop regionserver && stop-hbase.sh && exit"

hbase-daemon.sh stop master && hbase-daemon.sh stop regionserver && stop-hbase.sh

## stop zookeeper

zkServer.sh stop && ssh root@zk2 "source /etc/profile && zkServer.sh stop && exit" && ssh root@zk3 "source /etc/profile && zkServer.sh stop && exit"

## stop hadoop

stop-all.sh

;;

*)

echo "pls inout start|stop"

;;

esac

三.测试

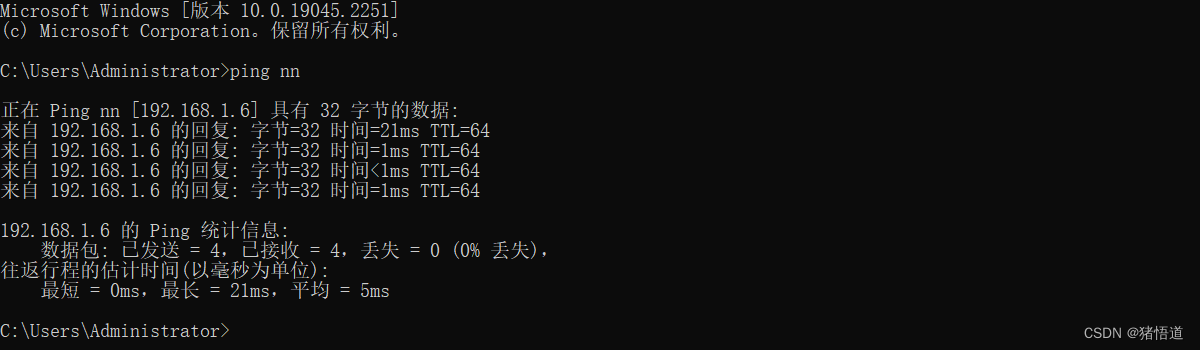

## 1.为 Windows 增加 Hosts 配置,添加 Hbase 集群域名解析 编辑如下文件

C:\Windows\System32\drivers\etc\hosts

## 2.增加如下信息

192.168.1.6 nn

192.168.1.7 nd1

192.168.1.8 nd2

测试配置效果

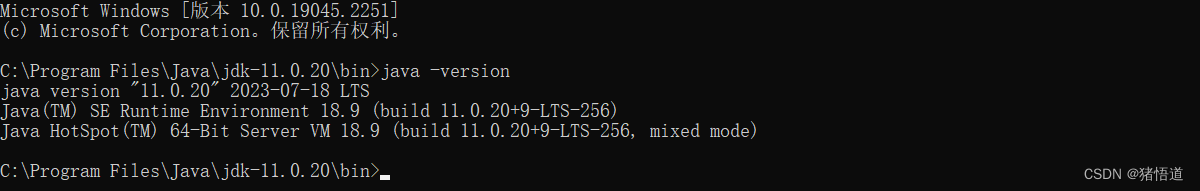

JDK 版本

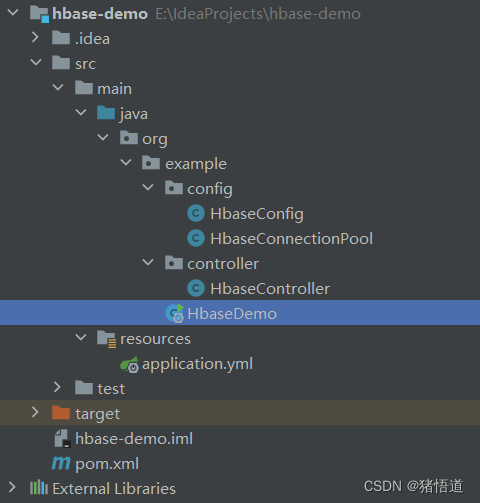

工程结构

1.Pom 配置

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>org.example</groupId>

<artifactId>hbase-demo</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<maven.compiler.source>11</maven.compiler.source>

<maven.compiler.target>11</maven.compiler.target>

<spring.version>2.7.8</spring.version>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

</properties>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

<version>${spring.version}</version>

</dependency>

<dependency>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

<version>1.18.28</version>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>2.0.32</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

&l

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

9450

9450

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?