Setting up Spark on K8S (spark-on-kubernetes-operator)

Requirements

Operator version: latest

Kubernetes version: 1.13 or later

Spark version: 2.4.4 or later; I used 2.4.4

Operator image: latest

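How it works

Spark is a compute engine; paired with a resource scheduler and a storage service it can do useful work. I have always run it on Yarn + HDFS and am now trying K8S + HDFS instead, so in essence this is a replacement of the resource scheduling framework.

Yarn's logical unit of scheduling is the Container. Compared with K8S, the Yarn Container has some shortcomings in resource management: it does not fully isolate the runtime environments of compute jobs (for example when python/java/TensorFlow workloads are co-located). On K8S, containers can isolate different runtime environments on the same physical resources and so make full use of them. A quick comparison of the two (K8S vs Yarn):

- job on pod VS job on container;

- centralized scheduling (api-server) VS two-level scheduling (ResourceManager/ApplicationMaster);

- containers give independent sharing of physical resources with isolated compute environments VS Containers give independent sharing of physical resources (except disk and network IO) with coupled compute environments

- elasticity at the infrastructure (IaaS) layer VS none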

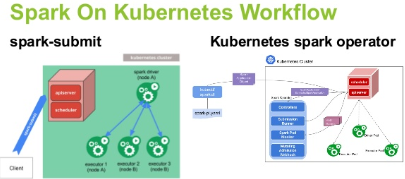

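From the user's point of view, the two submission flows are:

Spark on Yarn

spark-submit ---- ResourceManager ----- ApplicationMaster(Container) ---- Driver(Container)----Executor(Container)

PS: in cluster mode the Driver is started in a Container somewhere in the cluster; in client mode it is started locally on the submitting machine.

Spark on Kubernetes

spark-submit ---- Kube-api-server(Pod) ---- Kube-scheduler(Pod) ---- Driver(Pod) ---- Executor(Pod)

PS: unlike a Deployment/StatefulSet, Spark has no custom controller when it is scheduled this way, so after submission all you see in the cluster are the driver and executor pods, with no Deployment/StatefulSet-style controller managing them.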
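
To make the two flows concrete, here is a minimal sketch that only builds the spark-submit argument lists for each cluster manager; it does not run anything. The `--master`, `--deploy-mode`, `--class` and `spark.kubernetes.container.image` options are standard spark-submit options, while the object name `SubmitCommands`, the api-server address, the image name and the jar path are placeholders assumed for illustration.

```scala
// Sketch only: assembles spark-submit arguments for the two flows; nothing is executed.
object SubmitCommands {
  // Arguments shared by both cluster managers (options first, application jar last).
  private def common(mainClass: String, jar: String): Seq[String] =
    Seq("--deploy-mode", "cluster", "--class", mainClass, jar)

  // Spark on Yarn: the ResourceManager is located through the Hadoop configuration.
  def onYarn(mainClass: String, jar: String): Seq[String] =
    Seq("spark-submit", "--master", "yarn") ++ common(mainClass, jar)

  // Spark on Kubernetes: the master URL points at the api-server, and the image
  // used for the driver/executor pods has to be named explicitly.
  def onKubernetes(apiServer: String, image: String,
                   mainClass: String, jar: String): Seq[String] =
    Seq("spark-submit", "--master", s"k8s://$apiServer",
      "--conf", s"spark.kubernetes.container.image=$image") ++ common(mainClass, jar)

  def main(args: Array[String]): Unit = {
    val mainClass = "org.apache.spark.examples.SparkPi"
    // Placeholder values (assumptions), to be replaced with those of a real cluster.
    println(onYarn(mainClass, "spark-examples.jar").mkString(" "))
    println(onKubernetes("https://kube-apiserver.example:6443", "my-registry/spark:2.4.4",
      mainClass, "local:///opt/spark/examples/jars/spark-examples.jar").mkString(" "))
  }
}
```

The only structural difference on the command line is the master URL and the container image; everything else in the flow (who starts the driver, who schedules the executors) happens on the cluster side.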

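K8S 1.13 already supports a direct spark-submit. To make things easier for users, the operator defines a SparkApplication type through a CRD (Custom Resource Definition). Users create that resource object directly on K8S, and the Spark submit step is carried out on behalf of that resource. As I understand it, the new CRD type is not by itself the main benefit; what the operator adds is independent scheduling, pod status monitoring, wrapping the driver/executor pods into a single SparkApplication that is monitored as a whole, retrying failed executors and so on, all of which makes later development and maintenance easier. So I decided to try it.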

For a first introduction, see these two resources:

https://www.slidestalk.com/AliSpark/MicrosoftPowerPoint55236?video

http://www.sohu.com/a/343653335_315839

An article on scheduler design:

https://io-meter.com/2018/02/09/A-summary-of-designing-schedulers/

On performance, someone has already run a comparison:

https://xiaoxubeii.github.io/articles/practice-of-spark-on-kubernetes/

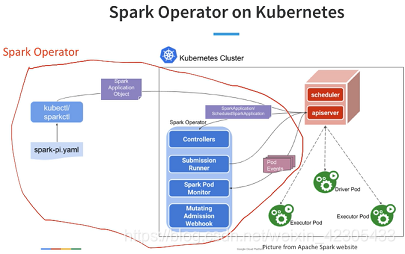

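The official diagram makes the design fairly clear:

- the submit step is pulled out and handled by sparkctl

- a controller is added, which supports the SparkApplication type

- scheduling is pulled out and handled by the operator itself

If you are interested, there is a short introduction here: https://www.slideshare.net/databricks/apache-spark-on-k8s-best-practice-and-performance-in-the-cloud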

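The operator's workflow consists of the following steps:

- a request to create a SparkApplication is submitted to the api-server

- the SparkApplication object (an instance of the CRD) is persisted to etcd

- the operator watches for SparkApplication objects; when it finds one, it fetches it and runs spark-submit through the submission runner, and once that request reaches the api-server the corresponding driver/executor pods are created

- the spark pod monitor tracks the execution state of the application (which is why sparkctl list and sparkctl status can show it)

- the mutating admission webhook creates a svc, through which the Spark web UI can be viewed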
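
The object submitted in the first step can be sketched as follows. This is a minimal illustration that renders a SparkApplication manifest as a YAML string. It assumes the `sparkoperator.k8s.io/v1beta2` API version and the field layout of the operator's spark-pi example, so check both against the operator version you install. The `SparkPiManifest` object, the `AppSpec` case class, the `spark` service account and the resource sizes are hypothetical choices made for this sketch.

```scala
// Sketch only: renders a SparkApplication manifest as text; it does not talk to a cluster.
object SparkPiManifest {
  // The handful of fields varied in this sketch (names are assumptions, not an operator API).
  final case class AppSpec(name: String, namespace: String, image: String,
                           mainClass: String, jar: String,
                           sparkVersion: String, executors: Int)

  def render(a: AppSpec): String =
    s"""apiVersion: sparkoperator.k8s.io/v1beta2
       |kind: SparkApplication
       |metadata:
       |  name: ${a.name}
       |  namespace: ${a.namespace}
       |spec:
       |  type: Scala
       |  mode: cluster
       |  image: "${a.image}"
       |  mainClass: ${a.mainClass}
       |  mainApplicationFile: "${a.jar}"
       |  sparkVersion: "${a.sparkVersion}"
       |  driver:
       |    cores: 1
       |    memory: "512m"
       |    serviceAccount: spark
       |  executor:
       |    cores: 1
       |    instances: ${a.executors}
       |    memory: "512m"
       |""".stripMargin

  def main(args: Array[String]): Unit =
    // Image and jar path are placeholders (assumptions) for a Spark 2.4.4 image.
    print(render(AppSpec("spark-pi", "default", "my-registry/spark:2.4.4",
      "org.apache.spark.examples.SparkPi",
      "local:///opt/spark/examples/jars/spark-examples.jar", "2.4.4", 2)))
}
```

Saving the output to a file and creating it with kubectl apply -f or sparkctl create is what triggers the rest of the steps above: the operator picks up the new object and performs the spark-submit for you.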

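This way, submitting a job becomes as simple as deploying a Deployment/StatefulSet: an image plus a yaml file. We can also use K8S resources such as ConfigMap, Secret and Volume, which is far more convenient than doing our own submit and passing everything through --conf or hard-coding it into the image. (Spark 3.0 does add more configuration options, but in the end they still have to be managed during submit or when building the image.)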

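Environment installation

Installing Kubernetes 1.13

This needs a step-by-step log of its own, so instead of writing it out here I have put it in separate posts: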

https://blog.csdn.net/weixin_42305433/article/details/103931032

https://blog.csdn.net/weixin_42305433/article/details/103931045

Installing spark-on-kubernetes-operator

This also needs a step-by-step log, so it lives in a separate post as well:

https://blog.csdn.net/weixin_42305433/article/details/103930666

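Demo

Preparing the spark-pi image

I decided to practice with the officially recommended spark-pi example. The jar ships in the Spark 2.4.4 image. Later, because I wanted to debug a few things locally, I found the example's source in the corresponding directory of the Spark GitHub repository. The official source is as follows: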

    // scalastyle:off println
    package org.apache.spark.examples

    import scala.math.random

    import org.apache.spark.sql.SparkSession

    /** Computes an approximation to pi */
    object SparkPi {
      def main(args: Array[String]): Unit = {
        val spark = SparkSession
          .builder
          .appName("Spark Pi")
          .getOrCreate()
        val slices = if (
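
The listing above breaks off before the actual computation. SparkPi estimates pi with a Monte Carlo method, and the idea can be sketched in plain Scala without Spark, which is handy for local experiments. This is not the official source: the `LocalPi` object, the `estimate` function and the fixed seed are assumptions made for this sketch, and everything runs in a single thread instead of being spread across partitions.

```scala
import scala.util.Random

// Sketch only: single-process Monte Carlo estimate of pi, no Spark involved.
object LocalPi {
  // Samples n points uniformly in the square [-1, 1] x [-1, 1] and counts how many
  // fall inside the unit circle; that fraction approximates pi / 4.
  def estimate(n: Int, seed: Long): Double = {
    val rng = new Random(seed)
    val inside = (1 to n).count { _ =>
      val x = rng.nextDouble() * 2 - 1
      val y = rng.nextDouble() * 2 - 1
      x * x + y * y <= 1
    }
    4.0 * inside / n
  }

  def main(args: Array[String]): Unit =
    println(s"Pi is roughly ${estimate(1000000, 42L)}")
}
```

In the Spark version the sampling loop is distributed over the executors and the per-point results are summed on the driver, which is why it makes a convenient smoke test for a new cluster.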
