一、Apriori算法原理

参考:Python --深入浅出Apriori关联分析算法(一)www.cnblogs.com

二、在Python中使用Apriori算法

查看Apriori算法的帮助文档:

from mlxtend.frequent_patterns import apriori

help(apriori)

Help on function apriori in module mlxtend.frequent_patterns.apriori:

apriori(df, min_support=0.5, use_colnames=False, max_len=None, verbose=0, low_memory=False)

Get frequent itemsets from a one-hot DataFrame

Parameters

-----------

df : pandas DataFrame

pandas DataFrame the encoded format. Also supports

DataFrames with sparse data;

Please note that the old pandas SparseDataFrame format

is no longer supported in mlxtend >= 0.17.2.

The allowed values are either 0/1 or True/False.

For example,

#apriori算法对输入数据类型有特殊要求!

#需要是数据框格式,并且数据要进行one-hot编码转换,转换后商品名称为列名,值为True或False。

#每一行记录代表一个顾客一次购物记录。

#第0条记录为[Apple,Beer,Chicken,Rice],第1条记录为[Apple,Beer,Rice],以此类推。

```

Apple Bananas Beer Chicken Milk Rice

0 True False True True False True

1 True False True False False True

2 True False True False False False

3 True True False False False False

4 False False True True True True

5 False False True False True True

6 False False True False True False

7 True True False False False False

```

min_support : float (default: 0.5)#最小支持度

A float between 0 and 1 for minumum support of the itemsets returned.

The support is computed as the fraction

`transactions_where_item(s)_occur / total_transactions`.

use_colnames : bool (default: False)

#设置为True,则返回的关联规则、频繁项集会使用商品名称,而不是商品所在列的索引值

If `True`, uses the DataFrames' column names in the returned DataFrame

instead of column indices.

max_len : int (default: None)

Maximum length of the itemsets generated. If `None` (default) all

possible itemsets lengths (under the apriori condition) are evaluated.

verbose : int (default: 0)

Shows the number of iterations if >= 1 and `low_memory` is `True`. If

>=1 and `low_memory` is `False`, shows the number of combinations.

low_memory : bool (default: False)

If `True`, uses an iterator to search for combinations above

`min_support`.

Note that while `low_memory=True` should only be used for large dataset

if memory resources are limited, because this implementation is approx.

3-6x slower than the default.

Returns

-----------

pandas DataFrame with columns ['support', 'itemsets'] of all itemsets

that are >= `min_support` and < than `max_len`

(if `max_len` is not None).

Each itemset in the 'itemsets' column is of type `frozenset`,

which is a Python built-in type that behaves similarly to

sets except that it is immutable.

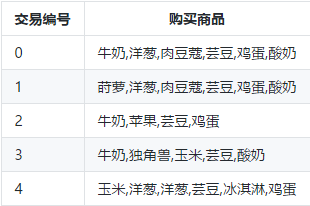

练习数据集:

提取码: 6mbg

部分数据截图:

导入数据:

import pandas as pd

path = 'C:\\Users\\Cara\\Desktop\\store_data.csv'

records = pd.read_csv(path,header=None,encoding='utf-8')

print(records)

结果如下:

使用TransactionEncoder对交易数据进行one-hot编码:

先查看TransactionEncoder的帮助文档:

from mlxtend.preprocessing import TransactionEncoder

... help(TransactionEncoder)

...

Help on class TransactionEncoder in module mlxtend.preprocessing.transactionencoder:

class TransactionEncoder(sklearn.base.BaseEstimator, sklearn.base.TransformerMixin)

| Encoder class for transaction data in Python lists

|

| Parameters

| ------------<

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?