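%% II. Declare global variables
global P_train % training-set inputs
global T_train % training-set outputs
global R % number of input neurons
global S2 % number of output neurons
global S1 % number of hidden-layer neurons
global S % chromosome (encoding) length
S1 = 7;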

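%% Generate the training and test sets
input = xlsread('input.xlsx');
output = xlsread('output.xlsx');

% Shuffle the sample indices
temp = randperm(size(input,1));

% Training set: the first 28 shuffled samples
P_train = input(temp(1:28),:)';
T_train = output(temp(1:28),:)';

% Test set: the remaining shuffled samples, so it cannot overlap the training set
P_test = input(temp(29:end),:)';
T_test = output(temp(29:end),:)';
N = size(P_test,2); % number of test samples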
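
% Illustrative aside (not part of the original script): a minimal sketch of a
% disjoint random split, assuming 10 made-up samples and an 8/2 split. Both
% parts are taken from the same permutation, so no sample can land in both.
% The demo_* names are hypothetical and are not used elsewhere.
demo_n        = 10;
demo_perm     = randperm(demo_n);       % shuffled indices 1..10
demo_train    = demo_perm(1:8);         % first 8 shuffled indices
demo_test     = demo_perm(9:end);       % remaining 2 shuffled indices
demo_disjoint = isempty(intersect(demo_train, demo_test));   % true when no index is shared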

%% III. Normalize the data

[p_train, ps_input] = mapminmax(P_train,0.2,0.8);

p_test = mapminmax('apply',P_test,ps_input);

[t_train, ps_output] = mapminmax(T_train,0.2,0.8);
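
% Illustrative aside (not part of the original script): a minimal sketch of the
% row-wise linear map that mapminmax applies,
%   y = (ymax - ymin) * (x - xmin) / (xmax - xmin) + ymin,
% written out by hand for a made-up row vector so the arithmetic is visible.
% The nrm_* names are hypothetical and are not used elsewhere.
nrm_x    = [2 4 6 10];                  % made-up sample row
nrm_ymin = 0.2;  nrm_ymax = 0.8;        % same target range as the calls above
nrm_y    = (nrm_ymax - nrm_ymin) .* (nrm_x - min(nrm_x)) ./ (max(nrm_x) - min(nrm_x)) + nrm_ymin;
% Inverse map, the hand-written counterpart of mapminmax('reverse', ...)
nrm_back = (nrm_y - nrm_ymin) .* (max(nrm_x) - min(nrm_x)) ./ (nrm_ymax - nrm_ymin) + min(nrm_x);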

%% IV. BP神经网络创建、训练及仿真测试

%%

% 1. 创建网络

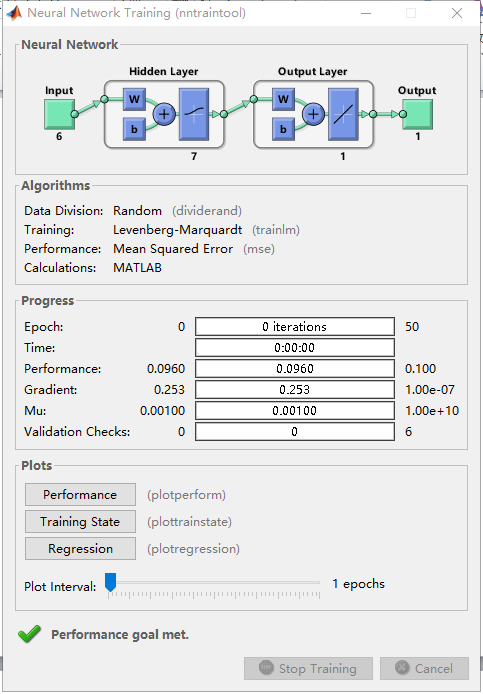

net = newff(p_train,t_train,[S1],{'logsig','purelin'},'trainlm');

%%

% 2. 设置训练参数

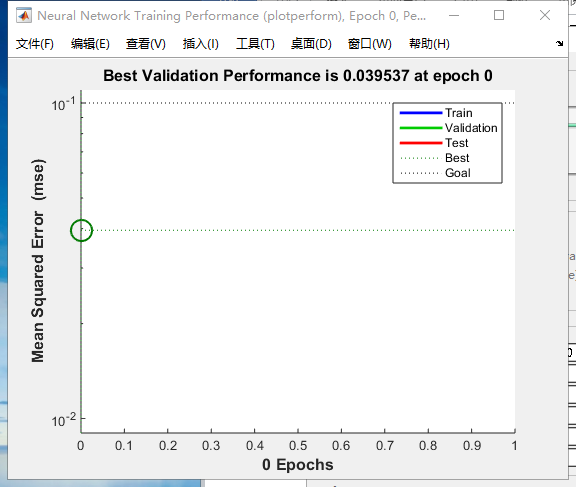

net.trainParam.epochs = 50;

net.trainParam.goal =0.1;

net.trainParam.lr = 0.06;

%%

% 3. Train the network

net = train(net,p_train,t_train);

%%

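% 4. Simulate on the test set
t_sim = sim(net,p_test);

% Map the predictions back to the original output scale
T_sim = mapminmax('reverse',t_sim,ps_output);

% Relative error of the plain BP network
% (named err_bp to avoid shadowing the built-in error function)
err_bp = abs(T_sim - T_test)./T_test;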

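%% V. GA-BP neural network
R = size(p_train,1); % number of input neurons
S2 = size(t_train,1); % number of output neurons
S = R*S1 + S1*S2 + S1 + S2; % total number of weights and biases to encode
aa = ones(S,1)*[-1,1]; % search bounds: every gene lies in [-1, 1]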
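
% Illustrative aside (not part of the original script): a worked example of the
% encoding length, assuming a hypothetical 3-7-1 network. The len_* names are
% made up for this sketch and are not used elsewhere.
len_R  = 3;  len_S1 = 7;  len_S2 = 1;                      % assumed layer sizes
len_S  = len_R*len_S1 + len_S1*len_S2 + len_S1 + len_S2;   % 21 + 7 + 7 + 1 = 36 genes
len_bounds = ones(len_S,1)*[-1,1];                         % 36-by-2 matrix, every row is [-1 1]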

%% VI. Genetic algorithm optimization
%%
% 1. Initialize the population
popu = 50; % population size
initPpp = initializega(popu,aa,'gabpEval',[],[1e-6 1]); % initial population

%%

% 2. Run the optimization
gen = 100; % number of generations
% Call the GAOT toolbox; the objective function is defined in gabpEval
[x,endPop,bPop,trace] = gaot(aa,'gabpEval',[],initPpp,[1e-6 1 1],'maxGenTerm',gen,...
    'normGeomSelect',0.09,'arithXover',2,'nonUnifMutation',[2 gen 3]);

%%

% 3. Plot the error curves (the reciprocals of the fitness values in trace)
figure(1)
plot(trace(:,1),1./trace(:,3),'r-');
title('GA-optimized BP neural network: squared-error curve (Jason niu)')
hold on
plot(trace(:,1),1./trace(:,2),'b-');
xlabel('Generation');
ylabel('Sum-Squared Error');

%%

% 4. Plot the fitness curves
figure(2)
plot(trace(:,1),trace(:,3),'r-');
title('GA-optimized BP neural network: fitness curve (Jason niu)')
hold on
plot(trace(:,1),trace(:,2),'b-');
xlabel('Generation');
ylabel('Fitness');

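%% VII. Decode the best solution and assign it to the network
%%
% 1. Decode the best solution
[W1,B1,W2,B2,val] = gadecod(x);
inputnum = size(P_train,1); % number of input-layer neurons
outputnum = size(T_train,1); % number of output-layer neurons
w1num = R*S1; % number of input-to-hidden weights
w2num = S2*S1; % number of hidden-to-output weights
% Number of optimized variables (equals S). Stored in numVars rather than N,
% because N already holds the number of test samples.
numVars = w1num+S1+w2num+outputnum;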

%%

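% 2. Assign the weights and biases to the network
% (the slicing order below must match the layout used by gabpEval and gadecod)
w1 = x(1:R*S1);
B1 = x(R*S1+1:R*S1+S1);
w2 = x(R*S1+S1+1:R*S1+S1+S1*S2);
B2 = x(R*S1+S1+S1*S2+1:R*S1+S1+S1*S2+S2);
net.iw{1,1} = reshape(w1,S1,R);
net.lw{2,1} = reshape(w2,S2,S1);
net.b{1} = reshape(B1,S1,1);
net.b{2} = reshape(B2,S2,1); % column vector, as the bias property expects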
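
% Illustrative aside (not part of the original script): a minimal sketch of how
% reshape turns a flat chromosome segment into a weight matrix. MATLAB fills
% column by column, so the first S1 genes become the weights from input 1.
% Whether that matches the layout used by gabpEval and gadecod is an
% assumption, since neither file is shown here. The seg_* names are hypothetical.
seg_R  = 2;  seg_S1 = 3;                  % assumed sizes: 2 inputs, 3 hidden neurons
seg_w  = 1:seg_R*seg_S1;                  % stand-in for x(1:R*S1)
seg_W  = reshape(seg_w, seg_S1, seg_R);   % 3-by-2 matrix [1 4; 2 5; 3 6]
seg_flat = reshape(seg_W, 1, []);         % flattening again restores the segment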

%% VIII. Train with the new weights and biases

net = train(net,p_train,t_train);

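%% IX. Simulation test
s_ga = sim(net,p_test); % simulate on the normalized test inputs after GA optimization
T_sim = mapminmax('reverse',s_ga,ps_output);

%%
% 1. Relative error
rel_err = abs(T_sim - T_test)./T_test;

%%
% 2. Coefficient of determination R^2
N_test = size(P_test,2); % number of test samples
R2 = (N_test * sum(T_sim .* T_test) - sum(T_sim) * sum(T_test))^2 / ((N_test * sum((T_sim).^2) - (sum(T_sim))^2) * (N_test * sum((T_test).^2) - (sum(T_test))^2));

%%
% 3. Compare the results
result = [T_test' T_sim' rel_err'];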
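
% Illustrative aside (not part of the original script): a minimal check, on
% made-up numbers, that the R^2 expression above is the squared Pearson
% correlation between predictions and targets, so it should agree with
% corrcoef up to rounding. The chk_* names are hypothetical.
chk_t  = [1 2 3 4 5];                     % made-up targets
chk_p  = [1.1 1.9 3.2 3.9 5.1];           % made-up predictions
chk_n  = numel(chk_t);
chk_R2 = (chk_n*sum(chk_p.*chk_t) - sum(chk_p)*sum(chk_t))^2 / ...
    ((chk_n*sum(chk_p.^2) - sum(chk_p)^2) * (chk_n*sum(chk_t.^2) - sum(chk_t)^2));
chk_c  = corrcoef(chk_p, chk_t);          % 2-by-2 correlation matrix
chk_diff = abs(chk_R2 - chk_c(1,2)^2);    % expected to be at rounding level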
