
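I. Custom InputFormat

The MapReduce framework already provides many built-in input classes for reading file data.

If the built-in input classes are not enough, we can also implement file input ourselves with a custom InputFormat.

Hands-on example

1. Requirement

We have a large number of small files. Using a custom InputFormat, we read every small file in full and write them all out as one large file in SequenceFile format, thereby merging the files.

2. Implementation

Step 1: Custom InputFormat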

package xsluo.inputformat;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.InputSplit;

import org.apache.hadoop.mapreduce.JobContext;

import org.apache.hadoop.mapreduce.RecordReader;

import org.apache.hadoop.mapreduce.TaskAttemptContext;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import java.io.IOException;

public class MyInputFormat extends FileInputFormat<NullWritable, BytesWritable> {

@Override

public RecordReader<NullWritable, BytesWritable> createRecordReader(InputSplit inputSplit, TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {

MyRecordReader myRecordReader = new MyRecordReader();

myRecordReader.initialize(inputSplit,taskAttemptContext);

return myRecordReader;

}

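/**

* Decides whether a file can be split. Returning false makes it unsplittable,

* so the whole file content is read in one go when the data is read.

* @param context the job context

* @param filename the file being considered

* @return false, so that files are never split

*/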

@Override

protected boolean isSplitable(JobContext context, Path filename) {

return false;

}

}

Step 2: Custom RecordReader to read the data

package xsluo.inputformat;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.IOUtils;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.InputSplit;

import org.apache.hadoop.mapreduce.RecordReader;

import org.apache.hadoop.mapreduce.TaskAttemptContext;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class MyRecordReader extends RecordReader<NullWritable, BytesWritable> {

private FileSplit fileSplit;

private Configuration configuration;

private BytesWritable bytesWritable;

// flag: set to true once the file has been read

private boolean flag = false;

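/**

* Initialization; called only once. Once we have the file split, we can get at the file content.

* @param inputSplit the input file split

* @param taskAttemptContext the task attempt context, used here for its Configuration

* @throws IOException

* @throws InterruptedException

*/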

@Override

public void initialize(InputSplit inputSplit, TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {

this.fileSplit = (FileSplit) inputSplit;

this.configuration = taskAttemptContext.getConfiguration();

bytesWritable = new BytesWritable();

}

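/**

* Reads the data.

* Returns true when a new key/value pair has been read and is available to the caller.

* Returns false when there is nothing left to read; here that is every call after the first,

* because the whole file is read as a single record.

* @return true if a record was read, false otherwise

* @throws IOException

* @throws InterruptedException

*/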

@Override

public boolean nextKeyValue() throws IOException, InterruptedException {

if (!flag){

long length = fileSplit.getLength();

byte[] bytes = new byte[(int) length];

// With the split in hand, read the content of the file it points to

Path path = fileSplit.getPath(); // path of the split, e.g. file:/// or hdfs://

FileSystem fileSystem = path.getFileSystem(configuration); // resolve the file system

FSDataInputStream inputStream = fileSystem.open(path); // open an input stream on the file

// inputStream ==> byte[] ==> BytesWritable

try {

IOUtils.readFully(inputStream,bytes,0,(int)length);

bytesWritable.set(bytes,0,(int)length);

} finally {

// Close only the stream; the FileSystem instance is cached and shared, so it is left open

IOUtils.closeStream(inputStream);

}

flag = true;

return true;

}

return false;

}

/**

* Returns the current key (k1)

* @return

* @throws IOException

* @throws InterruptedException

*/

@Override

public NullWritable getCurrentKey() throws IOException, InterruptedException {

return NullWritable.get();

}

/**

* Returns the current value (v1)

* @return

* @throws IOException

* @throws InterruptedException

*/

@Override

public BytesWritable getCurrentValue() throws IOException, InterruptedException {

return bytesWritable;

}

/**

* Read progress of the file: 0 before the single record is read, 1 afterwards

* @return

* @throws IOException

* @throws InterruptedException

*/

@Override

public float getProgress() throws IOException, InterruptedException {

return flag ? 1.0f : 0.0f;

}

/**

* Releases resources

* @throws IOException

*/

@Override

public void close() throws IOException {

}

}
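The return value of nextKeyValue() is easy to get backwards, so here is a minimal plain-Java sketch of the read loop. WholeFileReaderSketch is a hypothetical stand-in that copies only the flag logic of MyRecordReader and uses no Hadoop types; the drain method is an assumption about how a map task conceptually consumes a reader (keep calling nextKeyValue() while it returns true).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for MyRecordReader: same flag logic, no Hadoop types.
class WholeFileReaderSketch {
    private final byte[] fileContent;
    private byte[] currentValue;
    private boolean processed = false;

    WholeFileReaderSketch(byte[] fileContent) {
        this.fileContent = fileContent;
    }

    // true: a new record is available; false: nothing left to read.
    boolean nextKeyValue() {
        if (!processed) {
            currentValue = fileContent;
            processed = true;
            return true;
        }
        return false;
    }

    byte[] getCurrentValue() {
        return currentValue;
    }

    // Consumes the reader the way a map task conceptually does.
    static List<byte[]> drain(WholeFileReaderSketch reader) {
        List<byte[]> records = new ArrayList<>();
        while (reader.nextKeyValue()) {
            records.add(reader.getCurrentValue());
        }
        return records;
    }

    public static void main(String[] args) {
        List<byte[]> records = drain(new WholeFileReaderSketch("hello".getBytes()));
        System.out.println("records read: " + records.size());
    }
}
```

Because the whole file is one record, the loop body runs exactly once per file, which is why the mapper in the next step sees one call per small file.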

Step 3: Custom Mapper class

package xsluo.inputformat;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class MyInputFormatMapper extends Mapper<NullWritable, BytesWritable, Text,BytesWritable> {

@Override

protected void map(NullWritable key, BytesWritable value, Context context) throws IOException, InterruptedException {

FileSplit inputSplit = (FileSplit) context.getInputSplit();

String name = inputSplit.getPath().getName(); // name of the file

context.write(new Text(name),value);

}

}

Step 4: The main method

package xsluo.inputformat;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.BytesWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class MyInputFormatMain extends Configured implements Tool {

@Override

public int run(String[] strings) throws Exception {

Job job = Job.getInstance(super.getConf(), "mergeSmallFiles");

job.setInputFormatClass(MyInputFormat.class);

MyInputFormat.addInputPath(job,new Path("F:\\testDatas\\small_files"));

job.setMapperClass(MyInputFormatMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(BytesWritable.class);

// No custom reducer is set, but the reduce output types (k3, v3) must still be declared

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(BytesWritable.class);

// Write the output in SequenceFile format

job.setOutputFormatClass(SequenceFileOutputFormat.class);

SequenceFileOutputFormat.setOutputPath(job,new Path("F:\\testDatas\\out_sequence"));

boolean b = job.waitForCompletion(true);

return b?0:1;

}

public static void main(String[] args) throws Exception {

int i = ToolRunner.run(new Configuration(), new MyInputFormatMain(), args);

System.exit(i);

}

}

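II. Custom Partitioning

Partitioning is a default step in the execution of every MapReduce job.

- The main job of partitioning is to send key/value pairs with the same key to the same partition.

- MapReduce defines an abstract class called Partitioner; the default implementation is HashPartitioner, whose source code shows the partitioning logic described below.

Partitioning is the third step of MR programming; it decides which partition each key/value pair produced by map is assigned to.

- How is this done?

- It relies on the concept of a partitioner.

The default partitioner: HashPartitioner

- The MR framework has a default partitioner, HashPartitioner.

From its source we can observe:

- HashPartitioner extends the abstract class Partitioner

- It implements the getPartition() method

- The method takes the hash code of the key

- It ANDs the hash code bitwise with Integer.MAX_VALUE

- It takes the result modulo the number of reduce tasks

So we can conclude that identical keys always fall into the same partition.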
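The hash, mask and modulo steps listed above can be tried out in plain Java. This is a minimal sketch with no Hadoop dependency; HashPartitionSketch and partitionFor are illustrative names chosen here, and the expression is written from the steps described in this section rather than copied from the Hadoop source.

```java
// Plain-Java sketch of the hash -> mask -> modulo steps listed above.
// HashPartitionSketch and partitionFor are illustrative names, not Hadoop APIs.
class HashPartitionSketch {

    // Clear the sign bit of the hash code, then take the remainder.
    static int partitionFor(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        int numReduceTasks = 3;
        // The same key always maps to the same partition.
        System.out.println(partitionFor("13726230503", numReduceTasks));
        System.out.println(partitionFor("13726230503", numReduceTasks));
        // An Integer's hash code is its value, so -7 shows why the mask matters.
        Integer negativeKey = -7;
        System.out.println("without mask: " + (negativeKey.hashCode() % numReduceTasks));
        System.out.println("with mask:    " + partitionFor(negativeKey, numReduceTasks));
    }
}
```

The mask is there because Java's % operator keeps the sign of the left operand, so a negative hash code would otherwise produce a negative partition index.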

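Custom partitioner

In production we sometimes need custom partitioning logic so that keys land in the partitions we choose.

That is when a custom partitioner is needed.

How to implement it?

Follow the default partitioner HashPartitioner:

- Write a custom partitioner class, e.g. CustomPartitioner

- Extend the abstract class Partitioner

- Implement the getPartition method; this method defines the partitioning logic

- In the main method

- Register the custom partitioner: job.setPartitionerClass(CustomPartitioner.class)

- Set the matching number of reduce tasks: job.setNumReduceTasks(3)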

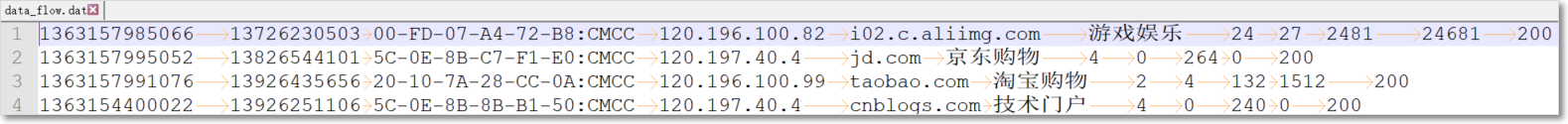

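We have a set of mobile-phone traffic data; the sample data is as follows.

Data format description

Requirement: use MR to split the records of different phone numbers into 6 different files, according to the following rules:

Phone numbers starting with 135 go into one file,

Phone numbers starting with 136 go into one file,

Phone numbers starting with 137 go into one file,

Phone numbers starting with 138 go into one file,

Phone numbers starting with 139 go into one file,

Phone numbers with any other prefix go into one file.

Implementation:

Following the 8 steps of MR programming, the code to write is:

- 1. Design a JavaBean for the input data

- 2. Custom Mapper logic (step 2)

- 3. Custom partitioner class (step 3)

- 4. Custom Reducer logic (step 7)

- 5. The main program entry point

Code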

1. Design a JavaBean for the data file

package xsluo.partition;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class FlowBean implements Writable {

private Integer upFlow;

private Integer downFlow;

private Integer upCountFlow;

private Integer downCountFlow;

/**

* Serialization method

* @param dataOutput

* @throws IOException

*/

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeInt(upFlow);

dataOutput.writeInt(downFlow);

dataOutput.writeInt(upCountFlow);

dataOutput.writeInt(downCountFlow);

}

/**

* Deserialization method

* Note: use the matching read methods, and read the fields in the same order they were written

* @param dataInput

* @throws IOException

*/

@Override

public void readFields(DataInput dataInput) throws IOException {

this.upFlow = dataInput.readInt();

this.downFlow = dataInput.readInt();

this.upCountFlow = dataInput.readInt();

this.downCountFlow = dataInput.readInt();

}

@Override

public String toString() {

return "FlowBean{" +

"upFlow=" + upFlow +

", downFlow=" + downFlow +

", upCountFlow=" + upCountFlow +

", downCountFlow=" + downCountFlow +

'}';

}

public Integer getUpFlow() {

return upFlow;

}

public void setUpFlow(Integer upFlow) {

this.upFlow = upFlow;

}

public Integer getDownFlow() {

return downFlow;

}

public void setDownFlow(Integer downFlow) {

this.downFlow = downFlow;

}

public Integer getUpCountFlow() {

return upCountFlow;

}

public void setUpCountFlow(Integer upCountFlow) {

this.upCountFlow = upCountFlow;

}

public Integer getDownCountFlow() {

return downCountFlow;

}

public void setDownCountFlow(Integer downCountFlow) {

this.downCountFlow = downCountFlow;

}

}
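The rule that fields must be read back in the order they were written can be checked without a cluster. The following is a minimal sketch using only java.io; FlowRoundTripSketch is a hypothetical class that mirrors the four int fields of FlowBean but does not implement Hadoop's Writable, and the values come from the sample record quoted in the mapper below.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical mirror of FlowBean's fields, using plain java.io streams.
class FlowRoundTripSketch {
    int upFlow;
    int downFlow;
    int upCountFlow;
    int downCountFlow;

    // Write the four fields in a fixed order.
    void write(DataOutputStream out) throws IOException {
        out.writeInt(upFlow);
        out.writeInt(downFlow);
        out.writeInt(upCountFlow);
        out.writeInt(downCountFlow);
    }

    // Read them back in exactly the same order.
    void readFields(DataInputStream in) throws IOException {
        upFlow = in.readInt();
        downFlow = in.readInt();
        upCountFlow = in.readInt();
        downCountFlow = in.readInt();
    }

    // Serialize to a byte array and deserialize into a fresh object.
    static FlowRoundTripSketch roundTrip(FlowRoundTripSketch original) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            original.write(new DataOutputStream(buffer));
            FlowRoundTripSketch copy = new FlowRoundTripSketch();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(buffer.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FlowRoundTripSketch bean = new FlowRoundTripSketch();
        bean.upFlow = 24;
        bean.downFlow = 27;
        bean.upCountFlow = 2481;
        bean.downCountFlow = 24681;
        FlowRoundTripSketch copy = roundTrip(bean);
        System.out.println(copy.upFlow + " " + copy.downFlow + " "
                + copy.upCountFlow + " " + copy.downCountFlow);
    }
}
```

If readFields read downFlow before upFlow, the copy would silently carry swapped values, since the byte stream holds no field names.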

2. Custom Mapper class

package xsluo.partition;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowMapper extends Mapper<LongWritable, Text, Text, FlowBean> {

private FlowBean flowBean;

private Text text;

@Override

protected void setup(Context context) throws IOException, InterruptedException {

flowBean = new FlowBean();

text = new Text();

}

/**

* 1363157985066 13726230503 00-FD-07-A4-72-B8:CMCC

* 120.196.100.82 i02.c.aliimg.com 游戏娱乐 24 27 2481 24681 200

*

* @param key

* @param value

* @param context

* @throws IOException

* @throws InterruptedException

*/

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String[] fields = value.toString().split("\t");

String phoneNum = fields[1];

String upFlow = fields[6];

String downFlow = fields[7];

String upCountFlow = fields[8];

String downCountFlow = fields[9];

text.set(phoneNum);

flowBean.setUpFlow(Integer.parseInt(upFlow));

flowBean.setDownFlow(Integer.parseInt(downFlow));

flowBean.setUpCountFlow(Integer.parseInt(upCountFlow));

flowBean.setDownCountFlow(Integer.parseInt(downCountFlow));

context.write(text,flowBean);

}

}

3. Custom partitioner

package xsluo.partition;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

public class MyPartition extends Partitioner<Text,FlowBean> {

@Override

public int getPartition(Text text, FlowBean flowBean, int numPartitions) {

String phoneNum = text.toString();

if (null != phoneNum && !phoneNum.equals("")){

if (phoneNum.startsWith("135")){

return 0;

}else if (phoneNum.startsWith("136")){

return 1;

}else if (phoneNum.startsWith("137")){

return 2;

}else if (phoneNum.startsWith("138")){

return 3;

}else if (phoneNum.startsWith("139")){

return 4;

}else {

return 5;

}

}else {

return 5;

}

}

}

4. Custom Reducer

package xsluo.partition;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<Text,FlowBean,Text,Text> {

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

int upFlow = 0;

int downFlow = 0;

int upCountFlow = 0;

int downCountFlow = 0;

for (FlowBean value : values) {

upFlow += value.getUpFlow();

downFlow += value.getDownFlow();

upCountFlow += value.getUpCountFlow();

downCountFlow += value.getDownCountFlow();

}

context.write(key,new Text(upFlow + "\t" + downFlow + "\t" + upCountFlow + "\t" + downCountFlow));

}

}

5. main entry point

package xsluo.partition;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class FlowMain extends Configured implements Tool {

@Override

public int run(String[] strings) throws Exception {

// Get the job object

Job job = Job.getInstance(super.getConf(), "flowCount");

// Required when the program is packaged as a jar and run on a cluster

job.setJarByClass(FlowMain.class);

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job, new Path(strings[0]));

job.setMapperClass(FlowMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(FlowBean.class);

// Custom partitioner

job.setPartitionerClass(MyPartition.class);

// Number of reduce tasks, taken from the third command-line argument

job.setNumReduceTasks(Integer.parseInt(strings[2]));

job.setReducerClass(FlowReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

job.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(job, new Path(strings[1]));

boolean b = job.waitForCompletion(true);

return b ? 0 : 1;

}

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration();

//configuration.set("mapreduce.framework.name", "local");

//configuration.set("yarn.resourcemanager.hostname", "local");

int run = ToolRunner.run(configuration, new FlowMain(), args);

System.exit(run);

}

}

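Note: the custom-partitioning example must be packaged as a jar and uploaded to the cluster to run, because the local run can no longer simulate it with multiple threads. Upload the data to HDFS, submit the job to the cluster with hadoop jar, and observe how the number of partitions relates to the number of reduce tasks.

Questions to consider:

- With 6 partitions specified by hand, what happens if the number of reduce tasks is set to 3?

- With 6 partitions specified by hand, what happens if the number of reduce tasks is set to 9?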
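One way to reason about the two questions is to compare each partition index the prefix rule can return with the number of reduce tasks. The sketch below is plain Java with no Hadoop dependency. PartitionRangeSketch and isLegal are hypothetical names, and the range check is an assumption about how the framework validates a partition index (to my understanding, an index outside 0..numReduceTasks-1 makes the map task fail with an "Illegal partition" error, while unused indexes simply produce empty output files). All phone numbers except 13726230503 are made up.

```java
// Sketch: which partition indexes are usable for a given number of reduce tasks.
class PartitionRangeSketch {

    // Same prefix rule as the partitioner in this section.
    static int prefixPartition(String phoneNum) {
        if (phoneNum == null || phoneNum.isEmpty()) return 5;
        if (phoneNum.startsWith("135")) return 0;
        if (phoneNum.startsWith("136")) return 1;
        if (phoneNum.startsWith("137")) return 2;
        if (phoneNum.startsWith("138")) return 3;
        if (phoneNum.startsWith("139")) return 4;
        return 5;
    }

    // Assumption: an index is accepted only if it lies in [0, numReduceTasks).
    static boolean isLegal(int partition, int numReduceTasks) {
        return partition >= 0 && partition < numReduceTasks;
    }

    public static void main(String[] args) {
        // Illustrative phone numbers; only 13726230503 appears in the sample data.
        String[] phones = {"13512345678", "13726230503", "13912345678", "18612345678"};
        for (int numReduceTasks : new int[] {3, 9}) {
            for (String phone : phones) {
                int p = prefixPartition(phone);
                System.out.println(numReduceTasks + " reduce tasks, " + phone + " -> partition "
                        + p + (isLegal(p, numReduceTasks) ? " (ok)" : " (out of range)"));
            }
        }
    }
}
```

With 3 reduce tasks, indexes 3 to 5 fall outside the valid range; with 9 reduce tasks every index is valid and tasks 6 to 8 never receive a key.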

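III. Custom Sorting

1. Sortable keys

- Sorting is one of the most important operations in the MapReduce framework.

- Both MapTask and ReduceTask sort data by key. This is Hadoop's default behavior: the data of every application is sorted, whether or not the logic needs it.

- The default order is lexicographic, and the sort is implemented with quicksort.

- A MapTask places its results temporarily in a circular buffer. When buffer usage reaches a threshold, it quicksorts the data in the buffer and spills the sorted data to disk. Once all data has been processed, it merge-sorts all the files on disk.

- A ReduceTask remotely copies the relevant data files from each completed MapTask.

- If a file is larger than a threshold it is spilled to disk; otherwise it is kept in memory.

- If the number of files on disk reaches a threshold, they are merge-sorted into one larger file.

- If the size or number of files in memory exceeds a threshold, they are merged and the data is spilled to disk.

- After all data has been copied, the ReduceTask performs one final merge sort over all data in memory and on disk.

2. Types of sorting:

- Partial sort

MapReduce sorts the data set by the key of the input records, guaranteeing that each output file is sorted internally.

- Total sort

The final output is a single, internally sorted file. It is achieved by configuring only one ReduceTask. This is very inefficient for large files, because a single machine processes everything and the parallelism MapReduce provides is lost entirely.

- Auxiliary sort

Keys are grouped on the reduce side. Use case: when the key is a bean object and you want keys that agree on one or a few fields (but not on all fields) to enter the same reduce call, you can use grouping sort.

- Secondary sort

- Secondary sort: in MR programming, sort the input by some column a first and, where a is equal, by another column b.

- The built-in MR types cannot express this as a key, so a custom JavaBean is usually needed as the map output key.

- The JavaBean specifies the sort rule in its compareTo method.

3. Secondary sort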

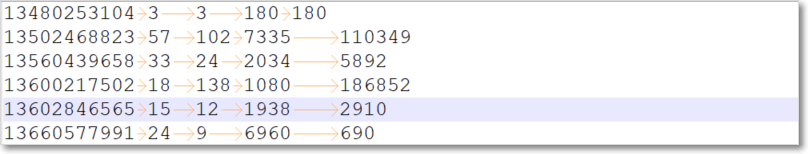

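Data: the sample data is as follows.

Each record has 5 fields: phone number, total upstream packets, total downstream packets, total upstream traffic, total downstream traffic.

Requirement: sort by total downstream packets in ascending order first; on ties, sort by total upstream traffic in descending order.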
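The two-level ordering in this requirement can be tried on a few rows before any MapReduce code is written. This is a minimal plain-Java sketch; SecondarySortSketch and Row are hypothetical names, the rows are made-up sample values, and java.util.Comparator stands in here for the compareTo method of the JavaBean defined below.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Sketch of "downstream packets ascending, then upstream traffic descending".
class SecondarySortSketch {

    // Illustrative row holding only the fields used by the sort rule.
    static final class Row {
        final String phone;
        final int downPackNum;
        final int upPayload;

        Row(String phone, int downPackNum, int upPayload) {
            this.phone = phone;
            this.downPackNum = downPackNum;
            this.upPayload = upPayload;
        }
    }

    static final Comparator<Row> ORDER = Comparator
            .comparingInt((Row r) -> r.downPackNum)
            .thenComparing(Comparator.comparingInt((Row r) -> r.upPayload).reversed());

    // Returns a sorted copy and leaves the input list untouched.
    static List<Row> sort(List<Row> rows) {
        List<Row> sorted = new ArrayList<>(rows);
        sorted.sort(ORDER);
        return sorted;
    }

    public static void main(String[] args) {
        // Made-up sample rows: (phone, downstream packets, upstream traffic).
        List<Row> rows = Arrays.asList(
                new Row("A", 3, 180),
                new Row("B", 2, 100),
                new Row("C", 3, 500));
        for (Row r : sort(rows)) {
            System.out.println(r.phone + "\t" + r.downPackNum + "\t" + r.upPayload);
        }
    }
}
```

Rows A and C tie on the first field, so the second field decides their relative order, with the larger upstream traffic first.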

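Following the 8 steps of MR programming, the code to write is:

- 1. Design a JavaBean for the input data and the secondary-sort rule

- 2. Custom Mapper logic (step 2)

- 3. Custom Reducer logic (step 7)

- 4. The main program entry point

Code:

1) Define the JavaBean that holds the data and defines the sort rule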

package xsluo.sort;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class FlowSortBean implements WritableComparable<FlowSortBean> {

private String phone;

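// number of upstream packets

private Integer upPackNum;

// number of downstream packets

private Integer downPackNum;

// total upstream traffic

private Integer upPayload;

// total downstream traffic

private Integer downPayload;

// Compares two FlowSortBean objects

/**

* Sort by total downstream packets ascending; on ties, by total upstream traffic descending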

* @param o

* @return

*/

@Override

public int compareTo(FlowSortBean o) {

// ascending

int i = this.downPackNum.compareTo(o.downPackNum);

if (i == 0){

// descending: negate the comparison result

i = -this.upPayload.compareTo(o.upPayload);

}

return i;

}

// serialization

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeUTF(phone);

dataOutput.writeInt(upPackNum);

dataOutput.writeInt(downPackNum);

dataOutput.writeInt(upPayload);

dataOutput.writeInt(downPayload);

}

// deserialization

@Override

public void readFields(DataInput dataInput) throws IOException {

this.phone = dataInput.readUTF();

this.upPackNum = dataInput.readInt();

this.downPackNum = dataInput.readInt();

this.upPayload = dataInput.readInt();

this.downPayload = dataInput.readInt();

}

@Override

public String toString() {

return "FlowSortBean{" +

"phone='" + phone + '\'' +

", upPackNum=" + upPackNum +

", downPackNum=" + downPackNum +

", upPayload=" + upPayload +

", downPayload=" + downPayload +

'}';

}

public String getPhone() {

return phone;

}

public void setPhone(String phone) {

this.phone = phone;

}

public Integer getUpPackNum() {

return upPackNum;

}

public void setUpPackNum(Integer upPackNum) {

this.upPackNum = upPackNum;

}

public Integer getDownPackNum() {

return downPackNum;

}

public void setDownPackNum(Integer downPackNum) {

this.downPackNum = downPackNum;

}

public Integer getUpPayload() {

return upPayload;

}

public void setUpPayload(Integer upPayload) {

this.upPayload = upPayload;

}

public Integer getDownPayload() {

return downPayload;

}

public void setDownPayload(Integer downPayload) {

this.downPayload = downPayload;

}

}

2) Custom mapper class

package xsluo.sort;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowSortMapper extends Mapper<LongWritable, Text,FlowSortBean, NullWritable> {

private FlowSortBean flowSortBean;

// initialization

@Override

protected void setup(Context context) throws IOException, InterruptedException {

flowSortBean = new FlowSortBean();

}

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

/**

* phone number, upstream packets, downstream packets, total upstream traffic, total downstream traffic

* 13480253104 3 3 180 180

*/

String[] fields = value.toString().split("\t");

flowSortBean.setPhone(fields[0]);

flowSortBean.setUpPackNum(Integer.parseInt(fields[1]));

flowSortBean.setDownPackNum(Integer.parseInt(fields[2]));

flowSortBean.setUpPayload(Integer.parseInt(fields[3]));

flowSortBean.setDownPayload(Integer.parseInt(fields[4]));

context.write(flowSortBean, NullWritable.get());

}

}

3) Custom reducer class

package xsluo.sort;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowSortReducer extends Reducer<FlowSortBean, NullWritable,FlowSortBean,NullWritable> {

@Override

protected void reduce(FlowSortBean key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {

// The data arrives already sorted, so just write it out

context.write(key,NullWritable.get());

}

}

4) main program entry point

package xsluo.sort;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class FlowSortMain extends Configured implements Tool {

@Override

public int run(String[] strings) throws Exception {

// Get the job object

Job job = Job.getInstance(super.getConf(), "flowSort");

// Required when the program is packaged as a jar and run on a cluster

job.setJarByClass(FlowSortMain.class);

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job, new Path(strings[0]));

job.setMapperClass(FlowSortMapper.class);

job.setMapOutputKeyClass(FlowSortBean.class);

job.setMapOutputValueClass(NullWritable.class);

job.setReducerClass(FlowSortReducer.class);

job.setOutputKeyClass(FlowSortBean.class);

job.setOutputValueClass(NullWritable.class);

job.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(job, new Path(strings[1]));

boolean b = job.waitForCompletion(true);

return b ? 0 : 1;

}

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration();

int run = ToolRunner.run(configuration, new FlowSortMain(), args);

System.exit(run);

}

}

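IV. Custom Combiner

1. Combiner basics

- A combiner is essentially a reduce-style aggregation; a combiner class extends the Reducer parent class.

- A combiner runs on the map side and aggregates the output of a single map task, whereas reduce aggregates data coming from different map tasks.

- Purpose: a combiner can reduce the amount of data a map task spills to disk and transfers to the reduce tasks.

- Can a map-side combine be used?

- Not every MapReduce job is suited to a combiner. Whether or not one is used, the final result must not change. The average computation below is an example that is not suited to a combiner.

Mapper

3 5 7 -> (3+5+7)/3 = 5

2 6 -> (2+6)/2 = 4

Reducer

(3+5+7+2+6)/5 = 23/5, which is not equal to (5+4)/2 = 9/2

2. Requirement: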

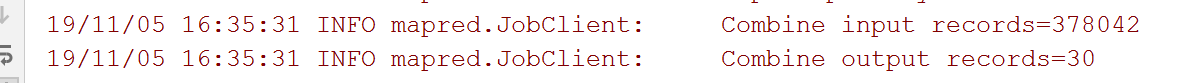

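Take the earlier wordCount word-count job and add a Combiner stage, so that the data is aggregated on the map side before being sent to the reduce side, reducing the data copied over the network.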
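Why summing counts is safe to pre-aggregate while averaging is not can be checked with the numbers from the average example above. The following is a minimal plain-Java sketch; CombinerSketch and its method names are hypothetical, and the two int arrays stand in for the outputs of two map tasks.

```java
import java.util.Arrays;

// Sketch: pre-aggregating sums is safe, pre-aggregating averages is not.
class CombinerSketch {

    // Sum of the per-part sums, as a sum combiner followed by a reducer would compute it.
    static int sumOfSums(int[]... parts) {
        int total = 0;
        for (int[] part : parts) {
            total += Arrays.stream(part).sum();
        }
        return total;
    }

    // Average over all values, which is the correct result.
    static double trueAverage(int[]... parts) {
        int count = 0;
        for (int[] part : parts) {
            count += part.length;
        }
        return (double) sumOfSums(parts) / count;
    }

    // Average of per-part averages, which is what a naive averaging combiner would yield.
    static double averageOfAverages(int[]... parts) {
        double total = 0;
        for (int[] part : parts) {
            total += Arrays.stream(part).average().orElse(0);
        }
        return total / parts.length;
    }

    public static void main(String[] args) {
        int[] mapTask1 = {3, 5, 7};
        int[] mapTask2 = {2, 6};
        System.out.println("sum via partial sums: " + sumOfSums(mapTask1, mapTask2));
        System.out.println("true average:         " + trueAverage(mapTask1, mapTask2));
        System.out.println("average of averages:  " + averageOfAverages(mapTask1, mapTask2));
    }
}
```

Word counts behave like the sum case, which is why the word-count reducer can double as the combiner. An average job would have to carry a (sum, count) pair through the combiner instead.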

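3. Implementation:

- Custom combiner class

In fact, the reducer class from the word-count job can be used directly as the combiner class.

- Add the following to the main method

job.setCombinerClass(MyReducer.class);

Run the program and compare the console output with and without the combiner.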

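V. Custom Grouping

Key class: GroupingComparator

- On the reduce side, it decides which records form one group, that is, one call of the reduce logic.

- By default, key/value pairs with the same key form one group, and the reduce method is called once per group.

- A custom GroupingComparator lets you define your own grouping logic.

1. Custom WritableComparator class

- (1) Extend WritableComparator

- (2) Override the compare() method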

@Override

public int compare(WritableComparable a, WritableComparable b) {

// business logic of the comparison

return result;

}

2. Requirement

We have the following order data

| Order ID | Product ID | Amount |

|---|---|---|

| Order_0000001 | Pdt_01 | 222.8 |

| Order_0000001 | Pdt_05 | 25.8 |

| Order_0000002 | Pdt_03 | 522.8 |

| Order_0000002 | Pdt_04 | 122.4 |

| Order_0000002 | Pdt_05 | 722.4 |

| Order_0000003 | Pdt_01 | 222.8 |

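We need to find the highest-priced item in each order.

Following the 8 steps of MR programming, the code to write is:

- 1. Design a JavaBean for the input data that sorts records of the same order by amount in descending order

- 2. Custom Mapper logic (step 2)

- 3. Custom partitioner that sends records of the same order to the same partition (step 3)

- 4. Custom sort within a partition (already done in the JavaBean) (step 4)

- 5. Custom grouping so that records of the same order form one group (step 6)

- 6. Custom Reducer logic (step 7)

- 7. The main program entry point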
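The idea behind these steps (sort by order ID, then by amount descending, then treat each run of equal order IDs as one group and keep its first record) can be simulated in plain Java. This is a minimal sketch with no Hadoop dependency; GroupTopOneSketch and Order are hypothetical names, and the amounts are taken from the order table above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Sketch: sort, then group consecutive records by order ID and keep the first of each group.
class GroupTopOneSketch {

    static final class Order {
        final String orderId;
        final double price;

        Order(String orderId, double price) {
            this.orderId = orderId;
            this.price = price;
        }
    }

    static List<Order> topOnePerOrder(List<Order> input) {
        List<Order> sorted = new ArrayList<>(input);
        // Same rule as the key comparison: order ID ascending, amount descending.
        sorted.sort(Comparator.comparing((Order o) -> o.orderId)
                .thenComparing(Comparator.comparingDouble((Order o) -> o.price).reversed()));
        List<Order> result = new ArrayList<>();
        String currentGroup = null;
        for (Order o : sorted) {
            // A new group starts whenever the order ID changes.
            if (!o.orderId.equals(currentGroup)) {
                result.add(o);
                currentGroup = o.orderId;
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Order> orders = Arrays.asList(
                new Order("Order_0000001", 222.8),
                new Order("Order_0000001", 25.8),
                new Order("Order_0000002", 522.8),
                new Order("Order_0000002", 122.4),
                new Order("Order_0000002", 722.4),
                new Order("Order_0000003", 222.8));
        for (Order o : topOnePerOrder(orders)) {
            System.out.println(o.orderId + "\t" + o.price);
        }
    }
}
```

In the MapReduce version the sort is done by the key's compareTo, the "order ID changes" test is done by the grouping comparator, and "keep the first record" is the single write in the reducer.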

3. Custom OrderBean class

package xsluo.group;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class OrderBean implements WritableComparable<OrderBean> {

private String orderId;

private Double price;

/**

* Comparison rule between keys

*

* @param o

* @return

*/

@Override

public int compareTo(OrderBean o) {

// Note: amounts of different orders are not compared with each other

int orderIdCompare = this.orderId.compareTo(o.orderId);

if (orderIdCompare == 0){

// Same order: compare amounts and sort them in descending order

int priceCompare = this.price.compareTo(o.price);

return -priceCompare;

}else {

// Different order IDs: just return the ascending order of the order IDs

return orderIdCompare;

}

}

/**

* Serialization method

*

* @param dataOutput

* @throws IOException

*/

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeUTF(orderId);

dataOutput.writeDouble(price);

}

/**

* Deserialization method

*

* @param dataInput

* @throws IOException

*/

@Override

public void readFields(DataInput dataInput) throws IOException {

this.orderId = dataInput.readUTF();

this.price = dataInput.readDouble();

}

@Override

public String toString() {

return "OrderBean{" +

"orderId='" + orderId + '\'' +

", price=" + price +

'}';

}

public String getOrderId() {

return orderId;

}

public void setOrderId(String orderId) {

this.orderId = orderId;

}

public Double getPrice() {

return price;

}

public void setPrice(Double price) {

this.price = price;

}

}

4. Custom mapper class

package xsluo.group;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class GroupMapper extends Mapper<LongWritable, Text,OrderBean, NullWritable> {

/**

* Order_0000001 Pdt_01 222.8

* Order_0000001 Pdt_05 25.8

* Order_0000002 Pdt_03 322.8

* Order_0000002 Pdt_04 522.4

* Order_0000002 Pdt_05 822.4

* Order_0000003 Pdt_01 222.8

* Order_0000003 Pdt_03 322.8

* Order_0000003 Pdt_04 522.4

*

* @param key

* @param value

* @param context

* @throws IOException

* @throws InterruptedException

*/

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String[] fields = value.toString().split("\t");

//Order_0000003 Pdt_04 522.4

OrderBean orderBean = new OrderBean();

orderBean.setOrderId(fields[0]);

orderBean.setPrice(Double.parseDouble(fields[2]));

// emit the orderBean

context.write(orderBean,NullWritable.get());

}

}

5. Custom partitioner class

package xsluo.group;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Partitioner;

public class GroupPartitioner extends Partitioner<OrderBean, NullWritable> {

@Override

public int getPartition(OrderBean orderBean, NullWritable nullWritable, int numPartitions) {

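// Send all records of one order to the same reduce task

// Mask the sign bit first: hashCode() can be negative, which would yield an illegal negative partition

return (orderBean.getOrderId().hashCode() & Integer.MAX_VALUE) % numPartitions;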

}

}

6. Custom grouping class

package xsluo.group;

import org.apache.hadoop.io.WritableComparable;

import org.apache.hadoop.io.WritableComparator;

// Custom grouping class

public class MyGroup extends WritableComparator {

public MyGroup() {

// Grouping comparator: groups keys of type OrderBean

super(OrderBean.class,true);

}

@Override

public int compare(WritableComparable a, WritableComparable b) {

OrderBean a1 = (OrderBean)a;

OrderBean b1 = (OrderBean)b;

// Key/value pairs of the same order must form one group

return a1.getOrderId().compareTo(b1.getOrderId());

}

}

7. Custom reducer class

package xsluo.group;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class GroupReducer extends Reducer<OrderBean, NullWritable, OrderBean, NullWritable> {

/**

* Order_0000002 Pdt_03 322.8

* Order_0000002 Pdt_04 522.4

* Order_0000002 Pdt_05 822.4

* => this group contains 3 key/value pairs

* and they arrive sorted

* Order_0000002 Pdt_05 822.4

* Order_0000002 Pdt_04 522.4

* Order_0000002 Pdt_03 322.8

*

* @param key

* @param values

* @param context

* @throws IOException

* @throws InterruptedException

*/

@Override

protected void reduce(OrderBean key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {

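// Order_0000002 Pdt_05 822.4: the first key of the group is the highest-priced item of the current order

// top1

context.write(key, NullWritable.get());

// top2

// The loop below does not give the right result; it only writes the same key twice, producing:

/*

*

Order_0000001 222.8

Order_0000001 222.8

Order_0000002 822.4

Order_0000002 822.4

Order_0000003 222.8

Order_0000003 222.8

* */

// for(int i = 0; i < 2; i++){

// context.write(key, NullWritable.get());

// }

// The correct approach (iterating over values also advances key to the next record of the group):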

// int num = 0;

// for(NullWritable value: values) {

// context.write(key, value);

// num++;

// if(num == 2)

// break;

// }

}

}

8. Program entry class

package xsluo.group;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

/**

* Top 1 per group

*/

public class GroupMain extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

// Get the job object

Job job = Job.getInstance(super.getConf(), "group");

job.setJarByClass(GroupMain.class);

// Step 1: read the file and parse it into key/value pairs

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job, new Path(args[0]));

// Step 2: custom map logic

job.setMapperClass(GroupMapper.class);

job.setMapOutputKeyClass(OrderBean.class);

job.setMapOutputValueClass(NullWritable.class);

// Step 3: partitioning

job.setPartitionerClass(GroupPartitioner.class);

// Step 4: sorting, already handled by OrderBean.compareTo

// Step 5: combiner, omitted

// Step 6: grouping with custom grouping logic

job.setGroupingComparatorClass(MyGroup.class);

// Step 7: reduce logic

job.setReducerClass(GroupReducer.class);

job.setOutputKeyClass(OrderBean.class);

job.setOutputValueClass(NullWritable.class);

// Step 8: output format and output path

job.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(job, new Path(args[1]));

// With more than one reduce task, package the job and run it on the cluster

// The default number of reduce tasks in MR is 1

// job.setNumReduceTasks(1);

//job.setNumReduceTasks(3);

boolean b = job.waitForCompletion(true);

return b ? 0 : 1;

}

public static void main(String[] args) throws Exception {

int run = ToolRunner.run(new Configuration(), new GroupMain(), args);

System.exit(run);

}

}

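VI. Custom OutputFormat

1. Requirement

We have some order review data. The requirement is to separate positive reviews from the other reviews (neutral and negative) and write the final data into different folders. See the course materials folder for the data. The rating field, described as the ninth field of the data and read by the code below as index 9 of the tab-separated line, marks a review as 0: positive, 1: neutral, 2: negative.

2. Analysis

The key point is that a single MapReduce program must write two kinds of results to different directories depending on the data. This kind of flexible output requirement can be met with a custom OutputFormat.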
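The routing decision at the heart of such an output format can be sketched without Hadoop. In the plain-Java sketch below, CommentRouterSketch is a hypothetical class whose write method plays the role of a RecordWriter's write(), two in-memory lists stand in for the two output files, and the field position (index 9) and sample lines are assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch: route each record to one of two sinks based on its rating field.
class CommentRouterSketch {
    // Assumed position of the rating in the tab-separated line.
    static final int RATING_INDEX = 9;

    final List<String> good = new ArrayList<>();
    final List<String> other = new ArrayList<>();

    // Plays the role of RecordWriter.write(): pick the sink per record.
    void write(String line) {
        String[] fields = line.split("\t");
        if (fields.length > RATING_INDEX && "0".equals(fields[RATING_INDEX])) {
            good.add(line);   // positive review
        } else {
            other.add(line);  // neutral, negative, or malformed line
        }
    }

    // Builds a made-up line with nine filler fields followed by the rating.
    static String sampleLine(String rating) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < RATING_INDEX; i++) {
            sb.append("f").append(i).append('\t');
        }
        return sb.append(rating).toString();
    }

    public static void main(String[] args) {
        CommentRouterSketch router = new CommentRouterSketch();
        router.write(sampleLine("0"));
        router.write(sampleLine("1"));
        router.write(sampleLine("2"));
        System.out.println("good: " + router.good.size() + ", other: " + router.other.size());
    }
}
```

The length check is a design choice of this sketch: a short or malformed line is routed to the second sink instead of failing the whole task with an index error.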

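3. Implementation

Key points:

- Accessing external resources from within MapReduce

- Write a custom OutputFormat, replace its RecordWriter, and rewrite write(), the method that actually outputs the data

Step 1: Custom OutputFormat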

package xsluo.outputformat;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.RecordWriter;

import org.apache.hadoop.mapreduce.TaskAttemptContext;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

// The type parameters are the output key and value types

public class MyOutputFormat extends FileOutputFormat<Text, NullWritable> {

@Override

public RecordWriter<Text, NullWritable> getRecordWriter(TaskAttemptContext job) throws IOException, InterruptedException {

FileSystem fileSystem = FileSystem.get(job.getConfiguration());

Path goodComment = new Path("F:\\testDatas\\good\\1.txt");

Path badComment = new Path("F:\\testDatas\\bad\\1.txt");

FSDataOutputStream goodOutputStream = fileSystem.create(goodComment);

FSDataOutputStream badOutputStream = fileSystem.create(badComment);

return new MyRecordWriter(goodOutputStream,badOutputStream);

}

static class MyRecordWriter extends RecordWriter<Text, NullWritable>{

FSDataOutputStream goodStream = null;

FSDataOutputStream badStream = null;

public MyRecordWriter(FSDataOutputStream goodStream, FSDataOutputStream badStream) {

this.goodStream = goodStream;

this.badStream = badStream;

}

@Override

public void write(Text key, NullWritable value) throws IOException, InterruptedException {

if (key.toString().split("\t")[9].equals("0")){// positive review

goodStream.write(key.toString().getBytes());

goodStream.write("\r\n".getBytes());

}else {// neutral or negative review

badStream.write(key.toString().getBytes());

badStream.write("\r\n".getBytes());

}

}

// Release resources

@Override

public void close(TaskAttemptContext context) throws IOException, InterruptedException {

if (badStream != null) {

badStream.close();

}

if (goodStream != null) {

goodStream.close();

}

}

}

}

Step 2: Write the MapReduce job

package xsluo.outputformat;


import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

import java.io.IOException;

public class MyOwnOutputFormatMain extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration conf = super.getConf();

Job job = Job.getInstance(conf, MyOwnOutputFormatMain.class.getSimpleName());

job.setJarByClass(MyOwnOutputFormatMain.class);

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job, new Path(args[0]));

job.setMapperClass(MyOwnMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(NullWritable.class);

// Use the logic of the default Reducer class

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

job.setOutputFormatClass(MyOutputFormat.class);

// Set an output directory; the success marker file is written here

MyOutputFormat.setOutputPath(job, new Path(args[1]));

// Try different values and observe the effect

job.setNumReduceTasks(2);

boolean b = job.waitForCompletion(true);

return b ? 0 : 1;

}

// Output key: the whole input line; the rating (0, 1 or 2) is read from it later by the RecordWriter

public static class MyOwnMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// The rating field is not extracted here; the whole line is passed through as the key

context.write(value, NullWritable.get());

}

}

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration();

System.exit(ToolRunner.run(configuration, new MyOwnOutputFormatMain(), args));

}

}
