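```python
# Import the requests and BeautifulSoup modules
import requests
from bs4 import BeautifulSoup

# The Lianjia website (Guangzhou rental listings)
url = 'https://gz.lianjia.com/zufang/'


def get_page(url):
    # Send a request to the Lianjia site and get the response
    # (timeout added so a stalled connection cannot hang the crawler forever)
    response = requests.get(url, timeout=10)
    # Parse the returned HTML with the lxml parser (the lxml package must be installed)
    soup = BeautifulSoup(response.text, 'lxml')
    return soup
```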

Inspecting the page shows that the information we need sits in `<a class="…"…>` tags, so next we extract each tag's `href`.

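```python
def get_links(url):
    soup = get_page(url)
    # find_all('a', class_="content__list--item--aside") returns every <a> tag with this class
    links_a = soup.find_all('a', class_="content__list--item--aside")
    # Read each href with .get() and build the list with a list comprehension.
    # The href lacks the 'https://gz.lianjia.com' prefix, so we prepend it ourselves;
    # tags without an href are skipped to avoid concatenating None.
    links = ['https://gz.lianjia.com' + a.get('href') for a in links_a if a.get('href')]
    return links
```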
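Prepending the domain by hand works only as long as every `href` is a root-relative path such as `/zufang/...`. A hedged alternative is the standard library's `urllib.parse.urljoin`, which resolves an `href` against the page it came from and leaves already-absolute URLs untouched. `make_absolute` and `BASE_URL` below are hypothetical names, not part of the original code, and the sample hrefs are made up apart from the listing URL used later in this post.

```python
from urllib.parse import urljoin

# Hypothetical constant: the listing page the hrefs were scraped from
BASE_URL = 'https://gz.lianjia.com/zufang/'


def make_absolute(hrefs, base=BASE_URL):
    """Hypothetical helper: resolve each href against the listing page URL.

    Root-relative paths ('/zufang/...') gain the scheme and host, relative
    paths are resolved under /zufang/, absolute URLs pass through unchanged,
    and empty or missing hrefs are skipped.
    """
    return [urljoin(base, href) for href in hrefs if href]


if __name__ == '__main__':
    # Made-up hrefs covering the root-relative, relative, absolute and missing cases
    sample = ['/zufang/GZ2397925687354212352.html', 'GZ123.html',
              'https://gz.lianjia.com/zufang/GZ456.html', None]
    for link in make_absolute(sample):
        print(link)
```

Inside `get_links`, this would amount to `make_absolute(a.get('href') for a in links_a)`.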

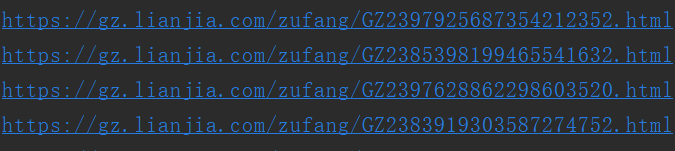

This yields a list, `links`, holding the URLs of the rental listings we scraped.

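```python
# Try a single rental listing URL first; delete this house_url = ... line for the final run
house_url = 'https://gz.lianjia.com/zufang/GZ2397925687354212352.html'


def get_house_information(house_url):
    soup = get_page(house_url)
    # Price
    price = soup.find('li', class_="table_col font_orange").text
    # unit holds the area, floor, orientation, parking and other details;
    # simple string slicing pulls out the area and the floor
    unit = soup.find_all('li', class_="fl oneline")
    area = unit[1].text[3:]
    floor = unit[7].text
    # Store the fields in a dict (keys: price, area, floor);
    # insert() below also uses these keys as the MySQL column names
    house = {
        '价格': price,
        '面积': area,
        '楼层': floor,
    }
    return house
```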
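The slices `unit[1].text[3:]` and `unit[7].text` depend on fixed positions: they assume the area is always the second `<li>`, the floor always the eighth, and that the area text starts with a three-character label. If the page layout shifts, the wrong value comes back silently. A sketch under the assumption that each `<li>` text has the form `label：value` (the `[3:]` slice suggests a label such as `面积：`, but the exact page text is not shown here) splits on the colon and looks fields up by label instead of by position. `parse_fields` and the sample strings are hypothetical, not taken from a real page.

```python
def parse_fields(texts):
    """Hypothetical helper: turn ['label：value', ...] into {label: value}.

    Assumes each text is a label, a colon, then a value; both the full-width
    '：' and the ASCII ':' are accepted, and only the first colon splits.
    Texts without any colon are skipped.
    """
    fields = {}
    for text in texts:
        text = text.strip()
        for sep in ('：', ':'):
            if sep in text:
                label, value = text.split(sep, 1)
                fields[label.strip()] = value.strip()
                break
    return fields


if __name__ == '__main__':
    # Made-up texts standing in for [li.text for li in unit]
    sample = ['面积：75㎡', '朝向：南', '楼层：中楼层/18层']
    fields = parse_fields(sample)
    print(fields.get('面积'), fields.get('楼层'))
```

In `get_house_information` this would mean `fields = parse_fields(li.text for li in unit)` followed by `fields.get('面积')` and `fields.get('楼层')`, which return `None` rather than a wrong value when a label is missing.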

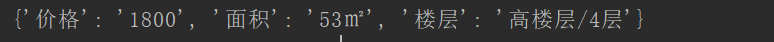

The resulting `house` dictionary

Connect to the database and store the `house` dictionary in it:

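```python
import pymysql

# Connect to the local MySQL database `ckw`
db = pymysql.connect(host='localhost', user='root', password='root',
                     database='ckw', port=3306)


def insert(db, house):
    # Column list built from the dict keys, e.g. `价格`, `面积`, `楼层`
    cols = ", ".join('`{}`'.format(k) for k in house.keys())
    # Matching named placeholders, e.g. %(价格)s, %(面积)s, %(楼层)s
    val_cols = ', '.join('%({})s'.format(k) for k in house.keys())
    sql = "insert into house(%s) values(%s)"
    res_sql = sql % (cols, val_cols)
    # pymysql fills the named placeholders from the dict and escapes the values
    with db.cursor() as cursor:
        cursor.execute(res_sql, house)
    db.commit()
```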
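To see exactly what `insert` sends, the statement-building step can be pulled out and run without a database. `build_insert_sql` is a hypothetical helper that repeats the same string formatting as `insert`, and the sample values are made up. Only the column names are spliced in by string formatting, so they must come from trusted keys (here the fixed dict keys, which must match the columns of the existing `house` table); the values travel separately as named `%(key)s` parameters, which pymysql escapes when `cursor.execute(res_sql, house)` runs.

```python
def build_insert_sql(house, table='house'):
    """Hypothetical helper: reproduce the SQL string that insert() builds.

    Column names are wrapped in backticks; each value becomes a pymysql-style
    named placeholder %(key)s, to be filled from the dict by cursor.execute().
    """
    cols = ", ".join('`{}`'.format(k) for k in house.keys())
    val_cols = ', '.join('%({})s'.format(k) for k in house.keys())
    return "insert into {}({}) values({})".format(table, cols, val_cols)


if __name__ == '__main__':
    house = {'价格': '3000', '面积': '75㎡', '楼层': '中楼层/18层'}  # made-up values
    print(build_insert_sql(house))
    # insert into house(`价格`, `面积`, `楼层`) values(%(价格)s, %(面积)s, %(楼层)s)
```

Because the values never appear in the SQL text itself, a price or floor string containing quotes cannot break the statement.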

Run the code to start the crawler:

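```python
import time

url = 'https://gz.lianjia.com/zufang/'
links = get_links(url)
for link in links:
    # Pause 3 seconds between requests so we do not hammer the site
    time.sleep(3)
    house = get_house_information(link)
    insert(db, house)

# Close the database connection when the crawl is finished
db.close()
```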
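As written, one failed request or one listing page with a different layout (for example an `IndexError` from `unit[7]`, or an `AttributeError` when `soup.find` returns `None`) stops the whole run. Below is a hedged sketch of a more forgiving loop that keeps going and records the failures. `crawl` and its parameters are hypothetical. Passing `fetch`, `save` and `sleep` in as arguments lets the loop be exercised with fakes, without touching the network or MySQL.

```python
import time


def crawl(links, fetch, save, delay=3.0, sleep=time.sleep):
    """Hypothetical driver loop: fetch and save each link, pausing between requests.

    fetch(link) -> dict and save(house) stand in for get_house_information and
    insert(db, house). Exceptions from either are caught per link, so one bad
    page does not abort the run. Returns (saved_count, failed_links), where
    failed_links is a list of (link, repr(exception)) pairs.
    """
    saved = 0
    failed = []
    for link in links:
        sleep(delay)  # be polite: wait before every request
        try:
            house = fetch(link)
            save(house)
        except Exception as exc:  # broad on purpose: log the failure and move on
            failed.append((link, repr(exc)))
        else:
            saved += 1
    return saved, failed
```

With the functions above, the call would be `crawl(get_links(url), get_house_information, lambda house: insert(db, house))`, after which `failed` lists the pages worth re-checking by hand.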
