Import the required libraries

import jieba

import numpy as np

from PIL import Image

from matplotlib import pyplot as plt

from wordcloud import WordCloud, ImageColorGenerator, STOPWORDS

Open the file and read its contents

with open('2.txt', encoding="utf-8") as f:

    textfile = f.read()

Segment the text with jieba

wordlist1=jieba.cut(textfile,cut_all=True)

space=" ".join(wordlist1)
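As far as I know, the `STOPWORDS` set shipped with wordcloud is an English list, so it will not remove common Chinese function words. Full mode (`cut_all=True`) may also yield blank or single-character tokens. A minimal sketch of filtering the tokens before joining them is shown below. The helper `clean_tokens`, the sample tokens, and the tiny stopword set are all hypothetical and only illustrate the idea. In the article the tokens would come from `jieba.cut(textfile, cut_all=True)`.

```python
def clean_tokens(tokens, stopwords, min_len=2):
    """Drop blank tokens, stopwords, and tokens shorter than min_len."""
    kept = []
    for tok in tokens:
        tok = tok.strip()
        if len(tok) >= min_len and tok not in stopwords:
            kept.append(tok)
    return kept


# Hypothetical stand-in for the output of jieba.cut(textfile, cut_all=True)
sample_tokens = ["我们", "的", "", "词云", " ", "我们", "，", "图片"]

# Illustrative stopword set only; a real project would load a fuller list
cn_stopwords = {"的", "了", "我们"}

space = " ".join(clean_tokens(sample_tokens, cn_stopwords))
print(space)  # 词云 图片
```

The resulting `space` string can be passed to `generate()` in the same way as the unfiltered one.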

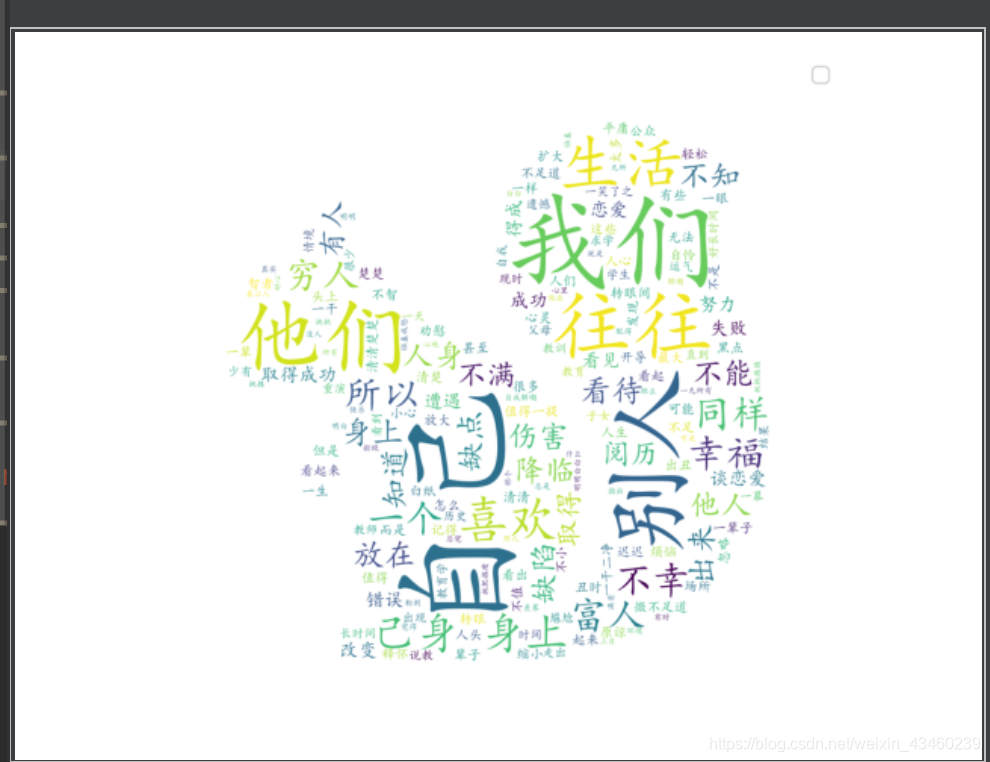

Full source code

import jieba

import numpy as np

from matplotlib import pyplot as plt

from wordcloud import WordCloud, ImageColorGenerator, STOPWORDS

from PIL import Image

with open('2.txt', encoding="utf-8") as f:

    textfile = f.read()

wordlist1 = jieba.cut(textfile, cut_all=True)  # full mode: emit every candidate word

space = " ".join(wordlist1)  # WordCloud expects space-separated words

background = np.array(Image.open('4.jpg'))  # mask image that shapes the cloud

mywordclound = WordCloud(background_color='white',

    max_words=300,

    stopwords=STOPWORDS,

    max_font_size=100,

    font_path="simkai.ttf",  # a font with Chinese glyphs is required

    random_state=50,

    scale=2,

    mask=background).generate(space)

img = ImageColorGenerator(background)  # colors sampled from the mask; only applied if passed to recolor(color_func=img)

mywordclound.to_file('save1.jpg')

plt.imshow(mywordclound)

plt.axis('off')

plt.show()

Original photo

Word cloud
