Reference

https://www.jianshu.com/p/0c725519bbc5

- Install the ClickHouse operator (chi-operator)

kubectl apply -f https://github.com/radondb/radondb-clickhouse-kubernetes/clickhouse-operator-install-bundle.yaml

Prefer this one:

kubectl apply -f https://raw.githubusercontent.com/Altinity/clickhouse-operator/master/deploy/operator/clickhouse-operator-install-bundle.yaml

If https://raw.githubusercontent.com is not reachable, see:

https://blog.csdn.net/u011046452/article/details/113574352

Or clone https://github.com/xdqt/clickhouse-operator directly.

- Deploy the cluster

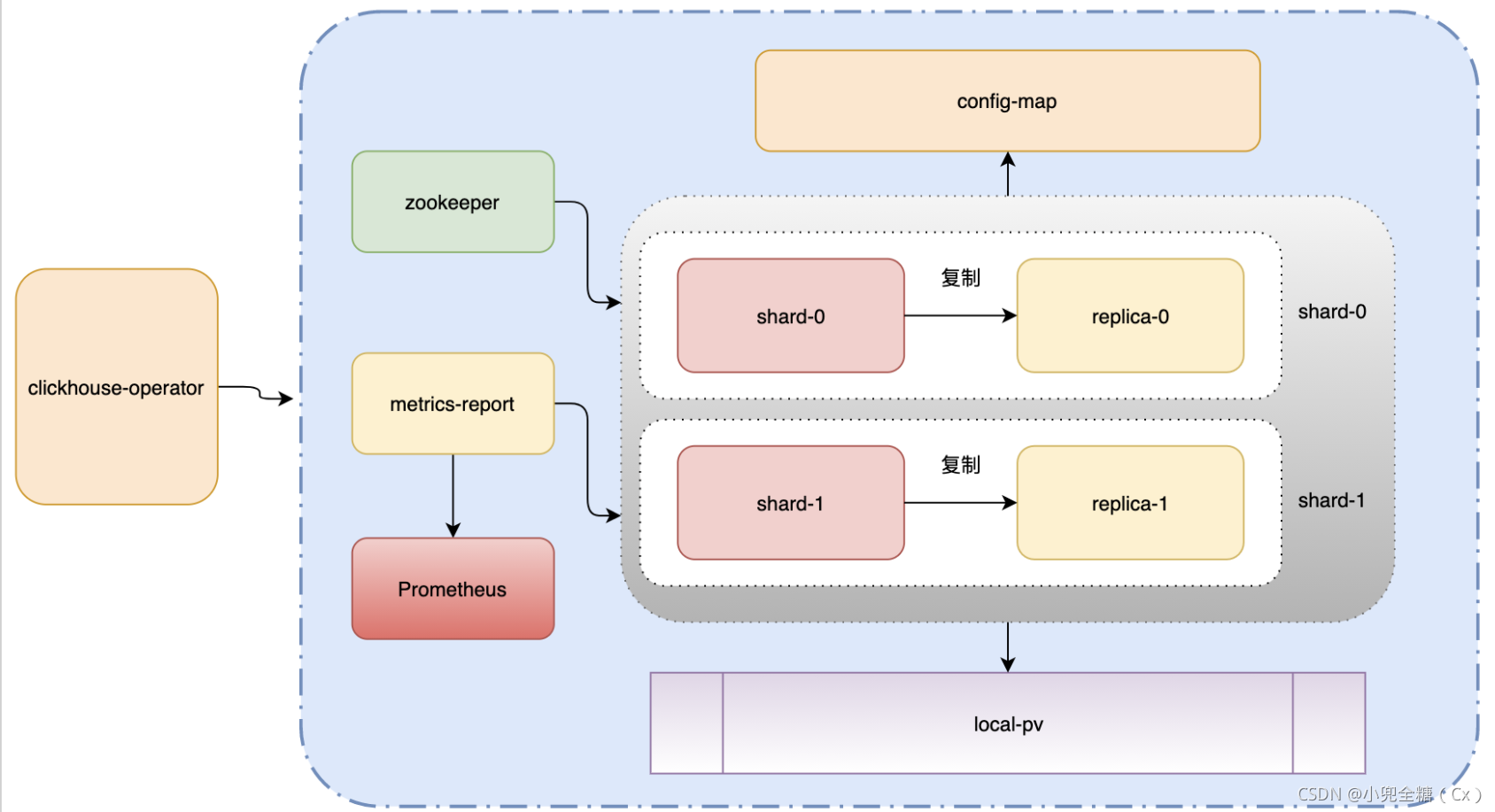

As the figure shows, we will deploy a cluster with 2 shards and 2 replicas, which requires four pods. Each pod stores its data on a local PV, so four machines are needed.
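The pod count is simply shards times replicas. As a rough sketch, the loop below lists the pod names one would expect for this layout. It assumes the operator's usual chi-<installation>-<cluster>-<shard>-<replica>-0 naming and the names aibee and clickhouse used in the manifest later in this post; check the result against `kubectl get pods` on your own cluster.

```shell
# Assumed values, taken from the ClickHouseInstallation manifest in this post.
chi_name="aibee"
cluster_name="clickhouse"
shards=2
replicas=2

# Print one expected pod name per shard/replica pair.
# The naming pattern is an assumption about the operator's defaults.
expected_pods() {
  s=0
  while [ "$s" -lt "$shards" ]; do
    r=0
    while [ "$r" -lt "$replicas" ]; do
      echo "chi-${chi_name}-${cluster_name}-${s}-${r}-0"
      r=$((r + 1))
    done
    s=$((s + 1))
  done
}

expected_pods
echo "total pods: $((shards * replicas))"
```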

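The manifest below has two parts:

The deployment YAML for the local PVs. Note that it pins four specific machines.

The deployment YAML for the CHI (ClickHouseInstallation).

For installing ZooKeeper, see: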

https://docs.altinity.com/clickhouseonkubernetes/kubernetesquickstartguide/quickzookeeper/

https://github.com/Altinity/clickhouse-operator/blob/master/docs/zookeeper_setup.md#explore-zookeeper-cluster

sudo kubectl create namespace zoo1ns

sudo kubectl apply -f deploy/zookeeper/zookeeper-1-node.yaml -n zoo1ns

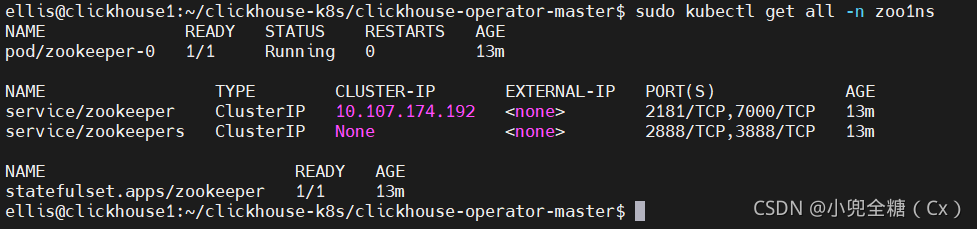

Check ZooKeeper:

sudo kubectl get all -n zoo1ns

In the YAML below, change the ZooKeeper host zookeeper.zoo1ns to match your setup; zoo1ns is the namespace.
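The host value follows Kubernetes service DNS naming, <service>.<namespace>. A minimal sketch of how the name is composed, assuming the service created by the ZooKeeper manifest is called zookeeper and the cluster uses the default cluster.local domain (both are assumptions; confirm with `kubectl get svc -n zoo1ns`):

```shell
# Assumed names: service "zookeeper" in namespace "zoo1ns".
zk_service="zookeeper"
zk_namespace="zoo1ns"

# Short form, resolvable from pods in other namespaces of the same cluster.
zk_host() {
  echo "${zk_service}.${zk_namespace}"
}

# Fully qualified form, assuming the default cluster domain.
zk_fqdn() {
  echo "$(zk_host).svc.cluster.local"
}

zk_host
zk_fqdn
```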

Note: replace clickhouse1/2/3/4 with the hostnames of the nodes in your own cluster.

https://stackoverflow.com/questions/55112404/local-persistent-volume-1-nodes-didnt-find-available-persistent-volumes-to-bi

    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: clickhouse-local-volume
    provisioner: kubernetes.io/no-provisioner
    volumeBindingMode: WaitForFirstConsumer
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv-clickhouse-0
    spec:
      capacity:
        storage: 100Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      storageClassName: clickhouse-local-volume
      hostPath:
        path: /mnt/data/clickhouse
        type: DirectoryOrCreate
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: kubernetes.io/hostname
                  operator: In
                  values:
                    - "clickhouse1"
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv-clickhouse-1
    spec:
      capacity:
        storage: 100Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      storageClassName: clickhouse-local-volume
      hostPath:
        path: /mnt/data/clickhouse
        type: DirectoryOrCreate
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: kubernetes.io/hostname
                  operator: In
                  values:
                    - "clickhouse2"
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv-clickhouse-2
    spec:
      capacity:
        storage: 100Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      storageClassName: clickhouse-local-volume
      hostPath:
        path: /mnt/data/clickhouse
        type: DirectoryOrCreate
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: kubernetes.io/hostname
                  operator: In
                  values:
                    - "clickhouse3"
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv-clickhouse-3
    spec:
      capacity:
        storage: 100Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      storageClassName: clickhouse-local-volume
      hostPath:
        path: /mnt/data/clickhouse
        type: DirectoryOrCreate
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: kubernetes.io/hostname
                  operator: In
                  values:
                    - "clickhouse4"
    ---

    apiVersion: "clickhouse.altinity.com/v1"
    kind: "ClickHouseInstallation"
    metadata:
      name: "aibee"
    spec:
      defaults:
        templates:
          serviceTemplate: service-template
          podTemplate: pod-template
          dataVolumeClaimTemplate: volume-claim
      configuration:
        settings:
          compression/case/method: zstd
          disable_internal_dns_cache: 1
          timezone: Asia/Shanghai
        zookeeper:
          nodes:
            - host: zookeeper.zoo1ns  # replace with your ZooKeeper host
              port: 2181
          session_timeout_ms: 30000
          operation_timeout_ms: 10000
        clusters:
          - name: "clickhouse"
            layout:
              shardsCount: 2
              replicasCount: 2
      templates:
        serviceTemplates:
          - name: service-template
            spec:
              ports:
                - name: http
                  port: 8123
                - name: tcp
                  port: 9000
              type: LoadBalancer
        podTemplates:
          - name: pod-template
            spec:
              containers:
                - name: clickhouse
                  imagePullPolicy: Always
                  image: yandex/clickhouse-server:latest
                  volumeMounts:
                    # mount path for data files
                    - name: volume-claim
                      mountPath: /var/lib/clickhouse
                    # mount path for log files
                    - name: volume-claim
                      mountPath: /var/log/clickhouse-server
                  resources:
                    # CPU and memory sizing
                    limits:
                      memory: "1Gi"
                      cpu: "1"
                    requests:
                      memory: "1Gi"
                      cpu: "1"
        volumeClaimTemplates:
          - name: volume-claim
            reclaimPolicy: Retain
            spec:
              storageClassName: "clickhouse-local-volume"
              accessModes:
                - ReadWriteOnce
              resources:
                # storage size of the PV
                requests:
                  storage: 100Gi
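The four PersistentVolume manifests above differ only in their name and node hostname, so they can be generated rather than copied by hand. The following is a sketch under that assumption, not part of the original setup; the `nodes` list and the `render_pvs` helper are hypothetical names, and the hostnames are the placeholders from this post.

```shell
# Hypothetical node hostnames; replace with the names from `kubectl get nodes`.
nodes="clickhouse1 clickhouse2 clickhouse3 clickhouse4"

# Emit one PersistentVolume manifest per node, each preceded by `---`.
render_pvs() {
  i=0
  for node in $nodes; do
    cat <<EOF
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-clickhouse-${i}
spec:
  capacity:
    storage: 100Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: clickhouse-local-volume
  hostPath:
    path: /mnt/data/clickhouse
    type: DirectoryOrCreate
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - "${node}"
EOF
    i=$((i + 1))
  done
}

render_pvs
```

Piping the output into `kubectl apply -f -` should create the volumes; review the generated YAML first.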

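Note: the reclaimPolicy in volumeClaimTemplates must be Retain, so that the data is kept even if the cluster is deleted. Otherwise, deleting the cluster also deletes every table whose name starts with "Replica*". This cost me a lot of time. The source code is as follows: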

- Connect to the cluster

Use the ClusterIP of the svc created above. The default username/password is clickhouse_operator/clickhouse_operator_password.

These credentials are declared in clickhouse-operator-install-bundle.yaml.

clickhouse-client -h 10.100.185.34 -u clickhouse_operator --password clickhouse_operator_password
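Once connected, one way to confirm that the 2-shard, 2-replica layout came up is to query the system.clusters table. The sketch below reuses the IP and credentials from this post (yours will differ); `check_topology` is a hypothetical helper name, and the cluster name clickhouse is taken from the manifest.

```shell
# Values from this post; the IP is the ClusterIP of the ClickHouse service.
ch_host="10.100.185.34"
ch_user="clickhouse_operator"
ch_password="clickhouse_operator_password"

# Lists every shard/replica of the cluster named in the manifest.
topology_query="SELECT shard_num, replica_num, host_name FROM system.clusters WHERE cluster = 'clickhouse'"

# Run the query if clickhouse-client is installed; otherwise print the command.
check_topology() {
  if command -v clickhouse-client >/dev/null 2>&1; then
    clickhouse-client -h "$ch_host" -u "$ch_user" --password "$ch_password" \
      --query "$topology_query"
  else
    echo "clickhouse-client -h $ch_host -u $ch_user --password *** --query \"$topology_query\""
  fi
}
```

Calling `check_topology` should list one row per pod if the layout deployed as intended.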

If mounting /etc/clickhouse-server fails, see:

https://github.com/ClickHouse/ClickHouse/issues/18927
