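I. Deploying HAProxy

| Host | IP | Service |
| --- | --- | --- |
| server1 | 172.25.47.1 | Salt master (control node) |
| server2 | 172.25.47.2 | httpd |
| server3 | 172.25.47.3 | httpd |
| server4 | 172.25.47.4 | haproxy |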

Method 1

1. Deploy httpd

Deploy httpd on server2 and server3.

For the detailed steps, see:

https://blog.csdn.net/weixin_43697701/article/details/90183259

[root@server1 apache]# pwd

/srv/salt/apache

[root@server1 apache]# salt server2 state.sls apache.install

[root@server1 apache]# salt server3 state.sls apache.install

2. Load balancing with HAProxy

1) Create the directory

[root@server1 salt]# pwd

/srv/salt

[root@server1 salt]# mkdir haproxy

[root@server1 salt]# cd haproxy/

2) Write the sls file

[root@server1 haproxy]# vim install.sls

haproxy-install:
  pkg.installed:
    - pkgs:
      - haproxy
  file.managed:
    - name: /etc/haproxy/haproxy.cfg
    - source: salt://haproxy/files/haproxy.cfg
  service.running:
    - name: haproxy
    - reload: True
    - watch:
      - file: haproxy-install

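3) Edit the configuration file

The HAProxy configuration file has to live under the files directory on the master. To obtain it, install haproxy on server4, copy its configuration file to the master, and then uninstall haproxy again.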

[root@server4 ~]# yum install haproxy -y

[root@server1 haproxy]# mkdir files

[root@server1 haproxy]# cd files

[root@server1 files]# pwd

/srv/salt/haproxy/files

[root@server4 haproxy]# scp /etc/haproxy/haproxy.cfg root@172.25.47.1:/srv/salt/haproxy/files

[root@server4 haproxy]# yum remove haproxy -y

[root@server1 files]# ls

haproxy.cfg

[root@server1 files]# vim haproxy.cfg

stats uri /status

frontend main *:80
    default_backend app

backend app
    balance roundrobin
    server app1 172.25.47.2:80 check
    server app2 172.25.47.3:80 check
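To make the effect of `balance roundrobin` concrete, here is a simplified model in shell: request number N goes to backend N modulo the number of backends. This is only a sketch; it ignores server weights and health checks, which the real balancer takes into account, and the function name `pick_backend` is made up for illustration.

```shell
#!/bin/sh
# pick_backend REQUEST_NUMBER BACKEND...
# Prints the backend that a plain round-robin rotation would choose for the
# given zero-based request number. Simplified model: no weights, no checks.
pick_backend() (
    n=$1
    shift
    # At least one backend is required.
    [ "$#" -gt 0 ] || exit 1
    # Skip (n mod number-of-backends) entries; the next one is the choice.
    skip=$((n % $#))
    shift "$skip"
    printf '%s\n' "$1"
)
```

For example, `pick_backend 0 app1 app2` selects app1 and `pick_backend 1 app1 app2` selects app2, after which the rotation starts over.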

4) Check and edit the httpd index pages

(Each host serves a different page here so that the result of the experiment is easy to see.)

[root@server2 salt]# cat /var/www/html/index.html

server2

[root@server3 ~]# cat /var/www/html/index.html

server3

5) Edit the minion file on server4 so that it joins the master

[root@server4 ~]# vim /etc/salt/minion

master: 172.25.47.1

[root@server4 ~]# systemctl start salt-minion

[root@server1 haproxy]# salt-key -L

[root@server1 haproxy]# salt-key -A

6) Push

[root@server1 haproxy]# ls

files install.sls

[root@server1 haproxy]# salt server4 state.sls haproxy.install

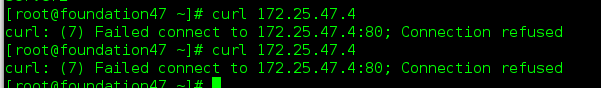

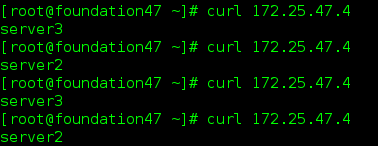

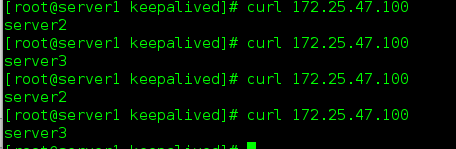
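Before applying a state for real, it can help to preview what it would change. The sketch below wraps Salt's dry-run mode (`test=True`) in a small helper. The function name `preview_state` and the `SALT` override variable are illustrative assumptions, not part of the original setup.

```shell
#!/bin/sh
# SALT is a hypothetical override so the helper can be tried without a
# master (for example SALT=echo); by default it calls the real salt CLI.
SALT=${SALT:-salt}

# preview_state TARGET STATE
# Runs the state in dry-run mode: Salt reports what it would change on the
# target without actually changing anything.
preview_state() {
    $SALT "$1" state.sls "$2" test=True
}
```

On the master, `preview_state server4 haproxy.install` would show the pending changes for server4.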

7) Test
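One way to check the load balancer from the command line is to request the front end several times and count which backend answered. The following is a minimal sketch, assuming curl is installed and that each backend serves a single-word page such as server2 or server3. The function name `tally_backends` and the `FETCH` override are illustrative.

```shell
#!/bin/sh
# FETCH is a hypothetical override hook (default: curl -s) so the loop can
# be tried without a live load balancer.
FETCH=${FETCH:-"curl -s"}

# tally_backends URL [COUNT]
# Requests URL COUNT times (default 6) and prints "page=hits" for each
# distinct single-word response body.
tally_backends() (
    url=$1
    count=${2:-6}
    i=0
    while [ "$i" -lt "$count" ]; do
        # Command substitution trims trailing newlines; printf adds one back.
        printf '%s\n' "$($FETCH "$url")"
        i=$((i + 1))
    done | sort | uniq -c | awk '{ print $2 "=" $1 }'
)
```

Running `tally_backends http://172.25.47.4/ 6` against the setup above should split the hits between server2 and server3 if round-robin is working.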

Method 2

There is another way to do the deployment.

For the installation steps, see this blog post:

https://blog.csdn.net/weixin_43697701/article/details/90183259

Building on that post, a few more files need to be written.

1) Edit the state files

[root@server1 apache]# pwd

/srv/salt/apache

[root@server1 apache]# vim install.sls

apache-install:
  pkg.installed:
    - pkgs:
      - httpd

[root@server1 apache]# vim service.sls

include:
  - apache.install

/etc/httpd/conf/httpd.conf:
  file.managed:
    - source: salt://apache/files/httpd.conf

httpd-service:
  service.running:
    - name: httpd
    - enable: False
    - reload: True
    - watch:
      - file: /etc/httpd/conf/httpd.conf

[root@server1 salt]# cd apache/files/

[root@server1 files]# ls

httpd.conf index.html ## configuration file copied over from another host

2) Write the top.sls file

[root@server1 apache]# cd /srv/salt/

[root@server1 salt]# vim top.sls

base:
  'server4':
    - haproxy.install
  'server2':
    - apache.service
  'server3':
    - apache.service

3) Push (the services are still running from the previous experiment, so stop them first to make the effect visible)

[root@server2 conf]# systemctl stop httpd

[root@server3 ~]# systemctl stop httpd

[root@server4 ~]# systemctl stop haproxy

Push:

[root@server1 salt]# salt '*' state.highstate

4) Test:

View the file tree structure
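If the `tree` command is not installed on the master, a similar outline can be produced with find and sed. This is a minimal stand-in, not the tool the original post used; the function name `show_tree` is illustrative.

```shell
#!/bin/sh
# show_tree DIR
# Prints the contents of DIR as an indented outline, two spaces per level.
show_tree() (
    cd "$1" || exit 1
    # LC_ALL=C gives a stable byte-order sort, which is fine for simple
    # names like the ones used in this walkthrough. The first sed expression
    # strips the leading "./"; the second turns each parent directory
    # component into two spaces of indentation.
    find . -mindepth 1 | LC_ALL=C sort | sed -e 's|^\./||' -e 's|[^/]*/|  |g'
)
```

`show_tree /srv/salt` lists the apache and haproxy directories with their files directories and sls files nested beneath them.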

II. Grains

Grains in SaltStack are static variables that describe a minion. From the master, the values for any minion can be queried through Grains.

1. In the minion configuration file

[root@server2 ~]# vim /etc/salt/minion

grains:
  roles:
    - apache

[root@server2 ~]# systemctl restart salt-minion

[root@server1 salt]# salt server2 grains.item roles

server2:
    ----------
    roles:
        - apache

2. In a new file on the minion
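Static grains can also be kept in a separate file, /etc/salt/grains, on the minion. That file holds the grains as top-level YAML, without the `grains:` key used in /etc/salt/minion. The following is a minimal sketch, assuming the goal is to give server3 the same `apache` role as server2; the helper name `write_roles_grain` is illustrative.

```shell
#!/bin/sh
# write_roles_grain FILE ROLE
# Writes a static grains file that assigns a single role.
# On a real minion FILE would be /etc/salt/grains. The file is overwritten,
# so back up any existing one first.
write_roles_grain() {
    cat > "$1" <<EOF
roles:
  - $2
EOF
}
```

On server3 this would be called as `write_roles_grain /etc/salt/grains apache`. After restarting salt-minion (or running `salt server3 saltutil.sync_grains` on the master), `salt -G 'roles:apache' test.ping` should list every minion that carries the role.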

III. Configuring pillar

1. Edit the master configuration file and create the pillar directory

[root@server1 salt]# vim /etc/salt/master

pillar_roots:
  base:
    - /srv/pillar

[root@server1 salt]# mkdir /srv/pillar

[root@server1 salt]# systemctl restart salt-master

2.

[root@server1 salt]# cd /srv/pillar/

[root@server1 pillar]# mkdir web

[root@server1 pillar]# cd web/

[root@server1 web]# pwd

/srv/pillar/web

[root@server1 web]# salt server2 grains.item fqdn

server2:
    ----------
    fqdn:
        server2

3.

[root@server1 web]# vim apache.sls

{% if grains['fqdn'] == 'server4' %}

package: haproxy

ip: 172.25.47.4

{% elif grains['fqdn'] == 'server3' %}

package: httpd

ip: 172.25.47.3

{% endif %}

[root@server1 web]# cd ..

[root@server1 pillar]# vim top.sls

base:
  'server4':
    - web.apache
  'server3':
    - web.apache

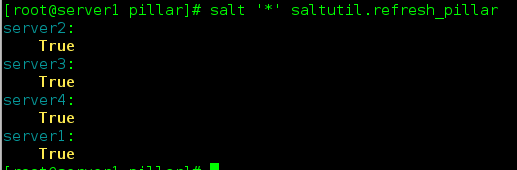

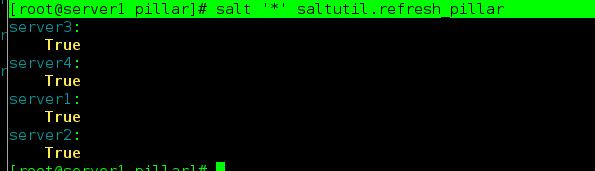

[root@server1 pillar]# salt '*' saltutil.refresh_pillar

[root@server1 pillar]# salt '*' pillar.items

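Note: pillar data is defined on the master and delivered only to the minions it targets; no pillar files are created on the minions themselves.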

IV. Deploying keepalived for high availability

server4 is the MASTER

server1 is the BACKUP

1. Write the install state and install keepalived

[root@server1 salt]# pwd

/srv/salt

[root@server1 salt]# mkdir keepalived

[root@server1 salt]# cd keepalived/

[root@server1 keepalived]# vim install.sls

keepalived:
  pkg.installed

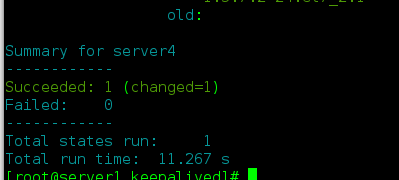

[root@server1 keepalived]# salt server4 state.sls keepalived.install

2. Fetch the configuration file

[root@server1 keepalived]# mkdir files

[root@server1 keepalived]# cd files/

[root@server1 files]# scp server4:/etc/keepalived/keepalived.conf .

[root@server1 files]# cd ..

[root@server1 keepalived]# ls

files install.sls

3. Edit the state files

[root@server1 keepalived]# vim install.sls

keepalived:
  pkg.installed

/etc/keepalived/keepalived.conf:
  file.managed:
    - source: salt://keepalived/files/keepalived.conf

[root@server1 keepalived]# vim service.sls

include:
  - keepalived.install

kp-service:
  service.running:
    - name: keepalived
    - reload: true
    - watch:
      - file: /etc/keepalived/keepalived.conf

[root@server1 keepalived]# cd files/

[root@server1 files]# vim keepalived.conf

! Configuration File for keepalived

global_defs {
    notification_email {
        root@localhost
    }
    notification_email_from keepalived.conf
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id LVS_DEVEL
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 51
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        172.25.47.100
    }
}

[root@server1 files]# cd ..

[root@server1 keepalived]# ls

files install.sls service.sls

[root@server1 keepalived]# salt server4 state.sls keepalived.service

4. Test load balancing

5. Set server1 as BACKUP and keep server4 as MASTER

[root@server1 keepalived]# yum install salt-minion -y

[root@server1 keepalived]# vim /etc/salt/minion

master: 172.25.47.1

[root@server1 keepalived]# systemctl start salt-minion

[root@server1 keepalived]# systemctl enable salt-minion

[root@server1 keepalived]# salt-key -L

[root@server1 keepalived]# salt-key -A

[root@server1 keepalived]# salt server1 test.ping

server1:

True

6. Edit the configuration file under files so that host-specific values are defined as variables

[root@server1 keepalived]# vim files/keepalived.conf

vrrp_instance VI_1 {
    state {{ STATE }}
    interface eth0
    virtual_router_id {{ VRID }}
    priority {{ PRIORITY }}
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        172.25.47.100
    }
}
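To see what the rendered file should look like for a given host without running Salt, the three placeholders can be substituted by hand. The sketch below uses sed as a stand-in; it is not Jinja and only handles these exact `{{ NAME }}` tokens. The function name `render_preview` is illustrative.

```shell
#!/bin/sh
# render_preview STATE VRID PRIORITY < template
# Replaces the three placeholders of the keepalived template on stdin.
# This is plain text substitution with sed, NOT Jinja rendering; it is only
# meant for eyeballing the expected result.
render_preview() {
    sed -e "s/{{ STATE }}/$1/" \
        -e "s/{{ VRID }}/$2/" \
        -e "s/{{ PRIORITY }}/$3/"
}
```

With the values this walkthrough assigns through pillar, `render_preview MASTER 51 100 < files/keepalived.conf` previews the file for server4 and `render_preview BACKUP 51 50 < files/keepalived.conf` previews it for server1.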

[root@server1 keepalived]# cd ..

[root@server1 salt]# cd ..

[root@server1 srv]# cd pillar/

[root@server1 pillar]# vim web/apache.sls

{% if grains['fqdn'] == 'server3' %}

ip: 172.25.47.3

{% elif grains['fqdn'] == 'server2' %}

ip: 172.25.47.2

{% endif %}

[root@server1 pillar]# cd ..

[root@server1 srv]# ls

pillar salt

[root@server1 srv]# cd salt/

[root@server1 salt]# cd keepalived/

[root@server1 keepalived]# vim install.sls

keepalived:
  pkg.installed

/etc/keepalived/keepalived.conf:
  file.managed:
    - source: salt://keepalived/files/keepalived.conf
    - template: jinja
    - context:
        STATE: {{ pillar['state'] }}
        VRID: {{ pillar['vird'] }}
        PRIORITY: {{ pillar['priority'] }}

[root@server1 keepalived]# cd /srv/pillar/

[root@server1 pillar]# mkdir keepalived

[root@server1 pillar]# cp web/apache.sls keepalived/install.sls

[root@server1 pillar]# vim keepalived/install.sls

{% if grains['fqdn'] == 'server4' %}

state: MASTER

vird: 51

priority: 100

{% elif grains['fqdn'] == 'server1' %}

state: BACKUP

vird: 51

priority: 50

{% endif %}

[root@server1 pillar]# vim top.sls

base:
  '*':
    - web.apache
    - keepalived.install

[root@server1 pillar]# salt '*' saltutil.refresh_pillar

[root@server1 pillar]# cd ..

[root@server1 srv]# cd salt/

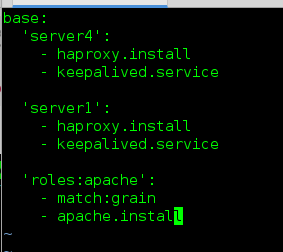

[root@server1 salt]# vim top.sls

base:
  'server4':
    - haproxy.install
    - keepalived.service
  'server1':
    - haproxy.install
    - keepalived.service
  'roles:apache':
    - match: grain
    - apache.install

[root@server1 salt]# salt '*' state.highstate

7. Test

Stop keepalived on server4

[root@server4 keepalived]# systemctl stop keepalived

Round-robin still works
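To confirm which node currently holds the virtual IP, the output of `ip -o -4 addr show` can be searched for it. This is a minimal sketch, assuming the VIP 172.25.47.100 and the interface eth0 from the keepalived configuration above; the function name `holds_vip` is illustrative.

```shell
#!/bin/sh
# holds_vip VIP
# Reads `ip -o -4 addr show` output on stdin and succeeds (exit status 0)
# if VIP is among the listed addresses. grep -F matches the text literally,
# and the trailing "/" keeps 172.25.47.10 from matching 172.25.47.100.
holds_vip() {
    grep -qF "inet $1/"
}
```

On each node, `ip -o -4 addr show dev eth0 | holds_vip 172.25.47.100 && echo "VIP is here"` shows whether that node owns the address. After keepalived is stopped on server4, the VIP would be expected to appear on server1.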
