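Project Overview

This project scrapes news from major livestock-farming websites, builds a topic model of hog-market news and a news sentiment score, and uses both to gauge the state of the hog market.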

Fetching the News Text

Main news sites: 中国养猪网 (zhuwang.cc) and 猪价格网 (zhujiage.com.cn).

Building the data-fetching framework

- Import modules

from selenium import webdriver
import pandas as pd
import re

- Fetch news from 中国养猪网 and save each article to a local txt file.

# Selenium 3 style: the driver path is passed positionally
wd = webdriver.Chrome(r'd:/chromedriver.exe')

# Collect article links, keyed by title
url_list = {}
for i in range(1, 2):  # list pages to crawl; widen the range for more pages
    url_base = 'https://hangqing.zhuwang.cc/hangqingfenxi/list-81-%d.html' % i
    wd.get(url_base)
    # find_elements_by_css_selector is the Selenium 3 API;
    # Selenium 4 uses find_elements(By.CSS_SELECTOR, ...)
    elements = wd.find_elements_by_css_selector('body > div.main > div.zxleft > div.zxleft3 > ul > li > p.zxleft31 > a')
    for element in elements:
        url = element.get_attribute('href')
        title = element.text
        url_list[title] = url

# Save each article as a txt file named after its publication date and hour
pattern = re.compile(r'\d{4}-\d{1,2}-\d{1,2}\s\d{1,2}')
for url in url_list.values():
    wd.get(url)
    text = wd.find_element_by_css_selector('body > div.zxxwmain.juli > div.zxxwleft > div.zxxw').text
    file_name = pattern.findall(text)[0]  # first timestamp on the page
    # Write as UTF-8 so the files can be read back with encoding='utf-8'
    with open('%s.txt' % file_name, 'w', encoding='utf-8') as file:
        file.write(text)
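The save loop above takes the first regex match on the page as the file name, which raises an IndexError when a page carries no timestamp. A minimal sketch of a more forgiving version follows; the helper name `timestamp_file_name`, the fallback value, and the sample strings are assumptions made for illustration, not part of the original project.

```python
import re

# Assumed timestamp layout: "YYYY-M-D H", e.g. a publication date followed by the hour.
TIMESTAMP = re.compile(r'\d{4}-\d{1,2}-\d{1,2}\s\d{1,2}')

def timestamp_file_name(text, fallback='unknown'):
    """Build '<date hour>.txt' from the first timestamp in text.

    Falls back to '<fallback>.txt' when no timestamp is found,
    instead of failing on an empty match list.
    """
    match = TIMESTAMP.search(text)
    stem = match.group(0) if match else fallback
    return '%s.txt' % stem

# Made-up page text, used only to exercise the helper
print(timestamp_file_name('发布时间:2020-05-12 10:30 来源:中国养猪网'))
print(timestamp_file_name('no date on this page'))
```

Articles published within the same hour would still map to the same name, so appending a counter or part of the title may be worth considering.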

- 猪价格网

wd = webdriver.Chrome(r'd:/webdrives/chromedriver.exe')

# Collect article links, keyed by title
url_list = {}
url_base = 'http://www.zhujiage.com.cn/article/List_8.html'
wd.get(url_base)
elements = wd.find_elements_by_css_selector('#contentbox > div.lmlist a')
for element in elements:
    url = element.get_attribute('href')
    title = element.get_attribute('title')
    url_list[title] = url

# Save each article as a txt file named after its headline
for url in url_list.values():
    wd.get(url)
    text = wd.find_element_by_css_selector('#content').text
    file_name = wd.find_element_by_css_selector('#left > h1').text
    with open('%s.txt' % file_name, 'w', encoding='utf-8') as file:
        file.write(text)
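Because the file name here comes straight from the article headline, a title containing characters such as `/`, `:` or `?` cannot be used as a file name on Windows. The sketch below shows one way to sanitize it; `safe_file_name`, the underscore replacement, and the length limit are illustrative assumptions.

```python
import re

# Characters that Windows does not allow in file names, plus line breaks
FORBIDDEN = re.compile(r'[\\/:*?"<>|\r\n]')

def safe_file_name(title, max_len=100):
    """Turn an article title into a usable '<title>.txt' file name."""
    cleaned = FORBIDDEN.sub('_', title).strip()
    # Guard against empty titles and overly long names
    return (cleaned[:max_len] or 'untitled') + '.txt'

# Made-up titles, used only to exercise the helper
print(safe_file_name('猪价: 涨/跌?'))
print(safe_file_name(''))
```

In the loop above, the result would be passed to `open(...)` in place of the raw headline.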

Cleaning the News Data

def clean_text(text):
    text = re.sub(r'\d{4}-\d{1,2}-\d{1,2}', '', text)  # drop dates
    text = re.sub(r'\s', '', text)  # drop whitespace, including newlines
    text = re.sub(r'[\d.]', '', text)  # drop digits and periods
    # Keep only Chinese characters (U+4E00 to U+9FA5)
    return ''.join(re.findall(r'[\u4e00-\u9fa5]', text))
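The last step of `clean_text` keeps only characters in the range U+4E00 to U+9FA5, so dates, digits, Latin letters and punctuation all disappear. A small standalone check of that filter is sketched below; the helper name `keep_chinese` and the sample sentence are made up for illustration.

```python
import re

# Common CJK ideograph range used by the cleaning step
CHINESE = re.compile(r'[\u4e00-\u9fa5]')

def keep_chinese(text):
    """Return text with everything except Chinese characters removed."""
    return ''.join(CHINESE.findall(text))

sample = '猪价 2020-05-12 上涨3.5%!\n'
print(keep_chinese(sample))  # 猪价上涨
```

Since numbers are discarded entirely, price levels and percentages do not reach the topic model; only the surrounding words do.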

Building the LDA Topic Model

- Import modules

import gensim
from gensim import models, corpora, similarities
import os
import jieba

- Build the document dictionary and corpus

- Load the saved txt files and clean them

txt_dir = 'C:/Users/Liu di/Desktop/生猪市场报告/全国生猪项目/NLP for hog market/txt'
fileList = os.listdir(txt_dir)

# One stop word per line; names must be a list, and a set gives fast lookups
stop_words = pd.read_csv('stopwords.txt', index_col=False, sep='\t', names=['stopwords'], encoding='utf-8')
stop_set = set(stop_words['stopwords'])

# Read every saved article as one string
doc = []
for file in fileList:
    with open(os.path.join(txt_dir, file), encoding='utf-8') as f:
        doc.append(f.read())

# Clean each article, segment it with jieba, and drop stop words
doc_clean = []
for t in doc:
    text = clean_text(t)
    text_s = jieba.lcut(text)
    text_clean = [word for word in text_s if word not in stop_set]
    doc_clean.append(text_clean)
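To make the stop-word step concrete, the sketch below applies the same filter to a hand-written token list that stands in for the output of `jieba.lcut`. The function name `remove_stop_words`, the tokens, and the stop words are all made up for illustration.

```python
def remove_stop_words(tokens, stop_words):
    """Return the tokens that are not stop words, preserving order."""
    stop_set = set(stop_words)  # set membership is O(1) per token
    return [token for token in tokens if token not in stop_set]

# Pretend this list came from jieba.lcut on a cleaned article
tokens = ['今日', '的', '生猪', '价格', '上涨', '了']
print(remove_stop_words(tokens, ['的', '了']))
```

Building the set once, outside the per-document loop, avoids rebuilding the stop-word list for every token.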

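- Build the dictionary and corpus

# Map each token to an integer id, then turn each document into (token_id, count) pairs
dictionary = corpora.Dictionary(doc_clean)
corpus = [dictionary.doc2bow(text) for text in doc_clean]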
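The dictionary and corpus built above are a bag-of-words representation: every token gets an integer id, and every document becomes a list of (token id, count) pairs. The pure-Python sketch below mimics that idea on two toy documents. It is not gensim's implementation; the helper names are hypothetical, and ids are assigned in order of first appearance, which need not match gensim's numbering.

```python
from collections import Counter

def build_vocab(docs):
    """Assign an integer id to each distinct token, in order of first appearance."""
    vocab = {}
    for doc in docs:
        for token in doc:
            vocab.setdefault(token, len(vocab))
    return vocab

def to_bow(doc, vocab):
    """Convert one tokenized document into sorted (token_id, count) pairs."""
    counts = Counter(vocab[token] for token in doc if token in vocab)
    return sorted(counts.items())

# Toy documents, already tokenized
docs = [['生猪', '价格', '上涨'], ['生猪', '价格', '价格', '下跌']]
vocab = build_vocab(docs)
bow_corpus = [to_bow(doc, vocab) for doc in docs]
print(vocab)
print(bow_corpus)
```

Word order is discarded in this representation, which is what LDA expects: it models only how often each word occurs in each document.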

- Build the model

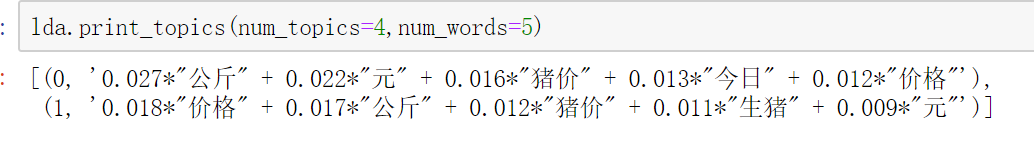

lda = gensim.models.ldamodel.LdaModel(corpus=corpus, id2word=dictionary, num_topics=2)

Model output:

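Model Visualization

pyLDAvis can be used to inspect the fitted LDA topic model.

import pyLDAvis.gensim  # renamed to pyLDAvis.gensim_models in pyLDAvis 3.x

vis = pyLDAvis.gensim.prepare(lda, corpus, dictionary)
pyLDAvis.save_html(vis, 'lda.html')  # write a standalone HTML report
pyLDAvis.show(vis)  # serve the visualization locally; blocks until stopped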
