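Why learn requests rather than urllib as the HTTP library for web scraping?

- requests is built on top of urllib3

- requests exposes the same API on Python 2 and Python 3, whereas urllib is organized differently in the two (current requests releases support Python 3 only)

- requests is simple and easy to use

- requests automatically decompresses gzip-compressed page content for us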

What requests does

Purpose: send network requests and return the response data.

Basic usage of requests

    import requests

    url = 'https://www.baidu.com/'
    response = requests.get(url)
    print(response.text)

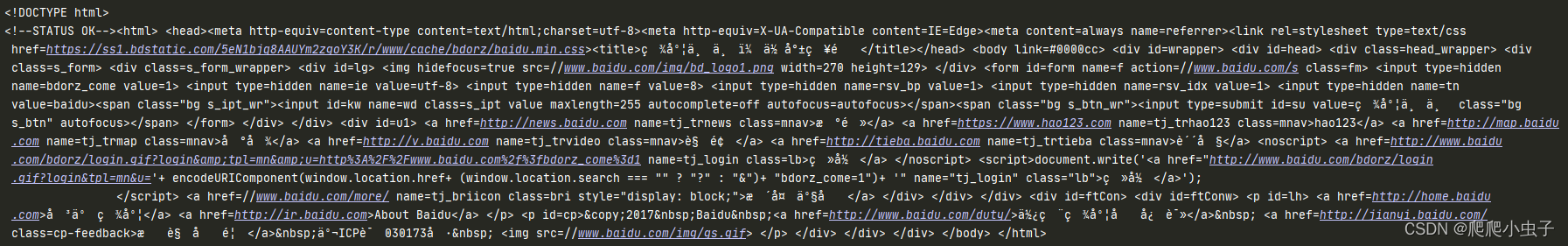

打印出来的结果是:

可以看到相应结果中有乱码。
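To see where the garbled characters come from, the effect can be reproduced without any network access. The sketch below assumes the page body is UTF-8 and the decoder uses ISO-8859-1; the sample string is made up for illustration.

```python
# Hypothetical page fragment; any non-ASCII text shows the same effect.
original = '百度一下,你就知道'

# What the server sends: the text encoded as UTF-8 bytes.
raw_bytes = original.encode('utf-8')

# Decoding with the wrong codec yields mojibake instead of an error,
# because every byte value is a valid ISO-8859-1 character.
garbled = raw_bytes.decode('iso-8859-1')

# Decoding with the codec that matches the page restores the text.
fixed = raw_bytes.decode('utf-8')

print(garbled == original)  # False
print(fixed == original)    # True
```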

Fixing garbled characters in requests responses

There are two ways:

(1) Decode the raw bytes yourself:

    import requests

    url = 'https://www.baidu.com/'
    response = requests.get(url)
    contents = response.content.decode('utf-8')
    print(contents)

(2) Set the encoding before reading response.text:

    import requests

    url = 'https://www.baidu.com/'
    response = requests.get(url)
    response.encoding = 'utf-8'
    print(response.text)

Difference between response.text and response.content

(1) response.text

- Type: str

- Change the encoding: response.encoding = 'utf-8'

(2) response.content

- Type: bytes

- Decode manually: response.content.decode('utf-8')
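The difference can also be checked offline. The sketch below builds a Response object by hand and fills its private _content attribute. That is a testing shortcut assumed for this example, not something the article's code does; in normal use the object comes from requests.get.

```python
from requests.models import Response

# Hand-built response for illustration only; _content is a private attribute.
response = Response()
response._content = '你好'.encode('utf-8')

# content is always the raw bytes, independent of any encoding setting.
print(type(response.content))   # <class 'bytes'>

# text is content decoded with response.encoding.
response.encoding = 'ISO-8859-1'
wrong_text = response.text      # garbled: wrong codec

response.encoding = 'utf-8'
right_text = response.text      # decoded correctly

print(type(right_text))         # <class 'str'>
print(right_text == response.content.decode('utf-8'))  # True
```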

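Sending a simple request

    response = requests.get(url)

    # Commonly used attributes of response:
    response.text             # body decoded to str
    response.content          # raw body as bytes
    response.status_code      # HTTP status code
    response.request.headers  # headers of the request that was sent
    response.headers          # headers of the response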
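Before using the body it is usually worth checking the status code first. A minimal sketch follows, run on hand-built Response objects so that it works offline. The helper name body_or_none is hypothetical, and setting _content directly is a testing shortcut.

```python
from requests.models import Response


def body_or_none(response):
    """Return the decoded body for a 200 response, otherwise None."""
    if response.status_code == 200:
        return response.text
    return None


# Hand-built responses stand in for requests.get(url) so the sketch runs offline.
ok = Response()
ok.status_code = 200
ok.encoding = 'utf-8'
ok._content = b'<html>ok</html>'

missing = Response()
missing.status_code = 404
missing._content = b'not found'

print(body_or_none(ok))       # <html>ok</html>
print(body_or_none(missing))  # None
```

requests also provides response.raise_for_status(), which raises requests.HTTPError for 4xx and 5xx status codes.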

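Downloading an image

    import requests

    url = 'https://www.baidu.com/img/bd_logo1.png?where=su'
    response = requests.get(url)

    # Image data is binary: open the file in 'wb' mode and write response.content.
    with open('baidu.png', 'wb') as f:
        f.write(response.content)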
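When many images are downloaded, the file name can be derived from the URL instead of being hard-coded. The helper below is a hypothetical sketch using only the standard library. It assumes the last path segment is a usable file name and falls back to a made-up default otherwise.

```python
import posixpath
from urllib.parse import urlsplit


def filename_from_url(url, default='download.bin'):
    """Take the last path segment of a URL as the file name, ignoring the query string."""
    path = urlsplit(url).path
    name = posixpath.basename(path)
    return name or default


print(filename_from_url('https://www.baidu.com/img/bd_logo1.png?where=su'))  # bd_logo1.png
print(filename_from_url('https://www.baidu.com/'))                           # download.bin
```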

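Sending a request with headers

Why does a request need headers?

To imitate a browser, so that the server returns the same content a browser would receive.

Headers are passed as a dictionary:

    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36'
    }

Usage:

    requests.get(url, headers=headers)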
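To see what the headers argument changes, a request can be prepared without being sent and its headers inspected. This is a sketch using requests.Session.prepare_request; no network traffic is involved, and the variable names are chosen for illustration.

```python
import requests

url = 'https://www.baidu.com/'
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
                  '(KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36'
}

session = requests.Session()

# Prepare the requests without sending them, then inspect the headers.
default_request = session.prepare_request(requests.Request('GET', url))
custom_request = session.prepare_request(requests.Request('GET', url, headers=headers))

print(default_request.headers['User-Agent'])  # starts with "python-requests/"
print(custom_request.headers['User-Agent'])   # the browser string defined above
```

Without the custom header, the request identifies itself as python-requests, which some servers treat differently from a browser.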

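Sending a request with parameters

If the URL carries query parameters, for example:

https://cn.bing.com/search?q=python

then the parameters can be passed to requests separately, as a dictionary:

    kw = {'q': 'python'}

Usage: requests.get(url, params=kw)

    import requests

    # The path /search belongs to the URL; only the query string goes into params.
    url = 'https://cn.bing.com/search'
    kw = {'q': 'python'}
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36'
    }

    response = requests.get(url, headers=headers, params=kw)
    print(response.url)  # final URL with the query string appended
    print(response.content.decode('utf-8'))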
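How params ends up in the URL can be checked offline by preparing a request instead of sending it. The sketch below uses requests.Request(...).prepare(); the second keyword is a made-up example of a non-ASCII value.

```python
import requests

# Prepare (but do not send) the request to see the final URL.
prepared = requests.Request(
    'GET',
    'https://cn.bing.com/search',
    params={'q': 'python'},
).prepare()

print(prepared.url)  # https://cn.bing.com/search?q=python

# Non-ASCII values are percent-encoded as UTF-8 automatically.
prepared_cn = requests.Request(
    'GET',
    'https://cn.bing.com/search',
    params={'q': '爬虫'},
).prepare()

print(prepared_cn.url)
```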

Tieba exercise

    import requests


    class TiebaSpider(object):
        def __init__(self, name):
            # pn is the offset of the list page; it advances by 50 per page
            self.url = 'https://tieba.baidu.com/f?kw=' + name + '&ie=utf-8&pn='
            self.name = name
            self.headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36'}

        def get_urllist(self):
            # Build the URLs of the first 10 pages
            self.urllist = []
            for i in range(10):
                self.urllist.append(self.url + str(50 * i))
            return self.urllist

        def jiexi(self, url):
            # Fetch one page and return its HTML as text
            response = requests.get(url, headers=self.headers)
            return response.text

        def baocun(self, contents, page):
            # Save one page to a local file such as lol第1页.html (page 1)
            file_name = '{}第{}页.html'.format(self.name, page)
            with open(file_name, 'w', encoding='utf-8') as f:
                f.write(contents)

        def run(self):
            urllist = self.get_urllist()
            # enumerate yields the page number directly, starting from 1
            for page, url in enumerate(urllist, start=1):
                contents = self.jiexi(url)
                self.baocun(contents, page)


    if __name__ == '__main__':
        tieba = TiebaSpider('lol')
        tieba.run()
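The spider builds its URLs by string concatenation, which works for an ASCII name such as lol. For names containing Chinese characters or symbols, one possible variation is to let urllib.parse.urlencode build the query string. The function name build_page_urls and the constants are hypothetical, and the sketch only generates URLs without sending any request.

```python
from urllib.parse import urlencode

BASE_URL = 'https://tieba.baidu.com/f'
POSTS_PER_PAGE = 50  # pn step used by the spider above


def build_page_urls(name, pages=10):
    """Return the list-page URLs for a forum, percent-encoding the forum name."""
    urls = []
    for i in range(pages):
        query = urlencode({'kw': name, 'ie': 'utf-8', 'pn': POSTS_PER_PAGE * i})
        urls.append(BASE_URL + '?' + query)
    return urls


urls = build_page_urls('lol', pages=3)
for u in urls:
    print(u)
```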

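This article explains why the requests library is used for Python web scraping, including its ease of use, compatibility, and automatic decompression. It also covers basic usage: sending GET requests, fixing garbled response text, the difference between response.text and response.content, and sending requests with headers and parameters. It closes with a simple Tieba spider that shows how to fetch and save web pages with requests.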
