Bilibili uploader 刘二大人: 《PyTorch深度学习实践》 (PyTorch Deep Learning Practice)

Tutorial: https://www.bilibili.com/video/BV1Y7411d7Ys?p=6&vd_source=715b347a0d6cb8aa3822e5a102f366fe

Three-layer model: torch.nn.Linear

Activation functions: ReLU + sigmoid

Cross-entropy loss function: nn.BCELoss

Optimizer: optim.Adam

Dataset: diabetes.csv.gz (available in the downloads for the Bilibili course)

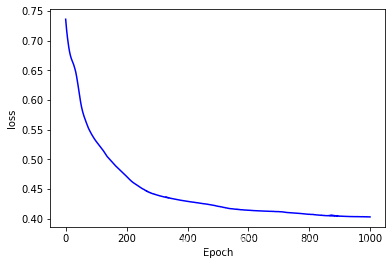

import torch
import matplotlib.pyplot as plt
import numpy as np

# Dataset
xy = np.loadtxt('/content/drive/MyDrive/pytorch_lab/data/diabetes.csv.gz', delimiter=',', dtype=np.float32)
x_data = torch.from_numpy(xy[:, :-1])   # every column except the last (features)
y_data = torch.from_numpy(xy[:, [-1]])  # last column only, kept 2-D as N*1 (labels)

epoch_p = []  # epoch indices, recorded for plotting
loss_p = []   # loss values, recorded for plotting
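
The label column is indexed with `[-1]` (a list) rather than `-1` so that it stays a 2-D column, matching the N*1 output of the model. The sketch below illustrates the difference on a small random array; `xy_demo` is a made-up stand-in for the real dataset, not the actual file.

```python
import numpy as np
import torch

# Hypothetical stand-in for the dataset: 5 rows, 8 feature columns + 1 label column.
rng = np.random.default_rng(0)
xy_demo = rng.random((5, 9)).astype(np.float32)

x_demo = torch.from_numpy(xy_demo[:, :-1])  # features: shape (5, 8)
y_2d = torch.from_numpy(xy_demo[:, [-1]])   # list index keeps the column axis: (5, 1)
y_1d = torch.from_numpy(xy_demo[:, -1])     # integer index drops the axis: (5,)

print(x_demo.shape, y_2d.shape, y_1d.shape)
```

Passing the 1-D version to `BCELoss` together with an N*1 prediction would raise a size-mismatch error, which is why the 2-D form is used.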

# Model definition
class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.linear1 = torch.nn.Linear(8, 6)  # input has 8 features (8 columns, N*8); output has 6 (N*6)
        self.linear2 = torch.nn.Linear(6, 4)
        self.linear3 = torch.nn.Linear(4, 1)
        # ReLU is a layer (module), so it has to be created inside Model before use;
        # torch.nn.functional.relu is a plain function and can be called directly
        self.activate = torch.nn.ReLU()

    def forward(self, x):
        x = self.activate(self.linear1(x))
        x = self.activate(self.linear2(x))
        y_pred = torch.sigmoid(self.linear3(x))
        return y_pred

model = Model()
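
As a cross-check on the layer sizes, the same 8 -> 6 -> 4 -> 1 stack can be written with `torch.nn.Sequential`. This is only an alternative sketch, not the form used in the course; `seq_model` and `batch` are illustrative names, and the input is random data.

```python
import torch

# Same layer sizes and activations as Model, expressed as a Sequential container.
seq_model = torch.nn.Sequential(
    torch.nn.Linear(8, 6), torch.nn.ReLU(),
    torch.nn.Linear(6, 4), torch.nn.ReLU(),
    torch.nn.Linear(4, 1), torch.nn.Sigmoid(),
)

batch = torch.randn(3, 8)  # 3 hypothetical samples with 8 features each
out = seq_model(batch)     # shape (3, 1), each value a probability

# Weights plus biases: (8*6 + 6) + (6*4 + 4) + (4*1 + 1)
n_params = sum(p.numel() for p in seq_model.parameters())
print(out.shape, n_params)
```

The final sigmoid keeps every output between 0 and 1, which is what `BCELoss` expects as input.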

# Loss function and optimizer
criterion = torch.nn.BCELoss()  # binary cross-entropy loss, computed on predicted probabilities
optimizer = torch.optim.Adam(model.parameters(), lr=0.01)
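
To make the loss concrete, the sketch below computes binary cross-entropy by hand, as the mean of -(y*log(p) + (1-y)*log(1-p)), and compares it with `torch.nn.BCELoss`. The tensors `p`, `y`, and `z` are made-up values for illustration. It also shows `torch.nn.BCEWithLogitsLoss`, an alternative not used in this post, which takes the raw pre-sigmoid outputs instead.

```python
import torch

p = torch.tensor([[0.9], [0.2], [0.6]])  # hypothetical predicted probabilities
y = torch.tensor([[1.0], [0.0], [1.0]])  # hypothetical 0/1 labels

# Binary cross-entropy written out term by term, averaged over the samples.
manual = -(y * torch.log(p) + (1 - y) * torch.log(1 - p)).mean()
builtin = torch.nn.BCELoss()(p, y)

# Alternative: feed raw scores (logits) and let the loss apply the sigmoid itself.
z = torch.tensor([[2.0], [-1.0], [0.5]])  # hypothetical logits
from_logits = torch.nn.BCEWithLogitsLoss()(z, y)
via_sigmoid = torch.nn.BCELoss()(torch.sigmoid(z), y)

print(manual.item(), builtin.item())
print(from_logits.item(), via_sigmoid.item())
```

Each pair of printed values should agree up to floating-point rounding.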

# Training
for epoch in range(1000):
    # Forward pass on the full dataset
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    loss_p.append(loss.item())
    epoch_p.append(epoch)
    print("Epoch {}, loss = {:.6f}".format(epoch, loss.item()))

    # Backward pass and parameter update
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# Plot the loss curve
plt.figure()
plt.plot(epoch_p, loss_p, c='b')
plt.xlabel('Epoch')
plt.ylabel('loss')
plt.show()
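
The loss curve shows that training converges, but not how many samples are classified correctly. A minimal sketch of an accuracy check is given below, assuming a 0.5 decision threshold; `binary_accuracy` is a hypothetical helper that is not part of the original script, and the tensors are made-up values.

```python
import torch

def binary_accuracy(y_prob, y_true, threshold=0.5):
    """Fraction of samples whose thresholded probability equals the 0/1 label."""
    y_hat = (y_prob >= threshold).float()
    return (y_hat == y_true).float().mean().item()

# Hypothetical predictions and labels: two of the four samples are classified correctly.
probs = torch.tensor([[0.9], [0.2], [0.6], [0.4]])
labels = torch.tensor([[1.0], [0.0], [0.0], [1.0]])
print(binary_accuracy(probs, labels))  # 0.5
```

With the objects from the script, the call would be `binary_accuracy(model(x_data), y_data)` inside a `with torch.no_grad():` block. Since the script trains on the whole dataset, that number is a training accuracy, not an estimate of performance on unseen data.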
