一、数据选取

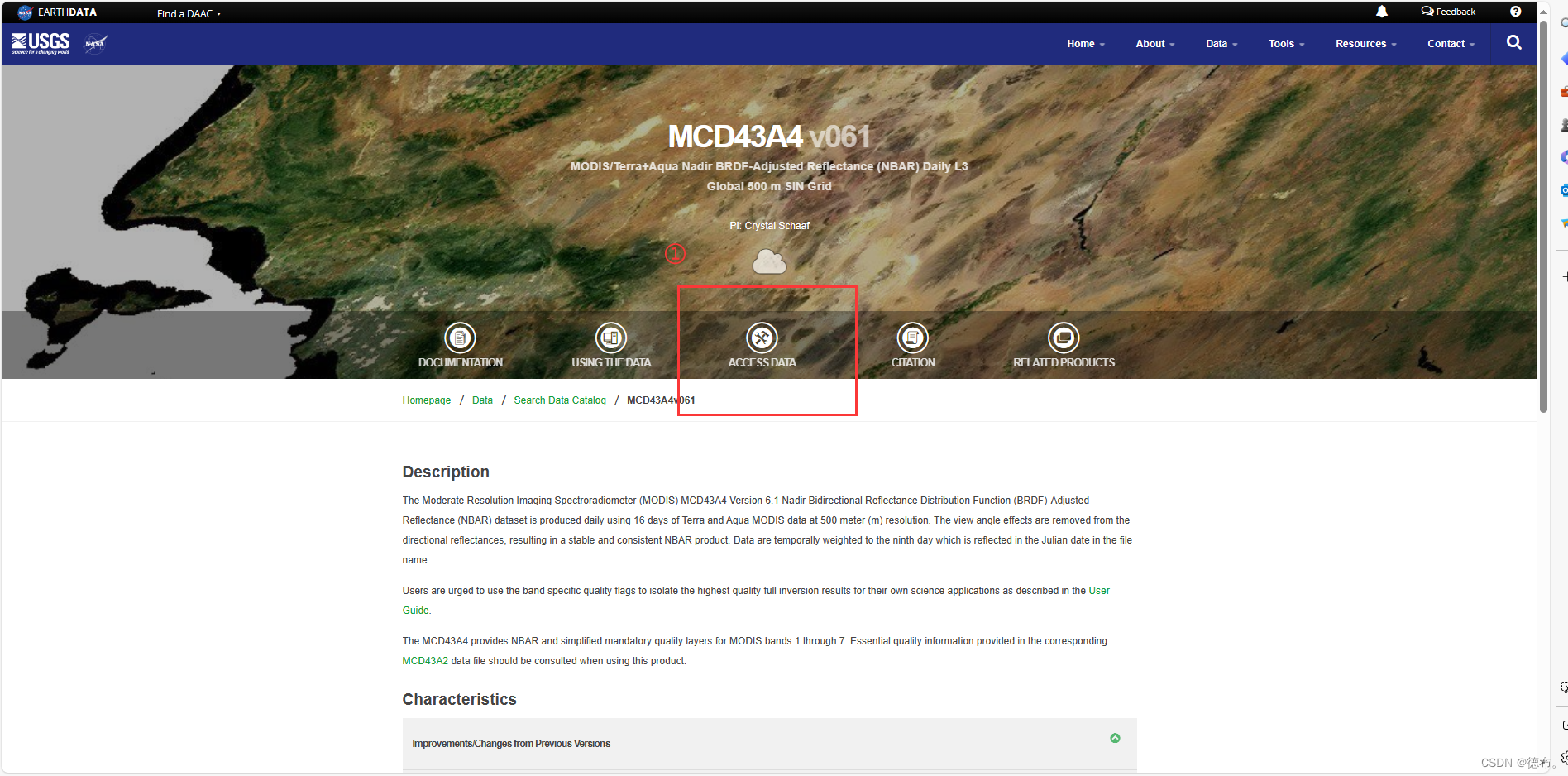

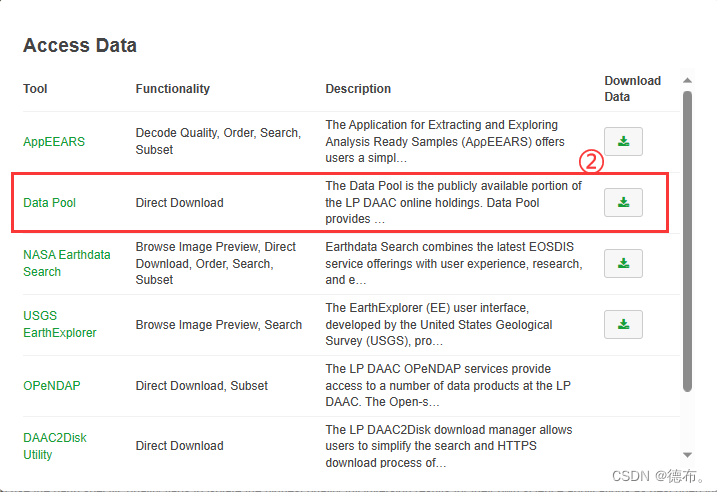

以MODIS/061/MCD43A4为例:

二、数据获取Python代码:

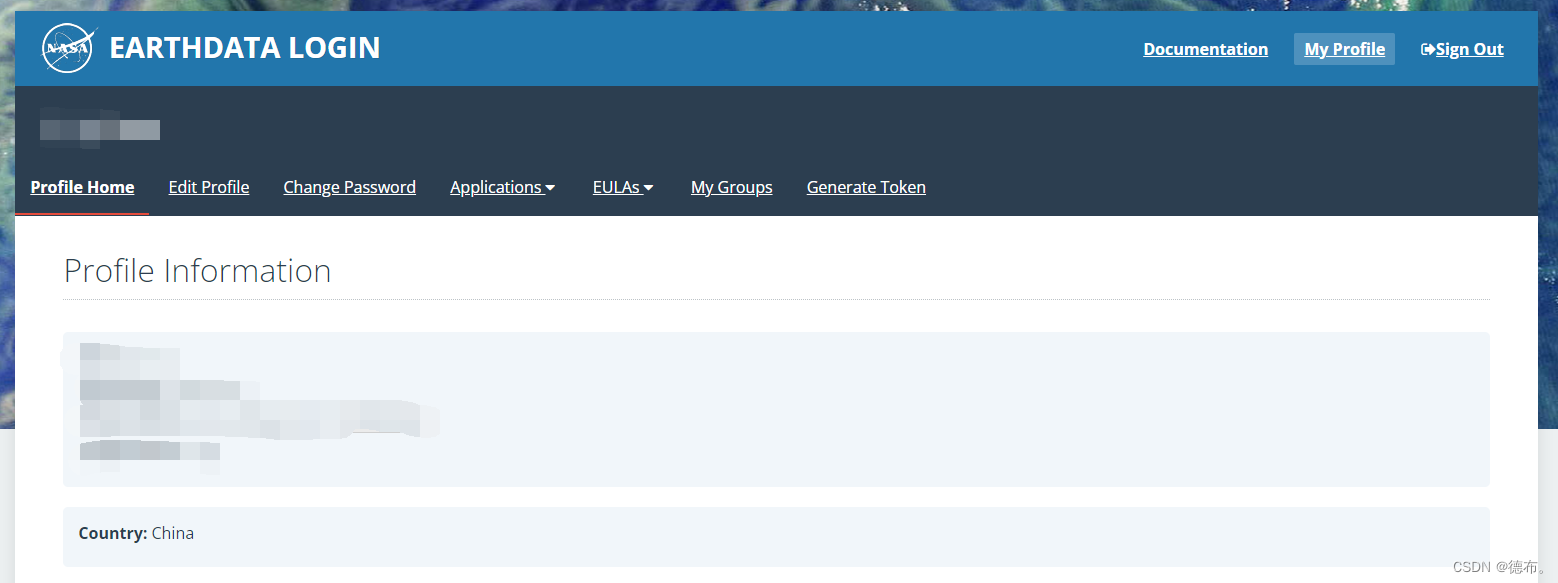

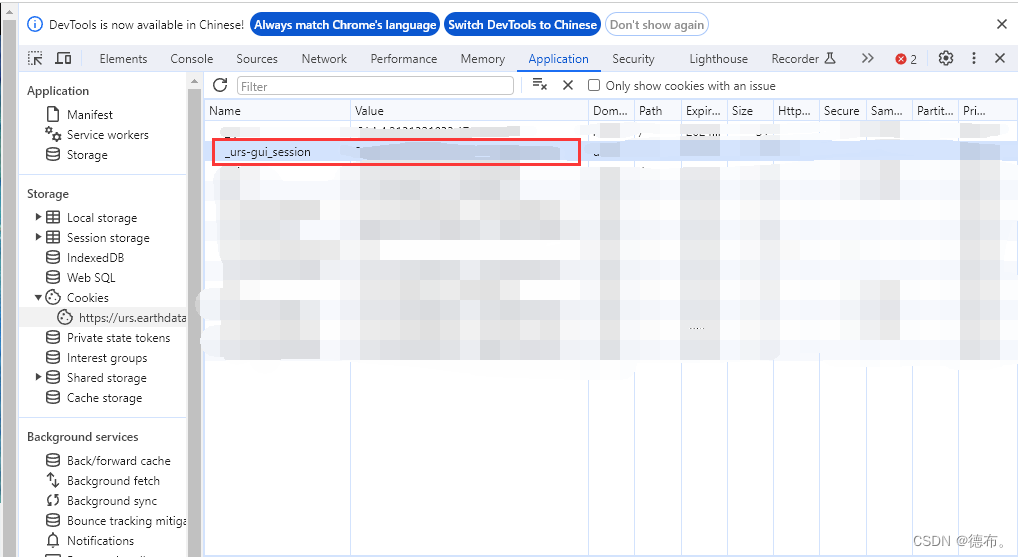

2.1 获取Cookies

谷歌浏览器登陆:https://urs.earthdata.nasa.gov/profile

按F12打开浏览器控制台,复制对应的Value

2.2 Python代码

import os

from datetime import datetime

from multiprocessing import Pool

import pandas as pd

import requests

from bs4 import BeautifulSoup

def main(SUB_URL):

# 下载到哪个文件夹

dst_folder = "F:\\2012_Python"

# 创建目录

os.makedirs(dst_folder, exist_ok=True)

# 请求链接

r_subfolder = requests.get(SUB_URL)

soup_subfolder = BeautifulSoup(r_subfolder.text, "html.parser")

# 遍历所有链接

for link_carpetas in soup_subfolder.find_all("a"):

file_name = link_carpetas.get("href")

# 如果是HDF文件

if file_name.endswith('.hdf'):

remote_file = SUB_URL + "/" + file_name

dst_file = os.path.join(dst_folder, file_name)

# # 判断是否已下载

# if os.path.exists(dst_file) and os.path.getsize(dst_file) > 2048:

# continue

print(remote_file)

# cookies信息

cookies = {'_urs-gui_session': 'XXXXXXX'} #获取的Cookies,cookies _urs-gui_session 需要修改,有效期一般只有1天

requests.DEFAULT_RETRIES = 5

response = requests.get(remote_file, cookies=cookies, timeout=300)

with open(dst_file, "wb") as handle:

handle.write(response.content)

print("download_success", dst_file)

if __name__ == '__main__':

# 影像的起始和截止时间

time_series = pd.date_range(start=datetime.strptime("2012-01-01", '%Y-%m-%d'),

end=datetime.strptime("2013-01-01", '%Y-%m-%d'), freq='d').to_list()

# 影像的下载地址,上图复制的链接

url_list = ["https://e4ftl01.cr.usgs.gov/MOTA/MCD43A4.061/" + datetime.strftime(t, '%Y.%m.%d') for t in time_series]

pool = Pool(20) # 设置并行处理的线程数

pool.map(main, url_list)

三、数据处理python代码

3.1 读取HDF文件信息

列出HDF文件中所有数据集和文件级别的属性名及其对应的值,特别注意识别包含空间范围信息的属性。

from pyhdf.SD import SD, SDC

def list_hdf_attributes(hdf_file):

"""列出HDF文件中的所有属性。"""

file = SD(hdf_file, SDC.READ)

# 打印文件级别的属性

print("File-level attributes:")

for name in file.attributes().keys():

value = file.attributes()[name]

print(f" {name}: {value}")

# 打印每个数据集的属性

datasets_dic = file.datasets()

for ds_name in datasets_dic.keys():

print(f"\nDataset: {ds_name}")

sds = file.select(ds_name)

for name in sds.attributes().keys():

value = sds.attributes()[name]

print(f" {name}: {value}")

file.end() # 关闭文件

# HDF文件路径

hdf_file_path = 'hdf_file.hdf' #替换为HDF文件的实际路径

# 列出属性

list_hdf_attributes(hdf_file_path)

- 获取到的空间信息如下:

以下信息包含在StructMetadata.0中:

GridName=“MOD_Grid_BRDF”

XDim=2400

YDim=2400

UpperLeftPointMtrs=(4447802.078667,-2223901.039333)

LowerRightMtrs=(5559752.598333,-3335851.559000)

Projection=GCTP_SNSOID 【正弦投影Sinusoidal projection】

ProjParams=(6371007.181000,0,0,0,0,0,0,0,0,0,0,0,0) 【投影设置的必要参数:地球半径m】

- 获取到数据集"Nadir_Reflectance_Band1"的信息如下,其他波段类似:

Dataset: Nadir_Reflectance_Band1

long_name: Nadir_Reflectance_Band1

> units: reflectance, no units

valid_range: [0, 32766]

> _FillValue:32767

> scale_factor: 0.0001

add_offset: 0.0

scale_factor_err: 0.0

add_offset_err: 0.0

calibrated_nt: 5

3.2 转换单个HDF to TIFF

# -*- coding: utf-8 -*-

from pyhdf.SD import SD, SDC

from osgeo import gdal, osr

import os

def get_spatial_extent(hdf_file):

"""从HDF文件中读取空间范围信息。"""

file = SD(hdf_file, SDC.READ)

# 从文件属性中提取空间范围信息

# 3.1过程中获取得到

attrs = file.attributes()

struct_metadata = attrs['StructMetadata.0']

# 解析StructMetadata获取空间范围信息

parts = struct_metadata.split('\n')

upper_left = None

lower_right = None

for part in parts:

if "UpperLeftPointMtrs" in part:

upper_left = tuple(map(float, part.split('=')[1].strip('()').split(',')))

elif "LowerRightMtrs" in part:

lower_right = tuple(map(float, part.split('=')[1].strip('()').split(',')))

file.end() # 关闭文件

return upper_left, lower_right

def convert_hdf_to_tiff(hdf_file, output_folder):

"""将 HDF 文件中的多个波段转换为 TIFF 格式,并设置地理参考。"""

hdf = SD(hdf_file, SDC.READ)

# 选择一个波段来获取尺寸

sds_obj = hdf.select('Nadir_Reflectance_Band1')

band_dims = sds_obj.dimensions()

# 列出所有维度

print("Dimensions in the dataset:", band_dims)

# 根据实际的维度名称获取列数和行数

cols = band_dims['YDim:MOD_Grid_BRDF']

rows = band_dims['XDim:MOD_Grid_BRDF']

# 获取空间范围信息

upper_left, lower_right = get_spatial_extent(hdf_file)

# 设置GeoTransform参数

pixel_width = (lower_right[0] - upper_left[0]) / cols

pixel_height = (lower_right[1] - upper_left[1]) / rows

geotransform = (upper_left[0], pixel_width, 0, upper_left[1], 0, pixel_height)

# 设置空间参考

sr = osr.SpatialReference()

sr.ImportFromProj4('+proj=sinu +R=6371007.181 +nadgrids=@null +wktext') # 设置正弦投影和相关参数

spatial_ref = sr.ExportToWkt()

# 转换每个波段为TIFF

for idx in range(1, 8):

band_name = f'Nadir_Reflectance_Band{idx}'

sds_obj = hdf.select(band_name)

band_data = sds_obj.get()

output_filename = os.path.join(output_folder, f'{band_name}.tif')

driver = gdal.GetDriverByName("GTiff")

dataset = driver.Create(output_filename, cols, rows, 1, gdal.GDT_Int16, ["TILED=YES", "COMPRESS=LZW"])

dataset.SetGeoTransform(geotransform)

dataset.SetProjection(spatial_ref)

dataset.GetRasterBand(1).WriteArray(band_data)

dataset = None # 关闭文件

# HDF 文件和输出文件夹的路径

hdf_file_path = 'F:\XX.hdf' #替换为HDF文件的实际路径

output_folder = 'F:\XXXX' #替换为保存TIFF文件夹的实际路径

# 调用函数进行转换

convert_hdf_to_tiff(hdf_file_path, output_folder)

3.3 对TIFF文件进行拼接、投影变换和重采样

import arcpy

import os

def process_tiff_in_subfolders(input_root_folder, output_root_folder, inf):

# 重采样参考栅格文件及其属性

cellsize = "{0} {1}".format(arcpy.Describe(inf).meanCellWidth, arcpy.Describe(inf).meanCellHeight)

spatial_reference = arcpy.Describe(inf).spatialReference

# 遍历根文件夹中的所有子文件夹

# 对于每个子文件夹中的 1 到 7 波段('Band1' 到 'Band7'),函数寻找以 '.tif' 结尾且包含对应波段名称的 TIFF 文件。

for subdir, dirs, files in os.walk(input_root_folder):

for band in range(1, 8):

band_str = 'Band{}'.format(band)

print("Processing {} in {}...".format(band_str, subdir))

# 构建输入文件列表

tiff_files = [os.path.join(subdir, f) for f in files if f.endswith(band_str + '.tif')]

if not tiff_files:

continue # 如果没有找到波段文件,跳过当前循环

input_rasters = ";".join(tiff_files)

# 输出文件夹

output_folder = os.path.join(output_root_folder, os.path.basename(subdir))

# 确保输出文件夹存在

if not os.path.exists(output_folder):

os.makedirs(output_folder)

# 拼接后的 TIFF 文件名

mosaic_tiff = 'mosaic_{}.tif'.format(band_str)

# 坐标系转换后输出的 TIFF 文件名

final_tiff = 'final_output_{}.tif'.format(band_str)

# 重采样后的 TIFF 文件名

resampled_tiff = 'final_output_{}_{}.tif'.format(os.path.basename(subdir), band_str)

# 拼接 TIFF 文件

arcpy.management.MosaicToNewRaster(

input_rasters=input_rasters,

output_location=output_folder,

raster_dataset_name_with_extension=mosaic_tiff,

pixel_type="16_BIT_UNSIGNED",

cellsize="",

number_of_bands=1,

mosaic_method="LAST"

)

# 定义转换和重采样后的文件路径

mosaic_tiff_path = os.path.join(output_folder, mosaic_tiff)

final_tiff_path = os.path.join(output_folder, final_tiff)

resampled_tiff_path = os.path.join(output_folder, resampled_tiff)

# 进行投影转换,将拼接后的 TIFF 文件投影转换到参考栅格文件的空间参考系统

arcpy.ProjectRaster_management(

in_raster=mosaic_tiff_path,

out_raster=final_tiff_path,

out_coor_system=spatial_reference

)

# 删除拼接后的 TIFF 文件

# arcpy.Delete_management(mosaic_tiff_path)

# 进行重采样,对投影转换后的 TIFF 文件进行重采样,使用双线性插值方法,并设置像素大小为参考栅格文件的平均像素大小

arcpy.Resample_management(

in_raster=final_tiff_path,

out_raster=resampled_tiff_path,

cell_size=cellsize,

resampling_type="BILINEAR"

)

# 删除坐标系转换后的 TIFF 文件

# arcpy.Delete_management(final_tiff_path)

print("Finished processing {} in {}".format(band_str, subdir))

# 定义文件夹路径

input_root_folder = r'F:\ATIFF'

output_root_folder = r'F:\ATIFF_Global'

inf = r"F:\MGPP01.tif" #投影、重采样步骤的参考TIFF

# 调用函数处理所有子文件夹中的 TIFF 文件

process_tiff_in_subfolders(input_root_folder, output_root_folder, inf)

以上内容仅供自己学习使用,参考文章:https://zhuanlan.zhihu.com/p/585437107

本文详细介绍了如何使用Python编程从NASA的MODIS数据获取模块下载HDF文件,包括设置Cookies、数据处理(如读取HDF属性、转HDF为TIFF并进行拼接和投影变换),以及如何使用GDAL库进行空间参考和重采样操作。

本文详细介绍了如何使用Python编程从NASA的MODIS数据获取模块下载HDF文件,包括设置Cookies、数据处理(如读取HDF属性、转HDF为TIFF并进行拼接和投影变换),以及如何使用GDAL库进行空间参考和重采样操作。

2232

2232

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?