Preface

While running the Stable Diffusion v1 series of models, I ran into the following error:

OSError: Can't load tokenizer for 'clip-vit-large-patch14'. If you were trying to load it from 'https://huggingface.co/models', make sure you don't have a local directory with the same name. Otherwise, make sure 'clip-vit-large-patch14' is the correct path to a directory containing all relevant files for a CLIPTokenizer tokenizer.

Cause

Reportedly, the download fails because clip-vit-large-patch14 can no longer be reached from mainland China.

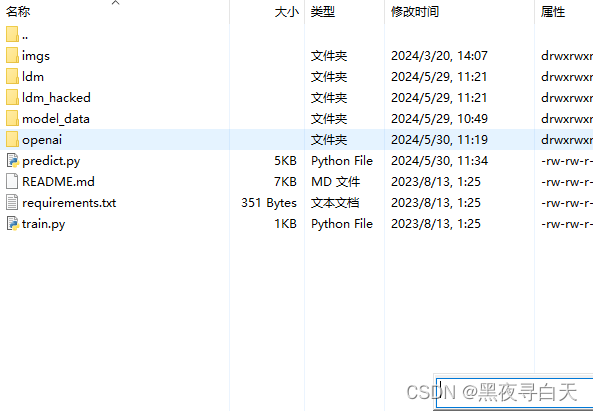

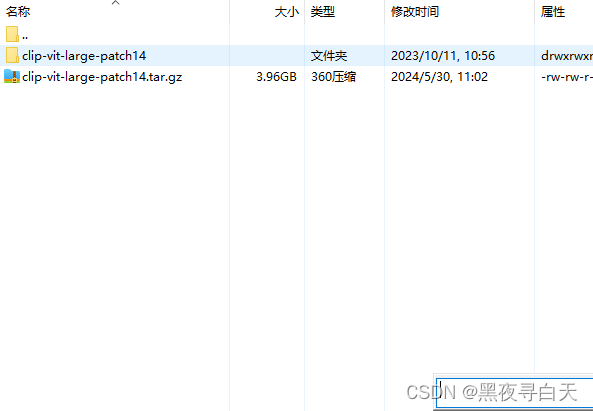

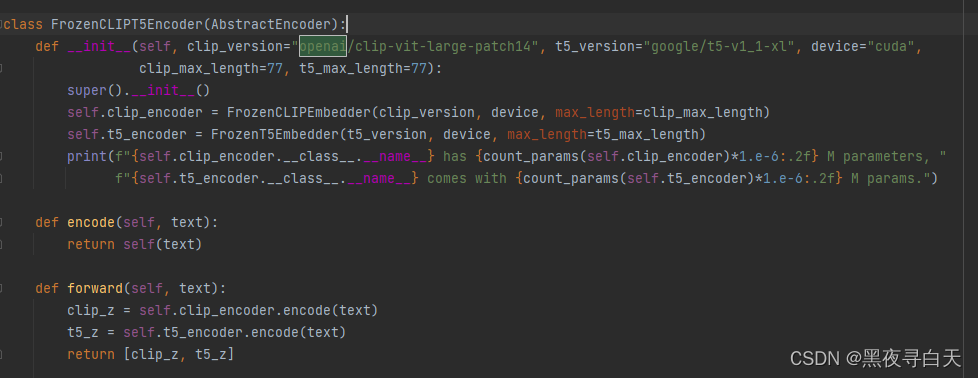

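The fix is to manually create an openai directory and move the downloaded, unzipped resources into it. In my case I did not have to change any code at all.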
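Here is a minimal sketch of why no code change is needed. It assumes the `openai` directory sits in the working directory the program is launched from, which the post does not specify. When a directory with the requested name exists, `CLIPTokenizer.from_pretrained` loads from that local path instead of contacting the hub. The helper names `missing_tokenizer_files` and `load_local_tokenizer` are hypothetical, and the file list covers only the two vocabulary files `CLIPTokenizer` reads; the downloaded archive may contain more.

```python
from pathlib import Path

# Assumption: CLIPTokenizer needs at least these two vocabulary files.
REQUIRED_FILES = ("vocab.json", "merges.txt")


def missing_tokenizer_files(model_dir):
    """Return the required tokenizer files that are absent from model_dir."""
    root = Path(model_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]


def load_local_tokenizer(model_dir="openai/clip-vit-large-patch14"):
    """Load the tokenizer from a local directory, failing early if files are missing."""
    missing = missing_tokenizer_files(model_dir)
    if missing:
        raise FileNotFoundError(
            f"{model_dir} is missing tokenizer files: {', '.join(missing)}"
        )
    # Imported lazily so the file check above works without transformers installed.
    from transformers import CLIPTokenizer

    # A relative path is resolved against the current working directory.
    return CLIPTokenizer.from_pretrained(model_dir)
```

Calling `missing_tokenizer_files("openai/clip-vit-large-patch14")` from the project root before starting Stable Diffusion is a quick way to confirm the files landed in the right place.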

Download link:

[Solved] Can't load tokenizer for 'openai/clip-vit-large-patch14'

If that does not work, you can also download the files from the official site:

clip-vit-large-patch14 official link

Likes and bookmarks are welcome~