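Preface

This section covers logistic regression, which:

- solves classification problems
- links a sample's features to the probability that the sample occurs

It ends with the OvR (One-vs-Rest) and OvO (One-vs-One) strategies for multiclass classification.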

1. Logistic Regression

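Logistic regression links a sample's features to the probability that the sample occurs, that is, that it belongs to the positive class ($y = 1$). It does this with the sigmoid function $\sigma(t) = \frac{1}{1 + e^{-t}}$, which maps any real number into the interval $(0, 1)$.

Training yields the parameter vector $\theta$. With $\theta$ we can estimate $\hat{p} = \sigma(\theta^T \cdot x_b)$ for a new sample and predict its class. Here $x_b$ is the feature vector with a leading 1 for the intercept.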

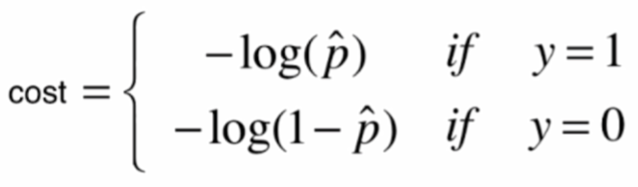

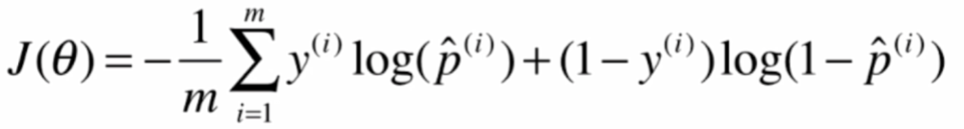
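The sigmoid and the prediction rule fit in a few lines of NumPy. This is a minimal sketch, not the author's code. The helper names `predict_proba` and `predict`, the sample $\theta$, and the common 0.5 decision threshold are assumptions for illustration.

```python
import numpy as np

def sigmoid(t):
    # maps any real number into the open interval (0, 1)
    return 1.0 / (1.0 + np.exp(-t))

def predict_proba(theta, X):
    # hypothetical helper: prepend a column of ones so theta[0] is the intercept
    X_b = np.hstack([np.ones((len(X), 1)), X])
    return sigmoid(X_b.dot(theta))

def predict(theta, X, threshold=0.5):
    # hypothetical helper: label 1 when the estimated probability reaches the threshold
    return (predict_proba(theta, X) >= threshold).astype(int)

theta = np.array([0.0, 2.0])          # made-up parameters: intercept 0, weight 2
X = np.array([[-1.0], [0.0], [1.5]])
print(predict_proba(theta, X))
print(predict(theta, X))              # [0 1 1]
```

Because $\sigma(0) = 0.5$, a sample with $\theta^T \cdot x_b = 0$ sits exactly on the decision boundary. With `>=` it is assigned class 1.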

The loss function is:

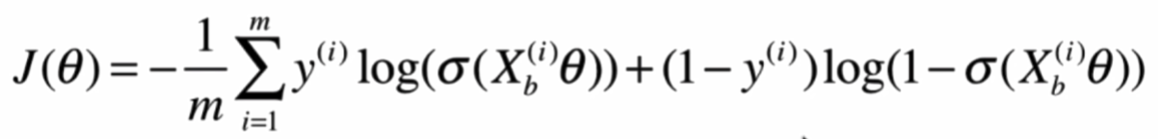

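After rearranging and substituting $\hat{p} = \sigma(X_b\theta)$, the loss over all $m$ samples becomes:

$$J(\theta) = -\frac{1}{m}\sum_{i=1}^{m}\left[y^{(i)}\log\left(\sigma(X_b^{(i)}\theta)\right) + \left(1-y^{(i)}\right)\log\left(1-\sigma(X_b^{(i)}\theta)\right)\right]$$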
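Here is a quick numeric check of the loss formula. It assumes the usual two-case per-sample cost: $-\log(\hat{p})$ when $y = 1$ and $-\log(1-\hat{p})$ when $y = 0$. Under that assumption, the vectorized $J(\theta)$ should equal the average of those per-sample costs. The data, $\theta$, and the helper `J_piecewise` are made up for illustration.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def J(theta, X_b, y):
    # vectorized log loss averaged over the m samples
    p_hat = sigmoid(X_b.dot(theta))
    return -np.sum(y * np.log(p_hat) + (1 - y) * np.log(1 - p_hat)) / len(y)

def J_piecewise(theta, X_b, y):
    # hypothetical helper: same loss, one sample at a time, using the two-case cost
    total = 0.0
    for x_i, y_i in zip(X_b, y):
        p_hat = sigmoid(x_i.dot(theta))
        total += -np.log(p_hat) if y_i == 1 else -np.log(1 - p_hat)
    return total / len(y)

# made-up data: X_b already contains the leading column of ones
X_b = np.array([[1.0, 0.5], [1.0, -1.0], [1.0, 2.0], [1.0, 0.0]])
y = np.array([1, 0, 1, 0])
theta = np.array([0.1, 1.2])
print(J(theta, X_b, y), J_piecewise(theta, X_b, y))  # the two values agree
```

With $\theta = 0$ every $\hat{p}$ is 0.5, so the loss is $\log 2 \approx 0.693$. This is a handy baseline when checking a training run.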

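This loss function has no closed-form (analytical) solution, so we minimize it with gradient descent. Because $J(\theta)$ is convex, gradient descent does not get stuck in local minima.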

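The gradient is:

$$\frac{\partial J(\theta)}{\partial \theta_j} = \frac{1}{m}\sum_{i=1}^{m}\left(\sigma(X_b^{(i)}\theta) - y^{(i)}\right)X_j^{(i)}$$

or, in vectorized form:

$$\nabla J(\theta) = \frac{1}{m}X_b^{T}\left(\sigma(X_b\theta) - y\right)$$

Here $X_j^{(i)}$ is the $j$-th column of $X_b$ for sample $i$, with $X_0^{(i)} = 1$.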
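A finite-difference check is one way to confirm the gradient formula. Perturb each $\theta_j$ by a small $h$, then compare the central difference of $J$ with the analytic gradient. This is a generic sketch. The random data, the step `h = 1e-6`, and the helper `dJ_numeric` are assumptions, not part of the original code.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def J(theta, X_b, y):
    p_hat = sigmoid(X_b.dot(theta))
    return -np.sum(y * np.log(p_hat) + (1 - y) * np.log(1 - p_hat)) / len(y)

def dJ(theta, X_b, y):
    # analytic gradient: X_b^T (sigmoid(X_b theta) - y) / m
    return X_b.T.dot(sigmoid(X_b.dot(theta)) - y) / len(y)

def dJ_numeric(theta, X_b, y, h=1e-6):
    # hypothetical helper: central finite differences, one parameter at a time
    grad = np.zeros_like(theta)
    for j in range(len(theta)):
        step = np.zeros_like(theta)
        step[j] = h
        grad[j] = (J(theta + step, X_b, y) - J(theta - step, X_b, y)) / (2 * h)
    return grad

rng = np.random.default_rng(0)
X_b = np.hstack([np.ones((20, 1)), rng.normal(size=(20, 3))])
y = (rng.random(20) > 0.5).astype(float)
theta = rng.normal(size=4)
print(np.max(np.abs(dJ(theta, X_b, y) - dJ_numeric(theta, X_b, y))))
```

The printed maximum difference should be close to zero. A large value would point to an error in `dJ`.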

The implementation:

```python
import numpy as np
from sklearn.metrics import accuracy_score


class LogisticRegression:

    def __init__(self):
        """Initialize the Logistic Regression model"""
        self.coef_ = None
        self.intercept_ = None
        self._theta = None

    def _sigmoid(self, t):
        return 1. / (1. + np.exp(-t))

    def fit(self, X_train, y_train, eta=0.01, n_iters=1e4):
        """Train the Logistic Regression model on X_train, y_train using gradient descent"""
        assert X_train.shape[0] == y_train.shape[0], \
            "the size of X_train must be equal to the size of y_train"

        # loss function J(theta)
        def J(theta, X_b, y):
            y_hat = self._sigmoid(X_b.dot(theta))
            try:
                return - np.sum(y*np.log(y_hat) + (1-y)*np.log(1-y_hat)) / len(y)
            except Exception:
                return float('inf')

        # vectorized gradient of J with respect to theta
        def dJ(theta, X_b, y):
            return X_b.T.dot(self._sigmoid(X_b.dot(theta)) - y) / len(y)

        # batch gradient descent: stop after n_iters steps or when J changes by less than epsilon
        def gradient_descent(X_b, y, initial_theta, eta, n_iters=1e4, epsilon=1e-8):
            theta = initial_theta
            cur_iter = 0
            while cur_iter < n_iters:
                gradient = dJ(theta, X_b, y)
                last_theta = theta
                theta = theta - eta * gradient
                if abs(J(theta, X_b, y) - J(last_theta, X_b, y)) < epsilon:
                    break
                cur_iter += 1
            return theta

        # prepend a column of ones so that theta[0] acts as the intercept
        X_b = np.hstack([np.ones((len(X_train), 1)), X_train])
        initial_theta = np.zeros(X_b.shape[1])
        self._theta = gradient_descent(X_b, y_train, initial_theta, eta, n_iters)

        self.intercept_ = self._theta[0]
        self.coef_ = self._theta[1:]  # feature weights exclude the intercept
        return self
```
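The following self-contained sketch shows the pieces working together. It trains on synthetic data with the same batch-gradient update as `dJ` above, then measures training accuracy. It does not use the class, so it can run on its own. The following are all assumptions for illustration: the standalone `fit`/`predict` helpers, the fixed iteration count (no $\epsilon$ stopping rule), the learning rate, the two Gaussian blobs, and the 0.5 threshold.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def fit(X, y, eta=0.1, n_iters=2000):
    # hypothetical helper: batch gradient descent on the log loss, same update as dJ
    X_b = np.hstack([np.ones((len(X), 1)), X])
    theta = np.zeros(X_b.shape[1])
    for _ in range(n_iters):
        theta -= eta * X_b.T.dot(sigmoid(X_b.dot(theta)) - y) / len(y)
    return theta

def predict(theta, X):
    # hypothetical helper: class 1 when the estimated probability is at least 0.5
    X_b = np.hstack([np.ones((len(X), 1)), X])
    return (sigmoid(X_b.dot(theta)) >= 0.5).astype(int)

# made-up data: two Gaussian blobs centred at (-2, -2) and (2, 2)
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(-2, 1, size=(100, 2)), rng.normal(2, 1, size=(100, 2))])
y = np.concatenate([np.zeros(100), np.ones(100)])

theta = fit(X, y)
accuracy = np.mean(predict(theta, X) == y)
print("intercept:", theta[0], "coef:", theta[1:], "accuracy:", accuracy)
```

Accuracy here is the fraction of matching labels. This is the same quantity that `sklearn.metrics.accuracy_score(y_true, y_pred)` returns.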
