Transmitting Video over UDP in Fragments

1. Using Webcam

Download

https://github.com/sarxos/webcam-capture?tab=readme-ov-file

The library can be used either through Maven or by adding the JAR files to the project manually.

Using the webcam in a window

Webcam webcam = Webcam.getDefault();

webcam.setViewSize(WebcamResolution.VGA.getSize());

WebcamPanel panel = new WebcamPanel(webcam);

panel.setFPSDisplayed(true);

panel.setDisplayDebugInfo(true);

panel.setImageSizeDisplayed(true);

panel.setMirrored(true);

JFrame window = new JFrame("Test webcam panel");

window.add(panel);

window.setResizable(true);

window.setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

window.pack();

// Show the window; without this call the frame is never displayed

window.setVisible(true);

Capturing an image from the webcam

BufferedImage image = webcam.getImage();

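2. Image transmission

Approach: convert the BufferedImage into a two-dimensional array, split the array into pieces, and send the pieces one by one.

Converting a BufferedImage into a two-dimensional array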

int width = image.getWidth();

int height = image.getHeight();

int[][] arr = new int[width][height];

for (int i = 0; i < width; i++) {

for (int j = 0; j < height; j++) {

arr[i][j] = image.getRGB(i,j);

}

}
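The nested loop above calls getRGB once per pixel. As a possible alternative, the bulk overload of BufferedImage.getRGB reads every pixel in one call. The sketch below keeps the same arr[x][y] layout; the class and method names (ImageToMatrix, toMatrix) are made up for this illustration and are not part of the original code.

```java
import java.awt.image.BufferedImage;

class ImageToMatrix {

    /** Copies all pixels into a column-major matrix, so result[x][y] is pixel (x, y). */
    static int[][] toMatrix(BufferedImage image) {
        int width = image.getWidth();
        int height = image.getHeight();

        // Bulk read: with scansize == width, pixel (x, y) lands at index y * width + x
        int[] flat = image.getRGB(0, 0, width, height, null, 0, width);

        int[][] matrix = new int[width][height];
        for (int x = 0; x < width; x++) {
            for (int y = 0; y < height; y++) {
                matrix[x][y] = flat[y * width + x];
            }
        }
        return matrix;
    }
}
```

With this helper the conversion step becomes int[][] arr = ImageToMatrix.toMatrix(image); and the rest of the sending code stays the same.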

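Splitting into packets

Fragmented transmission:

A single UDP datagram is limited in size: its payload can be at most 65507 bytes. The length fields in the UDP header and in the IPv4 header are both 16 bits wide, so a packet can be at most 2^16 - 1 = 65535 bytes long. The UDP header takes 8 bytes and the IP header added at the IP layer takes another 20 bytes, so the theoretical maximum payload of a UDP datagram is 2^16 - 1 - 8 - 20 = 65507 bytes.

A 640 * 480 image at 4 bytes per pixel takes 640 * 480 * 4 = 1228800 bytes, far more than one UDP datagram can carry, so it has to be sent in fragments. Each packet carries a sequence number, and the receiver uses that number to put the received data back in order and rebuild the two-dimensional array.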
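The arithmetic above can be checked with a few lines of Java. This is only a worked calculation; the class and method names (UdpSizeMath and its helpers) are invented for the example, and the 20-byte figure assumes an IPv4 header without options.

```java
class UdpSizeMath {

    // Largest value that fits in the 16-bit IPv4 total-length field
    static final int MAX_IP_PACKET_BYTES = (1 << 16) - 1;
    // IPv4 header without options
    static final int IPV4_HEADER_BYTES = 20;
    static final int UDP_HEADER_BYTES = 8;

    /** Largest payload a single UDP datagram can carry over IPv4. */
    static int maxUdpPayload() {
        return MAX_IP_PACKET_BYTES - IPV4_HEADER_BYTES - UDP_HEADER_BYTES;
    }

    /** Size of one frame when every pixel is stored as a 4-byte int. */
    static int frameBytes(int width, int height) {
        return width * height * 4;
    }

    /** Size of one column packet: 4-byte sequence number plus one int per pixel. */
    static int columnPacketBytes(int height) {
        return 4 + height * 4;
    }

    public static void main(String[] args) {
        System.out.println("max UDP payload = " + maxUdpPayload());        // 65507
        System.out.println("640x480 frame   = " + frameBytes(640, 480));   // 1228800
        System.out.println("column packet   = " + columnPacketBytes(480)); // 1924
    }
}
```

One column of a 640 * 480 frame therefore fits comfortably in a single datagram, and a whole frame needs 640 such packets plus the width/height packet.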

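The sender first sends the image width and height to the receiver, then sends each column of pixels prefixed with its sequence number.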

DatagramSocket s = new DatagramSocket();

ByteArrayOutputStream bas = new ByteArrayOutputStream();

DataOutputStream ds = new DataOutputStream(bas);

// Send the width and height to the receiver

ds.writeInt(width);

ds.writeInt(height);

byte[] data = bas.toByteArray();

DatagramPacket p = new DatagramPacket(data,data.length,address);

s.send(p);

for (int i = 0; i < width; i++) {

ByteArrayOutputStream baos = new ByteArrayOutputStream ();

DataOutputStream dos = new DataOutputStream(baos);

// Packet sequence number (the column index)

dos.writeInt(i);

System.out.println("Sequence number: "+i);

// One column of pixel data

for (int j = 0; j < height; j++) {

dos.writeInt(arr[i][j]);

}

dos.flush();

byte[] imageData = baos.toByteArray();

System.out.println(Arrays.toString(imageData));

DatagramPacket dp = new DatagramPacket(imageData,imageData.length,address);

s.send(dp);

System.out.println("imageData.length="+imageData.length);

}
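The per-column packet layout used above (a 4-byte sequence number followed by one 4-byte int per pixel) can also be built with a ByteBuffer, which makes the layout easy to test in isolation. This is a sketch rather than the original code: ColumnPacketEncoder and encode are hypothetical names. ByteBuffer writes big-endian by default, which matches what DataOutputStream.writeInt produces.

```java
import java.nio.ByteBuffer;

class ColumnPacketEncoder {

    /** Layout: 4-byte sequence number, then one 4-byte int per pixel of the column. */
    static byte[] encode(int sequence, int[] column) {
        ByteBuffer buffer = ByteBuffer.allocate(4 + column.length * 4);
        buffer.putInt(sequence);
        for (int pixel : column) {
            buffer.putInt(pixel);
        }
        // allocate() returns an array-backed buffer, so array() is the full packet
        return buffer.array();
    }
}
```

Inside the sending loop, the result could be wrapped the same way as before: byte[] imageData = ColumnPacketEncoder.encode(i, arr[i]); followed by new DatagramPacket(imageData, imageData.length, address).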

Reassembling the packets

byte[] buffer = new byte[8];

DatagramPacket p = new DatagramPacket(buffer, buffer.length);

s.receive(p);

ByteArrayInputStream bis = new ByteArrayInputStream(p.getData());

DataInputStream dis = new DataInputStream(bis);

int width = dis.readInt();

int height = dis.readInt();

System.out.println("width="+width+" height="+height);

int[][] arr = new int[width][height];

for (int i = 0; i < width; i++) {

byte[] b = new byte[height * 4 + 4];

DatagramPacket dp = new DatagramPacket(b, b.length);

s.receive(dp);

ByteArrayInputStream bs = new ByteArrayInputStream(dp.getData());

DataInputStream ds = new DataInputStream(bs);

int seque = ds.readInt();

// System.out.println("Received sequence number: " + seque);

// Ignore packets whose sequence number falls outside the frame

if(seque >= 0 && seque < width){

for (int j = 0; j < height; j++) {

arr[seque][j] = ds.readInt();

}

}

}
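The receiving loop trusts that every datagram has exactly the expected size. A slightly more defensive variant checks the received length (DatagramPacket.getLength()) and the sequence range before copying anything into the array. The sketch below shows that check as a standalone helper; ColumnPacketDecoder and decodeInto are hypothetical names, and the packet layout is assumed to be the one used by the sender above.

```java
import java.nio.ByteBuffer;

class ColumnPacketDecoder {

    /**
     * Copies one column packet into frame[sequence].
     * Returns false, leaving the frame untouched, when the packet size or
     * the sequence number does not match the frame dimensions.
     */
    static boolean decodeInto(byte[] data, int length, int[][] frame) {
        int width = frame.length;
        int height = width == 0 ? 0 : frame[0].length;

        // Expected size: 4-byte sequence number plus 4 bytes per pixel
        if (length != 4 + height * 4) {
            return false;
        }

        ByteBuffer buffer = ByteBuffer.wrap(data, 0, length);
        int sequence = buffer.getInt();
        if (sequence < 0 || sequence >= width) {
            return false;
        }

        for (int y = 0; y < height; y++) {
            frame[sequence][y] = buffer.getInt();
        }
        return true;
    }
}
```

In the loop above this would be called as ColumnPacketDecoder.decodeInto(dp.getData(), dp.getLength(), arr), and a false result simply means the packet is skipped.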

Converting the two-dimensional array back into a BufferedImage

public static BufferedImage matrixToBufferedImage(int[][] matrix) {

int type = BufferedImage.TYPE_INT_RGB;

BufferedImage image = new BufferedImage(matrix.length, matrix[0].length, type);

for (int i = 0; i < matrix.length; i++) {

for (int j = 0; j < matrix[0].length; j++) {

image.setRGB(i,j,matrix[i][j]);

}

}

return image;

}

3. Complete code

WebcamPanelExample.java

Sender

package udp.webcamv2;

import com.github.sarxos.webcam.Webcam;

import com.github.sarxos.webcam.WebcamPanel;

import com.github.sarxos.webcam.WebcamResolution;

import javax.swing.*;

import java.awt.image.BufferedImage;

import java.io.ByteArrayOutputStream;

import java.io.DataOutputStream;

import java.io.IOException;

import java.net.DatagramPacket;

import java.net.DatagramSocket;

import java.net.InetSocketAddress;

import java.util.Arrays;

public class WebcamPanelExample {

public static void main(String[] args) throws InterruptedException {

Webcam webcam = Webcam.getDefault();

webcam.setViewSize(WebcamResolution.VGA.getSize());

WebcamPanel panel = new WebcamPanel(webcam);

panel.setFPSDisplayed(true);

panel.setDisplayDebugInfo(true);

panel.setImageSizeDisplayed(true);

panel.setMirrored(true);

JFrame window = new JFrame("Test webcam panel");

window.add(panel);

window.setResizable(true);

window.setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

window.pack();

InetSocketAddress address = new InetSocketAddress("127.0.0.1",1239);

new Thread(new Runnable() {

@Override

public void run() {

System.out.println("Webcam sender thread started!");

while(true){

try {

BufferedImage image = webcam.getImage();

if(image == null) continue;

Thread.sleep(50);

int width = image.getWidth();

int height = image.getHeight();

int[][] arr = new int[width][height];

for (int i = 0; i < width; i++) {

for (int j = 0; j < height; j++) {

arr[i][j] = image.getRGB(i,j);

}

}

DatagramSocket s = new DatagramSocket();

ByteArrayOutputStream bas = new ByteArrayOutputStream();

DataOutputStream ds = new DataOutputStream(bas);

// Send the width and height to the receiver

ds.writeInt(width);

ds.writeInt(height);

byte[] data = bas.toByteArray();

DatagramPacket p = new DatagramPacket(data,data.length,address);

s.send(p);

for (int i = 0; i < width; i++) {

ByteArrayOutputStream baos = new ByteArrayOutputStream ();

DataOutputStream dos = new DataOutputStream(baos);

// Packet sequence number (the column index)

dos.writeInt(i);

System.out.println("Sequence number: "+i);

// One column of pixel data

for (int j = 0; j < height; j++) {

dos.writeInt(arr[i][j]);

}

dos.flush();

byte[] imageData = baos.toByteArray();

System.out.println(Arrays.toString(imageData));

DatagramPacket dp = new DatagramPacket(imageData,imageData.length,address);

s.send(dp);

System.out.println("imageData.length="+imageData.length);

}

// A new socket is created for every frame, so close it once the frame has been sent

s.close();

} catch (IOException | InterruptedException e) {

e.printStackTrace();

}

}

}

}).start();

window.setVisible(true);

}

}

Receiver.java

Receiver

package udp.webcamv2;

import javax.swing.*;

import java.awt.*;

import java.awt.image.BufferedImage;

import java.io.ByteArrayInputStream;

import java.io.DataInputStream;

import java.io.IOException;

import java.net.DatagramPacket;

import java.net.DatagramSocket;

import java.net.InetSocketAddress;

import java.net.SocketException;

public class Receiver extends JFrame {

private String MY_IP = "127.0.0.1";

private int MY_PORT = 1239;

public Receiver(){

setSize(800,800);

setLayout(new FlowLayout());

setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

setVisible(true);

}

public static void main(String[] args) {

Receiver re = new Receiver();

// Receive the image and draw it in the window

try {

InetSocketAddress address = new InetSocketAddress(re.MY_IP, re.MY_PORT);

DatagramSocket s = new DatagramSocket(address);

new Thread(new Runnable(){

@Override

public void run() {

while(true) {

try {

byte[] buffer = new byte[8];

DatagramPacket p = new DatagramPacket(buffer, buffer.length);

s.receive(p);

ByteArrayInputStream bis = new ByteArrayInputStream(p.getData());

DataInputStream dis = new DataInputStream(bis);

int width = dis.readInt();

int height = dis.readInt();

System.out.println("width="+width+" height="+height);

int[][] arr = new int[width][height];

for (int i = 0; i < width; i++) {

byte[] b = new byte[height * 4 + 4];

DatagramPacket dp = new DatagramPacket(b, b.length);

s.receive(dp);

ByteArrayInputStream bs = new ByteArrayInputStream(dp.getData());

DataInputStream ds = new DataInputStream(bs);

int seque = ds.readInt();

// System.out.println("Received sequence number: " + seque);

// Ignore packets whose sequence number falls outside the frame

if(seque >= 0 && seque < width){

for (int j = 0; j < height; j++) {

arr[seque][j] = ds.readInt();

}

}

}

BufferedImage bi = matrixToBufferedImage(arr);

Graphics g = re.getGraphics();

g.drawImage(bi,0,0,re);

} catch (IOException exception) {

exception.printStackTrace();

}

}

}

}).start();

} catch (SocketException e) {

e.printStackTrace();

} catch (IOException ioException) {

ioException.printStackTrace();

}

}

public static BufferedImage matrixToBufferedImage(int[][] matrix) {

int type = BufferedImage.TYPE_INT_RGB;

BufferedImage image = new BufferedImage(matrix.length, matrix[0].length, type);

for (int i = 0; i < matrix.length; i++) {

for (int j = 0; j < matrix[0].length; j++) {

image.setRGB(i,j,matrix[i][j]);

}

}

return image;

}

}

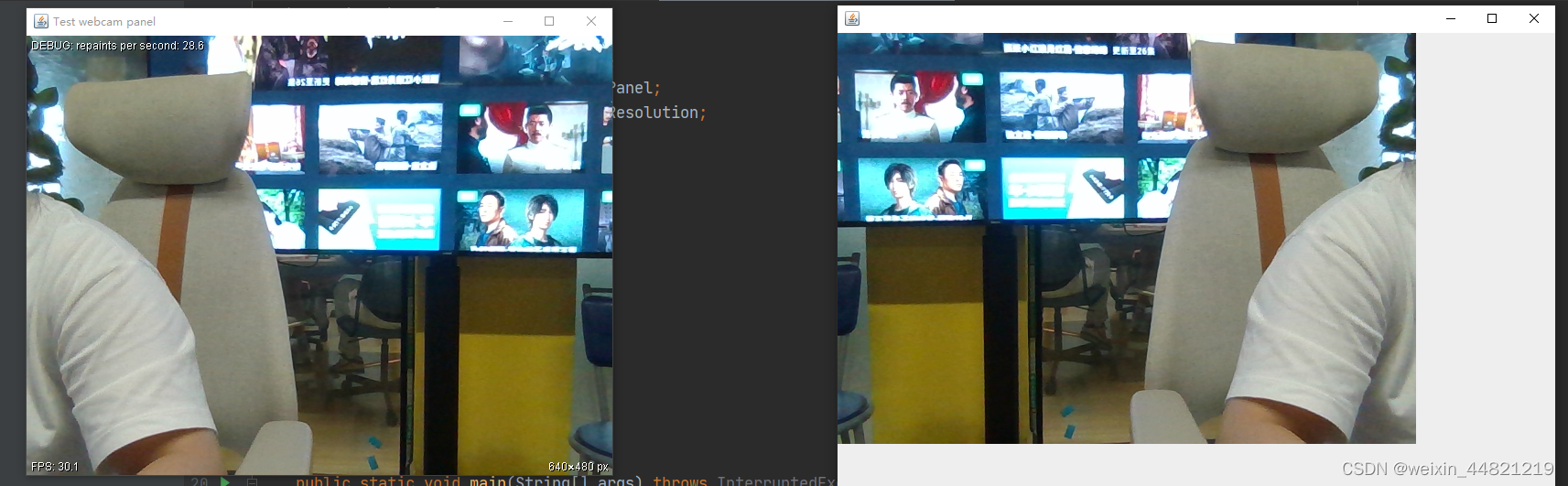

三、实现效果

四、总结

本文介绍了利用upd传输视频的整体流程,代码目前还存在的问题是必须先启动接收方再启动发送方,否则数据会混乱,接收方无法正确展示图片。
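A likely cause of the start-order problem is that the receiver cannot tell a width/height packet from a column packet: if it starts in the middle of a frame, it reads a column packet into its 8-byte header buffer. One possible way to relax this, which is not part of the code above, is to prefix every packet with a type byte and a frame id so the receiver can skip packets until the next header arrives. The sketch below only shows such a layout; TaggedPackets, its constants, and its methods are all hypothetical.

```java
import java.nio.ByteBuffer;

class TaggedPackets {

    static final byte TYPE_HEADER = 1; // carries frame id, width, height
    static final byte TYPE_COLUMN = 2; // carries frame id, sequence number, pixels

    /** Header packet: type (1 byte), frame id, width, height (4 bytes each). */
    static byte[] header(int frameId, int width, int height) {
        return ByteBuffer.allocate(1 + 4 + 4 + 4)
                .put(TYPE_HEADER)
                .putInt(frameId)
                .putInt(width)
                .putInt(height)
                .array();
    }

    /** Column packet: type (1 byte), frame id, sequence number, then the pixels. */
    static byte[] column(int frameId, int sequence, int[] pixels) {
        ByteBuffer buffer = ByteBuffer.allocate(1 + 4 + 4 + pixels.length * 4);
        buffer.put(TYPE_COLUMN).putInt(frameId).putInt(sequence);
        for (int pixel : pixels) {
            buffer.putInt(pixel);
        }
        return buffer.array();
    }

    /** Reads the type byte at the start of a packet. */
    static byte type(byte[] data) {
        return data[0];
    }

    /** Reads the frame id stored right after the type byte. */
    static int frameId(byte[] data) {
        return ByteBuffer.wrap(data).getInt(1);
    }
}
```

A receiver using this layout would receive into one buffer large enough for a column packet, discard TYPE_COLUMN packets until it has seen a TYPE_HEADER packet, and drop any column whose frame id differs from the current header.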
