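```python
import os
import time

import pandas as pd
import requests

# url = 'http://model.super202.cn/'  # site portal
url = 'http://model.super202.cn/getJixingData.html'  # dynamic public data endpoint

headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/103.0.0.0 Safari/537.36'
}


def craw_table(page, limit):
    """Fetch one page of the phone-model table and return it as a DataFrame."""
    params = {
        'page': page,
        'limit': limit
    }
    resp = requests.get(url, headers=headers, params=params, timeout=10)
    resp.raise_for_status()  # stop on HTTP errors instead of trying to parse an error page
    resp.encoding = 'utf-8'
    data = resp.json()["data"]  # the rows are stored under the "data" key
    df = pd.DataFrame(data)
    # keep only the needed columns: id, brand (pinpai), name, network (wangluo), model code (jixing)
    df = df[['id', 'pinpai', 'name', 'wangluo', 'jixing']]
    return df


df_list = []
for page in range(1, 11):
    print("Scraping page %s" % page)
    df = craw_table(page, 50)
    # collect each page's DataFrame so they can be merged afterwards
    df_list.append(df)
    time.sleep(1)  # pause between requests to go easy on the server

# merge the per-page DataFrames; ignore_index avoids repeating 0..49 for every page
data = pd.concat(df_list, ignore_index=True)

os.chdir(r'C:\Users\DELL\Desktop')
# '手机型号对照表' = "phone model lookup table"
# utf-8-sig writes a BOM so that Excel displays the Chinese text correctly
data.to_csv('手机型号对照表.csv', index=False, encoding='utf-8-sig')

# inspect the last few rows
print(data.tail())

# Alternative: save as an Excel file instead
# if __name__ == '__main__':
#     df_list = []
#     for page in range(1, 11):
#         print("Scraping page %s:" % page)
#         df_list.append(craw_table(page, 50))
#     os.chdir(r'C:\Users\DELL\Desktop')
#     data = pd.concat(df_list, ignore_index=True)
#     data.to_excel('手机型号对照表.xlsx', index=False)
```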
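The loop above hard-codes 10 pages of 50 rows. If the total number of rows is not known in advance, a common alternative is to keep requesting pages until one comes back empty or short. The sketch below is only a sketch under an assumption the site does not confirm: that a page past the end returns an empty (or shorter-than-`limit`) `data` list. `crawl_until_empty` and `fetch_page` are hypothetical names, and the fetcher is passed in as a parameter so the paging logic can be tried without touching the network.

```python
import time
from typing import Callable, List

import pandas as pd

COLUMNS = ['id', 'pinpai', 'name', 'wangluo', 'jixing']


def crawl_until_empty(fetch_page: Callable[[int, int], list],
                      limit: int = 50,
                      max_pages: int = 1000,
                      delay: float = 1.0) -> pd.DataFrame:
    """Request pages 1, 2, 3, ... until a page has no rows or fewer than `limit` rows."""
    frames: List[pd.DataFrame] = []
    for page in range(1, max_pages + 1):
        # hypothetical fetcher: returns the list found under resp.json()["data"]
        rows = fetch_page(page, limit)
        if not rows:
            break  # assumption: an empty page means we are past the last one
        frames.append(pd.DataFrame(rows)[COLUMNS])
        if len(rows) < limit:
            break  # a short page is also treated as the last page
        time.sleep(delay)  # pause between requests, as in the original loop
    if not frames:
        return pd.DataFrame(columns=COLUMNS)
    return pd.concat(frames, ignore_index=True)
```

To use it against the real endpoint, pass a small wrapper around the `requests.get(...)` call from `craw_table` that returns `resp.json()["data"]`. The `max_pages` cap is there so that a server which never returns an empty page cannot keep the loop running forever.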

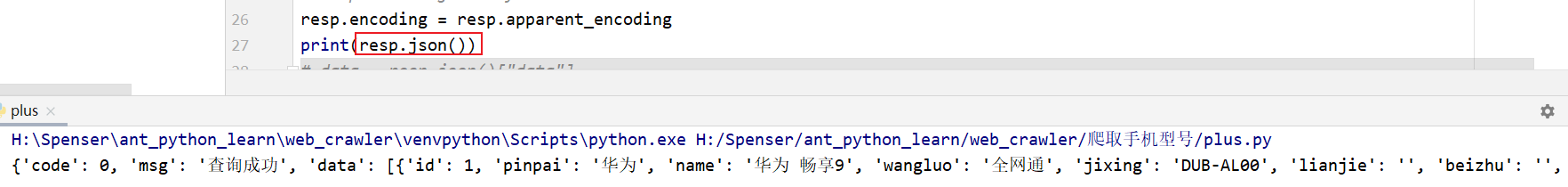

The response after `resp.json()`:
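`resp.json()` parses the response body into plain Python objects (here a dict), and `craw_table` then reads the list under the `"data"` key and keeps five columns. The sketch below reproduces that step offline on a hypothetical payload: only the `data` key and the five column names come from the scraper above; the `count` and `extra_field` keys and all values are invented for illustration. `records_to_frame` is a hypothetical helper name.

```python
import json

import pandas as pd

COLUMNS = ['id', 'pinpai', 'name', 'wangluo', 'jixing']

# Hypothetical response body (invented values); the real endpoint's extra fields may differ.
raw_body = '''
{"count": 2,
 "data": [
   {"id": 1, "pinpai": "BrandA", "name": "Phone A1", "wangluo": "5G", "jixing": "A1-001", "extra_field": "x"},
   {"id": 2, "pinpai": "BrandB", "name": "Phone B2", "wangluo": "4G", "jixing": "B2-002", "extra_field": "y"}
 ]}
'''


def records_to_frame(payload: dict) -> pd.DataFrame:
    """Turn the parsed JSON (what resp.json() returns) into the five-column table."""
    rows = payload["data"]   # list of dicts, one per phone model
    df = pd.DataFrame(rows)  # dict keys become column names
    return df[COLUMNS]       # drop any fields that are not needed


payload = json.loads(raw_body)  # resp.json() performs the same parsing on the HTTP body
table = records_to_frame(payload)
print(table)
```

Selecting `df[COLUMNS]` both drops unneeded fields and fixes the column order, so every page produces a frame with the same layout before `pd.concat` merges them.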
