1. Compile and install Tengine, configure a virtual host, and proxy api.x.com to port 9001

1.1 Install the EPEL repository

yum install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

1.2 Install system dependencies

yum install gcc patch libffi-devel python-devel zlib-devel bzip2-devel openssl-devel ncurses-devel sqlite-devel readline-devel tk-devel gdbm-devel db4-devel libpcap-devel xz-devel openssl -y

1.3 Download the source package

Stable release

wget http://tengine.taobao.org/download/tengine-2.1.2.tar.gz

1.4 Extract the archive

[root@web16 tengine-2.1.2]# tar -zxvf tengine-2.1.2.tar.gz -C /usr/local/src/

1.5 Compile

# Configure

From the directory /usr/local/src/tengine-2.1.2:

[root@web16 tengine-2.1.2]# ./configure --prefix=/apps/tengine --with-http_ssl_module --with-http_v2_module --with-http_realip_module --with-http_stub_status_module --with-http_gzip_static_module --with-pcre --with-file-aio

Build and install

make && make install

1.6 Check the Tengine version

[root@web16 sbin]# /apps/tengine/sbin/nginx -v

Tengine version: Tengine/2.1.2 (nginx/1.6.2)

[root@web15 sbin]# /apps/tengine/sbin/nginx -v

Tengine version: Tengine/2.1.2 (nginx/1.6.2)

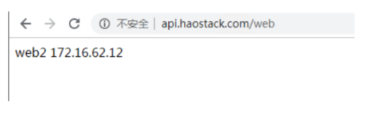

1.7 Backend server configuration

# Install the Apache service

Install and configure on 172.16.62.12

yum install httpd

# Configuration

[root@node12 web]# pwd

/var/www/web

[root@node12 web]# more index.html

web2 172.16.62.12

[root@node12 web]#

# Change the listening port to 9001

[root@node12 conf]# more httpd.conf | grep 9001

Listen 9001

[root@node12 conf]#

# Start the httpd service

systemctl restart httpd

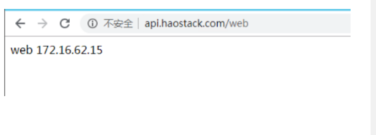

Install on 172.16.62.15

[root@web15 html]# more index.html

web 172.16.62.15

[root@web15 html]# pwd

/var/www/html

[root@web15 html]#

# Change the listening port to 9001

[root@web15 conf]# more httpd.conf | grep 9001

Listen 9001

[root@web15 conf]#

# Start the httpd service

systemctl restart httpd
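
The port change above is shown only as a `grep` result. One way to make the edit is sketched below; the helper name `set_listen_port` is hypothetical, and the path `/etc/httpd/conf/httpd.conf` in the usage comment assumes the stock CentOS 7 httpd package.

```shell
# Hypothetical helper: rewrite the active "Listen" directive in an
# Apache config file so httpd listens on the given port.
set_listen_port() {
    conf=$1
    port=$2
    # Replace lines such as "Listen 80"; commented lines ("#Listen ...") are left alone.
    sed -i -E "s/^Listen[[:space:]]+.*/Listen ${port}/" "$conf"
}

# Usage (assumed path, run as root on each backend):
#   set_listen_port /etc/httpd/conf/httpd.conf 9001
#   systemctl restart httpd
```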

1.8 Reverse proxy configuration

# With multiple backend hosts, define an upstream group

upstream webserver {

server 172.16.62.12:9001 weight=1 fail_timeout=5s max_fails=3;

server 172.16.62.15:9001 weight=1 fail_timeout=5s max_fails=3;

}

server {

listen 80;

server_name www.api.haostack.com;

location / {

index index.html index.php;

root html;

}

location /web { # only requests for the web project are forwarded

proxy_pass http://webserver/; # the webserver upstream group

}

}

1.9 Access test
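
The original gives no commands for this step. A minimal check is sketched here, assuming the client resolves `www.api.haostack.com` to the Tengine host and that `/web` is the proxied path from 1.8; the helper name `tally_responses` is hypothetical.

```shell
# Hypothetical helper: count how often each distinct response body occurs.
# Reads one response per line on stdin, prints "<count> <body>".
tally_responses() {
    sort | uniq -c | awk '{ n = $1; $1 = ""; sub(/^ /, ""); print n, $0 }'
}

# Usage: send several requests through the proxy and tally the replies.
# With two healthy backends and equal weights, both pages should appear.
#   for i in 1 2 3 4; do curl -s http://www.api.haostack.com/web; done | tally_responses
```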

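2. Configure HAProxy as a layer-7 proxy: forward the /a path to cluster a and the /b path to cluster b

2.1 Download the HAProxy source package

Official download page for the source package: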

- http://www.haproxy.org/download/

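HAProxy can be extended with Lua, which gives applications flexible extension and customization hooks. The Lua shipped with CentOS is older than the minimum version HAProxy requires (5.3), so a newer Lua has to be built before compiling HAProxy. Official Lua download page: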

- https://www.lua.org/download.html
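
As a rough way to check the version requirement described above, the sketch below compares a version string against 5.3. The helper name `version_ge` is hypothetical, and it relies on `sort -V` from GNU coreutils.

```shell
# Hypothetical helper: succeed if version $1 is at least version $2.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Usage: extract the version from `lua -v` and compare it with 5.3.
# `lua -v` prints a line such as "Lua 5.3.5  Copyright ..."; some older
# releases write it to stderr, hence the 2>&1.
#   installed=$(lua -v 2>&1 | awk '{ print $2 }')
#   version_ge "$installed" 5.3 && echo "Lua is new enough" || echo "build Lua from source"
```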

2.2 Install Lua 5.3.5

# Install the build environment with yum

yum install libtermcap-devel ncurses-devel libevent-devel readline-devel

Download Lua 5.3.5

wget http://lua.org/ftp/lua-5.3.5.tar.gz

# Extract

[root@web16 apps]# tar xf lua-5.3.5.tar.gz -C /usr/local/src/

[root@web16 apps]# cd /usr/local/src/lua-5.3.5/

# Compile

cd src/

[root@web16 src]# make linux

make all SYSCFLAGS="-DLUA_USE_LINUX" SYSLIBS="-Wl,-E -ldl -lreadline"

make[1]: Entering directory `/usr/local/src/lua-5.3.5/src'

make[1]: Nothing to be done for `all'.

make[1]: Leaving directory `/usr/local/src/lua-5.3.5/src'

# The build produces two executables: lua and luac

[root@web16 src]# ls

lapi.c lbaselib.o lcorolib.o ldebug.h lfunc.c linit.c llimits.h loadlib.o loslib.c lstate.h ltable.c ltm.o lua.h lutf8lib.c lzio.o

lapi.h lbitlib.c lctype.c ldebug.o lfunc.h linit.o lmathlib.c lobject.c loslib.o lstate.o ltable.h lua lua.hpp lutf8lib.o Makefile

lapi.o lbitlib.o lctype.h ldo.c lfunc.o liolib.c lmathlib.o lobject.h lparser.c lstring.c ltable.o luac lualib.h lvm.c

lauxlib.c lcode.c lctype.o ldo.h lgc.c liolib.o lmem.c lobject.o lparser.h lstring.h ltablib.c lua.c lua.o lvm.h

lauxlib.h lcode.h ldblib.c ldo.o lgc.h llex.c lmem.h lopcodes.c lparser.o lstring.o ltablib.o luac.c lundump.c lvm.o

lauxlib.o lcode.o ldblib.o ldump.c lgc.o llex.h lmem.o lopcodes.h lprefix.h lstrlib.c ltm.c luac.o lundump.h lzio.c

lbaselib.c lcorolib.c ldebug.c ldump.o liblua.a llex.o loadlib.c lopcodes.o lstate.c lstrlib.o ltm.h luaconf.h lundump.o lz

# System version

[root@web16 src]# lua -v

Lua 5.3.0 Copyright (C) 1994-2015 Lua.org, PUC-Rio

Compiled version

[root@web16 src]# ./lua -v

Lua 5.3.5 Copyright (C) 1994-2018 Lua.org, PUC-Rio

[root@web16 src]#

2.3 Compile and install HAProxy

Download the source package

haproxy-1.8.20.tar.gz

Extract

[root@web16 apps]# tar xf haproxy-1.8.20.tar.gz -C /usr/local/src/

[root@web16 apps]# cd /usr/local/src/haproxy-1.8.20/

Compile

make ARCH=x86_64 TARGET=linux2628 USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_SYSTEMD=1 USE_CPU_AFFINITY=1
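
The `make` line above passes no Lua options, so the Lua 5.3.5 build from 2.2 is not linked into the binary. If Lua support is wanted, HAProxy's Makefile accepts `USE_LUA`, `LUA_INC`, and `LUA_LIB`. The sketch below only assembles the argument list; the helper name `haproxy_make_args` is hypothetical, and the default path assumes the Lua source tree from 2.2.

```shell
# Hypothetical helper: print the make arguments from this guide plus the
# Lua options, pointing at the Lua source tree built in section 2.2.
haproxy_make_args() {
    lua_src=${1:-/usr/local/src/lua-5.3.5/src}
    printf '%s ' ARCH=x86_64 TARGET=linux2628 USE_PCRE=1 USE_OPENSSL=1 \
        USE_ZLIB=1 USE_SYSTEMD=1 USE_CPU_AFFINITY=1 \
        USE_LUA=1 "LUA_INC=${lua_src}" "LUA_LIB=${lua_src}"
    printf '\n'
}

# Usage, from /usr/local/src/haproxy-1.8.20:
#   make $(haproxy_make_args)
#   ./haproxy -vv | grep -i lua    # the build options should mention Lua
```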

Install

make install PREFIX=/usr/local/haproxy

Copy the binary to /usr/sbin

[root@web16 haproxy-1.8.20]# cp haproxy /usr/sbin/

[root@web16 haproxy-1.8.20]# cd /usr/sbin/

# Check the version

[root@web16 haproxy-1.8.20]# haproxy -v

HA-Proxy version 1.8.20 2019/04/25

Copyright 2000-2019 Willy Tarreau <willy@haproxy.org>

[root@web16 haproxy-1.8.20]#

Prepare the HAProxy systemd unit file

[root@web16 haproxy-1.8.20]# more /usr/lib/systemd/system/haproxy.service

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

# Paths must match the installation directory

[Service]

ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q

ExecStart=/usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

ExecReload=/bin/kill -USR2 $MAINPID

[Install]

WantedBy=multi-user.target

[root@web16 haproxy-1.8.20]#

# Prepare the HAProxy configuration directories

mkdir /etc/haproxy

mkdir /var/lib/haproxy

chown -R haproxy:haproxy /var/lib/haproxy
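
The `chown` above and the numeric `uid`/`gid` values in the configuration that follows assume a `haproxy` account already exists; the original does not show how it was created. A sketch of one way to create it and look up its IDs follows. The helper name `uid_gid_of` is hypothetical, and the `useradd` flags are an assumption about how the account was set up.

```shell
# Hypothetical helper: print "<uid> <gid>" for an existing account.
uid_gid_of() {
    printf '%s %s\n' "$(id -u "$1")" "$(id -g "$1")"
}

# Usage (as root). The useradd flags create a system account without a login
# shell; the resulting IDs vary per host, so copy them into the uid/gid lines
# of haproxy.cfg rather than reusing 993/992 blindly.
#   id haproxy >/dev/null 2>&1 || useradd -r -s /sbin/nologin haproxy
#   uid_gid_of haproxy
```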

# Configuration file

[root@web16 haproxy]# more haproxy.cfg

global

maxconn 100000

chroot /usr/local/haproxy

stats socket /var/lib/haproxy/haproxy.sock mode 600 level admin

#stats socket /var/lib/haproxy/haproxy.sock1 mode 600 level admin process 1

#stats socket /var/lib/haproxy/haproxy.sock2 mode 600 level admin process 2

#stats socket /var/lib/haproxy/haproxy.sock3 mode 600 level admin process 3

#stats socket /var/lib/haproxy/haproxy.sock4 mode 600 level admin process 4

uid 993

gid 992

daemon

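#nbproc 4 # starts as a single process by default

#nbthread 4 # can run as one process with multiple threads or as multiple single-threaded processes, with per-process CPU binding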

#cpu-map 1 0

#cpu-map 2 1

#cpu-map 3 2

#cpu-map 4 3

pidfile /var/lib/haproxy/haproxy.pid

log 127.0.0.1 local3 info

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

# listen stats # enable the web status page

# bind :9009

# stats enable

# stats hide-version

# stats uri /haproxy-status

# stats realm HAProxy\ Stats\ Page

# stats auth admin:123456

# #stats refresh 3s

# stats admin if TRUE

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth haadmin:123456

#listen webserver

#bind 0.0.0.0:80

#mode http

#log global

#server web1 172.16.62.12:80 check inter 3000 fall 2 rise 5

#server web2 172.16.62.15:80 check inter 3000 fall 2 rise 5

[root@web16 haproxy]#

[root@web16 haproxy]#

# Start the HAProxy service

[root@web16 ~]# systemctl restart haproxy

[root@web16 ~]# systemctl status haproxy

● haproxy.service - HAProxy Load Balancer

Loaded: loaded (/usr/lib/systemd/system/haproxy.service; disabled; vendor preset: disabled)

Active: active (running) since Sun 2020-06-21 21:33:21 CST; 2s ago

Process: 2521 ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q (code=exited, status=0/SUCCESS)

Main PID: 2523 (haproxy)

CGroup: /system.slice/haproxy.service

├─2523 /usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

└─2526 /usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

Jun 21 21:33:21 web16 systemd[1]: Starting HAProxy Load Balancer...

Jun 21 21:33:21 web16 systemd[1]: Started HAProxy Load Balancer.

[root@web16 ~]#

2.4 Deploy the backend services

# Cluster a

web1 172.16.62.12:81

web2 172.16.62.15:81

# Cluster b

tomcat1 172.16.62.12:8080

tomcat2 172.16.62.15:8080

Install Apache

[root@web12 html]# more index.html

web1 172.16.62.12

[root@web12 html]# pwd

/var/www/html

[root@web12 html]#

[root@web15 html]# more index.html

web2 172.16.62.15

[root@web15 html]# pwd

/var/www/html

[root@web15 html]#

Install Tomcat

# Extract

tar -zxvf apache-tomcat-8.5.43.tar.gz

Enter the directory

cd apache-tomcat-8.5.43/

# Run the startup script

./bin/startup.sh

# Check the listening ports

[root@web15 apache-tomcat-8.5.43]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:2222 *:*

LISTEN 0 100 :::8009 :::*

LISTEN 0 128 :::2222 :::*

LISTEN 0 100 :::8080 :::*

LISTEN 0 128 :::81 :::*

Access test

[root@web16 haproxy-1.8.20]# curl -l 172.16.62.12:81

web2 172.16.62.12

[root@web16 haproxy-1.8.20]# curl -l 172.16.62.15:81

web22 172.16.62.15

[root@web16 haproxy-1.8.20]# curl -l 172.16.62.15:8080

tomcat2 172.16.62.15

[root@web16 haproxy-1.8.20]# curl -l 172.16.62.12:8080

tomcat1 172.16.62.12

[root@web16 haproxy-1.8.20]#

2.5 HAProxy configuration

[root@web16 haproxy]# more haproxy.cfg

global

maxconn 100000

chroot /usr/local/haproxy

stats socket /var/lib/haproxy/haproxy.sock mode 600 level admin

#stats socket /var/lib/haproxy/haproxy.sock1 mode 600 level admin process 1

#stats socket /var/lib/haproxy/haproxy.sock2 mode 600 level admin process 2

#stats socket /var/lib/haproxy/haproxy.sock3 mode 600 level admin process 3

#stats socket /var/lib/haproxy/haproxy.sock4 mode 600 level admin process 4

uid 993

gid 992

daemon

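#nbproc 4 # starts as a single process by default

#nbthread 4 # can run as one process with multiple threads or as multiple single-threaded processes, with per-process CPU binding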

#cpu-map 1 0

#cpu-map 2 1

#cpu-map 3 2

#cpu-map 4 3

pidfile /var/lib/haproxy/haproxy.pid

log 127.0.0.1 local0 info

log 127.0.0.1 local1 warning

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

listen stats # enable the web status page

bind :9009

stats enable

stats hide-version

stats uri /haproxy-status

stats realm HAProxy\ Stats\ Page

stats auth haadmin:123456

stats refresh 3s

stats admin if TRUE

mode http

log global

#backend webserver

# balance roundrobin

# server web1 172.16.62.12:81 maxconn 300 check inter 2000ms rise 2 fall 3

# server web2 172.16.62.15:81 maxconn 300 check inter 2000ms rise 2 fall 3

#backend tomcatserver

# balance roundrobin

# server tomcat1 172.16.62.12:8080 maxconn 300 check inter 2000ms rise 2 fall 3

# server tomcat2 172.16.62.15:8080 maxconn 300 check inter 2000ms rise 2 fall 3

listen webcluster

bind 0.0.0.0:81

mode http

log global

balance roundrobin

server web1 172.16.62.12:81 check inter 3000 fall 2 rise 5

server web2 172.16.62.15:81 check inter 3000 fall 2 rise 5

listen tomcatcluster

bind 0.0.0.0:8080

mode http

log global

balance roundrobin

server tomcat1 172.16.62.12:8080 check inter 3000 fall 2 rise 5

server tomcat2 172.16.62.15:8080 check inter 3000 fall 2 rise 5
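
The configuration above separates the two clusters by listening port (81 and 8080). To route by URL path instead, as the title of this section describes, a frontend with `path_beg` ACLs is one option. The sketch below is not taken from the original setup: the names `web_in`, `a_cluster`, `b_cluster`, and `write_path_routing_cfg`, and the choice of port 80, are assumptions.

```shell
# Hypothetical helper: write a path-routing fragment to the given file.
write_path_routing_cfg() {
    cat > "$1" <<'EOF'
frontend web_in
    # Port 80 is an assumption; it must not clash with Tengine on the same host
    bind 0.0.0.0:80
    mode http
    log global
    # Layer-7 match on the start of the request path
    acl is_a path_beg /a
    acl is_b path_beg /b
    use_backend a_cluster if is_a
    use_backend b_cluster if is_b
    default_backend a_cluster

backend a_cluster
    mode http
    balance roundrobin
    server web1 172.16.62.12:81 check inter 3000 fall 2 rise 5
    server web2 172.16.62.15:81 check inter 3000 fall 2 rise 5

backend b_cluster
    mode http
    balance roundrobin
    server tomcat1 172.16.62.12:8080 check inter 3000 fall 2 rise 5
    server tomcat2 172.16.62.15:8080 check inter 3000 fall 2 rise 5
EOF
}

# Usage: write the fragment, merge it into haproxy.cfg in place of the two
# listen sections, then validate before restarting.
#   write_path_routing_cfg /tmp/path-routing.cfg
#   haproxy -c -f /etc/haproxy/haproxy.cfg
```

Because `path_beg /a` also matches paths such as `/abc`, a stricter pattern like `path_beg /a/` may be preferable. HAProxy forwards the request path unchanged here, so each backend would need to serve content under `/a` or `/b`.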

2.6 Open the status page

- http://172.16.62.16:9009/haproxy-status
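
The same status data can be read from the command line, since HAProxy returns CSV when `;csv` is appended to the stats URI. The sketch below prints the status column for each row; the helper name `summarize_stats` is hypothetical, and the credentials are the ones from the configuration in 2.5.

```shell
# Hypothetical helper: read HAProxy stats CSV on stdin and print
# "<proxy>/<server> <status>" for each row, skipping the "# pxname,..." header.
# In the CSV layout, field 1 is pxname, field 2 is svname, field 18 is status.
summarize_stats() {
    awk -F, '!/^#/ && NF >= 18 { print $1 "/" $2, $18 }'
}

# Usage:
#   curl -s -u haadmin:123456 'http://172.16.62.16:9009/haproxy-status;csv' | summarize_stats
```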

2.7 Access test

[root@node24 named]# curl -l api.haostack.com:81

web1 172.16.62.12

[root@node24 named]# curl -l api.haostack.com:81

web2 172.16.62.15

[root@node24 named]# curl -l api.haostack.com:8080

tomcat1 172.16.62.12

[root@node24 named]# curl -l api.haostack.com:8080

tomcat2 172.16.62.15

[root@node24 named]#
