Hadoop: Operating HDFS with Java


I. Add the dependencies

<properties>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    <hadoop.version>2.9.2</hadoop.version>
    <!--<packaging>jar</packaging>-->
</properties>

<dependencies>
    <!-- Hadoop common dependency -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>${hadoop.version}</version>
    </dependency>

    <!-- Hadoop HDFS dependency -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>${hadoop.version}</version>
    </dependency>

    <!-- MapReduce -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-core</artifactId>
        <version>${hadoop.version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-common</artifactId>
        <version>${hadoop.version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-jobclient</artifactId>
        <version>${hadoop.version}</version>
    </dependency>

    <!-- Unit testing -->
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>

    <!-- Logging -->
    <dependency>
        <groupId>log4j</groupId>
        <artifactId>log4j</artifactId>
        <version>1.2.17</version>
    </dependency>
</dependencies>

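1. Obtain the HDFS client

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.junit.After;
import org.junit.Before;

public class TestHDFS {

    // FileSystem is the client object Java uses to operate on HDFS
    private FileSystem fileSystem;

    @Before
    public void before() throws IOException {
        // Replace the Windows user name at runtime so that requests run as root,
        // the user that holds the HDFS permissions in this setup
        System.setProperty("HADOOP_USER_NAME", "root");

        // Holds the settings that core-site.xml and hdfs-site.xml would otherwise supply
        Configuration configuration = new Configuration();

        // HDFS address to connect to, written as a full hdfs:// URI
        configuration.set("fs.defaultFS", "hdfs://hadoop1:9000");

        // Replication factor for uploaded files
        configuration.set("dfs.replication", "1");

        this.fileSystem = FileSystem.get(configuration);
    }

    @After
    public void close() throws IOException {
        fileSystem.close();
    }
}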
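The value of fs.defaultFS is read as a filesystem URI, so it can help to check how a candidate string splits into scheme, host, and port. The following is a minimal JDK-only sketch, not Hadoop code: the class name DefaultFsUriCheck and the describe helper are hypothetical, and hadoop1:9000 is the address used in the example above.

```java
import java.net.URI;

// Hypothetical helper, not part of Hadoop: shows how java.net.URI
// splits a candidate fs.defaultFS value.
class DefaultFsUriCheck {

    static String describe(String value) {
        URI uri = URI.create(value);
        return "scheme=" + uri.getScheme()
                + " host=" + uri.getHost()
                + " port=" + uri.getPort();
    }

    public static void main(String[] args) {
        // Fully qualified form: scheme, host and port are all recognised.
        System.out.println(describe("hdfs://hadoop1:9000"));
        // prints: scheme=hdfs host=hadoop1 port=9000

        // Bare host:port: the text before the colon is parsed as the scheme.
        System.out.println(describe("hadoop1:9000"));
        // prints: scheme=hadoop1 host=null port=-1
    }
}
```

Writing the hdfs:// prefix explicitly keeps the scheme unambiguous and avoids depending on how a particular client version treats a bare host:port.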

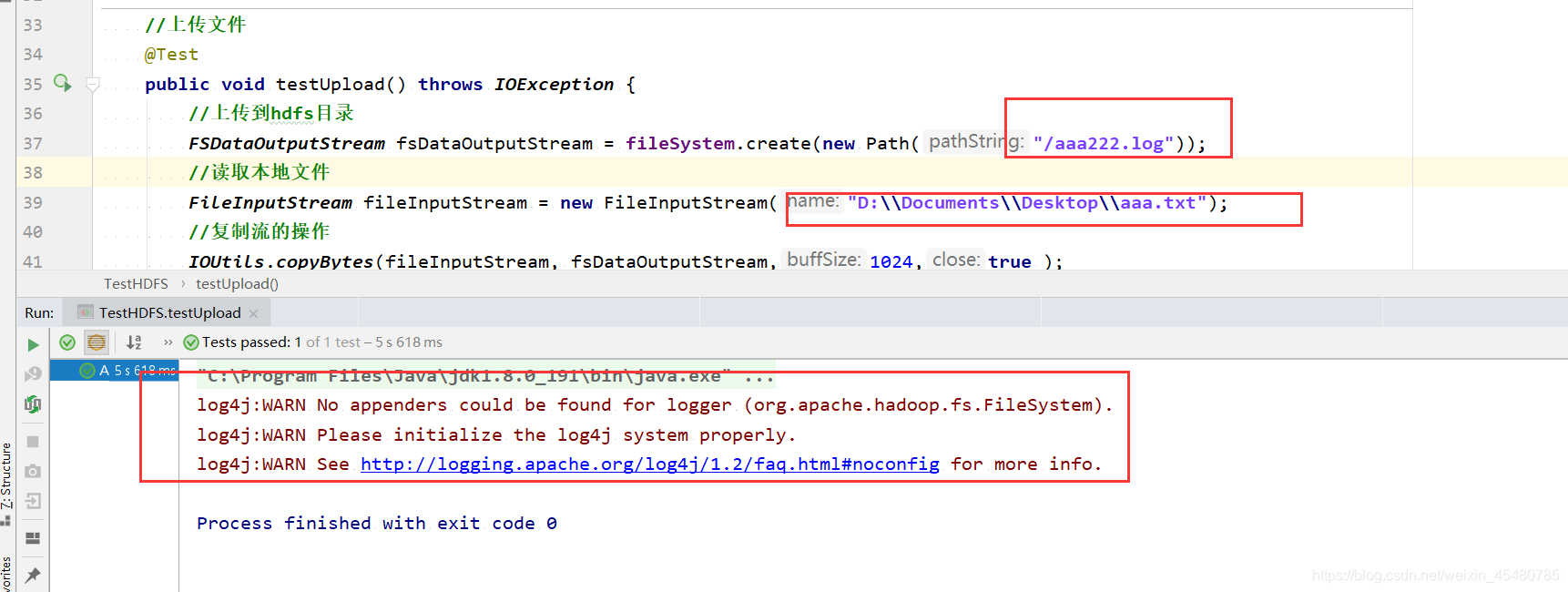

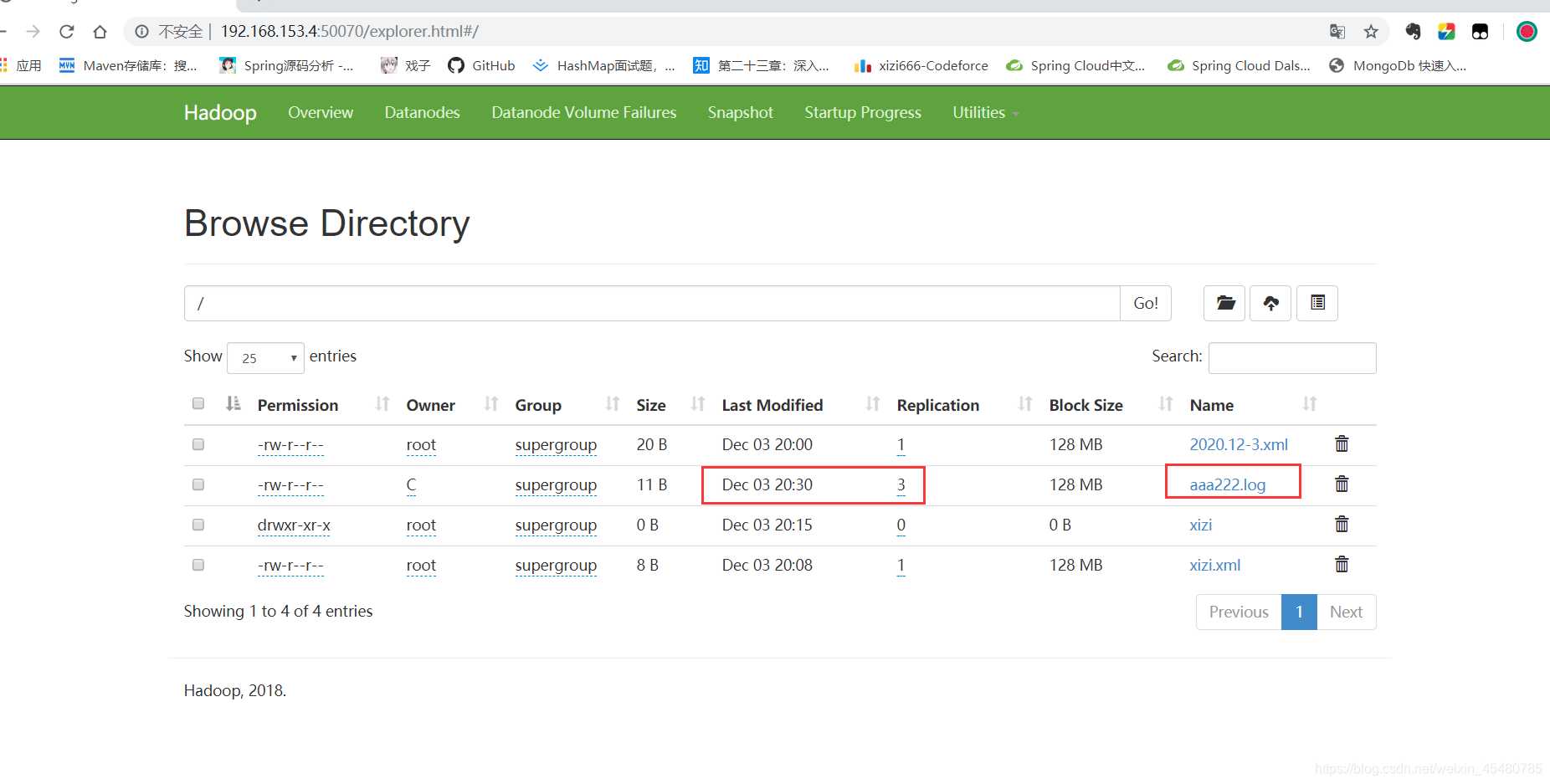

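2. Upload a file to HDFS

// Upload a file (a test method inside TestHDFS)
// Imports: java.io.FileInputStream, org.apache.hadoop.fs.FSDataOutputStream,
//          org.apache.hadoop.fs.Path, org.apache.hadoop.io.IOUtils, org.junit.Test
@Test
public void testUpload() throws IOException {
    // Destination path on HDFS
    FSDataOutputStream fsDataOutputStream = fileSystem.create(new Path("/aaa222.log"));

    // Read the local file
    FileInputStream fileInputStream = new FileInputStream("D:\\Documents\\Desktop\\aaa.txt");

    // Copy the stream with a 1024-byte buffer; true closes both streams when the copy ends
    IOUtils.copyBytes(fileInputStream, fsDataOutputStream, 1024, true);
}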
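The copy call above takes a buffer size and a close flag. As a rough illustration of what a buffered stream copy with those two parameters does, here is a JDK-only sketch. It is not Hadoop's implementation; the class StreamCopySketch and its methods are hypothetical, and in-memory streams stand in for the local file and the HDFS output stream.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical sketch of a buffered copy with an optional close step.
class StreamCopySketch {

    // Reads up to bufferSize bytes at a time from in and writes them to out.
    // When close is true, both streams are closed even if the copy fails.
    static long copy(InputStream in, OutputStream out, int bufferSize, boolean close)
            throws IOException {
        byte[] buffer = new byte[bufferSize];
        long total = 0;
        try {
            int read;
            while ((read = in.read(buffer)) != -1) {
                out.write(buffer, 0, read);
                total += read;
            }
        } finally {
            if (close) {
                try {
                    in.close();
                } finally {
                    out.close();
                }
            }
        }
        return total;
    }

    // Copies data through in-memory streams and returns what arrived.
    static byte[] roundTrip(byte[] data, int bufferSize) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try {
            copy(new ByteArrayInputStream(data), out, bufferSize, true);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return out.toByteArray();
    }

    public static void main(String[] args) {
        byte[] data = "hello hdfs".getBytes(StandardCharsets.UTF_8);
        byte[] copied = roundTrip(data, 4); // 4-byte buffer forces several read/write rounds
        System.out.println(new String(copied, StandardCharsets.UTF_8)); // prints: hello hdfs
    }
}
```

The buffer size only affects how many read/write rounds the copy needs, not the bytes that arrive.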

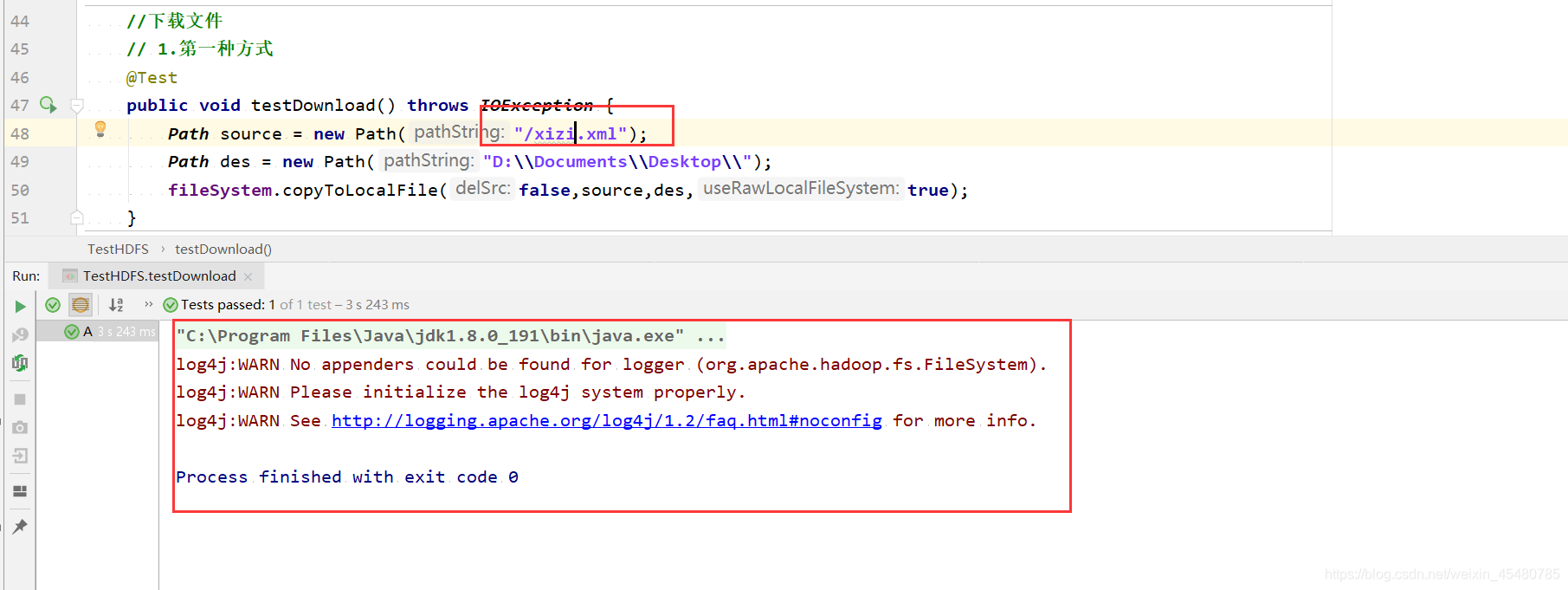

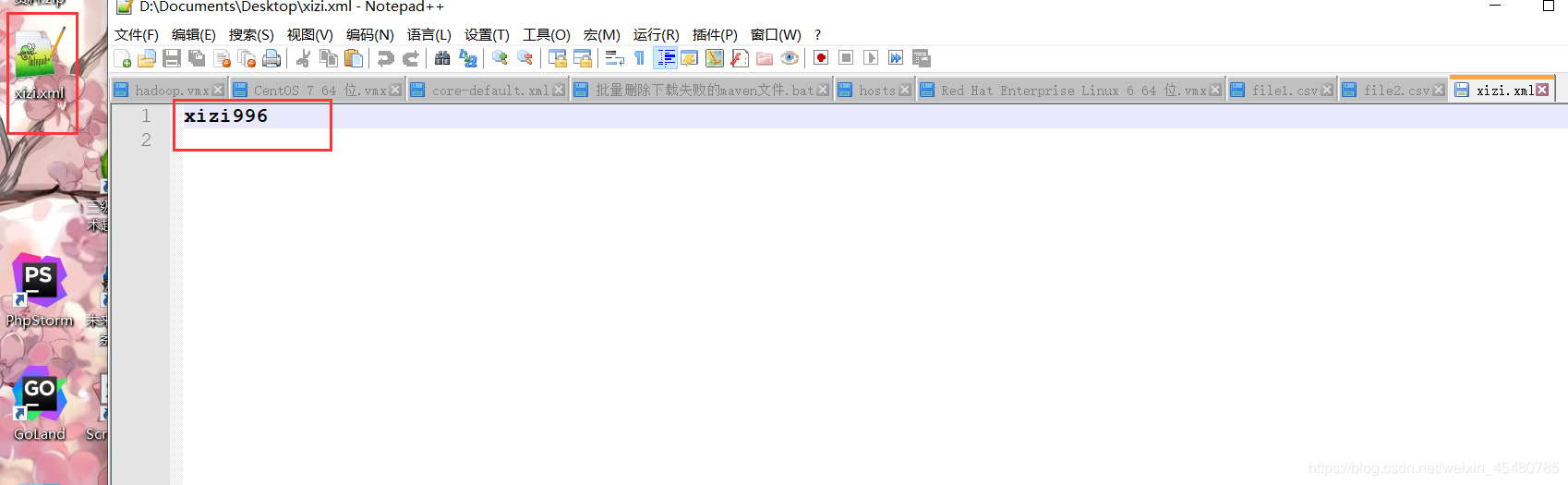

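3. Download a file from HDFS

// Download a file
// Approach 1: copyToLocalFile
@Test
public void testDownload() throws IOException {
    Path source = new Path("/xizi.xml");
    Path des = new Path("D:\\Documents\\Desktop\\");

    // Arguments: delSrc (false keeps the file on HDFS), source, destination,
    // useRawLocalFileSystem (true writes through the raw local file system)
    fileSystem.copyToLocalFile(false, source, des, true);
}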

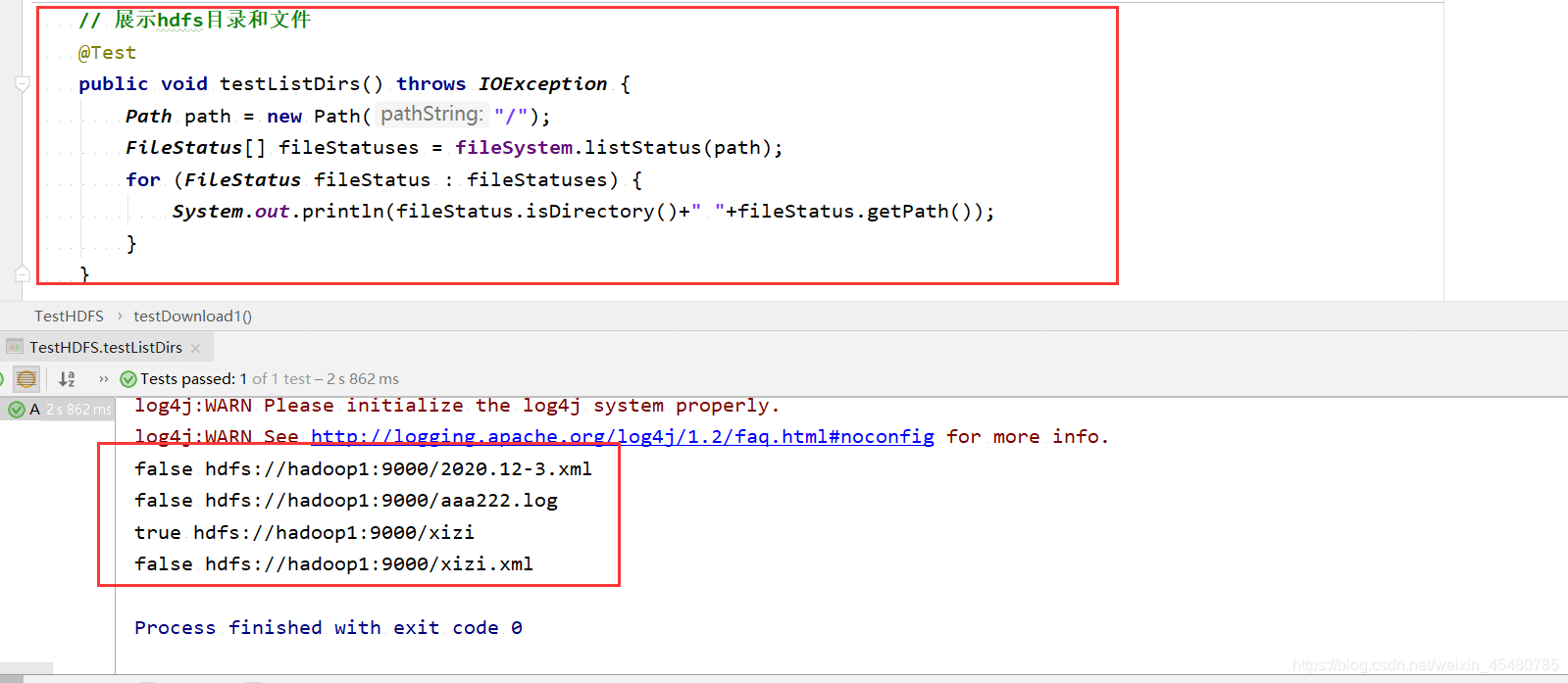

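4. List HDFS directories and files

// List the directories and files directly under a path
// Imports: org.apache.hadoop.fs.FileStatus
@Test
public void testListDirs() throws IOException {
    Path path = new Path("/");
    FileStatus[] fileStatuses = fileSystem.listStatus(path);
    for (FileStatus fileStatus : fileStatuses) {
        // Prints true for a directory and false for a file, followed by the full path
        System.out.println(fileStatus.isDirectory() + " " + fileStatus.getPath());
    }
}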

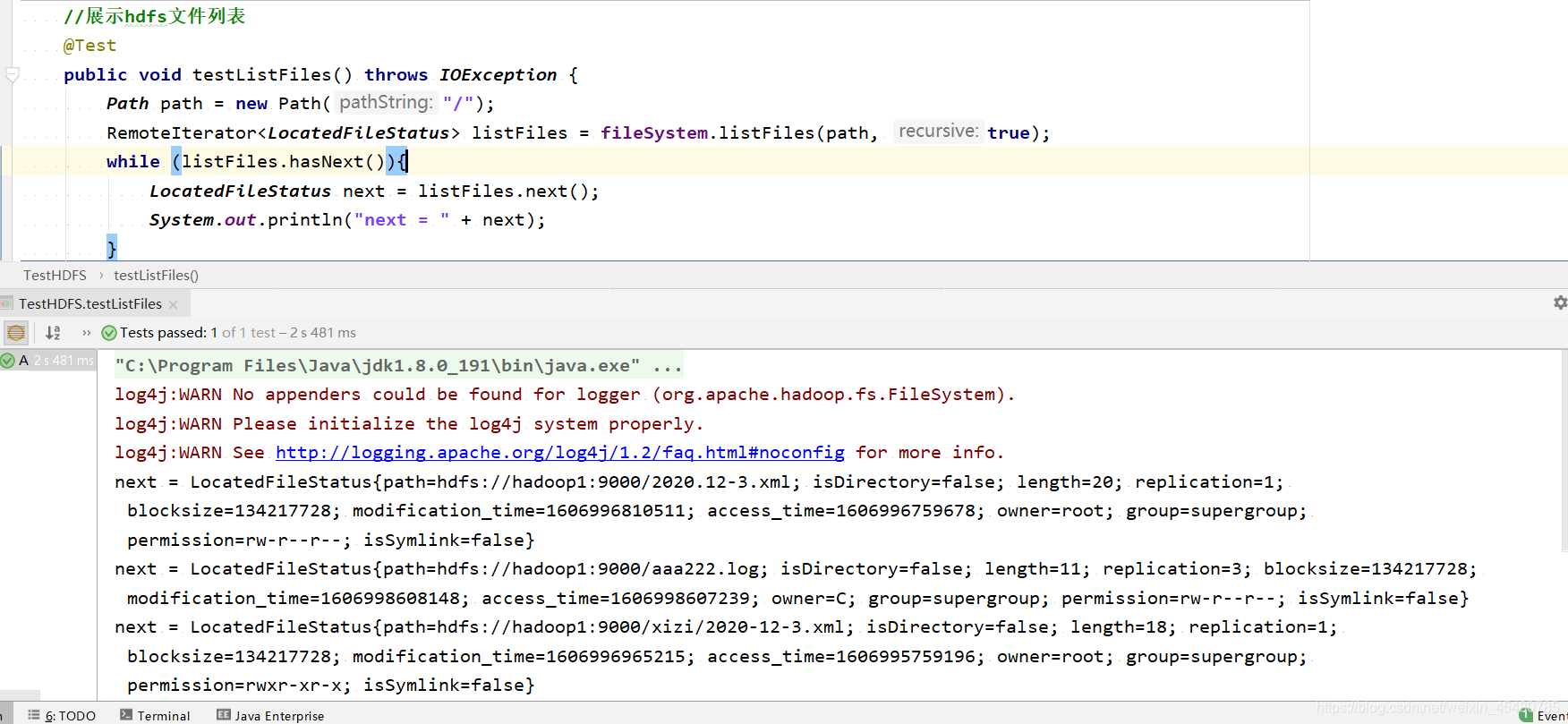

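5. List HDFS files

// List files under a path; the second argument (true) descends into subdirectories
// Imports: org.apache.hadoop.fs.LocatedFileStatus, org.apache.hadoop.fs.RemoteIterator
@Test
public void testListFiles() throws IOException {
    Path path = new Path("/");
    RemoteIterator<LocatedFileStatus> listFiles = fileSystem.listFiles(path, true);
    while (listFiles.hasNext()) {
        LocatedFileStatus next = listFiles.next();
        System.out.println("next = " + next);
    }
}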

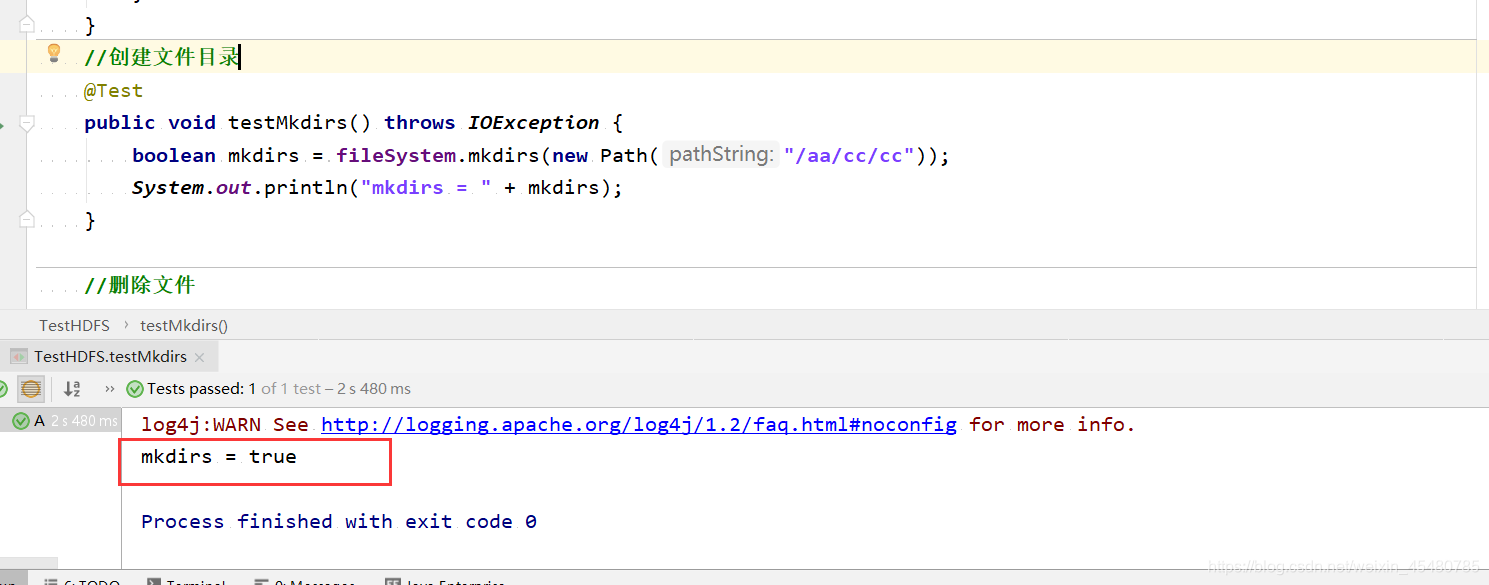
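Sections 4 and 5 differ in scope: listStatus returns the direct children of a path, directories included, while listFiles with true returns only files but from the whole subtree. The sketch below reproduces that contrast on the local file system with java.nio as an analogy. It does not call HDFS, and the class ListingSketch, its methods, and the sample tree are all hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Local-filesystem analogy only; none of this touches HDFS or the Hadoop API.
class ListingSketch {

    // Direct children of dir, directories and files alike (compare listStatus).
    static List<String> listOneLevel(Path dir) {
        try (Stream<Path> children = Files.list(dir)) {
            return children.map(p -> relative(dir, p)).sorted().collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Regular files anywhere below dir, directories omitted (compare listFiles(path, true)).
    static List<String> listFilesRecursively(Path dir) {
        try (Stream<Path> tree = Files.walk(dir)) {
            return tree.filter(Files::isRegularFile)
                    .map(p -> relative(dir, p))
                    .sorted()
                    .collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Path relative to root, with forward slashes on every platform.
    private static String relative(Path root, Path p) {
        return root.relativize(p).toString().replace('\\', '/');
    }

    // Builds a throwaway tree: a.log and aa/cc/b.log under a temp directory.
    static Path sampleTree() {
        try {
            Path root = Files.createTempDirectory("listing-sketch");
            Files.createFile(root.resolve("a.log"));
            Path nested = Files.createDirectories(root.resolve("aa").resolve("cc"));
            Files.createFile(nested.resolve("b.log"));
            return root;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Path root = sampleTree();
        System.out.println(listOneLevel(root));         // prints: [a.log, aa]
        System.out.println(listFilesRecursively(root)); // prints: [a.log, aa/cc/b.log]
    }
}
```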

6. Create a directory on HDFS

// Create a directory, including any missing parent directories
@Test
public void testMkdirs() throws IOException {
    boolean mkdirs = fileSystem.mkdirs(new Path("/aa/cc/cc"));
    System.out.println("mkdirs = " + mkdirs);
}

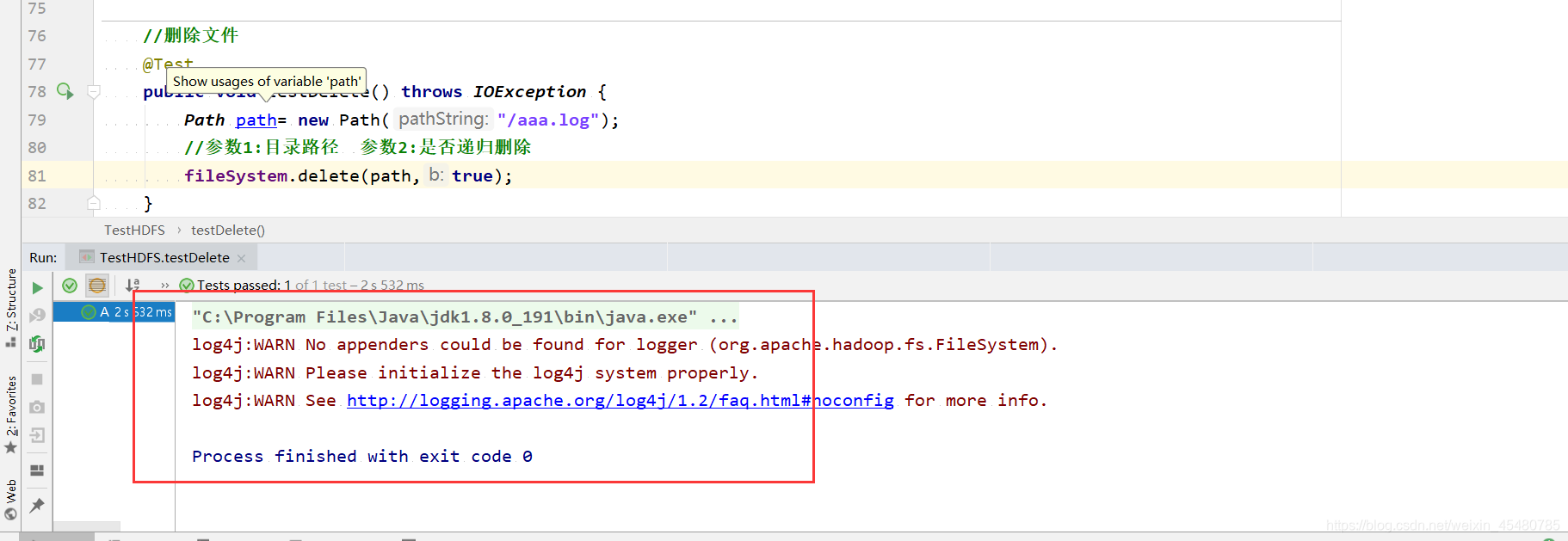

7. Delete a file

// Delete a file
@Test
public void testDelete() throws IOException {
    Path path = new Path("/aaa.log");

    // Argument 1: path to delete; argument 2: whether to delete recursively
    fileSystem.delete(path, true);
}

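8. Precedence of HDFS configuration files

Hadoop resolves configuration in the following order of precedence: values set in the Java client code override the configuration files under etc/ in the Hadoop installation directory, which in turn override the default configuration bundled in the jars under share/.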
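The precedence order can be pictured as three layers where a lookup falls through to the next layer only when the key is missing. The sketch below models that with java.util.Properties defaults. It is an illustration of the lookup order, not how Hadoop's Configuration class is implemented; the class ConfigPrecedenceSketch is hypothetical, and the site-level value of 2 is an assumed example.

```java
import java.util.Properties;

// Hypothetical model of the three configuration layers described above.
class ConfigPrecedenceSketch {

    static Properties layered() {
        // Lowest priority: defaults shipped inside the jars under share/.
        Properties jarDefaults = new Properties();
        jarDefaults.setProperty("dfs.replication", "3");
        jarDefaults.setProperty("dfs.blocksize", "134217728");

        // Middle priority: site files under etc/ (the value 2 is an assumed example).
        Properties siteFiles = new Properties(jarDefaults);
        siteFiles.setProperty("dfs.replication", "2");

        // Highest priority: values set in the Java client code.
        Properties clientCode = new Properties(siteFiles);
        clientCode.setProperty("dfs.replication", "1");
        return clientCode;
    }

    public static void main(String[] args) {
        Properties conf = layered();
        // Set at every layer, so the client code value wins.
        System.out.println(conf.getProperty("dfs.replication")); // prints: 1
        // Set only in the jar defaults, so the lookup falls through to them.
        System.out.println(conf.getProperty("dfs.blocksize"));   // prints: 134217728
    }
}
```

This matches the client example in section 1, where dfs.replication is set to 1 in code and therefore takes effect regardless of what the cluster's site files say.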
