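Deploying OpenVINO on Windows came with quite a few pitfalls, so this post walks through the whole process from the first step, to save me from forgetting it later.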

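The first step is of course to get the YOLOv5 project running. With release 7.0, simply running it downloads whatever it needs automatically, which is very convenient; there is essentially no complicated setup.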

Once it runs, use pip to install the OpenVINO packages that export.py requires:


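openvino: the main OpenVINO package. Installed from pip it provides the OpenVINO Runtime, that is, the inference engine together with the plugins for the different hardware devices, which is what loads and executes a model.

openvino-dev: the developer tools package. It adds tools such as Model Optimizer (the model converter) on top of the runtime, and in YOLOv5 7.0 it is the package that export.py relies on to convert the model to OpenVINO format.

openvino-telemetry: collects and sends usage data from the OpenVINO tools. Its version does not need to match the other two packages.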


Configuring the export step:

First, set the path to your own trained .pt model and the path to the yaml file.

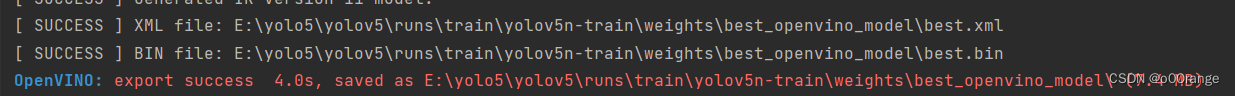

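Then choose the export format you need (openvino here), run the script, and wait for it to finish. If it fails with a DLL import error at this point, the cause is most likely mismatched package versions.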

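The export produces an .xml file and a .bin file. The XML file describes the model's network graph: it holds the information for every layer, such as layer name, layer type, and layer parameters, and how the layers are connected. The BIN file is a binary file containing the model's weights and biases.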
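Because the two files only work as a pair, it can help to verify that both are present before loading the model. The following is a minimal sketch using only the C++17 standard library; the helper names `bin_path_for` and `ir_pair_exists` are hypothetical, and it assumes the .bin file sits next to the .xml file with the same base name, as in the `D://best.xml` / `D://best.bin` pair used later in this post.

```cpp
#include <filesystem>
#include <string>

namespace fs = std::filesystem;

// Derive the .bin path that sits next to an IR .xml file
// (same folder, same base name). Hypothetical helper.
std::string bin_path_for(const std::string& xml_path) {
    fs::path p(xml_path);
    p.replace_extension(".bin");
    return p.generic_string();
}

// True only if both halves of the xml/bin pair exist on disk.
bool ir_pair_exists(const std::string& xml_path) {
    return fs::exists(xml_path) && fs::exists(bin_path_for(xml_path));
}
```

Calling `ir_pair_exists("D:/best.xml")` before `core.read_model(...)` turns a missing or misnamed weights file into an explicit check rather than a load-time failure.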


Configuring OpenVINO and OpenCV: follow the Zhihu article "VS+OpenCV+OpenVINO2022详细配置(更新)" (zhihu.com) to set up the corresponding Visual Studio environment.


Deployment code

```cpp
#include <algorithm>
#include <cmath>
#include <ctime>
#include <iostream>
#include <memory>
#include <string>
#include <vector>

#include <opencv2/dnn.hpp>
#include <opencv2/opencv.hpp>
#include <openvino/openvino.hpp>

using namespace std;

const float SCORE_THRESHOLD = 0.2f;
const float NMS_THRESHOLD = 0.4f;
const float CONFIDENCE_THRESHOLD = 0.4f;

struct Detection {
    int class_id;
    float confidence;
    cv::Rect box;
};

struct Resize {
    cv::Mat resized_image;
    int dw;
    int dh;
};

// Scale the longer side to new_shape, then pad on the right and bottom.
Resize resize_and_pad(cv::Mat& img, cv::Size new_shape) {
    float width = img.cols;
    float height = img.rows;
    float r = float(new_shape.width / max(width, height));
    int new_unpadW = int(round(width * r));
    int new_unpadH = int(round(height * r));
    Resize resize;
    cv::resize(img, resize.resized_image, cv::Size(new_unpadW, new_unpadH), 0, 0, cv::INTER_AREA);

    resize.dw = new_shape.width - new_unpadW;
    resize.dh = new_shape.height - new_unpadH;
    cv::Scalar color = cv::Scalar(100, 100, 100);
    cv::copyMakeBorder(resize.resized_image, resize.resized_image, 0, resize.dh, 0, resize.dw, cv::BORDER_CONSTANT, color);
    return resize;
}

int main() {
    // Step 1. Initialize OpenVINO Runtime core
    ov::Core core;

    // Step 2. Read a model (change the xml and bin paths to your own)
    std::shared_ptr<ov::Model> model = core.read_model("D://best.xml", "D://best.bin");

    // Step 3. Read input image (image path)
    cv::Mat img = cv::imread("D:/p.bmp");
    if (img.empty()) {
        cerr << "Failed to read input image" << endl;
        return 1;
    }
    // Resize image
    Resize res = resize_and_pad(img, cv::Size(640, 640));

    // Step 4. Initialize preprocessing for the model
    ov::preprocess::PrePostProcessor ppp = ov::preprocess::PrePostProcessor(model);
    // Specify input image format
    ppp.input().tensor().set_element_type(ov::element::u8).set_layout("NHWC").set_color_format(ov::preprocess::ColorFormat::BGR);
    // Specify preprocess pipeline to input image without resizing
    ppp.input().preprocess().convert_element_type(ov::element::f32).convert_color(ov::preprocess::ColorFormat::RGB).scale({ 255., 255., 255. });
    // Specify model's input layout
    ppp.input().model().set_layout("NCHW");
    // Specify output results format
    ppp.output().tensor().set_element_type(ov::element::f32);
    // Embed above steps in the graph
    model = ppp.build();
    ov::CompiledModel compiled_model = core.compile_model(model, "CPU");

    // Step 5. Create tensor from image.
    // The input tensor is u8 after preprocessing, so the raw image bytes are passed as-is.
    ov::Tensor input_tensor(compiled_model.input().get_element_type(), compiled_model.input().get_shape(), res.resized_image.data);

    // Step 6. Create an infer request for model inference
    ov::InferRequest infer_request = compiled_model.create_infer_request();
    infer_request.set_input_tensor(input_tensor);

    // Timer for measuring inference time
    clock_t start = clock();
    infer_request.infer();
    clock_t end = clock();
    double elapsed_ms = 1000.0 * (end - start) / CLOCKS_PER_SEC;
    cout << "Detect Time: " << elapsed_ms << " ms" << endl;

    // Step 7. Retrieve inference results
    ov::Tensor output_tensor = infer_request.get_output_tensor();
    ov::Shape output_shape = output_tensor.get_shape();
    float* detections = output_tensor.data<float>();

    // Step 8. Postprocessing including NMS
    std::vector<cv::Rect> boxes;
    vector<int> class_ids;
    vector<float> confidences;
    const int num_classes = static_cast<int>(output_shape[2]) - 5;

    for (size_t i = 0; i < output_shape[1]; i++) {
        float* detection = &detections[i * output_shape[2]];

        float confidence = detection[4];
        if (confidence >= CONFIDENCE_THRESHOLD) {
            float* classes_scores = &detection[5];
            cv::Mat scores(1, num_classes, CV_32FC1, classes_scores);
            cv::Point class_id;
            double max_class_score;
            cv::minMaxLoc(scores, 0, &max_class_score, 0, &class_id);

            if (max_class_score > SCORE_THRESHOLD) {
                confidences.push_back(confidence);
                class_ids.push_back(class_id.x);

                // Convert center/size to top-left/size
                float x = detection[0];
                float y = detection[1];
                float w = detection[2];
                float h = detection[3];
                float xmin = x - (w / 2);
                float ymin = y - (h / 2);

                boxes.push_back(cv::Rect(int(xmin), int(ymin), int(w), int(h)));
            }
        }
    }
    std::vector<int> nms_result;
    cv::dnn::NMSBoxes(boxes, confidences, SCORE_THRESHOLD, NMS_THRESHOLD, nms_result);
    std::vector<Detection> output;
    for (size_t i = 0; i < nms_result.size(); i++) {
        Detection result;
        int idx = nms_result[i];
        result.class_id = class_ids[idx];
        result.confidence = confidences[idx];
        result.box = boxes[idx];
        output.push_back(result);
    }

    // Step 9. Print results and save figure with detections
    for (size_t i = 0; i < output.size(); i++) {
        auto detection = output[i];
        auto box = detection.box;
        auto classId = detection.class_id;
        auto confidence = detection.confidence;
        // Map from the padded 640x640 input back to the original image
        float rx = (float)img.cols / (float)(res.resized_image.cols - res.dw);
        float ry = (float)img.rows / (float)(res.resized_image.rows - res.dh);
        box.x = rx * box.x;
        box.y = ry * box.y;
        box.width = rx * box.width;
        box.height = ry * box.height;
        cout << "Bbox" << i + 1 << ": Class: " << classId << " "
            << "Confidence: " << confidence << " Scaled coords: [ "
            << "cx: " << (float)(box.x + (box.width / 2)) / img.cols << ", "
            << "cy: " << (float)(box.y + (box.height / 2)) / img.rows << ", "
            << "w: " << (float)box.width / img.cols << ", "
            << "h: " << (float)box.height / img.rows << " ]" << endl;
        float xmax = box.x + box.width;
        float ymax = box.y + box.height;
        cv::rectangle(img, cv::Point(box.x, box.y), cv::Point(xmax, ymax), cv::Scalar(0, 255, 0), 3);
        cv::rectangle(img, cv::Point(box.x, box.y - 20), cv::Point(xmax, box.y), cv::Scalar(0, 255, 0), cv::FILLED);
        cv::putText(img, std::to_string(classId), cv::Point(box.x, box.y - 5), cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0));
    }
    cv::imwrite("D:/pres.bmp", img);

    // Show the result
    cv::namedWindow("ImageWindow", cv::WINDOW_NORMAL);
    cv::resizeWindow("ImageWindow", 800, 600);
    cv::imshow("ImageWindow", img);
    cv::waitKey(0);
    cv::destroyAllWindows();
    return 0;
}
```

Normally, if everything before this went fine, the code runs straight away. If it does not, first check whether you are building Debug or Release and whether the libraries in your configuration carry the matching d suffix. Crashes beyond that are generally caused by version mismatches, so look at versions first, and reinstall if nothing else works. Version problems really are a nuisance and not easy to track down. At this point the configuration is essentially complete.
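The scale-and-pad bookkeeping in `resize_and_pad` and the coordinate mapping in Step 9 are easy to get subtly wrong, so it can be useful to check the arithmetic without OpenCV or OpenVINO. The sketch below repeats the same calculation in plain C++; the names `Letterbox`, `Box`, `compute_letterbox`, and `map_to_original` are hypothetical and not part of the listing above, and padding is assumed to be added only on the right and bottom, as in that listing.

```cpp
#include <algorithm>
#include <cmath>

// Sizes for a letterbox that pads only on the right and bottom.
struct Letterbox {
    int new_w;  // width of the resized image before padding
    int new_h;  // height of the resized image before padding
    int dw;     // padding added on the right
    int dh;     // padding added at the bottom
};

struct Box {
    float x, y, w, h;  // top-left corner and size
};

// Scale the longer side of the image to `target` and pad the rest.
Letterbox compute_letterbox(int img_w, int img_h, int target) {
    float r = static_cast<float>(target) / static_cast<float>(std::max(img_w, img_h));
    Letterbox lb;
    lb.new_w = static_cast<int>(std::round(img_w * r));
    lb.new_h = static_cast<int>(std::round(img_h * r));
    lb.dw = target - lb.new_w;
    lb.dh = target - lb.new_h;
    return lb;
}

// Map a box from network-input coordinates back to the original image.
Box map_to_original(const Box& b, const Letterbox& lb, int img_w, int img_h) {
    float rx = static_cast<float>(img_w) / static_cast<float>(lb.new_w);
    float ry = static_cast<float>(img_h) / static_cast<float>(lb.new_h);
    return Box{b.x * rx, b.y * ry, b.w * rx, b.h * ry};
}
```

For a 1280x720 image and a 640 target, the resized image is 640x360 with 280 rows of padding at the bottom, and both scale factors back to the original are 2. Because the padding sits only on the right and bottom, no offset needs to be subtracted before scaling; a centered letterbox would require subtracting half the padding first.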


Below is the OpenCV DNN deployment approach

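OpenCV DNN (Deep Neural Network) is a module of the OpenCV library that provides support for deep neural networks. It lets you load pretrained deep learning models and run inference with them for computer vision tasks such as object detection, image classification, and pose estimation. The module supports models trained in a variety of deep learning frameworks: through a simple API you load a trained model and feed it images for inference. It can run on the CPU or on hardware with GPU acceleration, which makes real-time image processing and analysis possible, and it gives developers a quick and convenient way to use deep learning models in computer vision work.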
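Before an image is fed to a network, it is usually converted from OpenCV's interleaved BGR bytes into a normalized, planar RGB float blob. In OpenCV DNN this is what `cv::dnn::blobFromImage` does when called with a scale factor of 1/255 and `swapRB=true`. The sketch below shows that conversion in plain C++ without any OpenCV dependency, purely to illustrate the memory layout; the function name `bgr_hwc_to_rgb_chw` is hypothetical, and the image is assumed to be already resized to the network input size.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Convert an interleaved BGR u8 image (HWC) into a planar RGB float
// blob (CHW) with values scaled to [0, 1].
std::vector<float> bgr_hwc_to_rgb_chw(const std::vector<std::uint8_t>& bgr,
                                      int width, int height) {
    const std::size_t plane = static_cast<std::size_t>(width) * static_cast<std::size_t>(height);
    std::vector<float> blob(3 * plane);
    for (std::size_t i = 0; i < plane; ++i) {
        blob[0 * plane + i] = bgr[3 * i + 2] / 255.0f;  // R plane
        blob[1 * plane + i] = bgr[3 * i + 1] / 255.0f;  // G plane
        blob[2 * plane + i] = bgr[3 * i + 0] / 255.0f;  // B plane
    }
    return blob;
}
```

The output holds all red values first, then all green, then all blue, which is the NCHW order (with N = 1) that YOLOv5 models exported to ONNX expect.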


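Here is the reference implementation used: Hexmagic/ONNX-yolov5: deploy yolov5 in c++ (github.com). The original author set it up on Linux and we are on Windows, so the next step is to run CMake on its CMakeLists.txt, which generates a set of files; then open the generated solution in Visual Studio. Note that you can also simply copy the source code into a new project of your own, in which case the CMakeLists.txt is not needed.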

Once the solution is open there are four projects. Three of them can be removed, leaving only main, which then needs to be configured.


Essentially only OpenCV needs to be configured; after that, change the paths and it is ready to run. main.cpp:

```cpp
#include <ctime>
#include <fstream>
#include <iostream>
#include <string>
#include <vector>

#include "loguru.hpp"
#include "detector.h"

using namespace cv;
using namespace std;

/*
main
*/
int main(int argc, char *argv[])
{
    // Default parameters
    std::cout << "OpenCV version : " << CV_VERSION << std::endl;
    string model_path = "D://best.onnx";
    string img_path = "D://p.bmp";
    string out_path = "E:/yolo5/ONNX-yolov5-master/assets/p.bmp";
    //string model_path = "3_best.onnx";
    //string img_path = "data/images/zidane.jpg";
    loguru::init(argc, argv);
    Config config = {0.25f, 0.45f, model_path, "E://yolo5//ONNX-yolov5-master//data//coco.names", Size(640, 640), false};
    LOG_F(INFO, "Start main process");
    Detector detector(config);
    LOG_F(INFO, "Load model done ..");
    Mat img = imread(img_path, IMREAD_COLOR);
    LOG_F(INFO, "Read image from %s", img_path.c_str());

    // Time the detection call
    clock_t start = clock();
    Detection detection = detector.detect(img);
    clock_t end = clock();
    double elapsed_ms = 1000.0 * (end - start) / CLOCKS_PER_SEC;
    cout << "Detect Time: " << elapsed_ms << " ms" << endl;

    LOG_F(INFO, "Detect process finished");
    Colors cl = Colors();
    detector.postProcess(img, detection, cl);
    LOG_F(INFO, "Post process done save image to %s", out_path.c_str());
    cv::imwrite(out_path, img);
    std::cout << "detect Image And Save to " << out_path << endl;
    return 0;
}
```

That basically wraps up the OpenCV and OpenVINO deployment process. Most problems can be traced back to versions, since the deployment code itself has been tested many times without issues. Thanks~
