Hive On Hbase

首先让我们创建一张表:并且导入数据

create external table t_employee_hbase(empno INT,

ename STRING,

job STRING,

mgr INT,

hiredate TIMESTAMP,

sal DECIMAL(7,2),

comm DECIMAL(7,2),

deptno INT)

STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

WITH SERDEPROPERTIES("hbase.columns.mapping" = ":key,cf1:name,cf1:job,cf1:mgr,cf1:hiredate,cf1:sal,cf1:comm,cf1:deptno")

TBLPROPERTIES("hbase.table.name" = "baizhi:t_employee");

查询表数据信息

0: jdbc:hive2://CentOS:10000> select empno,ename,sal,deptno from t_employee_hbase;

+--------+-----------+-------+---------+--+

| empno | ename | sal | deptno |

+--------+-----------+-------+---------+--+

| 1 | zhangsan | NULL | 10 |

| 2 | lisi | 5000 | 20 |

+--------+-----------+-------+---------+--+

2 rows selected (0.447 seconds)

0: jdbc:hive2://CentOS:10000> select deptno,count(*) from t_employee_hbase group by deptno;

以上操作都是读操作,因此可以正常运行,当用户执行写操作的时候,将数据写入Hbase

0: jdbc:hive2://CentOS:10000> insert overwrite table t_employee_hbase select empno,ename,job,mgr,hiredate,sal,comm,deptno from t_employee;

系统会抛出一下错误。

ERROR : Ended Job = job_1578881006622_0016 with errors

Error: Error while processing statement: FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.mr.MapRedTask (state=08S01,code=2)

0: jdbc:hive2://CentOS:10000>

需要替换hive-hbase-handler-1.2.2.jar,问题参考: https://blog.csdn.net/weixin_38231448/article/details/103935613

UDF&UDTF

UDF函数其实就是一个简单的函数,执行过程就是在Hive转换成MapReduce程序后,执行java方法,类似于像MapReduce执行过程中加入一个插件,方便扩展。目前Hive除去一些内置的函数以外,还提供了一些内置的函数的扩扎接口:

UDF:针对单行数据操作,需要继承UDF

UDTF:操作一个数据行,产生多个数据行或者是多个列,需要用户继承GenericUDTF

UDAF:操作多个数据行,产生一个数据行,主要用以聚合统计,需要继承AbstractGenericUDAFResolver

目前由于Hive中已经提供了强大的聚合函数,本篇文章就UDF和UDTF实现给出以下两个案例:

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>1.2.2</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>2.9.2</version>

</dependency>

UDF

package com.jiangzz;

import org.apache.hadoop.hive.ql.exec.UDF;

public class DeptUDF extends UDF {

public String evaluate(String value){

return value+"部门";

}

}

这里的evaluate方法的参数和返回值需要用户根据自己的需求定义

- 只能输入一条记录当中的数据,同时返回一条处理结果。

- 属于最常见的自定义函数,像cos,sin,substring,indexof等均是如此要求

代码实现

- 功能要求:实现当输入字符串超过2个字符的时候,多余的字符以”…”来表示。

- 如“12”则返回“12”,如“123”返回“12…”

- 自定义类、继承UDF、重写evaluate方法已在代码中体现

import org.apache.hadoop.hive.ql.exec.UDF;

/*

* 功能:实现当输入字符串超过2个字符的时候,多余的字符以"..."来表示。

* 输入/输出:* 如“12”则返回“12”,如“123”返回“12..."

*/

public class ValueMaskUDF extends UDF{

public String evaluate(String input,int maxSaveStringLength,String replaceSign) {

if(input.length()<=maxSaveStringLength){

return input;

}

return input.substring(0,maxSaveStringLength)+replaceSign;

}

public static void main(String[] args) {

System.out.println(new ValueMaskUDF().evaluate("辽宁省铁岭市",2,"..."));;

}

}

UDAF

- 接受输入N条记录当中的数据,同时返回一条处理结果。

- 属于最常见的自定义函数,像count,sum,avg,max等均是如此要求

- 输入:

- 输出:

- 代码实现:

import java.util.HashMap;

import java.util.Map;

import java.util.Set;

import org.apache.hadoop.hive.ql.exec.UDAF;

import org.apache.hadoop.hive.ql.exec.UDAFEvaluator;

import org.apache.log4j.Logger;

/**

* 实现多条数据合并成一条数据

*/

// 主类继承UDAF

public class StudentScoreAggUDAF extends UDAF {

// 日志对象初始化

public static Logger logger = Logger.getLogger(StudentScoreAggUDAF.class);

// 静态类实现UDAFEvaluator

public static class Evaluator implements UDAFEvaluator {

// 设置成员变量,存储每个统计范围内的总记录数

private Map<String, String> courseScoreMap;

//初始化函数,map和reduce均会执行该函数,起到初始化所需要的变量的作用

public Evaluator() {

init();

}

// 初始化函数间传递的中间变量

public void init() {

courseScoreMap = new HashMap<String, String>();

}

//map阶段,返回值为boolean类型,当为true则程序继续执行,当为false则程序退出

public boolean iterate(String course, String score) {

if (course == null || score == null) {

return true;

}

courseScoreMap.put(course, score);

return true;

}

/**

* 类似于combiner,在map范围内做部分聚合,将结果传给merge函数中的形参mapOutput

* 如果需要聚合,则对iterator返回的结果处理,否则直接返回iterator的结果即可

*/

public Map<String, String> terminatePartial() {

return courseScoreMap;

}

// reduce 阶段,用于逐个迭代处理map当中每个不同key对应的 terminatePartial的结果

public boolean merge(Map<String, String> mapOutput) {

this.courseScoreMap.putAll(mapOutput);

return true;

}

// 处理merge计算完成后的结果,即对merge完成后的结果做最后的业务处理

public String terminate() {

return courseScoreMap.toString();

}

}

}

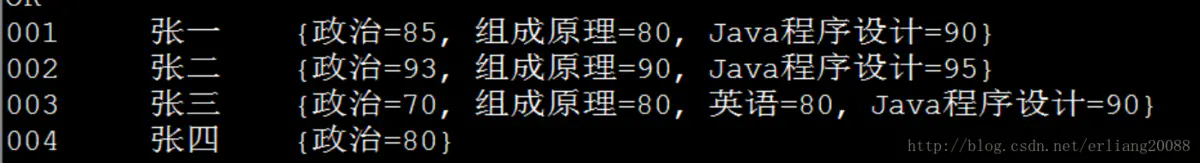

- 测试:

select id,username,score_agg(course,score) from student_score group by id,username;

- 自定义udaf实现max

import org.apache.hadoop.hive.ql.exec.UDAF;

import org.apache.hadoop.hive.ql.exec.UDAFEvaluator;

import org.apache.hadoop.io.IntWritable;

public class Maximum extends UDAF {

public static class MaximumIntUDAFEvaluator implements UDAFEvaluator {

private IntWritable result;

public void init() {

result = null;

}

public boolean iterate(IntWritable value) {

if (value == null) {

return true;

}

if (result == null) {

result = new IntWritable(value.get());

} else {

result.set(Math.max(result.get(), value.get()));

}

return true;

}

public IntWritable terminatePartial() {

return result;

}

public boolean merge(IntWritable other) {

return iterate(other);

}

public IntWritable terminate() {

return result;

}

}

}

UDTF

- 要解决一行输入多行输出的问题,问题的应用场景不少

- 用udtf解决一行输入多行输出的不多,往往被lateral view explode+udf等替代实现,比直接用udtf会更简单、直接一些

package com.jiangzz;

import org.apache.hadoop.hive.ql.exec.UDFArgumentException;

import org.apache.hadoop.hive.ql.metadata.HiveException;

import org.apache.hadoop.hive.ql.udf.generic.GenericUDTF;

import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspector;

import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspectorFactory;

import org.apache.hadoop.hive.serde2.objectinspector.StructObjectInspector;

import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorFactory;

import java.util.ArrayList;

import java.util.Arrays;

import java.util.List;

public class UserUDTF extends GenericUDTF {

@Override

public StructObjectInspector initialize(StructObjectInspector argOIs) throws UDFArgumentException {

List<ObjectInspector> objectInspectors=new ArrayList<ObjectInspector>();

objectInspectors.add(PrimitiveObjectInspectorFactory.javaIntObjectInspector);

objectInspectors.add(PrimitiveObjectInspectorFactory.javaStringObjectInspector);

objectInspectors.add(PrimitiveObjectInspectorFactory.javaBooleanObjectInspector);

//返回的类型参数

return ObjectInspectorFactory.getStandardStructObjectInspector(Arrays.asList("id","name","sex"),objectInspectors);

}

public void process(Object[] args) throws HiveException {

String value = args[0].toString();

String sep= args[1].toString();

String[] tokens = value.split(sep);

forward(Arrays.asList(Integer.parseInt(tokens[0]),tokens[1],Boolean.valueOf(tokens[2])));

}

public void close() throws HiveException {

}

}

代码打包

将代码进行package打包 hive-function-1.0-SNAPSHOT.jar创建以下function.sql文件进行测试

create temporary function dept_fun as 'com.jiangzz.DeptUDF';

create temporary function user_fun as 'com.jiangzz.UserUDTF';

select dept_fun(20);

select user_fun('1,zhangsan,true',',');

[root@CentOS ~]# hive -f function.sql --auxpath /root/hive-function-1.0-SNAPSHOT.jar

import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspector;

import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspectorFactory;

import org.apache.hadoop.hive.serde2.objectinspector.StructObjectInspector;

import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorFactory;

import java.util.ArrayList;

import java.util.Arrays;

import java.util.List;

public class UserUDTF extends GenericUDTF {

@Override

public StructObjectInspector initialize(StructObjectInspector argOIs) throws UDFArgumentException {

List<ObjectInspector> objectInspectors=new ArrayList<ObjectInspector>();

objectInspectors.add(PrimitiveObjectInspectorFactory.javaIntObjectInspector);

objectInspectors.add(PrimitiveObjectInspectorFactory.javaStringObjectInspector);

objectInspectors.add(PrimitiveObjectInspectorFactory.javaBooleanObjectInspector);

//返回的类型参数

return ObjectInspectorFactory.getStandardStructObjectInspector(Arrays.asList("id","name","sex"),objectInspectors);

}

public void process(Object[] args) throws HiveException {

String value = args[0].toString();

String sep= args[1].toString();

String[] tokens = value.split(sep);

forward(Arrays.asList(Integer.parseInt(tokens[0]),tokens[1],Boolean.valueOf(tokens[2])));

}

public void close() throws HiveException {

}

}

代码打包

将代码进行package打包 hive-function-1.0-SNAPSHOT.jar创建以下function.sql文件进行测试

create temporary function dept_fun as 'com.jiangzz.DeptUDF';

create temporary function user_fun as 'com.jiangzz.UserUDTF';

select dept_fun(20);

select user_fun('1,zhangsan,true',',');

[root@CentOS ~]# hive -f function.sql --auxpath /root/hive-function-1.0-SNAPSHOT.jar

更多Function请参考:https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF

5162

5162

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?