Data used: the CIFAR-10 (`test_batch`) and CIFAR-100 (`test`) test-set files, in their binary (pickled) form.

Link: https://pan.baidu.com/s/1Faw9C3NOdqEcg7ZrlP9Hzg

Extraction code: yh4k

1. Display and save CIFAR-10 images

# -*- coding: utf-8 -*-
import os
import pickle

import cv2
import imageio
import matplotlib.pyplot as plt
import numpy as np


# Unpickle a CIFAR batch file and return the resulting dictionary
def unpickle(file):
    with open(file, 'rb') as fo:
        # encoding='latin1' lets Python 3 read a pickle written by Python 2
        return pickle.load(fo, encoding='latin1')


test_file = "test_batch"

# Display one test-set image
dict_test = unpickle(test_file)
x_test = dict_test.get("data")    # uint8 array of shape (10000, 3072)
y_test = dict_test.get("labels")  # list of 10000 class indices (0-9)

# Each row stores 1024 red, then 1024 green, then 1024 blue values
image_m = np.reshape(x_test[1], (3, 32, 32))
r = image_m[0, :, :]
g = image_m[1, :, :]
b = image_m[2, :, :]
img23 = cv2.merge([r, g, b])  # stack the channels into a 32x32x3 RGB image

plt.figure()
plt.imshow(img23)
plt.show()

# Save test-set images
testXtr = unpickle(test_file)
os.makedirs('datatest/10', exist_ok=True)  # the output folder must exist
for i in range(100):  # use range(10000) to save the whole test set
    img = np.reshape(testXtr['data'][i], (3, 32, 32))
    img = img.transpose(1, 2, 0)  # (channel, row, col) -> (row, col, channel)
    # File name pattern: <label>_<index>.jpg
    picName = 'datatest/10/' + str(testXtr['labels'][i]) + '_' + str(i) + '.jpg'
    imageio.imsave(picName, img)

print("test_batch loaded.")
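The reshape-and-transpose step is the part that most often goes wrong, so here is a minimal sketch of it that runs without the dataset. It relies on the documented CIFAR layout (each 3072-value row holds 1024 red, then 1024 green, then 1024 blue values, each channel in row-major order). The helper name `row_to_image` and the synthetic all-red row are assumptions made for illustration, not part of the original script.

```python
import numpy as np


def row_to_image(row):
    """Turn one flat CIFAR row (3072 values) into a 32x32x3 uint8 image.

    Hypothetical helper used only for this illustration.
    """
    row = np.asarray(row, dtype=np.uint8)
    if row.size != 3 * 32 * 32:
        raise ValueError("expected 3072 values, got %d" % row.size)
    # (channel, row, col) -> (row, col, channel), the layout imshow expects
    return row.reshape(3, 32, 32).transpose(1, 2, 0)


# Synthetic row: red channel fully on, green and blue off
fake_row = np.concatenate([
    np.full(1024, 255, dtype=np.uint8),  # red plane
    np.zeros(1024, dtype=np.uint8),      # green plane
    np.zeros(1024, dtype=np.uint8),      # blue plane
])
image = row_to_image(fake_row)
print(image.shape)            # (32, 32, 3)
print(image[0, 0].tolist())   # [255, 0, 0]
```

If every pixel comes out pure red, the channel order is right. Reshaping directly to `(32, 32, 3)` without the transpose would instead interleave values from a single channel and produce a scrambled picture.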

2. Display and save CIFAR-100 images

import os
import pickle

import imageio
import matplotlib.pyplot as plt
import numpy as np

TO_ROOT = './datatest/100'


def unpickle(file):
    with open(file, 'rb') as fo:
        return pickle.load(fo, encoding='latin1')


# Load the dataset
test_dict = unpickle('test')
# Reshape (N, 3072) -> (N, 3, 32, 32), then move channels last: (N, 32, 32, 3)
data = np.array(test_dict['data']).reshape(-1, 3, 32, 32).transpose(0, 2, 3, 1)
label = test_dict['fine_labels']

# Display the selected image
numofimg = 25  # image index
img = data[numofimg]  # already 32x32x3, so no further reshape or transpose is needed
plt.figure(1)
plt.imshow(img)
plt.show()
print(label[numofimg])

# Export all images
os.makedirs(TO_ROOT, exist_ok=True)  # the output folder must exist
for i in range(data.shape[0]):
    # File name pattern: <fine label>_<index>.jpg
    picName = os.path.join(TO_ROOT, str(label[i]) + '_' + str(i) + '.jpg')
    imageio.imsave(picName, data[i])
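Both export loops write into a folder that has to exist before the first image is saved, and both name files `<label>_<index>.jpg`. The sketch below wraps those two details in one helper. The name `make_pic_name` and the `ext` parameter are hypothetical, and the demo writes into a temporary folder rather than `datatest/`.

```python
import os
import tempfile


def make_pic_name(out_dir, label, index, ext=".jpg"):
    """Return '<out_dir>/<label>_<index><ext>', creating out_dir if needed.

    Hypothetical helper, not part of the original scripts.
    """
    os.makedirs(out_dir, exist_ok=True)
    return os.path.join(out_dir, "{}_{}{}".format(label, index, ext))


# Demo in a temporary folder so nothing is written next to the script
demo_root = tempfile.mkdtemp()
demo_name = make_pic_name(os.path.join(demo_root, "datatest", "100"), 49, 25)
print(os.path.basename(demo_name))  # 49_25.jpg
```

Passing `ext=".png"` is one option if JPEG compression artifacts matter, since JPEG is lossy and the images are only 32x32 pixels.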

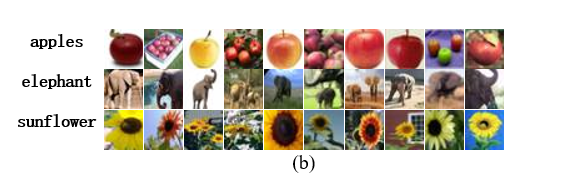

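Note: if your paper uses the CIFAR-10 and CIFAR-100 datasets, you can extract the images as shown here and then insert them into a table in Word to produce a figure like the one above.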
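As an alternative to arranging the pictures by hand in a Word table, the images can be tiled into a single array in code. This is only a sketch under stated assumptions: the function name `make_grid`, the white 2-pixel padding, and the all-black demo images are illustrative choices, and real use would pass in a slice of the `(N, 32, 32, 3)` array built by the scripts.

```python
import numpy as np


def make_grid(images, n_cols, pad=2):
    """Tile N images of shape (H, W, C) into one padded grid image.

    Hypothetical helper; empty cells and padding are filled with 255 (white).
    """
    images = np.asarray(images)
    n, h, w, c = images.shape
    n_rows = (n + n_cols - 1) // n_cols  # ceiling division
    grid = np.full(
        (n_rows * (h + pad) + pad, n_cols * (w + pad) + pad, c),
        255,
        dtype=images.dtype,
    )
    for idx in range(n):
        row, col = divmod(idx, n_cols)
        top = pad + row * (h + pad)
        left = pad + col * (w + pad)
        grid[top:top + h, left:left + w] = images[idx]
    return grid


# Six synthetic black 32x32 RGB images arranged in 3 columns (2 rows)
demo = np.zeros((6, 32, 32, 3), dtype=np.uint8)
grid = make_grid(demo, n_cols=3)
print(grid.shape)  # (70, 104, 3)
```

The resulting array can be saved with the same `imageio.imsave` call the scripts use for single images.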
