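Spark SQL is the Spark module for structured data processing. Unlike the Spark RDD API, the Spark SQL API gives the Spark engine more information, such as the structure of the data and the computation being performed. Internally, Spark uses this information to optimize and tune jobs. There are several ways to interact with Spark SQL, including the Dataset API and SQL, and the two can be mixed freely. One use of Spark SQL is to execute SQL queries. Spark SQL can also read data from an existing Hive installation. When SQL is run from within another programming language, the results are returned as a Dataset/DataFrame. You can also interact with the SQL interface through the command line or over JDBC/ODBC.

A Dataset is a distributed collection of data. It is an interface added in Spark 1.6 that combines the benefits of RDDs (strong typing, the ability to use powerful lambda functions) with the benefits of the Spark SQL execution engine. A Dataset can be constructed from JVM objects and then manipulated with transformations such as map, flatMap and filter. The Dataset API is available in Scala and Java; Python support for Dataset is not yet complete. A DataFrame is a Dataset organized into named columns. It is conceptually equivalent to a table in a relational database. DataFrames can be constructed from many sources, such as structured data files, Hive tables or external databases. In Scala, a DataFrame is simply a Dataset[Row].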
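As a rough illustration of mixing the two APIs, the sketch below filters with SQL and then continues with typed Dataset operations. The `Person` type, the `people` view name and the `namesOlderThan` helper are assumptions made up for this example; the SparkSession it relies on is introduced in the next section.

```scala
import org.apache.spark.sql.SparkSession

object MixedApiExample {
  // Hypothetical record type, used only for this illustration
  case class Person(id: Int, name: String, age: Int)

  // Filters with SQL, then continues with typed Dataset operations
  def namesOlderThan(spark: SparkSession, minAge: Int): Seq[String] = {
    import spark.implicits._
    val people = Seq(Person(1, "zhangsan", 18), Person(2, "wangwu", 28)).toDS()
    people.createOrReplaceTempView("people")

    spark.sql(s"SELECT * FROM people WHERE age > $minAge") // SQL step, returns a DataFrame
      .as[Person]                                          // back to a typed Dataset
      .map(_.name.toUpperCase)                             // Dataset API step with a lambda
      .collect()
      .toSeq
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("mixed-api").master("local[*]").getOrCreate()
    namesOlderThan(spark, 20).foreach(println)
    spark.stop()
  }
}
```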

SparkSession

- The entry point to all functionality in Spark is the SparkSession class. To create a basic SparkSession, just use SparkSession.builder():

Dependency

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.4.3</version>

</dependency>

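Driver program

import org.apache.spark.sql.SparkSession

// 1. Create the SparkSession
val spark = SparkSession.builder()
  .appName("hellosql")
  .master("local[10]")
  .getOrCreate()

// 2. Import the implicit conversions, mainly used to convert RDDs and collections to DataFrame/Dataset
import spark.implicits._

// Reduce log output to fatal errors only
spark.sparkContext.setLogLevel("FATAL")

// Stop Spark
spark.stop()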

Creating a Dataset/DataFrame

Dataset

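Datasets are similar to RDDs. However, instead of using Java serialization or Kryo, they use a specialized Encoder to serialize objects for processing or for transmission over the network. Both encoders and standard serialization turn an object into bytes, but encoders are dynamically generated code and use a format that lets Spark perform many operations, such as filtering, sorting and hashing, without deserializing the bytes back into an object.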
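To make the Encoder idea a little more concrete, here is a minimal sketch that builds an encoder explicitly and prints the schema it derives. Normally `import spark.implicits._` supplies this encoder implicitly; the `EncoderExample` object and its `fieldNames` helper are names invented for this illustration.

```scala
import org.apache.spark.sql.{Encoder, Encoders}

object EncoderExample {
  case class Person(id: Int, name: String, age: Int, sex: Boolean)

  // Explicit encoder for a case class (any Product type)
  val personEncoder: Encoder[Person] = Encoders.product[Person]

  // Column names that the encoder derives from the case class fields
  def fieldNames: Seq[String] = personEncoder.schema.fieldNames.toSeq

  def main(args: Array[String]): Unit = {
    // Prints the schema as a tree, in the same layout as printSchema()
    personEncoder.schema.printTreeString()
  }
}
```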

case-class

import org.apache.spark.sql.{Dataset, SparkSession}

case class Person(id: Int, name: String, age: Int, sex: Boolean)

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    val dataset: Dataset[Person] =
      List(Person(1, "zhangsan", 18, true), Person(2, "wangwu", 28, true)).toDS()
    dataset.select($"id", $"name").show()

    // Stop Spark
    spark.stop()
  }
}

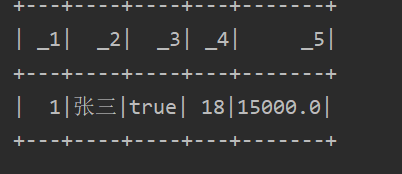

运行结果:

Tuple

import org.apache.spark.sql.{Dataset, SparkSession}

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    val dataset: Dataset[(Int, String, Int, Boolean)] =
      List((1, "zhangsan", 18, true), (2, "wangwu", 28, true)).toDS()

    // Tuple fields are exposed as columns _1, _2, ...
    dataset.select($"_1", $"_2").show()
    dataset.selectExpr("_1 as id", "_2 as name", "(_3 * 10) as age").show()

    // Stop Spark
    spark.stop()
  }
}

JSON data

{"name":"张三","age":18}

{"name":"lisi","age":28}

{"name":"wangwu","age":38}

// The case class must match the JSON fields; JSON integers are inferred as Long
case class Person(name: String, age: Long)

val dataset = spark.read.json("D:///Persion.json").as[Person]

dataset.show()

- From an RDD

Tuple

import org.apache.spark.sql.SparkSession

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    val userRDD = spark.sparkContext.makeRDD(List((1, "张三", true, 18, 15000.0)))
    userRDD.toDS().show()

    // Stop Spark
    spark.stop()
  }
}

case-class

case class User(id:Int,name:String,sex:Boolean,age:Int,gongz:Double)

val userRDD = spark.sparkContext.makeRDD(List(User(1,"张三",true,18,15000.00)))

userRDD.toDS().show()

DataFrame

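- A DataFrame is a Dataset organized into named columns. It is conceptually equivalent to a table in a relational database. DataFrames can be constructed from many sources, such as structured data files, Hive tables or external databases. A DataFrame is simply a Dataset[Row].

JSON file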

val frame = spark.read.json("file:///f:/person.json")

frame.show()

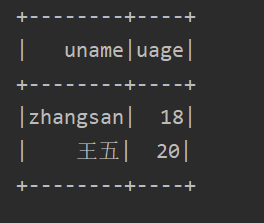

case-class

import org.apache.spark.sql.SparkSession

case class Person(name: String, age: Int)

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    // toDF can rename the columns while converting
    List(Person("zhangsan", 18), Person("王五", 20)).toDF("uname", "uage").show()

    // Stop Spark
    spark.stop()
  }
}

Tuple

List(("zhangsan",18),("王五",20)).toDF("name","age").show()

- Converting from an RDD

Row

import org.apache.spark.sql.{Row, SparkSession}
import org.apache.spark.sql.types.StructType

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    // An RDD[Row] carries no type information, so the schema is given explicitly
    val userRDD = spark.sparkContext.makeRDD(List((1, "张三", true, 18, 15000.0)))
      .map(t => Row(t._1, t._2, t._3, t._4, t._5))

    val schema = new StructType()
      .add("id", "int")
      .add("name", "string")
      .add("sex", "boolean")
      .add("age", "int")
      .add("salary", "double")

    spark.createDataFrame(userRDD, schema).show()

    // Stop Spark
    spark.stop()
  }
}

JavaBean

import org.apache.spark.sql.SparkSession

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    val userRDD = spark.sparkContext.makeRDD(List(new User(1, "张三", true, 18, 15000.0)))
    spark.createDataFrame(userRDD, classOf[User]).show()

    // Stop Spark
    spark.stop()
  }
}

Note: User here must be a JavaBean. If it is a Scala class, you have to provide the getXxx methods yourself (usually not worth the effort).

A JavaBean is simply a class with private fields, public getters/setters, and whatever constructors you need.

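import java.io.Serializable;

public class User implements Serializable {
    private Integer id;
    private String name;
    private Boolean sex;
    private Integer age;
    private Double salary;

    public User(Integer id, String name, Boolean sex, Integer age, Double salary) {
        this.id = id;
        this.name = name;
        this.sex = sex;
        this.age = age;
        this.salary = salary;
    }

    // Getters (setters follow the same pattern and are omitted here)
    public Integer getId() { return id; }
    public String getName() { return name; }
    public Boolean getSex() { return sex; }
    public Integer getAge() { return age; }
    public Double getSalary() { return salary; }
}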
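For the Scala-class case mentioned in the note above, one possible way to get the getXxx methods without writing them by hand is the standard library annotation `@BeanProperty`. The `ScalaUser` class below is a hypothetical counterpart of the Java bean, sketched for illustration only. I would expect it to be usable with `spark.createDataFrame(rdd, classOf[ScalaUser])`, but that is an assumption worth checking on your Spark version.

```scala
import scala.beans.BeanProperty

// Hypothetical Scala counterpart of the Java User bean.
// @BeanProperty generates getId/setId, getName/setName, and so on.
class ScalaUser(@BeanProperty var id: Int,
                @BeanProperty var name: String,
                @BeanProperty var sex: Boolean,
                @BeanProperty var age: Int,
                @BeanProperty var salary: Double) extends Serializable

object ScalaUserExample {
  def main(args: Array[String]): Unit = {
    val user = new ScalaUser(1, "zhangsan", true, 18, 15000.0)
    // The generated Java-style getters are callable like any other method
    println(s"${user.getId} ${user.getName} ${user.getSalary}")
  }
}
```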

case-class

case class User(id:Int,name:String,sex:Boolean,age:Int,salary:Double)

val userRDD = spark.sparkContext.makeRDD(List(User(1,"张三",true,18,15000.0)))

spark.createDataFrame(userRDD).show()

Tuple

val userRDD = spark.sparkContext.makeRDD(List((1,"张三",true,18,15000.0)))

spark.createDataFrame(userRDD).show()

Dataset/DataFrame API operations

Sample data

1,Michael,false,29,2000

5,Lisa,false,19,1000

3,Justin,true,19,1000

2,Andy,true,30,5000

4,Kaine,false,20,5000

Convert the text data into a DataFrame

import org.apache.spark.rdd.RDD
import org.apache.spark.sql.SparkSession

case class User01(id: Int, name: String, sex: Boolean, age: Int, salary: Double)

object sql {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkSession
    val spark = SparkSession.builder()
      .appName("hellosql")
      .master("local[10]")
      .getOrCreate()

    // 2. Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
    import spark.implicits._
    spark.sparkContext.setLogLevel("FATAL")

    // Read the local file line by line through the session's SparkContext.
    // (A separately created SparkContext, e.g. new SparkContext(new SparkConf()...), works as well.)
    val value = spark.sparkContext.textFile("D:/user.txt")

    // Split each line and map the fields onto the case class
    val userRDD: RDD[User01] = value.map(line => line.split(","))
      .map(ts => User01(ts(0).toInt, ts(1), ts(2).toBoolean, ts(3).toInt, ts(4).toDouble))

    val userDataFrame = userRDD.toDF()
    userDataFrame.show()

    // Stop Spark
    spark.stop()
  }
}
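The parsing step above assumes every line is well formed; a single bad line would fail the job. As a hedged sketch of one alternative, the hypothetical `parseLine` helper below returns `None` for malformed lines so they can be dropped. It uses only the Scala standard library.

```scala
import scala.util.Try

object ParseUserExample {
  case class User01(id: Int, name: String, sex: Boolean, age: Int, salary: Double)

  // Returns None when the field count is wrong or a value cannot be converted
  def parseLine(line: String): Option[User01] = {
    val ts = line.split(",").map(_.trim)
    if (ts.length != 5) None
    else Try(User01(ts(0).toInt, ts(1), ts(2).toBoolean, ts(3).toInt, ts(4).toDouble)).toOption
  }

  def main(args: Array[String]): Unit = {
    val lines = Seq("1,Michael,false,29,2000", "bad,line", "3,Justin,true,19,1000")
    // flatMap keeps only the lines that parsed successfully
    lines.flatMap(parseLine).foreach(println)
  }
}
```

With an RDD the same helper can be applied as `value.flatMap(line => parseLine(line))` before calling `toDF()`.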

printSchema: print the schema of the DataFrame

userDataFrame.printSchema()

root

|-- id: integer (nullable = false)

|-- name: string (nullable = true)

|-- sex: boolean (nullable = false)

|-- age: integer (nullable = false)

|-- salary: double (nullable = false)

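show: print the data to the console

By default, show prints the first 20 rows of a DataFrame or Dataset to the console. It is mostly used for testing.

userDataFrame.show()

For example, to print only the first 2 rows: userDataFrame.show(2)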

select

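- Equivalent to the select clause in SQL; it projects the columns you need. You can pass plain column names, but then no computation is possible.

userDataFrame.select("id", "name", "sex", "age", "salary").show()

- You can also pass Column objects to select, which allows simple computations on the columns (requires import org.apache.spark.sql.Column).

userDataFrame.select(new Column("id"), new Column("name"), new Column("age"),
  new Column("salary"), new Column("salary").*(12))
  .show()

Shorthand

userDataFrame.select($"id", $"name", $"age", $"salary", $"salary" * 12)
  .show()

selectExpr

- Accepts column names directly, together with common SQL operators written as strings.

userDataFrame.selectExpr("id", "name || ' (user)'", "salary * 12 as annual_salary").show()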

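where

- Similar to WHERE in SQL; it filters the query result. The operator accepts either a Column condition or a condition expression string.

userDataFrame.select($"id", $"name", $"age", $"salary", $"salary" * 12)
  .where($"name" like "%a%")
  .show()

Equivalent form, using an expression string

userDataFrame.select($"id", $"name", $"age", $"salary", $"salary" * 12)
  .where("name like '%a%'")
  .show()

Note: avoid Chinese characters in column aliases in Spark. Aliases containing them trigger a bug when used in where expression strings.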

withColumn: add a column to a DataFrame

userDataFrame.select($"id", $"name", $"age", $"salary", $"sex")
  .withColumn("annual_salary", $"salary" * 12)
  .show()

There are many more operators than the ones listed here; they are easy to find online.

Dataset/DataFrame SQL

Sample data

- t_user

Michael,29,20000,true,MANAGER,1

Andy,30,15000,true,SALESMAN,1

Justin,19,8000,true,CLERK,1

Kaine,20,20000,true,MANAGER,2

Lisa,19,18000,false,SALESMAN,2

- t_dept

1,研发

2,设计

3,产品

Upload the data above to HDFS

// Case classes matching the two files (define them outside main)
case class User(name: String, age: Int, salary: Double, sex: Boolean, job: String, deptNo: Int)
case class Dept(deptNo: Int, dname: String)

def main(args: Array[String]): Unit = {
  // 1. Create the SparkSession
  val spark = SparkSession.builder()
    .appName("hellosql")
    .master("local[10]")
    .getOrCreate()

  // Import the implicit conversions (collections/RDDs to DataFrame/Dataset)
  import spark.implicits._
  spark.sparkContext.setLogLevel("FATAL")

  val userDF = spark.sparkContext.textFile("hdfs://CentOS:9000/demo/user")
    .map(line => line.split(","))
    .map(ts => User(ts(0), ts(1).toInt, ts(2).toDouble, ts(3).toBoolean, ts(4), ts(5).toInt))
    .toDF()

  val deptDF = spark.sparkContext.textFile("hdfs://CentOS:9000/demo/dept")
    .map(line => line.split(","))
    .map(ts => Dept(ts(0).toInt, ts(1)))
    .toDF()

  userDF.show()
  deptDF.show()

  // Close the SparkSession
  spark.close()
}

Register views

userDF.createOrReplaceTempView("t_user")

deptDF.createOrReplaceTempView("t_dept")

Run SQL

val sql =
  """
  select *, salary * 12 as annual_salary from t_user
  """

spark.sql(sql).show()

Single-table queries

LIKE pattern matching

select *, salary * 12 as annual_salary from t_user where name like '%a%'

Sorting

select * from t_user order by deptNo asc, salary desc

LIMIT

select * from t_user order by deptNo asc, salary desc limit 3

Grouping

select deptNo, avg(salary) avg from t_user group by deptNo

HAVING filter

select deptNo, avg(salary) avg from t_user group by deptNo having avg > 15000

case-when

select deptNo, name, salary, sex,
  (case sex when true then 'male' else 'female' end) as user_sex,
  (case when salary >= 20000 then 'high' when salary >= 15000 then 'medium' else 'low' end) as level
from t_user
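The same salary banding can also be expressed with the DataFrame API instead of a SQL string. The sketch below uses `when`/`otherwise` from `org.apache.spark.sql.functions`; the `withLevel` helper and the English labels are choices made for this illustration.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, when}

object CaseWhenExample {
  // Adds a "level" column; conditions are checked in order, like SQL CASE WHEN
  def withLevel(users: DataFrame): DataFrame =
    users.withColumn("level",
      when(col("salary") >= 20000, "high")
        .when(col("salary") >= 15000, "medium")
        .otherwise("low"))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("case-when").master("local[*]").getOrCreate()
    import spark.implicits._
    val users = Seq(("Michael", 20000.0), ("Andy", 15000.0), ("Justin", 8000.0))
      .toDF("name", "salary")
    withLevel(users).show()
    spark.stop()
  }
}
```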

Rows to columns (pivot)

val coursedf = spark.sparkContext.parallelize(List(
  (1, "Chinese", 100),
  (1, "Math", 100),
  (1, "English", 100),
  (2, "Math", 79),
  (2, "Chinese", 80),
  (2, "English", 100)
)).toDF("id", "course", "score")

// Register the view
coursedf.createOrReplaceTempView("t_course")

select id,
  sum(case course when 'Chinese' then score else 0 end) as chinese,
  sum(case course when 'Math' then score else 0 end) as math,
  sum(case course when 'English' then score else 0 end) as english
from t_course
group by id

- Using pivot for the same row-to-column conversion

select * from t_course
pivot(max(score) for course in ('Math', 'Chinese', 'English'))
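For comparison, a row-to-column conversion can also be written with the DataFrame API, using `groupBy(...).pivot(...)`. This is a minimal sketch with its own small data set and English course names; the `pivotScores` helper is a name invented here.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

object PivotExample {
  // One row per id, one column per listed course, holding the max score
  def pivotScores(scores: DataFrame): DataFrame =
    scores.groupBy("id")
      .pivot("course", Seq("Chinese", "Math", "English"))
      .max("score")

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("pivot").master("local[*]").getOrCreate()
    import spark.implicits._
    val scores = Seq(
      (1, "Chinese", 100), (1, "Math", 100), (1, "English", 100),
      (2, "Math", 79), (2, "Chinese", 80), (2, "English", 100)
    ).toDF("id", "course", "score")
    pivotScores(scores).show()
    spark.stop()
  }
}
```

Listing the pivot values explicitly avoids an extra pass over the data to discover them.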

Joins

select u.*, d.dname from t_user u
left join t_dept d on u.deptNo = d.deptNo

Subqueries

select *,
  (select sum(t1.salary) from t_user t1 where (t1.deptNo = t2.deptNo) group by t1.deptNo) as total
from t_user t2 left join t_dept d on t2.deptNo = d.deptNo
order by t2.deptNo asc, t2.salary desc

Window functions

select *, rank() over(partition by t2.deptNo order by t2.salary desc) as rank
from t_user t2 left join t_dept d on t2.deptNo = d.deptNo
order by t2.deptNo asc, t2.salary desc
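The ranking query can be sketched with the DataFrame API as well, using a window specification from `org.apache.spark.sql.expressions.Window`. The `withSalaryRank` helper and the reduced column set are assumptions made for this example.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.functions.{col, rank}

object WindowRankExample {
  // Ranks users by salary (highest first) within each department
  def withSalaryRank(users: DataFrame): DataFrame = {
    val byDept = Window.partitionBy("deptNo").orderBy(col("salary").desc)
    users.withColumn("rank", rank().over(byDept))
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("window").master("local[*]").getOrCreate()
    import spark.implicits._
    val users = Seq(
      ("Michael", 20000.0, 1), ("Andy", 15000.0, 1),
      ("Kaine", 20000.0, 2), ("Lisa", 18000.0, 2)
    ).toDF("name", "salary", "deptNo")
    withSalaryRank(users).show()
    spark.stop()
  }
}
```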

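- cube analysis

select deptNo, job, max(salary), avg(salary) from t_user group by deptNo, job with cube

- Equivalent form

select deptNo, job, max(salary), avg(salary) from t_user group by cube(deptNo, job)

User-defined functions

Spark ships with many built-in functions, all defined in the org.apache.spark.sql.functions singleton object. If they do not cover your needs, you can extend the Spark function library yourself.

Scalar (single-row) functions

1. Define the functions

// Maps the boolean sex flag to a label
val sexFunction = (sex: Boolean) => sex match {
  case true  => "male"
  case false => "female"
}

// Adds a 500 allowance to the salary for users aged 30 or above
val commFunction = (age: Int, salary: Double) => {
  if (age >= 30) {
    salary + 500
  } else {
    salary
  }
}

2. Register the functions

spark.udf.register("sexFunction", sexFunction)
spark.udf.register("commFunction", commFunction)

3. Use the functions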

select name,sexFunction(sex),age,salary,job,commFunction(age,salary) as comm from

t_user
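Registered functions are called from SQL strings. If you prefer the DataFrame API, a function can instead be wrapped with `udf` from `org.apache.spark.sql.functions` and applied to columns directly. The sketch below shows this under the assumption of a small `name`/`sex` DataFrame; `withSexLabel` is a hypothetical helper name.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, udf}

object ColumnUdfExample {
  // Wraps a plain Scala function so it can be applied to Columns
  val sexLabel = udf((sex: Boolean) => if (sex) "male" else "female")

  def withSexLabel(users: DataFrame): DataFrame =
    users.select(col("name"), sexLabel(col("sex")).as("sex_label"))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("udf").master("local[*]").getOrCreate()
    import spark.implicits._
    val users = Seq(("Michael", true), ("Lisa", false)).toDF("name", "sex")
    withSexLabel(users).show()
    spark.stop()
  }
}
```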

Aggregate functions (for reference)

Untyped

1. Define the aggregate function

import org.apache.spark.sql.Row
import org.apache.spark.sql.expressions.{MutableAggregationBuffer, UserDefinedAggregateFunction}
import org.apache.spark.sql.types.{DataType, DoubleType, IntegerType, StructField, StructType}

object CustomUserDefinedAggregateFunction extends UserDefinedAggregateFunction {
  // Type of the input values
  override def inputSchema: StructType = {
    StructType(StructField("inputColumn", DoubleType) :: Nil)
  }

  // Type of the intermediate buffer
  override def bufferSchema: StructType = {
    StructType(StructField("count", IntegerType) :: StructField("total", DoubleType) :: Nil)
  }

  // Type of the final result
  override def dataType: DataType = DoubleType

  // Whether the function always returns the same result for the same input
  override def deterministic: Boolean = true

  // Initial state of the aggregation buffer
  override def initialize(buffer: MutableAggregationBuffer): Unit = {
    buffer(0) = 0   // count
    buffer(1) = 0.0 // running total
  }

  // Fold one input row into the buffer
  override def update(buffer: MutableAggregationBuffer, input: Row): Unit = {
    var historyCount = buffer.getInt(0)
    var historyTotal = buffer.getDouble(1)
    if (!input.isNullAt(0)) {
      historyTotal += input.getDouble(0)
      historyCount += 1
      buffer(0) = historyCount
      buffer(1) = historyTotal
    }
  }

  // Merge two partial buffers
  override def merge(buffer1: MutableAggregationBuffer, buffer2: Row): Unit = {
    buffer1(0) = buffer1.getInt(0) + buffer2.getInt(0)
    buffer1(1) = buffer1.getDouble(1) + buffer2.getDouble(1)
  }

  // Compute the final result
  override def evaluate(buffer: Row): Any = {
    buffer.getDouble(1) / buffer.getInt(0)
  }
}

2. Register the aggregate function

spark.udf.register("custom_avg", CustomUserDefinedAggregateFunction)

3. Use the aggregate function

select deptNo, custom_avg(salary) from t_user group by deptNo

Type-safe

1. Define the aggregate function

import org.apache.spark.sql.{Encoder, Encoders}
import org.apache.spark.sql.catalyst.expressions.GenericRowWithSchema
import org.apache.spark.sql.expressions.Aggregator

case class Average(total: Double, count: Int)

object CustomAggregator extends Aggregator[GenericRowWithSchema, Average, Double] {
  // Initial value
  override def zero: Average = Average(0.0, 0)

  // Fold one input row into a partial result
  override def reduce(b: Average, a: GenericRowWithSchema): Average = {
    Average(b.total + a.getAs[Double]("salary"), b.count + 1)
  }

  // Merge two partial results
  override def merge(b1: Average, b2: Average): Average = {
    Average(b1.total + b2.total, b1.count + b2.count)
  }

  // Compute the final result
  override def finish(reduction: Average): Double = {
    reduction.total / reduction.count
  }

  // Encoder for the intermediate result type
  override def bufferEncoder: Encoder[Average] = Encoders.product[Average]

  // Encoder for the final result type
  override def outputEncoder: Encoder[Double] = Encoders.scalaDouble
}

2. Turn the aggregator into a named column

val averageSalary = CustomAggregator.toColumn.name("average_salary")

3. Use the aggregation

import org.apache.spark.sql.functions._

userDF.select("deptNo","salary")

.groupBy("deptNo")

.agg(averageSalary,avg("salary"))

.show()
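Both aggregate styles above follow the same contract: start from an empty buffer, fold each row into it, merge the partial buffers from different partitions, and finally turn the merged buffer into the result. The sketch below walks through that contract with plain Scala collections and no Spark at all; the nested `Seq` stands in for partitions and is purely an illustration device.

```scala
object AverageContractExample {
  case class Average(total: Double, count: Int)

  val zero: Average = Average(0.0, 0)

  // Fold one salary into a partial result
  def reduce(b: Average, salary: Double): Average = Average(b.total + salary, b.count + 1)

  // Combine the partial results of two partitions
  def merge(b1: Average, b2: Average): Average = Average(b1.total + b2.total, b1.count + b2.count)

  // Turn the merged buffer into the final value
  def finish(r: Average): Double = r.total / r.count

  // Each inner Seq plays the role of one partition
  def averageOf(partitions: Seq[Seq[Double]]): Double = {
    val partials = partitions.map(_.foldLeft(zero)(reduce))
    finish(partials.foldLeft(zero)(merge))
  }

  def main(args: Array[String]): Unit = {
    println(averageOf(Seq(Seq(20000.0, 15000.0), Seq(8000.0))))
  }
}
```

Because merge is associative, the result does not depend on how the rows are split across partitions.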

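Load & Save

Parquet files

- save

Parquet is just a storage format. It is independent of language and platform, and it is not tied to any particular data processing framework. It is also the default format that save and load use when no format is specified.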

var sql=

"""

select * from t_user

"""

val result: DataFrame = spark.sql(sql)

result.write.save("hdfs://CentOS:9000/results/paquet")

- load

val dataFrame = spark.read.load("hdfs://CentOS:9000/results/paquet")

dataFrame.printSchema()

dataFrame.show()

root

|-- name: string (nullable = true)

|-- age: integer (nullable = true)

|-- salary: double (nullable = true)

|-- sex: boolean (nullable = true)

|-- job: string (nullable = true)

|-- deptNo: integer (nullable = true)

Equivalent form: spark.read.parquet("hdfs://CentOS:9000/results/paquet")

JSON format

- save

var sql=

"""

select * from t_user

"""

val result: DataFrame = spark.sql(sql)

result.write

.format("json")

.mode(SaveMode.Overwrite)

.save("hdfs://CentOS:9000/results/json")

- load

val dataFrame = spark.read

.format("json")

.load("hdfs://CentOS:9000/results/json")

dataFrame.printSchema()

dataFrame.show()

This can also be shortened to spark.read.json("hdfs://CentOS:9000/results/json")

CSV format

- save

var sql=

"""

select * from t_user

"""

val result: DataFrame = spark.sql(sql)

result.write
  .format("csv")
  .mode(SaveMode.Overwrite)
  .option("sep", ",")       // field separator
  .option("header", "true") // write a header line with the column names
  .save("hdfs://CentOS:9000/results/csv")

- load

val dataFrame = spark.read
  .format("csv")
  .option("sep", ",")            // field separator
  .option("inferSchema", "true") // infer column types from the data
  .option("header", "true")      // treat the first line as the header
  .load("hdfs://CentOS:9000/results/csv")
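Schema inference costs an extra pass over the file and can guess types you did not intend. As a hedged alternative, the sketch below supplies the schema explicitly when reading CSV. The column list mirrors the t_user sample data; the `readUsers` helper and the path argument are assumptions made for this example.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.types.{BooleanType, DoubleType, IntegerType, StringType, StructType}

object CsvSchemaExample {
  // Explicit schema matching the t_user columns
  val userSchema: StructType = new StructType()
    .add("name", StringType)
    .add("age", IntegerType)
    .add("salary", DoubleType)
    .add("sex", BooleanType)
    .add("job", StringType)
    .add("deptNo", IntegerType)

  // Reads a CSV file with a header line, using the schema above instead of inferSchema
  def readUsers(spark: SparkSession, path: String): DataFrame =
    spark.read
      .schema(userSchema)
      .option("sep", ",")
      .option("header", "true")
      .csv(path)

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("csv-schema").master("local[*]").getOrCreate()
    // args(0) is assumed to be the path of a CSV file in the layout above
    readUsers(spark, args(0)).printSchema()
    spark.stop()
  }
}
```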

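ORC format

ORC stands for Optimized Row Columnar. It is a columnar storage format from the Hadoop ecosystem that dates back to early 2013. It originated in Apache Hive, where it was introduced to reduce Hadoop storage space and speed up Hive queries.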

- save

var sql=

"""

select * from t_user

"""

val result: DataFrame = spark.sql(sql)

result.write

.format("orc")

.mode(SaveMode.Overwrite)

.save("hdfs://CentOS:9000/results/orc")

- load

val dataFrame = spark.read

.orc("hdfs://CentOS:9000/results/orc")

dataFrame.printSchema()

dataFrame.show()

Reading files directly with SQL

val parquetDF = spark.sql("SELECT * FROM parquet.`hdfs://CentOS:9000/results/paquet`")
val jsonDF = spark.sql("SELECT * FROM json.`hdfs://CentOS:9000/results/json`")
val orcDF = spark.sql("SELECT * FROM orc.`hdfs://CentOS:9000/results/orc/`")
//val csvDF = spark.sql("SELECT * FROM csv.`hdfs://CentOS:9000/results/csv/`")

parquetDF.show()
jsonDF.show()
orcDF.show()
// csvDF.show()

Reading data over JDBC

- load

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.48</version>

</dependency>

val dataFrame = spark.read

.format("jdbc")

.option("url", "jdbc:mysql://CentOS:3306/test")

.option("dbtable", "t_user")

.option("user", "root")

.option("password", "root")

.load()

dataFrame.show()

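- save

import java.util.Properties
import org.apache.spark.sql.SaveMode

val props = new Properties()
props.put("user", "root")
props.put("password", "root")

result.write
  .mode(SaveMode.Overwrite)
  .jdbc("jdbc:mysql://CentOS:3306/test", "t_user", props)

Note: Spark creates the t_user table automatically if it does not already exist.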

Spark & Hive integration

- Edit hive-site.xml

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://CentOS:3306/hive?createDatabaseIfNotExist=true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

</property>

<!-- Enable the metastore service, which Spark uses to read Hive metadata -->

<property>

<name>hive.metastore.uris</name>

<value>thrift://CentOS:9083</value>

</property>

- Start the metastore service

[root@CentOS apache-hive-1.2.2-bin]# ./bin/hive --service metastore >/dev/null 2>&1 &

[1] 55017

- Add the following dependencies

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.4.5</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.spark/spark-hive -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_2.11</artifactId>

<version>2.4.5</version>

</dependency>

- Write the following code

// Configure Spark
val spark = SparkSession.builder()
  .appName("Spark Hive Example")
  .master("local[*]")
  .config("hive.metastore.uris", "thrift://CentOS:9083")
  .enableHiveSupport() // enable Hive support
  .getOrCreate()

spark.sql("show databases").show()

spark.sql("use baizhi")

spark.sql("select * from t_emp").na.fill(0.0).show()

spark.close()
